Abstract

Stochastic Value Gradient (SVG) methods underlie many recent achievements of model-based Reinforcement Learning agents in continuous state-action spaces. Despite their practical significance, many algorithm design choices still lack rigorous theoretical or empirical justification. In this work, we analyze one such design choice: the gradient estimator formula. We conduct our analysis on randomized Linear Quadratic Gaussian environments, allowing us to empirically assess gradient estimation quality relative to the actual SVG. Our results justify a widely used gradient estimator by showing it induces a favorable bias-variance tradeoff, which could explain the lower sample complexity of recent SVG methods.

1 Introduction

Model-Based Reinforcement Learning (MBRL) [16, 20] is a promising framework for developing intelligent systems for sequential decision-making from limited data. Unlike in model-free Reinforcement Learning (RL) methods, MBRL agents use collected experiences to fit a predictive model of the environment. The agent can use the model to evaluate potential action sequences, saving costly trial-and-error experimentation in the real world, or to estimate quantities useful for improving its learned behavior. Stochastic Value Gradient (SVG) methods belong to the latter category, using the model to estimate the value gradient. While model-free methods use the score-function estimator of the value gradient, SVG methods can leverage the model to produce gradients via the pathwise derivative estimator, usually found to be more stable in practice [23]. Recently proposed RL agents using the SVG approach have demonstrated its effectiveness in learning robotic locomotion from data with unprecedented sample-efficiency [1, 4, 8], i.e. with few collected experiences.

Although SVG methods have been validated empirically, some of the algorithm design choices still lack rigorous theoretical or empirical justification. As recent work on model-free methods has shown, there can be a gap between the theoretical underpinnings of RL methods and their behavior in practice, often due to code-level optimizations [6, 11, 13]. Moreover, it is common for theoretically promising MBRL algorithms to fail in practice [14], although negative results are not often publicized.

In this work, we take a step towards a better understanding of the core tenets behind SVG methods by analyzing an important algorithm design choice: the gradient estimator computation [17, 23]. We aim to identify the key practical differences between the theoretically sound, unbiased formulation from the Deterministic Policy Gradient (DPG) framework [24] and an estimator more often used in SVG methods [1, 4, 8] that deviates from traditional policy gradient theory. The former represents an approach that’s theoretically sound, but lacks empirical validation, while the latter generalizes the methods that deviate from the theory, but achieve state-of-the-art results in continuous control benchmarks.

We evaluate gradient estimation quality and policy optimization performance on Linear Quadratic Gaussian (LQG) regulator environments. The LQG framework is extensively studied in the Optimal Control literature and is a special class of RL with continuous actions (a.k.a. continuous control), where SVG methods seem to excel. LQG has been proposed as a simple, yet nontrivial class of continuous control problems to help distinguish the various approaches to RL [21]. More importantly, LQG has a simple environment formulation, allowing us to compute the ground-truth policy performance and gradient, an ability not present in more complicated, nonlinear benchmarks for continuous control [28]. Thus, we can perform a more rigorous empirical assessment of gradient estimation quality and policy optimization performance within the LQG framework.

Therefore, our main contribution in this paper is a careful empirical analysis, using the LQG framework, of the practical differences between gradient estimators for SVG methods. The rest of this paper is organized as follows. Section 2 outlines related work on SVG methods and the gap between theory and practice of RL. Section 3 introduces the reader to the minimal technical background on RL, LQG and SVG, required to follow our analysis. Section 4 describes the scope of the empirical analysis and the experimental setup developed accordingly. Section 5 contains the main experiments, results, and our analysis thereof. Finally, we summarize our observations in Sect. 6 and point to possible future work to explore remaining gaps in our knowledge of the core tenets of SVG methods.

2 Related Work

Model-based policy gradients have been recently used in a variety of RL algorithms for continuous control. The PILCO algorithm is one of the first to leverage a learned model’s derivatives to compute the policy gradient with few samples, but its use of Gaussian processes hinders scalability to larger problems [5]. The original SVG paper introduced gradient estimation with stochastic neural network models using the reparameterization trick, an approach scalable to higher-dimensional problems [9]. Dreamer and Imagined Value Gradients explore SVGs with latent-space models [2, 8]. Model-Augmented Actor-Critic (MAAC) and SAC-SVG extend the SVG framework to that of maximum-entropy RL to incentivize exploration and stabilize optimization [1, 4]. However, the computation of the gradient is not studied in isolation or contrasted with other gradient estimation methods. Our work analyses, in detail, the gradient computation used in recent SVG-style algorithms that show a high level of performance with efficient use of data [1, 4, 8].

A few recent works have analyzed the gap between theory and practice in different areas of RL, highlighting our poor understanding of current methods. Reliability and reproducibility concerns regarding modern RL methods have been raised by several works [3, 10, 12], indicating a disconnect between the theory motivating these algorithms and their behavior in practice. Code-level optimizations have been found to contribute more to successful policy gradient methods than the choice of general training algorithm [6]. Closest to our work is that of Ilyas et al. [11], which shows that model-free policy gradient algorithms succeed in optimizing the policy despite having poor gradient estimation quality metrics in the relevant sample regime. Our work is, to the best of our knowledge, the first to propose a fine-grained analysis of gradient estimation in SVG methods using LQGs to provide solid references of their expected behavior.

3 Background

3.1 A Brief Introduction to RL

We consider the agent-environment interaction modeled as a continuous Markov Decision Process (MDP) [25], defined as the tuple \(({\mathcal S}, {\mathcal A}, R^{}, p^{*}, {\rho }, H)\), with each component described in what follows. Interaction with the environment occurs in a sequence of discrete timesteps \(t \in \{0, \dots , H-1\}\), where \(H\in {\mathbb N}\) denotes the time horizon, after which an episode of interaction is over. At every timestep \(t\), the agent observes the current state \({\mathbf {s}}_t\in {\mathcal S}\subseteq {\mathbb R}^{n}\) from the set of possible states of the environment. It must then select an action \({\mathbf {a}}_t\in {\mathcal A}\subseteq {\mathbb R}^{d}\) from the set of possible actions to be executed. The environment then transitions to the next state by sampling from the transition probability kernel, \({\mathbf {s}}_{t+1} \sim p^{*}(\cdot \mid {\mathbf {s}}_t, {\mathbf {a}}_t)\), and emits a reward signal using its reward function, \(r_{t+1} = R^{}({\mathbf {s}}_t, {\mathbf {a}}_t)\). The initial state is sampled from the initial state distribution, \({\mathbf {s}}_0 \sim {\rho }(\cdot )\).

A policy defines a mapping from environment states to actions, \({\mathbf {a}}_t = {\mu }({\mathbf {s}}_t)\). The objective of an RL agent is to find a policy that produces the highest cumulative reward, or return, from the initial state: \(J({\mu }) = {\mathbb E}\left[ \sum _{t=0}^{H-1} R^{}({\mathbf {s}}_t, {\mathbf {a}}_t)\right] \). Here, the expectation is implicitly w.r.t. the initial state distribution and the sequential application of \({\mu }\) and \(p^{*}\). The key difference between Optimal Control and RL, both frameworks for optimal sequential decision making, is that in the former the agent has access to the full MDP, while in the latter the agent only knows the state and action space and has to learn its policy by trial-and-error in the environment.

3.2 Linear Quadratic Gaussian Regulator

The LQG is a special class of continuous MDP in which the transition kernel is linear Gaussian and the reward function is quadratic concave [27]:
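For reference, one way to write these two components that is consistent with the symbols used in Sect. 4.2 (\(\mathbf {F_s}\), \(\mathbf {F_a}\), \(\mathbf {f}\), \(\mathbf {\Sigma }\), \({{\mathbf {C}}_{{\mathbf {s}}{\mathbf {s}}}}\), \({{\mathbf {C}}_{{\mathbf {a}}{\mathbf {a}}}}\)) is sketched below. The absence of state-action cross terms and of linear terms in the reward is an assumption made for brevity.

\[ {\mathbf {s}}_{t+1} \sim p^{*}(\cdot \mid {\mathbf {s}}_t, {\mathbf {a}}_t) = {\mathcal N}\left( \mathbf {F_s}{\mathbf {s}}_t + \mathbf {F_a}{\mathbf {a}}_t + \mathbf {f},\ \mathbf {\Sigma }\right) \]

\[ R^{}({\mathbf {s}}_t, {\mathbf {a}}_t) = -\tfrac{1}{2}\left( {\mathbf {s}}_t^{\top } {{\mathbf {C}}_{{\mathbf {s}}{\mathbf {s}}}} {\mathbf {s}}_t + {\mathbf {a}}_t^{\top } {{\mathbf {C}}_{{\mathbf {a}}{\mathbf {a}}}} {\mathbf {a}}_t\right) \]

With positive definite \({{\mathbf {C}}_{{\mathbf {s}}{\mathbf {s}}}}\) and \({{\mathbf {C}}_{{\mathbf {a}}{\mathbf {a}}}}\), this reward is concave in the state and action, so maximizing return amounts to minimizing a quadratic cost.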

LQGs are often used as a discretization of continuous-time dynamics described as linear differential equations, such as those of physical systems.

An important class of policies in this context is that of time-varying (a.k.a. nonstationary) linear policies:

\[ {\mu }_{{\theta }}({\mathbf {s}}_t) = \mathbf {K}_t {\mathbf {s}}_t + \mathbf {k}_t, \tag{3} \]

where \(\mathbf {K}_t \in {\mathbb R}^{d\times n}\) and \(\mathbf {k}_t \in {\mathbb R}^{d}\), a.k.a. the dynamic and static gains respectively in the optimal control literature. Here, \({\theta }\) is the flattened parameter vector corresponding to the collection \(\{\mathbf {K}_t, \mathbf {k}_t\}_{t=0}^{H-1}\) of function coefficients. Given any linear policy \({\mu }_{{\theta }}\), its state-value function, the expected return from each state, can be computed recursively via the Bellman equations [25]:

\[ V^{{\mu }_{{\theta }}}({\mathbf {s}}_t) = R^{}({\mathbf {s}}_t, {\mu }_{{\theta }}({\mathbf {s}}_t)) + {\mathbb E}\left[ V^{{\mu }_{{\theta }}}({\mathbf {s}}_{t+1})\right] , \tag{4} \]

where \(V^{{\mu }_{{\theta }}}({\mathbf {s}}_{H}) = 0\) and the expectation is w.r.t. \({\mathbf {s}}_{t+1} \sim p^{*}(\cdot \mid {\mathbf {s}}_t, {\mu }_{{\theta }}({\mathbf {s}}_t))\). Since the policy is linear, the rewards are quadratic, and the transitions are Gaussian, the expectations in Eq. (4) can be computed analytically by iteratively solving Eq. (4) from timestep \(H-1\) to 0 (a dynamic programming method). The solution \(V^{{\mu }_{{\theta }}}\) is itself quadratic and thus the policy return can be computed analytically:

\[ J({\mu }_{{\theta }}) = {\mathbb E}_{{\mathbf {s}}_0 \sim {\rho }}\left[ V^{{\mu }_{{\theta }}}({\mathbf {s}}_0)\right] . \tag{5} \]

Moreover, LQGs can be solved for their optimal policy \({\mu }_{{\theta }}^\star \), which is time-varying linear, by modifying the dynamic programming method above to solve for the policy that maximizes the expected value from each timestep based on Eq. (4) (also computable analytically). Algorithm 1 shows the pseudocode of the procedure we use to derive the optimal policy, which can be slightly modified to return the value function for a given policy. LQG is thus one of the few classes of nontrivial continuous control problems which allows us to evaluate RL methods against the theoretical best solutions.
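The backward recursion for evaluating a fixed linear policy can be sketched in a few lines of NumPy. This is an illustrative sketch, not the authors' implementation: it assumes a reward of the form \(-\tfrac{1}{2}({\mathbf s}^{\top }{\mathbf C}_{ss}{\mathbf s} + {\mathbf a}^{\top }{\mathbf C}_{aa}{\mathbf a})\) without cross or linear terms, zero transition bias, zero static gains, and a standard Gaussian initial state. The function name `evaluate_linear_policy` is hypothetical.

```python
import numpy as np

def evaluate_linear_policy(F_s, F_a, Sigma, C_ss, C_aa, gains):
    """Expected return of a_t = K_t s_t, assuming s_0 ~ N(0, I) and f = 0.

    The value function is kept in the form V_t(s) = -0.5 * s^T P_t s - c_t,
    starting from P_H = 0 and c_H = 0 and iterating backwards in time.
    """
    n = F_s.shape[0]
    P = np.zeros((n, n))  # quadratic coefficient of V at time H
    c = 0.0               # constant term of V at time H
    for K in reversed(gains):  # t = H-1, ..., 0
        A = F_s + F_a @ K      # closed-loop dynamics under the policy
        # The Gaussian noise contributes 0.5 * tr(P_{t+1} Sigma) to the constant.
        c = c + 0.5 * np.trace(P @ Sigma)
        P = C_ss + K.T @ C_aa @ K + A.T @ P @ A
    # E[s_0^T P_0 s_0] = tr(P_0) for a standard Gaussian initial state.
    return -0.5 * np.trace(P) - c

# Scalar example with H = 2: the gain K = -1 cancels the dynamics (F_s + F_a K = 0),
# so each step only pays the expected state cost of a unit-variance state.
one = np.eye(1)
J = evaluate_linear_policy(one, one, one, 2 * one, 0 * one, [-one, -one])
print(J)  # -2.0
```

Replacing the fixed gain in each iteration with the gain that maximizes the right-hand side of the recursion would give the usual Riccati-style solution for the optimal policy.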

3.3 Stochastic Value Gradient Methods

In the broader RL context, methods that learn parameterized policies, often called policy optimization methods, have gained traction in the last decade. As function approximation research, especially in deep learning, has advanced, parameterized policies were able to unify perception (processing sensorial input from the environment) and decision-making (choosing actions to maximize return) tasks [15]. To improve such parameterized approximators from data, the workhorse behind many policy optimization methods is Stochastic Gradient Descent (SGD) [22]. Thus, it is imperative to estimate the gradient of the expected return w.r.t. policy parameters, a.k.a. the value gradient, from data (states, actions and rewards) collected via interaction with the environment.

SVG methods build gradient estimates by first using the available data to learn a model of the environment, i.e., a function approximator of the transition kernel \(p^{*}\). A common approach to leveraging the learned model is as follows. First, the agent collects B states via interaction with the environment, potentially with an exploratory policy \(\beta \) (we use \({\mathbf {s}}_t \sim d^{\beta }\) to denote sampling from its induced state distribution). Then, it generates short model-based trajectories with the current policy \({\mu }_{{\theta }}\), branching off the previously collected states. Finally, it computes the average model-based returns and forms an estimate of the value gradient using backpropagation [7]:

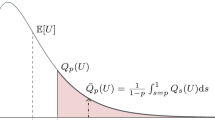

Here, \(\hat{Q}^{{\mu }_{{\theta }}}\) is an approximation (e.g., a learned neural network) of the policy’s action-value function \(Q^{{\mu }_{{\theta }}}\). We refer to Eq. (6) as the MAAC(K) estimator, as it uses K steps of simulated interaction and was featured prominently in the MAAC paper [4] (see Note 1).

We question, however, if Eq. (6) actually provides good empirical estimates of the true value gradient. To elucidate this matter, we compare MAAC(K) to the value gradient estimator provided by the DPG theorem [24]:

Besides the fact that Eq. (7) requires us to use the on-policy distribution of states \(d^{{\mu }_{{\theta }}}\), more subtle differences with Eq. (6) can be seen by expanding the definition of the action-value function to form a K-step version of Eq. (7), which we call the DPG(K) estimator. Note that DPG(0) is equivalent to Eq. (7).
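In the notation of this section, the two estimators can be sketched along the following lines. The sample-average form, the rollout index \(l\), and the superscript \(i\) for the minibatch element are assumptions based on the description in the text. The second expression is the standard statement of the DPG theorem.

\[ \text {MAAC}(K):\quad \nabla _{{\theta }} J({\mu }_{{\theta }}) \approx \frac{1}{B}\sum _{i=1}^{B} \nabla _{{\theta }}\left[ \sum _{l=0}^{K-1} R^{}({\mathbf {s}}^{i}_{t+l}, {\mathbf {a}}^{i}_{t+l}) + \hat{Q}^{{\mu }_{{\theta }}}({\mathbf {s}}^{i}_{t+K}, {\mathbf {a}}^{i}_{t+K})\right] ,\quad {\mathbf {s}}^{i}_t \sim d^{\beta },\ {\mathbf {a}}^{i}_{t+l} = {\mu }_{{\theta }}({\mathbf {s}}^{i}_{t+l}) \]

\[ \text {DPG}:\quad \nabla _{{\theta }} J({\mu }_{{\theta }}) = {\mathbb E}_{{\mathbf {s}} \sim d^{{\mu }_{{\theta }}}}\left[ \nabla _{{\theta }} {\mu }_{{\theta }}({\mathbf {s}})\, \nabla _{{\mathbf {a}}} Q^{{\mu }_{{\theta }}}({\mathbf {s}}, {\mathbf {a}})\big |_{{\mathbf {a}} = {\mu }_{{\theta }}({\mathbf {s}})}\right] \]

In the first expression the gradient is taken through every simulated action, whereas in the second \({\theta }\) enters only through the action taken at the sampled state.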

Figure 1 shows the Stochastic Computation Graphs (SCGs) of the MAAC(K) and DPG(K) estimators [23]. Here, borderless nodes denote input variables; circles denote stochastic nodes, distributed conditionally on their parents (if any); and squares denote deterministic nodes, which are functions of their parents. Because of the form of Eq. (7), gradients of future actions w.r.t. policy parameters may not be computed in DPG(K), which is why only the first action has a link with \({\theta }\). On the other hand, MAAC(K) backpropagates the gradients of the rewards and value-function through all intermediate actions. Our work aims to identify the practical implications of these differences and perhaps help explain why MAAC(K), rather than DPG(K), has been used in SVG methods.
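The structural difference between the two estimators can be illustrated on a single rollout with a small NumPy sketch. Several simplifications here are assumptions for illustration only: the policy uses one stationary gain matrix `theta`, the bootstrap term is a stand-in that reuses the immediate reward in place of \(\hat{Q}^{{\mu }_{{\theta }}}\), the noise sequence is held fixed, and central finite differences replace backpropagation. All function names are hypothetical.

```python
import numpy as np

def k_step_return(theta_first, theta_rest, s0, noise, F_s, F_a, C_ss, C_aa):
    """Rewards over K simulated steps plus a bootstrap term at the last state."""
    def reward(s, a):
        return -0.5 * (s @ C_ss @ s + a @ C_aa @ a)

    s, total = s0, 0.0
    a = theta_first @ s              # only the first action uses theta_first
    for w in noise:                  # one iteration per simulated transition
        total += reward(s, a)
        s = F_s @ s + F_a @ a + w
        a = theta_rest @ s           # later actions use theta_rest
    return total + reward(s, a)      # stand-in for Q_hat(s_K, a_K)

def finite_diff(f, x, eps=1e-5):
    """Central finite-difference gradient of a scalar function of an array."""
    grad = np.zeros_like(x)
    for idx in np.ndindex(*x.shape):
        dx = np.zeros_like(x)
        dx[idx] = eps
        grad[idx] = (f(x + dx) - f(x - dx)) / (2 * eps)
    return grad

def maac_grad(theta, *args):
    # Differentiate through every action: both arguments move with theta.
    return finite_diff(lambda th: k_step_return(th, th, *args), theta)

def dpg_grad(theta, *args):
    # Differentiate through the first action only: later actions keep theta fixed.
    return finite_diff(lambda th: k_step_return(th, theta, *args), theta)

rng = np.random.default_rng(0)
n = d = 2
F_s, F_a, C_ss, C_aa = np.eye(n), np.eye(n), np.eye(n), np.eye(d)
theta = -0.5 * np.eye(d)
s0 = rng.standard_normal(n)
noise = rng.standard_normal((2, n))  # K = 2 simulated steps
args = (s0, noise, F_s, F_a, C_ss, C_aa)
print(maac_grad(theta, *args))
print(dpg_grad(theta, *args))
```

Averaging either gradient over B sampled states would give the minibatch estimate. With an empty noise sequence (K = 0) the two functions coincide, since the only action in the graph is the first one.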

4 Methodology

We now turn to our research goals and the methods employed to perform the proposed empirical analysis.

4.1 Scope of Evaluation

In this work, we propose a fine-grained analysis of the properties of DPG(K) and MAAC(K) in practice. We simplify our evaluation by using on-policy versions of the gradient estimators, i.e., by substituting \(d^{{\mu }_{{\theta }}}\) for \(d^{\beta }\) where present. We also opted for using perfect models of the environment dynamics and rewards, instead of learning them from data, to focus on the differences between gradient estimators. Thus, we approximate the expectations in Eqs. (6) and (7) via Monte Carlo sampling, using the actual transition kernel \(p^{*}\) and reward function \(R^{}\), to generate (virtual) transitions and compute the bootstrapped returns. One can view this setting as the best possible case in an SVG algorithm: when the model-learning subroutine has perfectly approximated the true MDP, allowing us to focus on the gradient estimation analysis.

We also compute the true action-value function, required for the K-step returns in Eqs. (6) and (7), recursively via dynamic programming (analogously to Eq. (4)). Computing the ground-truth action-value function allows us to further isolate any observed differences between the estimators as a consequence of their properties alone.

4.2 Randomized Environments and Policies

To test gradient estimation across a wide variety of scenarios, we define how to sample LQG instances, \({\mathcal M}= ({\mathcal S}, {\mathcal A}, R^{}, p^{*}, {\rho })\), to run our experiments on. The main configurations for our procedure are: state dimension (\(n\)), action dimension (\(d\)) and time horizon (\(H\)). From these parameters we define the state space \({\mathcal S}={\mathbb R}^n\), action space \({\mathcal A}={\mathbb R}^d\) and timesteps \(t \in \{0, \dots , H-1\}\).

The transition dynamics are stationary, sharing \(\mathbf {F}, \mathbf {f}, \mathbf {\Sigma }\) across all timesteps. The coefficients \(\mathbf {F_s}\) and \(\mathbf {F_a}\) are initialized so that the system may be unstable, i.e., with some eigenvalues of \(\mathbf {F_s}\) having magnitude greater than or equal to 1, but always controllable, meaning there is a dynamic gain \(\mathbf {K}\) such that the eigenvalues of \((\mathbf {F_s}+ \mathbf {F_a}\mathbf {K})\) have magnitude less than 1. This ensures we are able to emulate real-world scenarios where uncontrolled state variables and costs may diverge to infinity, while ensuring there exists a policy which can stabilize the system [21]. Finally, we fix the transition bias to \(\mathbf {f}= {\mathbf {0}}\) and the Gaussian covariance to the identity matrix, \(\mathbf {\Sigma }= {I}\).

The initial state distribution is always initialized as a standard Gaussian distribution: \({\rho } = {\mathcal N}({\mathbf {0}}, {I})\). As for the reward parameters, we initialize both \({{\mathbf {C}}_{{\mathbf {s}}{\mathbf {s}}}}\) and \({{\mathbf {C}}_{{\mathbf {a}}{\mathbf {a}}}}\) (see Eq. (2)) as random symmetric positive definite matrices, sampled via the scikit-learn library for machine learning in Python [19] (see Note 2).

Since we consider the problem of estimating value gradients for linear policies in LQGs, we also define a procedure to generate randomized policies. We start by initializing all dynamic gains \(\mathbf {K}_t = \mathbf {K}\) so that \(\mathbf {K}\) stabilizes the system. This is done by first sampling target eigenvalues uniformly in the interval (0, 1) and then using the scipy library to compute \(\mathbf {K}\) that places the eigenvalues of \((\mathbf {F_s}+ \mathbf {F_a}\mathbf {K})\) at the desired targets [26, 29] (see Note 3). This process ensures the resulting policy is safe to collect data in the environment without having state variables and costs diverge to infinity. The generating procedure also serves to mimic practical situations where engineers devise a policy which can keep a system stable, but is not able to optimize running costs, which is where RL can serve to fine-tune it. Finally, we initialize all static gains as \(\mathbf {k}_t = {\mathbf {0}}\).
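The pole-placement step might look like the following sketch. The random draw of \(\mathbf {F_s}\) and \(\mathbf {F_a}\) from a standard normal is an assumption for illustration and is not the paper's sampling procedure. Note that `scipy.signal.place_poles` returns a gain for the closed loop `A - B @ G`, so the sign is flipped to match the \((\mathbf {F_s}+ \mathbf {F_a}\mathbf {K})\) convention used here.

```python
import numpy as np
from scipy.signal import place_poles

rng = np.random.default_rng(0)
n, d = 2, 2

# Illustrative dynamics (assumed): dense Gaussian matrices, not the paper's sampler.
F_s = rng.standard_normal((n, n))
F_a = rng.standard_normal((n, d))

# Target eigenvalues inside the unit circle, sampled uniformly on the real line.
targets = rng.uniform(0.0, 1.0, size=n)

# place_poles finds G such that eig(F_s - F_a @ G) matches the targets,
# so the policy gain for a_t = K s_t is K = -G.
result = place_poles(F_s, F_a, targets)
K = -result.gain_matrix

closed_loop = np.linalg.eigvals(F_s + F_a @ K)
print(np.sort(closed_loop.real), np.sort(targets))
```

Because the targets are real and distinct with probability one, no complex-conjugate pairing is needed in this sketch.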

5 Empirical Analysis

We analyze the behavior of each estimator in two main settings: (I) gradient estimation for fixed policies and (II) impact of gradient quality on policy optimization.

5.1 Gradient Estimation for Fixed Policies

Following previous work on model-free policy gradients [11], we evaluate the quality of the gradient estimates, for a given policy, produced by each estimator using two metrics: (i) the average cosine similarity with the true policy gradient and (ii) the average pairwise cosine similarity.

The first metric is a measure of gradient accuracy and we denote it as such in the following plots. For a given minibatch size B and step size K, we compute 10 estimates of the gradient, each using B initial states sampled on-policy (\({\mathbf {s}}_t \sim d^{{\mu }_{{\theta }}}\)) and K-step model-based rollouts from each state. Then, we compute the accuracy as the average cosine similarity of each of the 10 estimates with the true policy gradient, obtained as follows. We first compute the true expected return of a policy \({\mu }_{{\theta }}\) via dynamic programming, following Eqs. (4) and (5). Our implementation in PyTorch [18] then allows us to use automatic differentiation to compute the gradient of the expected return w.r.t. policy parameters.

The second metric is a measure of gradient precision and we denote it as such in the following plots. Again, we compute 10 estimates of the gradient in the same manner used in computing the accuracy. Then, we compute the precision as the average pairwise cosine similarity of the 10 estimates (the higher this quantity, the lower the variance).
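Both metrics reduce to averages of cosine similarities and can be sketched as below. The function names are hypothetical, and gradients are assumed to be flattened into one-dimensional NumPy arrays.

```python
from itertools import combinations

import numpy as np

def cosine_similarity(u, v):
    """Cosine of the angle between two flattened gradient vectors."""
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def gradient_accuracy(estimates, true_grad):
    """Average cosine similarity between each estimate and the true gradient."""
    return float(np.mean([cosine_similarity(g, true_grad) for g in estimates]))

def gradient_precision(estimates):
    """Average cosine similarity over all unordered pairs of estimates."""
    pairs = combinations(estimates, 2)
    return float(np.mean([cosine_similarity(u, v) for u, v in pairs]))

# Two orthogonal estimates, one of which matches the true direction.
e1, e2 = np.array([1.0, 0.0]), np.array([0.0, 1.0])
print(gradient_accuracy([e1, e2], true_grad=e1))  # 0.5
print(gradient_precision([e1, e2]))               # 0.0
```

Both quantities lie in [-1, 1] and ignore the gradient norm, which is why magnitude is examined separately.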

We first analyze the accuracy of each estimator when given enough states from the policy’s distribution \(d^{{\mu }_{{\theta }}}\) to approximate their true expected values. Figure 2 shows the accuracy obtained by DPG and MAAC for different values of K using 50000 states from the policy’s distribution. The LQGs considered have state and action spaces of dimension 2 and horizon of length 20. For each value of K, we initialize 10 different environment-policy pairs and compute the accuracy for each, denoted as different markers in each vertical line (see Note 4). Note how all but one of the instances using DPG(K) converged to the true value gradient, indicating that it is indeed an unbiased estimator. On the other hand, MAAC(K) incurs a larger bias with increasing values of K, indicating that the added action-gradient terms (see Fig. 1) influence the final direction.

Although the results above indicate that MAAC(K) is biased at convergence, most SVG algorithms operate on a much smaller sample regime. Figure 3 shows the accuracy across 10 different environment-policy pairs; this time, however, using smaller sample sizes from the policy distribution. Lines denote the average results and shaded areas, the 95% confidence interval (see Note 5). For \(K=0\), the estimators are equivalent, which is verified in practice. In this more practical sample regime, we see that MAAC(K) produces more accurate results, especially for larger values of K.

Similar to Fig. 3, Fig. 4 shows the gradient precision in the same setting. We see that the variance of MAAC(K) is lower than that of DPG(K) across all tested values of \(K>0\). Overall, Figs. 2, 3 and 4 illustrate a classic instance of the bias-variance tradeoff in machine learning: MAAC(K) introduces bias, although a small one, in return for a much more stable (less variable) estimate of the gradient, whereas the unbiased DPG(K) demands many more samples to justify its use.

Note that the accuracy and precision metrics only account for differences in gradient direction and orientation. The magnitude may also be important, as it influences the learning rate when used to update policy parameters. Figure 5 shows that MAAC(K) produces gradients with higher norms compared to DPG(K). One should keep this in mind when choosing the learning rate for SGD, as the following experiments show that the gradient norm has a significant impact on policy optimization.

5.2 Impact of Gradient Quality on Policy Optimization

Previous work on model-free policy gradients has shown that policy optimization algorithms can improve a policy despite using poor gradient estimation [11]. It is not clear, however, if better policy gradient estimation translates to more stability or faster convergence in SVG algorithms. We therefore conduct our next experiments comparing the MAAC and DPG estimators for policy optimization.

Figures 6 and 7 show learning curves as total cost (negative return) against the number of SGD iterations across several instances of LQGs (\(\text {dim}({\mathcal S}) = \text {dim}({\mathcal A}) = 2\) and \(H= 20\)). We use the same hyperparameters for both estimators (see Note 6). The results in Fig. 6 suggest that the better quality metrics observed for MAAC(K) in Figs. 3 and 4 do translate to faster and more stable policy optimization. However, if we normalize the gradient estimates before passing them to SGD, as in Fig. 7, we see that both estimators are evenly matched. These results suggest that the main advantage of MAAC(K) over DPG(K) is in its stronger gradient norm (see Fig. 5), which has been alluded to in previous work as a “strong learning signal” [4], inducing a faster learning rate.
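The two update variants compared above can be sketched as a single parameter update. This is a minimal illustration with a hypothetical function name: the default learning rate of \(10^{-2}\) and the norm clip at 100 follow the values given in the Notes, while the small constant guarding against division by zero is an assumption.

```python
import numpy as np

def ascent_step(theta, grad, lr=1e-2, normalize=False, max_norm=100.0):
    """One gradient ascent step on the expected return.

    With normalize=True only the gradient direction is used; otherwise the
    gradient is applied as-is, clipped to max_norm to avoid numerical errors.
    """
    norm = np.linalg.norm(grad)
    if normalize:
        grad = grad / max(norm, 1e-12)    # unit-norm direction (guard is assumed)
    elif norm > max_norm:
        grad = grad * (max_norm / norm)   # rescale so that the norm equals max_norm
    return theta + lr * grad

theta = np.zeros(2)
print(ascent_step(theta, np.array([3.0, 4.0])))                  # step scales with the norm
print(ascent_step(theta, np.array([3.0, 4.0]), normalize=True))  # step has length lr
```

With normalization, two estimators that agree in direction produce identical updates regardless of their norms, which isolates the effect of gradient magnitude.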

Fig. 6. Policy optimization with unnormalized SVG estimation. Each panel corresponds to a different LQG instance (generated via different random seeds). Lines denote the average results and shaded regions, one standard deviation, across 10 runs of the algorithm, each with a different random initial policy. Results obtained with the 8-step versions of each estimator.

Fig. 7. Policy optimization with normalized SVG estimation. Each panel corresponds to a different LQG instance (generated via different random seeds). Lines denote the average results and shaded regions, one standard deviation, across 10 runs of the algorithm, each with a different random initial policy. Results obtained with the 8-step versions of each estimator. Gradients were normalized before being passed to SGD.

We also evaluate if our previous findings generalize to higher state-action space dimensions, where sample-based estimation gets progressively harder. Our performance metric is the suboptimality gap, i.e., the percentage difference in expected return between the current policy and the optimal one: \(100 \times (J({\mu }_{{\theta }}^\star ) - J({\mu }_{{\theta }}))/J({\mu }_{{\theta }}^\star )\) (see Note 7). Table 1 summarizes our results with policy optimization with varying LQG sizes and time budgets (see Note 8). We don’t normalize gradients in this case, as that is not a common practice in SVG algorithms (see Note 9). Our findings show that the performance gap between DPG(K) and MAAC(K) tends to widen with higher dimensionalities, with policies trained via the latter outperforming those using the former. These results further emphasize the practicality of MAAC(K) over DPG(K), justifying the former’s use in recent SVG methods [1, 4, 8].

6 Conclusions and Future Work

In this work, we take an important step towards a better understanding of current SVG methods. Using the LQG framework, we show that the gradient estimation used by MAAC and similar methods induces a slight bias compared to the true value gradient. On the other hand, using a corresponding unbiased estimator such as the K-step DPG one increases sample-complexity due to high variance. Moreover, the MAAC gradient estimates have higher magnitudes, which could help explain the fast learning performance of current methods. Indeed, we found that policies trained with MAAC converge faster to the optimal policies than those using the K-step DPG across several LQG instances.

Future work may further leverage the LQG framework to perform fine-grained analyses of other important components of SVG algorithms. For example, little is known about the interplay between model, value function, and policy learning from data in practice. A study on model and value function optimization metrics and their relation to the gradient estimation accuracy and precision can help in the design of stable and efficient SVG algorithms in the future. Another direction for investigation is analyzing the impact of off-policy data collection for model training. Since models have limited representation capacity, learning the MDP dynamics from the distribution of another policy may not translate to good gradient estimation of the target policy.

Notes

1. Our formula differs slightly from the original in that it considers a deterministic policy instead of a stochastic one.

2. We use the make_spd_matrix function.

3. We use the scipy.signal.place_poles function.

4. We use the same 10 random seeds for experiments across values of K.

5. We use seaborn.lineplot to produce the aggregated curves.

6. Learning rate of \(10^{-2}\), \(B=200\), and \(K=8\).

7. Recall from Sect. 3 that LQG allows us to compute the optimal policy analytically.

8. We found that the computation times for both estimators were equivalent.

9. We only clip the gradient norm at a maximum of 100 to avoid numerical errors.

References

Amos, B., Stanton, S., Yarats, D., Wilson, A.G.: On the model-based stochastic value gradient for continuous reinforcement learning. CoRR arXiv:2008.1 (2020)

Byravan, A., et al.: Imagined value gradients: model-based policy optimization with transferable latent dynamics models. In: CoRL. Proceedings of Machine Learning Research, vol. 100, pp. 566–589. PMLR (2019)

Chan, S.C.Y., Fishman, S., Korattikara, A., Canny, J., Guadarrama, S.: Measuring the reliability of reinforcement learning algorithms. In: ICLR. OpenReview.net (2020)

Clavera, I., Fu, Y., Abbeel, P.: Model-augmented actor-critic: backpropagating through paths. In: ICLR. OpenReview.net (2020). https://openreview.net/forum?id=Skln2A4YDB

Deisenroth, M.P., Rasmussen, C.E.: PILCO: a model-based and data-efficient approach to policy search. In: Getoor, L., Scheffer, T. (eds.) Proceedings of the 28th International Conference on Machine Learning, ICML 2011, Bellevue, Washington, USA, 28 June–2 July 2011, pp. 465–472. Omnipress (2011). https://icml.cc/2011/papers/323_icmlpaper.pdf

Engstrom, L., et al.: Implementation matters in deep RL: a case study on PPO and TRPO. In: ICLR. OpenReview.net (2020). https://github.com/implementation-matters/code-for-paper

Goodfellow, I.J., Bengio, Y., Courville, A.C.: Deep Learning. Adaptive Computation and Machine Learning. MIT Press, Cambridge (2016)

Hafner, D., Lillicrap, T.P., Ba, J., Norouzi, M.: Dream to control: learning behaviors by latent imagination. In: ICLR. OpenReview.net (2020)

Heess, N., Wayne, G., Silver, D., Lillicrap, T.P., Erez, T., Tassa, Y.: Learning continuous control policies by stochastic value gradients. In: NIPS, pp. 2944–2952 (2015). http://papers.nips.cc/paper/5796-learning-continuous-control-policies-by-stochastic-value-gradients

Henderson, P., Islam, R., Bachman, P., Pineau, J., Precup, D., Meger, D.: Deep reinforcement learning that matters. In: AAAI, pp. 3207–3214. AAAI Press (2018)

Ilyas, A., et al.: A closer look at deep policy gradients. In: ICLR. OpenReview.net (2020)

Islam, R., Henderson, P., Gomrokchi, M., Precup, D.: Reproducibility of benchmarked deep reinforcement learning tasks for continuous control. CoRR arXiv:1708.04133 (2017)

Liu, Z., Li, X., Kang, B., Darrell, T.: Regularization matters for policy optimization - an empirical study on continuous control. In: International Conference on Learning Representations (2021). https://github.com/xuanlinli17/iclr2021_rlreg

Lovatto, A.G., Bueno, T.P., Mauá, D.D., de Barros, L.N.: Decision-aware model learning for actor-critic methods: when theory does not meet practice. In: Proceedings on “I Can’t Believe It’s Not Better!” at NeurIPS Workshops. Proceedings of Machine Learning Research, vol. 137, pp. 76–86. PMLR, December 2020. http://proceedings.mlr.press/v137/lovatto20a.html

Mnih, V., et al.: Human-level control through deep reinforcement learning. Nature 518(7540), 529–533 (2015)

Moerland, T.M., Broekens, J., Jonker, C.M.: Model-based reinforcement learning: a survey. In: Proceedings of the International Conference on Electronic Business (ICEB) 2018-December, pp. 421–429 (2020). http://arxiv.org/abs/2006.16712

Mohamed, S., Rosca, M., Figurnov, M., Mnih, A.: Monte Carlo gradient estimation in machine learning. J. Mach. Learn. Res. 21, 132:1–132:62 (2020)

Paszke, A., et al.: PyTorch: an imperative style, high-performance deep learning library. In: Advances in Neural Information Processing Systems, vol. 32, pp. 8024–8035. Curran Associates, Inc. (2019). http://papers.nips.cc/paper/9015-pytorch-an-imperative-style-high-performance-deep-learning-library.pdf

Pedregosa, F., et al.: Scikit-learn: machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011)

Polydoros, A.S., Nalpantidis, L.: Survey of model-based reinforcement learning: applications on robotics. J. Intell. Robot. Syst. 86(2), 153–173 (2017). https://doi.org/10.1007/s10846-017-0468-y

Recht, B.: A tour of reinforcement learning: the view from continuous control. Ann. Rev. Control Robot. Auton. Syst. 2(1), 253–279 (2019). https://doi.org/10.1146/annurev-control-053018-023825, http://arxiv.org/abs/1806.09460

Ruder, S.: An overview of gradient descent optimization algorithms. CoRR arXiv:1609.04747 (2016)

Schulman, J., Heess, N., Weber, T., Abbeel, P.: Gradient estimation using stochastic computation graphs. In: NIPS, pp. 3528–3536 (2015)

Silver, D., Lever, G., Heess, N., Degris, T., Wierstra, D., Riedmiller, M.: Deterministic policy gradient algorithms. In: Proceedings of the 31st International Conference on Machine Learning, vol. 32, no. 1, pp. 387–395 (2014). http://proceedings.mlr.press/v32/silver14.html

Szepesvári, C.: Algorithms for Reinforcement Learning. Synthesis Lectures on Artificial Intelligence and Machine Learning. Morgan & Claypool Publishers (2010). https://doi.org/10.2200/S00268ED1V01Y201005AIM009

Tits, A.L., Yang, Y.: Globally convergent algorithms for robust pole assignment by state feedback. IEEE Trans. Autom. Control 41(10), 1432–1452 (1996). https://doi.org/10.1109/9.539425

Todorov, E.: Optimal control theory. In: Bayesian Brain: Probabilistic Approaches to Neural Coding, pp. 269–298 (2006)

Todorov, E., Erez, T., Tassa, Y.: MuJoCo: a physics engine for model-based control. In: IEEE International Conference on Intelligent Robots and Systems, pp. 5026–5033. IEEE (2012). https://doi.org/10.1109/IROS.2012.6386109, http://ieeexplore.ieee.org/document/6386109/

Virtanen, P., et al.: SciPy 1.0: fundamental algorithms for scientific computing in Python. Nat. Meth. 17, 261–272 (2020). https://doi.org/10.1038/s41592-019-0686-2

Acknowledgments

This work was partly supported by the CAPES grant 88887.339578/2019-00 (first author), FAPESP grant 2016/22900-1 (second author), and CNPq scholarship 307979/2018-0 (third author).

© 2021 Springer Nature Switzerland AG

Lovatto, Â.G., Bueno, T.P., de Barros, L.N. (2021). Gradient Estimation in Model-Based Reinforcement Learning: A Study on Linear Quadratic Environments. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science(), vol 13073. Springer, Cham. https://doi.org/10.1007/978-3-030-91702-9_3

DOI: https://doi.org/10.1007/978-3-030-91702-9_3

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91701-2

Online ISBN: 978-3-030-91702-9

eBook Packages: Computer Science (R0)