Abstract

The Quadratic Assignment Problem (QAP) can be subdivided into different versions, being present in several real-world applications. In this work, it is used a version that considers many objectives. QAP is an NP-hard problem, so approximate algorithms are used to address it. This work analyzes a Hyper-Heuristic (HH) that selects genetic operators to be applied during the evolutionary process. HH is based on the NSGA-III framework and on the Thompson Sampling approach. Our main contribution is the analysis of the use of a many objective algorithm using HH for QAP, as this problem was still under-explored in the context of many objective optimization. Furthermore, we analyze the behavior of operators forward the changes related to HH (TS). The proposal was tested considering 42 instances with 5, 7 and 10 objectives. The results, interpreted using the Friedman statistical test, were satisfactory when compared to the original algorithm (without HH), as well as when compared to algorithms in the literature: MOEA/DD, MOEA/D, SPEA2, NSGA-II and MOEA/D-DRA.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

The Quadratic Assignment Problem (QAP) is an NP-Hard problem even for small instances [1]. The QAP was initially introduced to model a plant location problem, with the objective of placing facilities in places so that the sum of the product between distances and flows is minimal [2]. Depending on the needs, several variations of the problem arose, such as the linear allocation problem, quadratic bottleneck allocation problem, the quadratic multi-objective allocation problem, among others. Each variation works to solve different real-world problems [1]. The multi-objective QAP (mQAP) [3] and the many objective QAP (MaQAP) are versions of the problem where a distance matrix and several flow matrices (objectives) are used, with two or more functions configuring the mQAP and with more than three functions the MaQAP. The greater the number of objective functions to be optimized, the amount of non-dominated solutions and the computational cost for calculating the fitness of the solutions and the operations of the algorithm increases [4]. These versions are used to solve disposition problems, for example, the allocation of clinics and departments in hospitals to reduce the distance between doctors, patients, nurses and medical equipment [1].

NSGA-III [5] is an Evolutionary Algorithm for many objective problems that uses a non-dominance classification approach based on reference points, being a hybrid strategy. Different classifications are used according to the method that the problem is solved [4]. Such an approach has been showing good results in the literature of the area, so it will be investigated in this work.

Hyper Heuristics (HHs) [6] are high-level methods that generate or select heuristics, used to free the user from choosing a heuristic or its configuration. The Thompson Sampling (TS) [7] approach is a HH that selects a heuristic based on information accumulated during the execution of the algorithm to improve their performance.

In this work, a study was carried out on the methods already used to solve MaQAP, implementing and testing the NSGA-III with the Thompson Sampling, which makes the selection of the crossover operator combined with a mutation operator. We tested different versions by modifying the way of Thompson Sampling action. TS uses success and failure factors to determine the best operator choices to apply. Different ways of handling these factors and the frequency of resetting them are proposed. The changes of success and failures factors are based on the dominance relation and the average of the objectives. In addition to the comparison between the previously mentioned versions, the proposal, named NSGA-III\(_{TS}\), is also evaluated in terms of effectiveness by comparison with the original algorithm that does not use HH. Finally, the performance of the NSGA-III\(_{TS}\) is compared with five other evolutionary multi-objective algorithms: MOEA/DD [8], MOEA/D [9], SPEA2 [10], NSGA-II [11] and MOEA/D-DRA [12]. The main contribution of this work is to analyze the behavior of a many objective algorithm based on hyper-heuristic applied to MaQAP. MaQAP is an under-explored problem in the literature of the area, which motivated its choice for use in this work due to several fronts of scientific contribution. In addition, to analyze how the choice of operators proceeds when important factors related to the HH used (TS) are modified.

This paper is organized as follows. Section 2 presents concepts related to work, such as the QAP, the used algorithm (NSGA-III) and the HHs. The section ends with some related works. Section 3 presents the proposed methodology for the development of the work. The results are shown and discussed in Sect. 4. Section 5 ends the work with the conclusions and future directions.

2 Background

2.1 Many-Objective Quadratic Assignment Problem - MaQAP

QAP’s objective is to associate a set of n facilities to n different locations, in order to minimize the cost of transporting the flow between the facilities [2]. Over time the problem has been adapted to solve different real-world problems [13]. One of its existing variations is the QAP with many objectives, which is an extension of the multi-objective QAP, but which more than 3 flow matrices.

The MaQAP is formalized by the Eq. 1, with the condition of Eq. 2 being satisfied. \(\pi \) is a solution that must belong to a set of feasible solutions.

The calculation of each objective is shown in Eq. 2, and two matrices of the same size are used, the matrix a\(_{ij}\) has the distance between the locations, b\(_{\pi _i\pi _j}^{k}\) is a matrix of the k-th objective flow, with \(\pi _i\) and \(\pi _j\) being the position of i and j in \(\pi \); n is the number of locations and k is an iterator. The objective is to find a permutation \(\pi \), belonging to the set of all possible permutations (P(n)), which simultaneously minimizes m objective functions each represented by the k-th C. In order to represent problems with many objectives, the constraint \(m>3\) must be added.

2.2 Hyper-Heuristics (HH)

Hyper heuristics are high-level methodologies developed for the optimization of complex problems [6], looking for the best option to fulfill your objective by generating heuristics through components (generation HH) or by selecting from a set of low-level heuristics (selection HH). The basic composition of a selection HH is a high-level strategy, which uses a mechanism to decide which low-level heuristic to use, and an acceptance criterion that can accept or reject a solution. The other element of HH is a set of low-level heuristics, which must be appropriate for the tested problem [14]. Low-level heuristics work on the set of solutions, they can be of two types, constructive or perturbative.

Among the ways that the HHs can learn are: online, where the learning takes place during the execution of the search process, or offline where the learning already comes with the rules defined before the execution of the algorithm. The use of HHs is due to their ability to find good results for NP-hard problems, seeking the best way to achieve a good result and removing the need for the user to choose a single heuristic or its configuration [15]. But as the HHs have evolved, updated information is needed to better organize the classifications to reflect the challenges found in the current world, [16] presents an extended version of the categorization of selection heuristics.

In the Fig. 1, a diagram showing the elements of the HH used in this work is shown. In which, the high level strategy is formed by the TS as a heuristic selection mechanism, and the NSGA-III acceptance criterion is used. The low and high level heuristics are separated because they work in different search spaces, the high level ones works searching in the space of low level heuristics, and the low level ones work in the space of solutions. The heuristic selection mechanism chooses within a predefined set of low-level heuristics based on its own evaluation criteria. In this case, Thompson Sampling uses the success and failure factors associated to each operator who performs a count using different methods to define whether a low-level heuristic was efficient or not. Finally, the solution is sent to the acceptance criterion, which can choose whether or not to accept the new solution (NSGA-III acceptance criterion).

Adapted from [14].

Hyper-Heuristic elements.

Low-Level Heuristics - Genetic Operators: The set of genetic operators is from the permutational type, so they don’t generate infactible solutions. These operators use two solutions, to create two new solutions, and they are: i) Partially Matched Crossover (PMX) [17]: 2 cutoff points are selected, so both parents are divided into 3 parts. When creating an offspring, the elements between the points are copied in the same absolute position, then the elements of the other parent are taken. If they have not been used, they are copied in the same position, and if there is a conflict, they are exchanged according to their position in the offspring. The parents order to raise a second offspring is reversed. ii) Cyclic Crossover (CX) [18]: sequences of connected positions are created by the values and positions. It starts at the first value on the left, takes the position at parent 2, checks and looks for the value at parent 1 and repeats the process until it finds cycles. Each cycle is passed alternately to the offspring. iii) Permutational Two Points Crossover (2P) [17]: two points are chosen at random, separating the individual into three parts. The initial and final parts of a parent are passed on to the offspring. Then, the parent is changed and the offspring receive values according to the order in the parent of the missing elements.

In this work the Swap mutation [19] was used, then two positions of the solution are chosen at random and they are exchanged with each other.

High Level Heuristic - Thompson Sampling: Thompson Sampling was proposed by [7] and is used for online decision problems, where information is accumulated during the execution of the algorithm to performance optimization. As shown in [20], TS can incorporate a Bayesian model to represent the uncertainty associated with decision making on K operators (actions). When used, a k operator produces, in the context of Bernoulli, a reward of 1 with probability \(\theta _k\) and a reward of 0 with probability \(1-\theta _k\). In the present work, for each k action, the previous probability density function of \(\theta _k\) is given by a \(\beta \)-distribution with parameters \(\boldsymbol{\alpha } = (\alpha _1, ..., \alpha _K)\) e \(\boldsymbol{\beta } = (\beta _1, ..., \beta _K)\):

with \(\varGamma \) denoting the gamma function. As the observations are collected, the distribution is updated according to the Bayes rule and the \(\beta \)-prior conjugate. This means that \(\alpha _k\) or \(\beta _k\) increases by one with each success or failure observed, respectively. Here \(p_{op}(g) = \theta _k\) (operator probability in generation g) and the operator chosen is the one with the highest value of \(p_{op}(g)\). The success estimate of the probability is sampled randomly from the later distribution.

2.3 NSGA-III

NSGA-III [5] arose from adaptations of NSGA-II [11] which is used for multiobjective problems, in order to better address problems with many objectives. NSGA-III is an algorithm based on Pareto dominance and Decomposition concepts. Pareto dominance happens when an individual has at least one of the objectives better than the others and all the others must be equivalent or better [21]. As decomposition-based algorithms, the NSGA-III uses an adaptive reference point mechanism, when the population for the next generation is formed, to preserve the diversity and convergence of the algorithm.

Any structure of reference points can be used, but in this work, as well as in many decomposition based algorithms, is considered the technique applied in [5]. In this technique, in order to avoid generating a very large number of reference points, the reference points are generated twice and then joined.

2.4 Related Works

When analyzing the papers on QAP [1], mQAP [22,23,24] and MaQAP [25, 26], it is possible to observe that with the increasing objectives and, consequently, on the problem complexity, there is less research being carried out on the theme. In researching different sources of scientific work, few correlated works are found for the QAP with many objectives. In [25] the Pareto stochastic local search is used. The authors compare different versions using algorithms that make use of the Cartesian product of scaling functions to reduce the number of objectives in the search space. The benchmark considered for testing has problems with 3 objectives, and the quality indicator used was the hypervolume. In [26] a comparison research was carried out between 18 state-of-art evolutionary algorithm to solve QAP with many objectives. The instances used in the tests have 3, 5 and 10 objectives. The performance metric used was IGD (Inverted Generational Distance). In the work [27] two frameworks for multi-objective optimization, MOEA/D and NSGA-II, were hybridized with Transgenetic Algorithms for the multi- and many-objective QAP solution. The benchmark instances considered 2, 3, 5, 7 and 10 objectives. The results were analyzed by different quality indicators of the Pareto frontier and statistical tests. The work shows the performance gain of the inclusion of operators based on computational transgenetics in the analyzed frameworks.

The many objective HHs have already been used for some problems in the literature [28,29,30,31,32], especially for continuous optimization problems with benchmark instances, although they are still under-explored. However, there is a gap for MaQAP, where the use of HH has not yet been explored. This is one of the contributions that we intend to obtain with this work.

3 Proposed Approach

The algorithm proposed in this work, named NSGA-III\(_{TS}\), is presented in Algorithm 1, in which the functions marked in gray are part of the TS-based HH. The NSGA-III\(_{TS}\) algorithm starts with the random generation of the initial population, of size NP, and the evaluation of its solutions. The NP reference points (Z) are initialized as an uniformly distributed hyperplane intersecting the axis of each objective (Step 1). Then, a main loop (Steps 3–27) begins , each iteration is a new generation (g). Internally to the main repetition, another loop (Steps 5–12) is used to create a new population who starts by selecting two solutions (parents), carried out at random (Step 6). The Thompson Sampling chooses among three different crossover operators (Step 7), the PMX, the CX or the 2P, combined with the swap mutation, using the beta distribution. After the application of the chosen crossover and, followed by the Swap mutation (Step 8), the new solutions are evaluated (Step 9) and inserted in a NP size population formed only by the offspring. The next action of the algorithm (Step 10) is related to the different versions of the algorithm which have been created. This step returns the obtained reward for each operator, after applying the swap mutation.

When the population of offspring is full, respectively in Steps 13 and 14, an union with the population of the parents is made, and the joined population is organized by the non-dominance ordering method. The first set of solutions to be part of next population is formed by the fronts of non-dominance that fully fit into the new population (Steps 16–19). If individuals are missing, the algorithm normalizes the objectives (Step 23) to determine the ideal and extreme points and adjust the reference points accordingly. The projected distance of each solution on the lines formed by the ideal and reference points are used to associate each solution with a reference point (Step 24) and a niche procedure is performed to select the solutions remaining, prioritizing clusters with fewer solutions (Step 25). Unused solutions are discarded. The algorithm ends when it reaches the maximum number of evaluations performed.

The different versions tested in Step 10 represent contributions of this work. What differentiates one version from another is the success and failure factor and the frequency of resetting successes and failures count, used by TS. The success and failure factor concerns the decision on which operator applications will contribute to the update of the TS counters (success - \(\alpha _k\) and failure - \(\beta _k\) in Eq. 3).

The NSGA-III\(_{TS}\)’s versions that change the success and failures factors are based on the dominance relation (ND) and the average of the objectives (AVG) and work as follows: i) ND: after applying the crossover, a two-phase method is used. In the first, parents and offspring are ordered by level of non-dominance. If all parents and offspring are at the same level, the best average of the objectives is used as success. On the other hand, if at least one offspring succeeds in dominating a parent, it is considered success, otherwise it is failure; ii) AVG: after applying the crossover, the mean of the objectives is used. Comparing the average of the parents with that of the offspring, if there is improvement it is considered success, otherwise failure;

The different ways of treating the success and failure counting of operators are presented below: i) V1: the success and failure counter variables are not reset at any point in the search; ii) V2: the variables success and failure counters are reset when creating a new population, that is, at each iteration; iii) V3: the variables success and failure counters are reset during the execution, at the moment when they reach 1/3 and 2/3 of the evaluations performed.

4 Experiments and Discussion

For the tests, 42 MaQAP instances were used with 50 locations and 5, 7 and 10 objectives (flows), these instances were proposed in [27] using the generators presented in [33]. Each instance is named following the format: mqapX-Yfl-TW, with X is the number of locations, Y is the number of objectives (or flow types), T means that the correlation is positive (p) or negative (n) and W shows whether the problem is uniform (uni) or real-like (rl) [27].

All experiments are evaluated by the IGD+ (Inverted Generational Distance plus) quality indicator [34]. This metric evaluates different properties of a Pareto front approximation and provides a single performance value for the set. IGD+ indicates the distance from the set found by the algorithm in relation to a reference set. The reference set used in this work is composed of the non-dominated solutions resulting from the union of the approximation front of all the algorithms involved in the comparison.

The experiments were organized in four stages. Firstly, the different strategies for resetting the count of successes and failures are compared with each other, for the two success and failure factors: by the dominance relation criterion (ND) and by the average criterion (AVG). Next, considering the best counting strategies, AVG and ND are confronted. In the third stage, the best version of the proposed algorithm is compared with the separately applied operators. In the last stage, the best proposed HH is compared with some approaches from literature.

The proposed approaches, as well as the other considered algorithms, are executed 30 times with different seeds. The parameters for all experiments in this study are in Table 1. TS does not have any input parameters to be defined, which represents a positive point for the technique. All algorithms, quality indicator and statistical test were developed in jMetal framework [35].

Tables 2, 3, 4, 5 and 6 show a comparison between the algorithms, involving all instances for 5, 7 and 10 objectives, using the test statistical analysis of Friedman test [36]. The tables show the rank of the algorithms and the hypothesis that the first place approach is equivalent to the others. Whenever the hypothesis is rejected, the first ranked approach is statistically superior to those in the rejected hypothesis line.

4.1 Stage 1: Selecting the Success and Failure Counting Strategy

The number of succeed and fail operator applications is the main element for the operation of the Thompson Sampling heuristic. Therefore, experiments are presented below for the analysis of different strategies for resetting these counts throughout the search process. This analysis is performed for the two proposed success factor - dominance relation (ND) and the average of the objectives (AVG), detailed in Sect. 3.

Table 2 analyzes the different ways of counting on the ND success factor (Algorithm column) and shows the Friedman test on the IGD+ indicator (Ranking and Hypothesis columns), considering all 42 instances for all number of objectives. The percentage of usage of the different operators used is also shown (% PMX, % 2P, % CX columns. From the table it is possible to observe that the NSGA-III\(_{TS-ND}\)V3, which resets the count in two moments of the search process, is the best algorithm, followed by NSGA-III\(_{TS-ND}\)V1 (version without reset). The algorithm that resets the success and failure counts at each iteration (NSGA-III\(_{TS-ND}\)V2) has the worst performance. This table also shows the average percentage usage of operators throughout the search process. It is worth noting that the algorithms that selected the CX operator more often have better performance. In addition, the version that resets success and failure counts at each iteration tends to distribute the use of operators more evenly, as well as in a random selection. The same analysis is performed in Table 3, considering the success and failure factors by the average of the objectives (AVG). According to the IGD+ quality indicator and the Friedman test, the NSGA-III\(_{TS-AVG}\)V1, which does not reset the count during the search, is the best algorithm, while the NSGA-III\(_{TS-AVG}\)V3 algorithm got second place. Again, the algorithm that resets the success and failure counts at each iteration (NSGA-III\(_{TS-AVG}\)V2) has the worst performance. The two algorithms that have the highest choice frequency for the CX operator have the best performance.

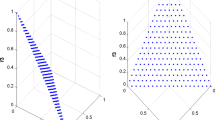

In view of these analyzes, it is possible to observe that the best count reset strategy for the ND success and failure factors is that which reset the variables in 1/3 and 2/3 of the maximum number of performed evaluations (V3). As for the AVG success and failure factors, is the best strategy that accumulates the scores throughout all evaluations, without resetting them at any time (V1). The next section compares the two success and failure factor (ND and AVG), taking into account your best success and failure count reset strategies, NSGA-III\(_{TS-AVG}\)V1 and NSGA-III\(_{TS-ND}\)V3. In order to understand how the different forms of counting and factors of success and failure behave in the operators choice, the frequencies of use for each operator during the search were analyzed. Figures 2 and 3 show how often each of the three available operators is selected by HH during the search, taking as example three instances, they are: \(mqap50-5fl-n25uni\), \(mqap50-7fl-p00rl\), \(mqap50-10fl-n75rl\). The choice of instances aimed to vary their characteristics such as the number of objectives, the correlation and the form of generation (rl or uni). These figures show the percentage of use of the operator (y axis) over the generations (x axis), considering the average of 5 independent executions. Each point on the curve represents the frequency with which each operator is used in a given generation. Figure 2 shows, for the AVG success and failure factor, that the version with the best performance (NSGA-III\(_{TS-AVG}\)V1) selects the CX more frequently than the others operators. However, all three restart strategies favor the choice of the CX operator. V2, which resets the counts at each iteration, presents an oscillation in the choice of operators throughout the search. V3, which resets the count in two moments, behaves similarly to the version that does not reset the counts (V1). Considering ND, Fig. 3 also shows that the version with the best performance (NSGA-III\(_{TS-ND}\)V3) selects the CX operator more frequently than the others. The oscillation in the choice of operators in the version that reinitializes the count at each iteration (V2) is even greater in this success and failure factors (ND). In order to make a fair comparison, the same stopping criterion was applied in all experiments (MaxEv = 300000). Thus, as can be seen in the figures, the number of generations for each instance is equal to 2832 for 5 objectives, 1022 for 7 objectives and 1088 for 10 objectives.

4.2 Stage 2: Selecting the Success and Failure Factor

The tests carried out in this stage answer the research question related to which the most appropriate success and failure factor in the proposed scenario. It is based on the best strategy selected in the previous simulation stage, that is, performs the comparison of the NSGA-III\(_{TS-AVG}\)V1 and NSGA-III\(_{TS-ND}\)V3 algorithms.

Friedman’s test, in Table 4, makes clear the supremacy of the version that considers the average of the objectives when deciding whether the application of a given operator is a success or a failure (AVG). NSGA-III\(_{TS-AVG}\)V1 represents the best HH version proposed in the scope of this work and will be compared with the operators applied in isolation in the next section.

4.3 Stage 3: Effect of Operator Selection by Hyper-Heuristic

The use of a hyper-heuristic in the selection of different operators throughout the search process releases the user from choosing the best operator to be applied in the solution of a given problem. This avoids the necessary simulations and even a more in-depth knowledge of the technique and the problem in question. The simulations of this stage aim to analyze the performance of the automatic selection made by Thompson Sampling in relation to the performance of the operators applied in isolation. Table 5 shows that the performance of HH and the CX operator are similar to each other, while the PMX and 2P operators perform worse, considering the IGD + quality indicator. Friedman’s test indicates statistical equivalence between NSGA-III\(_{TS-AVG}\)V1 and NSGA-III\(_{CX}\), which shows that the automation of the choice of operators is done properly.

4.4 Stage 4: Literature Comparison

The efficiency of the best proposed HH is measured at this stage in the face of the algorithms reported in the literature. Table 6 compares the NSGA-III\(_{TS-AVG}\)V1 algorithm with the MOEA/DD [8], MOEA/D [9], SPEA2 [10], NSGA-II [11], MOEA/D-DRA [12] algorithms. All comparison approaches are present in the framework jMetal version 4.5 and the parameters used, with the exception of those presented in Table 1, are the standard parameters of the framework for each of these algorithms.

According to the Friedman test, presented in Table 6, the hypothesis that the algorithm with the lowest rank (NSGA-III\(_{TS-AVG}\)V1) is equivalent to the others is rejected for all algorithms. Therefore, the proposed algorithm can be seen as a competitive approach for MaQAP.

5 Conclusions

In this work, it was proposed an approach combining selection HH with the NSGA-III framework. The selection HH considered was Thompson Sampling. The HH was used to generate each offspring through the selected operator from a set of LLH, according to an updated probability based on their previous performance. Three candidates for operators were considered: PMX, 2P and CX. A set of 42 benchmarks instances was considered with 5, 7 and 10 objectives.

Two success and failure factors have been proposed with different ways of resetting the counters. The results were analyzed with the IGD+ quality indicator and Friedman’s statistical test. The IGD+ points out that the best way to restart the success and failure counts varies according to the success and failure factor adopted. The best proposed HH (NSGA-III\(_{TS-AVG}\)V1) presented equivalent, or even better, performance to operators applied in isolation. This shows the benefit of automatic selection made by TS, as it releases the user from determining the best operator to be applied in the problem solution. In comparison with the literature, the NSGA-III\(_{TS-AVG}\)V1 outperforms all the considered algorithms.

It should be noted that, in addition to its competitive results, Thompson Sampling is a simple technique in relation to other high-level heuristics implemented for HHs and without parameters to be adjusted. As future work we can consider the adaptive control of the size of the set of operators and the test in different optimization problems with multiple and many objectives.

References

Abdel-Basset, M., Manogaran, G., Rashad, H., Zaied, A.N.: A comprehensive review of quadratic assignment problem: variants, hybrids and applications. In: Journal of Ambient Intelligence and Humanized Computing, pp. 1–24 (2018)

Koopmans, T.C., Beckmann, M.: Assignment problems and the location of economic activities. Econometrica 25(1), 53–76 (1957)

Uluel, M., Ozturk, Z.K.: Solution approaches to multiobjective quadratic assignment problems. In: European Conference on Operational Research (2016)

Fritsche, G.: The cooperation of multi-objective evolutionary algorithms for many-objective optimization. Ph.D. dissertation, Universidade Federal do Paraná, Curitiba, PR, BR (2020)

Deb, K., Jain, H.: An evolutionary many-objective optimization algorithm using reference-point-based nondominated sorting approach, part i: Solving problems with box constraints. IEEE Trans. Evol. Comput. 18(4), 577–601 (2014)

Burke, E., et al.: Hyper-heuristics: a survey of the state of the art. J. Oper. Res. Soc. 64, 1695–1724 (2013)

Thompson, W.R.: On the likelihood that one unknown probability exceeds another in view of the evidence of two samples. Biometrika 25(3–4), 285–294 (1933)

Li, K., Deb, K., Zhang, Q., Kwong, S.: An evolutionary many-objective optimization algorithm based on dominance and decomposition. IEEE Trans. Evol. Comput. 19(5), 694–716 (2015)

Zhang, Q., Li, H.: MOEA/D: A multiobjective evolutionary algorithm based on decomposition. IEEE Trans. Evol. Comput. 11(6), 712–731 (2007)

Zitzler, E., Laumanns, M., Thiele, L.: SPEA2: Improving the strength pareto evolutionary algorithm for multiobjective optimization, vol. 3242 (2001)

Deb, K., Pratap, A., Agarwal, S., Meyarivan, T.: A fast and elitist multiobjective genetic algorithm: NSGA-II. IEEE Trans. Evol. Comput. 6(2), 182–197 (2002)

Zhang, Q., Liu, W., Li, H.: The performance of a new version of moea/d on cec09 unconstrained mop test instances. In: Congress on Evolutionary Computation, pp. 203–208. IEEE Press (2009)

Çela, E.: The Quadratic Assignment Problem: Theory and Algorithms, ser. Combinatorial Optimization. Springer, US (2013)

Sabar, N., Ayob, M., Kendall, G., Qu, R.: A dynamic multiarmed bandit-gene expression programming hyper-heuristic for combinatorial optimization problems. IEEE Trans. Cybern. 45, 06 (2014)

Pillay, N., Qu, R.: Hyper-heuristics: theory and applications, 1st ed. Springer Nature (2018). https://doi.org/10.1007/978-3-319-96514-7

Drake, J.H., Kheiri, A., Özcan, E., Burke, E.K.: Recent advances in selection hyper-heuristics. Eur. J. Oper. Res. 285(2), 405–428 (2020)

Talbi, E.-G.: Metaheuristics: From Design to Implementation. Wiley, Hoboken (2009)

Oliver, I., Smith, D., Holland, J.R.: Study of permutation crossover operators on the traveling salesman problem. In: Genetic Algorithms and Their Applications: Proceedings of the Second International Conference on Genetic Algorithms: July 28–31, at the Massachusetts Institute of Technology, Cambridge, MA. Hillsdale, NJ: L. Erlhaum Associates. (1987)

Larranaga, P., Kuijpers, C., Murga, R., Inza, I., Dizdarevic, S.: Genetic algorithms for the travelling salesman problem: a review of representations and operators. Artif. Intell. Rev. 13, 129–170 (1999)

Russo, D.J., Roy, B.V., Kazerouni, A., Osband, I., Wen, Z.: A tutorial on thompson sampling. Found. Trends Mach. Learn. 11, 1–96 (2018)

Zitzler, E., Laumanns, M., Bleuler, S.: A tutorial on evolutionary multiobjective optimization. In: Gandibleux, X., Sevaux, M., Sörensen, K., T’kindt, V. (Eds.) Metaheuristics for Multiobjective Optimisation, Springer, Berlin Heidelberg, pp. 3–37 (2004). https://doi.org/10.1007/978-3-642-17144-4_1

Dokeroglu, T., Cosar, A.: A novel multistart hyper-heuristic algorithm on the grid for the quadratic assignment problem. Eng. Appl. Artif. Intell. 52, 10–25 (2016)

Dokeroglu, T., Cosar, A.: A novel multistart hyper-heuristic algorithm on the grid for the quadratic assignment problem. Eng. Appl. Artif. Intell. 52, 10–25 (2016)

Senzaki, B.N.K., Venske, S.M., Almeida, C.P.: Multi-objective quadratic assignment problem: an approach using a hyper-heuristic based on the choice function. In: Cerri, R., Prati, R.C. (eds.) Intelligent Systems, pp. 136–150. Springer International Publishing, Cham (2020). https://doi.org/10.1007/978-3-030-61377-8_10

Drugan, M.M.: Stochastic pareto local search for many objective quadratic assignment problem instances. In: 2015 IEEE Congress on Evolutionary Computation (CEC), pp. 1754–1761, May 2015

Rahimi, I., Gandomi, A.: Evolutionary many-objective algorithms for combinatorial optimization problems: A comparative study. Arch. Comput. Methods Eng. 28, 03 (2020)

de Almeida, C.P.: Transgenética computacional aplicada a problemas de otimização combinatória com múltiplos objetivos. Ph.D. dissertation, Federal University of Technology - Paraná, Brazil (2013)

Castro, O.R., Pozo, A.: A mopso based on hyper-heuristic to optimize many-objective problems. In: 2014 IEEE Symposium on Swarm Intelligence, pp. 1–8 (2014)

Walker, D.J., Keedwell, E.: Towards many-objective optimisation with hyper-heuristics: identifying good heuristics with indicators. In: Handl, J., Hart, E., Lewis, P.R., López-Ibáñez, M., Ochoa, G., Paechter, B. (eds.) PPSN 2016. LNCS, vol. 9921, pp. 493–502. Springer, Cham (2016). https://doi.org/10.1007/978-3-319-45823-6_46

Kuk, J., Gonçalves, R., Almeida, C., Venske, S., Pozo, A.: A new adaptive operator selection for nsga-iii applied to cec 2018 many-objective benchmark. In: 2018 7th Brazilian Conference on Intelligent Systems (BRACIS), pp. 7–12 (2018)

Fritsche, G., Pozo, A.: The analysis of a cooperative hyper-heuristic on a constrained real-world many-objective continuous problem. In: 2020 IEEE Congress on Evolutionary Computation (CEC), pp. 1–8 (2020)

Friesche, G., Pozo, A.: Cooperative based hyper-heuristic for many-objective optimization. In: Genetic and Evolutionary Computation Conference, ser. GECCO ’19. New York, NY, USA: Association for Computing Machinery, pp. 550–558 (2019)

Knowles, J., Corne, D.: Instance generators and test suites for the multiobjective quadratic assignment problem. In: Fonseca, C.M., Fleming, P.J., Zitzler, E., Thiele, L., Deb, K. (eds.) EMO 2003. LNCS, vol. 2632, pp. 295–310. Springer, Heidelberg (2003). https://doi.org/10.1007/3-540-36970-8_21

Ishibuchi, H., Masuda, H., Tanigaki, Y., Nojima, Y.: Modified distance calculation in generational distance and inverted generational distance. In: Gaspar-Cunha, A., Henggeler Antunes, C., Coello, C.C. (eds.) EMO 2015. LNCS, vol. 9019, pp. 110–125. Springer, Cham (2015). https://doi.org/10.1007/978-3-319-15892-1_8

Nebro, A.J., Durillo, J.J., Vergne, M.: Redesigning the jmetal multi-objective optimization framework. In: Conference on Genetic and Evolutionary Computation, ser. GECCO Companion ’15. New York, NY, USA: Association for Computing Machinery, pp. 1093–100 (2015)

Conover, W.: Practical nonparametric statistics, 3rd ed., ser. Wiley series in probability and statistics. New York, NY [u.a.]: Wiley (1999)

Author information

Authors and Affiliations

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Senzaki, B.N.K., Venske, S.M., Almeida, C.P. (2021). Hyper-Heuristic Based NSGA-III for the Many-Objective Quadratic Assignment Problem. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science(), vol 13073. Springer, Cham. https://doi.org/10.1007/978-3-030-91702-9_12

Download citation

DOI: https://doi.org/10.1007/978-3-030-91702-9_12

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91701-2

Online ISBN: 978-3-030-91702-9

eBook Packages: Computer ScienceComputer Science (R0)