Abstract

Genetic-based clustering meta-heuristics are important bioinspired algorithms. One such technique, termed Genetic Algorithm for Decision Boundary Analysis (GADBA), was proposed to support Structural Health Monitoring (SHM) processes in bridges. GADBA is an unsupervised, non-parametric approach that groups data into natural clusters by means of a specialized objective function. Albeit it allows a competent identification of damage indicators of SHM-related data, it achieves lackluster results on more general clustering scenarios. This study improves the objective function of GADBA based on a Cluster Validity Index (CVI) named Mutual Equidistant-scattering Criterion (MEC) to expand its applicability to any real-world problem.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

The world is increasingly filled with fruitful data, most of which daily stored in electronic media. As such, there is a high potential of technique research and development for automated data retrieval, analysis, and classification [15]. Around 90% of the data produced up to 2017 were generated in 2015 and 2016, and the tendency is to biennially double this amount [17]. The exponential increase in size and complexity of Big Data are aspects worthy of attention.

Latent potentialities for decision-making based on insights learned from historical data are only actually exploited if pushed into practice. A comprehensive information extraction procedure is composed of two sub-processes: data management and data analysis [15]. Management supports data acquisition, handling, storage, and retrieval for analysis [18, 29]; in contrast, data analysis refers to the evaluation and acquisition of intelligence from data.

It was pointed out by [17] that researchers of various subjects have been adopting methods from Machine Learning (ML) [5, 10] and Data Mining (DM) [31]. Many of them did so in Big Data contexts such as stock data monitoring, financial analysis, traffic monitoring, and Structural Health Monitoring (SHM) [14]. [6] demonstrated the relationships between the data management and analysis sub-processes in practice, with a specific focus on the application herein highlighted. On one hand, this work claims that Big Data is not restricted to a computerized manipulation of massive data streams; on the other hand, it emphasizes that SHM can learn ipsis litteris with the conscientious use of ML.

The problem of data grouping (i.e., data clustering) is one of the main tasks in ML [2, 5] and DM [31], prevailing in any discipline involving multivariate data analysis [21]. It gained a prominent place in many applications lately, especially in speech recognition [11], web applications [35], image processing [3], outlier detection [23], bioinformatics [1], and SHM [9].

A wide variety of Genetic Algorithm (GA)-based clustering techniques have been proposed in recent times [25, 28, 42]. Their search ability is commonly exploited to find suitable prototypes in the feature space such that a per-chromosome measure of the clustering results is optimized in each generation. In [2], two conflicting functions were proposed and defined based on cluster cohesion and connectivity. The goal was to reach well-separated, connected, and compact clusters by means of two criteria in an efficient, multi-objective particle swarm optimization algorithm. More recently, [20] combined K -means and a GA through a differentiate arrangement of genetic operators to conglomerate different solutions, with the intervention of fast hill-climbing cycles of K -means.

An unsupervised, non-parametric, GA-based approach to support the SHM process in bridges termed Genetic Algorithm for Decision Boundary Analysis (GADBA), which was proposed by [36], aims to group data into natural clusters. The algorithm is also supported by a method based on spatial geometry to eliminate redundant clusters. Upon testing, GADBA was more efficient in the task of fitting the normal condition than its state-of-the-art counterparts in SHM contexts. However, due to the specialization of its objective function to SHM contexts, GADBA is lackluster on more general clustering scenarios.

This work aims to improve the objective function of GADBA to expand its application potential to a wider range real-world problems. In this sense, a version of GADBA based on the Mutual Equidistant-scattering Criterion (MEC) is proposed as a general-purpose clustering approach. Four clustering algorithms are compared against the new proposal: K -means [26], Gaussian Mixture Models (GMM) [30], Linkage [22], and GADBA, of which the first three are well-known and explored in literature.

The remaining sections of this paper are divided as follows. Section 2 and Sect. 3 respectively define GADBA and MEC. Section 4 discusses the performance of the new proposal under some experimental evaluations. Finally, Sect. 5 summarizes and ends the paper.

2 Genetic Algorithm for Decision Boundary Analysis

Given a minimum (\(K_{min}\)) and maximum (\(K_{max}\)) number of clusters, clustering is done by the combination of a GA to dispose their centroids in the M-dimensional feature space, and a method called Concentric Hypersphere (CH) to agglutinate clusters, to choose an appropriate \(K \in [K_{min}, K_{max}]\) [37].

The initial population \(\mathbf {P}(t{=}0)\) is randomly created and each individual represents a set of K centroids, where K is randomly selected. The chromosome is then formed by concatenating K feature vectors (Fig. 1), whose values are initialized by randomly selecting K data points from the training set. A length ratio is defined as \(\gamma _{i} = K_{i} / K_{max}\), where \(K_{i}\) is the number of active centroids in individual i. The role of \(\gamma \) is to define the number of active centroids for a given candidate solution, since a single individual might have enabled/disabled centroids during the recombination process.

The parent selection is based on tournament with reposition, where R individuals are randomly selected and the fittest one is chosen to recombine with other individual chosen in the same way. The recombination is conducted in three steps for each pair of parents \(P_i\) and \(P_j\) to generate a pair of descendants:

-

1.

A random number \(r \in [0,1]\) is compared with \(p_{rec}\) defined a priori. If \(r \le p_{rec}\), then two cut points \(\pi _1\) and \(\pi _2\) are selected such that \(1 \le \pi _1 < \pi _2 \le min(K_i,K_j)\). The centroids in the range are then swapped. If \(r > p_{rec}\), the parents remain untouched.

-

2.

Likewise, two random numbers \(r, T \in [0,1]\) are picked for each centroid position in the parents and, if \(r \le p_{pos}\), defined a priori, an arithmetic recombination is conducted as follows:

$$\begin{aligned} F_{\mathbf {x},t}^{i} = F_{\mathbf {x},t}^{i} + (F_{\mathbf {y},t}^{j}-F_{\mathbf {x},t}^{i})T, \end{aligned}$$(1)$$\begin{aligned} F_{\mathbf {x},t}^{j} = F_{\mathbf {x},t}^{j} + (F_{\mathbf {y},t}^{i}-F_{\mathbf {x},t}^{j})T, \end{aligned}$$(2)where \(F_{\mathbf {x},t}^{i}\) and \(F_{\mathbf {x},t}^{j}\) are the values in the tth position of \(\mathbf {x}\)th centroid from parents \(P_i\) and \(P_j\), respectively. Similarly, \(F_{\mathbf {y},t}^{i}\) and \(F_{\mathbf {y},t}^{j}\) respectively correspond to the yth centroid of the i and jth parents. A pair of parents can recombine even if they have a different number of genes.

-

3.

Finally, the last step consists in arithmetically recombining the parents’ length ratio to define the length ratios of the offspring individuals.

The mutation is the result of a personalized two-step process:

-

1.

Let \(T_{\mathbf {x}} = K_{max}^{-1}\) and \(T_r\) be a random number on interval [0, 1]. The number of centroids to be enabled in an offspring individual is

. When \(K < K_{new} \le K_{max}\), the missing positions are filled with the information of \(K_{new}-K\) data points chosen at random.

. When \(K < K_{new} \le K_{max}\), the missing positions are filled with the information of \(K_{new}-K\) data points chosen at random. -

2.

Each centroid position can be mutated with a probability \(p_{mut}\), defined a priori, in which a Gaussian mutation is applied by using

$$\begin{aligned} F_{\mathbf {x},t}^{j} = F_{\mathbf {x},t}^{j} + \mathcal {N}(0,1), \end{aligned}$$(3)where \(\mathcal {N}(0,1)\) is a random number from a standard Gaussian distribution and \(F_{\mathbf {x},t}^{j}\) is the value in the tth position of \(\mathbf {x}\)th centroid.

Survivor selection is based on elitism, where the parents \(\mathbf {I}^{(t)}_p\) and offspring \(\mathbf {I}^{(t)}_c\) are concatenated into \(\mathbf {I}^{(t+1)}_p = \mathbf {I}^{(t)}_p \cup \mathbf {I}^{(t)}_c\), which is then sorted according to a fitness measure based on Pareto Front and Crowding Distance. The new population \(\mathbf {P}(t+1)\) is composed by the \(|\mathbf {P}|\) best individuals [8].

Parent selection, recombination, mutation, and survivor selection are repeated until a maximum number of iterations is reached and/or the difference of the current solution against the last one is smaller than a given threshold \(\epsilon \).

As mentioned, the CH algorithm is used to regularize the number of clusters encoded in the individuals. It is executed in each individual prior to their evaluation by determining the regions that limit each cluster in three steps:

-

1.

For each cluster, its centroid is dislocated to the mean of its data points.

-

2.

Each centroid is the center of a hypersphere whose radius will increase while the difference of density between two consecutive inflations is positive.

-

3.

If more than one centroid is found inside a hypersphere, they are agglutinated into a centroid located at their mean point.

3 A New Objective Function

3.1 Basic Notations and Definitions

The objective of clustering is to find out the best way to split a given data set \(\mathcal {X} \in \mathbb {R}^{N \times M}\), with N input vectors in an M-dimensional real-valued feature space  , into K mutually disjoint subsets (\(K \le N\)). Assume the vectors in \(\mathcal {X}\) have hard labels marking them as members of one cluster. A set of prototypes \(\varTheta \) is described as a function of \(\mathcal {X}\) and K as

, into K mutually disjoint subsets (\(K \le N\)). Assume the vectors in \(\mathcal {X}\) have hard labels marking them as members of one cluster. A set of prototypes \(\varTheta \) is described as a function of \(\mathcal {X}\) and K as

whereupon \(\varTheta \) contains K representative vectors  .

.

Let the hard label for cluster \(\kappa \) be

The prototypes are computed whereby N label vectors are organized into a partition over the vectors in \(\mathcal {X}\) such that

subject to

where \(\mu _i = y_\kappa \Leftrightarrow x_i\) is in cluster \(\kappa \).

Put another way, the membership of \(x_i\) to cluster \(\kappa \), \(\mu _{i \kappa }\), is either 1 if the ith object belongs to the \(\kappa \)th cluster, or 0 otherwise. Accordingly, \(\mu _{i \kappa } = 1\) for one value of \(\kappa \) only, such that [41]

The resulting grouping in this structure is hard [24], since one object belonging to one cluster cannot simultaneously belong to another.

In general, data clustering involves finding \(\{U,\varTheta \}\) to partition \(\mathcal {X}\) somehow. For a given initial \(\varTheta \), the optimal set of prototypes can be represented by centroids, medians, medoids, and others, in which the optimized partition U is obtained by assigning each input vector to the cluster with the nearest prototype. Both U and \(\varTheta \) comprise a dual structure (if one of them is known, the other one will also be) named clustering solution.

3.2 Cluster Validation

Most researchers have some theoretical difficulty in describing what a cluster is without assuming an induction principle (i.e., a criterion) [21]. A classic definition for them is: “objects are grouped based on the principle of maximizing intra-class similarity and minimizing inter-class similarity”. Another cluster definition involving density defines it as a connected, dense component such that high-density regions are separated by low-density ones [1, 19].

In clustering algorithms, K is usually assumed to be unknown. Since clustering is an unsupervised learning procedure (i.e., there is no prior knowledge on data distribution), the significance of the defined clusters must be validated for the data [33]. Therefore, one of the most challenging aspects of clustering is the quantitative examination of clustering results [31]. This procedure is performed by Cluster Validity Indices (CVIs), sometimes called criteria, which also targets hard problems such as cluster quality assessment and the degree wherewith a clustering scheme fits into a specific data set. The most common application of CVIs is to fine-tune K. Given \(\mathcal {X}\), a specific clustering algorithm and a range of values of K, these steps are executed [10, 43]:

-

1.

Successively repeat a clustering algorithm according to a number of clusters from a fixed range of values defined a priori: \(K \in [K_{min},K_{max}]\);

-

2.

Obtain the clustering result \(\{U,\varTheta \}\) for each K in the range;

-

3.

Calculate the validity index score for all solutions; and

-

4.

Select \(K_{opt}\) for which data partitioning provides the best clustering result.

CVIs are considered to be independent of the clustering algorithms used [40] and usually fall into one of two categories: internal and external [27, 32]. Internal validation does not require knowledge about the problem for it only uses information intrinsic to the data; hence, it has a practical appeal. Conversely, external validation is more accurate, but not always feasible [32]. Knowing this, it can be evaluated how well the achieved solution approaches a predefined structure based on previous and intuitive understanding regarding natural clusters.

3.3 Mutual Equidistant-Scattering Criterion

This work proposes the replacement of the objective function of GADBA with a CVI called MEC [12]. MEC is a non-parametric, internal validation index for crisp clustering. An immediate benefit of MEC is the absence of fine-tuning hyper-parameters, thus mitigating the user’s effort in operational terms and enabling the use of GADBA to cluster real-world data whose structure is unknown.

MEC assumes that “objects belonging to the same data cluster will tend to be more equidistantly scattered among themselves compared to data points of distinct clusters” [12]. As such, the mean absolute difference \(\mathcal {M}_\kappa \) is applied using multi-representative data in every clustering solution \(\{U,\varTheta \}\) obtained from a pre-determined K. MEC is weighted by a penalty of local restrictive nature to each cluster \(\kappa \) as well, while a global penalty is then applied a posteriori. Such penalties are a measure of intra-cluster homogeneity and inter-cluster separation.

Mathematical Formulations. The mean absolute difference is calculated between any possible pair of intra-cluster dissimilarities

where \(n_\kappa \) objects within the cluster are considered as representative data in formulation (thereby multi-representative). That is, \(D_\kappa \) is a strictly upper triangular matrix of order \(n_\kappa \).

Nevertheless, only the pairwise distances matter in \(\mathcal {M}_\kappa \). Thus, \(\varUpsilon (\cdot )\) reshapes all elements above the main diagonal of \(D_\kappa \) (Eq. 6) into a column vector

denoted by \(L_\kappa = \frac{n_\kappa (n_\kappa -1)}{2}\) intra-cluster Euclidean distances. The key part of MEC is then defined in Eq. 8 as

where \(L_\kappa> 1 \Leftrightarrow n_\kappa > 2\),  is the absolute value and \(\eta _\kappa = \frac{L_\kappa (L_\kappa -1)}{2}\) stands for the total number of differences for a single cluster.

is the absolute value and \(\eta _\kappa = \frac{L_\kappa (L_\kappa -1)}{2}\) stands for the total number of differences for a single cluster.

An exponential-like distance measure provides a robust property based on the analysis of the influence function [39]. [12] empirically observed that it works properly, particularly when we look for \(K_{opt}\) within a hierarchical data set. Therefore, a new homogeneity measure \(\varSigma _\kappa \) of non-negative exponential type was modelled as a penalty over \(\mathcal {M}_\kappa \) as

where \(\sigma _\kappa ^2\) is the variance of \(d_\kappa \).

One can observe that the homogeneity measure gets closer to zero with the approximation of the ideal model solution, where the criterion value is zero and, therefore, the loss of information is null. Thus, we have MEC defined as

where

The measure of global separation and penalty \(\lambda \), therefore, does not depend exclusively on the \(\kappa \)th cluster, but on the greater distance between the pairs of representative points of each data cluster (e.g., centroids). In a few words, \(\lambda \) globally weights the result of the solution. The presence of K, in Eq. 11, is a simple way to avoid overfitting as a result of clustering solutions already sufficiently accommodated to the data. In addition to avoiding overfitting, an improvement over other indices is the possibility of evaluating the clustering tendency (\(K{=}1\)) without resorting to additional, external techniques [43].

To illustrate, Fig. 2 shows the MEC composition for three feasible cluster solutions, where each dotted line represents one measure of intra-cluster dissimilarity and each cluster is depicted by a quadratic centroid. The operating mechanism of MEC, which encompasses both homogeneity (Eq. 9) and separation (Eq. 11), is visualised for \(K = 1, 2, 4\) (Figs. 2a, 2b, and 2c, respectively). The motivation is that the dissimilarity measures should be similar to each other when looking at each cluster. In this case, Fig. 2a contains the least suitable solution among those shown graphically, as their dissimilarity measures are more divergent in magnitude than those in Fig. 2b and 2c. The four-cluster solution (Fig. 2c) is the best within the solution set, as the distances among objects are exactly the same in each cluster.

At last, it is worth noting that Eq. 10 should be minimized,

where \(K \in [K_{min},K_{max}]\) and \(\hat{K}\) is inferred by the variation of K which determines the lowest MEC value, regardless of the clustering algorithm.

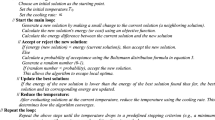

Improving the Time-Complexity of MEC. Equation 8 can be equivalently computed in terms of a log-linear time complexity as a function of \(L_\kappa \), to improve the computational efficiency of MEC. To do so, Eq. 8 can be reformulated to generate an auxiliary vector, as well as in sorting \(d_\kappa \) with an algorithm of same complexity (e.g., HeapSort). In fact, the time complexity of MEC will be entirely dependent on the complexity of the chosen sorting algorithm. As such, we have a complexity of \(\mathcal {O}(L_\kappa \log {}L_\kappa )\) with the Heap-Sort algorithm, or even \(\mathcal {O}(N^2)\), by the reformulated

where \(\hat{d_\kappa }\) is the increasing ordering of the values of \(d_\kappa \) and \(\tilde{L}_\kappa = L_\kappa - 1 = \left| c_\kappa \right| \); \(c_\kappa \) is an auxiliary variable that consists of a cumulative and naturally ordered vector of \(\hat{d_\kappa }\) defined as

Looking at Fig. 3, each square and value between square brackets depicts some vector position (l notation). In Eq. 13, the general form (\(L_\kappa -l\)) consists of the number of subtractions (Eq. 8) represented by arrows in the Figure, with \(\hat{d}_{l \kappa }\) depending on its location. The ordered \(\hat{d}_\kappa \) ensures that \(\hat{d}_{l \kappa } \le \hat{d}_{l+1,\kappa }\). By transitivity we have that, in Eq. 13,

Hence, \(\hat{d}_\kappa \) is sensibly less accessed, thus reducing the time complexity of MEC.

4 Results and Analyses

This section describes the results achieved by the five algorithms compared in this study: GADBA, its new version GADBA-MEC, K -means, GMM, and Linkage. Since none of the last three techniques automatically finds \(\hat{K}\), the Calinski and Harabasz Cluster Validity Index (CVI) is used to optimize \(\hat{K}\) through cluster validation (Sect. 3.2). Section 4.1 presents the methodology as how to, and by what means, the results were generated; Sect. 4.2 discusses the results highlighting the techniques that clustered the data; and the statistical significance of the results is analysed in Sect. 4.3.

4.1 Applied Methodology

The accuracy of the clustering algorithms is explained in a set of statistical indicators, such as absolute frequency, mean and standard deviation of \(\hat{K}\), in twenty clustering validations for each data set (i.e., \(N_r = 20\)). The Mean Absolute Percentage Error (MAPE) was then estimated between the desired (\(K_{opt}\)) and optimized (\(\hat{K}\)) number of clusters in Sect. 4.3. It generally expresses accuracy as a percentage which is designated by

Table 1 presents data sets from different benchmarks used for performance analysis when comparing clustering algorithms. To evaluate the algorithms, ten sets were selected as archetypes of real challenges faced in cluster validation (e.g., data hierarchy, clustering tendency, different densities/sizes).

The GADBA-MEC algorithm works through some previously specified hyper-parameters. Considering an oscillation of the best fitness in the order of \({1 \times 10^{-4}}\), the number of generations needed to infer the convergence of the fitness value is 50. The crossover and mutation probabilities are 0.8 and 0.03, respectively. The ring size of the tournament method for individual selection is set to 3. The population size and the maximum number of clusters are 100 and 30, respectively.

All experiments presented herein were conducted on a computer with an Intel© CoreTM i5 CPU @ 3.00 GHz with 8 GB of memory running MATLAB® 2017a. Most packages used in our tests are internal to MATLAB®.

4.2 Cluster Detection Results

One approach to evaluate the performance of the clustering algorithms is to analyse how frequently \(\hat{K} = K_{opt}\). In this sense, Table 2 shows the frequency of \(K_{opt}\) with emphasis on the highest absolute frequency by algorithm in blue.

Only GADBA is inconsistent with \(K_{opt}\) overall due to its SHM-related objective function, as proven by the performance of GADBA-MEC. Moreover, GADBA is the most unstable algorithm, as shown by the standard deviation values. The only highlight of GADBA was reached in Iris, although this might be explained by its tendency of settling on lower K values. Thus, a new version is justified ex post facto, attesting to the generalization potential of GADBA-MEC.

An important highlight of the proposed version is the detection of low-separation hierarchical data in H\(_1\). Virtually all other techniques tended to settle on expected sub-optimal K values. Contrastingly, in cases where GADBA-MEC did not reach the highest frequency (i.e., Dim-32, Hepta, and Iris), it at least approached the expected result in a stable manner, unlike GADBA.

For the rest of the algorithms, it should be noted that Linkage is deterministic, so its null standard deviation is expected. It was the second best in finding \(K_{opt}\), although it failed to assess clustering tendency. In this regard, only GADBA-MEC determined that \(\hat{K} = 1\) in GolfBall and One-G.

4.3 Statistical Significance Analyses

Friedman’s test is a non-parametric statistical test analogue to the two-way ANOVA (analysis of variance) [1, 16]. This statistical test is used to determine whether there are any statistically significant differences among algorithms from sample evidences. The samples to be considered are clustering algorithm performance results collected over the data sets, where the null hypothesis \(H_0\), to be considered is that all algorithms obtained similar results. Friedman’s test converts all results to ranks where all algorithms are classified for each problem according to its performance. As such, p-values can be computed for hypothesis testing. The p-value represents the probability of obtaining a result as extreme as the one observed, given \(H_0\) [7]. Specifically, given the significance level \(\alpha = 0.05\), the null hypothesis is rejected if \(p < \alpha \).

Since we want to know which algorithms are significantly different from each other when \(H_0\) is rejected, a post-hoc procedure is necessary to compare all possible algorithm pairs. In this work, the procedure presented in [16] is employed, in which the means of critical values at \(\alpha \) are compared to each absolute difference on mean ranks as  , \(i \ne j\). The absolute difference must be greater than \(\alpha \) to determine statistical significance.

, \(i \ne j\). The absolute difference must be greater than \(\alpha \) to determine statistical significance.

In this section, we verify the significance of GADBA-MEC using the Friedman’s test F. For this purpose, we calculate the MAPE of each data set. Once the algorithm with the smallest error is determined, the statistical significance test is applied to verify if the obtained difference is substantial. If this is the case, one can justify using one algorithm instead of another with more confidence.

Table 3 summarizes MAPE per data set emphasizing values above 100%. All algorithms had error rates above 100%, except GADBA-MEC with the lowest overall value (4.99%). Table 4 focuses on these errors, for which the Friedman’s test rejects the null hypothesis for an obtained p-value \(\ll \alpha = 0.05\). Accordingly, Friedman’s post-hoc test shows that there are significant improvements of the proposed version in terms of MAPE (Table 5), as well as the fact that significant differences are shown in virtually all algorithm pairs.

5 Conclusions and Further Work

Genetic-based clustering approaches play an important role in natural computing. In this sense, GADBA was introduced as an efficient, bioinspired approach to cluster data in SHM. Despite its competitive performance identifying structural components, it produces poor results on more general clustering scenarios. For this reason, this study proposed the replacement of its objective function for MEC, a recently developed CVI based on mutual equidistant-scattering analysis.

GADBA-MEC outperforms conventional clustering algorithms when statistically evaluated across various data sets, attaining the expected number of clusters more often than others. The results showed that GADBA-MEC yielded better results in terms of cluster validation and MAPE errors, in particular when handling hierarchical data and data with low separation. Also, only GADBA-MEC is able to verify the clustering tendency in the data sets addressed.

As future work, we intend to expand GADBA-MEC to multi-objective optimization contexts. It is also relevant to apply GADBA-MEC in real-world problems to validate its efficiency in finding natural clusters. Finally, comparing other CVI’s and bioinspired algorithms would be pertinent as well.

References

Alswaitti, M., Albughdadi, M., Isa, N.A.M.: Density-based particle swarm optimization algorithm for data clustering. ESWA 91, 170–186 (2018)

Armano, G., Farmani, M.R.: Multiobjective clustering analysis using particle swarm optimization. Expert Syst. Appl. 55, 184–193 (2016)

Bayá, A.E., Larese, M.G., Namías, R.: Clustering stability for automated color image segmentation. Expert Syst. Appl. 86, 258–273 (2017)

Bezdek, J.C., Pal, N.R.: Some new indexes of cluster validity. TSMC-B (1998)

Campello, R.: Generalized external indexes for comparing data partitions with overlapping categories. Pattern Recogn. Lett. 31(9), 966–975 (2010)

Cremona, C.: Big data and structural health monitoring. In: Challenges in Design and Construction of an Innovative and Sustainable Built Environment, 19th IABSE Congress Stockholm, pp. 1793–1801, September 2016

Daniel, W.W.: Applied nonparametric statistics. PWS-KENT, USA (1990)

Deb, K., Pratap, A., Agarwal, S., Meyarivan, T.: A fast and elitist multiobjective genetic algorithm: NSGA-ii. IEEE TEC 6(2), 182–197 (2002)

Diez, A., Khoa, N.L.D., Makki Alamdari, M., Wang, Y., Chen, F., Runcie, P.: A clustering approach for structural health monitoring on bridges. JCSHM (2016)

Dziopa, T.: Clustering validity indices evaluation with regard to semantic homogeneity. In: FedCSIS 2016, Gdańsk, Poland, 11–14 September 2016, pp. 3–9 (2016)

Esfandian, N., Razzazi, F., Behrad, A.: A clustering based feature selection method in spectro-temporal domain for speech recognition. EAAI 25(6), 1194–1202 (2012)

Flexa, C., Santos, R., Gomes, W., Sales, C., Costa, J.C.: Mutual equidistant-scattering criterion: a new index for crisp clustering. ESWA 128, 225–245 (2019)

Fränti, P., Sieranoja, S.: K-means properties on six clustering benchmark datasets. Appl. Intell. 48(12), 4743–4759 (2018)

Fumeo, E., Oneto, L., Anguita, D.: Condition based maintenance in railway transportation systems based on big data streaming analysis. PCS 53, 437–446 (2015)

Gandomi, A., Haider, M.: Beyond the hype: big data concepts, methods, and analytics. Int. J. Inf. Manage. 35(2), 137–144 (2015)

García, S., Fernández, A., Luengo, J., Herrera, F.: Advanced nonparametric tests for multiple comparisons in the design of experiments in computational intelligence and data mining: experimental analysis of power. IS 180(10), 2044–2064 (2010)

Gardiner, A., Aasheim, C., Rutner, P., Williams, S.: Skill requirements in big data: a content analysis of job advertisements. JCIF 58(4), 374–384 (2018)

Gil, D., Songi, I.Y.: Modeling and management of big data: challenges and opportunities. Futur. Gener. Comput. Syst. 63, 96–99 (2016)

Güngör, E., Özmen, A.: Distance and density based clustering algorithm using gaussian Kernel. Expert Syst. Appl. 69, 10–20 (2017)

Islam, M.Z., Estivill-Castro, V., Rahman, M.A., Bossomaier, T.: Combining k-means and a genetic algorithm through a novel arrangement of genetic operators for high quality clustering. Expert Syst. Appl. 91, 402–417 (2018)

Jain, A.K.: Data clustering: 50 years beyond k-means. PRL 31(8), 651–666 (2010)

Johnson, S.C.: Hierarchical clustering schemes. Psychometrika, pp. 241–254 (1967)

Langone, R., Reynders, E., Mehrkanoon, S., Suykens, J.A.: Automated structural health monitoring based on adaptive kernel spectral clustering. In: MSSP (2017)

Lingras, P., Chen, M., Miao, D.: Qualitative and quantitative combinations of crisp and rough clustering schemes using dominance relations. In: IJAR, pp. 238–258 (2014)

Lucasius, C., Dane, A., Kateman, G.: On k-medoid clustering of large data sets with the aid of a genetic algorithm: background, feasiblity and comparison. Anal. Chim. Acta 282(3), 647–669 (1993)

MacQueen, J.B.: Some methods for classification and analysis of multivariate observations. In: BSMSP, vol. 1, pp. 281–297. University of California Press (1967)

Mary, S.A.L., Sivagami, A.N., Rani, M.U.: Cluster validity measures dynamic clustering algorithms. ARPN J. Eng. Appl. Sci. 10(9), 4009–4012 (2015)

Maulik, U., Bandyopadhyay, S.: Genetic algorithm-based clustering technique. Pattern Recogn. 33(9), 1455–1465 (2000)

McAfee, A., Brynjolfsson, E.: Big data: the management revolution (2012)

Mclachlan, G., Basford, K.: Mixture Models: Inference and Applications to Clustering, vol. 38, January 1988

Moulavi, D., Jaskowiak, P.A., Campello, R.J.G.B., Zimek, A., Sander, J.: Density-based clustering validation. In: 14th SIAM ICDM, Philadelphia, PA (2014)

Pagnuco, I.A., Pastore, J.I., Abras, G., Brun, M., Ballarin, V.L.: Analysis of genetic association using hierarchical clustering and cluster validation indices. Genomics 109(5), 438–445 (2017)

Rubio, E., Castillo, O., Valdez, F., Melin, P., Gonzalez, C.I., Martinez, G.: An extension of the fuzzy possibilistic clustering algorithm using type-2 fuzzy logic techniques. Adv. Fuzzy Sys. 2017, 7094046 (2017)

Salvador, S., Chan, P.: Determining the number of clusters/segments in hierarchical clustering/segmentation algorithms. In: 16th IEEE ICTAI, USA (2004)

Silva, J.A., Hruschka, E.R., Gama, J.: An evolutionary algorithm for clustering data streams with a variable number of clusters. ESWA 67, 228–238 (2017)

Silva, M., Santos, A., Figueiredo, E., Santos, R., Sales, C., Costa, J.C.W.A.: A novel unsupervised approach based on a genetic algorithm for structural damage detection in bridges. Eng. Appl. Artif. Intell. 52(C), 168–180 (2016)

Silva, M., Santos, A., Santos, R., Figueiredo, E., Sales, C., Costa, J.C.: Agglomerative concentric hypersphere clustering applied to structural damage detection. Mech. Syst. Signal Process. 92, 196–212 (2017)

Ultsch, A.: Clustering with SOM: U*C. In: Proceedings of Workshop on Self-organizing Maps, pp. 75–82, January 2005

Wu, K.L., Yang, M.S.: Alternative c-means clustering algorithms. Pattern Recogn. 35, 2267–2278 (2002)

Wu, K.L., Yang, M.S.: A cluster validity index for fuzzy clustering. PRL (2005)

Xu, R., Wunsch, D., II.: Survey of clustering algorithms. TNN 16(3), 645–678 (2005)

Yang, C.L., Kuo, R., Chien, C.H., Quyen, N.T.P.: Non-dominated sorting genetic algorithm using fuzzy membership chromosome for categorical data clustering. Appl. Soft Comput. 30, 113–122 (2015)

Zhao, Q.: Cluster Validity in Clustering Methods. Ph.D. thesis, UEF, June 2012

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Flexa, C., Gomes, W., Moreira, I., Santos, R., Sales, C., Silva, M. (2021). Improving a Genetic Clustering Approach with a CVI-Based Objective Function. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science(), vol 13073. Springer, Cham. https://doi.org/10.1007/978-3-030-91702-9_14

Download citation

DOI: https://doi.org/10.1007/978-3-030-91702-9_14

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91701-2

Online ISBN: 978-3-030-91702-9

eBook Packages: Computer ScienceComputer Science (R0)

. When

. When