Abstract

Context: in the field of machine learning models are trained to learn from data, however often the context at which a model is deployed changes, degrading the performances of trained models and giving rise to a problem called Concept Drift (CD), which is a change in data distribution. Motivation: CD has attracted attention in machine learning literature, with works proposing modification to well-known algorithms’ structures, ensembles, online learning and drift detection, but most of the CD literature regards classification, while regression drift is still poorly explored. Objective: The goal of this work is to perform a comparative study of CD detectors in the context of regression. Results: we found that (i) PH, KSWIN and EDDM showed higher detection averages; (ii) the base learner has a strong impact in CD detection and (iii) the rate at which CD happens also affects the detection process. Conclusion: our experiments were executed in a framework that can easily be extended to include new CD detectors and base learners, allowing future studies to use it.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

Machine learning is a sub-field of artificial intelligence which develops models that are able to learn from data. Learning usually happens statically with fixed labels during the process. In the context of regression with continuous dependent variables, many models have been proposed with useful results in many scenarios. However, there has been a growing interest in models that are able to process streams of data and can learn incrementally [10].

While working with data streams, models often face changes in the probability distribution of input data, which can negatively affect performance if the model was trained on data sampled from a significantly different distribution. This problem is most commonly referred to as concept drift (CD). CD can happen in many different scenarios, such as weather forecasting, stock price prediction and fake news identification [17]. CD might happen in the conditional distribution of the dependent variable given the predictors, which is called real drift, or it can affect only the independent variable distribution, which is called virtual drift [12].

Due to its significance, CD has been widely studied in recent years with approaches involving structural changes applied to well-known models, ensembles and drift detection. However, most of these works targeted the task of classification. In this context, Gonçalves et al. (2014) [9] ran a large comparative study of CD detectors using Naive Bayes as the base learner. Their work showed that there is no single best CD detector and performance depends on the pair (dataset, CD detector), i.e. “there is no free lunch”, as expected in machine learning. These results motivate a similar study in the context of regression with continuous targets.

Therefore, this paper’s main contribution is to perform a wide comparative study of CD detectors for regression. We used seven different CD detectors together with 10 regression models. The 70 detector-base learner combinations were applied to four synthetic and four real datasets with virtual CD. Thus, our study presents significant variation of base learners, detectors and datasets. Experiments were evaluated according to prediction error and number of detected drift points. As another contribution, we develop our work such that future research can easily expand it by adding new detectors, base learners and datasets. In addition, this study focuses on continuous outputs in the dependent variable, which is still scarcely explored in the literature.

The rest of this paper is organized as follows: Sect. 2 presents the drift detection methods. Section 3 describes the parameters used in the drift detectors, the datasets used in the experiments, and the adopted evaluation methodology. The results obtained in the experiments are analyzed in Sect. 4. Finally, Sect. 5 presents our conclusions.

2 Background

CD detection methods use a base learner (regressor/classifier) to identify if an input is a drift point, i.e. for each instance the method outputs a drift prediction based on the base learner prediction and the observed target value. Most detectors perform this analysis in groups of instances at each time.

Many detectors have been proposed for regression. One of the first was the drift detection method (DDM) [8], which is based on the idea that the base learner’s error rate decreases as the number of samples increases, as long as the data distribution is stationary. If DDM finds an increased error rate above a calculated threshold, it detects that CD has happened. The threshold is given by \(p_{min}+s_{min}\) is minimum. Where, \(p_{min}\) is the minimum recorded error rate and \(s_{min}\) is the minimum recorded standard deviation.

DDM was later extended to monitor the average distance between two errors instead of only the error rate. This new method was called early drift detection method (EDDM) [2].

A different approach, called adaptive windowing (ADWIN) [4], is a sliding window algorithm which detects drifts and records updated statistics about data streams. The size of the sliding window is decided based on statistics calculated at different points of the stream. If the difference between the calculated statistics is higher than a predefined threshold, ADWIN detects the CD and discards all data from the stream up to the detected drift point.

Another early CD detector, called Page-Hinkley (PH) [14], computes observed values and their average up until the current point in a data stream. PH then detects the drift if the observed average is higher than a predefined threshold.

Frías-Blanco et al. (2014) [7] proposed two detectors. The first, called HDDM_A, is based on Hoeffding’s inequality and used the average of the continuous values in a data stream to determine if the data contain CD. The second method, HDDM_W, uses McDiarmid’s bounds and the exponentially weighted moving average (EWMA) statistic to estimate if an instance represents CD or not.

The Kolmogorov-Smirnov Windowing (KSWIN) method [16] uses the Kolmo- gorov-Smirnov (KS) statistical test to monitor data distributions without assuming any particular distributions. KSWIN keeps a fixed window size and compares the cumulative distribution of the current window to the previous one, detecting CD if the KS test rejects the null hypothesis that the distributions are the same.

3 Experiment Configuration

In this section we describe the datasets and the parametrization used for the drift detectors as well as the evaluation methodology used in the experiments.

3.1 Datasets

The datasets chosen for the experiments have all been previously used to study the concept drift problem. To analyze the performance of the methods, four synthetic datasets and four real-world datasets were used. The datasets allow us to analyze how the detectors identify the points of deviation in the data and false points detected. We use the synthetic data sets from the work of Almeida et al. (2019) [1] following the same methodological process of adding deviation. For each data set 5000 samples are created. The domain of each attribute is divided into ten equal-sized parts. The first 2000 samples correspond to the first seven parts of the domain of each variable. 1000 new instances are added and the domain is expanded until the 5000 samples are completed. Table 1 presents synthetic data.

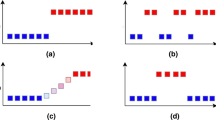

The real-world datasets used are Bike [12], FCCU1 (gasoline concentration), FCCU2 (LDO concentration) and FCCU3 (LPG concentration) [18]. We performed an analysis on the target variable using the interquartile range method (IQR), \(IQR=Q_{3}-Q_{1}\), where \(=Q_{3}\) is the third quartile and \(=Q_{1}\) is the first quartile. We perform a variability estimate to calculate lower \(L_{inf}=\bar{y}-1.5\, *\, IQR\) and higher \(L_{sup}=\bar{y}+1.5 * IQR\) bounds for drift identification, where \(\bar{y}\) is the mean of the target variable. At the end of the analysis we noticed that none of the data points were out of bounds. Thus, we artificially added drift along the data. Figure 1 shows the distributions of y and \(y_{Drift}\) (y with artificial drift).

To add the deviation we add the value of the dependent variable (y) by multiplying the standard deviation and a number that leaves the value of y greater than \(L_{sup}\). The added drift in the Bike dataset ((a)) has the characteristic of recurring and abrupt speed, as it appears in the data in a certain period of time, returns to the original concept, and appears again, in addition to abruptly changing the concept. We add two offsets by multiplying by the numbers three and four respectively. In the FCCU1, FCCU2, FCCU3 dataset we add three abrupt deviations. Which we multiply: by three, seven and, twelve (For FCCU1); three, six, three (For FCCU2); three, six, nine (For FCCU3).

Table 2 presents a summary of the main characteristics of each dataset, such as number of predictive attributes, number of samples and amount of drift.

3.2 Evaluation Setup

To evaluate the drift detection methods using the presented datasets, we use a methodology similar to the one described in [1]. For each time step, a specified amount of instances of the training set are read and used to train the regressor. Next, other examples from the test set are used to test the regressor. If the detection method identifies the occurrence of CD, the test data are used for training, which corresponds to the popular train-test-train approach. This procedure is repeated 30 times.

We used seven base learners paired with all tested drift detection methods. They are: Boosting Regressor (BR) [6], Decision Trees (DT) [3], Lasso Regression (LR) [20], Hoeffding Tree Regressor (HTR) [13], Multilayer Perception (MLP) [19], Bagging with meta-estimator BR (B-BR), Bagging with meta-estimator LR (B-LR), Bagging with meta-estimator DT (B-DT), Bagging with meta-estimator HTR (B-HTR) and Bagging with meta-estimator MLP (B-MLP) [5]. The experiments described here used Scikit-multiflow: A multi-output streaming framework [11]. To run the experiments, we used a machine running a 64-bit operational system with 4 GB of RAM and a 2.20 GHz Intel(R) i5-5200U CPU.

We use \(RelMAE= \frac{\left| y\_true-y\_pred \right| }{mean\_absolute\_error(y\_true,y\_pred)}\) to evaluate the predicted value against the actual value of the data set, indicating whether it correctly classified the instance.

If RelMAE gets a value less than 1, we treated the learner’s prediction as correct, otherwise it was considered wrong. This value is passed to a parameterized drift detector, which will flag the example for no drift or true drift. If true drift is identified, the base learner is trained on the instance that has just arrived. If no drift is found, the instance is not used for training. Scikit-multiflow provides the following concept drift detection methods: ADWIN, DDM, EDDM, HDDM_A, HDDM_W, KSWIN and PageHinkley, which were used with their default settings for the paper. The prequential evaluation method or interleaved test-then-train method strategy is used for the evaluation of the learning models with concept drift and we performed thirty iterations [1]. We chose the Mean Square Error (MSE) for error metric. In addition, we carry out statistical tests to analyze the predictive performance of the learning models in relation to the MSE error. The Friedman test is applied with \(\alpha = 0.05\) [9]. We present our statistical analysis using critical difference diagrams.

We initially performed experiments to identify the best parameter settings for each of the base learners. Specifically, we conducted a grid search to choose hyperparameters from the base learners using the initial training data. After identifying the parameters that most influence performance we conducted the actual experiments. All models are from sklearn [15] and scikit-multiflow [11].

4 Experimental Results

In this section we present the results of the experiments with the concept drift detection methods.

4.1 Predictive Accuracy and Drift Identification

We performed a broad base learner study together with the detectors. Therefore, in addition to the detectors, we compare the base learner without updating with test data (called No Partial) and updating whenever new test data arrives, regardless of detection (called Partial). Figure 2 shows the heat map of the mean MSE value of all CD detectors and base learners across the 30 iterations with distinct seeds. Errors are normalized between 0 and 1.

We analyzed the average points of detection for all different scenarios. Figure 3 presents the mean error along the data stream for dataset 3d_Mex.hat. Only the LR, HTR, B-LR and B-HTR base learners showed lower errors as the new test data arrived, even in cases when no CD was detected by ADWIN, DDM, HDDM_A and HDDM_W. A higher number of wrong detections can lead to larger errors, because models are retrained with data from the detected new concept. Additionally, due to the small number of training data of each new concept, base learners that are not able to learn incrementally may show worse performances.

Differently from the previous scenario, Fig. 4 shows that for dataset \(Friedman\#1\) most models have increasing errors along the data stream. However, base learner HTR together with detector PH have decreased errors beginning with point 2000. This happened due to successful drift point detections followed by base learner updates. Base learners MLP and B-MLP also showed lower errors when paired with the ADWIN and PH detectors.

Figure 5 presents results for dataset \(Friedman\#3\). Most base learners, except MLP and B-MLP, had lower errors at point 2000. For dataset Mult, Fig. 6 shows variation in the error value, with increasing errors starting at point 2000 in most cases. In such cases, the error increases because it is necessary to retrain the base learners given the new concept. Thus, we can see that the base learners were not updated correctly.

Regarding synthetic data, the best average detection performances were obtained by EDDM, KSWIN and PH, considering the different base learners. These indicates that in these scenarios where concept drift happens gradually, these methods are able to detect small variations in data distribution.

For the Bike dataset (Fig. 7), only ADWIN and PH were not able to detect any concept drift in the data. The base learners varied significantly according in their error values. Additionally, we observed that all pairs of base learners and detectors increased their errors around point 200, with worse performances coming from ADWIN and PH. These detectors did not adjust well to the concept changes in this dataset.

Due to small changes in the average errors for datasets FCCU1, FCCU2 and FCCU3, Fig. 8 only shows the average detection points. Only KSWIN was able to find drift points. This is likely due to lack of enough data to train the base learners, which would allow better precision for the detectors. For FCCU1 and FCCU3, only the Partial model, which always retrains with the new test data, obtained significantly lower errors. For FCCU2, all models behaved similarly, with slightly decreasing errors.

Our results show that, in addition to the base learner and to the detector, the pace at which the drift happens and the number of instances available to update the base learner all contribute significantly to the overall performance.

4.2 Statistical Analysis

We used critical difference diagrams and Nemenyi test to compare all detectors across all 8 datasets for the different base learners. The resulting diagrams can be seen in Fig. 9. This analysis shows that the detectors have statistically similar performances for all base learners.

As shown in Fig. 9a, all detectors had similar performances with base learner BR. This even includes the model without any CD detectors (No Partial), which only learns from the first training data and is only used for testing. The same happens in Figs. 9b, 9f, 9i and 9g. Figure 9c, on the other hand, shows that there are two distinct groups of detectors. This is mainly because the Partial method, which always updates with every new block of test data in the stream, significantly outperformed every detector, except for EDDM and KSWIN. HDDM_A, HDDM_W, ADWIN, PH and DDM had worse errors than No Partial on average, although these results were not statistically significant. Finally, DDM was statistically worse than Partial, because their distance is larger than the critical difference.

Figure 9d shows that No Partial, ADWIN, DDM, HDDM_A and HDDDM_W share the same average rank, which means that these detectors did not improve the performance of the base learner trained only on the original training data. The same happened with DDM with base learner MLP (9e), where DDM and No Partial were outside the critical difference when compared to Partial, which led to two separate performance groups. Similarly, 9h shows that ADWIN and No Partial are statistically worse than Partial, resulting in two groups: the first includes methods that had statistically similar errors to Partial and the second includes those that were similar to ADWIN and No Partial. Finally, Fig. 9j shows that only EDDM, No Partial and DDM were statistically worse than Partial.

The only case were Partial was not alone in the top rank was Fig. 9i, were it shared the position with No Partial, which means that there was not benefit in retraining the model. It is important to point out that it is actually a good result when a detector is statistically similar to Partial, because it means that, as a result of using the detector, the base learner performed just as well as if it was always retrained, even though it only retrains when the detector identifies a drift.

5 Conclusion

In this paper, seven CD detectors were compared using ten base learners and eight real and synthetic datasets. The resulting Python-based frameworkFootnote 1 used the sklearn and skmultiflow libraries and will be available for future use by other researchers, who will be able to extend it with other base learners, detectors and datasets. All base learners were optimized using a grid search procedure, such that they would present their best results considering the training data.

Average MSE results indicate that PH and KSWIN were the best detectors for the synthetic datasets. For the Bike dataset, EDDM, HDDM_A and HDDM_W showed the best average errors. For the other real datasets, none of the detectors identified any drift points.

The base learner has an important impact on CD detection. Additionally, considering the approach that we used in this paper, i.e. the base learner is updated whenever CD is detected, it is useful that the base learner is able to perform incremental learning, such that it gradually adapts to new concepts.

Therefore, the main limitation of our work is that only one of the base learners can learn incrementally, with the other ones training from scratch with every batch of test data that was flagged as CD. Another important limitation was that our real datasets did not have a ground truth for the presence of CD, thus we artificially added drift to the data, but most detectors were not able to capture that.

Future works include adding other incremental learners to the framework, investigating additional real datasets and analyzing the impact of different rates of change in data distribution.

References

de Almeida, R., Goh, Y.M., Monfared, R., Steiner, M.T.A., West, A.: An ensemble based on neural networks with random weights for online data stream regression. Soft Comput., 1–21 (2019)

Baena-Garcıa, M., del Campo-Ávila, J., Fidalgo, R., Bifet, A., Gavalda, R., Morales-Bueno, R.: Early drift detection method. In: Fourth International Workshop on Knowledge Discovery from Data Streams, vol. 6, pp. 77–86 (2006)

Batra, M., Agrawal, R.: Comparative analysis of decision tree algorithms. In: Panigrahi, B.K., Hoda, M.N., Sharma, V., Goel, S. (eds.) Nature Inspired Computing. AISC, vol. 652, pp. 31–36. Springer, Singapore (2018). https://doi.org/10.1007/978-981-10-6747-1_4

Bifet, A., Gavalda, R.: Learning from time-changing data with adaptive windowing. In: Proceedings of the 2007 SIAM International Conference on Data Mining, pp. 443–448. SIAM (2007)

Breiman, L.: Bagging predictors. Mach. Learn. 24(2), 123–140 (1996)

Duffy, N., Helmbold, D.: Boosting methods for regression. Mach. Learn. 47(2), 153–200 (2002)

Frias-Blanco, I., del Campo-Ávila, J., Ramos-Jimenez, G., Morales-Bueno, R., Ortiz-Diaz, A., Caballero-Mota, Y.: Online and non-parametric drift detection methods based on Hoeffding’s bounds. IEEE Trans. Knowl. Data Eng. 27(3), 810–823 (2014)

Gama, J., Medas, P., Castillo, G., Rodrigues, P.: Learning with drift detection. In: Bazzan, A.L.C., Labidi, S. (eds.) SBIA 2004. LNCS (LNAI), vol. 3171, pp. 286–295. Springer, Heidelberg (2004). https://doi.org/10.1007/978-3-540-28645-5_29

Gonçalves Jr., P.M., de Carvalho Santos, S.G., Barros, R.S., Vieira, D.C.: A comparative study on concept drift detectors. Expert Syst. Appl. 41(18), 8144–8156 (2014)

Mastelini, S.M., de Leon Ferreira de Carvalho, A.C.P.: 2CS: correlation-guided split candidate selection in Hoeffding tree regressors. In: Cerri, R., Prati, R.C. (eds.) BRACIS 2020. LNCS (LNAI), vol. 12320, pp. 337–351. Springer, Cham (2020). https://doi.org/10.1007/978-3-030-61380-8_23

Montiel, J., Read, J., Bifet, A., Abdessalem, T.: Scikit-multiflow: a multi-output streaming framework. J. Mach. Learn. Res. 19(72), 1–5 (2018). http://jmlr.org/papers/v19/18-251.html

Oikarinen, E., Tiittanen, H., Henelius, A., Puolamäki, K.: Detecting virtual concept drift of regressors without ground truth values. Data Min. Knowl. Discov. 35(3), 726–747 (2021). https://doi.org/10.1007/s10618-021-00739-7

Osojnik, A., Panov, P., Džeroski, S.: Tree-based methods for online multi-target regression. J. Intell. Inf. Syst. 50(2), 315–339 (2017). https://doi.org/10.1007/s10844-017-0462-7

Page, E.S.: Continuous inspection schemes. Biometrika 41(1/2), 100–115 (1954)

Pedregosa, F., et al.: Scikit-learn: machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011)

Raab, C., Heusinger, M., Schleif, F.M.: Reactive soft prototype computing for concept drift streams. Neurocomputing 416, 340–351 (2020)

dos Santos, V.M.G., de Mello, R.F., Nogueira, T., Rios, R.A.: Quantifying temporal novelty in social networks using time-varying graphs and concept drift detection. In: Cerri, R., Prati, R.C. (eds.) BRACIS 2020. LNCS (LNAI), vol. 12320, pp. 650–664. Springer, Cham (2020). https://doi.org/10.1007/978-3-030-61380-8_44

Soares, S.G., Araújo, R.: An on-line weighted ensemble of regressor models to handle concept drifts. Eng. Appl. Artif. Intell. 37, 392–406 (2015)

Valença, M.: Fundamentos das redes neurais: exemplos em java. Olinda, Pernambuco: Editora Livro Rápido (2010)

Xu, H., Caramanis, C., Mannor, S.: Robust regression and lasso. IEEE Trans. Inf. Theory 56(7), 3561–3574 (2010)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Lima, M., Filho, T.S., de A. Fagundes, R.A. (2021). A Comparative Study on Concept Drift Detectors for Regression. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science(), vol 13073. Springer, Cham. https://doi.org/10.1007/978-3-030-91702-9_26

Download citation

DOI: https://doi.org/10.1007/978-3-030-91702-9_26

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91701-2

Online ISBN: 978-3-030-91702-9

eBook Packages: Computer ScienceComputer Science (R0)