Abstract

Numerous problems in machine learning require some type of dimensionality reduction. Unsupervised metric learning deals with the definition of intrinsic and adaptive distance functions of a dataset. Locally linear embedding (LLE) consists of a widely used manifold learning algorithm that applies dimensionality reduction to find a more compact and meaningful representation of the observed data through the capture of the local geometry of the patches. In order to overcome relevant limitations of the LLE approach, we introduce the LLE Kullback-Leibler (LLE-KL) method. Our objective with such a methodological modification is to increase the robustness of the LLE to the presence of noise or outliers in the data. The proposed method employs the KL divergence between patches of the KNN graph instead of the pointwise Euclidean metric. Our empirical results using several real-world datasets indicate that the proposed method delivers a superior clustering allocation compared to state-of-the-art methods of dimensionality reduction-based metric learning.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

The main objective of dimensionality reduction unsupervised metric learning is to produce a lower dimensional representation that preserves the intrinsic local geometry of the data. The locally linear embedding (LLE) consists of one of the pioneering algorithms of such a class, which has been successfully applied to non-linear feature extraction for pattern classification tasks [9]. Although more efficient than linear methods, the LLE still has some important limitations.

Firstly, it is rather sensitive to noise or outliers [14]. Secondly, in datasets that are not represented by smooth manifolds, the LLE frequently fails to produce reasonable results. It is worth mentioning that variations of the LLE have been proposed to overcome such limitations, with varying degrees of success. In Hessian eigenmaps, the local covariance matrix used in the computation of the optimal reconstruction weights is replaced by the Hessian matrix (i.e., the second order derivatives matrix), which encodes relevant curvature information [1, 13, 15]. A further extension is known as the local tangent space alignment (LTSA).

The difference is that the LTSA builds the locally linear patch with an approximation of the tangent space through the application of the principal component analysis (PCA) over a linear patch. Subsequently, the local representations are aligned to the point in which all tangent spaces become aligned in the unfolded manifold [16]. In addition, the modified LLE (MLLE) introduces several linearly independent weight vectors for each neighborhood of the KNN graph. Some works in the relevant literature describe that the local geometry of MLLE is more stable in comparison with the LLE [17]. One remaining issue still present in such recent developments of the LLE method is the adoption of Euclidean distance as the similarity measure within a linear patch.

In the proposed LLE-KL method, we incorporate the KL divergence into the estimation of the optimal reconstruction weights. The main contribution of the proposed method is that, through the replacement of the pointwise Euclidean distance by a patch-based information-theoretic distance (i.e., KL divergence), the LLE becomes less sensitive to the presence of noise or outliers. Our empirical clustering analysis performed subsequently to the dimensionality reduction for several real-world datasets popularly used in the machine learning literature, indicate that the proposed LLE-KL method is capable of generating more reasonable clusters in terms of silhouette coefficients compared to state-of-the-art manifold learning algorithms, such as the Isomap [12] and UMAP [6].

The remainder of the paper is organized as follows. Section 2 discusses previous relevant work, with focus on the relative entropy and the regular LLE algorithm. Section 3 details the proposed LLE-KL algorithm. Section 4 presents the data used as well as computational experiments and empirical results. Section 5 concludes with our final remarks and suggestions for future research.

2 Related Work

In this section, we discuss the relative entropy (KL divergence) between probability density functions as a similarity measure among random variables, exploring its computation in the Gaussian case. Moreover, we discuss the LLE algorithm and detail its mathematical derivation.

2.1 Kullback-Leibler Divergence

In machine learning applications, the problem of quantifying similarity levels between different objects or clusters consists of a challenging task, especially in cases where the standard Euclidean distance is not a reasonable choice. Many studies on feature selection adopt statistical divergences to select the set of features that should maximize some measure of separation between classes. Part of their success is due to the fact that most dissimilarity measures are related to distance metrics. In such a context, information theory provides a solid mathematical background for metric learning in pattern classification. The entropy of a continuous random vector x is given by:

where p(x) is the probability density function (pdf). In a similar fashion, we may define the cross-entropy between two probability density functions p(x) and q(x):

The KL divergence is the difference between cross-entropy and entropy [4]:

An important property is that the relative entropy is non-negative, therefore, \(D_{KL}(p, q) \ge 0\).

2.2 The LLE Algorithm

The LLE consists of a local method, thus, the new coordinates of any \(\vec {x}_i \in R^m\) depends only on the neighborhood of the respective point. The main hypothesis behind the LLE is that for a sufficiently high density of samples, it is expected that a vector \(\vec {x}_i\) and its neighbors define a linear patch (i.e., they all belong to an Euclidean subspace [9]). Hence, it is possible to characterize the local geometry by linear coefficients:

consequently, we can reconstruct a vector as a linear combination of its neighbors.

The LLE algorithm requires as input an \(n \times m\) data matrix X, with rows \(\vec {x}_i\), a defined number of dimensions \(d < m\), and an integer \(k > d + 1\) for finding local neighborhoods. The output is an \(n \times d\) matrix Y, with rows \(\vec {y}_i\). The LLE algorithm may be divided into three main steps [9, 10], as follows:

-

1.

From each \(\vec {x}_i \in R^m\) find its k nearest neighbors;

-

2.

Find the weight matrix W that minimizes the reconstruction error for each data point \(\vec {x}_i \in R^m\);

-

3.

Find the coordinates Y which minimize the reconstruction error using the optimum weights.

In the following subsections, we describe how to obtain the solution to each step of the LLE algorithm.

Finding Local Linear Neighborhoods. A relevant aspect of the LLE is that this algorithm is capable of recovering embeddings which intrinsic dimensionality d is smaller than the number of neighbors, k. Moreover, the assumption of a linear patch imposes the existence of an upper bound on k. For instance, in highly curved datasets, it is not reasonable to have a large k, otherwise such an assumption would be violated. In the uncommon situation where \(k > m\), it has been shown that each sample may be perfectly be reconstructed from its neighbors, from which another problem emerges: the reconstruction weights are not anymore unique.

To overcome this limitation, some regularization is necessary in order to break the degeneracy [10]. Lastly, a further concern in the LLE algorithm refers to the connectivity of the KNN graph. In the case the graph contains multiple connected components, then the LLE should be applied separately on each one of them, otherwise the neighborhood selection process should be modified to assure global connectivity [10].

Least-Squares Estimation of the Weights. The second step of the LLE is to reconstruct each data point from its nearest neighbors. The optimal reconstruction weights may be computed in closed form. Without loss of generality, we may express the local reconstruction error at point \(\vec {x}_i\) as follows:

Defining the local covariance matrix C as:

we then have the following expression for the local reconstruction error:

Regarding the constraint \(\sum _j w_j = 1\), that may be interpreted in two different manners, namely geometrically and probabilistically. From a geometric point of view, it provides invariance under translation, thus, by adding a given constant vector \(\vec {c}\) to \(\vec {x}_i\) and all of its neighbors, the reconstruction error remains unchanged. In terms of probability, enforcing the weights to sum to one leads W to become a stochastic transition matrix [10] directly related to Markov chains and diffusion maps [11]. As detailed below, in the minimization of the squared error the solution is found through an eigenvalue problem. In fact, the estimation of the matrix W reduces to n eigenvalue problems. Considering that there are no constraints across the rows of W, we may then find the optimal weights for each sample \(\vec {x}_i\) separately, drastically simplifying the respective computations. Therefore, we have n independent constrained optimization problems given by:

for \(i = 1, 2, ..., n\). Using Lagrange multipliers, we express the Lagrangian function as follows:

Taking the derivatives with relation to \(\vec {w}_i\) as follows:

leads to

Which is equivalent to solving the following linear system:

and then normalizing the solution to guarantee that \(\sum _j w_i(j) = 1\) by dividing each coefficient of the vector \(\vec {w}_i\) by the sum of all the coefficients:

In the case that k (i.e., number of neighbors) is greater than m (i.e., number of features) then, in general, the space spanned by k distinct vectors consists of the whole space. This means that \(\vec {x}_i\) may be expressed exactly as a linear combination of its k-nearest neighbors. In fact, if \(k > m\), there are generally infinitely many solutions to \(\vec {x}_i = \sum _j w_j \vec {x}_j\), due to the fact that there would be more unknowns (k) than equations (m). In such a case, the optimization problem is ill-posed and, thus, regularization is required. A common regularization technique refers to the Tikonov regularization, which adds a penalization term to the least squares problem, as follows:

where \(\alpha \) controls the degree of regularization. In other words, it selects the weights which minimize a combination of reconstruction error as well as the sum of the squared weights. In the case that \(\alpha \rightarrow 0\), then there is a least-squares problem. However, in the opposite limit (i.e., \(\alpha \rightarrow \infty \)), the squared-error term becomes negligible, allowing the minimization of the Euclidean norm of the weight vector \(\vec {w}\). Typically, \(\alpha \) is set to be a small but non-zero value. In this case, the n independent constrained optimization problems are:

for \(i = 1, 2, ..., n\). The Lagrangian function is defined by:

Taking the derivative with respect to \(\vec {w}_i\) and setting the result to zero:

where \(\lambda \) is selected to properly normalize \(\vec {w}_i\), which is equivalent to solving the following linear system:

and then normalize the solution. In other words, to regularize the problem it is necessary to add a small perturbation into the main diagonal of the matrix \(C_i\). In all experiments reported in the present paper, we use the regularization parameter \(\alpha = 10^{-4}\).

Finding the Coordinates. The main idea in the last stage of the LLE algorithm is to use the optimal reconstruction weights estimated by least-squares as the proper weights on the manifold and then solve for the local manifold coordinates. Thus, fixing the weight matrix W, the goal is to solve another quadratic minimization problem to minimize the following:

Hence, the following question needs to be addressed: what are the coordinates \(\vec {y}_i \in R^d\) (approximately on the manifold), that such weights (W) reconstruct? In order to avoid degeneracy, we have to impose two constraints, as follows:

-

1.

The mean of the data in the transformed space is zero, otherwise we would have an infinite number of solutions;

-

2.

The covariance matrix of the transformed data is the identity matrix, therefore, there is no correlation between the components of \(\vec {y} \in R^d\) (this is a statistical constraint to assess that the output space is the Euclidean one, defined by an orthogonal basis).

However, unlikely the estimation of the weights W, finding the coordinates does not simplify into n independent problems, because each row of Y appears in \(\varPhi \) multiple times, as the central vector \(y_i\) and also as one of the neighbors of other vectors. Thus, firstly, we rewrite equation (21) in a more meaningful manner using matrices, as follows:

Applying the distributive law and expanding the summation, we then obtain:

Denoting by Y the \(d \times n\) matrix in which each column \(\vec {y}_i\) for \(i=1,2,...,n\) stores the coordinates of the i-th sample in the manifold and acknowledging that \(\vec {w}_i(j) = 0\), unless \(\vec {y}_j\) is one of the neighbors of \(\vec {y}_i\), we may express \(\varPhi (Y)\) as follows:

Defining the \(n \times n\) matrix M as:

we get the following optimization problem:

Thus, the Lagrangian function is given by:

Differentiating and setting the result to zero, finally leads to:

where \(\beta = \frac{\lambda }{n}\), showing that the Y must be composed by the eigenvectors of the matrix M. Since there is a minimization problem to be solved, it is then required to select Y to compose the d eigenvectors associated to the d smallest eigenvalues. Note that M being an \(n \times n\) matrix, it contains n eigenvalues and n orthogonal eigenvectors. Although the eigenvalues are real and non-negative values, its smallest value is always zero, with the constant eigenvector \(\vec {1}\). Such a bottom eigenvector corresponds to the mean of Y and should be discarded to impose the constraint that \(\sum _{i=1}^{n} \vec {y}_i = 0\) [7]. Therefore, to get \(\vec {y}_i \in R^d\), where \(d < m\), it is necessary to select the \(d+1\) smallest eigenvectors and discard the constant eigenvector with zero eigenvalue. In other words, one must select the d eigenvectors associated to the bottom non-zero eigenvalues.

3 The KL Divergence-Based LLE Method

The main motivation to introduce the LLE-KL method is to find a surrogate for the local matrix \(C_i\) for each sample of the dataset. Recall that, originally, \(C_i(j, k)\) is computed as the inner product between \(\vec {x}_i - \vec {x}_j\) and \(\vec {x}_i - \vec {x}_k\), which means that we employ the Euclidean geometry in the estimation of the optimal reconstruction weights. In the definition of such matrix it is used a non-linear distance function, namely the relative entropy between Gaussian densities estimated within different patches of the KNN graph. Our inspiration is the parametric PCA, an information-theoretic extension of the PCA method that applies the KL divergence to compute a surrogate for the covariance matrix (i.e., entropic covariance matrix) [5].

Let \(X = \{ \vec {x}_1 , \vec {x}_2 , \ldots , \vec {x}_n \}\), with \(\vec {x}_i \in R^m\), be our data matrix. The first step in the proposed method consists of building the KNN graph from X. At this early stage, we employ the extrinsic Euclidean distance to compute the nearest neighbors of each sample \(\vec {x}_i\). Denoting by \(\eta _i\) the neighborhood system of \(\vec {x}_i\), a patch \(P_i\) is defined as the set \(\{ \vec {x}_i \cup \eta _i \}\). It is worth noticing that the number of elements of \(P_i\) is \(K+1\), for \(i = 1, 2, ..., n\). In other words, a patch \(P_i\) is given by an \(m \times (k+1)\) matrix:

The rationale of the proposed method is considering each row of the matrix \(P_i\) as a sample of a univariate Gaussian random variable, and then estimating the parameters \(\mu \) (mean) and \(\sigma ^2\) (variance) of each row, leading to an m-dimensional vector of tuples, as follows:

Let p(x) and q(x) be univariate Gaussian densities, \(N(\mu _1, \sigma _1^2)\) and \(N(\mu _2, \sigma _2^2)\), respectively. Then, the relative entropy becomes:

By the definition of central moments, we then have:

which leads to the following closed-form equation:

As the relative entropy is not symmetric, it is possible to compute its symmetrized counterpart as follows:

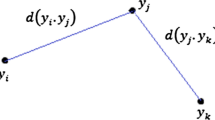

Let \(\vec {d}_{ij}\) be the m-dimensional vector of symmetrized relative entropies between the components of \(\vec {p}_i\) and \(\vec {p}_j\), computed by the direct application of equation (36) in each pair of parameter vectors. Then, in the proposed method, the entropic matrix \(C_i\) is computed as follows:

where the column vector \(\vec {d}_{ij}\) involves the patches \(\vec {p}_i\) and \(\vec {p}_j\), while the row vector \(\vec {d}_{ik}^T\) involves the patches \(\vec {p}_i\) and \(\vec {p}_k\). It is worth noticing that unlike the established LLE method - which employs the pairwise Euclidean distance, the proposed LLE-KL method employs a patch-based distance (relative entropy) instead, becoming less sensitive to the presence of noise and outliers in the observed data. The remaining steps of the algorithm are precisely the same as in the established LLE. The set of logical steps comprising the LLE-KL method is detailed in Algorithm 1.

In the subsequent sections, we present some computational experiments to compare the performance of the proposed method against several manifold learning algorithms.

4 Data, Experiments and Results

In the present section is presented the data used, experiments performed, and a discussion on the respective empirical results.

4.1 Data

In the present study, a total of 25 datasets are used as input data. Such datasets are widely used in machine learning estimations and applications. The datasets used in the present study were collected from openML.org, which contains detailed information regarding the number of instances, features, and classes for each one of datasets.

4.2 Experiments and Results

In order to test and evaluate the proposed method, a series of computational experiments is performed to quantitatively compare clustering results obtained subsequently to the dimensionality reduction into 2-D spaces using the silhouette coefficient (SC) - i.e., a measure of goodness-of-fit into a low-dimensional representation [8]. All results are reported in Table 1. It is worth noticing that in 23 out of 25 datasets (i.e., 92% of the cases), the LLE-KL method obtains the best performance in terms of the SC.

Regarding the means and medians, one may realize that the proposed method performs better in comparison with the established LLE as well as two of its variations (i.e., Hessian LLE and LTSA). In addition, the proposed method also achieves a superior performance compared to the state-of-the-art algorithm UMAP. It is worth noticing that, commonly, the UMAP requires a reasonably large sample size to perform well, which is not always the case in the empirical analysis of the present paper.

To check if the results obtained by the proposed method are statistically superior to the competing methods, we perform a Friedman test - i.e. non-parametric test for paired data in case of more than two groups [2]. For a significant level \(\alpha = 0.01\), we conclude that there is strong evidence to reject the null hypothesis that all groups are identical (\(p = 1.12 \times 10^{-11}\)). In order to analyze which groups are significantly different, we then perform a Nemenyi post-hoc test [3]. According to this test, there is strong evidence that the LLE-KL method produces significantly higher SCs compared to the PCA (\(p < 10^{-3}\)), Isomap (\(p < 10^{-3}\)), LLE (\(p < 10^{-3}\)), Hessian LLE (\(p < 10^{-3}\)), LTSA (\(p < 10^{-3}\)), and UMAP (\(p < 10^{-3}\)).

The proposed method also has some limitations. One caveat of the LLE-KL - which is common to other manifold learning algorithms, consists of the out-of-sample problem. It is not clear how to properly evaluate new samples that are not part of the training set. Another drawback refers to the definition of the parameter K (i.e., number of neighbors) that controls the patch size. Previous computational experiments reveal that the SC is rather sensitive to changes in such a parameter. Our strategy in this case is then as follows: for each dataset, we build the KKN graphs for all values of K in the interval [2, 40].

We select the best model as the one that maximizes the SC among all values of K. Although we are using the class labels to perform model selection, in fact, the LLE-KL method performs unsupervised metric learning, in the sense that we do not employ the class labels in the dimensionality reduction. A data visualization comparison of the clusters obtained through the application of the LLE and LLE-KL methods for two distinct datasets (i.e., tic-tac-toe and corral) are depicted in Figs. 1 and 2. It is worth noticing that the discrimination between the two classes is more evident in the proposed method than in the established version of the LLE, since there is visibly less overlap between the blue and red cluster.

5 Conclusion

In the present study, an information-theoretic LLE is introduced to incorporate the KL divergence between local Gaussian distributions into the KNN adjacency graph. The rationale of the proposed method is to replace the pointwise Euclidean distance by a more appropriate patch-based distance. Such a methodological modification results in a more robust method against the presence of noise or outliers in the data.

Our claim is that the proposed LLE-KL is a promising alternative to the existing manifold learning algorithms due to the proposition of its theoretical methodological improvements and considering our computational experiments that support two main points. Firstly, the quality of the clusters produced by the LLE-KL method indicates a superior performance than those obtained by competing state-of-the-art manifold learning algorithms. Secondly, the LLE-KL non-linear features may be more discriminative in supervised classification than features obtained by state-of-the-art manifold learning algorithms.

Suggestions of future work include the use of other information-theoretic distances, such as the Bhattacharyya and Hellinger distances, as well as geodesic distances based on the Fisher information matrix. Another possibility is the non-parametric estimation of the local densities using kernel density estimation techniques (KDE). In this case, non-parametric versions of the information-theoretic distances may be employed to compute a distance function between the patches of the KNN graph. The \(\epsilon \)-neighborhood rule may also be used for building the adjacency relations that define the discrete approximation for the manifold, leading to non-regular graphs. Furthermore, a supervised dimensionality reduction based metric learning approach may be created by removing the edges of the KNN graph for which the endpoints belong to different classes as a manner to impose that the optimal reconstruction weights use only the neighbors that belong to the same class within the sample.

References

Donoho, D.L., Grimes, C.: Hessian eigenmaps: locally linear embedding techniques for high-dimensional data. Proc. Natl. Acad. Sci. 100(10), 5591–5596 (2003)

Friedman, M.: The use of ranks to avoid the assumption of normality implicit in the analysis of variance. J. Am. Stat. Assoc. 32(200), 675–701 (1937)

Hollander, M., Wolfe, D.A., Chicken, E.: Nonparametric Statistical Methods, 3 edn. Wiley, New York (2015)

Kullback, S., Leibler, R.A.: On information and sufficiency. Ann. Math. Stat. 22(1), 79–86 (1951)

Levada, A.L.M.: Parametric PCA for unsupervised metric learning. Pattern Recognit. Lett. 135, 425–430 (2020)

McInnes, L., Healy, J., Melville, J.: UMAP: uniform manifold approximation and projection for dimension reduction. arXiv 1802.03426 (2020)

de Ridder, D., Duin, R.P.: Locally linear embedding for classification. Technical report, Delft University of Technology (2002)

Rousseeuw, P.J.: Silhouettes: a graphical aid to the interpretation and validation of cluster analysis. J. Comput. Appl. Math. 20, 53–65 (1987)

Roweis, S., Saul, L.: Nonlinear dimensionality reduction by locally linear embedding. Science 290, 2323–2326 (2000)

Saul, L., Roweis, S.: Think globally, fit locally: unsupervised learning of low dimensional manifolds. J. Mach. Learn. Res. 4, 119–155 (2003)

Talmon, R., Cohen, I., Gannot, S., Coifman, R.R.: Diffusion maps for signal processing: a deeper look at manifold-learning techniques based on kernels and graphs. IEEE Signal Process. Mag. 30(4), 75–86 (2013)

Tenenbaum, J.B., de Silva, V., Langford, J.C.: A global geometric framework for nonlinear dimensionality reduction. Science 290, 2319–2323 (2000)

Wang, J.: Hessian locally linear embedding. In: Wang, J. (ed.) Geometric Structure of High-Dimensional Data and Dimensionality Reduction, pp. 249–265. Springer, Heidelberg (2012). https://doi.org/10.1007/978-3-642-27497-8_13

Wang, J., Wong, R.K., Lee, T.C.: Locally linear embedding with additive noise. Pattern Recognit. Lett. 123, 47–52 (2019)

Xing, X., Du, S., Wang, K.: Robust hessian locally linear embedding techniques for high-dimensional data. Algorithms 9, 36 (2016)

Zhang, Z.Y., Zha, H.Y.: Principal manifolds and nonlinear dimensionality reduction via tangent space aligment. SIAM J. Sci. Comput. 26(1), 313–338 (2004)

Zhang, Z., Wang, J.: MLLE: modified locally linear embedding using multiple weights. In: Schölkopf, B., Platt, J.C., Hofmann, T. (eds.) Advances in Neural Information Processing Systems 19, Proceedings of the 20th Conference on Neural Information Processing Systems, Vancouver, British Columbia, pp. 1593–1600 (2006)

Acknowledgments

This study was partially financed the Coordenação de Aperfeiçoamento de Pessoal de Nível Superior - Brasil (CAPES) - Finance Code 001.

Author information

Authors and Affiliations

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Levada, A.L.M., Haddad, M.F.C. (2021). A Kullback-Leibler Divergence-Based Locally Linear Embedding Method: A Novel Parametric Approach for Cluster Analysis. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science(), vol 13073. Springer, Cham. https://doi.org/10.1007/978-3-030-91702-9_27

Download citation

DOI: https://doi.org/10.1007/978-3-030-91702-9_27

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91701-2

Online ISBN: 978-3-030-91702-9

eBook Packages: Computer ScienceComputer Science (R0)