Abstract

Semi-supervised learning has received attention from researchers, as it allows one to exploit the structure of unlabeled data to achieve competitive classification results with far fewer labels than supervised approaches. The Local and Global Consistency (LGC) algorithm is one of the most well-known graph-based semi-supervised learning (GSSL) classifiers. Notably, its solution can be written as a linear combination of the known labels. The coefficients of this linear combination depend on a parameter \(\alpha \), which determines how quickly the reward decays over time when labeled vertices are reached in a random walk. In this work, we discuss how removing the self-influence of a labeled instance may be beneficial, and how it relates to leave-one-out error. Moreover, we propose to minimize this leave-one-out loss with automatic differentiation. Within this framework, we propose methods to estimate label reliability and diffusion rate. Optimizing the diffusion rate is more efficiently accomplished with a spectral representation. Results show that the label reliability approach competes with robust \(\ell _1\)-norm methods and that removing diagonal entries reduces the risk of overfitting and leads to suitable criteria for parameter selection.

This study was financed in part by the Coordenação de Aperfeiçoamento de Nível Superior - Brasil (CAPES) - Finance Code 001, and São Paulo Research Foundation (FAPESP) grant #18/01722-3.

1 Introduction

Machine learning (ML) is the subfield of Computer Science that aims to make a computer learn from data [11]. The task we consider is classification, which requires the prediction of a discrete label corresponding to a class.

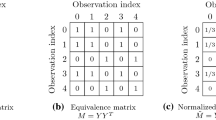

Before the data can be presented to an ML model, it must be represented in some way. Our input is a collection of n examples. Each object of this collection is called an instance. For most practical applications, we may consider an instance to be a vector of d dimensions. Graph-based semi-supervised learning (GSSL) relies heavily on matrix representations, so we represent the observed instances as an input matrix

\[ \mathbf{X} \in \mathbb{R}^{n \times d}. \]

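An ML classifier should learn to map an input instance to the desired output. To do this, it needs to know the labels (i.e. the output) associated with instances contained in the training set. In semi-supervised learning, only some of the labels are known in advance [3]. Once again, it is quite convenient to use a matrix representation, referred to as the (true) label matrix

\[ \mathbf{Y} \in \{0,1\}^{n \times c}, \qquad \mathbf{Y}_{ij} = \begin{cases} 1 & \text{if instance } i \text{ has label } j,\\ 0 & \text{otherwise,} \end{cases} \]

where c is the number of classes.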

Most approaches are inductive in nature so that we can predict the labels of instances not seen before deployment. However, most GSSL methods are transductive, which simply means that we are only interested in the fixed but unknown set of labels corresponding to unlabeled instances [17]. Accordingly, we may represent this with a classification matrix:

\[ \mathbf{F} \in \mathbb{R}^{n \times c}. \]

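In order to separate the labeled data \(\mathcal {L}\) from the unlabeled data \(\mathcal {U}\), we divide our matrices as follows:

\[ \mathbf{X} = \begin{bmatrix} \mathbf{X}_\mathcal{L} \\ \mathbf{X}_\mathcal{U} \end{bmatrix}, \qquad \mathbf{Y} = \begin{bmatrix} \mathbf{Y}_\mathcal{L} \\ \mathbf{Y}_\mathcal{U} \end{bmatrix}, \qquad \mathbf{F} = \begin{bmatrix} \mathbf{F}_\mathcal{L} \\ \mathbf{F}_\mathcal{U} \end{bmatrix}. \]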

The idea of SSL is appealing for many reasons. One of them is the possibility of integrating the toolset developed for unsupervised learning. Namely, we may use unlabeled data to estimate the density \(P( \mathbf {x})\) within our d-dimensional input space. Once that is achieved, the only thing left is to take advantage of this information. To do this, we have to make assumptions about the relationship between the input density \(P( \mathbf {x})\) and the conditional class distribution \(P(y\!\mid \! \mathbf {x})\). If the dataset does not satisfy these assumptions, SSL can cause a significant decrease in performance compared to the baseline [14]. This is currently an active area of research: safe semi-supervised learning is said to be attained when SSL never performs worse than the baseline, for any choice of labels for the unlabeled data. This is indeed possible in some limited circumstances, but also provably impossible in others, such as for a specific class of margin-based classifiers [9].

In Fig. 1, we illustrate the kind of dataset that is suitable for GSSL. There are two clear spirals, one corresponding to each class. In a dataset that is unsuitable for SSL, the spiral structure would instead be a red herring, i.e. misleading with respect to the class labels. A very common assumption for SSL is the smoothness assumption, which is one of the cornerstones of GSSL classifiers. It states that “If two instances \( \mathbf {x}_1\), \( \mathbf {x}_2\) in a high-density region are close, then so should be the corresponding outputs \(y_1\), \(y_2\)” [3]. Another important assumption is that the (high-dimensional) data lie (roughly) on a low-dimensional manifold, also known as the manifold assumption [3]. If the data lie on a low-dimensional manifold, the local similarities will approximate the manifold well. As a result, we can, for example, increase the resolution of our image data without impacting performance.

Graph-based semi-supervised learning has been used extensively for many different applications across different domains. In computer vision, these include car plate character recognition [19] and hyperspectral image classification [13]. GSSL is particularly appealing if the underlying data has a natural representation as a graph. As such, it has been a promising approach for drug property prediction from the structure of molecules [8]. Moreover, it has been widely applied to knowledge graphs, for example in the development of web-scale recommendation systems [16].

GSSL methods put a greater emphasis on using geodesics by expressing connectivity between instances through the creation of a graph. Many successful deep semi-supervised approaches use a similar yet slightly weaker assumption, namely that small perturbations in input space should cause little corresponding perturbation on the output space [12].

It turns out that we can best express our concepts by defining a measure of similarity, instead of distance. In particular, we search for an affinity matrix \(\mathbf{W} \in \mathbb{R}^{n \times n}\), such that

\[ \mathbf{W}_{ij} = w(\mathbf{x}_i, \mathbf{x}_j), \]

where w is some function determining the similarity between any two instances \( \mathbf {x}_i, \mathbf {x}_j\). When constructing an affinity matrix in practice, instances are not considered neighbors of themselves, i.e. we have \(\forall i \in \{1..n\}: \mathbf {W}_{ii} = 0\).

The specification of an affinity matrix is a necessary step for any GSSL classifier, and its sparsity is often crucial for reducing computational costs. There are many ways to choose a neighborhood. Most frequently, it is constructed from the k-nearest neighbors (KNN) of a given instance.
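A symmetric KNN affinity matrix of this kind can be sketched as follows. This is a minimal dense NumPy illustration rather than the pipeline used in this work: the function name `knn_rbf_affinity`, the Gaussian weight \(\exp(-\lVert \mathbf{x}_i - \mathbf{x}_j\rVert^2 / 2\sigma^2)\), the symmetrization rule (an edge is kept if either endpoint selects the other) and the toy data are all assumptions made for the example.

```python
import numpy as np

def knn_rbf_affinity(X, k=10, sigma=1.0):
    """Symmetric k-nearest-neighbor affinity matrix with RBF weights and zero diagonal."""
    n = X.shape[0]
    # Pairwise squared Euclidean distances.
    sq = np.sum(X ** 2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)
    np.fill_diagonal(d2, np.inf)  # an instance is never its own neighbor
    # Indices of the k closest instances for every row.
    nbrs = np.argsort(d2, axis=1)[:, :k]
    rows = np.repeat(np.arange(n), k)
    cols = nbrs.ravel()
    W = np.zeros((n, n))
    W[rows, cols] = np.exp(-d2[rows, cols] / (2.0 * sigma ** 2))
    # Symmetrize: keep an edge if either endpoint selected the other.
    return np.maximum(W, W.T)

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))  # toy data, assumed for illustration
W = knn_rbf_affinity(X, k=5, sigma=1.0)
```

The dense distance matrix needs \(\mathcal{O}(n^2)\) memory, so for large datasets a sparse matrix combined with an approximate neighbor search would be preferable.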

One last important concept in GSSL is that of the graph Laplacian operator. This operator is analogous to the Laplace-Beltrami operator on manifolds. There are a few graph Laplacian variants, such as the combinatorial Laplacian

\[ \mathbf{L} = \mathbf{D} - \mathbf{W}, \]

where \( \mathbf {D}\), called the degree matrix, is a diagonal matrix whose entries are the sum of each row of \( \mathbf {W}\). There is also the normalized Laplacian, whose diagonal is the identity matrix \(\mathbf {I}\):

\[ \mathbf{L}_{\mathbb{N}} = \mathbf{I} - \mathbf{D}^{-\frac{1}{2}} \mathbf{W} \mathbf{D}^{-\frac{1}{2}}. \]

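Each graph Laplacian \( \mathbf {L}\) induces a measure of smoothness with respect to the graph on a given classification matrix \( \mathbf {F}\), namely

\[ \operatorname{tr}(\mathbf{F}^\top \mathbf{L} \mathbf{F}) = \sum_{k=1}^{c} \mathbf{f}_k^\top \mathbf{L}\, \mathbf{f}_k, \]

where tr is the trace of the matrix, c the number of classes, and \(\mathbf{f}_k\) the k-th column of \( \mathbf {F}\). If we consider each column \( \mathbf {f}\) of \( \mathbf {F}\) individually, then we can express the graph smoothness of each graph Laplacian as

\[ \mathbf{f}^\top \mathbf{L}\, \mathbf{f} = \frac{1}{2} \sum_{i,j} \mathbf{W}_{ij} \left( f_i - f_j \right)^2, \qquad \mathbf{f}^\top \mathbf{L}_{\mathbb{N}}\, \mathbf{f} = \frac{1}{2} \sum_{i,j} \mathbf{W}_{ij} \left( \frac{f_i}{\sqrt{\mathbf{D}_{ii}}} - \frac{f_j}{\sqrt{\mathbf{D}_{jj}}} \right)^2. \]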
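The equivalence between the trace form and the pairwise form of graph smoothness can be checked numerically. The sketch below assumes the standard definitions \(\mathbf{L} = \mathbf{D} - \mathbf{W}\) and \(\mathbf{L}_{\mathbb{N}} = \mathbf{I} - \mathbf{D}^{-\frac{1}{2}} \mathbf{W} \mathbf{D}^{-\frac{1}{2}}\) and a symmetric \(\mathbf{W}\); the random toy graph and the helper name `pairwise_smoothness` are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(0)
n, c = 6, 2
# Small symmetric affinity matrix with zero diagonal (toy data, not from the paper).
A = rng.random((n, n))
W = np.triu(A, 1) + np.triu(A, 1).T
deg = W.sum(axis=1)

L = np.diag(deg) - W                                  # combinatorial Laplacian
L_norm = np.eye(n) - W / np.sqrt(np.outer(deg, deg))  # normalized Laplacian

F = rng.normal(size=(n, c))

# Trace form of the smoothness.
trace_comb = np.trace(F.T @ L @ F)
trace_norm = np.trace(F.T @ L_norm @ F)

def pairwise_smoothness(W, F):
    """0.5 * sum_ij W_ij * ||F_i - F_j||^2 over all pairs of rows of F."""
    diff = F[:, None, :] - F[None, :, :]
    return 0.5 * np.sum(W * np.sum(diff ** 2, axis=2))

pair_comb = pairwise_smoothness(W, F)
# For the normalized Laplacian, each row of F is first scaled by 1 / sqrt(D_ii).
pair_norm = pairwise_smoothness(W, F / np.sqrt(deg)[:, None])
```

Both pairs of values agree up to floating-point error, which is why a small trace corresponds to neighboring vertices receiving similar scores.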

Each graph Laplacian also has an eigendecomposition:

\[ \mathbf{L} = \mathbf{U} \mathbf{\Lambda} \mathbf{U}^\top, \]

where the set of columns of \( \mathbf {U}\) is an orthonormal basis of eigenfunctions, and \( \mathbf {\Lambda }\) is a diagonal matrix containing the eigenvalues. As \( \mathbf {U}\) is a unitary matrix, any real-valued function on the graph may be expressed as a linear combination of eigenfunctions. We map a function to the spectral domain by pre-multiplying it by \( \mathbf {U}^\top \), an operation known as the graph Fourier transform (GFT). Pre-multiplying by \( \mathbf {U}\) gives us the inverse transform. This spectral representation is very useful, as eigenfunctions that are smooth with respect to the graph have smaller eigenvalues. Outright restricting the number of eigenfunctions is known as smooth eigenbasis pursuit [6], a valid strategy for semi-supervised regularization.
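The graph Fourier transform and its inverse can be illustrated in a few lines. This is a sketch on a random toy graph, assuming the normalized Laplacian \(\mathbf{I} - \mathbf{D}^{-\frac{1}{2}} \mathbf{W} \mathbf{D}^{-\frac{1}{2}}\); the truncation level `p = 3` is an arbitrary choice for the example.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 8
A = rng.random((n, n))
W = np.triu(A, 1) + np.triu(A, 1).T  # toy symmetric affinity matrix
deg = W.sum(axis=1)
L_norm = np.eye(n) - W / np.sqrt(np.outer(deg, deg))

# eigh returns eigenvalues in ascending order, so the smoothest eigenfunctions come first.
eigvals, U = np.linalg.eigh(L_norm)

f = rng.normal(size=n)  # any real-valued function on the vertices
f_hat = U.T @ f         # graph Fourier transform
f_back = U @ f_hat      # inverse transform recovers f

# Keeping only the p smoothest eigenfunctions gives a low-pass approximation of f.
p = 3
f_smooth = U[:, :p] @ (U[:, :p].T @ f)
```

Because `f_smooth` lies in the span of the first p eigenfunctions, its smoothness per unit norm is bounded by the p-th smallest eigenvalue.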

In this work, we explore the problem of parameter selection for the Local and Global Consistency model. This model yields a propagation matrix, which itself depends on a fixed parameter \(\alpha \) determining the diffusion rate. We show that, by removing the diagonal in the propagation matrix used by our baseline, a leave-one-out criterion can be easily computed. Our first proposed algorithm attempts to calculate label reliability by optimizing the label matrix, subject to constraints. This approach is shown to be competitive with robust \(\ell _1\)-norm classifiers. Then, we consider the problem of optimizing the diffusion rate. Doing this in the usual formulation of LGC is impractically expensive. However, we show that the spectral representation of the problem can be exploited to easily solve an approximate version of the problem. Experimental results show that minimizing leave-one-out error leads to good generalization.

The remainder of this paper is organized as follows. Section 2 summarizes the key ideas and concepts that are related to our work. Section 3 presents our two proposed algorithms: \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) for determining label reliability, and \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\) for determining the optimal diffusion rate parameter. Our methodology is detailed in Sect. 4, going over the basic framework and baselines. The results are presented in Sect. 5. Lastly, concluding remarks are found in Sect. 6.

2 Related Work

In this section, we present some of the algorithms and concepts that are central to our approach. We describe the inner workings of our baseline algorithm, and how eliminating diagonal entries of its propagation matrix may lead to better generalization.

2.1 Local and Global Consistency

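The Local and Global Consistency (LGC) [17] algorithm is one of the most widely known graph-based semi-supervised algorithms. It minimizes the following cost:

\[ \mathcal{Q}(\mathbf{F}) = \frac{1}{2} \operatorname{tr}(\mathbf{F}^\top \mathbf{L}_{\mathbb{N}} \mathbf{F}) + \frac{\mu}{2} \lVert \mathbf{F} - \mathbf{Y} \rVert_F^2. \]

LGC addresses the issue of label reliability by introducing the parameter \(\mu \in (0, \infty )\). This parameter controls the trade-off between fitting labels and achieving high graph smoothness.

LGC has an analytic solution. To see this, we take the partial derivative of the cost with respect to \( \mathbf {F}\):

\[ \frac{\partial \mathcal{Q}}{\partial \mathbf{F}} = \mathbf{F} - \mathbf{S} \mathbf{F} + \mu (\mathbf{F} - \mathbf{Y}), \]

where \( \mathbf {S} = \mathbf {D}^{-\frac{1}{2}} \mathbf {W} \mathbf {D}^{-\frac{1}{2}} = \mathbf {I} - \mathbf {L}_{\mathbb {N}}\). By dividing the above by (\(1+ \mu \)), we observe that this derivative is zero exactly when

\[ \mathbf{F} - \alpha \mathbf{S} \mathbf{F} - \beta \mathbf{Y} = 0, \]

with

\[ \alpha = \frac{1}{1 + \mu} \]

and

\[ \beta = \frac{\mu}{1 + \mu}. \]

The matrix \(( \mathbf {I} - \alpha \mathbf {S})\) can be shown to be positive-definite and therefore invertible, so the optimal \( \mathbf {F}\) can be obtained as

\[ \mathbf{F} = \beta (\mathbf{I} - \alpha \mathbf{S})^{-1} \mathbf{Y}. \]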
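The analytic solution can be verified on a small example. The sketch below assumes the standard LGC relations \(\alpha = 1/(1+\mu)\) and \(\beta = \mu/(1+\mu)\) from [17], so that the solution is \(\beta (\mathbf{I} - \alpha \mathbf{S})^{-1} \mathbf{Y}\); the random toy graph and the choice of two labeled instances are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(2)
n, c = 7, 2
A = rng.random((n, n))
W = np.triu(A, 1) + np.triu(A, 1).T      # toy symmetric affinity matrix
deg = W.sum(axis=1)
S = W / np.sqrt(np.outer(deg, deg))      # S = D^{-1/2} W D^{-1/2}

# Two labeled instances, one per class; rows of unlabeled instances stay at zero.
Y = np.zeros((n, c))
Y[0, 0] = 1.0
Y[1, 1] = 1.0

mu = 1.0 / 9.0
alpha = 1.0 / (1.0 + mu)                 # 0.9
beta = mu / (1.0 + mu)                   # 0.1

# Solve (I - alpha S) F = beta Y instead of forming the inverse explicitly.
F = np.linalg.solve(np.eye(n) - alpha * S, beta * Y)

# The derivative of the cost with respect to F should vanish at the solution.
grad = F - S @ F + mu * (F - Y)
pred = F.argmax(axis=1)                  # predicted class for every instance
```

Since \(\beta\) only rescales every entry of \(\mathbf{F}\), it has no effect on the predicted classes.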

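We hereafter refer to \((\mathbf{I} - \alpha \mathbf{S})^{-1}\) as the propagation matrix \( \mathbf {P}\). Each entry \( \mathbf {P}_{ij}\) represents the amount of label information from \(\mathbf{x}_j\) that \(\mathbf{x}_i\) inherits. It can be shown that the inverse is the result of a diffusion process, which is calculated via iteration:

\[ \mathbf{F}(t+1) = \alpha \mathbf{S} \mathbf{F}(t) + \mathbf{Y}, \qquad \mathbf{F}(0) = \mathbf{Y}. \]

Moreover, it can be shown that the closed expression for \(\mathbf{F}\) at any iteration is

\[ \mathbf{F}(t) = (\alpha \mathbf{S})^{t}\, \mathbf{Y} + \sum_{i=0}^{t-1} (\alpha \mathbf{S})^{i}\, \mathbf{Y}. \]

\(\mathbf{S}\) is similar to \(\mathbf{D}^{-1}\mathbf{W}\), whose eigenvalues are always in the range \([-1,1]\) [17]. Since \(0< \alpha < 1\), this ensures the first term vanishes as t grows larger, whereas the second term converges to \( \mathbf {P}\mathbf{Y}\). Consequently, \( \mathbf {P}\) can be characterized as

\[ \mathbf{P} = \lim_{t \to \infty} \sum_{i=0}^{t-1} (\alpha \mathbf{S})^{i} = \sum_{i=0}^{\infty} \alpha^{i} \mathbf{S}^{i}. \]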
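The convergence of the diffusion process to \(\mathbf{P}\mathbf{Y}\) can be observed numerically. This sketch assumes the unnormalized update \(\mathbf{F}(t+1) = \alpha \mathbf{S} \mathbf{F}(t) + \mathbf{Y}\) with \(\mathbf{F}(0) = \mathbf{Y}\); the original LGC formulation [17] scales \(\mathbf{Y}\) by \((1-\alpha)\), which only rescales the limit. The toy graph is random and purely illustrative.

```python
import numpy as np

rng = np.random.default_rng(3)
n, c = 7, 2
A = rng.random((n, n))
W = np.triu(A, 1) + np.triu(A, 1).T      # toy symmetric affinity matrix
deg = W.sum(axis=1)
S = W / np.sqrt(np.outer(deg, deg))

Y = np.zeros((n, c))
Y[0, 0] = 1.0
Y[1, 1] = 1.0
alpha = 0.9

# Propagation matrix obtained directly from its definition.
P = np.linalg.inv(np.eye(n) - alpha * S)

# Diffusion iteration: every step propagates scores one hop and re-injects the labels.
F = Y.copy()
for t in range(1000):
    F = alpha * (S @ F) + Y

spectral_radius = np.max(np.abs(np.linalg.eigvalsh(S)))
```

The error after t steps shrinks at least as fast as \(\alpha^t\), so values of \(\alpha\) close to 1 need more iterations to reach the same precision.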

The transition probability matrix \(\widetilde{\mathbf{W}} = \mathbf{D}^{-1}\mathbf{W}\) allows us to interpret the process as a random walk. Let us imagine a particle walking through the graph according to the transition matrix. Assume it began at a labeled vertex \(v_a\), and at step i it reaches a labeled vertex \(v_b\), initially labeled with class \(c_b\). When this happens, \(v_a\) receives a confidence boost to class \(c_b\). Alternatively, one can say that this boost goes to the entry which corresponds to the contribution from \(v_b\) to \(v_a\), i.e. \( \mathbf {P}_{ab}\). This boost is proportional to \(\alpha ^i\). This gives us a good intuition as to the role of \(\alpha \). More precisely, the contribution of vertices found later in the random walk decays exponentially according to \(\alpha ^i\).
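The link between the propagation matrix and the random walk can be made explicit with a short derivation. It is a sketch that assumes the series form \(\mathbf{P} = \sum_{i \ge 0} \alpha^i \mathbf{S}^i\), which holds because \(0 < \alpha < 1\) and the eigenvalues of \(\mathbf{S}\) lie in \([-1,1]\).

```latex
\begin{align*}
\mathbf{S} &= \mathbf{D}^{-\frac{1}{2}} \mathbf{W} \mathbf{D}^{-\frac{1}{2}}
            = \mathbf{D}^{\frac{1}{2}}\, \widetilde{\mathbf{W}}\, \mathbf{D}^{-\frac{1}{2}}
  \quad\Longrightarrow\quad
  \mathbf{S}^{i} = \mathbf{D}^{\frac{1}{2}}\, \widetilde{\mathbf{W}}^{\,i}\, \mathbf{D}^{-\frac{1}{2}}, \\
\mathbf{P}_{ab} &= \sum_{i=0}^{\infty} \alpha^{i}\, (\mathbf{S}^{i})_{ab}
  = \sqrt{\frac{\mathbf{D}_{aa}}{\mathbf{D}_{bb}}}\;
    \sum_{i=0}^{\infty} \alpha^{i}\, (\widetilde{\mathbf{W}}^{\,i})_{ab}.
\end{align*}
```

Here \((\widetilde{\mathbf{W}}^{\,i})_{ab}\) is the probability that a walk started at \(v_a\) is at \(v_b\) after i steps, so each visit at step i contributes \(\alpha^i\), up to a factor that depends only on the degrees of the two vertices.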

2.2 Self-influence and Leave-One-Out-Error

There is one major problem with LGC’s solution: the diagonal of \( \mathbf {P}\). At first glance, we would think that “fitting the labels” means looking for a model that explains our data very well. In reality, this translates to memorizing the labeled set. Any entry \( \mathbf {P}_{ii}\) stores the self-influence of a vertex, which is calculated according to the expected reward obtained by looping around and visiting itself. The optimal solution w.r.t. label fitting occurs when \(\alpha \) tends to zero. For labeled instances, an initial reward is given for the starting vertex itself, and the remaining vertices are essentially ignored.

We argue that the diagonal is directly related to overfitting. It essentially tells the model to rely on the label information it knows. There are a few analogies to be made: say that we are optimizing the number of neighbors k for a KNN classifier. The analog of “LGC-style optimal label fitting” would be to include each labeled instance as a neighbor to itself, and set \(k = 1\). This is obviously not a good criterion. The answer that maximizes a proper “label fitting” criterion, in this case, is selecting the k that minimizes classification error, with one extremely important caveat: directly using each instance’s own label is prohibited.

In spite of the problems we have presented, the family of LGC solutions remains very interesting to consider. We will have to eliminate diagonals, however. Let

By eliminating the diagonal, we obtain, for each label, its classification if it were not included in the label propagation process. As such, it can be argued that minimizing

also minimizes the leave-one-out (LOO) error. There is a caveat: each instance is still used as unlabeled data, but this effect should be insignificant. It is also useful to normalize each row (a small constant \(\epsilon \) may be added for stabilization), so that we end up with classification probabilities.
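The effect of removing the diagonal can be sketched in code. The example assumes that leave-one-out scores for labeled instances are formed as \((\mathbf{P}_{\mathcal{L}\mathcal{L}} - \operatorname{diag}(\mathbf{P}_{\mathcal{L}\mathcal{L}}))\, \mathbf{Y}_\mathcal{L}\) and then row-normalized; the function name `loo_probabilities`, the value of `eps` and the random toy graph are hypothetical choices for illustration.

```python
import numpy as np

def loo_probabilities(P_LL, Y_L, eps=1e-12):
    """Leave-one-out class probabilities for labeled instances.

    Zeroing the diagonal of P_LL removes each label's influence on itself;
    rows are then normalized so that they sum to one.
    """
    H = P_LL - np.diag(np.diag(P_LL))
    scores = H @ Y_L
    return scores / (scores.sum(axis=1, keepdims=True) + eps)

rng = np.random.default_rng(4)
n, l, c = 12, 6, 2
A = rng.random((n, n))
W = np.triu(A, 1) + np.triu(A, 1).T       # toy symmetric affinity matrix
deg = W.sum(axis=1)
S = W / np.sqrt(np.outer(deg, deg))
P = np.linalg.inv(np.eye(n) - 0.9 * S)    # propagation matrix for alpha = 0.9

labels = np.array([0, 1, 0, 1, 0, 1])     # the first l instances are labeled
Y_L = np.eye(c)[labels]
probs = loo_probabilities(P[:l, :l], Y_L)
loo_accuracy = np.mean(probs.argmax(axis=1) == labels)
```

Each row of `probs` is computed without access to that instance's own label, so `loo_accuracy` measures how well the remaining labels explain it.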

Previously, we developed a semi-supervised leave-one-out filter [2, 4]. We also managed to reduce the amount of storage used by only calculating a propagation submatrix. The LOO-inspired criterion encourages label information to be redundant, so labels that are incoherent with the implicit model are removed.

The major drawback of our proposal is that it needs an extra parameter r, which is the number of labels to remove. The optimal r is usually around the number of noisy labels, which is unknown to us. This was somewhat addressed in [4]: we can instead use a threshold, which tells us how much labels can deviate from the original model. Nonetheless, it is desirable to solve this problem in a way that removes such a parameter. We will do this by introducing a new optimization problem.

3 Proposal

In this work, we consider the optimal value of \({\alpha }\) for our LOO-inspired loss [4]. The objective is to develop a method to minimize surrogate losses based on LOO error for the LGC GSSL classifier and evaluate the generalization of its solutions.

We use the term “surrogate loss” [9] to denote losses such as squared error, cross-entropy and so on. We use this term to emphasize that the solution \( \mathbf {H}(\alpha )\) that minimizes the loss on the test set is not necessarily the one that maximizes accuracy. We use automatic differentiation to compute the gradients of our loss, which drive our optimization procedure. Accordingly, we call our approaches \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) and \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\) when learning label reliability and diffusion rate, respectively.

3.1 \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\): Automatic Correction of Noisy Labels

Let \( \mathbf {P}\) be the propagation matrix, whose submatrix \( \mathbf {P}_{\mathcal {L}\mathcal {L}}\) corresponds to kernel values between labeled data only. Then, the modified LGC solution is given by \(\widetilde{ \mathbf {P}} \mathbf {Y}_\mathcal {L}\), where

Our optimization problem is to optimize a diagonal matrix \( \mathbf {\Omega }\) indicating the updated reliability of each label:

where \(\texttt {LOSS}\) denotes some loss function such as squared error or cross-entropy.
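The idea of learning a reliability weight per label can be illustrated with a small optimization loop. This is only a sketch under several assumptions: it uses a squared-error loss of the form \(\lVert \mathbf{H}\, \mathbf{\Omega}\, \mathbf{Y}_\mathcal{L} - \mathbf{Y}_\mathcal{L} \rVert_F^2\) with a diagonal-free matrix \(\mathbf{H}\), a box constraint \(0 \le \mathbf{\Omega}_{ii} \le 1\), and projected gradient descent with a hand-derived gradient in place of automatic differentiation and Adam. The function name `fit_label_reliability` and the toy inputs are hypothetical.

```python
import numpy as np

def fit_label_reliability(H, Y_L, n_steps=500):
    """Projected gradient descent on ||H diag(omega) Y_L - Y_L||_F^2 with 0 <= omega <= 1."""
    # The loss is quadratic in omega; its Hessian gives a step size that never increases the loss.
    hessian = 2.0 * (H.T @ H) * (Y_L @ Y_L.T)
    step = 1.0 / max(np.linalg.eigvalsh(hessian).max(), 1e-12)
    omega = np.ones(H.shape[0])           # start by fully trusting every label
    history = []
    for _ in range(n_steps):
        residual = H @ (omega[:, None] * Y_L) - Y_L
        history.append(np.sum(residual ** 2))
        # d loss / d omega_j = 2 * sum_k (H^T residual)_jk * Y_jk
        grad = 2.0 * np.sum((H.T @ residual) * Y_L, axis=1)
        omega = np.clip(omega - step * grad, 0.0, 1.0)  # projection onto the box
    return omega, history

rng = np.random.default_rng(5)
l, c = 6, 2
# Toy stand-in for a diagonal-free, row-normalized propagation submatrix.
H = rng.random((l, l))
np.fill_diagonal(H, 0.0)
H /= H.sum(axis=1, keepdims=True)
labels = np.array([0, 0, 0, 1, 1, 1])
Y_L = np.eye(c)[labels]
omega, history = fit_label_reliability(H, Y_L)
```

Entries of `omega` that are driven toward zero correspond to labels that the remaining labels do not support, which is the intuition behind discarding unreliable labels.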

3.2 \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\): Automatic Choice of Diffusion Rate

In [4], we use a modified version of the power method to calculate the submatrix \( \mathbf {P}_\mathcal {L}\). This is enough to give us the answer in a few seconds for a fixed \(\alpha \), but would quickly turn into a huge bottleneck if we were to constantly update \(\alpha \). Our new approach uses the graph Fourier transform. In other words, we adapt the idea of smooth eigenbasis pursuit to this particular problem. Let us write the propagation matrix using eigenfunctions:

\[ \mathbf{P} = (\mathbf{I} - \alpha \mathbf{S})^{-1} = \left( \mathbf{I} - \alpha (\mathbf{I} - \mathbf{L}_{\mathbb{N}}) \right)^{-1} = \left( \mathbf{U} \left( \mathbf{I} - \alpha (\mathbf{I} - \mathbf{\Lambda}) \right) \mathbf{U}^\top \right)^{-1}. \]

Using the graph Fourier transform, we have that

\[ \mathbf{U}^\top \mathbf{P}\, \mathbf{U} = \left( \mathbf{I} - \alpha (\mathbf{I} - \mathbf{\Lambda}) \right)^{-1}. \]

So it follows that

\[ \mathbf{P} = \mathbf{U} \widetilde{\mathbf{\Lambda}} \mathbf{U}^\top, \]

where \(\forall i \in \{1..n\}\):

\[ \widetilde{\mathbf{\Lambda}}_{ii} = \frac{1}{1 - \alpha (1 - \mathbf{\Lambda}_{ii})}. \]

In practice, we can assume that \( \mathbf {U}\) is an \(l \times p\) matrix, with l the number of labeled instances and p the chosen number of eigenfunctions. The diagonal entries are given by

\[ \mathbf{P}_{ii} = \sum_{j=1}^{p} \widetilde{\mathbf{\Lambda}}_{jj}\, \mathbf{U}_{ij}^{2}. \]

Next, we analyze the complexity of calculating the leave-one-out error for a given diffusion rate \(\alpha \), given that we have stored the first p eigenfunctions in the matrix \( \mathbf {U}\). Let \(\mathbf{U}_\mathcal{L} \in \mathbb{R}^{l \times p}\) be the matrix of eigenfunctions with domain restricted to labeled instances. Exploiting the fact that \( \mathbf {Y}_\mathcal {U}= 0\), it can be shown that

\[ (\mathbf{P} \mathbf{Y})_\mathcal{L} = \mathbf{U}_\mathcal{L}\, \widetilde{\mathbf{\Lambda}}\, \mathbf{U}_\mathcal{L}^\top \mathbf{Y}_\mathcal{L}. \]

We can precompute \(\mathbf{U}_\mathcal{L}^\top \mathbf{Y}_\mathcal{L} \in \mathbb{R}^{p \times c}\) with \(\mathcal {O}(plc)\) multiplications. For each diffusion rate candidate \(\alpha \), we obtain \(\mathbf{U}_\mathcal{L} \widetilde{\mathbf{\Lambda}} \in \mathbb{R}^{l \times p}\) by multiplying each restricted eigenfunction with its new eigenvalue. The only thing left is to post-multiply it by the pre-computed matrix. As a result, we can compute the leave-one-out error for arbitrary \(\alpha \) with \(\mathcal {O}(plc)\) operations.

We have shown that, by using the spectral representation, we can re-apply the propagation submatrix \( \mathbf {P}_{\mathcal {L}\mathcal {L}}\) to the labels in \(\mathcal {O}(plc)\) time for each new diffusion rate. In comparison, the previous approach [4] requires, for each diffusion rate, a total of \(\mathcal {O}(tknlc)\) operations, assuming t iterations of the power method and a sparse affinity matrix with average node degree equal to k. Even if we use the full eigendecomposition (\(p = n\)), this new approach is significantly more viable when evaluating many different diffusion rates. Moreover, we can lower the choice of p to use a faster, less accurate approximation.
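The spectral shortcut can be checked against the dense inverse on a toy problem. The sketch assumes that the eigenpairs come from the normalized Laplacian \(\mathbf{I} - \mathbf{S}\), so that the eigenvalues of \(\mathbf{P}\) are \(1 / (1 - \alpha (1 - \lambda_i))\), and that the first l instances are the labeled ones; the names `loo_scores`, `UtY` and `gain` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(6)
n, l, c = 10, 4, 2
A = rng.random((n, n))
W = np.triu(A, 1) + np.triu(A, 1).T       # toy symmetric affinity matrix
deg = W.sum(axis=1)
S = W / np.sqrt(np.outer(deg, deg))
lam, U = np.linalg.eigh(np.eye(n) - S)    # eigenpairs of the normalized Laplacian

p = n                                     # full basis here; p < n gives an approximation
U_L = U[:l, :p]                           # eigenfunctions restricted to labeled instances
Y_L = np.eye(c)[np.array([0, 1, 0, 1])]
UtY = U_L.T @ Y_L                         # precomputed once, independent of alpha

def loo_scores(alpha):
    """(P_LL - diag(P_LL)) Y_L computed from the spectral representation."""
    gain = 1.0 / (1.0 - alpha * (1.0 - lam[:p]))  # eigenvalues of P for this alpha
    UG = U_L * gain                               # scale each restricted eigenfunction
    diag_P = np.sum(UG * U_L, axis=1)             # P_ii = sum_j gain_j * U_ij^2
    return UG @ UtY - diag_P[:, None] * Y_L

alphas = [0.5, 0.9, 0.99]
spectral = [loo_scores(a) for a in alphas]
```

Only `gain` changes between candidates, so sweeping over many values of \(\alpha\) never requires a new matrix inversion or a new run of the power method.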

4 Methodology

Basic Framework. We have a configuration dispatcher which enables us to vary a set of parameters (for example, the chosen dataset and the parameters for affinity matrix generation).

We start out by reading our dataset, including features and labels. We use the random seed to select the sampled labels, and create the affinity matrix \( \mathbf {W}\) necessary for LGC. For automatic label correction, we assume that \(\alpha \) is given as a parameter. For the automatic choice of diffusion rate, we need to extract the p smoothest eigenfunctions as a pre-processing step. Next, we repeatedly calculate the gradient and update either \(\alpha \) or \( \mathbf {\Omega }\) to minimize LOO error. After a set number of iterations, we return the final classification and perform an evaluation on unlabeled examples.

The programming language of choice is Python 3, for its versatility and support. We also make use of the tensorflow-gpu [1] package, which massively speeds up our calculations and also enables automatic differentiation of loss functions. We use a GeForce GTX 1070 GPU to speed up inference, and also for calculating the k-nearest neighbors of each instance with the faiss-gpu package [7].

Evaluation and Baselines. In this work, our datasets have a roughly equal number of labels for each class. As such, we will report the mean accuracy, as well as its standard deviation. However, one distinction is that we calculate the accuracy independently on labeled and unlabeled data. This is done to better assess whether our algorithms are improving classification on instances outside the labeled set, or whether they outperform the LGC baseline only when diagnosing labels.

Our approach, \(\texttt {LGC}\_\texttt {LVO}\_\texttt {Auto}\), is compatible with any differentiable loss function, such as mean squared error (MSE) or cross-entropy (xent). The chosen optimizer was Adam, with a learning rate of 0.7 and 5000 iterations. For the approximation of the propagation matrix in \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\), we used \(t=1000\) iterations throughout.

Perhaps the most interesting classifier to compare our approach to is the LGC algorithm, as that is the starting point and the backbone of our own approach. We will also be comparing our results with the ones reported by [6]. These include: Gaussian Fields and Harmonic Functions (GFHF) [18], Graph Trend Filtering (GTF) [15], Large-Scale Sparse Coding (LSSC) [10] and Eigenfunction [5].

5 Results

This section presents the results of employing our two approaches \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) and \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\) on ISOLET and MNIST datasets, compared to other graph-based SSL algorithms from the literature.

5.1 Experiment 1: \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) on ISOLET

Experiment Setting. In this experiment, we compared \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) to the baselines reported in [6], specifically for the ISOLET dataset. Unlike in [6], our 20 different seeds control both the label selection and the noise process. The graph construction was performed exactly as in [6]: a symmetric 10-nearest neighbors graph with the width \(\sigma \) of the RBF kernel set to 100. We emphasize that the results reported by the authors correspond to the best-performing parameters, selected separately for each noise level. In [6], \(\lambda _1\) is set to \(10^5\), \(10^2\), \(10^2\) and \(10^2\) for the respective noise rates of 0%, 20%, 40% and 60%; \(\lambda _2\) is kept at 10, and the number of eigenfunctions is \(m=30\). We could not find any implementation code for SIIS, so we reimplemented it ourselves. As for parameter selection for \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\), we simply set \(\alpha = 0.9\) (equivalently, \(\mu =0.1111\)). We reiterate that having a single parameter is a strength of our approach. In future work, we will try to combine \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) with \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\) to fully eliminate the need for parameter selection.

Experiment Results. The results are contained in Tables 1a and b. With respect to the accuracy on unlabeled examples, we observed that:

- SIIS appeared to have a slight edge in the noiseless scenario.
- LGC’s own inherent robustness was evident. When 60% noise was injected, it went from 84.72 to 70.69, a decrease of \(16.55\%\). In comparison, SIIS had a decrease of \(14.39\%\); GFHF a decrease of \(22.08\%\); GTF a decrease of \(21.82\%\).
- With \(60\% \) noise, \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\)(xent) decreased its accuracy by \(11.52\%\), the lowest percentage decrease among the compared methods.
- \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\)(MSE) disappointed for both labeled and unlabeled instances.
- \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) was not noticeably superior to LGC when there was less than 60% noise.

With respect to the accuracy on labeled examples, we observed that:

- LGC was unable to correct noisy labels.
- \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\)(xent) discarded only 5% of the labels for the noiseless scenario, which is better than SIIS and LSSC.
- Moreover, \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\)(xent) had the highest average accuracy on labeled instances for \(20\%,40\%,60\%\) label noise.

5.2 Experiment 2: \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) on MNIST

Experiment Setting. This experiment was based on [10], where a few classifiers were tested on the MNIST dataset subject to label noise. In that paper, the parameters for the graph were tuned to minimize cross-validation errors. Moreover, an anchor graph was used, which is a large-scale solution. We did not use such a graph, as our TensorFlow iterative implementation of \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) was efficient enough to perform classification on MNIST in just a few seconds. As we also included the results for LGC (without anchor graph), it is interesting to observe that its accuracy decreases similarly to the previously reported results: the main difference is better performance for the noiseless scenario, which is to be expected (the anchor graph is an approximation).

Once again, we simply set \(\alpha =0.9\) for \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\). We used a symKNN matrix with \(k=15\) neighbors, and a heuristic sigma \(\sigma =423.57\) obtained by taking one-third of the mean distance to the 10th neighbor (as in [3]).
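The kernel width heuristic can be written down compactly. This is a sketch under the assumption that the distance is Euclidean and that an instance is not counted as its own neighbor; the function name `heuristic_sigma` and the random data are hypothetical, and the dense distance matrix is only practical for small datasets.

```python
import numpy as np

def heuristic_sigma(X, neighbor_rank=10):
    """One-third of the mean Euclidean distance to the `neighbor_rank`-th nearest neighbor."""
    sq = np.sum(X ** 2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)
    np.fill_diagonal(d2, np.inf)                   # exclude the instance itself
    kth = np.sort(d2, axis=1)[:, neighbor_rank - 1]
    return float(np.mean(np.sqrt(kth)) / 3.0)

rng = np.random.default_rng(7)
X = rng.normal(size=(200, 5))                      # toy data, assumed for illustration
sigma = heuristic_sigma(X, neighbor_rank=10)
```

The estimate scales linearly with the data, so rescaling the features by a constant rescales \(\sigma\) by the same constant and leaves the resulting RBF weights unchanged.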

Experiment Results. The results are found in Tables 2a and b. With respect to the accuracy on unlabeled examples, we observed that:

- \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) with cross-entropy improved the LGC baseline significantly on unlabeled instances. For 30% label noise, mean accuracy increases from 74.58% to 84.46%.
- The mean squared error loss is once again consistently inferior to cross-entropy when there is noise.
- \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) was not able to obtain better results than LSSC.

With respect to the accuracy on labeled examples, we observed that:

- LGC was not able to correct the labeled instances.
- \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) with cross-entropy improved the LGC baseline significantly on labeled instances. With 30% noise, accuracy goes from 70.00% to 89.75%.

5.3 Experiment 3: \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\) on MNIST

Experiment settings were kept the same as in Experiment 2 (without noise), and \(p = 300\) eigenfunctions were extracted. In Fig. 2, we show accuracy on the labeled set in red, and on the unlabeled set in purple. The x values on the horizontal axis relate to \(\alpha \) as follows: \(\alpha = 2^{-1/x}\). Looking at Fig. 2b, we can see that, if we do not remove the diagonal, there is much overfitting and the losses reach their minimum value much earlier than does the accuracy on unlabeled examples. When the diagonal is removed, the loss-minimizing estimates for \(\alpha \) get much closer to the optimal one (Fig. 2a). Moreover, by removing the diagonal we obtain a much better estimation of the accuracy on unlabeled data. Therefore, we can simply select the \(\alpha \) corresponding to the best accuracy on known labels.

Fig. 2. \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\) on MNIST. The x coordinate on the horizontal axis determines diffusion rate \(\alpha = 2^{-1/x}\) for \(x\ge 1\). The orange, blue and green curves represent the surrogate losses: mean absolute error, mean squared error, cross-entropy. Moreover, the accuracy on the known labeled data is shown in red, and the accuracy on unlabeled data in purple. (Color figure online)
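The reparameterization \(\alpha = 2^{-1/x}\) has a convenient reading: since \(\alpha^x = 1/2\), x is the number of random walk steps after which the reward is halved. The following minimal sketch makes this explicit; the function name `alpha_from_x` and the sample grid are hypothetical.

```python
def alpha_from_x(x):
    """Diffusion rate alpha = 2 ** (-1 / x) for x >= 1."""
    if x < 1:
        raise ValueError("x must be at least 1")
    return 2.0 ** (-1.0 / x)

# Larger x means slower decay, i.e. alpha closer to 1.
grid = [alpha_from_x(x) for x in (1, 2, 10, 100)]
```

Sweeping x on a linear or logarithmic grid therefore concentrates candidates near \(\alpha = 1\), where small changes in \(\alpha\) have the largest effect on how far labels propagate.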

6 Concluding Remarks

We have proposed the \(\texttt {LGC}\_\texttt {LVO}\_\texttt {Auto}\) framework, based on leave-one-out validation of the LGC algorithm. This encompasses two methods, \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) and \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\), for estimating label reliability and diffusion rates. We use automatic differentiation for parameter estimation, and the eigenfunction approximation is used to derive a faster solution for \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\), in particular when having to recalculate the propagation matrix for different diffusion rates.

Overall, \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) produced interesting results. For the ISOLET dataset, it was very successful at the task of label diagnosis, being able to detect and remove labels with overall better performance than every \(\ell _1\)-norm method. On the other hand, this did not translate well to the classification of unlabeled instances. For the MNIST dataset, performance is massively boosted for unlabeled instances as well. In spite of outperforming its LGC baseline by a wide margin, it could not match the reported results of LSSC on MNIST. Preliminary results showed that \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\) is a viable way to get a good estimate of the optimal diffusion rate, and removing the diagonal entries proved to be the crucial step for avoiding overfitting.

For future work, we will further evaluate \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\). We will also try to integrate \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\) and \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoL}\) into a single algorithm. Lastly, we will aim to extend \(\texttt {LGC}\_\texttt {LVO}\_\texttt {AutoD}\) to a broader class of graph-based kernels, in addition to the one resulting from the LGC baseline.

References

1. Abadi, M., et al.: TensorFlow: Large-scale machine learning on heterogeneous systems (2015). http://tensorflow.org/, software available from tensorflow.org

2. de Aquino Afonso, B.K.: Analysis of Label Noise in Graph-Based Semi-supervised Learning. Master’s thesis (2020)

3. Chapelle, O., Schölkopf, B., Zien, A. (eds.): Semi-supervised Learning. MIT Press, Cambridge (2006). http://www.kyb.tuebingen.mpg.de/ssl-book

4. de Aquino Afonso, B.K., Berton, L.: Identifying noisy labels with a transductive semi-supervised leave-one-out filter. Pattern Recognit. Lett. 140, 127–134 (2020). https://doi.org/10.1016/j.patrec.2020.09.024. http://www.sciencedirect.com/science/article/pii/S0167865520303603

5. Fergus, R., Weiss, Y., Torralba, A.: Semi-supervised learning in gigantic image collections. In: Advances in Neural Information Processing Systems, pp. 522–530 (2009)

6. Gong, C., Zhang, H., Yang, J., Tao, D.: Learning with inadequate and incorrect supervision. In: 2017 IEEE International Conference on Data Mining (ICDM), pp. 889–894. IEEE (2017)

7. Johnson, J., Douze, M., Jégou, H.: Billion-scale similarity search with GPUs. IEEE Trans. Big Data (2019)

8. Kearnes, S., McCloskey, K., Berndl, M., Pande, V., Riley, P.: Molecular graph convolutions: moving beyond fingerprints. J. Comput.-Aided Mol. Des. 30(8), 595–608 (2016). https://doi.org/10.1007/s10822-016-9938-8

9. Krijthe, J.H.: Robust semi-supervised learning: projections, limits and constraints. Ph.D. thesis, Leiden University (2018)

10. Lu, Z., Gao, X., Wang, L., Wen, J.R., Huang, S.: Noise-robust semi-supervised learning by large-scale sparse coding. In: AAAI, pp. 2828–2834 (2015)

11. Mitchell, T.: Machine Learning. McGraw-Hill, New York (1997)

12. Miyato, T., Maeda, S.I., Ishii, S., Koyama, M.: Virtual adversarial training: a regularization method for supervised and semi-supervised learning. IEEE Trans. Pattern Anal. Mach. Intell. 41, 1979–1993 (2018)

13. Shao, Y., Sang, N., Gao, C., Ma, L.: Probabilistic class structure regularized sparse representation graph for semi-supervised hyperspectral image classification. Pattern Recognit. 63, 102–114 (2017)

14. Van Engelen, J.E., Hoos, H.H.: A survey on semi-supervised learning. Mach. Learn. 109(2), 373–440 (2020). https://doi.org/10.1007/s10994-019-05855-6

15. Wang, Y.X., Sharpnack, J., Smola, A.J., Tibshirani, R.J.: Trend filtering on graphs. J. Mach. Learn. Res. 17(1), 3651–3691 (2016)

16. Ying, R., He, R., Chen, K., Eksombatchai, P., Hamilton, W.L., Leskovec, J.: Graph convolutional neural networks for web-scale recommender systems. In: Proceedings of the 24th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 974–983 (2018)

17. Zhou, D., Bousquet, O., Lal, T.N., Weston, J., Schölkopf, B.: Learning with local and global consistency. In: Advances in Neural Information Processing Systems, pp. 321–328 (2004)

18. Zhu, X., Ghahramani, Z., Lafferty, J.: Semi-supervised learning using gaussian fields and harmonic functions. In: Proceedings of the Twentieth International Conference on Machine Learning, pp. 912–919. AAAI Press (2003)

19. Catunda, J.P.K., da Silva, A.T., Berton, L.: Car plate character recognition via semi-supervised learning. In: 2019 8th Brazilian Conference on Intelligent Systems (BRACIS), pp. 735–740. IEEE (2019)

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

de Aquino Afonso, B.K., Berton, L. (2021). Optimizing Diffusion Rate and Label Reliability in a Graph-Based Semi-supervised Classifier. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science(), vol 13073. Springer, Cham. https://doi.org/10.1007/978-3-030-91702-9_34

DOI: https://doi.org/10.1007/978-3-030-91702-9_34

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91701-2

Online ISBN: 978-3-030-91702-9
