Abstract

One important task in the COVID-19 clinical protocol involves the constant monitoring of patients to detect possible signs of insufficiency, which may eventually rapidly progress to hepatic, renal or respiratory failures. Hence, a prompt and correct clinical decision not only is critical for patients prognosis, but also can help when making collective decisions regarding hospital resource management. In this work, we present a network-based high-level classification technique to help healthcare professionals on this activity, by detecting early signs of insufficiency based on Complete Blood Count (CBC) test results. We start by building a training dataset, comprising both CBC and specific tests from a total of 2,982 COVID-19 patients, provided by a Brazilian hospital, to identify which CBC results are more effective to be used as biomarkers for detecting early signs of insufficiency. Basically, the trained classifier measures the compliance of the test instance to the pattern formation of the network constructed from the training data. To facilitate the application of the technique on larger datasets, a network reduction option is also introduced and tested. Numerical results show encouraging performance of our approach when compared to traditional techniques, both on benchmark datasets and on the built COVID-19 dataset, thus indicating that the proposed technique has potential to help medical workers in the severity assessment of patients. Especially those who work in regions with scarce material resources.

This work was carried out at the Center for Artificial Intelligence (C4AI-USP), with support by the São Paulo Research Foundation (FAPESP) under grant number: 2019/07665-4 and by the IBM Corporation. This work is also supported in part by the Coordenação de Aperfeiçoamento de Pessoal de Nível Superior - Brasil (CAPES) - Finance Code 001, FAPESP under grant numbers 2015/50122-0, the Brazilian National Council for Scientific and Technological Development (CNPq) under grant number 303199/2019-9, and the Ministry of Science and Technology of China under grant number: G20200226015.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

Since the COVID-19 outbreak, advanced machine learning techniques have been applied in the development of clinical decision support tools, to help medical workers on guiding the monitoring and treatment of affected patients. Examples of these studies include the automation and introduction of new methods for routine medical activities, such as: the analysis of computed tomography (CT) images [21], COVID-19 diagnosis and screening [27], detection of new viral species and subspecies [15], medication [2], and patients monitoring through biomarkers, such as the ones provided by blood samples [26].

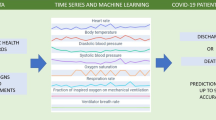

When a new COVID-19 case is confirmed, the clinical protocol involves an initial assessment, made by healthcare professionals, to classify its severity as mild, moderate or grave, followed by a monitoring activity to evaluate the progression of the disease [20]. This procedure, although it may slightly vary, depending on the region, usually includes specific tests, such as creatinine, lactic dehydrogenase and blood urea nitrogen, as well as a Complete Blood Count (CBC), which is a series of tests used to evaluate the composition and concentration of cellular components in the blood. Such type of testing, as the CBC, is widely used because it can be easily collected at any place, even in situations of medical resource scarcity. Moreover, it is usually processed in a matter of minutes, up to two hours. Therefore, it is considered as a fast, low-cost and reliable resource to assess the patient’s overall conditions. During the monitoring process, healthcare professionals then need to decide whether to order complementary tests for detecting possible signs of hepatic, renal or respiratory insufficiency. Considering that progressive respiratory failure is the primary cause of death from COVID-19, this monitoring activity hence is critical for the patient. However, we must face the problem that such complementary tests incur high costs, and may not be available in some specific places due to scarcity of material and human resources.

In this study, we present a technique that may assist medical professionals on this monitoring activity, by detecting early signs of insufficiency in COVID-19 patients solely based on CBC test results. Specifically, our approach can help to identify the level of risk and the type of insufficiency for each patient. We start by building a training dataset comprising results from both CBC and specific tests used to detect signs of hepatic, renal and respiratory insufficiency, from a total of 2,982 COVID-19 patients, who received treatment in the Israelite Albert Einstein Hospital, from Sao Paulo, Brazil [10]. In this process, we identify which CBC tests are more effective to be used as biomarkers to detect signs from each type of insufficiency. The dataset resulting from this analysis is then delivered to a modified network-based high-level classification technique, also introduced in this study. The obtained results present competitive performance of the proposed technique compared to classic and state-of-the-art ones, both on benchmark classification datasets and on the built COVID-19 dataset.

Real-world datasets usually contain complex and organizational patterns beyond the physical features (similarity, distance, distribution, etc.). Data classification, which takes into account not only physical features, but also the organizational structure of the data, is referred to as high level classification. Complex networks are suitable tools for data pattern characterization due to their ability of capturing the spatial, topological, and functional relationship among data [1, 6, 22, 23]. In this study, we present a modified high-level classification technique based on complex network modeling. It can perform classification tasks taking into account both physical attributes and the pattern formation of the data. Basically, the classification process measures the compliance of the test instance to the pattern formation of each network constructed from a class of training data. The approach presented in this work is inspired by the high-level data classification technique introduced in previous works [6, 8, 22], and here it is modified in terms of the network building parameters automation method, the topological measure used for characterizing the data pattern structure, and the inclusion of a network reduction option, to facilitate its application on larger datasets by saving processing and memory resources.

The main contributions of this work are summarized as follows:

-

Building a training dataset from unlabeled original data, comprising medical tests from almost 3,000 de-identified COVID-19 patients, provided by a hospital in Brazil, which will be made publicly available to be used in other relevant researches,

-

Introducing a network-based high level classification technique, based on the betweenness centrality measure, with a network reduction option included in the training phase, to make the technique faster and to facilitate its application on larger datasets, and

-

Applying the proposed technique both on benchmark classification datasets and on the built dataset, and comparing the results with those achieved by other traditional classification techniques, on the same data, to evaluate the possibility of detecting early signs of insufficiency in COVID-19 patients solely based on CBC tests, used as biomarkers.

2 The Proposed MNBHL Technique

In this section we describe the proposed modified network-based high level (MNBHL) classification technique. All implementations are made using the igraph Python package [9].

In classic supervised learning, initially we have an input dataset comprising n instances in the form of an array \(X_{train} = [x_1, x_2, . . . , x_n]\), where each instance \(x_i\) itself is a m-dimensional array, such that \(x_i = [x_{i, 1}, x_{i, 2}, . . . , x_{i, m}]\), representing m features of the instance. Correspondingly, the labels of the instances are represented by another array \(Y = [y_1, y_2, . . . , y_n]\), where \(y_{i} \in \mathcal {L} = \{L_{1} . . . , L_{C}\}\), and \(L_i\) is the label of the ith class. The objective of the training phase is to construct a classifier by generating a mapping \(f: X_{train} \xrightarrow {\varDelta } Y\). In the testing phase, this mapping is used to classify data instances without label. The test dataset is denoted by \(X_{test}\).

A network can be defined as a graph \(\mathcal {G}=(\mathcal {V, E})\), where \(\mathcal {V}\) is a set of nodes, \(\mathcal {V} = \{ v_1, v_2, ..., v_n \}\), and \(\mathcal {E}\) is a set of tuples, \(\mathcal {E} = \{ (i,j) : i,j \in \mathcal {V} \}\), representing the edges between each pair of nodes. In the proposed modified high-level classification technique, each node in the network represents a data instance \(x_i\) in the dataset, and the connections between nodes are created based on the their similarity in the attribute space, pairwise. In the training phase, one network component is constructed for the data instances of each class. Afterwards, there is an option to reduce the built network, by preserving only its \(r\%\) most central nodes, according to a selected centrality index. In the testing phase, each data instance is inserted as a new node in the network, one at a time, by using the same rules from the training phase. In case only one network component is affected by the insertion, then its label will be equal to the respective class of that component. In case more than one component is affected by the insertion, then its label will be given by the class whose component is the least impacted by the new node’s insertion, in terms of the network topological structure.

2.1 Description of the Training Phase

The training phase starts by balancing the values in \(X_{train}\), for dealing with unbalanced datasets. For this end, we introduce a list \(\alpha \), where each element is given by:

where \(|X_{train} |\) is the total number of elements of the training set and \(|X_{train}^{(L)} |\) is the number of elements in the subset \(X_{train}\) whose corresponding class label in Y is L. These values are also normalized, assuming \(\alpha _i = \frac{\alpha _i}{\sum _i^n \alpha _i}\).

The edges between nodes in the network are generated by measuring the pairwise similarity of the corresponding data instances in the attribute space through a combination of two rules: kNN and \(\epsilon \)-radius. The \(\epsilon \)-radius rule is used for dense regions, while the kNN is employed for sparse regions. The value of \(\epsilon \) is yielded by:

where \(D_i\) is a 1d vector containing the Euclidean distances between each element and element \(x_i\) in \(X_{train}\), and Q is a function which returns the p-th quantile of the data in D. For the second rule used for generating the edges in the network, the kNN, we opt to set the initial value for the parameter k as the \(\min (\min v, p \overline{v})\), where v is a vector containing the number of data instances per each class in the dataset. Hence, the maximum possible value for k will be the average number of data instances per class in the dataset multiplied by the same parameter p used for returning the quantile from the \(\epsilon \)-radius technique. By proceeding this way, we are therefore linking the value of k to the value of \(\epsilon \) and, consequently, to the characteristics of each dataset (here represented by \(\overline{v}\)). This is also a novelty introduced in this technique, compared to its previous versions [8, 22], with the aim of avoiding exaggerated disproportions between the number of edges yielded by the two rules.

With the initial values of \(\epsilon \) and k being set, the model then proceeds to generate the edges of the network \(\mathcal {G}\). The neighbors to be connected to a training node \(x_{i}\) are yielded by:

where \(y_i\) denotes the class label of the training instance \(x_{i}\), \(\epsilon \text {-radius}(x_i, y_i)\) returns the set \(\{x_j, \forall j \in \mathcal {V} \mid dist(x_i, x_j) \le \epsilon \wedge y_i=y_j\}\), and kNN\((x_i, y_i)\) returns the set containing the k nearest vertices of the same class of \(x_i\).

A common issue regarding the application of network-based techniques on larger datasets is that, depending on the available computational resources, the process may become too expensive, both in terms of processing power and memory consumption. For this reason, in this study, we introduce an option to reduce the number of vertices in the network, for the sake of saving processing time and memory resources, both in the training and testing phases. There are different possible strategies for reducing a network [24] and, in this work, we opt for one which consists in keeping only the nodes that occupy more central positions in it, measured in terms of the betweenness centrality [3]. This measure, broadly speaking, estimates the extent to which a vertex lies on paths between other vertices, and is considered essential in the analysis of complex networks. In a network that does not present communities, the nodes with higher betweenness centrality are among the most influential ones [7], while in networks that present communities, these nodes are the ones which usually work as links between the communities. We chose to use this measure for believing that it is able to identify the most representative nodes in the network, such that only these selected nodes can be used in the classification task.

For controlling this option, we add a reduction parameter \(r \in [0, 1]\) in the model, such that when its value is set as less than 1, only the ratio of r vertices with higher betweenness centrality are kept in the network. In this case, the values in \(X_{train}\) and Y are also reduced, accordingly. The value of \(\epsilon \) is updated, and the network edges are generated again, by using the same rules provided in Eq. 2 and Eq. 3. If, after this procedure, the number of components in the network is higher than the number of classes in the dataset, then the value of k is increased by 1, and the edges are updated again. This step can be repeated more times, if necessary, until we have, at the end, one component in the network per class in the dataset.

The complete process of the training phase is outlined in Algorithm 1. In line 2, there is the balancing task, in which the parameter \(\alpha \) is generated. Then, in line 3, the network \(\mathcal {G}\) is built, by using the values of parameters \(\epsilon \) and k. In lines 4–7, there is the optional procedure to reduce the network, by leaving only its \(r\%\) most central nodes. In lines 8–10, we have the validation of whether the number of network components is equal to the number of classes in the dataset, with the parameter k and the network \(\mathcal {G}\) being updated, if necessary.

2.2 Description of the Testing Phase

In the testing phase, the data instances from \(X_{test}\) are inserted as new vertices in the network \(\mathcal {G}\), one at a time, and the model then needs to infer the label of each testing instance. The model extracts two groups of network measures for each component, before and after the insertion of a testing instance, respectively. The testing instance is classified to the class of the component which its insertion caused the smallest variation in the network measures. In other words, a testing instance is classified to a certain class because it conforms the pattern formed by the training data of that class. In this way, the data instances from \(X_{test}\) are inserted in the network, to be classified, one by one.

The new node’s insertion is made by using the same rules described in Eq. 3 for generating the edges between the new node and the other nodes in the network. The only difference now is that, since we do not know the class of the new data instance \(x_i\), the “same label” restriction is removed from the procedure, so that the nodes to be connected to \(x_i\) are yielded by:

where \(\epsilon \text {-radius}(x_i)\) returns the set \(\{x_j, \forall j \in \mathcal {V} \mid dist(x_i, x_j) \le \epsilon \}\), and kNN\((x_i)\) returns the set containing the k nearest vertices of \(x_i\).

The model will assign the label for the new node according to the number of components affected by the node’s insertion in the network. In case only one component is affected by its insertion, i.e., when all the target nodes of its edges belong to the same component, then the model will assign the class of this component for the new node. On the other hand, when more than one component is affected by the insertion, then the model will extract new measures of the affected components and assign a class label to the testing data instance as the label of the component less impacted by the insertion, in terms of the network topology. In this work, we use betweenness centrality for measuring these impacts.

The overall impact \(I^{(L)}\) on each affected component of class L, caused by the insertion of the testing instance, is calculated and balanced according to:

where \(M_0^{(L)}\) and \(M_1^{(L)}\) are the extracted network measures for the component representing class L, before and after the insertion of the testing instance, respectively. The probability \(P(x_i^{\hat{y_i}=L})\) of the testing instance \(x_i\) to belong to class L is given by the reciprocal of the value in vector I, in the normalized form, as in:

where \(C^{(L)}\) is the network component representing class L. Finally, the label \(\hat{y_i}\) to be assigned for the testing instance \(x_i\) is yielded by:

The complete process of the testing phase is described in Algorithm 2. In lines 2–3, an initial empty list is created to store the predicted labels for each data instance in \(X_{test}\), and the current measures of each network component are extracted. In lines 4–13, the model generates a label for each testing instance, one by one. In case only one component is affected by the insertion of the testing data, then its label will be this component’s class. In case more than one component is affected by the insertion, then the testing data is classified to that class whose component is least impacted by the insertion, in terms of topological structure. In line 14, the classification list is updated with each predicted label.

3 Materials and Methods

We first evaluate the performance of the proposed MNBHL model by applying it to well-known benchmark classification datasets, both artificial and real ones. Afterwards, we apply the MNBHL model to a dataset specially built for this work, obtained from the analysis of publicly available data of de-identified COVID-19 patients from one of the main hospitals in Brazil. Each dataset is processed 20 times by all models, each time having their data items shuffled by using a different random seed. The final accuracy scores are the averaged ones achieved by each model, on each dataset. For splitting each dataset into 2 subdatasets, for training and testing purposes, we make use of a function that returns a train-test split with 75% and 25% the size of the inputs, respectively.

A succinct meta-information of the benchmark datasets used for obtaining the experimental results is given in Table 1. Circles_00 and Circles_02 datasets are two concentric circles without noise and with a 20% noise, respectively. Moons_00 and Moons_02 datasets are two moons without noise and with a 20% noise, respectively. For a detailed description of the real datasets, one can refer to [14].

We built the COVID-19 dataset from originally unlabeled data [10] collected from COVID-19 patients of the Israelite Albert Einstein Hospital, located in Sao Paulo, Brazil. The original database comprises a total of 1,853,695 results from 127 different medical tests, collected from 43,562 de-identified patients, who received treatment in the hospital from January 1, 2020 until June 24, 2020. Firstly, we identify the patients who tested positive in at least one of the following COVID-19 detection tests: polymerase chain reaction (PCR), immunoglobulin M (IgM), immunoglobulin G (IgG) and enzyme-linked immunosorbent assay (ELISA). Next, we filter the patients and left in the dataset only the ones who have made at least one complete blood count (CBC) test, in a date no earlier than the date to be tested positive for COVID-19. In case a patient has made more than one CBC test, we then consider only the results of the first test for predicting early signs of insufficiency. We adopt this procedure because we aim to make predictions based on early signs of insufficiency, hence the use of only the first CBC test for the predictions. Afterwards, we run an algorithm to automatically label each patient of the dataset as presenting or not signs from each type of insufficiency, strictly according to results from specific tests, listed in the second column of Table 2, and using their respective reference values provided in the same original database, which are standardized in the medical literature.

After the data cleansing, we end up with a total of 2,982 different patients in the dataset, with each of them belonging to one of the following 4 classes: Healthy, Hepatic Insufficiency Signs, Renal Insufficiency Signs, and Respiratory Insufficiency Signs. For determining the predictive attributes (or biomarkers) for each type of insufficiency, we make use of the Pearson correlation coefficient [16], to select the CBC tests that are most correlated to the labels, i.e., to each type of insufficiency. At the end, the following biomarkers were used for this end: age, neutrophils, basophils, lymphocytes, eosinophils and red cell distribution width (RDW). In Table 2, we provided an overview of how the COVID-19 dataset has been built, showing all tests considered, both for the labels and predictive attributes generation. The details regarding all patients in the dataset are summarized in Table 3.

The models performances on the COVID-19 dataset are evaluated by the following indicators: accuracy (ratio of true predictions over all predictions), precision, sensitivity/recall and F1 score, as defined below:

where TP, TN, FP and FN stand for true positive, true negative, false positive and false negative rates, respectively.

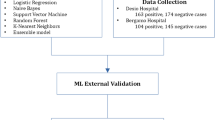

For the application, we set the parameter p from the proposed technique, responsible for the network edges generation, as \(p=0.01\) when processing the benchmark datasets, and \(p=0.02\) when processing the COVID-19 dataset. Regarding the network reduction option, we set \(r=0.1\) (10%) when processing the Digits dataset and the COVID-19 dataset and, for the others, we do not make use of this resource, since they are smaller ones. For the sake of comparison, the following traditional classification models are applied on all datasets: Decision Tree [19], Logistic Regression [12], Multilayer Perceptron (MLP) [13], Support Vector Machines (SVM) with an RBF kernel [25] and Naive Bayes [18]. We also apply the following ensemble methods: Bagging of MLP [4], Random Forest [5] and AdaBoost [11]. All models were implemented through Scikit-learn [17], by using their respective default parameter values, in all tests performed.

4 Tests Performed on Benchmark Datasets

In Table 4, we present the obtained results, in terms of average accuracy, from the application of the proposed MNBHL model to the benchmark datasets, as well as the comparison with the performances achieved by traditional models. These results indicate that the model’s overall performance is competitive, being ranked as second one when compared to traditional techniques, in the average rank. We highlight the good performance achieved on the Moons_00 and Moons_02 datasets, in which it was the only model that obtained an 100% accuracy in all 20 classification tasks processed. This result, in particular, is a strong sign that the model is able to correctly capture the topological pattern present in these two datasets, as expected in high level techniques. Observing the running times of all considered models, we can say that the MNBHL is quite competitive on that aspect as well.

In Fig. 1, we show the effect of the network reduction option on the MNBHL model performance, in terms of average accuracy and processing time, on two benchmark datasets. Each dataset is processed 10 times for obtaining these values, each time using a different random seed. As one can note, from this figure, when the network built for the Digits dataset is reduced in 90%, i.e., to only 10% of the training data, the average accuracy rate decreases from around 0.955 to around 0.905, thus with a negative variation of about 5%, while the average processing time decreases from 55 s to only 2 s, thus with a negative variation of about 96%. As for the Wine dataset, there is an initial improvement in the accuracy, when using a 30% reduction option, which is somewhat surprising, with a later decrease, as the reduction increases. Overall, on the two datasets considered, a 90% network reduction option has a negative impact of around 4% on the average accuracy rate and of around 80% on the average running time. Therefore, this resource can be used in situations when one needs to process larger datasets using a network-based approach and needs to adapt the problem to locally available computational resources, both in terms of memory and processing capacity, as it is the case in this study.

5 Experimental Results

We start this section by showing an example of different processing stages from the proposed technique, when applied to detect respiratory insufficiency signs. In Fig. 2(a), there is the initial network built by the model, i.e., the network formed by all training data instances. This network has 2.236 nodes in total (representing the patients), and two classes (with and without insufficiency signs), denoted by the orange and blue colors. In Fig. 2(b), we have the reduced version of this network, with only the 10% of nodes (223 in total) with the highest betweenness values. In this example, the new node’s insertion during the classification phase, in Fig. 2(c), affected both network components, and hence its label will be yielded by the class whose component is least impacted, in terms of betweenness centrality.

In Table 5, we display the results obtained by all classification models considered, in terms of accuracy, on detecting early signs of each type of insufficiency, on the built COVID-19 dataset. Overall, all models are more successful on detecting signs of respiratory insufficiency over other types, with most of them achieving an accuracy of more than 70% on this task, while the most difficult signs to be detected are those regarding the hepatic insufficiency. The proposed MNBHL model is the one that achieved the best performance, considering all tasks, followed by Logistic Regression and the BagMLP model, respectively. It is worth noting that the results achieved by the MNBHL model were obtained by using a network reduction option of 90%, i.e., with only 10% of the training data being preserved for testing purposes. Hence, it is expected that these accuracy rates should increase, if one can process the same data without using the network reduction resource.

Given the nature of the COVID-19 dataset, the input data may become more or less unbalanced in each age group. In this sense, younger patients, from age groups 00–20 and 21–40 years old, are expected to present a lower incidence of insufficiency cases than older patients, from age groups 60–80 and 80+ years old (see Table 3). For analyzing how such differences may affect the model’s performance, we present, in Fig. 3, the overall accuracy obtained by the MNBHL model on detecting early signs from any type of insufficiency, on each age group. The boxplots indicate that the model is able to achieve higher performances when analyzing data from older patients, reaching an average accuracy of more than 90% for the 80+ years old age group.

People who are older than 60 years are in the high risk group for COVID-19. If any sign of insufficiency is detected in patients from this group, prompt measures should be taken by healthcare professionals, oftentimes by making use of mechanical ventilation. For this reason, we also evaluate, in Table 6, how the models perform, in terms of precision, sensitivity and F1 score, specifically on data from this group, when detecting signs from any type of insufficiency. From this table, we see that the proposed MNBHL model again obtains competitive results in comparison to classic ones.

6 Final Remarks

In this work, we evaluate the possibility of predicting early signs of insufficiency in COVID-19 patients based only on CBC test results, used as biomarkers, through a supervised learning approach. For this end, a training dataset has been built, from original data of de-identified COVID-19 patients who received treatment in one of the main hospitals in Brazil. Additionally, for processing the built dataset, we use a modified network-based high-level classification technique, with novelties including the method used for automating the network building parameters, the measure used for capturing the data pattern structure, and the inclusion of a network reduction option, to facilitate its application on larger datasets. The proposed technique is evaluated by applying it firstly on benchmark classification datasets, and then on the built COVID-19 dataset, as well as by comparing its performance with those achieved by other traditional techniques, on the same data. The experimental results are encouraging, with the proposed technique obtaining competitive performance, both on the benchmark datasets and on the built COVID-19 dataset. Moreover, the considered models were particularly successful on data regarding patients from the risk group, who are older than 60 years old. Therefore, we believe the method proposed in this study can be potentially used as an additional tool in the severity assessment of COVID-19 patients who have higher risk of complications, thus helping healthcare professionals in this activity. Especially the ones who work in regions with scarce material and human resources, as in some places of Brazil.

As future research, we plan to explore the possibility of extending the classification technique by using additional centrality measures, in a combined form, both in the network reduction method and for the new node’s insertion impact evaluation. We also plan to make applications on larger datasets, such as X-ray and CT images, to further assess the model’s robustness on different type of data.

References

Anghinoni, L., Zhao, L., Ji, D., Pan, H.: Time series trend detection and forecasting using complex network topology analysis. Neural Netw. 117, 295–306 (2019)

Beck, B.R., Shin, B., Choi, Y., Park, S., Kang, K.: Predicting commercially available antiviral drugs that may act on the novel coronavirus (SARS-CoV-2) through a drug-target interaction deep learning model. Comput. Struct. Biotechnol. J. 18, 784–790 (2020)

Brandes, U.: A faster algorithm for betweenness centrality. J. Math. Sociol. 25(2), 163–177 (2001)

Breiman, L.: Bagging predictors. Mach. Learn. 24(2), 123–140 (1996)

Breiman, L.: Random forests. Mach. Learn. 45(1), 5–32 (2001)

Carneiro, M.G., Zhao, L.: Organizational data classification based on the importance concept of complex networks. IEEE Trans. Neural Netw. Learn. Syst. 29(8), 3361–3373 (2017)

Chen, D., Lü, L., Shang, M.S., Zhang, Y.C., Zhou, T.: Identifying influential nodes in complex networks. Phys. A: Stat. Mech. Appl. 391(4), 1777–1787 (2012)

Colliri, T., Ji, D., Pan, H., Zhao, L.: A network-based high level data classification technique. In: 2018 International Joint Conference on Neural Networks (IJCNN), pp. 1–8. IEEE (2018)

Csardi, G., Nepusz, T., et al.: The Igraph software package for complex network research. Int. J. Complex Syst. 1695(5), 1–9 (2006)

Fapesp: Research data metasearch. https://repositorio.uspdigital.usp.br/handle/item/243. Accessed 1 Feb 2021

Freund, Yoav, Schapire, Robert E..: A desicion-theoretic generalization of on-line learning and an application to boosting. In: Vitányi, Paul (ed.) EuroCOLT 1995. LNCS, vol. 904, pp. 23–37. Springer, Heidelberg (1995). https://doi.org/10.1007/3-540-59119-2_166

Gelman, A., Hill, J.: Data Analysis Using Regression and Multilevel Hierarchical Models, vol. 1. Cambridge University Press, New York (2007)

Hinton, G.E.: Connectionist learning procedures. Artif. Intell. 40(1–3), 185–234 (1989)

Lichman, M.: UCI machine learning repository (2013). http://archive.ics.uci.edu/ml

Metsky, H.C., Freije, C.A., Kosoko-Thoroddsen, T.S.F., Sabeti, P.C., Myhrvold, C.: CRISPR-based surveillance for COVID-19 using genomically-comprehensive machine learning design. BioRxiv (2020)

Pearson, K.: Note on regression and inheritance in the case of two parents. Proc. Royal Soc. London 58(347–352), 240–242 (1895)

Pedregosa, F., et al.: Scikit-learn: machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011)

Rish, I.: An empirical study of the Naive Bayes classifier. In: IJCAI 2001 Workshop on Empirical Methods in Artificial Intelligence, vol. 3(22) (2001), IBM New York

Safavin, S.R., Landgrebe, D.: A survey of decision tree classifier methodology. IEEE Trans. Syst., Man, Cybern. 21(3), 660–674 (1991)

Secr. Saude SP: prefeitura.sp.gov.br/cidade/secretarias/saude/vigilancia\(\_\)em\(\_\)saude/. Accessed 9 May 2021

Shan, F., et al.: Lung infection quantification of COVID-19 in CT images with deep learning. arXiv preprint arXiv:2003.04655 (2020)

Silva, T.C., Zhao, L.: Network-based high level data classification. IEEE Trans. Neural Netw. Learn. Syst. 23(6), 954–970 (2012)

Strogatz, S.H.: Exploring complex networks. Nature 410(6825), 268–276 (2001)

Valejo, A., Ferreira, V., Fabbri, R., Oliveira, M.C.F.d., Lopes, A.D.A.: A critical survey of the multilevel method in complex networks. ACM Comput. Surv. (CSUR) 53(2), 1–35 (2020)

Vapnik, V.N.: The Nature of Statistical Learning Theory. Springer, New York (2000). https://doi.org/10.1007/978-1-4757-3264-1

Yan, L., et al.: An interpretable mortality prediction model for COVID-19 patients. Nat. Mach. Intell. 2(5), 1–6 (2020)

Zoabi, Y., Deri-Rozov, S., Shomron, N.: Machine learning-based prediction of COVID-19 diagnosis based on symptoms. npj Digital Med. 4(1), 1–5 (2021)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Colliri, T., Minakawa, M., Zhao, L. (2021). Detecting Early Signs of Insufficiency in COVID-19 Patients from CBC Tests Through a Supervised Learning Approach. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science(), vol 13074. Springer, Cham. https://doi.org/10.1007/978-3-030-91699-2_4

Download citation

DOI: https://doi.org/10.1007/978-3-030-91699-2_4

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91698-5

Online ISBN: 978-3-030-91699-2

eBook Packages: Computer ScienceComputer Science (R0)