Abstract

Early identification of patients with COVID-19 is essential to enable adequate treatment and to reduce the burden on the health system. The gold standard for COVID-19 detection is the RT-PCR test. However, due to the high demand for tests, results can take days or even weeks in some regions of Brazil. Thus, an alternative for detecting COVID-19 is the analysis of Digital Chest X-rays (XR). Changes due to COVID-19 can be detected in XR, even in asymptomatic patients. In this context, models based on deep learning have great potential to be used as support systems for diagnosis or as screening tools. In this paper, we propose the evaluation of convolutional neural networks to identify pneumonia due to COVID-19 in XR. The proposed methodology consists of a preprocessing step of the XR, data augmentation, and classification by the convolutional architectures DenseNet121, InceptionResNetV2, InceptionV3, MobileNetV2, ResNet50V2, and VGG16 pre-trained with the ImageNet dataset. The results obtained with our methodology demonstrate that the VGG16 architecture presented a superior performance in the classification of XR, with an Accuracy of \(85.11\%\), Sensitivity of \(85.25\%\), Specificity of \(85.16\%\), F1-score of \(85.03\%\), and an AUC of 0.9758.

1 Introduction

Severe Acute Respiratory Syndrome Coronavirus 2 (SARS-CoV-2) is a new beta-coronavirus first identified in December 2019 in Wuhan, Hubei Province, China [3]. As of June 19, 2021, more than 178 million patients worldwide have been confirmed with COVID-19, and more than 3.85 million people have died as a result of the disease [11]. These numbers may be even higher due to asymptomatic cases and flawed tracking policies. In Brazil, a study points to seven times more infections than those reported by the authorities [12].

SARS-CoV-2 shares \(79.6\%\) genome sequence identity with SARS-CoV [37]. Despite its resemblance to the 2002 SARS-CoV virus, SARS-CoV-2 has rapidly spread across the globe, challenging health systems [28]. The burden on healthcare systems is due to the high rates of contagion and how SARS-CoV-2 manifests itself in the infected population [22]. According to data from epidemiological analyses, about \(20\%\) to \(30\%\) of patients are affected by a moderate to severe form of the disease. In addition, approximately \(5\%\) to \(12\%\) of infected patients require treatment in the Intensive Care Unit (ICU). Of those admitted to ICUs, about \(75\%\) are individuals with pre-existing comorbidities or older adults [8].

In Brazil, the first case was officially notified on February 25, 2020 [5]. Since then, due to the continental proportions of Brazil, several measures to contain and prepare the health system have been carried out in the Federative Units [24]. Brazil went through two delicate moments for the health system, with hospitals without beds and even the lack of supplies for patients in some regions of the country [33].

Currently, reverse transcription polymerase chain reaction (RT-PCR) is the test used as the gold standard for the diagnosis of COVID-19 [20, 32]. However, due to difficulties in purchasing inputs, increases in the prices of materials and equipment, the lack of laboratories and qualified professionals, and the high demands for RT-PCR tests, the diagnosis can take days or even weeks in some cities in Brazil [20]. This delay can directly impact the patient’s prognosis and be associated with a greater spread of SARS-CoV-2 in Brazil [7].

As alternatives to RT-PCR, radiological exams such as Computed Tomography (CT) and Digital Chest Radiography (XR) are being used as valuable tools for the detection and definition of treatment of patients with COVID-19 [9]. Studies using CT images report sensitivities equivalent to those of the RT-PCR test [2, 35]. Lung alterations can be observed even in asymptomatic COVID-19 patients, indicating that the disease can be detected by CT even before the onset of symptoms [19].

XR has lower sensitivity rates compared to CT. However, due to some challenges in CT use, health systems adopted XR in the front line of screening and monitoring of COVID-19 [4]. The main challenges in using CT compared to XR are: (i) exam cost, (ii) increased risk for cross-infection, and (iii) more significant exposure to radiation [34]. Furthermore, in underdeveloped countries, the infrastructure of health systems generally does not allow RT-PCR tests or the acquisition of CT images for all suspected cases. However, devices for obtaining radiographs are now more widespread and can serve as a fundamental tool in the fight against the epidemic in these countries [9].

In this perspective, artificial intelligence techniques have shown significant results in the processing of large volumes of information in various pathologies and have significant potential in aiding the diagnosis and prognosis of COVID-19 [10, 16, 18, 36]. Thus, this work aims to explore the application of Deep Learning (DL) techniques to detect pneumonia caused by COVID-19 through XR images. In particular, our objective is to evaluate the performance and provide a set of pre-trained Convolutional Neural Network (CNN) models for use as diagnostic support systems. The CNN architectures evaluated in this article are: DenseNet121 [15], InceptionResNetV2 [30], InceptionV3 [31], MobileNetV2 [27], ResNet50V2 [13], and VGG16 [29].

The study is organized into five sections. In Sect. 2, we present the most significant related works for the definition of the work. Section 3 presents the methodology of the work. Section 4 details the results. Finally, Sect. 5 presents the conclusions of the work.

2 Related Work

Artificial intelligence has made significant advances motivated by large data sets and advances in DL techniques. These advances have allowed the development of systems to aid in analyzing medical images with a precision similar to healthcare specialists. Furthermore, machine learning or data mining techniques extract relevant features and detect or predict pathologies in the images. Therefore, this section describes some relevant works in the literature to detect pneumonia and COVID-19 in XR images.

Since the initial outbreak of COVID-19, studies applying CNNs have been used to detect COVID-19 in XR images. However, at the beginning of the outbreak, the lack of positive XR images was a problem. In [14], the performance of seven CNNs using fine-tuning was compared in a set of 50 XR, 25 positive cases, and 25 negative cases for COVID-19. However, the few images used, the lack of a test set, and the learning graphs presented indicate that the models could not generalize the problem.

In the work proposed by [1], a CNN-based Decompose, Transfer, and Compose (DeTraC) model is used for COVID-19 XR classification. The model consists of using a pre-trained CNN for the ImageNet set as a feature extractor. Selected features go through Principal Component Analysis (PCA) to reduce dimensionality. These selected characteristics are then classified by a CNN into COVID-19 or not. The proposed model reached an accuracy of 93.1% and sensitivity of 100% for the validation set.

DarkCovidNet, a convolutional model based on DarkNet, is used for the COVID-19 detection task in XR [21]. The authors used seventeen DarkNet convolutional layers, achieving an accuracy of 98.08% for binary classification and 87.02% for multi-class classification. In [17], the Xception CNN pre-trained on the ImageNet dataset is used to classify images into normal, bacterial pneumonia, viral pneumonia, and COVID-19 pneumonia. The study achieved an average accuracy of 87% and an F1-Score of 93%.

Another alternative for detecting COVID-19 in XR is detection in levels using VGG-16 [6]. At the first level, XRs are analyzed to detect pneumonia or not. In the second level, the classification of XRs in COVID-19 or not is performed. Finally, on the third level, the heat maps of the activations for the COVID-19 XRs are presented. The accuracy of the study, according to the authors, is 99%.

In summary, several recent works have investigated the use of CNNs for the COVID-19 XR classification. However, most of these works were based on small datasets, performed evaluations directly on the validation sets, and only a few performed the multiclass classification. Therefore, these studies lack evidence on their ability to generalize the problem, making it unfeasible to be used as an aid system for the radiologist. Thus, our contribution to these gaps is the proposal to evaluate six convolutional models in a dataset with more than five thousand XR. We evaluated models on a set of XRs not used in training and validation.

3 Materials and Methods

An overview of the methodology employed in this work is presented in Fig. 1. The methodology is divided into four stages: preprocessing, data augmentation, training, and testing. Image preprocessing consists of image resizing and contrast normalization (Sect. 3.2). The data augmentation step describes the methods used to generate synthetic images (Sect. 3.3). In the training stage, the CNN models and the parameters used are defined (Sect. 3.4). Finally, in the test step, we performed the performance evaluation of the CNN models (Sect. 3.4).

3.1 Dataset

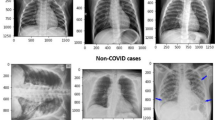

We use XRs from the Curated Dataset for COVID-19 [26]. The dataset is a combination of chest XR images from different sources. It contains XRs of normal lungs, viral pneumonia, bacterial pneumonia, and COVID-19. The authors analyzed all images to avoid duplicates. In addition, for the final selection of images, the authors used a CNN to remove noisy images, such as distorted, cropped, or annotated XRs [26]. In Table 1 we present the number of images used in our work.

3.2 Preprocessing

In this step, we resize the XRs to \(512\times 512\) pixels. This size limitation is imposed by available computing power. To avoid distortions in the XR, a proportional reduction was applied to each of the dimensions of the images. We added a black border to complement the size for the dimension that was smaller than 512 pixels.
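The resizing rule above can be sketched as a small geometry calculation. This is only an illustration in plain Python: the function name `letterbox_geometry` is hypothetical, and splitting the black border evenly between the two sides is an assumption, since the text does not say where the border is placed.

```python
def letterbox_geometry(width, height, target=512):
    """Return the resized size and the border widths needed to reach target x target."""
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    # A single scale factor for both axes keeps the aspect ratio intact.
    scale = target / max(width, height)
    new_w = min(target, max(1, round(width * scale)))
    new_h = min(target, max(1, round(height * scale)))
    # Assumption: the missing pixels are split between both sides (centered image).
    pad_x = target - new_w
    pad_y = target - new_h
    left, top = pad_x // 2, pad_y // 2
    return {
        "size": (new_w, new_h),
        "left": left,
        "right": pad_x - left,
        "top": top,
        "bottom": pad_y - top,
    }


# A 2000 x 1500 radiograph is scaled to 512 x 384 and padded vertically.
geometry = letterbox_geometry(2000, 1500)
print(geometry)
```

The returned values can be passed to any image library that offers a resize and a constant-border operation.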

As radiographic findings are often low-contrast regions, contrast enhancement techniques can be used in XR images. The application of techniques such as Contrast-Limited Adaptive Histogram Equalization (CLAHE) to breast and chest radiography images helped in the generalization of convolutional models and increased performance metrics [23, 36]. Therefore, we use CLAHE to enhance XR images. CLAHE subdivides the image into sub-areas and uses interpolation between their edges. To avoid amplifying noise, it uses a gray-level threshold and redistributes the pixels above that threshold across the image. CLAHE can be defined by:

\[ p = \left[ p_{\text{max}} - p_{\text{min}} \right] \cdot G(f) + p_{\text{min}} \]

where p is the pixel’s new gray level value, the values \(p_{\text {max}} \) and \(p_{\text {min}} \) are the highest and lowest pixel values in the neighborhood, and G(f) corresponds to the cumulative distribution function [38]. In Fig. 2 we present an example with the original and preprocessed image.
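To make the role of the threshold and of G(f) concrete, the following is a minimal single-tile sketch, assuming the usual mapping \(p = (p_{\text{max}} - p_{\text{min}}) \cdot G(f) + p_{\text{min}}\). The function name, the `clip_limit` value, and the uniform redistribution of the clipped excess are assumptions made for illustration; a full CLAHE implementation additionally interpolates between neighboring tiles, which is omitted here.

```python
def clipped_equalization(tile, clip_limit, levels=256):
    """Map the gray levels of one tile using a clipped-histogram CDF."""
    hist = [0] * levels
    for value in tile:
        hist[value] += 1
    # Clip each bin at the threshold and spread the excess evenly over all bins.
    excess = sum(max(0, count - clip_limit) for count in hist)
    clipped = [min(count, clip_limit) + excess / levels for count in hist]
    total = sum(clipped)
    # Cumulative distribution function G(f), with values in [0, 1].
    cdf, running = [], 0.0
    for count in clipped:
        running += count
        cdf.append(running / total)
    p_min, p_max = min(tile), max(tile)
    return [round((p_max - p_min) * cdf[value] + p_min) for value in tile]


# Hypothetical gray levels of a small neighborhood (not data from the study).
tile = [10, 10, 10, 10, 12, 50, 200]
print(clipped_equalization(tile, clip_limit=2))
```

Lowering `clip_limit` flattens the histogram more aggressively before the mapping, which limits how strongly a dominant gray level (and its noise) is stretched.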

Finally, we use stratified K-fold cross-validation as the method for evaluating the models, with K=10. With 10 folds, each model is trained 10 times. At the end of the training, we calculate the mean and standard deviation of the results for the defined metrics.
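As an illustration of what stratification means here, the sketch below deals the samples of each class round-robin across the folds so that every fold keeps roughly the same class proportions. It is a plain-Python approximation, not the code used in the study; the function name, the seed, and the toy label counts are hypothetical, and the further split of each training portion into training and validation sets is not shown.

```python
import random
from collections import defaultdict


def stratified_kfold(labels, k=10, seed=0):
    """Yield (train_idx, test_idx) pairs with class ratios preserved per fold."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the samples of each class round-robin across the folds.
        for position, index in enumerate(indices):
            folds[position % k].append(index)
    for i in range(k):
        test_idx = sorted(folds[i])
        train_idx = sorted(
            idx for j, fold in enumerate(folds) if j != i for idx in fold
        )
        yield train_idx, test_idx


# Toy labels only; the real class counts are those reported in Table 1.
labels = ["normal"] * 40 + ["viral"] * 30 + ["bacterial"] * 20 + ["covid"] * 10
for train_idx, test_idx in stratified_kfold(labels, k=10):
    print(len(train_idx), len(test_idx))
```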

3.3 Data Augmentation

A technique that helps in the convergence and learning of CNNs is data augmentation. Thus, we use the ImageDataGenerator class from Keras to perform on-the-fly data augmentation. We apply a horizontal flip, rotations of up to 20\(^\circ \), and shear to the training set of each fold. We do not apply transformations to XRs in the validation and test sets.

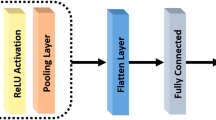

3.4 Convolutional Architectures

We use six CNN architectures for chest XR classification: DenseNet121 [15], InceptionResNetV2 [30], InceptionV3 [31], MobileNetV2 [27], ResNet50V2 [13], and VGG16 [29]. We use the pre-trained weights provided by Keras for the ImageNet dataset for each model. Initializing the models with pre-trained weights speeds up their convergence. Table 2 presents the hyper-parameters used for each of the architectures evaluated in this work.

In the training stage, we use categorical cross-entropy as the loss function. The categorical cross-entropy measures the negative log-likelihood of the true class under the predicted probability vector. To optimize the weights of the models, we use the Adam algorithm. At the end of each epoch, we use the validation set to measure the model’s accuracy on an independent set and to obtain the best training weights.
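For a one-hot target \(y\) and a predicted probability vector \(\hat{y}\) over the four classes, the loss is \(L = -\sum_i y_i \log \hat{y}_i\). The short sketch below works through two made-up predictions for a COVID-19 sample; the probabilities are illustrative only, and the small `eps` guard against \(\log 0\) is an implementation assumption.

```python
import math


def categorical_cross_entropy(y_true, y_pred, eps=1e-12):
    """Negative log-likelihood of the one-hot target under the predicted vector."""
    return -sum(t * math.log(max(p, eps)) for t, p in zip(y_true, y_pred))


# Class order assumed for illustration: normal, viral, bacterial, COVID-19.
target = [0, 0, 0, 1]
confident = categorical_cross_entropy(target, [0.05, 0.05, 0.10, 0.80])
unsure = categorical_cross_entropy(target, [0.25, 0.25, 0.25, 0.25])
print(round(confident, 4), round(unsure, 4))
```

The confident prediction yields a loss of about 0.223 (that is, \(-\ln 0.8\)), while the uniform prediction yields about 1.386 (\(\ln 4\)), so the loss rewards probability mass placed on the correct class.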

4 Results and Discussion

In this section, we present and evaluate the results obtained using the proposed convolutional models.

4.1 Models Training and Validation

We train the models for 100 epochs for each fold. We evaluate each model at the end of training on the validation set. The best set of weights was chosen automatically based on the error on the validation set. Figure 3 presents the confusion matrices for each of the models.

Analyzing the confusion matrices (Fig. 3), we can see that all classifiers correctly classified the vast majority of cases. We can highlight the tendency to classify cases of viral pneumonia as bacterial and bacterial pneumonia as viral. This trend may indicate that the number of viral and bacterial pneumonia cases was insufficient for an optimized generalization for these two classes. As for the classification of normal cases, the ResNet50V2 model had the highest rate of misclassification. The classification of pneumonia due to COVID-19 showed similar success rates. The highest number of false negatives for COVID-19 was presented by the InceptionResNetV2 model, with 4 cases. The lowest number of false negatives was presented by the InceptionV3, ResNet50V2, and VGG16 models, with 1 case each.

From the confusion matrix, we can calculate the performance metrics of the models [25]. Table 3 presents the values obtained for the evaluation metrics in the test fold based on the confusion matrices presented in Fig. 3.
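As a sketch of how such metrics follow from a multi-class confusion matrix, the code below treats each class one-vs-rest and derives sensitivity, specificity, and F1-score from its true/false positives and negatives. The matrix values are invented for illustration and are not the results of this study, and averaging the per-class values with equal weight (macro averaging) is an assumption, since the averaging scheme is not stated.

```python
def per_class_metrics(cm):
    """cm[i][j] = number of samples of true class i predicted as class j."""
    n = len(cm)
    total = sum(sum(row) for row in cm)
    results = []
    for c in range(n):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp
        fp = sum(cm[r][c] for r in range(n)) - tp
        tn = total - tp - fn - fp
        sensitivity = tp / (tp + fn) if tp + fn else 0.0
        specificity = tn / (tn + fp) if tn + fp else 0.0
        precision = tp / (tp + fp) if tp + fp else 0.0
        f1 = (
            2 * precision * sensitivity / (precision + sensitivity)
            if precision + sensitivity
            else 0.0
        )
        results.append(
            {"sensitivity": sensitivity, "specificity": specificity, "f1": f1}
        )
    accuracy = sum(cm[c][c] for c in range(n)) / total
    return accuracy, results


def macro_average(results, key):
    """Unweighted mean of one metric over all classes."""
    return sum(r[key] for r in results) / len(results)


# Hypothetical 4-class matrix (rows: true class, columns: predicted class).
toy_cm = [
    [9, 1, 0, 0],
    [1, 7, 2, 0],
    [0, 2, 8, 0],
    [0, 0, 1, 9],
]
accuracy, results = per_class_metrics(toy_cm)
print(accuracy, macro_average(results, "sensitivity"))
```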

Analyzing the results, it is clear that there was relative stability in the performance metrics analyzed for each model. For accuracy, the largest standard deviation was ±2.27%, and the largest difference between the models was 3.83% (VGG16 and DenseNet121). These results indicate an adequate generalization of each model for detecting pneumonia due to COVID-19. As for sensitivity, which measures the ability to classify positive classes correctly, the models differ by 3.96%. The maximum variation between models for specificity was 3.83%.

In general, for the chest XR classification, the ResNet50V2 and VGG16 models showed the best results. This better performance can be associated with the organization of the models. For ResNet50V2, we can highlight the residual blocks, which allow the weights to adapt so that filters that were not useful for the final decision are bypassed [13]. As for VGG16, the performance may indicate that classifying XRs from lower-level features, such as more basic shapes, is better suited to differentiating viral pneumonia, bacterial pneumonia, COVID-19, and normal cases. However, VGG16 is computationally heavier and requires more training time. In addition, VGG16 is prone to the vanishing gradient problem.

Figure 4 presents the ROC curves for each fold and model in the test fold. When comparing the accuracy, sensitivity, specificity, and F1-score metrics, the Area under the ROC Curve (AUC) showed the greatest stability, with a variation of only \(\pm 0.80\%\).
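The AUC of a single one-vs-rest ROC curve can be read as the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one. The sketch below computes it directly from that definition; the function name and the scores are hypothetical, and the pairwise loop is chosen for clarity rather than speed.

```python
def roc_auc(labels, scores):
    """Probability that a random positive is scored above a random negative."""
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    if not positives or not negatives:
        raise ValueError("both classes are required")
    wins = 0.0
    for pos in positives:
        for neg in negatives:
            if pos > neg:
                wins += 1.0
            elif pos == neg:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))


# Made-up scores for one class treated as positive against the rest.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.2]
print(roc_auc(labels, scores))
```

In this toy example eight of the nine positive/negative pairs are ranked correctly, so the AUC is 8/9.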

4.2 Comparison with Related Work

A direct quantitative comparison with related works is difficult because of private datasets, methodologies that use different image crops, the selection of specific subsets of the datasets, the removal of some images, and differing classification objectives. With these caveats, we organized in Table 4 a quantitative comparison with similar works.

In general, the convolutional architectures evaluated in our work achieved performance similar to the works that perform multi-class classification [1, 17, 21]. Compared to works that perform binary classification, the difference in accuracy is approximately 13%. However, the work with the best accuracy uses a reduced set of positive images for COVID-19 (250 images) out of a total of 6523 [6]. Thus, the dataset used in that work is not balanced, which may indicate a bias in the study. This bias may be associated with the lower sensitivity (87.00%) of that study compared to its other metrics.

The sensitivity was similar to the other studies, except for the work with 100% sensitivity [1]. However, the size of the dataset used in that study (196 XR images) can influence the results of the method. In addition, the method proposed by [1] is more complex than the one proposed in our study. In this way, we can highlight that our method uses a balanced dataset. We also use stratified K-fold cross-validation for model training, which allows us to assess the stability of the evaluation metrics and the confidence interval. Finally, the trained models and source code are available on GitHub [Hidden ref.].

5 Conclusion

This article compared six convolutional architectures for the detection of pneumonia due to COVID-19 in chest XR images. In order to improve the generalizability of the results, we apply a set of preprocessing techniques. We use several models with pre-trained weights for the ImageNet dataset, and we propose the classification in normal cases, viral pneumonia, bacterial pneumonia, or COVID-19.

The main scientific contribution of this study was the performance comparison of the DenseNet121, InceptionResNetV2, InceptionV3, MobileNetV2, ResNet50V2, and VGG16 convolutional architectures for the detection of pneumonia due to COVID-19. These pre-trained models can serve as a basis for future studies and provide a second opinion to the radiologist during XR analysis.

As future work, we intend to analyze the influence of datasets on the learning of characteristics of COVID-19 XR images, analyzing whether CNNs can generalize characteristics across different datasets. Furthermore, we hope to investigate Explainable Artificial Intelligence approaches to convey to specialists the features present in the images that are used to form the diagnostic suggestion. Finally, multimodal methodologies that combine, for example, clinical data and images can be helpful for the transparency of diagnostic recommendations.

References

1. Abbas, A., Abdelsamea, M.M., Gaber, M.M.: Classification of covid-19 in chest x-ray images using DeTraC deep convolutional neural network. Appl. Intell. 51(2), 854–864 (2021)

2. Ai, T., et al.: Correlation of chest CT and RT-PCR testing for coronavirus disease 2019 (covid-19) in china: a report of 1014 cases. Radiology 296(2), E32–E40 (2020). https://doi.org/10.1148/radiol.2020200642

3. Andersen, K.G., Rambaut, A., Lipkin, W.I., Holmes, E.C., Garry, R.F.: The proximal origin of SARS-CoV-2. Nat. Med. 26(4), 450–452 (2020)

4. Borghesi, A., Maroldi, R.: Covid-19 outbreak in Italy: experimental chest x-ray scoring system for quantifying and monitoring disease progression. La radiologia medica 125(5), 509–513 (2020)

5. Brasil, M.D.S.: Boletim epidemiológico especial: Doença pelo coronavírus covid-19 (2020). https://saude.gov.br/images/pdf/2020/July/22/Boletim-epidemiologico-COVID-23-final.pdf

6. Brunese, L., Mercaldo, F., Reginelli, A., Santone, A.: Explainable deep learning for pulmonary disease and coronavirus covid-19 detection from x-rays. Comput. Methods Program. Biomed. 196, 105608 (2020)

7. Candido, D.S., et al.: Evolution and epidemic spread of SARS-CoV-2 in Brazil. Science 369(6508), 1255–1260 (2020)

8. CDC COVID-19 Response Team: Severe outcomes among patients with coronavirus disease 2019 (covid-19)-united states, February 12-march 16, 2020. MMWR Morb. Mortal Wkly. Rep 69(12), 343–346 (2020)

9. Cohen, J.P., et al.: Covid-19 image data collection: Prospective predictions are the future (2020)

10. De Fauw, J., et al.: Clinically applicable deep learning for diagnosis and referral in retinal disease. Nat. Med. 24(9), 1342–1350 (2018)

11. Dong, E., Du, H., Gardner, L.: An interactive web-based dashboard to track covid-19 in real time. Lancet. Infect. Dis 20(5), 533–534 (2020)

12. EPICOVID: Covid-19 no brasil: várias epidemias num só país (2020). http://epidemio-ufpel.org.br/uploads/downloads/276e0cffc2783c68f57b70920fd2acfb.pdf

13. He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 770–778 (2016)

14. Hemdan, E.E.D., Shouman, M.A., Karar, M.E.: Covidx-net: A framework of deep learning classifiers to diagnose covid-19 in x-ray images. arXiv preprint arXiv:2003.11055 (2020)

15. Huang, G., Liu, Z., Van Der Maaten, L., Weinberger, K.Q.: Densely connected convolutional networks. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 4700–4708 (2017)

16. Jatobá, A., Lima, L., Amorim, L., Oliveira, M.: CNN hyperparameter optimization for pulmonary nodule classification. In: Anais do XX Simpósio Brasileiro de Computação Aplicada à Saúde, pp. 25–36. SBC, Porto Alegre, RS, Brasil (2020). https://doi.org/10.5753/sbcas.2020.11499, https://sol.sbc.org.br/index.php/sbcas/article/view/11499

17. Khan, A.I., Shah, J.L., Bhat, M.M.: Coronet: a deep neural network for detection and diagnosis of covid-19 from chest x-ray images. Comput. Methods Program. Biomed. 196, 105581 (2020)

18. Lakhani, P., Sundaram, B.: Deep learning at chest radiography: automated classification of pulmonary tuberculosis by using convolutional neural networks. Radiology 284(2), 574–582 (2017)

19. Lee, E.Y., Ng, M.Y., Khong, P.L.: Covid-19 pneumonia: what has CT taught us? Lancet. Infect. Dis 20(4), 384–385 (2020)

20. Marson, F.A.L.: Covid-19 - 6 million cases worldwide and an overview of the diagnosis in brazil: a tragedy to be announced. Diagn. Microbiol. Infect. Dis. 98(2), 115113 (2020)

21. Ozturk, T., Talo, M., Yildirim, E.A., Baloglu, U.B., Yildirim, O., Rajendra Acharya, U.: Automated detection of covid-19 cases using deep neural networks with x-ray images. Comput. Biol. Med. 121, 103792 (2020)

22. Pascarella, G., et al.: Covid-19 diagnosis and management: a comprehensive review. J. Internal Med. 288(2), 192–206 (2020)

23. Pooch, E.H.P., Alva, T.A.P., Becker, C.D.L.: A deep learning approach for pulmonary lesion identification in chest radiographs. In: Cerri, R., Prati, R.C. (eds.) BRACIS 2020. LNCS (LNAI), vol. 12319, pp. 197–211. Springer, Cham (2020). https://doi.org/10.1007/978-3-030-61377-8_14

24. Rafael, R.D.M.R., Neto, M., de Carvalho, M.M.B., David, H.M.S.L., Acioli, S., de Araujo Faria, M.G.: Epidemiologia, políticas públicas e pandemia de covid-19: o que esperar no brasil? [epidemiology, public policies and covid-19 pandemics in brazil: what can we expect?] [epidemiologia, políticas públicas y la pandémia de covid-19 en brasil: que podemos esperar?]. Revista Enfermagem UERJ 28, 49570 (2020)

25. Ruuska, S., Hämäläinen, W., Kajava, S., Mughal, M., Matilainen, P., Mononen, J.: Evaluation of the confusion matrix method in the validation of an automated system for measuring feeding behaviour of cattle. Behav. Process. 148, 56–62 (2018). https://doi.org/10.1016/j.beproc.2018.01.004

26. Sait, U., et al.: Curated dataset for covid-19 posterior-anterior chest radiography images (x-rays). (September 2020). https://doi.org/10.17632/9xkhgts2s6.3

27. Sandler, M., Howard, A., Zhu, M., Zhmoginov, A., Chen, L.C.: MobileNetV2: inverted residuals and linear bottlenecks. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 4510–4520 (2018)

28. Shereen, M.A., Khan, S., Kazmi, A., Bashir, N., Siddique, R.: Covid-19 infection: origin, transmission, and characteristics of human coronaviruses. J. Adv. Res. 24, 91–98 (2020)

29. Simonyan, K., Zisserman, A.: Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556 (2014)

30. Szegedy, C., Ioffe, S., Vanhoucke, V., Alemi, A.: Inception-v4, inception-resnet and the impact of residual connections on learning. In: Proceedings of the AAAI Conference on Artificial Intelligence (2017)

31. Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J., Wojna, Z.: Rethinking the inception architecture for computer vision. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 2818–2826 (2016)

32. Tang, Y.W., Schmitz, J.E., Persing, D.H., Stratton, C.W.: Laboratory diagnosis of covid-19: current issues and challenges. J. Clin. Microbiol. 58(6) (2020). https://doi.org/10.1128/JCM.00512-20, https://jcm.asm.org/content/58/6/e00512-20

33. Taylor, L.: Covid-19: Is manaus the final nail in the coffin for natural herd immunity? BMJ 372 (2021)

34. Wong, H.Y.F., et al.: Frequency and distribution of chest radiographic findings in patients positive for covid-19. Radiology 296(2), E72–E78 (2020)

35. Xie, X., et al.: Chest CT for typical coronavirus disease 2019 (covid-19) pneumonia: Relationship to negative RT-PCR testing. Radiology 296(2), E41–E45 (2020)

36. Zeiser, F.A., et al.: Segmentation of masses on mammograms using data augmentation and deep learning. J. Digital Imaging 1–11 (2020)

37. Zhou, P., et al.: A pneumonia outbreak associated with a new coronavirus of probable bat origin. Nature 579(7798), 270–273 (2020)

38. Zuiderveld, K.: Graphics gems iv. In: Heckbert, P.S. (ed.) Graphics Gems, chap. Contrast Limited Adaptive Histogram Equalization, pp. 474–485. Academic Press Professional Inc, San Diego, CA, USA (1994)

Acknowledgements

We thank the anonymous reviewers for their valuable suggestions. The authors would like to thank the Coordination for the Improvement of Higher Education Personnel - CAPES (Financial Code 001), the National Council for Scientific and Technological Development - CNPq (Grant number 309537/2020-7), the Research Support Foundation of the State of Rio Grande do Sul - FAPERGS (Grant number 08/2020 PPSUS 21/2551-0000118-6), and the NVIDIA GPU Grant Program for their support in this work.

© 2021 Springer Nature Switzerland AG

Cite this paper

Zeiser, F.A., Costa, C.A.d., Ramos, G.d.O., Bohn, H., Santos, I., Righi, R.d.R. (2021). Evaluation of Convolutional Neural Networks for COVID-19 Classification on Chest X-Rays. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science(), vol 13074. Springer, Cham. https://doi.org/10.1007/978-3-030-91699-2_9

DOI: https://doi.org/10.1007/978-3-030-91699-2_9

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91698-5

Online ISBN: 978-3-030-91699-2

eBook Packages: Computer Science (R0)