Abstract

Collaborative filtering recommender systems are essential tools in many modern applications. Their main advantage compared with the alternatives is that they require only a matrix of user-item interactions to recommend a subset of relevant items for a given user. However, the increasing volume of the data consumed by these systems may lead to a representation model with very high sparsity and dimensionality. Several approaches to overcome this problem have been proposed, neural embeddings being one of the most recent. Since then, many recommender systems have been built using this representation model, but few have used temporal information during the learning phase. This study shows how to adapt a pioneering method of item embeddings by adding a sliding window over time, in conjunction with a split in the user’s interaction history. Results indicate that considering temporal information when learning neural embeddings for items can significantly improve the quality of the recommendations.

This study was financed by the Coordenação de Aperfeiçoamento de Pessoal de Nível Superior – Brasil (CAPES) Finance Code 88882.426978/2019-01, and Fundação de Amparo à Pesquisa do Estado de São Paulo (FAPESP) grant #2020/09354-3.


1 Introduction

Personalized recommendations have become an increasingly common practice in our digital lives [5]. With the popularization of technology and ease of making information available, users have access to many items such as movies, music, and news, making it challenging for them to discover everything available and explore their interests. Recommender systems then emerged in the 1990s to overcome this problem, employing filtering techniques to direct the user to a restricted subset of relevant items [2].

Among several types of recommender systems, collaborative filtering is currently the most prolific in scientific literature and practical applications. Its popularity can be credited to its ease of application, since it depends only on a matrix of interactions between users and items to generate recommendations based on similar tastes among users [5].

In early studies, the general approach was to use item-based neighborhood algorithms to generate the recommendations [7]. However, the representation commonly adopted grows fast in size as the number of users and items increases; in real-world scenarios, these numbers grow constantly and much faster than the number of user-item interactions. Consequently, the traditional representation model becomes highly sparse and high-dimensional, demanding more and more computational power and reducing the quality of the results [18].

Many approaches have been presented to overcome this problem, such as matrix factorization [20] and neural models [36]. In the latter, methods based on neural embeddings have been gaining ground in recent literature. These methods aim to represent items or users as distributed vectors, resulting in a representation with reduced dimensionality while keeping its latent meaning [4, 13].

Although many studies have proposed different methods based on neural embeddings [11, 31], most of these studies do not consider the moment when the user interacted with the item [6]. The static approach – as it is known – while very present in the literature, has proven flaws [21]. Taking into account the timing and order of interactions can lead to significant improvements in results [3, 6, 33].

In this context, this study shows how to adapt Item2Vec [4] – a pioneering method of neural embeddings for a recommendation context – to consider temporal information during training by outlining an approach based on a sliding window over time, a strategy commonly employed in similar scenarios [33].

2 Related Work

Neighborhood algorithms became very popular in the first collaborative filtering recommender systems, given their relatively low complexity and ability to produce satisfying results. Early neighborhood algorithms represented users as vectors and yielded recommendations based on the interaction history of the users closest to the target user [7]. Subsequent approaches began to represent items as vectors [28]. This strategy achieved better results, trained more efficiently, and produced results that were more stable over time, reducing the need for retraining [17].

Gradually, the data used by recommender systems has grown, bringing new challenges to the area such as high sparsity and dimensionality of the commonly adopted representation [18]. Many algorithms for dimensionality reduction have been proposed to overcome this problem. Among different strategies, matrix factorization techniques have become state-of-the-art and are still very relevant to this day [25]. In this approach, users and items are represented as matrices of reduced dimensionality, and the recommendation is made using user-item similarities [20].

With the growth in research of neural networks, the use of neural models for recommender systems has increased significantly in recent years. Among several proposals for using neural networks for recommendation, neural embeddings have been gaining ground in the literature [13]. Inspired by Natural Language Processing (NLP) neural models capable of generating vector representations of words [24], these models were adapted to learn low-dimensional embeddings for items and users [27].

Grbovic et al. [13] addressed an ad recommendation problem based on receipts collected by email and proposed the Prod2Vec and User2Vec methods. The former is based on a skip-gram architecture [24] learning product embeddings and recommending through item similarity, while the latter is based on Paragraph Vector [22], learning embeddings of users and items concurrently and recommending through the similarities between users and items.

With an approach similar to Prod2Vec, Barkan & Koenigstein [4] proposed Item2Vec in the following year. The method was also inspired by consolidated techniques from the NLP area [24] and achieved promising results, surpassing state-of-the-art matrix factorization methods in different tasks.

In the ensuing years, different strategies for learning neural embeddings in recommender systems were proposed, such as (i) adapting existing models to leverage item metadata [11, 31]; (ii) using content information during the embeddings training phase [14, 35]; (iii) employing more complex neural models, e.g., LSTMs or CNNs [29, 30]; (iv) training unaltered NLP models over textual data of items, users, or interactions [9, 15]; and (v) considering different types of user-item interaction [37].

Few studies on neural embeddings in recommender systems explored the impact of using temporal information, i.e., when the interaction happened. The most common approach is session-based recommendation, in which only items consumed by the user in a short period are used for training the embeddings, considering an ordered relationship [12] or not [13].

The static approach is still customary in recommender systems as a whole, even though it has flaws [21]. Users’ interests change over time, and by not accounting for it, important information can be lost [6, 33]. It is known that considering temporal aspects tends to improve the quality of the recommendation and generate greater confidence for the user [3]. Additionally, static algorithms trained in offline scenarios can suffer a drop in performance when applied in online and real scenarios with temporal properties [21].

Recommender systems capable of learning relevant information from temporal data have been proposed since the beginning of the field [1], but the topic gained relevance when timeSVD++ [19], a temporal adaptation of the matrix factorization method SVD++, won one of the rounds of the Netflix Prize (Note 1).

Two approaches can be applied to temporal information: using time as context, based on the idea of cyclical phenomena, as is done in Time-Aware Recommender Systems (TARS); or using time as a sequence, viewing temporal information as a chronologically ordered sequence, the strategy used in Time-Dependent Recommender Systems (TDRS) [33].

In TDRS two data interpretation methodologies are commonly adopted: the use of decay functions, which make newer interactions have more weight during the learning phase than older interactions [10]; and the use of sliding windows (also known as “forgetting techniques”) in which interactions are sorted chronologically, and only a slice of the data is consumed during training, with the remaining information receiving less weight or being ignored [33].

In the last decade, some studies showed promising results using sliding window strategies for recommender systems. For instance, Vinagre & Jorge [32] employed two different sliding window techniques in a neighborhood-based recommender. Matuszyk et al. [23] proposed five forgetting strategies for matrix factorization using temporal windows or fading factors during data preprocessing and model training. Wang et al. [34] trained a neural network to predict shopping baskets in an e-commerce environment using a unit-size sliding window over past baskets. All studies above surpassed baseline algorithms or improved scalability without compromising accuracy.

In line with the positive results that have been presented in the literature, we propose SeqI2V, a sequential Item2Vec [4] adaptation using sliding windows over time. In our proposal, the embeddings learning phase is influenced by when interactions occur, increasing the context these item representations carry. The final recommendation is statically computed using an item-based neighborhood approach, just like the original method.

3 The Proposed Method

Methods based on neural embeddings are becoming state-of-the-art for recommender systems, presenting results comparable to established approaches, such as neighborhood techniques and matrix factorization. Item2Vec was a pioneering technique in bringing neural embedding techniques from Natural Language Processing to the recommendation scenario, adapting the skip-gram network to generate item embeddings. As stated by the authors, the method outperformed the well-known SVD in different evaluated tasks.

Some subsequent studies adapted Item2Vec to specific scenarios or improved the embeddings training phase [11, 31]. However, temporal information is still underexplored, limiting the application of Item2Vec to offline scenarios, in which pattern variations over time are ignored. To fill this important gap, we propose using sliding windows over time to introduce temporal information in the learning stage while keeping the elegant simplicity of Item2Vec.

In the following, we explain the traditional Item2Vec model and present SeqI2V – a sequential Item2Vec adaptation using sliding windows over time.

3.1 Item2Vec

Item2Vec is an artificial neural network composed of three layers: an input, a hidden and an output layer, as shown in Fig. 1.

Given a recommender system composed of a set U of users, a set I of items and multiple sets \(I_u\) of items consumed by user u, the input layer \(\mathcal {J}\) has size |I|, as it is composed of items i encoded as one-hot vectors (all elements in the array have a value of 0, except for the one that represents the item, which has a value of 1). The middle layer \(\mathcal {H}\) has size M, which corresponds to the desired dimensionality for the final vector representation of each item. Between the input and the middle layers, there is a weight matrix \(\mathcal {W}\), of size \(|I| \times M\), used to activate the middle layer through the operation \(\mathcal {H} = \mathcal {J} \cdot \mathcal {W}\). The output layer \(\mathcal {O}\) is made up of \(|I_u|-1\) vectors of size |I| connected to the middle layer by the transpose of the same weight matrix \(\mathcal {W}\) and a softmax activation function. Thus, \(\mathcal {O} = \sigma (\mathcal {H} \cdot \mathcal {W}^T)\), where \(\sigma \) represents the softmax function shown in Eq. 1.
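
The forward pass described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the softmax is the standard definition (the text's Eq. 1 is not reproduced here), the names `softmax` and `forward` are hypothetical, and the toy weight values are arbitrary.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def forward(item_index, W):
    """One forward pass for a single input item.

    W is the |I| x M weight matrix stored as a list of rows. Multiplying a
    one-hot input J by W simply selects row `item_index`, so H is that row.
    The output scores are H . W^T, i.e. the dot product of H with every row.
    """
    hidden = W[item_index]
    scores = [sum(h * w for h, w in zip(hidden, row)) for row in W]
    return hidden, softmax(scores)

# Toy example: |I| = 3 items, M = 2 dimensions (arbitrary values).
W = [[0.1, 0.2],
     [0.0, -0.3],
     [0.5, 0.4]]
hidden, output = forward(2, W)
```

Because the input is one-hot, no explicit matrix product is needed for the middle layer; the same probability vector `output` is compared against each of the target items.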

The network is trained over multiple epochs. For each epoch, each item \(i \in I_u\) for every user u is used as input. In the output layer \(\mathcal {O}\), we have \(|I_u|-1\) one-hot encoded vectors (the number of items consumed by the user removing the target item), each associated with an item \(j \in I_u \mid j \ne i\). In other words, the network receives an item and must predict another item consumed by the user, approximating them in the vector space. For every pair of input and output items, the network calculates the prediction error and updates the weights. The main goal of the network is to maximize the objective function given by Eq. 2.
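
As an illustration of the training set this procedure implies, the sketch below enumerates the input/target pairs of standard Item2Vec for one epoch. The function name `item2vec_pairs` and the toy histories are assumptions made for the example; the actual training (error computation and weight updates) is not shown.

```python
from itertools import permutations

def item2vec_pairs(items_by_user):
    """Yield (input_item, target_item) pairs for every user.

    For each user u and each item i in I_u, every other item j in I_u
    (j != i) is a prediction target, so a user with n items contributes
    n * (n - 1) ordered pairs.
    """
    for items in items_by_user.values():
        # permutations(..., 2) enumerates ordered pairs of distinct positions.
        yield from permutations(items, 2)

history = {"u1": ["a", "b", "c"], "u2": ["b", "d"]}
pairs = list(item2vec_pairs(history))
```

The quadratic growth in pairs per user is what the sliding window of the sequential variant later reduces.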

At the end of the learning phase, the weight matrix \(\mathcal {W}\) will contain dense vector representations of dimensionality M for every item processed.

As described, Item2Vec would be impractical in scenarios with many items, since it must predict a related item (when output is 1) and all unrelated items (when output is 0) for each target item. Thus, two strategies commonly used in NLP are adopted [24]: (i) negative sampling, i.e., the error is calculated over a small subset of N negative items, randomly drawn so that frequent items are more likely to be selected according to Eq. 3; and (ii) subsampling of frequent items, i.e., a subset of the interactions of popular items is discarded, with the probability of an interaction being kept shown in Eq. 4. In both equations, function z(i) returns the frequency of item i, and the hyperparameters \(\gamma \) and \(\rho \) can be fine-tuned.
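
The two strategies can be sketched as follows. Since Eq. 3 and Eq. 4 are not reproduced in this text, the forms below are assumptions taken from the word2vec formulation [24]: negatives are drawn with probability proportional to z(i) raised to \(\gamma \), and an interaction is kept with probability min(1, sqrt(\(\rho \) / f(i))), where f(i) is the relative frequency of item i. The function names are hypothetical, and the exact expressions used by the authors or by a given library may differ.

```python
import math
from collections import Counter

def negative_sampling_probs(counts, gamma):
    """P(i) proportional to z(i) ** gamma, normalised over all items."""
    weights = {item: count ** gamma for item, count in counts.items()}
    total = sum(weights.values())
    return {item: w / total for item, w in weights.items()}

def keep_probability(counts, rho):
    """Probability of keeping one interaction of each item.

    Assumed form: min(1, sqrt(rho / f(i))), with f(i) the relative
    frequency of item i among all interactions.
    """
    total = sum(counts.values())
    return {item: min(1.0, math.sqrt(rho / (count / total)))
            for item, count in counts.items()}

# Toy data: item "a" appears 8 times, item "b" twice.
counts = Counter(["a"] * 8 + ["b"] * 2)
probs = negative_sampling_probs(counts, gamma=0.5)
keep = keep_probability(counts, rho=0.05)
```

With a positive exponent the popular item "a" is drawn as a negative more often, while subsampling keeps its interactions less often than those of "b".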

3.2 Sequential Item2Vec

Standard Item2Vec assumes that all items consumed by a user are equally related, shuffling them at the beginning of training. Therefore, given a particular item, the network considers that another item consumed in the distant past (e.g., years ago) has the same similarity to the target item as an item consumed in the recent past (e.g., same day). In other words, all other items are considered contextually equal to the target item, as illustrated in Fig. 3 for the example shown in Fig. 2.

We have adopted a sliding window of fixed and parameterizable size during training to restrict the context. In this way, only items consumed in sequence are considered to be related to the target item. In a first approach, we are not interested in the moment the interaction occurred, but only in the chronological order, as shown in Fig. 4.

The neural architecture for SeqI2V is very similar to Item2Vec, the only difference taking place in the number of vectors present in the output layer: instead of a vector for each of the other \(|I_u|-1\) items consumed by the user, the network must predict only S items, where S corresponds to the window size (Fig. 5).
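
A minimal sketch of how the sliding window restricts the training pairs is given below. It assumes a symmetric window covering up to `window` positions before and after the input item, as in common skip-gram implementations; whether S counts one side or both sides of the target is not specified in the text, and the name `windowed_pairs` is hypothetical.

```python
def windowed_pairs(sequence, window):
    """Yield (input_item, target_item) pairs restricted to a sliding window.

    `sequence` holds one user's items in chronological order. Only items at
    most `window` positions away from the input item become targets.
    """
    for pos, item in enumerate(sequence):
        start = max(0, pos - window)
        stop = min(len(sequence), pos + window + 1)
        for other in range(start, stop):
            if other != pos:
                yield item, sequence[other]

sequence = ["a", "b", "c", "d", "e"]
pairs = list(windowed_pairs(sequence, window=1))
```

For this five-item sequence the window of size 1 yields 8 pairs, against the 20 pairs that standard Item2Vec would generate for the same user.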

Although this model considers the chronological order in which items are consumed, it fails to capture changes in users who spend long periods without interacting with the system. To overcome this problem, we propose splitting a user’s interaction history into various chronological sequences using absolute and pre-defined time intervals to decide where to split. With this approach, when two consecutive interactions of the same user are more than D days apart, their chronological sequence is split into two. As we are only interested in the relationship between items, the network interprets this single user as different ones, restricting learning to the user’s behavior in a defined time interval, as illustrated by Fig. 6. The parameter D, which controls the number of days defining the binding time window, is flexible and can be adjusted for each application scenario in which SeqI2V is used. In this work, we opt for an absolute number of days when splitting, as it is a simpler and more easily understood strategy.
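
The splitting rule can be sketched as a small preprocessing step. The function name `split_history`, the `(timestamp, item)` tuple layout, and the toy dates are assumptions made for illustration only.

```python
from datetime import datetime, timedelta

def split_history(interactions, max_gap_days):
    """Split one user's history wherever the gap exceeds `max_gap_days`.

    `interactions` is a list of (timestamp, item) tuples. Returns a list of
    item sequences, each in chronological order.
    """
    ordered = sorted(interactions, key=lambda pair: pair[0])
    max_gap = timedelta(days=max_gap_days)
    sequences, previous = [], None
    for timestamp, item in ordered:
        if previous is None or timestamp - previous > max_gap:
            sequences.append([])  # start a new sequence
        sequences[-1].append(item)
        previous = timestamp
    return sequences

history = [
    (datetime(2019, 1, 1), "a"),
    (datetime(2019, 1, 5), "b"),
    (datetime(2020, 6, 1), "c"),  # more than 365 days after "b"
    (datetime(2020, 6, 2), "d"),
]
sequences = split_history(history, max_gap_days=365)
```

Each resulting sequence is then treated as if it belonged to a separate user, so the sliding window never links "b" to "c" in this example.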

During the learning phase and when generating the final recommendation, SeqI2V also employs all techniques used by standard Item2Vec, such as negative sampling and item subsampling during training, and also an item-based K-Nearest Neighbor when recommending.
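
One possible reading of the item-based nearest-neighbor step is sketched below: unseen items are ranked by their cosine similarity to the items the user has consumed. The aggregation by summed similarity, the function names, and the toy embeddings are assumptions; the text does not specify how neighbor scores are combined.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def recommend(consumed, embeddings, top_n):
    """Rank unseen items by summed cosine similarity to the consumed items."""
    scores = {}
    for candidate, vector in embeddings.items():
        if candidate in consumed:
            continue
        scores[candidate] = sum(cosine(vector, embeddings[item])
                                for item in consumed if item in embeddings)
    return sorted(scores, key=scores.get, reverse=True)[:top_n]

# Toy two-dimensional embeddings (arbitrary values).
embeddings = {
    "a": [1.0, 0.0],
    "b": [0.9, 0.1],
    "c": [0.0, 1.0],
    "d": [-1.0, 0.0],
}
ranking = recommend({"a"}, embeddings, top_n=2)
```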

4 Experiments and Results

In order to make the experiments reproducible, this section describes the experimental protocol conducted during the study. First, we introduce the datasets used, in conjunction with a description of how the training was carried out. Then, we present the achieved results.

4.1 Datasets

The datasets used in the experiment are listed in Table 1. All of them are publicly available and were selected based on their relevance within the literature. DeliciousBookmarks and MovieLens belong to the GroupLens repository (Note 2); BestBuy (Note 3) and NetflixPrize (Note 4) were datasets used in challenges conducted by, respectively, the Association for Computing Machinery (ACM) and Netflix, Inc.; CiaoDVD (Note 5) was crawled from the Ciao website; RetailRocket is the database of the retention management platform with the same name (Note 6). In all these datasets, we removed users or items with a single interaction since most of the trained algorithms aim to learn relations between same-user or item interactions.

4.2 Experimental Protocol

We sorted the interactions chronologically in each dataset and separated them into train, validation, and test partitions, with an 8:1:1 ratio, respectively. Then, we optimized the hyperparameters using grid search on the validation partition, aiming to maximize NDCG in a top-15 recommendation scenario. The final results were then obtained on the test partition. Due to hardware limitations, we performed the parameter optimization/final experiment of larger datasets over a small, randomly sampled subset: 25%/100% for MovieLens and 5%/25% for NetflixPrize. Additionally, we removed cold-start cases, i.e., users or items with no interaction in the training set, since this is a problem beyond the scope of our study.
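
The partitioning step might look like the sketch below. It assumes the 8:1:1 cut is applied globally to all interactions of a dataset after sorting by timestamp; the function names and the `(timestamp, user, item)` row layout are hypothetical.

```python
def chronological_split(interactions, ratios=(8, 1, 1)):
    """Sort interactions by timestamp and cut them into train/valid/test."""
    ordered = sorted(interactions, key=lambda row: row[0])
    total = sum(ratios)
    train_end = len(ordered) * ratios[0] // total
    valid_end = len(ordered) * (ratios[0] + ratios[1]) // total
    return ordered[:train_end], ordered[train_end:valid_end], ordered[valid_end:]

def drop_cold_start(train, partition):
    """Keep only rows whose user and item both appear in the training set."""
    users = {user for _, user, _ in train}
    items = {item for _, _, item in train}
    return [row for row in partition if row[1] in users and row[2] in items]

# Rows are (timestamp, user, item); integer timestamps keep the example short.
rows = [(t, f"u{t % 3}", f"i{t % 4}") for t in range(20)]
train, valid, test = chronological_split(rows)
test = drop_cold_start(train, test)
```

Splitting by time rather than at random keeps every validation and test interaction later than the training data, which matches the temporal setting the method targets.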

Although our main goal is to improve the results of Item2Vec, we also compared the performance achieved by the proposed Sequential Item2Vec (SeqI2V) with classical recommender algorithms. For this, we have implemented the established item-based version of K-Nearest Neighbors (KNN) [28] and two state-of-the-art matrix factorization methods: implicit singular value decomposition (ALS) [16] and Bayesian Personalized Ranking (BPR) [26]. In addition to the standard Item2Vec (I2V), we have also implemented User2Vec (U2V) [13], a user-item neural embedding method.

For KNN we used the implementation from the turicreate library, without requiring hyperparameter choices. Both matrix factorization methods were implemented using the implicit library, with latent factors ranging in \(\{50, 100, 200\}\), regularization factor in \(\{10^{-6}, 10^{-4}, 10^{-2}\}\), number of epochs in \(\{10, 20, 50, 100\}\), and learning rate in \(\{0.0025, 0.025, 0.25\}\). All neural embedding models, including Sequential Item2Vec, were implemented using the gensim library, with hyperparameters tuned according to the insights proposed by Caselles-Duprés et al. [8]. Thus, the dimensionality M was fixed at 100 and 5 negative samples were randomly drawn for each item, with the number of epochs ranging in \(\{50, 100, 150\}\), the exponent of the negative sampling distribution in \(\{-1.0, -0.5, 0.5, 1.0\}\), and the subsampling factor in \(\{10^{-5}, 10^{-4}, 10^{-3}\}\). Additionally, for the sequential adaptation, we tested different window sizes ranging in \(\{1,3,5,7,9\}\), and the interval of days for splitting in \(\{182, 365, 730, \text {no split}\}\).
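
To give a sense of the size of the search space for the sequential model, the sketch below enumerates the grid listed above. The dictionary keys are hypothetical labels chosen for this example, not the parameter names of any library, and `None` stands for the "no split" option.

```python
from itertools import product

# Searched values for SeqI2V, copied from the text.
grid = {
    "epochs": [50, 100, 150],
    "ns_exponent": [-1.0, -0.5, 0.5, 1.0],
    "subsampling": [1e-5, 1e-4, 1e-3],
    "window": [1, 3, 5, 7, 9],
    "split_days": [182, 365, 730, None],
}
# Values that were fixed rather than searched.
fixed = {"dimensions": 100, "negatives": 5}

def configurations(grid, fixed):
    """Yield one dict per combination of the searched hyperparameters."""
    names = list(grid)
    for values in product(*(grid[name] for name in names)):
        yield {**fixed, **dict(zip(names, values))}

configs = list(configurations(grid, fixed))
```

Under these assumptions the grid contains 3 × 4 × 3 × 5 × 4 = 720 configurations per dataset, which helps explain why the larger datasets were tuned on subsets.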

4.3 Results

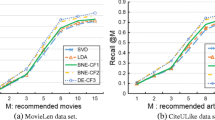

We evaluated the algorithms in a top-15 recommendation task, i.e. for each user, the methods must recommend 15 items in a ranked list. Then, we calculated the F1-Score (Table 2) and the Normalized Discounted Cumulative Gain, or NDCG (Table 3). All results are in grayscale, with darker tones representing better performances. The best value for each dataset is highlighted in bold.
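
Both metrics can be computed per user as in the sketch below, which assumes binary relevance (an item is relevant if it appears in the user's held-out interactions) and precision measured over the k recommended slots. The exact variants used in the experiments are not detailed in the text, so the function names and definitions here are illustrative.

```python
import math

def f1_at_k(recommended, relevant, k=15):
    """F1 between the top-k list and the set of relevant (held-out) items."""
    hits = len(set(recommended[:k]) & relevant)
    if hits == 0:
        return 0.0
    precision = hits / k
    recall = hits / len(relevant)
    return 2 * precision * recall / (precision + recall)

def ndcg_at_k(recommended, relevant, k=15):
    """NDCG with binary relevance.

    A hit at 1-based position p contributes 1 / log2(p + 1); the ideal
    ranking places all relevant items at the top of the list.
    """
    dcg = sum(1.0 / math.log2(rank + 2)
              for rank, item in enumerate(recommended[:k]) if item in relevant)
    ideal = sum(1.0 / math.log2(rank + 2)
                for rank in range(min(k, len(relevant))))
    return dcg / ideal if ideal else 0.0

recommended = ["a", "b", "c", "d"]
relevant = {"a", "d"}
f1 = f1_at_k(recommended, relevant, k=4)
ndcg = ndcg_at_k(recommended, relevant, k=4)
```

F1 only counts how many relevant items were retrieved, whereas NDCG also rewards placing them near the top, which is why the two tables can rank the methods differently.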

To facilitate comparing SeqI2V with its baseline (Item2Vec), Table 4 presents the gains and losses of SeqI2V relative to Item2Vec for each dataset and performance measure. In both metrics, the results obtained by Sequential Item2Vec are promising. In all datasets, except for BestBuy, SeqI2V outperformed I2V. On the RetailRocket dataset, the performance of SeqI2V was approximately 150% higher. The F1-Score achieved by SeqI2V was 52% and 43% higher than that of Item2Vec, on average and in median, respectively. Moreover, the NDCG achieved by SeqI2V was 48% and 55% higher, on average and in median.

When compared to the other established recommender algorithms, the results of Sequential Item2Vec remain auspicious. For the F1-Score, it attained the best result in 66% of the datasets. On the other hand, as BPR optimizes the ranking, it achieved the best performance when NDCG is used as an evaluation metric. Still, for both metrics, SeqI2V was the best or second-best in 83% of the cases.

It is important to highlight that, regarding the number of days used to split the temporal sequences, RetailRocket was the only dataset in which sequences with no split achieved the best performance. For all the other datasets, the best performance was attained using sequences split between 182 and 730 days. These results are evidence of the effectiveness of splitting user sequences and of the need to adapt the interval for each specific application. Moreover, even when the split was not necessary, using sliding windows significantly improved the results.

It is also important to highlight that, although SeqI2V demands an extra step of data preparation, training the embeddings is less costly than in Item2Vec. For each target item, it makes predictions only for a reduced set of items (the window size) instead of all items previously consumed by the user. Thus, the proposed method is more computationally efficient and presents better results without increasing implementation complexity.

The main limitation of the proposed method lies in exceptional cases in which the user always takes a long period between two interactions, greater than the defined window size. In this case, consumption contexts would be composed of unitary relationships, allowing sliding windows but making the splitting of interaction sequences unfeasible.

5 Conclusion

Item2Vec is a well-known method capable of learning neural embeddings for items in a recommender system. This study presented Sequential Item2Vec – an adaptation of the original approach that uses a sliding window over time, giving temporal context awareness for the item embeddings. We also proposed splitting the user’s interaction history into multiple sequences so that the network would not learn relationships between items consumed in considerably different moments, thus capturing changes in the user’s behavior.

In order to assess whether the proposed approach was able to improve the results obtained with the standard Item2Vec, we compared both models with other established methods in the field, over six well-known and publicly available databases. Metrics of hit and ranking were calculated in a top-15 recommendation scenario. The results indicate that Sequential Item2Vec outperformed the results obtained by Item2Vec. It was superior to its predecessor in almost all of the datasets. The proposed approach was also competitive compared to the other methods, matching the Bayesian Personalized Ranking, a state-of-the-art matrix factorization method.

Although it gives time awareness to the item embeddings, the recommendation generated by Sequential Item2Vec is still static. In future research, we aim to consider time in the recommendation step, analyzing its impact. Additionally, we suggest studying other ways to include time during the learning phase. One promising approach is the use of decay functions instead of, or in conjunction with, sliding windows.

Notes

1. The well-known competition of recommender systems, organized by the streaming company Netflix from 2006 to 2009: https://www.netflixprize.com/rules.html.

2. GroupLens. Available at: www.grouplens.org/datasets/. Accessed on August 31, 2021.

3. Data Mining Hackathon on BIG DATA (7 GB) Best Buy mobile web site. Available at: www.kaggle.com/c/acm-sf-chapter-hackathon-big. Accessed on August 31, 2021.

4. Netflix Prize data. Available at: www.kaggle.com/netflix-inc/netflix-prize-data. Accessed on August 31, 2021.

5. CiaoDVD Movie Ratings. Available at: www.konect.cc/networks/librec-ciaodvd-movie_ratings/. Accessed on August 31, 2021.

6. Retailrocket recommender system dataset. Available at: www.kaggle.com/retailrocket/ecommerce-dataset. Accessed on August 31, 2021.

References

Adomavicius, G., Tuzhilin, A.: Multidimensional recommender systems: a data warehousing approach. Electron. Commer. 2232, 180–192 (2001). https://doi.org/10.1007/3-540-45598-1_17

Adomavicius, G., Tuzhilin, A.: Toward the next generation of recommender systems: a survey of the state-of-the-art and possible extensions. IEEE Trans. Knowl. Data Eng. 17(6), 734–749 (2005). https://doi.org/10.1109/TKDE.2005.99

Baltrunas, L., Amatriain, X.: Towards time-dependant recommendation based on implicit feedback. In: Proceedings of the RecSys 2009 Workshop on Context-Aware Recommender Systems, RecSys 2009, pp. 1–5. Association for Computing Machinery, New York (2009)

Barkan, O., Koenigstein, N.: Item2Vec: neural item embedding for collaborative filtering. In: IEEE 26th International Workshop on Machine Learning for Signal Processing, MLSP 2016, pp. 1–6. IEEE, Piscataway, NJ, USA (2016). https://doi.org/10.1109/MLSP.2016.7738886

Bobadilla, J., Ortega, F., Hernando, A., Gutiérrez, A.: Recommender systems survey. Knowl.-Based Syst. 46, 109–132 (2013). https://doi.org/10.1016/j.knosys.2013.03.012

de Borba, E.J., Gasparini, I., Lichtnow, D.: Time-aware recommender systems: a systematic mapping. In: Kurosu, M. (ed.) HCI 2017. LNCS, vol. 10272, pp. 464–479. Springer, Cham (2017). https://doi.org/10.1007/978-3-319-58077-7_38

Breese, J.S., Heckerman, D., Kadie, C.: Empirical analysis of predictive algorithms for collaborative filtering. In: Proceedings of the 14th Conference on Uncertainty in Artificial Intelligence, UAI 1998, pp. 43–52. Morgan Kaufmann Publishers Inc., San Francisco, CA, USA (1998)

Caselles-Duprés, H., Lesaint, F., Royo-Letelier, J.: Word2vec applied to recommendation: hyperparameters matter. In: Proceedings of the 12th ACM Conference on Recommender Systems, RecSys 2018, pp. 352–356. Association for Computing Machinery, New York (2018). https://doi.org/10.1145/3240323.3240377

Collins, A., Beel, J.: Document embeddings vs. keyphrases vs. terms for recommender systems: a large-scale online evaluation. In: Proceedings of the 2019 ACM/IEEE Joint Conference on Digital Libraries, JCDL 2019, pp. 130–133. IEEE, New York (2019). https://doi.org/10.1109/JCDL.2019.00027

Ding, Y., Li, X.: Time weight collaborative filtering. In: Proceedings of the 14th ACM International Conference on Information and Knowledge Management, CIKM 2005, pp. 485–492. Association for Computing Machinery, New York (2005). https://doi.org/10.1145/1099554.1099689

Fu, P., Lv, J.H., Li, B.J.: Attr2vec: a neural network based item embedding method. In: Proceedings of the 2nd International Conference on Computer, Mechatronics and Electronic Engineering, CMEE 2017, pp. 300–307. DEStech Publications, Lancaster, PA, USA (2017). https://doi.org/10.12783/dtcse/cmee2017/19993

Grbovic, M., Cheng, H.: Real-time personalization using embeddings for search ranking at Airbnb. In: Proceedings of the 24th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD 2018, pp. 311–320. Association for Computing Machinery, New York (2018). https://doi.org/10.1145/3219819.3219885

Grbovic, M., et al.: E-commerce in your inbox: product recommendations at scale. In: Proceedings of the 21th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD 2015, pp. 1809–1818. Association for Computing Machinery, New York (2015). https://doi.org/10.1145/2783258.2788627

Greenstein-Messica, A., Rokach, L., Friedman, M.: Session-based recommendations using item embedding. In: Proceedings of the 22nd International Conference on Intelligent User Interfaces, IUI 2017, pp. 629–633. Association for Computing Machinery, New York (2017). https://doi.org/10.1145/3025171.3025197

Hasanzadeh, S., Fakhrahmad, S.M., Taheri, M.: Review-based recommender systems: a proposed rating prediction scheme using word embedding representation of reviews. Comput. J. 1–10 (2020). https://doi.org/10.1093/comjnl/bxaa044

Hu, Y., Koren, Y., Volinsky, C.: Collaborative filtering for implicit feedback datasets. In: Proceedings of the 8th IEEE International Conference on Data Mining, ICDM 2008, pp. 263–272. IEEE Computer Society, Washington, D.C., USA (2008). https://doi.org/10.1109/ICDM.2008.22

Karypis, G.: Evaluation of item-based top-n recommendation algorithms. In: Proceedings of the 10th International Conference on Information and Knowledge Management, CIKM 2001, pp. 247–254 (2001). https://doi.org/10.1145/502585.502627

Khusro, S., Ali, Z., Ullah, I.: Recommender systems: issues, challenges, and research opportunities. In: Information Science and Applications (ICISA) 2016. LNEE, vol. 376, pp. 1179–1189. Springer, Singapore (2016). https://doi.org/10.1007/978-981-10-0557-2_112

Koren, Y.: Collaborative filtering with temporal dynamics. In: Proceedings of the 15th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD 2009, pp. 447–456 (2009). https://doi.org/10.1145/1557019.1557072

Koren, Y., Bell, R., Volinsky, C.: Matrix factorization techniques for recommender systems. Computer 42(8), 30–37 (2009). https://doi.org/10.1109/MC.2009.263

Lathia, N., Hailes, S., Capra, L.: Temporal collaborative filtering with adaptive neighbourhoods. In: Proceedings of the 32nd International ACM SIGIR Conference on Research and Development in Information Retrieval, SIGIR 2009, pp. 796–797. Association for Computing Machinery, New York (2009). https://doi.org/10.1145/1571941.1572133

Le, Q., Mikolov, T.: Distributed representations of sentences and documents. In: Proceedings of the 31st International Conference on Machine Learning, ICML 2014, pp. 1188–1196. JMLR.org (2014). https://doi.org/10.5555/3044805.3045025

Matuszyk, P., Vinagre, J., Spiliopoulou, M., Jorge, A.M., Gama, J.: Forgetting methods for incremental matrix factorization in recommender systems. In: Proceedings of the 30th Annual ACM Symposium on Applied Computing, SAC 2015, pp. 947–953. Association for Computing Machinery, New York (2015). https://doi.org/10.1145/2695664.2695820

Mikolov, T., Sutskever, I., Chen, K., Corrado, G.S., Dean, J.: Distributed representations of words and phrases and their compositionality. In: Proceedings of the 26th International Conference on Neural Information Processing Systems, NIPS 2013, pp. 3111–3119. Curran Associates Inc., Red Hook, NY, USA (2013). https://doi.org/10.5555/2999792.2999959

Rendle, S.: Factorization machines. In: Proceedings of the 10th IEEE International Conference on Data Mining, ICDM 2010, pp. 14–17. IEEE, New York (2010). https://doi.org/10.1109/ICDM.2010.127

Rendle, S., Freudenthaler, C., Gantner, Z., Schmidt-Thieme, L.: BPR: Bayesian personalized ranking from implicit feedback. In: Proceedings of the 25th Conference on Uncertainty in Artificial Intelligence, UAI 2009, pp. 452–461. AUAI Press, Arlington, VA, USA (2009). https://doi.org/10.5555/1795114.1795167

Rudolph, M., Ruiz, F.J.R., Mandt, S., Blei, D.M.: Exponential family embeddings. In: Proceedings of the 30th International Conference on Neural Information Processing Systems, NIPS 2016, pp. 478–486. Curran Associates Inc., Red Hook, NY, USA (2016). https://doi.org/10.7916/D8NZ9RHT

Sarwar, B.M., Karypis, G., Konstan, J.A., Riedl, J.T.: Item-based collaborative filtering recommendation algorithms. In: Proceedings of the 10th International Conference on World Wide Web, WWW 2001, pp. 285–295. Association for Computing Machinery, New York (2001). https://doi.org/10.1145/371920.372071

Sidana, S., Trofimov, M., Horodnytskyi, O., Laclau, C., Maximov, Y., Amini, M.-R.: User preference and embedding learning with implicit feedback for recommender systems. Data Min. Knowl. Disc. 35(2), 568–592 (2021). https://doi.org/10.1007/s10618-020-00730-8

Tang, J., Wang, K.: Personalized top-n sequential recommendation via convolutional sequence embedding. In: Proceedings of the 11th ACM International Conference on Web Search and Data Mining, WSDM 2018, pp. 565–573. Association for Computing Machinery, New York (2018). https://doi.org/10.1145/2939672.2939673

Vasile, F., Smirnova, E., Conneau, A.: Meta-prod2vec: product embeddings using side-information for recommendation. In: Proceedings of the 10th ACM Conference on Recommender Systems, RecSys 2016, pp. 225–232. Association for Computing Machinery, New York (2016). https://doi.org/10.1145/2959100.2959160

Vinagre, J., Jorge, A.M.: Forgetting mechanisms for scalable collaborative filtering. J. Braz. Comput. Soc. 18(4), 271–282 (2012). https://doi.org/10.1007/s13173-012-0077-3

Vinagre, J., Jorge, A.M., Gama, J.: An overview on the exploitation of time in collaborative filtering. WIREs Data Min. Knowl. Disc. 5, 195–215 (2015). https://doi.org/10.1002/widm.1160

Wang, P., Guo, J., Lan, Y., Xu, J., Wan, S., Cheng, X.: Learning hierarchical representation model for nextbasket recommendation. In: Proceedings of the 38th International ACM SIGIR Conference on Research and Development in Information Retrieval, SIGIR 2015, pp. 403–412. Association for Computing Machinery, New York (2015). https://doi.org/10.1145/2766462.2767694

Zhang, F., Yuan, N.J., Lian, D., Xie, X., Ma, W.Y.: Collaborative knowledge base embedding for recommender systems. In: Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD 2016, pp. 353–362. Association for Computing Machinery, New York (2016). https://doi.org/10.1145/2939672.2939673

Zhang, S., Yao, L., Sun, A., Tay, Y.: Deep learning based recommender system: a survey and new perspectives. ACM Comput. Surv. 52(1), 5:1–5:35 (2019). https://doi.org/10.1145/3285029

Zhao, X., Louca, R., Hu, D., Hong, L.: The difference between a click and a cart-add: learning interaction-specific embeddings. In: Companion Proceedings of the Web Conference 2020, WWW 2020, pp. 454–460. Association for Computing Machinery, New York (2020). https://doi.org/10.1145/3366424.3386197

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Pires, P.R., Pascon, A.C., Almeida, T.A. (2021). Time-Dependent Item Embeddings for Collaborative Filtering. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science, vol 13074. Springer, Cham. https://doi.org/10.1007/978-3-030-91699-2_22

DOI: https://doi.org/10.1007/978-3-030-91699-2_22

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91698-5

Online ISBN: 978-3-030-91699-2

eBook Packages: Computer Science, Computer Science (R0)