Abstract

Studies in sentiment analysis have examined how effective different features are in identifying subjective sentences, and several have already evaluated NLP classifiers for this purpose. However, the vast majority did not handle texts in Brazilian Portuguese, and none considered combining sets of text features from NLP tasks with classifiers. Therefore, in our investigation, we combined empirical features to identify subjective sentences in Portuguese and provide a comprehensive analysis of the relative importance of each feature set using a representative set of user reviews.

1 Introduction

A vast quantity of user reviews has been published on the Web every day in recent years, thanks to the rapid development of social media. These reviews assist other users in making decisions, such as purchasing new products, and are also useful for companies seeking to improve their products. Sentiment analysis (SA) is an area of study that aims to find computational techniques to extract and analyze people’s opinions and to identify the sentiment expressed in those opinions. Subjectivity identification is an essential task of SA because most polarity detection tools are optimized for distinguishing between positive and negative texts. Subjectivity identification hence ensures that factual information is filtered out and only opinionated information is passed on to the polarity classifier [9]. Several studies have demonstrated that information provided by some textual features is valuable for sentiment classification [2, 11, 20]. Many types of text features have been proposed and evaluated in the literature, such as syntactic features and part-of-speech features.

SA for the English language is well advanced. However, the linguistic resources available for sentiment analysis in other languages are still limited [23]. Portuguese is one of the languages with few linguistic resources available, despite being among the top five languages used online. Thus, the small number of specific works and resources for Portuguese-focused sentiment analysis provides a scenario with great challenges and opportunities.

Given this scenario, our study aims to present research regarding the use of machine learning algorithms for subjectivity identification of user reviews published in Portuguese. In addition, we will also investigate which text features are more important to address during this task. In particular, we will show that certain features play a more important role than others under different circumstances. In this way, this work contributes to an analysis of these combinations for the SA task from texts written in the Portuguese language, with the objective of answering the following research questions:

RQ1: Is there a single machine learning algorithm for subjectivity identification that is always the best for a diversified set of datasets?

RQ2: Given a set of feature groups, is there a group that is always the best regardless of the algorithm used?

RQ3: How do different feature combinations affect the predictive performance of a classifier?

To answer these research questions, we carried out an exhaustive evaluation of combinations considering five classifiers and four sentiment analysis datasets in Brazilian Portuguese. We chose four well-known algorithms used in text classification: Gradient Boosting Trees (GBT), Maximum Entropy (ME), Random Forest (RF), and Support Vector Machine (SVM). We also used AutoML, an approach in which the search for a suitable model for the given data is automated. Specifically, we used AutoGluon (footnote 1) because it was one of the most recently released AutoML frameworks [14]. We did not focus on presenting the best combinations or on improving the state-of-the-art predictive performance for each dataset. Instead, the goal was to highlight the importance of text features for subjectivity identification.

Our main contribution is to report an extensive set of experiments aimed to evaluate the relative effectiveness of different linguistic features for subjectivity identification.

The remainder of this paper is organized as follows. Section 2 provides a brief description of the main related works. Then, the method and materials are described in Sect. 3. Experiments are reported in Sect. 4, where we also describe the evaluation and discuss the results. Finally, we present our conclusions and future work in Sect. 5.

2 Related Work

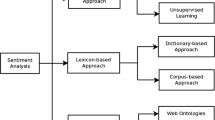

In this section, we discuss prior works related to subjectivity identification on user reviews. The main approach is using machine learning techniques with different linguistic features. There are many types of classifiers for this task using supervised learning algorithms, but the most popular classifiers are Support Vector Machine (SVM), Naive Bayes (NB), and Maximum Entropy [2, 3]. Previous studies [2, 11] also have explored the use of English features, such as adjectives, nouns and phrases, and have applied these features to identify subjective sentences. Chenlo and Losada [11] in their survey paper evaluated a wide range of features that have been employed to date for subjectivity classification. Some of the prominent features include POS, lexicon, number and proportion of subjective terms in the sentence, and so on. The subjectivity classification part is relevant to our study.

Moraes et al. [19] investigated the problem of subjectivity classification for the Portuguese language. They created a corpus of tweets in the area of technology called ComputerBR. Inspired by the methods for English, the evaluated methods were based on sentiment lexicons and machine learning algorithms, such as SVM and Naive Bayes. Carvalho et al. [7] proposed a comparative study of three learning algorithms (Naive Bayes, SVM and Maximum Entropy) and three feature selection methods for classifying texts of election-related news in Brazil. Belisário et al. [5] focused specifically on the task of subjectivity classification. The authors studied and compared machine learning methods of different paradigms (Naive Bayes and SVM) for subjectivity classification of book review sentences in Portuguese. The authors also evaluated lexical and discourse features.

In summary, most methods for subjectivity identification are supervised and use different subsets of text features for English. Therefore, there is not a clear picture of the impact of every feature set for the most common machine learning algorithms. Thus, there is room for further studies in Portuguese. In our work, we evaluate a large set of text features with machine learning algorithms, including a recent automatic machine learning approach, for subjectivity identification from texts written in the Brazilian Portuguese language. We evaluated all features and algorithms across four different datasets.

3 Materials and Methods

We deal with a binary classification task: factual versus subjective sentences. This task can be performed by text classifiers trained on labeled data in a supervised setting.

3.1 Features

The characteristics of user reviews are encoded as features in a vector representation. These vectors and the corresponding labels feed the classifiers. To build our classifiers, we considered the following feature groups.

POS Features. Part-Of-Speech (POS) information is used to locate different types of information of interest inside text documents. For instance, adjectives usually represent opinions, while adverbs are used as modifiers to represent the degree of expressiveness of opinions. For the extraction of POS tags, the sentences were initially tokenized. Then the tokens were tagged with their respective word classes using the spaCy (footnote 2) POS tagger [16]. The tokens were then lowercased. The following POS features (footnote 3) were extracted from the processed sentences: adjective (ADJ), adposition (ADP), adverb (ADV), auxiliary verb (AUX), coordinating conjunction (CCONJ), determiner (DET), interjection (INTJ), noun (NOUN), cardinal number (NUM), pronoun (PRON), proper noun (PROPN), punctuation mark (PUNCT), subordinating conjunction (SCONJ), symbol (SYM), verb (VERB), comparative (COMP), superlative (SUP), and other tags (X). The latter is used for words that cannot be assigned a real POS category for any reason. In Table 1, each POS feature is described with an example and its feature values (FV).
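As a rough illustration of how POS counts can be turned into a feature vector, the sketch below counts tags over a sentence that has already been tagged. The function name `pos_count_features` and the hand-tagged example are hypothetical; in practice the tags would come from a tagger such as spaCy (for instance, each token's `pos_` attribute). The COMP and SUP features are left out because the text does not say how they are derived.

```python
from collections import Counter

# Universal POS tags listed in the text (COMP and SUP omitted: their
# extraction procedure is not described in enough detail to sketch here).
UPOS_TAGS = [
    "ADJ", "ADP", "ADV", "AUX", "CCONJ", "DET", "INTJ", "NOUN", "NUM",
    "PRON", "PROPN", "PUNCT", "SCONJ", "SYM", "VERB", "X",
]

def pos_count_features(tagged_tokens):
    """Map a list of (token, tag) pairs to a {tag: count} dictionary."""
    counts = Counter(tag for _, tag in tagged_tokens)
    return {tag: counts.get(tag, 0) for tag in UPOS_TAGS}

# Hand-tagged example sentence (assumed tags, for illustration only).
sentence = [
    ("o", "DET"), ("hotel", "NOUN"), ("é", "AUX"),
    ("muito", "ADV"), ("bom", "ADJ"), (".", "PUNCT"),
]
features = pos_count_features(sentence)
print(features["ADJ"], features["ADV"], features["VERB"])
```

Keeping a fixed tag order means every sentence yields a vector of the same length, with zeros for tags that do not occur.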

Syntactic Patterns. Natural language texts are based on syntactic patterns which create a sequence of words based on grammar rules. In our study, we used syntactic patterns as indicative of subjective sentences. The patterns were originally proposed by Turney [25] and we adapted them to use spaCy toolkit. In Table 2, each syntactic pattern is described with an example and its feature values (FV). The rules are described in Table 2 with arrows (\(\rightarrow \)) denoting immediate sequence between two POS tags, while Not indicates that the absence of a given tag is expected. In feature 23, the verb can be in different forms such as participles (PCP), gerund (GER), perfect simple (PS), and imperfect (IMPF).
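The arrow and Not notation can be read as a simple match over a sequence of POS tags. The sketch below is one possible reading, not the authors' implementation: `count_pattern` is a hypothetical helper, and the ADV → ADJ, Not NOUN rule is chosen only for illustration, since the actual rules are in Table 2.

```python
def count_pattern(tags, first, second, not_third=None):
    """Count positions where `first` is immediately followed by `second`.

    If `not_third` is given, a match is discarded when the tag right after
    `second` equals `not_third` (the "Not" condition).
    """
    count = 0
    for i in range(len(tags) - 1):
        if tags[i] == first and tags[i + 1] == second:
            third = tags[i + 2] if i + 2 < len(tags) else None
            if not_third is None or third != not_third:
                count += 1
    return count

# Tags for "o hotel é muito bom ." (assumed, for illustration only).
tags = ["DET", "NOUN", "AUX", "ADV", "ADJ", "PUNCT"]
matches = count_pattern(tags, "ADV", "ADJ", not_third="NOUN")
print(matches)
```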

Sentiment Lexicons. Some of the most important indicators in the analysis of subjective text are sentiment lexicons. These features are based on counting the sentiment-bearing terms that occur in the sentence [1, 10]. We consider the following terms: subjectives, positives, and negatives. The sentiment lexicon is a Portuguese translation of the lexicon from Pennebaker et al. [22], a well-known subjective classifier [4]. We include the number and percentage of opinionated terms in a sentence as features for our classifiers. We also consider the number and percentage of exclamation marks “!” and question marks “?” in the sentences because they usually indicate the strength of sentiment. In Table 3, each sentiment lexicon feature is described with an example and its feature values (FV).
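A minimal sketch of the count and percentage features follows. It assumes percentages are taken over the number of tokens in the sentence, which the text does not state explicitly; the three-word lexicon is a toy stand-in for the translated lexicon, and `lexicon_features` is a hypothetical name.

```python
def lexicon_features(tokens, lexicon):
    """Return counts and per-token ratios for lexicon hits and '!' / '?'."""
    n_tokens = len(tokens)

    def ratio(count):
        # Guard against empty sentences.
        return count / n_tokens if n_tokens else 0.0

    n_hits = sum(1 for token in tokens if token.lower() in lexicon)
    n_exclamation = tokens.count("!")
    n_question = tokens.count("?")
    return {
        "n_opinionated": n_hits,
        "pct_opinionated": ratio(n_hits),
        "n_exclamation": n_exclamation,
        "pct_exclamation": ratio(n_exclamation),
        "n_question": n_question,
        "pct_question": ratio(n_question),
    }

# Toy lexicon (hypothetical entries, not taken from the real resource).
toy_lexicon = {"adorei", "ótimo", "péssimo"}
result = lexicon_features(["Adorei", "o", "livro", "!"], toy_lexicon)
print(result)
```

The same function could be called once per term list (subjective, positive, negative) to obtain a separate pair of features for each.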

Concept-Level Features. Concept-level features can help with a semantic analysis of text through web ontologies or semantic networks. Hence, they allow for aggregating conceptual and affective information associated with natural language opinions [24]. We adopted the same set of concept-level features used in [11], which in turn, measured different aspects of these concepts, including: pleasantness, attention, aptitude, sensitivity, and polarity. To do this, we used the SenticNet corpus [6]. We have represented this group of features considering only each concept’s absolute score since highly opinionated concepts tend to have higher absolute values. In Table 4, each concept-level feature is described with an example and its feature values (FV).

Structural Features. Structural features encode the number of words, the number of words containing an uppercase letter, and the number of uppercase letters. Intuitively, the number of words could be indicative of subjectivity. For instance, factual sentences may be shorter [11]. We also include the ratios of uppercase words and uppercase characters in a sentence as features for our classifiers. In Table 5, each structural feature is described with an example and its feature values (FV).
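The structural features can be sketched with plain string operations. Two details below are assumptions rather than facts from the text: an "uppercase word" is taken to be any whitespace-separated word containing at least one uppercase letter, and the character ratio is computed over alphabetic characters only. The name `structural_features` is hypothetical.

```python
def structural_features(sentence):
    """Compute word/uppercase counts and ratios for one sentence."""
    words = sentence.split()
    letters = [c for c in sentence if c.isalpha()]

    n_words = len(words)
    n_upper_words = sum(1 for w in words if any(c.isupper() for c in w))
    n_upper_chars = sum(1 for c in letters if c.isupper())

    return {
        "n_words": n_words,
        "n_upper_words": n_upper_words,
        "n_upper_chars": n_upper_chars,
        # Ratios fall back to 0.0 for empty input.
        "ratio_upper_words": n_upper_words / n_words if n_words else 0.0,
        "ratio_upper_chars": n_upper_chars / len(letters) if letters else 0.0,
    }

struct = structural_features("O hotel é MUITO bom")
print(struct)
```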

Twitter Features. Because the language used on Twitter is often informal and differs from traditional text types, we used the number of URLs, mentions and elongated words as features [18]. We also include emoji sentiment scores provided in [17] and emoticon sentiment scores provided in [15]. In Table 6, each Twitter feature is described with an example and its feature values (FV).
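Counting URLs, mentions and elongated words can be approximated with regular expressions, as in the sketch below. The patterns are assumptions made for illustration: a URL is anything starting with `http://` or `https://`, a mention is `@` followed by word characters, and an elongated word contains the same letter three or more times in a row. Emoji and emoticon scores are omitted because they depend on the external lexicons cited in the text.

```python
import re

URL_RE = re.compile(r"https?://\S+")
MENTION_RE = re.compile(r"@\w+")
# The same letter repeated three or more times in a row, e.g. "adoreiii".
ELONGATED_RE = re.compile(r"([^\W\d_])\1{2,}")

def twitter_features(text):
    """Count URLs, mentions and elongated words in a tweet."""
    n_urls = len(URL_RE.findall(text))
    n_mentions = len(MENTION_RE.findall(text))
    # Drop URLs first so that fragments like "www" are not counted as elongated.
    text_without_urls = URL_RE.sub(" ", text)
    n_elongated = sum(
        1 for token in text_without_urls.split() if ELONGATED_RE.search(token)
    )
    return {"n_urls": n_urls, "n_mentions": n_mentions, "n_elongated": n_elongated}

tweet = twitter_features("@maria adoreiii o notebook http://t.co/abc")
print(tweet)
```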

Miscellaneous Features. We adopted a set of miscellaneous features that did not belong to the aforementioned groups. As adopted by Palshikar et al. [21], we include the number of named entities and datetimes. The intuition is that factual information generally has lots of dates and entities. We also include the number of words that are present in a dictionary [18]. Therefore, misspelled words were not counted. We used a list of more than 300,000 words (footnote 4). In Table 7, each miscellaneous feature is described with an example and its feature values (FV).

3.2 Models

To investigate which feature groups perform best, we chose four conventional methods frequently used in text classification: Gradient Boosting Trees (GBT), Maximum Entropy (ME), Random Forest (RF), and Support Vector Machine (SVM). We also used AutoGluon because it is one of the most recently released AutoML frameworks. We used the Python Scikit-Learn (footnote 5) library to build the conventional methods.

3.3 Recursive Feature Elimination

Recursive Feature Elimination (RFE) is a wrapper feature selection method [8]. Initially, the full feature set is used. At every iteration, a model is trained on the current features, and the least important features, as inferred from the trained model, are removed. This procedure is repeated until all features in the dataset have been evaluated, and the features are then ranked according to the order of elimination. As such, RFE is a greedy optimization for finding the best-performing subset of features. The coefficients of the features are generally used as the index of importance: the closer a coefficient is to 0, the smaller its effect on the target variable.
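The elimination loop can be sketched in a few lines. This is a generic illustration under stated assumptions, not the implementation used in the experiments: `importance_fn` is a hypothetical callback that would refit a model on the remaining features and return one importance value per feature, and here it is replaced by a fixed toy dictionary so the example runs on its own. One feature is dropped per iteration.

```python
def recursive_feature_elimination(features, importance_fn, n_keep=1):
    """Greedy RFE: repeatedly drop the feature with the smallest |importance|.

    Returns the features ranked from most to least important, i.e. the
    survivors first, followed by the eliminated features in reverse order
    of elimination.
    """
    remaining = list(features)
    eliminated = []
    while len(remaining) > n_keep:
        # In a real setting this call retrains the model on `remaining`.
        importances = importance_fn(remaining)
        weakest = min(remaining, key=lambda name: abs(importances[name]))
        remaining.remove(weakest)
        eliminated.append(weakest)
    return remaining + eliminated[::-1]

# Toy importances (hypothetical values standing in for a fitted model).
toy_weights = {"n_adjectives": 0.9, "n_urls": -0.1, "n_words": 0.4}

def toy_importance_fn(remaining):
    return {name: toy_weights[name] for name in remaining}

ranking = recursive_feature_elimination(list(toy_weights), toy_importance_fn)
print(ranking)
```

scikit-learn provides an `RFE` class in `sklearn.feature_selection` that follows the same idea; whether it was the implementation used here is not stated in the text.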

4 Experiments

4.1 Setup

Table 8 presents the datasets we have used in our experiments. Dataset ReLi contains 2,000 sentences from user reviews of books taken from [12]. Dataset ComputerBR consists of 2,281 sentences of tweets regarding computers taken from [19]. Dataset Tripadvisor contains 1,049 sentences from user reviews of restaurants taken from [20]. Dataset Hotel consists of 840 sentences from user reviews of hotel services taken from [13]. Note that only dataset ReLi is well-balanced.

4.2 Metrics

For a particular sentence, the class predicted by a model is considered correct if it matches the class assigned in the datasets described previously. We use traditional metrics for evaluating the performance of models - macro-averaged Precision (P), Recall (R) and F-score (F1). For macro-averaged metrics, we compute the metrics for each class separately, then take their average to prevent bias towards high-frequency classes.
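To make the macro-averaging step concrete, the sketch below computes precision, recall and F1 per class and then averages them with equal weight per class. It assumes macro F1 is the mean of the per-class F1 scores (the convention used by scikit-learn); the labels and predictions are invented for illustration.

```python
def macro_prf(y_true, y_pred):
    """Return macro-averaged (precision, recall, F1) over all observed classes."""
    labels = sorted(set(y_true) | set(y_pred))
    precisions, recalls, f1_scores = [], [], []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        precisions.append(precision)
        recalls.append(recall)
        f1_scores.append(f1)
    n = len(labels)
    return sum(precisions) / n, sum(recalls) / n, sum(f1_scores) / n

# Invented example: three subjective sentences and one factual sentence.
gold = ["subjective", "subjective", "subjective", "factual"]
pred = ["subjective", "subjective", "factual", "factual"]
macro_p, macro_r, macro_f1 = macro_prf(gold, pred)
print(round(macro_p, 3), round(macro_r, 3), round(macro_f1, 3))
```

Because each class contributes equally to the average, a classifier that ignores the minority class is penalized even when its overall accuracy is high.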

4.3 Comparison of Classifier Performances

To answer the research question RQ1, we conducted experiments to confirm whether there is a single, best-suited machine learning algorithm for subjectivity identification for a diversified set of datasets. Table 9 shows each classifier’s performance in terms of F-measure (F\(_1\)) and accuracy (A) along with its standard deviation. The bold values indicate the best scores. In this experiment, each result denotes an average of 5-fold cross-validation.

The results in Table 9 reveal the following trends. Overall, the GBT model achieved the best average F\(_1\) among all the datasets. The difference in the results with the best classifier (RF) in terms of the accuracy measure is only 0.001 on average. We also note that although the models used in our experiments were considerably different, there is minimal difference in their final results. For example, the difference between the best (GBT) and worst (ME and AG) classifier was only 0.014 for F\(_1\) average. Meanwhile, the difference between the best (GBT) and worst (SVM) classifier was only 0.021 for accuracy average.

To ensure that the results were not achieved by chance, the Friedman test was performed on the results. The Friedman test is a non-parametric test used to measure the statistical differences of methods over multiple datasets. For each dataset, the methods were ranked based on the results of classifier metrics. We adopted F\(_1\) metrics to rank the results.

Let M be the number of models evaluated and N be the number of datasets. The Friedman statistic used here follows the Fisher (F) distribution with \(M - 1\) and (\(M - 1\))(\(N - 1\)) degrees of freedom. The null hypothesis of the Friedman test is that all methods perform equivalently. It is not rejected at the significance level \(\alpha \) when the computed statistic is less than the critical value of the F distribution; otherwise, it is rejected. For \(\alpha \)=0.05, M=5, and N=4, the critical value is 3.259. The computed statistic was 1.764, which is below the critical value, so the null hypothesis is not rejected: the differences among the classifiers are not statistically significant.
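The text does not spell out the formula, but a statistic that follows the F distribution with these degrees of freedom is commonly obtained through the Iman-Davenport correction of the Friedman chi-square. The sketch below assumes that variant. The F\(_1\) scores in `toy_scores` are invented and do not reproduce Table 9; only the critical value 3.259 is taken from the text.

```python
def average_ranks(scores):
    """scores[i][j] is the score of model j on dataset i (higher is better).

    Returns the average rank of each model; rank 1 is best and tied models
    share the mean of the ranks they span.
    """
    n_models = len(scores[0])
    totals = [0.0] * n_models
    for row in scores:
        order = sorted(range(n_models), key=lambda j: -row[j])
        start = 0
        while start < n_models:
            end = start
            while end + 1 < n_models and row[order[end + 1]] == row[order[start]]:
                end += 1
            shared_rank = (start + end) / 2 + 1
            for pos in range(start, end + 1):
                totals[order[pos]] += shared_rank
            start = end + 1
    return [total / len(scores) for total in totals]

def iman_davenport(scores):
    """Return (Friedman chi-square, Iman-Davenport F statistic)."""
    n, m = len(scores), len(scores[0])
    ranks = average_ranks(scores)
    chi2 = 12 * n / (m * (m + 1)) * (sum(r * r for r in ranks) - m * (m + 1) ** 2 / 4)
    # Undefined (division by zero) if every dataset ranks the models identically.
    f_stat = (n - 1) * chi2 / (n * (m - 1) - chi2)
    return chi2, f_stat

# Invented F1 scores: 4 datasets (rows) x 5 models (columns).
toy_scores = [
    [0.80, 0.78, 0.79, 0.77, 0.78],
    [0.70, 0.71, 0.69, 0.70, 0.68],
    [0.85, 0.83, 0.86, 0.84, 0.83],
    [0.75, 0.74, 0.73, 0.76, 0.74],
]
chi2, f_stat = iman_davenport(toy_scores)
CRITICAL_VALUE = 3.259  # F(4, 12) at alpha = 0.05, as reported in the text
print("reject H0" if f_stat > CRITICAL_VALUE else "do not reject H0")
```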

4.4 Feature Ablation Study

To answer the research question RQ2, we conducted a feature ablation study to evaluate the importance of the proposed features in the performance of the classifiers.

An ablation study is performed by removing sets of features individually and evaluating the classifier’s performance to identify which of those features impact the most on the results. For each feature group, we retrained and retested the best performing classifier overall (GBT, as shown in Sect. 4.3), evaluating its performance with 5-fold cross-validation and F\(_1\) score as a metric.

In Table 10, each row labeled as All -F represents the results obtained by training the classifier with all groups of features, except F. The row labeled as All shows the results for all groups of features together. For each dataset, we show the average F\(_1\) achieved between the two classes (factual and subjectivity), and the F\(_1\) for each class.

We also provide the difference between the F\(_1\) obtained with all groups of features together and each ablated F\(_1\), labeled as Loss. A positive Loss indicates that a group F of features contributes to the overall performance, since removing it lowers the score. A negative Loss indicates that removing F from the feature set in fact improves the classifier’s performance.
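As a small worked example of the Loss column, the sketch below subtracts each ablated score from the score obtained with all feature groups. The sign convention (all features minus ablated) follows the interpretation given above; the F\(_1\) values and the function name `ablation_losses` are invented for illustration and are not taken from Table 10.

```python
def ablation_losses(f1_all, f1_without):
    """Loss for each group: F1 with all features minus F1 without the group."""
    return {group: round(f1_all - f1, 3) for group, f1 in f1_without.items()}

# Invented scores: F1 with all groups, and with one group removed at a time.
losses = ablation_losses(0.80, {"POS": 0.77, "Twitter": 0.81})
for group, loss in losses.items():
    effect = "helps" if loss > 0 else "hurts or is neutral"
    print(f"All -{group}: Loss = {loss:+.3f} ({effect})")
```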

We observed that different groups of features perform differently in each dataset. However, it is noticeable that the POS features contribute positively in all datasets. This may indicate that Part-of-Speech tags are very relevant information for subjectivity classification in the Portuguese language, being the only group in our experiments shown to be neither structure-specific nor domain-specific.

It is interesting to mention that the Syntactic Patterns group has a low contribution for the ReLi, TripAdvisor, and Hotel datasets, and a negative contribution for ComputerBR, which contains only tweets. Since Syntactic Patterns tend to be language-specific, many of the chosen rules potentially do not represent opinions in Portuguese texts. Likewise, the whole group of concept-level features only contributed to the Hotel dataset. Interestingly, this is the smallest dataset used in our experiments. Thus, concept-level features may work better in smaller scopes.

We also observed that, as expected, the most contributing group in the ComputerBR dataset was the Twitter group. This indicates that extracting specific features of Twitter texts is a better strategy than relying on language-specific resources such as sentiment lexicons and language patterns.

4.5 Feature Ranking

To answer the research question RQ3, we applied the Recursive Feature Elimination (RFE) algorithm to evaluate how different combinations of features affect the predictive performance of a classifier. GBT was chosen as the learning algorithm because it achieved the best performance (see Sect. 4.3). First, all 54 features are used to train the GBT model, and the importance of each feature is recorded separately. The evaluation is performed using 5-fold cross-validation with the macro F\(_1\) score as the metric. Second, at every iteration the feature with the lowest importance is removed from the input set and the model is retrained on the remaining features; this continues until the required number of features is retained.

Figure 1 shows the performance of the GBT model using different sizes of feature subsets. The best result in each dataset is identified with a star. First of all, we can see that using all the features does not produce the best results. Therefore, a feature selection technique is useful for removing uninformative features. Interestingly, the best results in the Hotel dataset were achieved with just 8 features. In addition, we observed an improvement in the results when using feature selection. For example, feature selection yielded F\(_1\) gains of approximately 10.9% for ComputerBR and 2.6% for the Hotel dataset.

Figure 2 lists the top 10 features for each dataset. Individually, the number of adjectives (Feat. 1 - Table 1) is the most important feature in three datasets (ComputerBR, ReLi, and Tripadvisor). This feature is still the eleventh most important feature in the Hotel dataset. Concept-level features (Table 4) are the most important group of features. Concept-level features represent 65% among the top 10 features of the four datasets.

It can be concluded that RFE, used as a feature selection method, helps increase classification accuracy by producing a more meaningful importance ranking of the features. The number of features plays an important role in text classification: a large number of features does not guarantee the best classification performance, and neither does a small one. The best results are obtained with a subset whose size depends on the dataset.

5 Conclusion

In this paper, we have presented an empirical study of a vast set of text features for identifying subjective sentences for the Portuguese language. We examined the performance of these features within supervised learning methods along with four different datasets.

Firstly, we examined the performance of well-known machine learning algorithms for subjectivity identification. The experimental results showed that, although the classifiers used in our experiments were considerably different, there is minimal difference between the results obtained. This indicates that choosing the correct set of features is the most important factor for identifying subjective sentences. Secondly, we conducted a feature ablation study to evaluate the importance of the proposed features in the performance of the classifiers. The experimental results showed that POS features contribute positively in all datasets. This indicates that POS tags are the most relevant feature group for subjectivity classification. Finally, we applied the recursive feature elimination algorithm to evaluate how different combinations of individual features affect the performance of a classifier. The experimental results showed that the number of adjectives is the most important feature in three out of four datasets. It was also possible to conclude that feature selection helps increase classification accuracy.

In future work, we will evaluate the same set of text features on other NLP tasks, such as identifying comparative sentences and calculating the polarity of subjective sentences.

Notes

- 1.

- 2. The Python package version was v3.0.0 with model pt_core_news_lg, except for the COMP and SUP POS tags, which were extracted with v2.2.0 and model pt_core_news_sm.

- 3.

- 4.

- 5.

References

1. Agarwal, A., Xie, B., Vovsha, I., Rambow, O., Passonneau, R.J.: Sentiment analysis of Twitter data. In: Proceedings of the Workshop on Language in Social Media (LSM 2011), pp. 30–38 (2011)

2. Almatarneh, S., Gamallo, P.: Linguistic features to identify extreme opinions: an empirical study. In: Yin, H., Camacho, D., Novais, P., Tallón-Ballesteros, A.J. (eds.) IDEAL 2018. LNCS, vol. 11314, pp. 215–223. Springer, Cham (2018). https://doi.org/10.1007/978-3-030-03493-1_23

3. Almatarneh, S., Gamallo, P.: Comparing supervised machine learning strategies and linguistic features to search for very negative opinions. Information 10(1), 16 (2019)

4. Balage Filho, P., Pardo, T.A.S., Aluísio, S.: An evaluation of the Brazilian Portuguese LIWC dictionary for sentiment analysis. In: Proceedings of the 9th Brazilian Symposium in Information and Human Language Technology (2013)

5. Belisário, L.B., Ferreira, L.G., Pardo, T.A.S.: Evaluating richer features and varied machine learning models for subjectivity classification of book review sentences in Portuguese. Information 11(9), 437 (2020)

6. Cambria, E., Li, Y., Xing, F.Z., Poria, S., Kwok, K.: SenticNet 6: ensemble application of symbolic and subsymbolic AI for sentiment analysis. In: Proceedings of the 29th ACM International Conference on Information & Knowledge Management, pp. 105–114 (2020)

7. Carvalho, C.M.A., Nagano, H., Barros, A.K.: A comparative study for sentiment analysis on election Brazilian news. In: Proceedings of the 11th Brazilian Symposium in Information and Human Language Technology, pp. 103–111 (2017)

8. Chai, Y., Lei, C., Yin, C.: Study on the influencing factors of online learning effect based on decision tree and recursive feature elimination. In: Proceedings of the 10th International Conference on E-Education, E-Business, E-Management and E-Learning, pp. 52–57 (2019)

9. Chaturvedi, I., Cambria, E., Welsch, R.E., Herrera, F.: Distinguishing between facts and opinions for sentiment analysis: survey and challenges. Inf. Fusion 44, 65–77 (2018)

10. Chenlo, J.M., Losada, D.E.: A machine learning approach for subjectivity classification based on positional and discourse features. In: Lupu, M., Kanoulas, E., Loizides, F. (eds.) IRFC 2013. LNCS, vol. 8201, pp. 17–28. Springer, Heidelberg (2013). https://doi.org/10.1007/978-3-642-41057-4_3

11. Chenlo, J.M., Losada, D.E.: An empirical study of sentence features for subjectivity and polarity classification. Inf. Sci. 280, 275–288 (2014)

12. Freitas, C., Motta, E., Milidiú, R., César, J.: Vampiro que brilha... rá! desafios na anotação de opinião em um corpus de resenhas de livros. Encontro de Linguística de Corpus 11, 22 (2012)

13. Freitas, L.A.D., Vieira, R.: Ontology-based feature-level sentiment analysis in Portuguese reviews. Int. J. Bus. Inf. Syst. 32(1), 30–55 (2019)

14. Ge, P.: Analysis on approaches and structures of automated machine learning frameworks. In: 2020 International Conference on Communications, Information System and Computer Engineering (CISCE), pp. 474–477. IEEE (2020)

15. Hogenboom, A., Bal, D., Frasincar, F., Bal, M., De Jong, F., Kaymak, U.: Exploiting emoticons in polarity classification of text. J. Web Eng. 14(1 & 2), 22–40 (2015)

16. Honnibal, M., Montani, I.: spaCy 2: natural language understanding with bloom embeddings, convolutional neural networks and incremental parsing. To appear 7(1), 411–420 (2017)

17. Kimura, M., Katsurai, M.: Investigating the consistency of emoji sentiment lexicons constructed using different languages. In: Proceedings of the 20th International Conference on Information Integration and Web-based Applications & Services, pp. 310–313 (2018)

18. Mansour, R., Hady, M.F.A., Hosam, E., Amr, H., Ashour, A.: Feature selection for Twitter sentiment analysis: an experimental study. In: Gelbukh, A. (ed.) CICLing 2015. LNCS, vol. 9042, pp. 92–103. Springer, Cham (2015). https://doi.org/10.1007/978-3-319-18117-2_7

19. Moraes, S.M.W., Santos, A.L.L., Redecker, M., Machado, R.M., Meneguzzi, F.R.: Comparing approaches to subjectivity classification: a study on Portuguese tweets. In: Silva, J., Ribeiro, R., Quaresma, P., Adami, A., Branco, A. (eds.) PROPOR 2016. LNCS (LNAI), vol. 9727, pp. 86–94. Springer, Cham (2016). https://doi.org/10.1007/978-3-319-41552-9_8

20. Oliveira, M., Melo, T.: Investigando features de sentenças para classificação de subjetividade e polaridade em português do Brasil. In: Anais do XVII Encontro Nacional de Inteligência Artificial e Computacional, pp. 270–281. SBC (2020)

21. Palshikar, G., Apte, M., Pandita, D., Singh, V.: Learning to identify subjective sentences. In: Proceedings of the 13th International Conference on Natural Language Processing, pp. 239–248 (2016)

22. Pennebaker, J.W., Francis, M.E., Booth, R.J.: Linguistic inquiry and word count: LIWC 2001. Mahway: Lawrence Erlbaum Assoc. 71(2001), 2001 (2001)

23. Pereira, D.A.: A survey of sentiment analysis in the Portuguese language. Artif. Intell. Rev. 54(2), 1087–1115 (2021)

24. Poria, S., Cambria, E., Winterstein, G., Huang, G.B.: Sentic patterns: dependency-based rules for concept-level sentiment analysis. Knowl.-Based Syst. 69, 45–63 (2014)

25. Turney, P.: Thumbs up or thumbs down? semantic orientation applied to unsupervised classification of reviews. In: Proceedings of the 40th Annual Meeting of the Association for Computational Linguistics, pp. 417–424 (2002)

Acknowledgments

The authors are thankful for the financial and material support provided by the Amazonas State Research Support Foundation (FAPEAM) through Project PPP 04/2017 and for the assistance of the Intelligent Systems Laboratory (LSI) of the Amazonas State University (UEA). We also gratefully acknowledge the support provided by the Gratificação de Produtividade Acadêmica (GPA) of the Amazonas State University (Portaria 086/2021).

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

de Oliveira, M., de Melo, T. (2021). An Empirical Study of Text Features for Identifying Subjective Sentences in Portuguese. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science, vol 13074. Springer, Cham. https://doi.org/10.1007/978-3-030-91699-2_26

DOI: https://doi.org/10.1007/978-3-030-91699-2_26

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91698-5

Online ISBN: 978-3-030-91699-2

eBook Packages: Computer Science, Computer Science (R0)