Abstract

Deep learning models have been the state of the art for a variety of challenging tasks in natural language processing, but to achieve good results they often require large labeled datasets. Deep active learning algorithms were designed to reduce the annotation cost of training such models. Current deep active learning algorithms, however, aim to train a good deep learning model with as little labeled data as possible, and are therefore not useful in scenarios where the full dataset must be labeled. To address this problem, this work investigates deep active-self learning algorithms that use the trained model for self-labeling, reducing the cost of annotating full datasets for named entity recognition tasks. The experiments indicate that the proposed deep active-self learning algorithm reduces the manual annotation cost of labeling the complete dataset for named entity recognition, with less than 2% of the self-labeled tokens being mislabeled. We also investigate an early stopping technique that does not rely on a validation set, which further reduces the annotation costs of the proposed active-self learning algorithm in real-world scenarios.

1 Introduction

The task of named entity recognition (NER) is widely used for information extraction, since trained models can directly extract named entities from unstructured text. The extracted entities can be used to build databases of structured information, as well as to improve the performance of models on more challenging natural language processing tasks. Deep neural models are the state of the art for NER, but they often require large labeled datasets to reach that level of performance. In many practical scenarios, it is not realistic to expect large amounts of data to be manually annotated. Active learning (AL) algorithms are often used to reduce annotation costs by identifying a small, representative subset of samples to be labeled. A model trained on this representative subset is expected to perform similarly to one trained on the whole dataset. Thus, by labeling only the representative subset, it is possible to reduce annotation costs while still training a good machine learning model.

Deep Active Learning (DAL) applied to sequence tagging tasks (e.g. NER, POS tagging) has only recently appeared in the literature [15], even though traditional active learning strategies were applied to such tasks much earlier [14]. Using neural models in active learning scenarios, instead of classic shallow models, brings challenges that need to be addressed. For one, deep learning models are much slower than shallow models to retrain from scratch at each active learning iteration. Shen et al. [15] propose the first DAL algorithm applied to a sequence tagging task. They use iterative training, in which the neural model’s training continues from where it stopped in the previous iteration of the active learning process. This iterative training, coupled with early stopping based on the validation set, reduces execution time. A possible explanation is that, as the subset of labeled samples becomes more representative of the whole dataset, new unlabeled samples are less likely to bring relevant new information. Thus, the iteratively trained model is likely to require fewer training epochs to assimilate the recently labeled samples. They also propose the CNN-CNN-LSTM model, a neural model that is lightweight to train, and the Maximum Normalized Log-Probability (MNLP) sampling function, which normalizes the confidence of the model’s predictions by the sentence’s length. The AL algorithm of Shen et al. [15] trained a neural model to peak performance using just over 25% of the labeled data from the training set of the OntoNotes 5.0 [11] dataset.

Siddhant and Lipton [16] extend the work of Shen et al. [15]. They apply the Bayesian Active Learning by Disagreement (BALD) [4] sampling technique, which queries the unlabeled samples that generate the most disagreement across multiple stochastic passes of a Bayesian neural model, to sequence tagging problems. They also use early stopping based on the model’s performance on the validation set, but limit the size of the validation set to be proportional to the size of the labeled set used to train the model. Their experiments evaluate the proposed Bayesian DAL algorithm with the CNN-CNN-LSTM [15] and CNN-biLSTM-CRF [7] models on the OntoNotes 5.0 and CoNLL 2003 [13] datasets. The results indicate that the Bayesian sampling functions consistently outperform the MNLP function, although only marginally.
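The disagreement idea can be illustrated with a small sketch. It assumes the model has been run several times on the same sentence with dropout active at inference (Monte Carlo dropout), and scores the sentence by the fraction of passes whose predicted tag sequence differs from the most frequent one, a variation-ratio style measure. The function names and this particular score are illustrative assumptions, not the exact estimator used by Siddhant and Lipton [16].

```python
from collections import Counter
from typing import Dict, List, Sequence


def disagreement(passes: Sequence[Sequence[str]]) -> float:
    """Fraction of stochastic passes that disagree with the modal tag sequence.

    `passes` holds one predicted tag sequence per forward pass (e.g. with
    Monte Carlo dropout enabled); 0.0 means every pass agreed.
    """
    if not passes:
        raise ValueError("at least one forward pass is required")
    # Tuples are hashable, so whole tag sequences can be counted.
    counts = Counter(tuple(p) for p in passes)
    modal_count = counts.most_common(1)[0][1]
    return 1.0 - modal_count / len(passes)


def rank_by_disagreement(predictions: Dict[str, List[List[str]]]) -> List[str]:
    """Return sentence ids ordered from most to least disagreement."""
    scores = {sid: disagreement(p) for sid, p in predictions.items()}
    return sorted(scores, key=scores.get, reverse=True)


passes = [["B-PER", "E-PER"]] * 3 + [["O", "O"]]
print(disagreement(passes))  # 0.25: one of four passes disagrees
```

Sentences at the top of the ranking are those whose predictions are least stable under dropout, and they would be sent to the oracle first.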

Current work on deep active learning applied to NER relies on validation sets for early stopping of model training. In real-world scenarios, active learning algorithms are used to reduce annotation costs, so setting aside part of the labeled data as a validation set runs counter to the core idea of AL.

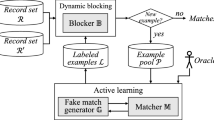

Active-Self Learning (ASL) combines traditional active learning with self-training: an oracle (i.e. a human annotator) annotates the most informative samples queried by a sampling function (e.g. least confidence, MNLP), while the trained model labels the samples for which it is most confident in its predictions. Compared with pure active learning, ASL further reduces the cost of labeling a dataset. Tran et al. [17] show that the symbiotic relationship created by having both the human and a trained Conditional Random Field model label samples from the unlabeled set increases the model’s performance while significantly reducing labeling costs. A major drawback of ASL is its sensitivity to the quality of the initial labeled set: a model trained on a poorly sampled initial labeled set can have its performance significantly reduced throughout the ASL process.

In this work, we propose a deep active-self learning algorithm that builds upon previous work from the literature [6, 17]. We propose key changes to the algorithm in order to minimize its sensitivity to the initial set of labeled samples. We also propose an early stopping strategy based on the model’s confidence on an unlabeled set, which makes a validation set unnecessary.

2 Methodology

This section presents the proposed deep active-self learning algorithm, which extends previous work from the literature [6, 17] with deep learning models and key changes that alleviate the sensitivity of the self-learning process to the initial labeled set. Along with the ASL algorithm, we propose an early stopping criterion based on the model’s confidence in its predictions for the unlabeled samples, which removes the need for a validation set throughout the ASL procedure. Sections 2.1 and 2.2 present the proposed ASL algorithm and the early stopping criterion, respectively. Section 2.3 presents the experiments performed to evaluate the proposed strategies, as well as the baselines used for comparison and the hardware setup used for the simulations.

2.1 Deep Active-Self Learning Algorithm

The active-self learning algorithms from the literature require the human and the trained model to cooperatively annotate samples from the unlabeled set. This process is very sensitive to the initial labeled set, which is used to train the initial machine learning model [17]. We argue that this sensitivity arises because samples annotated by the model in early rounds of the ASL algorithm may, if poorly annotated, add a permanent bias to the labeled set, since they are considered as reliable as those annotated by the oracle. Based on that, we propose an active-self learning algorithm that separates samples labeled by the model from those labeled by the human annotator, with the former having less impact on the model’s parameters during training. We also propose returning the samples labeled by the model to the unlabeled set at the end of each iteration of the ASL algorithm. We expect these two changes to reduce the risk of adding permanent bias to both the trained model and the labeled sets. The proposed algorithm can be thought of as an iterative semi-supervised learning process, since at each iteration it identifies a set of unlabeled samples that can be reliably used for self-training, an approach that resembles current semi-supervised work from the literature [1]. Figure 1 compares how the ASL algorithms from the literature and the proposed one label samples and train the model. Algorithm 1 details the proposed active-self learning algorithm.

In the diagrams, U and L represent the unlabeled and labeled sets, respectively. A.L. is the active labeled set, which contains samples labeled by the oracle, and S.L. is the self-labeled set, which contains the samples labeled by the trained model. Panel (a) presents the ASL algorithm found in the literature, where the oracle and the trained model cooperatively annotate unlabeled samples and add them to the same labeled set, which is then used to train the machine learning model. Panel (b) shows the proposed ASL algorithm, where samples labeled by the oracle and by the trained model are kept in separate labeled sets; both are used for further training of the model, but the self-labeled set has a smaller impact on the model’s parameters. After training, all samples labeled by the model are returned to the unlabeled set, reducing the risk of introducing permanent bias to the model throughout the ASL process.

In Algorithm 1, m represents the machine learning model, Q is the query budget, \(min\_confidence\) is the minimum confidence the model must have to annotate an unlabeled sample, A.L. is the active labeled set containing the samples annotated by the oracle, S.L. contains the samples labeled by the trained model, and U is the set of unlabeled data.
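One iteration of the proposed procedure, as described in Sect. 2.1 and panel (b) of Fig. 1, can be sketched as follows. The query, oracle and training steps are passed in as callables because their concrete implementations (MNLP sampling, a human annotator, the neural model) are outside the scope of the sketch; all names here are hypothetical stand-ins for the functions of Algorithm 1, not the authors’ code.

```python
from typing import Callable, Dict, List, Set

Labels = List[str]  # one tag per token


def asl_iteration(
    unlabeled: Set[str],
    active_labeled: Dict[str, Labels],
    active_query: Callable[[Set[str], int], List[str]],
    oracle: Callable[[str], Labels],
    self_query: Callable[[Set[str], float], Dict[str, Labels]],
    train: Callable[[Dict[str, Labels], Dict[str, Labels]], None],
    budget: int,
    min_confidence: float,
) -> Dict[str, Labels]:
    """Run one hypothetical active-self learning iteration; mutates U and A.L."""
    # 1. The oracle labels the queried samples; they leave U permanently.
    for sample in active_query(unlabeled, budget):
        active_labeled[sample] = oracle(sample)
        unlabeled.discard(sample)

    # 2. The model labels samples it is confident about, kept apart in S.L.
    self_labeled = self_query(unlabeled, min_confidence)
    unlabeled.difference_update(self_labeled)

    # 3. Train on both sets (S.L. with less weight, handled inside `train`).
    train(active_labeled, self_labeled)

    # 4. Return self-labeled samples to U so model labels never become permanent.
    unlabeled.update(self_labeled)
    return self_labeled
```

The key design point is step 4: oracle labels accumulate across iterations, while model labels are recomputed from scratch each time, so an early mistake by the model is not carried forward.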

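The active learning procedure, represented by the \(Active\_Learning\_Query(\cdot )\) function in Algorithm 1, identifies the most informative unlabeled samples to be annotated by the oracle. In this work we selected the Maximum Normalized Log-Probability (MNLP) [15] as the sampling function, as it was shown to be competitive with more sophisticated sampling techniques [16] while being cheaper to compute. The MNLP of a sequence X of length N, with tags \(y_1, \ldots , y_N\), can be computed as:

\[ \mathrm{MNLP}(X) = \max_{y_1, \ldots , y_N} \frac{1}{N} \sum_{i=1}^{N} \log P(y_i \mid y_1, \ldots , y_{i-1}, X) \qquad (1) \]

The samples with the lowest MNLP are those on which the model is least confident, and they are queried first.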
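For a model whose tags are decoded greedily, the maximization in the MNLP can be approximated by taking the most probable tag at each position, so the score becomes the mean log-probability of the decoded path. The sketch below assumes the model exposes a per-token probability distribution over tags; for a CRF decoder the Viterbi path score would be used instead. The function names are illustrative.

```python
import math
from typing import Dict, List, Sequence


def mnlp_greedy(token_probs: Sequence[Sequence[float]]) -> float:
    """Length-normalized log-probability of the greedily decoded tag path.

    `token_probs[i]` is the model's distribution over tags for token i.
    Greedy decoding approximates the max over tag sequences.
    """
    if not token_probs:
        raise ValueError("empty sequence")
    return sum(math.log(max(dist)) for dist in token_probs) / len(token_probs)


def least_confident(batch: Dict[str, Sequence[Sequence[float]]], k: int) -> List[str]:
    """Ids of the k sentences with the lowest MNLP (queried first)."""
    scores = {sid: mnlp_greedy(p) for sid, p in batch.items()}
    return sorted(scores, key=scores.get)[:k]


batch = {"a": [[0.9, 0.1], [0.95, 0.05]], "b": [[0.6, 0.4], [0.5, 0.5]]}
print(least_confident(batch, 1))  # ['b']
```

Dividing by the sentence length is what keeps long sentences from being selected merely because they accumulate more negative log-probability terms.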

For the experiments, we follow previous work from the literature [16] and define the query budget as the number of tokens that can be selected by the sampling function for manual annotation by the human annotator in a single iteration of the active learning process. We chose a query budget of approximately 2% of the tokens in the whole training set. Thus, the query budget was 20,000, 6,000 and 4,000 tokens for the OntoNotes 5.0, Aposentadoria, and CoNLL 2003 datasets, respectively.
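Because the budget is counted in tokens rather than sentences, the query step has to pack whole sentences into it. A minimal sketch, assuming sentences are visited from lowest to highest MNLP and that a sentence which would overflow the budget is skipped so that shorter ones can still fill it (stopping at the first overflow would be an equally plausible choice); the function name is hypothetical.

```python
from typing import Dict, List


def fill_token_budget(
    scores: Dict[str, float], lengths: Dict[str, int], budget: int
) -> List[str]:
    """Pick lowest-MNLP sentences until `budget` tokens have been used."""
    chosen: List[str] = []
    used = 0
    for sid in sorted(scores, key=scores.get):  # least confident first
        if used + lengths[sid] > budget:
            continue  # would overflow; a shorter sentence may still fit
        chosen.append(sid)
        used += lengths[sid]
    return chosen


print(fill_token_budget({"a": -0.1, "b": -2.0, "c": -1.0}, {"a": 3, "b": 5, "c": 4}, 8))
# ['b', 'a']: 'c' is skipped because 5 + 4 tokens would exceed the budget of 8
```

With the budgets above, `budget` would be 20,000, 6,000 or 4,000 depending on the dataset.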

The \(Self\_Learning\_Query(\cdot )\) function in Algorithm 1 identifies the samples for which the current trained model has a confidence higher than a predefined threshold. These samples are considered to be reliable and, therefore, can be used for self-training. A minimum confidence of 0.99 was selected in this work as the threshold, based on experiments performed using the validation set. The learning rate for training the model with self-labeled samples is one tenth of the learning rate used for training with the active-labeled samples, with this value being selected from initial experiments using the validation set.
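A sketch of the threshold test follows. It assumes that a sentence’s confidence is the joint probability of its predicted tag sequence, i.e. the product of the probabilities of the predicted tags; this choice of confidence measure and the function name are assumptions made for illustration.

```python
import math
from typing import Dict, List, Sequence, Tuple

Prediction = Tuple[List[str], Sequence[float]]  # (tags, prob. of each predicted tag)


def self_learning_query(
    predictions: Dict[str, Prediction], min_confidence: float = 0.99
) -> Dict[str, List[str]]:
    """Keep the model's labels for sentences it is sufficiently confident about."""
    selected: Dict[str, List[str]] = {}
    for sid, (tags, probs) in predictions.items():
        confidence = math.prod(probs)  # assumed: joint probability of the path
        if confidence > min_confidence:
            selected[sid] = list(tags)
    return selected


preds = {
    "s1": (["B-PER", "E-PER", "O"], [0.999, 0.998, 0.999]),
    "s2": (["S-LOC", "O"], [0.97, 0.999]),
}
print(self_learning_query(preds))  # {'s1': ['B-PER', 'E-PER', 'O']}
```

Under this assumed measure, long sentences are harder to self-label than short ones; a length-normalized score would behave differently.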

For model training, represented as \(Train\_model(\cdot )\) in Algorithm 1, we used standard supervised training. The only difference is that, during training, we alternate between minibatches from the A.L. and S.L. sets.
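The alternation between the two sets, together with the one-tenth learning rate for self-labeled samples stated in this section, can be sketched as a batch schedule. The sketch assumes that when one set runs out of minibatches the remaining batches of the other are still used, and that the lower learning rate is applied per batch; both are assumptions, as is the function name.

```python
from itertools import zip_longest
from typing import Iterator, List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def _chunks(items: Sequence[T], size: int) -> List[Sequence[T]]:
    """Split a sequence into consecutive minibatches of at most `size` items."""
    return [items[i : i + size] for i in range(0, len(items), size)]


def alternating_batches(
    active: Sequence[T], self_labeled: Sequence[T], batch_size: int, base_lr: float
) -> Iterator[Tuple[Sequence[T], float]]:
    """Yield (minibatch, learning rate), alternating A.L. and S.L. batches."""
    for al_batch, sl_batch in zip_longest(
        _chunks(active, batch_size), _chunks(self_labeled, batch_size)
    ):
        if al_batch is not None:
            yield al_batch, base_lr
        if sl_batch is not None:
            yield sl_batch, base_lr / 10  # self-labeled data: one tenth of the rate


for batch, lr in alternating_batches([1, 2, 3], [10], batch_size=2, base_lr=0.015):
    print(batch, lr)
```

Scaling the learning rate, rather than the loss, is one simple way to give self-labeled samples a smaller influence on the parameters; with plain SGD the two are equivalent.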

2.2 Dynamic Update of Training Epochs - DUTE

Inspired by the overall-uncertainty stopping criterion for active learning algorithms proposed by Zhu et al. [18], we propose the Dynamic Update of Training Epochs (DUTE), an early stopping strategy that reduces the number of training epochs based on the trained model’s mean confidence in its predictions for the unlabeled set. We hypothesize that the model’s mean confidence indicates how well the labeled set represents the whole dataset, so that adding more unlabeled samples to the labeled set causes little change in the labeled set’s label distribution. Therefore, we argue that fewer training epochs are required as the model’s confidence on the unlabeled set increases.

To measure the model’s confidence on the unlabeled set, we propose to use the harmonic mean of the model’s normalized confidence for each unlabeled sample. The harmonic mean was used to emphasize those unlabeled samples for which the trained model has lower confidence in its predictions. The normalized confidence of a sequence of elements X can be computed as

where MNLP(.) is the Maximum Normalized Log-Probability, presented in Eq. 1. The harmonic mean for the model’s confidence on the unlabeled set U is computed as
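The sketch below assumes the normalized confidence of a sequence is \(\exp (\mathrm{MNLP}(X))\), which maps the MNLP score into (0, 1] and equals the geometric mean of the per-token probabilities along the decoded path; this mapping is an assumption. Under it, the harmonic mean over U can be computed with Python’s standard `statistics.harmonic_mean`; the function names are hypothetical.

```python
import math
from statistics import harmonic_mean
from typing import Iterable


def normalized_confidence(mnlp: float) -> float:
    """Assumed mapping from an MNLP score (<= 0) to a confidence in (0, 1]."""
    return math.exp(mnlp)


def unlabeled_set_confidence(mnlp_scores: Iterable[float]) -> float:
    """Harmonic mean of normalized confidences over the unlabeled set U."""
    return harmonic_mean([normalized_confidence(s) for s in mnlp_scores])


scores = [0.0, math.log(0.5)]  # confidences 1.0 and 0.5
print(round(unlabeled_set_confidence(scores), 4))  # 0.6667, below the arithmetic mean 0.75
```

Because the harmonic mean is dominated by its smallest terms, a handful of low-confidence sentences pulls the estimate down more than an arithmetic mean would, which matches the motivation given above.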

Given the mean confidence of the trained model on the unlabeled set, the DUTE strategy computes the number of training epochs to be used at the k-th iteration of the active learning algorithm, as presented in Eq. 4.

In this work, a momentum of 0.9 was selected based on initial experiments performed using the validation sets of the datasets presented in Sect. 2.3.
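Without assuming the exact form of Eq. 4, the mechanism can be illustrated as follows: the unlabeled-set confidence is smoothed with an exponential moving average using the 0.9 momentum, and a maximum epoch count is scaled by one minus the smoothed value. This update rule, the zero initial value, the floor of one epoch and the class name are all hypothetical and should not be read as the DUTE formula.

```python
import math


class HypotheticalEpochSchedule:
    """Illustrative DUTE-like rule: fewer epochs as smoothed confidence rises."""

    def __init__(self, max_epochs: int, momentum: float = 0.9) -> None:
        self.max_epochs = max_epochs
        self.momentum = momentum
        self.smoothed = 0.0  # hypothetical starting confidence

    def epochs_for(self, confidence: float) -> int:
        """Update the moving average with this iteration's confidence."""
        self.smoothed = (
            self.momentum * self.smoothed + (1.0 - self.momentum) * confidence
        )
        return max(1, math.ceil(self.max_epochs * (1.0 - self.smoothed)))


schedule = HypotheticalEpochSchedule(max_epochs=50)
print([schedule.epochs_for(c) for c in (0.90, 0.95, 0.97, 0.98)])
```

A high momentum makes the epoch count decrease gradually across active learning iterations instead of reacting sharply to a single noisy confidence estimate.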

2.3 Experiments

This section describes the experiments designed to validate the proposed ASL algorithm and DUTE strategy. The validation was done in two separate experiments. The first experiment compares the proposed ASL algorithm with the DUTE strategy against the AL algorithm proposed by Shen et al. [15]. The second experiment is an ablation study investigating the impact of the DUTE strategy on both the execution time of the algorithm and the trained model’s performance. The NER datasets, neural models and baselines used in both experiments are presented in the following sections.

Datasets

For the experiments, we used two English NER datasets frequently used in the literature, namely OntoNotes 5.0 [11] and CoNLL 2003 [13]. Additionally, we experimented on a novel legal-domain NER dataset in Portuguese, named the Aposentadoria (retirement, in direct translation) dataset. The Aposentadoria dataset contains named entities from 10 distinct classes related to retirement acts of public employees published in the Diário Oficial do Distrito Federal (Brazilian Federal District official gazette, in direct translation). It is part of a larger dataset created by collaborators of the KnEDLe project. More information about the dataset, as well as a download link, is available at the project’s GitHub repository.

Table 1 presents the datasets used in the experiments, along with relevant information such as domain area and language.

For the experiments, all datasets were preprocessed by converting them to the IOBES notation, which is frequently adopted in the NER literature [1, 5, 7] because it can significantly improve the model’s performance [12]. Also, numeric characters were replaced by # and 0 characters for the English and Portuguese datasets, respectively. Both digit replacements were made to enable the use of the pretrained word embeddings used by the neural models, which are described next.
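A sketch of both preprocessing steps, assuming the input tags follow the IOB2 scheme (every entity starts with B-); data in the IOB1 scheme, as originally distributed for CoNLL 2003, would first need converting to IOB2. The function names are illustrative.

```python
import re
from typing import List


def iob2_to_iobes(tags: List[str]) -> List[str]:
    """Convert IOB2 tags (B-X, I-X, O) into IOBES (B-X, I-X, E-X, S-X, O)."""
    converted = []
    for i, tag in enumerate(tags):
        if tag == "O":
            converted.append(tag)
            continue
        prefix, label = tag.split("-", 1)
        next_tag = tags[i + 1] if i + 1 < len(tags) else "O"
        continues = next_tag == f"I-{label}"  # does the entity go on?
        if prefix == "B":
            converted.append(f"B-{label}" if continues else f"S-{label}")
        elif prefix == "I":
            converted.append(f"I-{label}" if continues else f"E-{label}")
        else:
            raise ValueError(f"not an IOB2 tag: {tag!r}")
    return converted


def replace_digits(token: str, symbol: str) -> str:
    """Replace every digit, e.g. with '#' (English) or '0' (Portuguese)."""
    return re.sub(r"\d", symbol, token)


print(iob2_to_iobes(["B-PER", "I-PER", "O", "B-LOC", "B-ORG", "I-ORG", "I-ORG"]))
# ['B-PER', 'E-PER', 'O', 'S-LOC', 'B-ORG', 'I-ORG', 'E-ORG']
print(replace_digits("1.234,56", "0"))  # 0.000,00
```

The extra E- and S- tags make entity boundaries explicit, which is the property the IOBES scheme is credited with above.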

Neural Models

We use the models that appear in the work of Siddhant and Lipton [16], namely the CNN-CNN-LSTM introduced by Shen et al. [15] and the CNN-biLSTM-CRF designed by Ma and Hovy [7]. For the English NER datasets, the hyperparameters of both models were similar to those from their original papers; for the Aposentadoria dataset, a grid search using the validation set was performed to find the best-performing parameters for both models.

The parameters of the CNN-CNN-LSTM trained on the English NER datasets were similar to those in the experiments of Siddhant and Lipton [16]: the character-level CNN uses 25-dimensional character embeddings, and its 1-D convolution layer has 50 filters, a kernel size of 3, and stride and padding of 1. The word-level CNN has two blocks, each with a 1-D convolutional layer with 800 filters, a kernel size of 5, a stride of 1 and a padding of 2. The LSTM tag decoder consists of an LSTM layer of size 256. All dropout layers of the CNN-CNN-LSTM model have probability 0.5. For the Portuguese NER dataset, a grid search was performed using the validation set, and the final architecture had the following hyperparameters. The character-level CNN had the same parameters as for the English datasets. The word-level CNN has one block, consisting of a 1-D convolution layer with 400 filters, a kernel size of 5, a stride of 1 and a padding of 2. The LSTM tag decoder has size 128. All dropout probabilities remain 0.5.

The CNN-biLSTM-CRF model trained on the English NER datasets has a character-level CNN with 30-dimensional character embeddings and a 1-D convolution layer with 30 filters, a kernel size of 3, a padding of 1 and a stride of 1. The biLSTM layer has size 300. All dropout probabilities are set to 0.5. For the Portuguese dataset, a grid search was employed to define the model’s best parameters. The chosen model had the same character-level CNN parameters as for the English datasets, while the biLSTM layer has size 256.
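The padding values above are the ones that make a stride-1 convolution length-preserving for the given kernel sizes, so every character or word keeps its own output position. The standard output-length formula for a 1-D convolution without dilation, \(L_{out} = \lfloor (L + 2p - k)/s \rfloor + 1\), makes this easy to check; the helper below is a small sketch, not part of either model.

```python
def conv1d_output_length(length: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Output length of a 1-D convolution without dilation."""
    return (length + 2 * padding - kernel) // stride + 1


# (kernel, stride, padding) of the convolution layers described above.
LAYERS = {
    "character-level CNN": (3, 1, 1),
    "word-level CNN block": (5, 1, 2),
}

for name, (kernel, stride, padding) in LAYERS.items():
    out = conv1d_output_length(12, kernel, stride, padding)
    print(f"{name}: 12 positions in, {out} out")  # 12 in both cases
```

Length preservation matters most for the word-level CNN, since each output position must still correspond to one token to be tagged.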

For both models, the pretrained embeddings used for the English datasets were the 100-dimensional GloVe embeddings pretrained on an English newswire corpus [10]. For the Portuguese dataset, the 300-dimensional GloVe embeddings pretrained on a multi-genre Portuguese corpus [2] were used.

To optimize the models during training, we used stochastic gradient descent on the cross-entropy loss for the CNN-CNN-LSTM and on the negative log-likelihood for the CNN-biLSTM-CRF. A constant momentum of 0.9 was used, and gradients were clipped at 5.0. The learning rate was 0.015 for both models trained on the English datasets. For the Portuguese dataset, learning rates of 0.010 and 0.0025 were selected for the CNN-CNN-LSTM and CNN-biLSTM-CRF models, respectively.
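The optimization settings above, together with the one-tenth learning rate for self-labeled data from Sect. 2.1, can be collected in a small configuration table. The keys and the helper name are hypothetical. In PyTorch such values would typically be passed to `torch.optim.SGD(params, lr=..., momentum=0.9)`, with `torch.nn.utils.clip_grad_norm_(params, 5.0)` if the clipping is norm-based, which is an assumption here.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class OptimConfig:
    learning_rate: float
    momentum: float = 0.9  # constant SGD momentum
    grad_clip: float = 5.0  # gradient clipping threshold


# (model, dataset language) -> settings as stated in the text.
OPTIM: Dict[Tuple[str, str], OptimConfig] = {
    ("CNN-CNN-LSTM", "en"): OptimConfig(learning_rate=0.015),
    ("CNN-biLSTM-CRF", "en"): OptimConfig(learning_rate=0.015),
    ("CNN-CNN-LSTM", "pt"): OptimConfig(learning_rate=0.010),
    ("CNN-biLSTM-CRF", "pt"): OptimConfig(learning_rate=0.0025),
}


def learning_rate(model: str, lang: str, self_labeled: bool = False) -> float:
    """Learning rate for a minibatch; self-labeled batches use one tenth."""
    lr = OPTIM[(model, lang)].learning_rate
    return lr / 10 if self_labeled else lr
```

Keeping the self-labeled rate as a derived value, rather than a separate setting, ensures the one-tenth ratio stays fixed if a base learning rate is retuned.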

Baselines and Evaluation Metrics

For the first experiment, the baseline for the proposed ASL algorithm with the DUTE strategy is the deep active learning algorithm proposed by Shen et al. [15], using the MNLP sampling function. One key change is that, unlike the original work, we do not employ early stopping based on a validation set; instead, we train the machine learning model for the full number of training epochs at each iteration of the DAL process. This change is justified because early stopping is applied mainly to avoid overfitting during training, and we did not observe overfitting in any of the experiments performed. F1-scores are computed on the test set at the end of the training process. The query budget, number of training epochs, and initial labeled set size are the same as those used by the ASL algorithm.

For the second experiment, the ablation study, we wish to investigate the impact of the proposed DUTE strategy. As such, the baseline will be the proposed ASL algorithm without the DUTE strategy.

A trained model’s performance is evaluated using the exact-match (i.e. span-based) micro-averaged F1-score on the test set.
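Exact-match scoring counts a predicted entity as correct only if both its boundaries and its type match a gold entity. A minimal sketch for IOBES-tagged sentences follows; malformed tag sequences (e.g. an I- tag without a preceding B- tag) are simply not counted as entities, and this handling, like the function names, is an assumption.

```python
from typing import List, Sequence, Set, Tuple

Span = Tuple[int, int, str]  # (first token, last token, entity type)


def iobes_spans(tags: Sequence[str]) -> Set[Span]:
    """Extract well-formed entity spans from one IOBES-tagged sentence."""
    spans: Set[Span] = set()
    open_start, open_label = None, None
    for i, tag in enumerate(tags):
        prefix, _, label = tag.partition("-")
        if prefix == "S":
            spans.add((i, i, label))
            open_start = None
        elif prefix == "B":
            open_start, open_label = i, label
        elif prefix == "E" and open_start is not None and label == open_label:
            spans.add((open_start, i, label))
            open_start = None
        elif prefix == "I" and open_start is not None and label == open_label:
            continue  # entity still open
        else:
            open_start = None  # "O" or a malformed tag closes any open entity
    return spans


def micro_f1(gold: List[Sequence[str]], pred: List[Sequence[str]]) -> float:
    """Span-level micro-averaged F1 over a corpus of tagged sentences."""
    tp = n_gold = n_pred = 0
    for gold_tags, pred_tags in zip(gold, pred):
        g, p = iobes_spans(gold_tags), iobes_spans(pred_tags)
        tp += len(g & p)
        n_gold += len(g)
        n_pred += len(p)
    if tp == 0:
        return 0.0
    precision, recall = tp / n_pred, tp / n_gold
    return 2 * precision * recall / (precision + recall)
```

Micro-averaging pools the counts over all entity types before computing precision and recall, so frequent types weigh more than rare ones. In the example `gold = [["B-PER", "E-PER", "O", "S-LOC"]]`, `pred = [["S-PER", "O", "O", "S-LOC"]]`, the truncated PER span earns no credit and the score is 0.5.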

Experiment Setup

All experiments reported were implemented with the PyTorch [9] framework and executed on the Google Colab platform with a Pro subscription. The available GPUs were the Tesla P100, Tesla V100, and Tesla T4, all with 16 GB of memory.

3 Results

The results for the experiments on the proposed deep active-self learning algorithm along with its baselines are presented in this section. We also show an ablation study conducted to investigate the impact of the DUTE strategy on the performance and execution time of the proposed ASL algorithm.

3.1 Deep Active-Self Learning with DUTE Strategy

Figure 2 shows the performance curves comparing the traditional deep active learning algorithms found in the literature with the proposed deep active-self learning algorithm with the DUTE strategy. Each experiment was executed six times with different initial labeled sets, and we report the mean performance curve for each. The same six initial labeled sets were used for all training algorithms and neural models on a given dataset. As in previous work [15, 16], the algorithms were executed until at least 50% of the training set had been annotated by the oracle. The proposed ASL algorithm achieved performance similar to the deep active learning baseline in most experiments.

One advantage of the active-self learning algorithm is its ability to self-label, potentially reducing human annotation effort. Figure 3 reports the performance of the self-labeling process throughout the active-self learning algorithm. The figures show the percentage of self-labeled samples against the percentage of actively labeled samples (i.e. human annotation), distinguishing between correctly and wrongly self-labeled tokens. In general, the trained model predicted most tokens correctly at all iterations of the algorithm. Across all experiments, the highest percentage of tokens mislabeled by the trained model in any single iteration of the ASL process was \(1.31\%\). Even though most tokens were correctly self-labeled, self-training had little observed impact on the trained model’s performance. This may be because NER datasets are imbalanced, with most tokens not being part of a named entity, and because entity-level annotations are unreliable in earlier rounds.

Comparison between the correctly and wrongly self-annotated samples, at token level, for the experiments performed on all three datasets. Note that the Y-axis on each graph presents the percentage of the whole training set that has been self-labeled by the trained model in a given iteration of the ASL algorithm, while the X-axis presents the percentage of the whole training set that has been labeled by the human annotator.

3.2 Ablation Study

Next, we conduct an ablation study to investigate the impact of the proposed DUTE strategy on the performance and execution time of the proposed deep active-self learning algorithm. Unlike the previous experiment, in this ablation study the algorithms were executed until at least 30% of the training set had been annotated by the oracle. Figure 4 compares the performance of the ASL algorithm with and without the DUTE strategy, while Table 2 reports the mean and standard deviation of the simulations’ execution times. In all experiments conducted, the DUTE strategy significantly reduced execution time while maintaining similar model performance.

4 Conclusion

The experiments show that the proposed deep active-self learning algorithm is capable of reliably self-annotating unlabeled samples. However, self-training has not shown a significant impact on the model’s performance. The proposed DUTE strategy has also shown promising results, significantly reducing the execution time of simulations of the proposed algorithm with little impact on the model’s performance, and without relying on a validation set.

One of the main limitations of the proposed ASL algorithm remains its reliance on labeled data for hyperparameter tuning. While the DUTE strategy avoids using validation sets throughout the ASL process, we still used training and validation sets to tune the hyperparameters of the neural models and others, such as learning rates and batch sizes. This remains one of the biggest obstacles to using active learning algorithms in real-world low-resource scenarios.

We also observed that the self-training technique employed here was not able to improve the model’s performance. Future work may investigate more sophisticated self-training techniques, such as consistency regularization, which is often employed by current state-of-the-art semi-supervised methods [1]. These more recent techniques also tend to train on soft targets instead of hard targets (i.e. one-hot encoded vectors) [1, 8], in a setup similar to knowledge distillation [3].

References

[1] Clark, K., Luong, M.T., Manning, C.D., Le, Q.: Semi-supervised sequence modeling with cross-view training. In: Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, pp. 1914–1925. Association for Computational Linguistics, Brussels, October–November 2018. https://doi.org/10.18653/v1/D18-1217

[2] Hartmann, N.S., Fonseca, E.R., Shulby, C.D., Treviso, M.V., Rodrigues, J.S., Aluísio, S.M.: Portuguese word embeddings: evaluating on word analogies and natural language tasks. In: Anais do XI Simpósio Brasileiro de Tecnologia da Informação e da Linguagem Humana, pp. 122–131. SBC, Porto Alegre, RS, Brasil (2017). https://sol.sbc.org.br/index.php/stil/article/view/4008

[3] Hinton, G., Vinyals, O., Dean, J.: Distilling the knowledge in a neural network (2015)

[4] Houlsby, N., Huszár, F., Ghahramani, Z., Lengyel, M.: Bayesian active learning for classification and preference learning (2011)

[5] Lample, G., Ballesteros, M., Subramanian, S., Kawakami, K., Dyer, C.: Neural architectures for named entity recognition. In: Proceedings of the 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pp. 260–270. Association for Computational Linguistics, San Diego, June 2016. https://doi.org/10.18653/v1/N16-1030, https://www.aclweb.org/anthology/N16-1030

[6] Lin, Y., Sun, C., Xiaolong, W., Xuan, W.: Combining self learning and active learning for Chinese named entity recognition. J. Softw. 5, May 2010. https://doi.org/10.4304/jsw.5.5.530-537

[7] Ma, X., Hovy, E.: End-to-end sequence labeling via bi-directional LSTM-CNNs-CRF. In: Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 1064–1074. Association for Computational Linguistics, Berlin, August 2016. https://doi.org/10.18653/v1/P16-1101

[8] Miyato, T., Dai, A.M., Goodfellow, I.: Adversarial training methods for semi-supervised text classification. In: International Conference on Learning Representations (ICLR) (2017)

[9] Paszke, A., et al.: PyTorch: an imperative style, high-performance deep learning library. In: Wallach, H., Larochelle, H., Beygelzimer, A., d’Alché-Buc, F., Fox, E., Garnett, R. (eds.) Advances in Neural Information Processing Systems 32, pp. 8024–8035. Curran Associates, Inc. (2019). http://papers.neurips.cc/paper/9015-pytorch-an-imperative-style-high-performance-deep-learning-library.pdf

[10] Pennington, J., Socher, R., Manning, C.: GloVe: global vectors for word representation. In: Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP), pp. 1532–1543. Association for Computational Linguistics, October 2014. https://doi.org/10.3115/v1/D14-1162

[11] Pradhan, S., et al.: Towards robust linguistic analysis using OntoNotes. In: Proceedings of the Seventeenth Conference on Computational Natural Language Learning, pp. 143–152. Association for Computational Linguistics, Sofia, August 2013

[12] Ratinov, L., Roth, D.: Design challenges and misconceptions in named entity recognition. In: Proceedings of the Thirteenth Conference on Computational Natural Language Learning (CoNLL-2009), pp. 147–155. Association for Computational Linguistics, Boulder, June 2009. https://www.aclweb.org/anthology/W09-1119

[13] Sang, E.F.T.K., Meulder, F.D.: Introduction to the CoNLL-2003 shared task: Language-independent named entity recognition. In: Proceedings of the Seventh Conference on Natural Language Learning at HLT-NAACL 2003, pp. 142–147 (2003)

[14] Settles, B., Craven, M.: An analysis of active learning strategies for sequence labeling tasks. In: Proceedings of the Conference on Empirical Methods in Natural Language Processing, EMNLP 2008, pp. 1070–1079. Association for Computational Linguistics, USA (2008)

[15] Shen, Y., Yun, H., Lipton, Z., Kronrod, Y., Anandkumar, A.: Deep active learning for named entity recognition. In: Proceedings of the 2nd Workshop on Representation Learning for NLP, pp. 252–256. Association for Computational Linguistics, Vancouver, August 2017. https://doi.org/10.18653/v1/W17-2630

[16] Siddhant, A., Lipton, Z.C.: Deep Bayesian active learning for natural language processing: results of a large-scale empirical study. In: Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, pp. 2904–2909. Association for Computational Linguistics, Brussels, October–November 2018. https://doi.org/10.18653/v1/D18-1318

[17] Tran, V.C., Nguyen, N.T., Fujita, H., Hoang, D.T., Hwang, D.: A combination of active learning and self-learning for named entity recognition on twitter using conditional random fields. Knowl.-Based Syst. 132, 179–187 (2017). https://doi.org/10.1016/j.knosys.2017.06.023

[18] Zhu, J., Wang, H., Hovy, E., Ma, M.: Confidence-based stopping criteria for active learning for data annotation. ACM Trans. Speech Lang. Process. 6(3), April 2010. https://doi.org/10.1145/1753783.1753784

Acknowledgements

The authors are supported by the Fundação de Apoio à Pesquisa do Distrito Federal (FAP-DF) as members of the Knowledge Extraction from Documents of Legal content (KnEDLe) project from the University of Brasilia.

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Neto, J.R.C.S.A.V.S., Faleiros, T.d.P. (2021). Deep Active-Self Learning Applied to Named Entity Recognition. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science, vol. 13074. Springer, Cham. https://doi.org/10.1007/978-3-030-91699-2_28

DOI: https://doi.org/10.1007/978-3-030-91699-2_28

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91698-5

Online ISBN: 978-3-030-91699-2

eBook Packages: Computer Science, Computer Science (R0)