Abstract

Reviews are valuable sources of information to support the decision-making process. Therefore, classifying reviews according to their helpfulness is of paramount importance to facilitate access to truly informative content. In this context, previous studies have unveiled several aspects and architectures that are beneficial for the task of perceived review helpfulness prediction. The present work further investigates the influence of text specificity, defined as the level of detail conveyed in a text, on the same task. First, we explore an unsupervised domain adaptation approach for assigning text specificity scores to sentences from product reviews, and we propose an evaluation measure named Specificity Prediction Evaluation (SPE) in order to achieve more reliable specificity predictions. Then, we present domain-oriented guidelines on how to incorporate, into a CNN architecture, either hand-crafted features based on text specificity or the text specificity prediction task as an auxiliary task in a multitask learning setting. In the experiments, the perceived helpfulness classification models embodied with text specificity showed significantly higher precision than a popular SVM baseline.

1 Introduction

Reviews written by consumers on e-commerce platforms are valuable resources in the decision-making process of product purchase. Nowadays, it is hard to imagine buying a product without reading at least some reviews first. Moreover, online product reviews are an essential part of the acquisition of business intelligence and market advantage for retailers [17, 22], since they can retrieve informative evaluations about several aspects of a product.

Due to the great number of reviews constantly posted, many websites provide a voting system through which consumers can express whether they perceive a review as helpful or not. These votes are frequently summarized to the users with a message next to the review, similar to “H of T people found this helpful”. This message means that from T people who evaluated this comment, H found the review helpful.

From the helpfulness votes, the perceived helpfulness of a review can be derived, which is characterized as the majority impression of the consumers about the review utility during the purchase decisions. The name “perceived helpfulness” is used to emphasize that we are tackling a definition obtained from the helpfulness votes rather than some expert judgment [1, 12, 17]. Given this concept, the helpfulness score of a review is calculated as \(\theta = \frac{H}{T}\). Having all posted comments with a helpfulness score assigned is beneficial for consumers and merchants in several ways, including as a dimension to rank them.

Since most of the reviews do not receive any votes at all [13], especially for less popular and recently released products, several reviews cannot be graded with a perceived helpfulness score. Therefore, this scenario has been fruitful for researchers to study what makes a review helpful and how to build intelligent systems that can distinguish reviews with different levels of helpfulness. These studies have mainly concentrated their efforts on feature engineering since several complex factors can contribute to the performance of a model in the helpfulness prediction task. Subjectivity [1, 6, 8] and readability [6, 7] are some of the many aspects that have been investigated in the last years.

More recently, hand-crafted features based on text specificity, which is defined as the level of details about a subject expressed in a text [14], also proved to be interesting attributes for the classification of reviews according to their helpfulness [12]. Text specificity has been widely used to assess the writing quality of a text. In the field of automatically generated summaries, [15] argued that the quantity of specific and general sentences, as well as how sequences of them are disposed, affect the writing quality of a text.

The quality of a text is also related to how easy it is to understand its content, i.e. what is its level of readability. This aspect has also been considered important for the helpfulness prediction task [6, 7]. Based on the aforementioned points, we argue that text specificity may play an important role in helpfulness prediction.

The first part of our proposal aims to combine the specificity based features proposed in [12] into a well known Convolutional Neural Network (CNN) architecture [9]. CNNs have been successfully employed in some earlier helpfulness prediction studies by reducing the need for labor-intensive feature engineering and by achieving good performance [1, 3, 4, 20]. Secondly, we propose to integrate the specificity prediction as an auxiliary task in a Multitask learning (MTL) version of the same CNN architecture used in the first part. MTL can be used to improve model generalization and learning efficiency by sharing underlying common structures between complementary tasks [2]. The multitask paradigm has recently also shown promising results in the helpfulness prediction task [4].

The specificity based features are derived from the specificity degree, which is assigned to each review using an unsupervised domain adaptation technique [10]. Although [12] proposed these features, the study did not discuss how different experimental setups, beyond the recommended by the authors of the domain adaptation method, can affect the specificity degree predictions of online product reviews. Therefore, the present study also aims to bridge this gap by empirically finding more suitable experimental settings for our domains. To evaluate the specificity scores predicted in this unsupervised context, we also propose a measure named Specificity Prediction Evaluation (SPE), which is calculated as the average length of general sentences over the average length of specific sentences.

In a nutshell, our contribution is three-fold: 1) a better understanding of how to obtain more reliable specificity prediction results in the domain of product reviews; 2) a method for the evaluation of specificity predictions of unlabeled sentences; and 3) domain-oriented guidelines on how to incorporate the specificity aspect into a CNN architecture. The experiments showed that the CNN-based models embodied with text specificity could achieve significantly higher precision than a popular SVM baseline.

The rest of this paper is structured as follows. Section 2 presents some of the related studies on perceived helpfulness prediction. Section 3 explains how the review specificity predictions were obtained and how they were evaluated using the SPE. Our proposed perceived helpfulness classification model using a CNN architecture and text specificity is presented in Sect. 4. Details about the experiments are given in Sect. 5 and their results are discussed in Sect. 6. Section 7 summarizes the concluding remarks and future work.

2 Related Work

One of the first studies to investigate the helpfulness prediction task is in [8]. This work proposed a regression task in which the target was the helpfulness score based on the votes received by the review. One of its main goals was to analyze the performance of five categories of features for this task: structural, lexical, syntactic, semantic, and metadata. They reported that the best combination of features was the length of the review, the lemmatized unigrams, and the star rating score given by the review author to the product.

The effect of review length on perceived helpfulness prediction has also been much explored in past research [6, 7, 8, 17]. According to [8], in the reviews used in their experiments, the average helpfulness score among the longest reviews was 0.82, while the average score among the shortest ones was only 0.23. [17] also empirically demonstrated that reviews with more words have a higher helpfulness degree. Therefore, researchers have argued that longer reviews are likely to contain more information and, consequently, can influence more readers to vote them as “helpful” [18].

Besides review length, how well the text is organized and written, which facilitates the understanding of its content, has also been considered an important factor. In [7], the behavior of review length as a determinant of review helpfulness was further investigated, revealing some factors that moderate the relation between length and helpfulness. According to their experiments, review length has a positive influence on helpfulness only when the readability is high. Additionally, [6] reported that not only does review readability have an impact on review helpfulness, but it also influences product sales. In their experiments, for some types of products, having reviews with higher readability scores is related to higher sales of such products. This highlights the importance of studying the influence of factors related to writing quality on review helpfulness.

Based on the previous studies, [12] started to investigate the influence of text specificity in the perceived helpfulness context. This work reported that combining lemmatized unigrams with three hand-crafted features derived from text specificity either outperformed or performed similarly to models using only the unigrams in more than 80% of the results. Conversely, using only the features based on sentence specificity achieved the lowest results. The ideas developed in that work have inspired the present study and, therefore, the features proposed by its authors are used to evaluate our proposal to incorporate them into a CNN architecture.

Several machine learning algorithms have been used to model the helpfulness prediction task, including CNNs. [3] proposed a novel end-to-end cross-domain architecture for helpfulness prediction using word-level and character-based representations. Their CNN architecture is based on the one designed by [9] which they further adjusted with auxiliary domain discriminators to transfer knowledge between domains, using large datasets as the source domains and small ones as the target domains. In their experiments, the CNN models yielded better results than known baselines which used hand-crafted features.

Likewise, [1] proposed a helpfulness classification model by combining convolutional layers and Gated Recurrent Units. Instead of using embeddings, they selected 100 linguistic and meta data features, based on prior work, to form the input review representation. Their approach outperformed other classification methods by 0.04 and 0.02 in terms of F1-measure and accuracy, respectively.

Due to the successful usage of MTL on a wide range of tasks, [4] introduced an end-to-end MTL architecture to learn at the same time the helpfulness classification of online product reviews and the star rating given by the reviewer to the product. The architecture consists of convolutional layers, equipped with an attention mechanism, which receive as input the concatenation of word embeddings and their character-level encoding. The main purpose of this model is to learn the helpfulness classification task (main task) by using the hints learned from the star rating prediction task (auxiliary task). Using Amazon reviews, the study empirically validated that the model achieved superior performance to comparable single-task learning (STL) models, as well as to prior techniques using hand-designed features.

3 Specificity Prediction on Sentences from Reviews

The text specificity prediction task aims to build machine learning models that can learn, from examples, to assign a specificity degree to a text representation, usually at the sentence level [10, 11, 14, 15]. Even though different approaches have been proposed in the last years, the majority of them have tackled the problem in a supervised way, which requires extensive manual annotation. To overcome this issue, a robust unsupervised domain adaptation solution was proposed in [10] to predict the specificity score of unlabeled domains.

The unsupervised domain adaptation technique consists of using labeled sentences from a source domain to assign reliable specificity scores (between 0 and 1) to unlabeled sentences from a different target domain, without access to any labeled example from the target domain. [10] reported good generalization results when evaluating their approach with social media posts, restaurant reviews, and movie reviews as the target domains. The source data is a publicly available dataset with about 4,300 labeled news sentences [14].

This approach is based on the self-ensembling of a single base model, which combines hand-crafted features (sentence length, average word length, percentage of strongly subjective words, etc.), word embeddings and BiLSTM (Bidirectional Long Short-term Memory) to encode the input sentences. Because of the good performance of this technique on review domains (restaurant and movie reviews), it was employed by the present work to infer the specificity degree of the unlabeled sentences from our product reviews.

Although this solution was also exploited in [12] to predict the specificity of reviews’ sentences, the study did not report any broader analysis about these experiments. In summary, for each dataset (for example, reviews about books), they have sampled 4,300 sentences as the training data (the same amount of sentences of the source news data) and trained the model for 30 epochs, which is the same value that [10] reported for their best results. However, during our experiments, we detected more robust specificity degrees when increasing the training set and the number of epochs. The best settings regarding these two aspects for our domains are further discussed in Sect. 6, along with more details about the experiments.

One of the features used in the base model introduced in [10] is the sentence length. Their labeled and unlabeled datasets (source and target data) have sentences with lengths varying between 15 and 23 tokens, on average. Therefore, despite coming from different domains, the sentences used in their experiments have close lengths. Likewise, the datasets used in our experiments contain reviews with sentences varying, on average, between 17 and 22 tokens.

However, while the longest sentence from the target domains used by [10] has 202 tokens, there are many much longer sentences within our datasets. This may be caused by noise in the reviews, which, even after several preprocessing techniques, may be harming the sentence splitting process. Still, we assume, for now, that this is an intrinsic characteristic of these datasets. We noticed that this aspect was negatively affecting the specificity prediction results when training for few epochs or with few sentences. In many cases, the longest sentences were scored with the lowest specificity degrees and the shortest sentences with the highest. After trying different experimental settings, the models could achieve more robust results by increasing the training size and the number of epochs.

Given the problems observed in the experiments, to systematically choose a trained model that could designate the most reliable specificity scores for each target domain of product reviews, we propose a measure called Specificity Prediction Evaluation (SPE). Let \(\sigma \) be the specificity degree of a sentence; general sentences have \(\sigma < 0.5\) and specific sentences have \(\sigma \ge 0.5\). SPE is based on one assumption: on average, reliable specific sentences tend to be longer than reliable general sentences [11]. This assumption is supported by related past studies and by examining the datasets used in the experiments performed in [10]. Therefore, SPE is calculated as follows:

\[SPE = \frac{L_{avg}(G)}{L_{avg}(S)} \qquad (1)\]

where G is the set of general sentences, S is the set of specific sentences, |G| denotes the quantity of general sentences, |S| refers to the quantity of specific sentences, \(L_{avg}(G)\) denotes the average length of general sentences, and \(L_{avg}(S)\) is the average length of specific sentences.

We assume \(SPE < 1\) is a satisfactory value because, in this case, specific sentences are on average longer than general ones. In fact, the datasets from [10] have SPE values varying between 0.52 and 0.74. In addition, it is important to highlight that text specificity is composed of several other text characteristics beyond length. For that reason, SPE is a first effort to evaluate the specificity scores without having labeled sentences and, in future endeavors, it should be applied along with other evaluation approaches.
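As an illustration of the measure, the following minimal Python sketch computes SPE from a list of sentences and their predicted specificity degrees. Whitespace tokenization and the function name `spe` are assumptions made for this example only; the experiments in this paper relied on spaCy for tokenization.

```python
from statistics import mean


def spe(sentences, scores, threshold=0.5):
    """Average length of general sentences over average length of specific ones.

    Sentence length is counted in whitespace-separated tokens (an assumption).
    """
    general = [len(s.split()) for s, sc in zip(sentences, scores) if sc < threshold]
    specific = [len(s.split()) for s, sc in zip(sentences, scores) if sc >= threshold]
    if not general or not specific:
        raise ValueError("SPE needs at least one general and one specific sentence")
    return mean(general) / mean(specific)


# Toy example: two short general sentences and one long specific sentence.
sentences = [
    "Great product",
    "It works",
    "The battery lasts about ten hours with the screen at full brightness",
]
scores = [0.2, 0.4, 0.9]
value = spe(sentences, scores)  # general average 2 tokens, specific average 12 tokens
```

In this toy case the value is well below 1, which is the situation considered satisfactory above.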

After the improvements performed to get more reliable specificity predictions, we extracted the same three hand-crafted features proposed in [12]: SpecificityDegree, the review-level specificity degree; SpecificSents%, the percentage of specific sentences in the review; and SpecificityBalance, which is a balance measure between the number of specific and general sentences in the review. The values of all these features lie in the range [0, 1].
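A possible way to derive two of these features from sentence-level scores is sketched below. It assumes that the review-level degree is the mean of the sentence scores (as stated in Sect. 4) and that a sentence counts as specific when its score is at least 0.5 (as in Sect. 3). SpecificityBalance is left out because its exact formula is defined in [12] and not reproduced here; the function name is hypothetical.

```python
def specificity_features(sentence_scores, threshold=0.5):
    """Return review-level specificity features from sentence-level scores.

    SpecificityBalance is intentionally omitted: its formula is not given in
    this text, so it is not guessed here.
    """
    if not sentence_scores:
        raise ValueError("a review needs at least one scored sentence")
    n = len(sentence_scores)
    degree = sum(sentence_scores) / n  # mean sentence specificity
    specific_share = sum(1 for s in sentence_scores if s >= threshold) / n
    return {"SpecificityDegree": degree, "SpecificSents%": specific_share}


features = specificity_features([0.2, 0.8, 0.6, 0.4])
```

Both values stay in [0, 1] because the sentence scores themselves lie in that range.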

The next section explains how these features were incorporated into a CNN architecture for the perceived helpfulness classification task.

4 Perceived Helpfulness Classification Model

For a review r, let \(H_r\) be the number of helpful votes received by the review and \(T_r\) be the total number of votes given to r. The helpfulness ratio of the review r is calculated as \(\theta _r = \frac{H_r}{T_r}\). To transform the helpfulness ratio into binary labels, we set the threshold value to 0.5, which is a common value used in related studies [4]. If \(\theta _r > 0.5\), r is categorized as “very helpful”; otherwise, if \(\theta _r \le 0.5\), r is said to be “poorly helpful”. In summary, the task consists of classifying reviews into two categories: “very helpful” and “poorly helpful”.
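The labeling rule can be written as a short function. This is only a sketch; the function name and the input validation are assumptions added for the example.

```python
def helpfulness_label(helpful_votes, total_votes, threshold=0.5):
    """Map vote counts to the binary label used in the classification task."""
    if total_votes <= 0 or helpful_votes > total_votes:
        raise ValueError("invalid vote counts")
    theta = helpful_votes / total_votes  # helpfulness ratio
    return "very helpful" if theta > threshold else "poorly helpful"


label_a = helpfulness_label(8, 10)   # theta = 0.8
label_b = helpfulness_label(5, 10)   # theta = 0.5, not strictly above the threshold
```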

Based on the successful application of CNN architectures on previous related works [1, 3, 4, 20], this type of neural network was employed here to validate the influence of text specificity on perceived helpfulness. The usage of convolutional and pooling layers is known to be successful in several text classification tasks when there are strong local clues (sequence of tokens) that could appear in different locations of the texts. For example, in the context of product reviews, expressions such as “I did not like” or “I recommend” could be frequent sequences that are important to distinguish reviews with different levels of helpfulness.

Our helpfulness classification models have a base CNN classification architecture which is the single channel architecture proposed in [9] for sentence classification. This architecture has achieved good performance across many different tasks, including sentiment classification. It consists of a single convolutional layer, with 3 types of filter of kernel sizes 3, 4, and 5 and 100 filters each, followed by a max-pooling layer. Each filter processes all the possible windows of words, according to its kernel size, from the input review representation, and generates a feature map. Then, the max-pooling operation captures, for each feature map, the feature with the highest value, which is presumably the most important feature in the map. Finally, the learned pooled vector is passed to a fully connected layer that computes the model output.
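To make the convolution and max-pooling steps concrete, the sketch below implements them in plain Python on a toy input. It is not the implementation used in the experiments (which relied on Keras): the weights are random, only two filters per kernel size are used instead of 100, and all names are hypothetical.

```python
import random


def conv1d_valid(embedded, kernel, bias=0.0):
    """Apply one filter to every window of k consecutive word vectors.

    Valid padding and stride 1 are used, followed by a ReLU activation,
    so a review of length L yields a feature map of length L - k + 1.
    """
    k = len(kernel)
    feature_map = []
    for start in range(len(embedded) - k + 1):
        window = embedded[start:start + k]
        activation = bias + sum(
            w * x
            for w_row, x_row in zip(kernel, window)
            for w, x in zip(w_row, x_row)
        )
        feature_map.append(max(0.0, activation))
    return feature_map


def pooled_vector(embedded, filters):
    """Max-over-time pooling: keep the highest value of each feature map."""
    return [max(conv1d_valid(embedded, f)) for f in filters]


rng = random.Random(42)
L, D = 10, 4  # toy review length and embedding dimension
review = [[rng.uniform(-1, 1) for _ in range(D)] for _ in range(L)]
filters = [
    [[rng.uniform(-1, 1) for _ in range(D)] for _ in range(k)]
    for k in (3, 4, 5)
    for _ in range(2)  # the architecture described above uses 100 per size
]
vector = pooled_vector(review, filters)  # one value per filter
```

With 100 filters for each of the three kernel sizes, the same procedure would produce a pooled vector with 300 entries.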

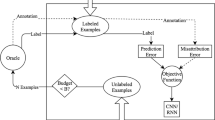

Figure 1 illustrates a review representation at different steps of the classification process using this CNN design. The input representation is an \(L \times D\) matrix, where D is the dimension of the embedding vectors used to represent each word in the review and L is the text length, which is a fixed value for all the reviews. Truncation and zero padding are applied to the reviews when necessary.

Fig. 1. The input, outputs, and intermediate representations in the CNN architecture proposed in [9] and adjusted for our proposal.
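The fixed input length can be obtained with a helper such as the one below. Whether padding and truncation are applied at the beginning or at the end of the review is not specified here, so the choice of operating on the tail is an assumption, as is the function name.

```python
def pad_or_truncate(token_ids, max_len, pad_id=0):
    """Return a sequence of exactly max_len ids.

    Longer reviews lose their tail and shorter ones are zero-padded at the
    end (both choices are assumptions for this sketch).
    """
    clipped = token_ids[:max_len]
    return clipped + [pad_id] * (max_len - len(clipped))


short_review = pad_or_truncate([5, 6, 7], 5)
long_review = pad_or_truncate([1, 2, 3, 4, 5, 6], 4)
```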

To incorporate the text specificity aspect into the base CNN structure, we propose two approaches: i) using the specificity based features, and ii) jointly learning the specificity prediction and the helpfulness classification task in an MTL setting. Concerning the features, they are simply concatenated to the pooled vector. Then, the classification output is computed using not only the CNN learned representation but also the specificity hand-crafted features.

Regarding the MTL scheme, the goal of the model is to learn all the network parameters by minimizing the joint loss presented in Eq. 2. Here the weights \(\lambda _h\) and \(\lambda _s\) were empirically set to 1 after some initial experiments.

\[\mathcal {L} = \lambda _h \mathcal {L}_h(\hat{y}_h, y_h) + \lambda _s \mathcal {L}_s(\hat{y}_s, y_s) \qquad (2)\]

where \(\mathcal {L}_h(\hat{y}_h, y_h)\) is the loss of the helpfulness classification task and \(\mathcal {L}_s(\hat{y}_s, y_s)\) is the loss of the specificity prediction task.
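The weighted combination of the two task losses can be sketched as follows. The sketch assumes that the joint loss is the weighted sum of the two terms and uses the loss functions named in Sect. 5.2 (binary cross entropy for helpfulness, mean squared error for specificity); the function names and the clipping constant are assumptions.

```python
import math


def binary_cross_entropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross entropy; predictions are clipped to avoid log(0)."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1 - eps)
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(y_true)


def mean_squared_error(y_true, y_pred):
    """Mean squared error between true and predicted specificity scores."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def joint_loss(y_h, p_h, y_s, p_s, lambda_h=1.0, lambda_s=1.0):
    """Weighted sum of the helpfulness loss and the specificity loss."""
    return (lambda_h * binary_cross_entropy(y_h, p_h)
            + lambda_s * mean_squared_error(y_s, p_s))


# Perfect specificity predictions, so only the helpfulness term contributes.
loss = joint_loss([1, 0], [0.9, 0.1], [0.5, 0.7], [0.5, 0.7])
```

Setting both weights to 1, as done in the experiments, gives the two tasks equal influence on the shared parameters.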

Review specificity prediction is a regression task that consists of learning to predict a specificity score between 0 and 1 for each review. We tackled this problem as a supervised task by using, as the true labels, the review specificity degrees obtained with the approach explained in Sect. 3. After assigning specificity scores for each sentence in the review, the review specificity degree is calculated as the average sentence specificity degree.

5 Experimental Setup

5.1 Data and Preprocessing

The experiments were performed with public datasets of Amazon reviews written in English from 9 different product categories [16]. Each dataset originally contains at least five reviews per product and per user. We decided to use Amazon reviews in the experiments to be consistent with most of the studies from the related literature [1, 3, 4, 5, 6, 7, 8, 12, 17, 20]. Table 1 shows some relevant characteristics of these datasets.

Before using these collections as inputs to our classification models, some cleaning procedures were applied to reduce the negative effect of noisy data in the experiments. This preprocessing step is especially important to improve tokenization and the splitting of comments into sentences, which were done here with the help of the spaCy library. The procedures employed to clean the datasets and to improve sentence boundaries are listed below:

- Replace URLs by the placeholder token “URL”;
- Remove ASCII symbols, double quotes, simple emoticons (e.g. “=)”), and empty brackets (e.g. “()”);
- Replace ellipsis by a single period mark (“...” \(\longrightarrow \) “.”);
- Add a blank space after every comma or period mark when it was followed by a word, a special symbol or a number (if the token is a number, the token before the comma or the period must not be a number as well);
- Replace dots by hyphens on enumerations (e.g.: “1. item” \(\longrightarrow \) “1 - item”).
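The cleaning steps listed above could be approximated with regular expressions as in the sketch below. The patterns are assumptions written for illustration and are not the exact rules used in the experiments; in particular, the symbol removal step is omitted, the emoticon pattern covers only a few simple cases, enumerations are only detected at the start of a line, and the final whitespace collapsing is a convenience added here.

```python
import re

URL_RE = re.compile(r"https?://\S+|www\.\S+")
EMOTICON_RE = re.compile(r"[=:;]-?[()DP](?!\w)")        # e.g. "=)" or ":-D"
EMPTY_BRACKETS_RE = re.compile(r"\(\s*\)|\[\s*\]|\{\s*\}")
ELLIPSIS_RE = re.compile(r"\.{2,}|…")
ENUM_RE = re.compile(r"(?m)^[ \t]*(\d+)\.[ \t]+")       # "1. item" at line start
PUNCT_BEFORE_TEXT_RE = re.compile(r"([.,])(?=[^\s\d.,])")
PUNCT_BEFORE_DIGIT_RE = re.compile(r"(?<!\d)([.,])(?=\d)")


def clean_review(text):
    """Apply the cleaning steps in an order that keeps URLs and decimals intact."""
    text = URL_RE.sub("URL", text)
    text = text.replace('"', "").replace("“", "").replace("”", "")
    text = EMOTICON_RE.sub("", text)
    text = EMPTY_BRACKETS_RE.sub("", text)
    text = ELLIPSIS_RE.sub(".", text)
    text = ENUM_RE.sub(r"\1 - ", text)
    # Insert a space after "," or "." unless it sits between two digits.
    text = PUNCT_BEFORE_TEXT_RE.sub(r"\1 ", text)
    text = PUNCT_BEFORE_DIGIT_RE.sub(r"\1 ", text)
    return re.sub(r"[ \t]{2,}", " ", text).strip()


cleaned = clean_review("Great phone...works fine,see http://example.com/x =)")
```

URLs are replaced first so that the dots inside them are not affected by the later punctuation rules.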

Besides the previous steps, invalid reviews with more helpful votes than the total amount of votes (\(H > T\)) were discarded. Reviews with \(T = 0\) were also removed because the helpfulness score \(\theta \) cannot be calculated for such reviews.

Regarding the datasets with the largest sizes in their original form (Books, CDs and Vinyl, Electronics, and Movies and TV), reviews with \(T < 5\) were removed in order to increase the confidence in the perceived helpfulness scores, as more people have evaluated the selected reviews. This type of cut-off is a known approach among prior related studies [8]. Additionally, reviews with \(0.3< \theta < 0.7\) were removed to avoid dealing with reviews that received almost the same number of helpful and not helpful votes, i.e. the reviews with a more uncertain perception of helpfulness [12].
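The filtering criteria can be summarized in a single predicate, sketched below. The function name and default arguments are assumptions; since the text applies the minimum number of votes only to the four largest datasets, `min_votes` would be set to 1 for the remaining ones.

```python
def keep_review(helpful, total, min_votes=5, low=0.3, high=0.7):
    """Return True when a review passes all the filtering criteria."""
    if total == 0 or helpful > total:
        return False          # score undefined or invalid vote counts
    if total < min_votes:
        return False          # too few votes to trust the score
    theta = helpful / total
    return not (low < theta < high)  # drop the uncertain middle range


votes = [(6, 5), (0, 0), (3, 4), (5, 10), (9, 10), (1, 10)]
kept = [v for v in votes if keep_review(*v)]
```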

These Amazon datasets are known to be highly imbalanced [12, 13], with “very helpful” being the majority class. Handling the challenges related to learning in imbalanced scenarios is out of the scope of the present work, so data balancing was applied by undersampling the majority class.
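A simple form of this balancing step is random undersampling, sketched below. Random selection is an assumption, since the sampling strategy is not detailed here; the seed of 42 follows the value reported for the experiments, and the function name is hypothetical.

```python
import random


def undersample(samples, labels, seed=42):
    """Randomly reduce every class to the size of the smallest class."""
    rng = random.Random(seed)
    by_class = {}
    for sample, label in zip(samples, labels):
        by_class.setdefault(label, []).append(sample)
    target = min(len(items) for items in by_class.values())
    balanced = []
    for label, items in by_class.items():
        for sample in rng.sample(items, target):
            balanced.append((sample, label))
    rng.shuffle(balanced)  # avoid blocks of identical labels
    return balanced


balanced = undersample(
    list(range(10)),
    ["very helpful"] * 7 + ["poorly helpful"] * 3,
)
```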

5.2 Training

Even though all collections have product reviews from Amazon, each dataset was analyzed as a distinct domain because past studies showed that the product type influences how people perceive helpfulness [17]. Therefore, in the experiments, the models were independently trained for each domain. The code was developed with the aid of the Keras, TensorFlow, and scikit-learn libraries. All the random seeds were set to 42 during the experiments.

The results reported in Sect. 6 were obtained using stratified 5-fold cross-validation. The classifiers embodied with the text specificity aspect were also statistically compared against two baselines with the non-parametric Friedman test, considering a significance level of \(p < 0.05\), and the post-hoc Nemenyi test.

Regarding the CNN hyperparameters, valid padding was performed and the stride was set to 1. In comparison to the original proposal [9], we used much less regularization (a dropout of 0.1 in the penultimate layer and l2-norm regularization of 0.01) because of performance improvements observed in some initial experiments. Consequently, the number of training epochs was decreased to two, since the models would overfit beyond this point. Training was performed with the Adam optimizer using \(\eta = 0.001\). For the helpfulness classification task, the Binary Cross Entropy loss and the ReLU activation function were used. For the auxiliary specificity prediction task, Mean Squared Error and sigmoid were used. ReLU was also the chosen activation in the convolutional layers.

For every CNN-based model, similar to experiments performed in [4, 9], we tested two ways of initializing the 300-dimensional embeddings: randomly and by using pretrained GloVe [19] embeddings which were trained on a large 42 billion token corpus. In either approach, the word embeddings were fine-tuned during training time. The dimension size of 300 was defined because of its good performance when using CNN on other text classification tasks [21].

Due to the broad range of review sizes among the Amazon datasets, two maximum input length values were also tested for each domain: the mean review length and 512 tokens. Other values were tested as well, but we noticed more interesting results when comparing these two values. The batch size was set to 32 when using the mean length and to 16 when using a length of 512 due to memory constraints.

5.3 Model Variations

The following models were compared during our experiments:

- Baselines:
- CNN combined with text specificity (CNN+SPECIFICITY):
  - MTL CNN: The multitask CNN model, without the specificity features.
  - SpecificityDegree: The CNN model embodied with the SpecificityDegree feature.
  - SpecificSents%: The CNN model embodied with the SpecificSents% feature.
  - SpecificityBalance: The CNN model embodied with the SpecificityBalance feature.
  - All features: The CNN model embodied with all the three features (SpecificityDegree, SpecificSents%, SpecificityBalance).

With respect to the models SpecificityDegree, SpecificSents%, SpecificityBalance, and All features, both STL and MTL architectures were tested.

6 Results and Discussion

6.1 Perceived Helpfulness Classification

Table 2 summarizes the best F1 results obtained with the STL CNN and the MTL CNN models. Together with the F1 measure, we also report which combination of embeddings initialization approach (randomly or with GloVe pre-trained vectors) and maximum input length (mean length or 512 tokens) yielded such performance. Finally, the table also shows the GloVe embeddings coverage for each domain, i.e. the percentage of all words for which a pre-trained GloVe embedding was found. The closer this coverage is to 100%, the smaller the number of out-of-vocabulary (OOV) words, which may affect the performance.
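The coverage figure can be computed as in the sketch below. It assumes that coverage is measured over the distinct words of a domain rather than over token occurrences, which is one possible reading of the definition above; the function name and the toy vocabulary are hypothetical.

```python
def embedding_coverage(tokenized_reviews, pretrained_vocab):
    """Percentage of distinct words that have a pre-trained embedding."""
    vocabulary = {token for review in tokenized_reviews for token in review}
    if not vocabulary:
        raise ValueError("no tokens found")
    found = sum(1 for token in vocabulary if token in pretrained_vocab)
    return 100.0 * found / len(vocabulary)


reviews = [["good", "phone"], ["good", "xyzzy"]]
coverage = embedding_coverage(reviews, {"good", "phone", "battery"})
```

Here two of the three distinct words are covered, so the remaining third would be treated as OOV words.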

The results indicate that jointly learning the text specificity prediction task with the perceived helpfulness classification task could enhance the classifier performance for most of the datasets. This reveals some evidence regarding the benefit of incorporating text specificity into a CNN architecture, at least for 5 out of 9 domains. Apart from this performance comparison between STL CNN and MTL CNN, the purpose of this first part of the experiments was to investigate the effect of embeddings initialization and maximum input length.

Learning the word embeddings from the GloVe pre-trained weights seemed overall a good strategy for both STL and MTL approaches, even for domains with a considerable proportion of OOV words, such as the CDs and Vinyl dataset (35%). Besides that, except for Health and Personal Care and Sports and Outdoors, the choice of embeddings initialization did not appear to be influenced by the learning paradigms (single-task or multitask).

Likewise, the results in Table 2 also reveal that the maximum input length seemed to be more related to the domain than to the type of learning paradigm. For most of the datasets, the best input length was the same for STL CNN and MTL CNN. Additionally, even though there is no clear relation between these best input lengths and the data characteristics presented in Table 1, the datasets with higher average review length showed better performance when using 512 as the maximum input length. All of these observations suggest that there may be a suitable domain-oriented choice of embeddings initialization and maximum input length, considering the single-task and multitask CNN approaches presented here. This finding may be used as a starting point in future experiments.

Even though having a good F1 score is desirable, having a high precision is crucial for the helpfulness classification problem. Considering a real-world application, either for recommendation [5] or for automatic summarization of the opinions expressed in helpful reviews [13], it is preferable to correctly identify very helpful reviews, even if they are few, and to avoid misclassifying poorly helpful reviews. In other words, we expect the number of false positive instances to be as close to zero as possible. For this reason, the best results achieved with the CNN+SPECIFICITY models were compared against the baseline models using both F1 (Table 3) and precision (Table 4). As most of the best results from the first part of the experiments (Table 2) were obtained with GloVe initialization, and to reduce the number of settings to vary, the subsequent experiments were conducted without testing the random embeddings initialization.

Even though the SVM baseline seemed to outperform the other two approaches, the F1 results are not statistically significantly different according to the Friedman test at \(p < 0.05\). On the other hand, when comparing the models using precision, CNN+SPECIFICITY greatly outperformed SVM with a significant difference. This might indicate that incorporating text specificity into a CNN classifier, whether as features or as an auxiliary prediction task, can especially reduce the number of misclassified poorly helpful reviews.

All five CNN+SPECIFICITY model variations appeared among the best results; therefore, any of them should be worth testing when doing experiments with perceived helpfulness classification on other domains. Regarding the best settings, the results suggest that they vary according to both the domain and the classifier architecture, which does not indicate a clear domain-oriented behavior. Nevertheless, multitask learning was a popular approach among most of the best results, across several datasets.

6.2 Sentence Specificity Prediction

Besides analyzing the performance of the helpfulness classification models with and without incorporating the text specificity aspect, we also present here the main points regarding our experiments with the sentence specificity prediction approach proposed in [10]. Therefore, the SPE (explained in Sect. 3) results are reported considering the specificity predictions on sentence-level. In other words, for each dataset containing product reviews, their sentences were extracted, then some or all of them were used as the target source to train the unsupervised domain adaptation model, and finally, the trained model was used to assign the specificity scores for all of these sentences.

Concerning the size of the training set, two variations were tested: a random sample of 100k sentences and all of the sentences in the dataset. Preliminary experiments showed better results with a larger training set than with only 4.3k instances, the size used in [12]. We decided to experiment with 100k sentences because this is approximately the number of unlabeled sentences in the restaurant reviews domain used in the experiments performed in [10].
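The data preparation described above can be sketched as follows. This is only an illustration: the regular-expression sentence splitter, the function names, and the fixed random seed are assumptions introduced here, and the sketch does not reproduce the preprocessing actually used in our experiments or in [10].

```python
import random
import re


def split_sentences(review):
    """Naive splitter (assumption): break after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", review.strip())
    return [p for p in parts if p]


def build_target_sentences(reviews, sample_size=None, seed=0):
    """Collect all sentences; optionally draw a random sample without replacement."""
    sentences = [s for review in reviews for s in split_sentences(review)]
    if sample_size is None or sample_size >= len(sentences):
        return sentences
    return random.Random(seed).sample(sentences, sample_size)


# Hypothetical reviews; in the experiments the sample size would be 100_000.
reviews = [
    "Great lens. Focus is fast! Would buy again?",
    "Battery died after two weeks. Not recommended.",
]
all_sentences = build_target_sentences(reviews)
sampled = build_target_sentences(reviews, sample_size=2)
print(len(all_sentences), len(sampled))
```

Passing `sample_size=None` corresponds to the "all sentences" variation, while a fixed `sample_size` corresponds to the random-sample variation.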

With the larger training set, better SPE results were also observed when training the models for more epochs. Therefore, using a training set with 100k instances, the model was trained for 80, 100, 120, 140, 160, and 180 epochs, and with all the sentences, it was trained for 60, 80, 100, and 120 epochs.

The best and worst SPE results for each dataset are summarized in Table 5. The Beauty and Books domains were the only ones that achieved a truly satisfactory SPE score (\(< 1\)), according to what was discussed in Sect. 3. Nevertheless, when comparing the worst and the best results, we can see that testing different training settings was important to achieve more reliable specificity predictions in our product domains. In addition, it is interesting to note that the size of the training set was crucial for performance because, for every dataset, when the sample of 100k sentences achieved the preferred result, using all sentences to train the model yielded inferior performance, and vice versa.
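The training settings listed above, and the selection of the best and worst setting per dataset, can be sketched as follows. The SPE values in the example are invented for illustration, and the selection assumes that a lower SPE is preferable, which we infer here from the \(< 1\) threshold for a satisfactory score; the names `SETTINGS`, `training_grid`, and `best_and_worst` are hypothetical.

```python
# Epoch counts tested for each training-set size, as described in the text.
SETTINGS = {
    "100k": [80, 100, 120, 140, 160, 180],
    "all": [60, 80, 100, 120],
}


def training_grid(settings=SETTINGS):
    """Enumerate every (training-set size, epochs) pair to be evaluated."""
    return [(size, epochs) for size, epoch_list in settings.items() for epochs in epoch_list]


def best_and_worst(spe_by_setting):
    """Return the settings with the lowest and highest SPE (assumes lower is better)."""
    best = min(spe_by_setting, key=spe_by_setting.get)
    worst = max(spe_by_setting, key=spe_by_setting.get)
    return best, worst


grid = training_grid()
print(len(grid), "settings per dataset")

# Hypothetical SPE values for one dataset (not results from Table 5).
spe_by_setting = {("100k", 80): 2.4, ("100k", 160): 0.9, ("all", 60): 1.7, ("all", 120): 3.1}
best, worst = best_and_worst(spe_by_setting)
print("best:", best, "worst:", worst)
```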

Some datasets, even the ones with more than 1M sentences, required all the sentences to be used as the target source to train the domain adaptation model. Furthermore, the results show that training with more data requires fewer epochs to achieve better results. However, choosing a suitable training set size for this specificity prediction approach depends on the domain.

7 Conclusion

The present study aims to contribute to the perceived helpfulness prediction literature by exploring the text specificity aspect of product reviews. Accessing truly valuable online reviews is not trivial due to the overwhelming volume of poor-quality comments. This highlights the need for intelligent systems with the ability to identify helpful reviews. In this sense, we argue that text specificity may play an important role in improving such systems. Therefore, we empirically validated the usefulness of different ways to incorporate the text specificity aspect into a CNN architecture. In summary, the results revealed that the proposed models seem to have the desired ability to reduce the number of false positives (increasing precision) in comparison to an SVM baseline.

In future work, we plan to analyze the influence of text specificity on helpfulness prediction using, among other solutions, explainability methods. In addition, we aim to empirically evaluate incorporating the text specificity aspect into other modern architectures (e.g., Transformers), given their successful application to a variety of text classification problems.

References

1. Basiri, M.E., Habibi, S.: Review helpfulness prediction using convolutional neural networks and gated recurrent units. In: 2020 6th International Conference on Web Research (ICWR), pp. 191–196 (2020)

2. Caruana, R.: Multitask learning. Mach. Learn. 28(1), 41–75 (1997)

3. Chen, C., Yang, Y., Zhou, J., Li, X., Bao, F.S.: Cross-domain review helpfulness prediction based on convolutional neural networks with auxiliary domain discriminators. In: Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, vol. 2 (Short Papers), pp. 602–607 (2018)

4. Fan, M., Feng, Y., Sun, M., Li, P., Wang, H., Wang, J.: Multi-task neural learning architecture for end-to-end identification of helpful reviews. In: 2018 IEEE/ACM International Conference on Advances in Social Networks Analysis and Mining (ASONAM), pp. 343–350 (2018)

5. Fan, M., Feng, C., Sun, M., Li, P.: Reinforced product metadata selection for helpfulness assessment of customer reviews. In: Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing, pp. 1675–1683 (2019)

6. Ghose, A., Ipeirotis, P.G.: Estimating the helpfulness and economic impact of product reviews: mining text and reviewer characteristics. IEEE Trans. Knowl. Data Eng. 23(10), 1498–1512 (2011)

7. Kang, Y., Zhou, L.: Longer is better? A case study of product review helpfulness prediction. In: Americas Conference on Information Systems (2016)

8. Kim, S.M., Pantel, P., Chklovski, T., Pennacchiotti, M.: Automatically assessing review helpfulness. In: Conference on Empirical Methods in Natural Language Processing, pp. 423–430 (2006)

9. Kim, Y.: Convolutional neural networks for sentence classification. In: Proceedings of the Conference on Empirical Methods in Natural Language Processing, pp. 1746–1751 (2014)

10. Ko, W.J., Durrett, G., Li, J.J.: Domain agnostic real-valued specificity prediction. In: Proceedings of the AAAI Conference on Artificial Intelligence (2019)

11. Li, J.J., Nenkova, A.: Fast and accurate prediction of sentence specificity. In: Proceedings of the AAAI Conference on Artificial Intelligence, pp. 2281–2287 (2015)

12. Lima, B., Nogueira, T.: Novel features based on sentence specificity for helpfulness prediction of online reviews. In: 2019 8th Brazilian Conference on Intelligent Systems (BRACIS), pp. 84–89 (2019)

13. Liu, J., Cao, Y., Lin, C.Y., Huang, Y., Zhou, M.: Low-quality product review detection in opinion summarization. In: Conference on Empirical Methods in Natural Language Processing, vol. 7, pp. 334–342 (2007)

14. Louis, A., Nenkova, A.: Automatic identification of general and specific sentences by leveraging discourse annotations. In: Proceedings of 5th International Joint Conference on Natural Language Processing, pp. 605–613 (2011)

15. Louis, A., Nenkova, A.: Text specificity and impact on quality of news summaries. In: Proceedings of the Workshop on Monolingual Text-To-Text Generation, pp. 34–42 (2011)

16. McAuley, J., Targett, C., Shi, Q., Van Den Hengel, A.: Image-based recommendations on styles and substitutes. In: Proceedings of the ACM SIGIR Conference on Research and Development in Information Retrieval, pp. 43–52 (2015)

17. Mudambi, S.M., Schuff, D.: What makes a helpful online review? A study of customer reviews on Amazon.com. MIS Q. 34(1), 185–200 (2010)

18. Ocampo Diaz, G., Ng, V.: Modeling and prediction of online product review helpfulness: a survey. In: Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics, vol. 1: Long Papers, pp. 698–708 (2018)

19. Pennington, J., Socher, R., Manning, C.: GloVe: global vectors for word representation. In: Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing, pp. 1532–1543 (2014)

20. Saumya, S., Singh, J.P., Dwivedi, Y.K.: Predicting the helpfulness score of online reviews using convolutional neural network. Soft Comput. 24(15), 10989–11005 (2019). https://doi.org/10.1007/s00500-019-03851-5

21. Zhang, Y., Wallace, B.: A sensitivity analysis of (and practitioners’ guide to) convolutional neural networks for sentence classification. In: Proceedings of the Eighth International Joint Conference on Natural Language Processing, vol. 1: Long Papers, pp. 253–263. Asian Federation of Natural Language Processing (2017)

22. Zhang, Y., Lin, Z.: Predicting the helpfulness of online product reviews: a multilingual approach. Electron. Commer. Res. Appl. 27, 1–10 (2018)

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Lima, B., Nogueira, T. (2021). Incorporating Text Specificity into a Convolutional Neural Network for the Classification of Review Perceived Helpfulness. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science, vol 13074. Springer, Cham. https://doi.org/10.1007/978-3-030-91699-2_33

DOI: https://doi.org/10.1007/978-3-030-91699-2_33

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91698-5

Online ISBN: 978-3-030-91699-2

eBook Packages: Computer Science (R0)