Abstract

Event Extraction (EE) is the task of identifying mentions of particular event types and their arguments in text, and it constitutes an important and challenging task within the area of Information Extraction (IE). However, in the context of the Portuguese language, very little work has been conducted on this topic. In this paper, we propose a neural-based method for EE, as well as a data resource to mitigate this research gap. We also present a data augmentation strategy for EE, employing an Open Information Extraction (OIE) system, aiming to overcome the shortage in annotated data for the problem in the Portuguese language. Our experimental results show that our method is able to predict event types and arguments automatically, and the proposed method of data augmentation, in one of the two evaluated samples, contributes to the performance of the tested models in the subtask of argument role prediction. Further, an implementation of our method is available to the community, as the models trained in our experiments (https://github.com/FORMAS/TEFE).

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

Event Extraction (EE) is an important and challenging task of Information Extraction (IE) in the field of Natural Language Processing (NLP). The task comprises identifying the mentions of particular event types and their arguments, i.e. mentions to entities participating in the event, and the roles of these arguments in relation to an event type.

The term event has different, definitions in the literature [6, 23]. While events are undeniably temporal entities, they also may possess a rich non-temporal structure that is important for intelligent information access systems [1]. In this work, we consider events as things that happen or occur involving participants and attributes, most notably spatio-temporal attributes. To be more precise, we adopt the definition presented in the ACE 2005 annotation guidelines [6]: an event is a specific occurrence involving participants; it is something that happens and can frequently be described as a change of state.

Based on the event annotation guideline ACE 2005 [6], and related literature on EE [18, 20], EE can be divided into two main subtasks: Event Detection (ED), i.e. the task of identifying and classifying event triggers, and Argument Role Prediction (ARP), i.e. the task of identifying the arguments of an even and labeling their roles.

In this context, an event trigger consists of an expression denoting the occurrence of the event and each event mention in a sentence is identified by a trigger term. An event trigger may be expressed primarily through verbs and nominalizations but also by other word classes such as adjectives and prepositions. As such, one of the challenging aspects of the EE task is that the same trigger term can express different events in different contexts. That is, trigger words may be ambiguous. To illustrate, consider the following sentences:

\(s_1\): “... os árabes teriam de apoiar o Iraque numa luta contra o seu inimigo comum israelita.”Footnote 1

\(s_2\): “Ambas as empresas são aliadas da Navigation Mixte na luta contra uma OPA hostil ...”Footnote 2

Both of \(s_1\) and \(s_2\) contain a word, “luta” (fight), functioning as an event trigger. However, in s1, “luta” means a hostile encounter between opposing forces, which denotes a Hostile encounter event. In s2, “luta” means a dispute between parties with incompatible opinions, and denotes an event of type Quarreling [2].

On the other hand, the same event can be described by various different expressions, by means of different trigger words. For example, we observe in the annotated TimeBankPT Event Frame Annotation (TEFA) corpus that the following trigger words denote an occurrence of an event of type Statement: “dito”, “disse”, “referiu”, “anunciado”, “acrescentou”, etc.Footnote 3

Furthermore, when dealing with multiple event mentions in the same sentence, it is possible that different events share arguments with different roles. Consider the following sentence:

“A Meridian National Corp. disse que vendeu 750.00 ações oridinárias ao grupo da família McAlpine, por 1 milhão de dólares, ou 1,35 dólares por ação.”Footnote 4

the entity mention “Meridian National Corp.” takes two different (and not related) argument roles: Speaker and Seller, respectively, in the events Statement and Commerce_sell denoted by the event triggers “disse” (said) and “vendeu” (sold).

Event mentions in natural language text are ubiquitously present in different domains and genres and encompass a great amount of information encoded in a sentence.

As noted by Ahn [1], solutions for EE can benefit the development of other NLP applications, such as text summarization, question answering and information retrieval.

Notably, there are two variations of the task of EE regarding the types of target event that are considered [30]: open domain, when no schema and list of target event types are defined for the task; and closed domain, when the set of target event types and their respective schemata are pre-defined. In this paper, we will discuss a method for closed domain EE for the Portuguese language. We developed a method to identify and classify a closed set of event types whose arguments and their roles were previously specified based on machine learning.

As there is no publicly available dataset and corpus for the task of closed domain EE for the Portuguese language that we are aware, we built a dataset by enriching the TimeBankPT [8] corpus with event annotation schemata from the FrameNet project [2]. TimeBankPT is the first corpus of Portuguese with rich temporal annotations, i.e. it includes annotations not only of temporal expressions but also about events and temporal relations [8].

In this work, we propose a method to the task of EE developed and evaluated over a newly created data set with event types and argument roles annotations, labeled over the TimeBankPT corpus, from which we maintain only the event trigger annotations. Our method jointly performs ED and ARP by learning a shared intermediary representation for the ED and ARP subtasks.

Our main contributions are: (1) We propose a novel method for the EE task, simultaneously tackles the ED and ARP subtasks. (2) We demonstrate the usefulness of the application of OIE systems as a strategy to data augmentation for EE annotated sentences. (3) We have conducted experiments on a proposed enriched TimeBankPT corpus, achieving modest performance with low feature engineering effort.

2 Related Work

Most work on the literature on EE have focused on languages such as English and Chinese, for which there are standard corpora for the task [30], such as the ACE 2005 corpus [27]. Regarding the approaches adopted in the literature, there are two main categorizations with respect to the input features and the modularization of the methods, respectively divided between discriminative featured-based [1, 15] and representation learning-based methods [10, 17], and the pipeline [15] versus the joint approach [20].

Recently, however, methods based on the application of deep neural networks, more specifically, the use of representation learning to address the task of EE [4, 19], have achieved good performance improvement for the task compared to earlier feature-based approaches and, as such, we will focus on a neural-based approach in this work.

While ED is a much well-established task, if we consider the ARP subtask, very few works on the literature focused on both argument identification and argument role labeling [20]. Most of the related work assumes that argument identification is a previously resolved problem and focus only on the task role classification. Among the work on argument identification and role labeling, the works of Nguyen et al. [18, 20] propose the use of recurrent neural networks (RNNs) for the ARP, achieving good performance for the task. These works will be the main inspiration for our method. It is also important to notice that the use of contextualized word embeddings is also a recent development [26].

To our knowledge, very little work has been done on event extraction for the Portuguese language. Notably, this task has been addressed in a limited scope in the HAREM [3] named entity recognition evaluation, which included temporal named entities of the type event. From a more general perspective, the work of Costa and Branco [7] and Quaresma [22] are some of the only works on the literature on this problem for the Portuguese language, to our knowledge.

Costa e Branco [7] proposed a method for event identification and classification using an approach based on feature engineering and a decision tree trained over the TimeBankPT corpus. The corpus, however, only contains annotation on a very broad event classification typology, e.g. REPORTING, OCCURRENCE, STATE, I_STATE, and I_ACTION, and does not contain annotation on event structure, i.e. its arguments and their roles in the event.

Quaresma et al. [22] focus on the task of event extraction proposing an algorithm that relies on the output of a semantic role labeling (SRL) system. In doing so, the authors assumed that only predicates are event trigger candidates. In their work, the authors did not classify events by type, and they also limited the classification of event arguments, considering only the roles provided by SRL schemas. Unfortunately, the data set provided by the authorsFootnote 5 contains only the information on trigger output predictions of their systems, without a gold reference that would allow comparison at least for event identification.

3 Task Definition

In this work, we are concerned with the task of Event Extraction for the Portuguese language. Throughout this work, we will employ the following terminology:

-

Event mention: a phrase or sentence in which an event occurs, including one trigger and an arbitrary number of arguments.

-

Event trigger: the main word that most clearly expresses an event occurrence.

-

Event argument: an entity mention, temporal expression or value (e.g. Money) that servers as a participant or attribute with a specific role in an event mention.

-

Argument role: the relationship between an argument to the event in which it participates.

The corpus that we employ in our experiments contains 514 event types (e.g., Statement, Commerce_buy, Firing) that correspond to the types of event triggers associated with each event mention. Each event type has its own set of roles that can be performed by one of the entities participating in the event, i.e. its arguments. For instance, some of the roles of the event type Statement are Speaker, Message, Topic, Addresse and Time. The total number of roles for all the event types on the annotated corpus is 1936.

Following this terminology, we explicitly define the standard evaluation procedures as follows, as presented by [30]:

-

Trigger identification: A trigger is correctly detected if its offsets (viz., the position of the trigger word in the text) match a reference trigger

-

Trigger classification: An event type is correctly classified if both the trigger’s offset and event type match a reference trigger and its event type.

-

Argument identification: An argument is correctly identified if its offsets match any of the reference argument mentions (viz., correctly recognizing participants in an event)

-

Argument role classification: An argument role is correctly classified if its event type, offsets, and role match any of the reference argument mentions.

4 Model

We propose a model that jointly performs ED and ARP at the sentence level. As such, let \(W = \langle w_1, w_2, ..., w_n \rangle \) be a sentence, where n is the number of words/tokens and \(w_i\) is its i-th token. We will model the ED problem, as a multi-class word classification problem, following prior works [10, 16, 26] in assuming that event triggers are single words/tokens in the sentences. This definition leads to a sequence classification problem on the input sentence W, in which we predict the event type \(t_i\) for each \(w_i \in W\) - where \(t_i\) can be “None” to indicate the word \(w_i\) is not a trigger, or it is not any of the target event types. It is assumed that a trigger candidate cannot refer to more than one event mentioned in the same sentence.

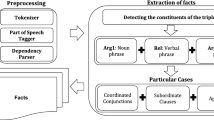

For event argument identification and role prediction, we need to recognize the entity mentions that act as an argument for each of the event mentions occurring in W (argument identification) and classify their associated role. So, for the sake of explanation, considering that each sentence has only one event mention, this task is cast as a sequence labeling task in which for each word/token in the sentence, we predict a sequence of labels \(E = e_1, e_2, ..., e_n\). In summary, for each trigger candidate word \(w_i\) we must predict the corresponding argument role labels for each word/token in W. An illustration of the architecture of our model with the joint prediction of event mentions and their arguments is depicted in Fig. 1.

4.1 Trigger and Sentence Encoding

In the component of trigger encoding, every trigger candidate word \(w_i \in W\) is transformed into a word vector \(c_i\) using the pre-trained word embedding \(d_i\) of \(w_i\). We employ the BERTimbau model [25], a transformer model trained over Portuguese language data, for obtaining these representations.

For the sentence encoding step, we only use the respective word embedding for each word/token of the sentence. Since BERTimbau applies WordPiece [28] tokenizations, possibly generating subword units, we choose to compose the respective word embeddings by summing the vector representation of each word’s subword units.

4.2 Sentence Representation

After the sentence encoding step, the input sentence W is transformer in a sequence of word embedding vectors \(X = \langle x_1, x_2, ..., x_n\rangle \). To produce the sentence representation, we feed the sequence X into a bidirecional recurrent neural network [24], which produces the hidden representation vector for each input vector \(x_t\) from X. We obtain the sequence \(H = \langle [\overrightarrow{h_1};\overleftarrow{h_1}], [\overrightarrow{h_2};\overleftarrow{h_2}], \cdots , [\overrightarrow{h_n};\overleftarrow{h_n}]\rangle \) of two hidden states at each time-step t, one for the left-to-right propagation \(\overrightarrow{h_t}\) and another for the right-to-left propagation \(\overleftarrow{h_t}\). At each step t, we employ a LSTM network [13] that accepts a current input \(x_t\) and a previous hidden state \(h_{t-1}\) to compute the current hidden state \(h_t = LSTM(x_t, h_{t-1})\).

4.3 Trigger Representation

For the trigger representation, we feed the word embedding vector of the current trigger candidate \(c_i\) into a feed-forward layer \(FF(c_i)\). The feature representation from this layer is shared between the two next prediction states to perform ED and ARP simultaneously. The value of this layer activations is computed as: \({R_i^{ED}} = ReLU(W_{tr}c_i + b_{tr})\) where \(W_{tr}\) is a weight matrix and \(b_{tr}\) a bias term.

4.4 Event Detection and Argument Role Prediction

The predictions of the event trigger classifier and argument role classifier components are done sequentially over the sentence from left to right. At the current word/step i, we attempt to compute the probability

where \(\varGamma = \langle W,a_{<i},t_{<i}\rangle \), and \(a_{i<j} = a_{i,1},a_{i,2},\cdots ,a_{i,j-1}\).

In the argument role prediction classifier, at each time step j, we generate the feature representation vector \(R_{i,j}^{ARP}\) for the word/token \(w_j\) by concatenating the hidden layer representation \(h_j\) of the sentence representation stage with the shared vector of activations of the current trigger candidate, the trigger representation vector \(R_i^{ED}\), to obtain: \(R_{i,j}^{ARP} = [h_j, R_i^{ED}]\).

In order to compute the probability \(P(t_i|W,t_{<i})\), we feed the feature representation \(R_{i}^{ED}\) into a feed-forward neural network with a softmax layer \(FF^{ED}\), resulting in the a probability distribution over the possible event types: \(P(t_i|W,t_{<i})\) \(=\) \(FF^{ED}(R_{i}^{ED})\). The event type of the trigger candidate i is selected by a greedy decoder: \(t_{i}^{p} = argmax P(t_i|W,t_{<i})\).

For the argument role distribution \(P(a_{i,j}|W,a_{i,<j},t_{<i+1})\), we follow similar procedure. We feed the feature representation \(R_{i,j}^{ARP}\) into a feed-forward neural network with a ReLU activation function, followed by a softmax layer: \(P(a_{i,j}|W,a_{i,<j},t_{<i+1})\) \(=\) \(FF^{ARP}(ReLU(R_{i,j}^{ARP}))\). We apply the greedy strategy to predict the argument role: \(a_{i,j}^p\) \(=\) \(argmax P(a_{i,j}|W,a_{i,<j},t_{<i+1})\).

4.5 Training

We train the network by minimizing the loss function values. The optimization objective function is defined as multi-class cross-entropy loss:

The gradients are computed with backpropagation with the stochastic gradient descent with mini-batches applying RMSProp [12] weights-average.

5 Corpus

To our knowledge, there is no currently publicly available dataset for EE in the Portuguese language that encompasses annotation both on event occurrences and their arguments, which include event types and argument roles.

Between the two public available corpora found in the literature [8, 22], the TimeBankPT meets most of our requirements, as it was annotated following a well-defined guideline, which is well-known and used to guide the annotation of corpora in other languages [23]. Also, it provides others annotation information, besides event mentions, that can be used to perform related tasks, such as event ordering. It is important to notice that the notion event in TimeBankPT guidelines agrees with that of ACE 2005 guidelines, which we adopt in this work. Thus, we consider this corpus as an interesting resource to base our work. While TimeBankPT describes classes attributes, it does not provide a fine-grain distinction of the semantics of events by means of a semantically relevant typology of events. More yet, it does not contain annotations of event arguments and their roles, which are necessary for our problem.

As such, we enrich the TimeBankPT corpus with annotation about event type and argument roles. In this annotation, we employ the event type schemata from the FrameNet project to define event types and argument roles. We adopted a semi-automatic annotation process, in which firstly, the LOME [29] frame parser automatically annotated all the sentences in the corpus, followed by a manual validation and correction by two human annotators. From the 7887 events originally annotated in TimeBankPT, 6220 (79%) were automatically identified by LOME and analyzed by the human annotators. We measured the inter-annotator over 10% of a common set of event type annotations to assess the reliability of the annotation process. The annotators achieved an inter-annotator agreement of 0.78 as measured by the Cohen’s Kappa [5] coefficient, which is considered a good value.

We evaluate the proposed model for EE on this TEFA (TimeBankPT Event Frames Annotation) dataset obtained as a result of our annotation process. The TEFA corpus contains annotation of 6620 event mentions of 514 different types and 1936 arguments.

6 Experiments

In the following, we describe our empirical investigation on EE for the Portuguese language, based on the joint identification and classification model proposed in Sect. 4 and evaluated on the TEFA corpus described in the previous section.

6.1 Dataset, Parameters, and Resources

For all the experiments, in the trigger and sentence encoding phase, we use 768 dimensions for the word embeddings. We extracted these embeddings from the BERTimbau Base model, where we compose the resulting WordPiece sub-tokens by adding them.

We use 350 units in the hidden layers for the LSTMs. For the trigger representation, during training, we use a dropout layer of 25% rate over the input word embedding followed by a feed-forward neural network with 700 units with ReLU activation function. After this layer, we employed a batch normalization layer [14], and another dropout layer of 50% rate.

Regarding the argument prediction phase, at each time step of the BiLSTM, of the sentence representation phase, we concatenate the trigger representation with the two hidden unit vectors, constructing a vector representation of 1400 activations, and applied a time distributed feed-forward layer with 700 units with ReLU activation function followed by a final softmax layer for probability distribution prediction for the argument role labels. For the ED part of the model, we apply a softmax dense layer over the shared event trigger representation. Finally, during training, we use a mini-batch of size 128. These parameter values were selected according to the validation data.

We applied the pre-trained language representations from BERTimbau-Base in a feature-based approach. We follow Devlin et al. [9], applying a feature-based approach by extracting the activations from one or more layers without fine-tuning any parameters of BERTimbau-Base. During the model development, we evaluated three different strategies: capturing the last hidden layer activations, summing the last four, and summing all the twelve. From now on we will refer to these strategies as Last Hidden, Sum Last 4, and Sum All 12, respectively.

Regarding the event types and argument roles labels, we consider a set of 514 event types from FrameNet [2] plus the None type, which identifies a word as not denoting an event, and a set of 1936 argument roles types as defined in FrameNet [2]. We adopted the BIO annotation schema to assign argument role labels to each token in the sentence, achieving a total of 3873 labels (two times the number of argument role types, corresponding to the labels Begin and In for each argument role type, plus one Outside label).

To evaluate our method, we defined two scenarios considering the amount of annotated examples for each event type. The first consisted only of event mentions of target types containing more than 100 examples in the training corpus, consisting of 7 event types with 93 argument roles. The second consisted of all event types annotated in the corpus, containing 514 event types with 1936 argument roles. The purpose of this procedure was to investigate the influence of the number of training examples in the performance of the method and possibly infer the effort to further support new event types.

We use the criteria for evaluation described on Sect. 3. To provide a meaningful comparison with possible future work, we employ the precision (P), recall (R), and \(F_1\) Score (\(F_1\)) evaluation metrics. We report the micro-average scores for event type classification and argument role prediction as in previous work [18]. We also show the \(F_1\) micro-average score for argument role label prediction on the test set.

6.2 Data Augmentation via OIE

In order to obtain more annotated data and possibly increase the robustness of our method, we explore the use of a data augmentation method for the task of EE using new sentences formed by Open Information Extraction (OIE) triples over the event annotated sentences from the original corpus. To the best of our knowledge, this strategy of data augmentation for the task of EE has not been mentioned in the literature.

OIE is the task of extracting facts, or simple propositions, from text without limiting the analysis to a predefined set of relationships or predicates [11]. As described by Oliveira et al. [21], OIE systems usually extract facts from a sentence in the form of triples \(t = (arg1, rel, arg2)\), where arg1 and arg2 are two arguments and rel describes a semantic relationship between arg1 and ag2.

We argue that this technique of EE data augmentation can boost the model performance because it provides simpler syntactic realizations of event mentions to model the relationship between an event trigger and their arguments.

To make sense of the previous argument, consider the following sentence: “Na Bolsa de Valores Americana, as ações da Citadel fecharam ontem nos 45,75 dólares, descendo 25 cêntimos.”Footnote 6. One of the triples obtained by the execution of DptOIE [21]Footnote 7, an OIE system for the Portuguese language, over the previous sentence was: (“As ações da Citadel”, “fecharam”, “nos 45,74 dólares”).

If we consider as a new sentence, which we refer to as a synthetic sentence, simply the concatenation of arg1, rel and arg2 from the extracted triple, we obtain the sentence: “As ações da Citadel fecharam nos 45,74 dólares”Footnote 8. In most cases, this simple approach produces grammatical and semantic correct sentences.

The synthetic sentences obtained from the triples are at most the same length that the original sentences, and those that are equal we do not count as new ones. Our hypothesis is that this data augmentation approach will be beneficial when dealing with long sentences for which the synthetic sentences are shorter and include the relevant arguments because the new sentence will help reduce the number of long-term dependencies in the input sequence the model needs to remember. Also, as we maintain the original sentence it will not prohibit the model from learning these dependencies from more complex and real examples. We create the augmented dataset by iterating into the extractions from the OIE system, producing the synthetic sentences and selecting the one where the event trigger is part of the relation descriptor in the obtained triple. Following that, we transfer the annotations of the extracted sentence to the synthetic one.

The application of this data augmentation strategy over the original dataset produced an increase of 192% of annotated sentences (i.e. from 1962 to 5747 examples), 70% of event triggers, i.e. from 5345 to 9130 examples, and 56% of arguments, i.e. from 10727 to 16779 examples.

6.3 Word Embedding Layers Selection

We evaluate different approaches to extract the activations from layers of BERTimbau to obtain the pretrained word embeddings, which we employ as input features to the joint model for EE. Table 1 presents the performance on the development set of the augmented training set when Last Hidden, Sum Last 4, and Sum All 12 layers extractions are used to obtain the word embeddings from the same corpus over the two different scenarios (more than 100 examples and more than 0 examples). The development set also contains sentences resulting from the data augmentation via OIE.

We can observe from the results depicted in Table 1, that the Last Hidden input word embeddings performs best for the task of ARP, and the Sum All 12 performs best for the ED subtask. We observe the same pattern on the original development set evaluation, i.e. without data augmentation. From these results, we chose to prioritize the ARP subtask by choosing the Last Hidden combination as the input word embeddings to produce the final models on both original and augmented datasets.

6.4 Test Evaluation

Table 2 shows the results of the comparison between the proposed method trained in four different scenarios, where we compare the performance of the models trained on the original TEFA data (blstme) and over the augmented training set (blstmea). We notice that data augmentation strategy was beneficial to the task of ARP when the data per event type and argument role were more scarce, and the BIO tags labeling task performance does not match the performance on argument role classification. However, we did not notice the same benefit on event types and, consequently, arguments roles with a greater number of examples in the corpus. More than that, the augmentation appears to diminish the \(F_1\) performance of the model, but in contrast, it raises the ARP individual labels classification.

7 Error Analysis

We examine the outputs of blstme-0 on the test set to find out the contributions of each event type to event detection errors. Following Nguyen et al. [20], we highlight two types of errors: (i) missing an event mention in the test set (called MISSED), and (ii) incorrectly detecting an event mention (called INCORRECT).

In Table 3, we list the top five event types appearing in these two types of errors and their corresponding percents over the total number of errors, and the number of available event mentions on the training and testing data sets. The total number of event types present on the test set amounts to 244.

These top five event types amount to 8.1% of the MISSED errors and 31.1% of the INCORRECT errors. The MISSED errors are more evenly distributed between the event types than the INCORRECT errors. Examples from the Statement event type appears in both top five type of errors, and it is ranked in the first place primarily due to the greater amount of examples on the test set. When we observe one of the MISSED errors from the Statement event type, even the trigger word “relata” being available on the training set, due to a different and somehow confusing context, the model failed to detect the event mention in the following sentence: “A correspondente da BBC Karyn Coleman relata do Kosovo.”Footnote 9. When we change the word order of this sentence: “Do Kosovo, a correspondente da BBC Karyn Coleman relata.” the model was able to detect the Statement event mention. Despite this example, the MISSED errors were detected more frequently in cases where the trigger words are not present in the training data.

From the analysis of the INCORRECT errors, we notice that similar context, when the sentence contains event arguments common to different event types, the most predominant example event type hinder the performance of similar event types. As an example of such a case, in the sentence: “Os funcionários americanos afirmam que já veem sinais de que Saddam Hussein está a ficar nervoso.”Footnote 10, the model detected a Statement event type, instead of Affirm_or_deny event type, despite a very indicative trigger word. This may indicate an over-reliance of the model on lexical information, as opposed to the semantic information we expect to be encoded in the word and sentence representation stages of our architecture. Another explanation may reside in the much fine-grained schemata that we employed and the imbalance between these two event type annotated examples.

8 Conclusion

In this paper, we have presented a method for event extraction in the Portuguese language over an enriched event frame annotated corpus constructed from the TimeBankPT corpus. Our approach requires less effort on feature engineering, using as input only contextualized word embeddings, and jointly performs ED and ARP. We also proposed and evaluated a method of data augmentation for the EE task using an OIE system. The annotation process required to produce the corpus turns out to be tested by this experimentation evaluation, validating the process of annotation, using three language resources already available: the TimebankPT corpus, the LOME frame parser, and the FrameNet schemata.

Our experimental results show that our method is able to learn and automatically predict with good precision some event types and argument roles over the annotated corpus. Also, we notice an improvement in the performance on the argument role prediction for the models that used the augmented data from OIE when dealing with event types and argument roles with scarce annotated examples, showing that this strategy may be valuable to improve the performance of EE systems for languages with little training data for the task, as is the case of the Portuguese language.

Notes

- 1.

s1: “... the Arabs would have to support Iraq in a fight against their common Israeli enemy.”.

- 2.

s2: “Both companies are allies of Navigation Mixte in its fight against a hostile takeover bid ...”.

- 3.

“said”, “referred”, “announced”, “added”.

- 4.

“Meridian National Corp. said it sold 750,000 shares of its common stock to the McAlpine family interests, for $1 million, or $1.35 a share.”

- 5.

- 6.

“In American Stock Exchange composite trading, Citadel shares closed yesterday at $45.75, down 25 cents”.

- 7.

Available at: https://github.com/FORMAS/DptOIE.

- 8.

“Citadel shares closed at $45.75”.

- 9.

“BBC correspondent Karyn Coleman reports from Kosovo.”.

- 10.

“U.S. officials claim they already see signs Saddam Hussein is getting nervous.”.

References

Ahn, D.: The stages of event extraction. In: Proceedings of the Workshop on Annotating and Reasoning about Time and Events, pp. 1–8 (2006)

Baker, C.F., Fillmore, C.J., Lowe, J.B.: The berkeley framenet project. In: 36th Annual Meeting of the Association for Computational Linguistics and 17th International Conference on Computational Linguistics, vol. 1, pp. 86–90 (1998)

Carvalho, P., Gonçalo Oliveira, H., Santos, D., Freitas, C., Mota, C.: Segundo harem: Modelo geral, novidades e avaliaçao. quot; In Cristina Mota; Diana Santos (ed) Desafios na avaliação conjunta do reconhecimento de entidades mencionadas: O Segundo HAREM Linguateca 2008 (2008)

Chen, Y., Xu, L., Liu, K., Zeng, D., Zhao, J.: Event extraction via dynamic multi-pooling convolutional neural networks. In: Proceedings of the 53rd Annual Meeting of the Association for Computational Linguistics and the 7th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), pp. 167–176 (2015). https://doi.org/10.3115/v1/P15-1017

Cohen, J.: A coefficient of agreement for nominal scales. Educ. Psychol. Meas. 20(1), 37–46 (1960). https://doi.org/10.1177/001316446002000104

Consortium, L.D.: Ace (automatic content extraction) English annotation guidelines for events. Version (5.4.3) (2005)

Costa, F., Branco, A.: Lx-timeanalyzer: a temporal information processing system for Portuguese (2012). http://hdl.handle.net/10451/14148

Costa, F., Branco, A.: Timebankpt: a timeml annotated corpus of portuguese. In: LREC, pp. 3727–3734 (2012)

Devlin, J., Chang, M.W., Lee, K., Toutanova, K.: Bert: pre-training of deep bidirectional transformers for language understanding (2018). https://doi.org/10.18653/v1/N19-1423

Ding, R., Li, Z.: Event extraction with deep contextualized word representation and multi-attention layer. In: Gan, G., Li, B., Li, X., Wang, S. (eds.) ADMA 2018. LNCS (LNAI), vol. 11323, pp. 189–201. Springer, Cham (2018). https://doi.org/10.1007/978-3-030-05090-0_17

Glauber, R., de Oliveira, L.S., Sena, C.F.L., Claro, D.B., Souza, M., et al.: Challenges of an annotation task for open information extraction in Portuguese. In: Villavicencio, A. (ed.) PROPOR 2018. LNCS (LNAI), vol. 11122, pp. 66–76. Springer, Cham (2018). https://doi.org/10.1007/978-3-319-99722-3_7

Hinton, G., Srivastava, N., Swersky, K.: Neural networks for machine learning lecture 6a overview of mini-batch gradient descent. Cited on 14(8), 2 (2012)

Hochreiter, S., Schmidhuber, J.: Long short-term memory. Neural Comput. 9(8), 1735–1780 (1997). https://doi.org/10.1162/neco.1997.9.8.1735

Ioffe, S., Szegedy, C.: Batch normalization: accelerating deep network training by reducing internal covariate shift. In: International Conference on Machine Learning, pp. 448–456. PMLR (2015)

Ji, H., Grishman, R.: Refining event extraction through cross-document inference. In: Proceedings of ACL-08: Hlt, pp. 254–262 (2008). https://aclanthology.org/P08-1030

Li, Q., Ji, H., Huang, L.: Joint event extraction via structured prediction with global features. In: Proceedings of the 51st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 73–82. Association for Computational Linguistics (2013). https://www.aclweb.org/anthology/P13-1008

Liu, J., Chen, Y., Liu, K., Zhao, J.: Event detection via gated multilingual attention mechanism. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 32 (2018)

Nguyen, T.H., Cho, K., Grishman, R.: Joint event extraction via recurrent neural networks. In: Proceedings of the 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pp. 300–309. Association for Computational Linguistics (2016). https://doi.org/10.18653/v1/N16-1034

Nguyen, T.H., Grishman, R.: Event detection and domain adaptation with convolutional neural networks. In: Proceedings of the 53rd Annual Meeting of the Association for Computational Linguistics and the 7th International Joint Conference on Natural Language Processing (Volume 2: Short Papers), pp. 365–371. Association for Computational Linguistics (2015). https://doi.org/10.3115/v1/P15-2060

Nguyen, T.M., Nguyen, T.H.: One for all: Neural joint modeling of entities and events. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 33, pp. 6851–6858 (2019)

Oliveira, L.D., Claro, D.B.: Dptoie: a Portuguese open information extraction system based on dependency analysis (2019)

Quaresma, P., Nogueira, V.B., Raiyani, K., Bayot, R.: Event extraction and representation: a case study for the Portuguese language. Information 10(6), 205 (2019). https://doi.org/10.3390/info10060205

Saurı, R., Littman, J., Knippen, B., Gaizauskas, R., Setzer, A., Pustejovsky, J.: Timeml annotation guidelines version 1.2. 1 (2006)

Schuster, M., Paliwal, K.K.: Bidirectional recurrent neural networks. IEEE Trans. Sig. Process. 45(11), 2673–2681 (1997). https://doi.org/10.1109/78.650093

Souza, F., Nogueira, R., Lotufo, R.: BERTimbau: pretrained BERT models for Brazilian Portuguese. In: 9th Brazilian Conference on Intelligent Systems, BRACIS, Rio Grande do Sul, Brazil, October 20–23 (2020). https://doi.org/10.1007/978-3-030-61377-8_28

Wadden, D., Wennberg, U., Luan, Y., Hajishirzi, H.: Entity, relation, and event extraction with contextualized span representations. In: Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), pp. 5788–5793 (2019). https://doi.org/10.18653/v1/D19-1585

Walker, C., Strassel, S., Medero, J., Maeda, K.: Ace 2005 multilingual training corpus. Linguist. Data Consortium Philadelphia 57, 45 (2006)

Wu, Y., et al.: Google’s neural machine translation system: Bridging the gap between human and machine translation. arXiv preprint arXiv:1609.08144 (2016)

Xia, P., et al.: LOME: Large ontology multilingual extraction. In: Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: System Demonstrations, pp. 149–159. Association for Computational Linguistics (2021). https://aclanthology.org/2021.eacl-demos.19

Xiang, W., Wang, B.: A survey of event extraction from text. IEEE Access 7, 173111–173137 (2019)

Acknowledgments

Anderson da Silva Brito Sacramento would like to thank Coordenação de Aperfeiçoamento de Pessoal de Nível Superior - CAPES for financial support (88887. 467864/2019-00).

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Sacramento, A.d.S.B., Souza, M. (2021). Joint Event Extraction with Contextualized Word Embeddings for the Portuguese Language. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science(), vol 13074. Springer, Cham. https://doi.org/10.1007/978-3-030-91699-2_34

Download citation

DOI: https://doi.org/10.1007/978-3-030-91699-2_34

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91698-5

Online ISBN: 978-3-030-91699-2

eBook Packages: Computer ScienceComputer Science (R0)