Abstract

In this paper, we present an efficient method for training speaker recognition models on small or under-resourced datasets. This method requires less data than other state-of-the-art (SOTA) methods, e.g. the Angular Prototypical and GE2E loss functions, while achieving similar results. It works by training the model to reconstruct a phoneme in the speaker's voice. For this purpose, we built a new dataset of 40 male speakers reading sentences in Portuguese, totaling approximately 3 h. We compare the three best architectures trained with our method and select the best one, which is a shallow architecture. We then compare this model with the SOTA method for speaker recognition: the Fast ResNet–34 trained on approximately 2,000 h with the Angular Prototypical and GE2E loss functions. Three experiments were carried out with datasets in different languages. Among these three experiments, our model achieved the second-best result in two of them and the best result in one. These results show that our method is a strong competitor to SOTA speaker recognition models, while using 500x less data and a simpler approach.

1 Introduction

Voice recognition is widely used in many applications, such as intelligent personal assistants [14], telephone-banking systems [4], and automatic question answering [12]. In several of these applications, it is useful to identify the speaker, as in voice-enabled authentication and meeting loggers. Speaker verification can be performed in two scenarios: open-set and closed-set. In both scenarios, the objective is to verify whether two audio samples belong to the same speaker. However, in the closed-set scenario, verification is restricted to speakers seen during training, whereas in the open-set scenario it involves speakers not seen during training [11, 17]. Open-set speaker verification is especially desirable in applications such as meeting loggers, where new speakers can be added frequently; closed-set models would have to be retrained every time a new speaker is added.

The first works to use deep neural networks to learn speaker embeddings for open-set speaker recognition were [30, 31], which used the Softmax function. Although a Softmax classifier can learn different embeddings for different speakers, these embeddings are not discriminative enough [8]. To work around this problem, models trained with Softmax were combined with back-ends based on Probabilistic Linear Discriminant Analysis (PLDA) [16] to generate scoring functions [27, 31]. Alternatively, Angular Softmax [19] was proposed, which uses cosine similarity as the logit input to the softmax layer and proved superior to plain Softmax.

Thereafter, Additive Margin Softmax (AM-Softmax) [35] was proposed to increase inter-class variance by introducing a cosine margin penalty to the target logit. However, according to [8], training with AM-Softmax and AAM-Softmax [10] is challenging, as both are sensitive to the scale and margin values of the loss function. Contrastive Loss [7] and Triplet Loss [5, 28] have also achieved promising results in speaker recognition, but these methods require a careful choice of pairs or triplets, which is time-consuming and can affect performance [8]. Finally, Prototypical Networks [36] were applied to speaker recognition. Prototypical Networks learn a metric space in which open-set speaker classification can be performed by computing distances to a prototypical representation of each class. The Generalized End-to-End (GE2E) loss [34] and the Angular Prototypical (AngleProto) loss [8] follow the same principle and recently achieved state-of-the-art (SOTA) results in speaker recognition. In parallel with this work, [15] proposed combining the AngleProto loss with Softmax, obtaining better results than AngleProto alone, and proposed a new architecture with SOTA results.
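To make the prototypical idea concrete, the Angular Prototypical objective can be sketched in NumPy as below. This is our reading of the formulation in [8], not its reference implementation: each of N speakers in a batch contributes M ≥ 2 utterance embeddings, the last utterance of each speaker is the query, the mean of the remaining M − 1 is the prototype, and a scaled cosine similarity is fed to a softmax cross-entropy whose target is the query's own speaker. The scale `w` and bias `b` are learnable in [8]; the fixed values 10 and −5 used here are assumptions for illustration.

```python
import numpy as np


def angular_prototypical_loss(emb: np.ndarray, w: float = 10.0, b: float = -5.0) -> float:
    """Angular Prototypical loss for a batch shaped (N speakers, M utterances, D dims).

    The last utterance of each speaker is the query; the mean of the others is
    the prototype. w and b stand in for the learnable scale and bias (assumed values).
    """
    n_speakers, n_utts, _ = emb.shape
    if n_utts < 2:
        raise ValueError("need at least 2 utterances per speaker")
    queries = emb[:, -1, :]                      # (N, D)
    prototypes = emb[:, :-1, :].mean(axis=1)     # (N, D)
    # Cosine similarity between every query and every prototype.
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    p = prototypes / np.linalg.norm(prototypes, axis=1, keepdims=True)
    logits = w * (q @ p.T) + b                   # (N, N)
    # Numerically stable log-softmax over prototypes; query i should match prototype i.
    logits = logits - logits.max(axis=1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))
```

When every speaker's embeddings point in a distinct direction the loss approaches zero, while identical embeddings for all speakers give a loss of log N, i.e. chance level.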

In this work, we propose a new method for training speaker recognition models, called Speech2Phone. A model trained with this method on approximately 3.5 h of speech surpassed a model trained on 2,000 h of speech with the GE2E loss function. Our method is based on reconstructing the pronunciation of a specific phoneme and has shown promise in low-resource scenarios. In addition, the simplicity of its architecture makes it suitable for real-time applications on devices with low processing power.

Finally, to facilitate reproduction of this work, the Python code and download links for the datasets needed to reproduce all experiments are publicly available in the GitHub repository (Footnote 1).

This work is organized as follows. Section 2 details the datasets used and the preprocessing performed for the proposed experiments. Section 3 presents the Speech2Phone method and the experiments carried out to find the best model trained with this method for open-set speaker identification. Section 4 compares the best model trained with the Speech2Phone method with the state of the art. Finally, Sect. 5 presents our conclusions.

2 Datasets and Pre-processing

Section 2.1 presents the datasets used and describes a new dataset created for our experiments. Sections 2.2 and 2.3 detail the preprocessing performed on the datasets for the proposed experiments.

2.1 Audio Datasets

To train our method, we built a specific dataset, which we call the Speech2Phone dataset. It includes 40 male speakers aged between 20 and 50 years. The dataset contains only Portuguese utterances, because Portuguese is the native language of the speakers. We chose to focus only on male speakers because, during the data collection phase, we were able to record only 5 female speakers. Each speaker read a phonetically balanced text of 149 words, following [29]. Reading times ranged from 42 to 95 s, totaling approximately 43 min of speech.

Additionally, we asked each speaker to sustain the phoneme /a/ for approximately three seconds. The central second of each recording was extracted and used as the expected output of the embedding models. The phoneme /a/ was chosen because it is simple to articulate and very frequent in Portuguese. The Speech2Phone dataset is publicly available in the GitHub repository (Footnote 2).
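Extracting the central second of each sustained /a/ recording amounts to a single slice. A minimal sketch, assuming the recording is already loaded as a mono NumPy array; the function name `central_second` is hypothetical.

```python
import numpy as np


def central_second(signal: np.ndarray, sample_rate: int) -> np.ndarray:
    """Return the central one-second slice of a sustained-phoneme recording."""
    if len(signal) < sample_rate:
        raise ValueError("recording is shorter than one second")
    # Centre a window of sample_rate samples within the recording.
    start = (len(signal) - sample_rate) // 2
    return signal[start:start + sample_rate]
```

For a three-second recording at 22,050 Hz, this keeps samples 22,050 to 44,099, i.e. exactly the middle second.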

To evaluate our method and compare it with related work, we use the VCTK [33] and Common Voice (CV) [2] datasets. VCTK is an English dataset with 109 speakers, each of whom read approximately 400 sentences; the same phrases are spoken by all speakers. The dataset contains approximately 44 h of speech sampled at 48 kHz. Common Voice is a massively multilingual transcribed speech dataset for research and development of speech technology. CV has 54 subsets, each containing data in one language, and at the time of writing the dataset totals 5,671 h. In this work, we use version 5.2 of the corpus.

2.2 Preprocessing of the Speech2Phone Dataset

To preprocess the Speech2Phone dataset, we extracted five-second speech segments from the original recordings. The five-second window was chosen after preliminary experiments with different input durations. To maximize the number of speech segments, we used an overlapping technique: the window was shifted by one second at a time, and a segment was extracted at each position over the whole recording. This resulted in 2,394 speech segments totaling 3 h and 23 min of speech. Next, the dataset was divided into four subsets to meet the needs of each proposed experiment. Partitions \(A_1\) and \(B_1\) each contain 20 different speakers and approximately 1,097 samples. Partitions \(A_2\) and \(B_2\) contain approximately 100 speech segments each, from the speakers of \(A_1\) and \(B_1\), respectively. Thus, the A partitions have no speakers in common with the B partitions.
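The overlapping segmentation can be sketched as follows, assuming a mono waveform stored as a NumPy array. The text does not say what happens to a final partial window; dropping it is our assumption, and `sliding_windows` is a hypothetical name. Under this assumption, a recording of D ≥ 5 seconds yields ⌊D − 5⌋ + 1 windows, so, for example, a 42 s reading gives 38 segments.

```python
import numpy as np


def sliding_windows(signal: np.ndarray, sample_rate: int,
                    window_s: float = 5.0, hop_s: float = 1.0) -> np.ndarray:
    """Split a mono signal into overlapping fixed-length windows.

    Returns an array of shape (n_windows, window_len); trailing samples that
    do not fill a complete window are dropped (an assumption).
    """
    window = int(round(window_s * sample_rate))
    hop = int(round(hop_s * sample_rate))
    if len(signal) < window:
        return np.empty((0, window), dtype=signal.dtype)
    n_windows = 1 + (len(signal) - window) // hop
    return np.stack([signal[i * hop:i * hop + window] for i in range(n_windows)])
```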

2.3 Pre-processing of Speaker Verification Datasets

We preprocessed the VCTK and CV datasets for speaker verification. For VCTK, we used the entire dataset, and for CV, we used the test subsets in Portuguese (PT), Spanish (ES), and Chinese as spoken in China (ZH).

Many VCTK samples have long leading and trailing silences. To prevent these silences from affecting our analysis, we removed them by applying Voice Activity Detection (VAD) with the Python implementation of the webrtcvad toolkit (Footnote 3).
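The trimming step can be sketched as dropping leading and trailing frames that the VAD labels as non-speech. The py-webrtcvad package classifies 10, 20 or 30 ms frames of 16-bit mono PCM at 8, 16, 32 or 48 kHz through `webrtcvad.Vad(mode).is_speech(frame, sample_rate)`. The sketch below takes that predicate as a parameter so the logic can run without the package. The 30 ms frame length is an assumption, as is the aggressiveness mode shown in the usage note; the exact settings used here are not stated.

```python
from typing import Callable


def trim_silence(pcm: bytes, sample_rate: int,
                 is_speech: Callable[[bytes, int], bool],
                 frame_ms: int = 30) -> bytes:
    """Drop leading and trailing frames that the VAD classifies as non-speech.

    `pcm` is 16-bit little-endian mono PCM; `is_speech(frame, sample_rate)`
    matches the signature of webrtcvad.Vad.is_speech. A trailing partial
    frame is discarded; silences inside the utterance are kept.
    """
    frame_bytes = int(sample_rate * frame_ms / 1000) * 2  # 2 bytes per sample
    n_frames = len(pcm) // frame_bytes
    flags = [is_speech(pcm[i * frame_bytes:(i + 1) * frame_bytes], sample_rate)
             for i in range(n_frames)]
    if not any(flags):
        return b""
    first = flags.index(True)
    last = n_frames - 1 - flags[::-1].index(True)
    return pcm[first * frame_bytes:(last + 1) * frame_bytes]
```

With the real package installed, a 48 kHz VCTK file could be trimmed with `trim_silence(pcm, 48000, webrtcvad.Vad(3).is_speech)`, where mode 3 (the most aggressive) is an illustrative choice.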

We used VCTK and CV to test our models by building audio pairs from them. The positive class consists of pairs of audio from the same speaker, while the negative class consists of pairs from different speakers. Since far more negative pairs than positive pairs can be built, we limited the number of negative pairs to avoid class imbalance: the maximum number of negative pairs was set to the number of positive pairs divided by the number of speakers. We also removed speakers with fewer than two samples.
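One reading of the negative-pair cap is that each speaker contributes at most (number of positive pairs) / (number of speakers) negative pairs, which keeps the two classes roughly the same size. The sketch below follows that interpretation, which is our assumption. Negatives are drawn uniformly at random, and the function name and seed are hypothetical.

```python
import itertools
import random
from collections import defaultdict


def build_pairs(samples, seed=0):
    """Build same-speaker (positive) and different-speaker (negative) pairs.

    samples: list of (utterance_id, speaker_id) tuples.
    Returns (positives, negatives) as lists of (utterance_id, utterance_id).
    """
    by_speaker = defaultdict(list)
    for utt, spk in samples:
        by_speaker[spk].append(utt)
    # Speakers with fewer than two samples cannot form a positive pair.
    by_speaker = {s: u for s, u in by_speaker.items() if len(u) >= 2}
    positives = [pair for utts in by_speaker.values()
                 for pair in itertools.combinations(utts, 2)]
    # Assumed interpretation: per-speaker cap on negatives.
    cap = len(positives) // max(len(by_speaker), 1)
    rng = random.Random(seed)
    negatives = []
    for spk, utts in by_speaker.items():
        others = [u for s, us in by_speaker.items() if s != spk for u in us]
        candidates = [(a, b) for a in utts for b in others]
        # Reversed duplicates across speakers are not removed in this sketch.
        negatives.extend(rng.sample(candidates, min(cap, len(candidates))))
    return positives, negatives
```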

Table 1 shows, for each dataset used in our speaker verification experiments, the language, the number of speakers, and the number of positive and negative pairs. The Python code used to preprocess the datasets, as well as links to download the versions of VCTK and CV used, are available in the GitHub repository (Footnote 4).

3 Speech2Phone

Section 3.1 presents the Speech2Phone method and the experiments carried out to choose the best model trained with it for open-set speaker identification, and Sect. 3.2 presents the results of these experiments.

3.1 Proposed Method

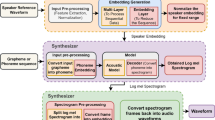

The goal of open-set models is to be speaker independent; it is also desirable for them to be multilingual and text independent. In pursuit of these goals, we propose training a neural network that takes five-second speech segments as input and outputs a reconstruction of one second of a simple phoneme (/a/ in our experiments) in the same speaker's voice. Since the sound of the phoneme differs from speaker to speaker, a good reconstruction requires information that allows the model to distinguish between speakers. Focusing on a single phoneme allows for dimensionality reduction in the embedding layer.

In the Speech2Phone experiments, both the model's input and its expected output are represented as Mel Frequency Cepstral Coefficients (MFCCs) [20], extracted with the Librosa [21] library. We used the default sampling rate (22,050 Hz) and extracted 13 MFCCs (defined empirically). Framing followed the default parameters of Librosa 0.6, namely a hop length of 512 samples and an FFT window length of 2,048 samples [25]. Since the models must reconstruct an MFCC segment, they were trained to minimize the Mean Squared Error (MSE) [13].
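The feature extraction can be sketched with librosa using the parameter values stated above. `extract_mfcc` and `mfcc_frames` are hypothetical helper names. Assuming librosa's default centred framing (`center=True`), a signal of n samples yields 1 + ⌊n / 512⌋ frames. A 5 s input at 22,050 Hz therefore gives 1 + ⌊110,250 / 512⌋ = 216 frames, and the 1 s target gives 1 + ⌊22,050 / 512⌋ = 44 frames, so input and target are 13 × 216 and 13 × 44 matrices, respectively.

```python
def mfcc_frames(n_samples: int, hop_length: int = 512) -> int:
    """Number of frames librosa produces with its default centred framing."""
    return 1 + n_samples // hop_length


def extract_mfcc(path: str, sr: int = 22050, n_mfcc: int = 13,
                 n_fft: int = 2048, hop_length: int = 512):
    """Load audio resampled to `sr` Hz and return an (n_mfcc, n_frames) MFCC matrix."""
    import librosa  # imported lazily so the frame arithmetic works without librosa
    y, sr = librosa.load(path, sr=sr)
    return librosa.feature.mfcc(y=y, sr=sr, n_mfcc=n_mfcc,
                                n_fft=n_fft, hop_length=hop_length)
```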

Several models were trained with the Speech2Phone method, and consequently on the Speech2Phone dataset; we report the three most interesting ones in this section. To evaluate these experiments, we used speaker identification accuracy.

To calculate accuracy, we randomly chose one sample from each speaker and stored its embedding in a database. Each extracted embedding is then searched in this database by running the KNN [22] algorithm with \(k=1\) and the Euclidean distance. If a sample's embedding is closer to the embedding registered for its own speaker than to the embeddings of all other speakers, it is counted as a hit; otherwise, it is counted as an error.
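The 1-nearest-neighbour accuracy can be sketched in NumPy as follows. The text does not state whether the enrolled samples are also used as queries; this sketch assumes that enrollment and query embeddings are passed separately. The function name is hypothetical.

```python
import numpy as np


def identification_accuracy(enrolled: np.ndarray, enrolled_ids: np.ndarray,
                            queries: np.ndarray, query_ids: np.ndarray) -> float:
    """1-nearest-neighbour speaker identification accuracy (Euclidean distance).

    enrolled: (S, D), one embedding per speaker; queries: (Q, D) test embeddings.
    """
    # Pairwise Euclidean distances between every query and every enrolled embedding.
    dists = np.linalg.norm(queries[:, None, :] - enrolled[None, :, :], axis=-1)
    predicted = enrolled_ids[np.argmin(dists, axis=1)]
    # A hit is a query whose nearest enrolled embedding has the same speaker ID.
    return float(np.mean(predicted == query_ids))
```

For example, with speakers enrolled at (0, 0) and (10, 10), a query at (6, 6) from the first speaker lies closer to the second enrollment and counts as an error.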

In the experiments, we evaluated the models in both the open-set and the closed-set scenarios. All models were trained on the \(A_1\) subset of the Speech2Phone dataset, described in Sect. 2.2. Therefore, the models were trained with only 1,037 speech segments from 20 different speakers, approximately 1.5 h of speech.

TensorFlow [1] and TFLearn [32] were used to implement the neural networks for all Speech2Phone experiments. All models were trained with the Adam optimizer [18]. The convolutional layers in all experiments have a stride of 1. The hyperparameters used to train each model are presented in Table 2.

To find the best topology for the Speech2Phone method, we propose the following experiments:

- Experiment 1 (Dense): This experiment uses a fully connected feed-forward neural network with one hidden layer for embedding extraction. The model architecture is shown in Fig. 1.

- Experiment 2 (Conv): This experiment is based on Experiment 1, but uses a fully convolutional neural network. The network has only convolutional layers, and the decoder uses upsampling layers. Following [8], we use 2D convolutions: a speech segment can be seen as a two-dimensional image whose columns are time steps and whose rows are cepstral coefficients. Convolutions have the advantage of being translation invariant over this matrix. Since we try to reconstruct a specific phoneme, this is a desirable property, because the target phoneme may occur anywhere in the window. The model architecture is shown in Fig. 2.

- Experiment 3 (Recurrent): This experiment is based on Experiment 1, but uses a recurrent fully connected neural network for embedding generation. The five-second window is split into five one-second segments, which the model analyzes one at a time. Since the recurrence window is small, we used a simple recurrent network instead of long-term models such as LSTM [13], because vanishing gradients are less likely to occur over so few steps. If the phoneme of interest occurs in one of the five segments, the recurrent network should retain it in its memory before reconstructing it in the final step, potentially improving reconstruction accuracy. This approach reduces the number of learned parameters and, consequently, also shortens training time. The model architecture is shown in Fig. 3.
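The structure of the Dense model of Experiment 1 can be sketched as a NumPy forward pass: flattened input MFCCs, one hidden layer whose activations serve as the speaker embedding, and an output layer that predicts the MFCCs of one second of /a/, scored with MSE. This is an illustration rather than the authors' TensorFlow/TFLearn model. The embedding size (80), the tanh activation, and the frame counts (which assume librosa's default framing) are assumptions; the actual hyperparameters are those in Table 2. Training with Adam is omitted.

```python
import numpy as np

N_MFCC, IN_FRAMES, OUT_FRAMES = 13, 216, 44   # assumed: 5 s input, 1 s target
EMBED_DIM = 80                                # hypothetical; the real value is in Table 2


class DenseSpeech2Phone:
    """One-hidden-layer network: flattened input MFCCs -> embedding -> /a/ MFCCs."""

    def __init__(self, seed: int = 0):
        rng = np.random.default_rng(seed)
        d_in, d_out = N_MFCC * IN_FRAMES, N_MFCC * OUT_FRAMES
        self.w1 = rng.normal(0.0, 1.0 / np.sqrt(d_in), (d_in, EMBED_DIM))
        self.b1 = np.zeros(EMBED_DIM)
        self.w2 = rng.normal(0.0, 1.0 / np.sqrt(EMBED_DIM), (EMBED_DIM, d_out))
        self.b2 = np.zeros(d_out)

    def embed(self, mfcc: np.ndarray) -> np.ndarray:
        """Hidden-layer activations, used as the speaker embedding."""
        x = mfcc.reshape(mfcc.shape[0], -1)          # (batch, 13 * 216)
        return np.tanh(x @ self.w1 + self.b1)        # tanh is an assumed activation

    def reconstruct(self, mfcc: np.ndarray) -> np.ndarray:
        """Predicted MFCCs of one second of /a/, shaped (batch, 13, 44)."""
        out = self.embed(mfcc) @ self.w2 + self.b2
        return out.reshape(-1, N_MFCC, OUT_FRAMES)


def mse(pred: np.ndarray, target: np.ndarray) -> float:
    """Mean squared error, the reconstruction objective used for training."""
    return float(np.mean((pred - target) ** 2))
```

At inference time, only `embed` is needed: the hidden layer is what gets compared across speakers, while the decoder exists only to drive training.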

3.2 Speech2Phone Results

Table 3 shows the accuracy obtained in the Speech2Phone experiments in the open-set scenario, in which different speakers are used for training and testing, and in the closed-set scenario, in which the training and test speakers are the same.

In this set of experiments, the neural models received 5 s of audio from a specific speaker and were trained to reconstruct 1 s of the phoneme /a/ in that speaker's voice. The goal is to obtain good results with little training data, in contrast to the GE2E and AngleProto loss functions, which need large datasets to perform well. In these experiments, samples from 20 speakers were used for training and samples from another 20 speakers for testing, as discussed in Sect. 3.1.

Experiment 1 used a fully connected neural network. In the open-set scenario, it obtained an accuracy of 76.96%, the best open-set accuracy of all experiments. In the closed-set scenario, however, it obtained 77.50%, the worst closed-set result of all experiments. We believe that the fully connected model achieved the best open-set result because of its low number of parameters, which makes it less prone to overfitting. In addition, the dataset is very small, so deeper models are very likely to memorize speaker-dependent features or noise artifacts. Apparently, the recurrent and convolutional models specialized in extracting features particular to the training speakers in order to reconstruct the output. Because the deeper models learn characteristics specific to these speakers, their generalization to new speakers is impaired: they perform well in the closed-set scenario but drop in performance in the open-set scenario.

Experiment 2, which used a fully convolutional neural network to generate embeddings, achieved the second-best open-set result (64.43%). In the closed-set scenario, however, it achieved the best result, with an accuracy of 100%. Translation invariance is a useful property of convolutional networks and can be used to detect specific phonemes regardless of where they occur in the audio. We believe that convolutional models are suitable for this task; however, due to the small amount of data, the model learns speaker-dependent characteristics, which puts it at a disadvantage in the open-set scenario, where it performs worse than Experiment 1.

Experiment 3 used a recurrent neural network with fully connected layers to generate embeddings, resulting in the worst open-set accuracy (50.28%) and the second-worst closed-set accuracy (88.75%). Recurrent models can analyze the input audio in more detail, searching for patterns in one input segment at a time. However, a problem may arise when a pattern is split across two analysis windows, and the simple recurrent model tested could not overcome this issue. In preliminary experiments, we also evaluated an LSTM network, but it did not perform as well as the simple recurrent network, as there is no need for long-term memory in a five-step analysis.

In the open-set scenario, the superiority of the fully connected model (Experiment 1) is evident: it generalizes better to new speakers and proved to be less prone to overfitting on this task. In addition, its closed-set accuracy (77.50%) remained very close to its open-set accuracy (76.96%), whereas the other models showed large drops in performance from the closed-set to the open-set scenario. The fully convolutional model (Experiment 2) also showed promising results, with an open-set accuracy 12.53 percentage points below that of the fully connected model.

4 Application: Speaker Verification

Section 4.1 presents the experiments proposed to compare our method with the state of the art, and Sect. 4.2 presents the results of these speaker verification experiments.

4.1 Speaker Verification Experiments

An important question is how well a model trained with the Speech2Phone method performs in speaker verification. To answer it, we compared Speech2Phone with the Fast ResNet–34 model proposed by [8], trained with the Angular Prototypical [8] and GE2E [34] loss functions. We chose this model because it presents state-of-the-art results on the VoxCeleb [23] dataset and because the authors made the pre-trained models available in their GitHub repository (Footnote 5).

To compare the models, we chose the two datasets presented in Sect. 2.3, one of which is multilingual. Speech2Phone takes 22 kHz audio as input, whereas Fast ResNet–34 takes 16 kHz audio, so we cannot directly compare the models on the VoxCeleb test set: since VoxCeleb is sampled at 16 kHz, upsampling its audio to 22 kHz would not make for a fair comparison. Therefore, we chose datasets with sampling rates above 22 kHz. In addition, testing on the dataset on which Fast ResNet–34 was trained could put the Speech2Phone model at a disadvantage.

The audio in each dataset was resampled to 16 kHz for testing the Fast ResNet–34 model and to 22 kHz for testing Speech2Phone, ensuring a fair comparison between the models. For comparison, we use the Equal Error Rate (EER) [6]: the lower the EER, the better the verification performance.
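The EER is the operating point at which the false acceptance rate (FAR) equals the false rejection rate (FRR). The following minimal NumPy sketch sweeps every observed score as a threshold and returns the mean of FAR and FRR where the two are closest. This is a common approximation, not the estimation procedure of [6]. The scoring function (e.g. cosine similarity between embeddings, higher meaning "same speaker") is an assumption, since the text does not state it; Euclidean distances would need to be negated first.

```python
import numpy as np


def equal_error_rate(scores: np.ndarray, labels: np.ndarray) -> float:
    """Approximate EER from similarity scores (higher = more likely same speaker).

    labels: 1 for same-speaker (positive) pairs, 0 for different-speaker pairs.
    Returns a fraction in [0, 1]; multiply by 100 for a percentage.
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=int)
    pos, neg = scores[labels == 1], scores[labels == 0]
    best_gap, eer = np.inf, 1.0
    for t in np.unique(scores):
        far = np.mean(neg >= t)   # impostor pairs accepted at threshold t
        frr = np.mean(pos < t)    # genuine pairs rejected at threshold t
        if abs(far - frr) < best_gap:
            best_gap, eer = abs(far - frr), (far + frr) / 2
    return float(eer)
```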

To compare the best model trained with the Speech2Phone method with the SOTA, we propose the following experiments:

- Experiment 4 (Speech2Phone): This experiment uses the same model and hyperparameters as Experiment 1, but the model was trained on the entire Speech2Phone dataset, i.e., 2,394 speech segments from 40 speakers, totaling approximately 3 h and 23 min of speech.

- Experiment 5 (Fast AngleProto): This experiment uses the Fast ResNet–34 model proposed in [8], trained with the Angular Prototypical loss function on the VoxCeleb dataset, which contains approximately 2,000 h of speech from approximately 7,000 speakers. This model achieves an EER of approximately 2.2% on the VoxCeleb test set, as reported in [8].

- Experiment 6 (Fast GE2E): This experiment also uses the Fast ResNet–34 model, trained on the same data as Experiment 5, but with the GE2E loss function.

To evaluate the Fast ResNet–34 model, we followed the original work and sampled ten 4 s temporal crops at regular intervals from each test segment. Since the model accepts variable-length input, audio shorter than 4 s was used in full, without cropping, as in the original work.
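Sampling ten 4 s crops at regular intervals can be sketched as below, assuming a mono NumPy waveform. The first crop starts at the beginning and the last crop ends at the end of the segment. How the crop embeddings are combined into a verification score follows [8] and is not reproduced here. The function name is hypothetical.

```python
import numpy as np


def evenly_spaced_crops(signal: np.ndarray, sample_rate: int,
                        crop_s: float = 4.0, n_crops: int = 10) -> np.ndarray:
    """Return n_crops fixed-length crops at regular intervals, or the whole
    signal as a single crop when it is not longer than crop_s seconds."""
    crop = int(round(crop_s * sample_rate))
    if len(signal) <= crop:
        return signal[None, :]
    # Evenly spaced start positions from the beginning to the last valid offset.
    starts = np.linspace(0, len(signal) - crop, num=n_crops).astype(int)
    return np.stack([signal[s:s + crop] for s in starts])
```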

Unlike Fast ResNet–34, which accepts variable-length audio, our model takes as input the MFCCs of exactly 5 s of speech. However, the VCTK and CV datasets contain audio of variable length. Therefore, to make the comparison fair and give each model access to the full audio content when computing the EER, we proceeded as follows for Speech2Phone. For audio longer than 5 s, we use the overlapping technique described in Sect. 2.2: for example, a six-second audio yields two five-second segments, and the resulting embedding is the average of the embeddings predicted for these two segments. For audio shorter than 5 s, we repeat the audio frames until the audio is at least 5 s long; for example, a three-second audio is repeated once, yielding six seconds of audio, to which the overlapping technique is then applied as for audio longer than 5 s.
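The procedure above (repeat short audio, slide a 5 s window with a 1 s hop, average the window embeddings) can be sketched as follows. Here `embed` is a hypothetical callable standing in for MFCC extraction plus the trained model applied to one 5 s window. Repeating whole copies of the waveform with `np.tile`, and dropping a trailing partial window, are our assumptions about the details.

```python
import math
from typing import Callable

import numpy as np


def utterance_embedding(signal: np.ndarray, sample_rate: int,
                        embed: Callable[[np.ndarray], np.ndarray],
                        window_s: float = 5.0, hop_s: float = 1.0) -> np.ndarray:
    """Embed a variable-length utterance with a fixed 5 s input model.

    Audio shorter than the window is tiled until it is at least window_s long;
    the result is cut into windows with a 1 s hop, and the per-window
    embeddings are averaged.
    """
    if len(signal) == 0:
        raise ValueError("empty signal")
    window = int(round(window_s * sample_rate))
    hop = int(round(hop_s * sample_rate))
    if len(signal) < window:
        signal = np.tile(signal, math.ceil(window / len(signal)))
    n_windows = 1 + (len(signal) - window) // hop
    windows = [signal[i * hop:i * hop + window] for i in range(n_windows)]
    return np.mean([embed(w) for w in windows], axis=0)
```

As in the text, a three-second input is tiled to six seconds and therefore yields two five-second windows, the same as a six-second input.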

4.2 Speaker Verification Results

In this set of experiments, we compared our best model trained with the Speech2Phone method with the Fast ResNet–34 model proposed by [8], trained with approximately 2,000 h of speech using the Angular Prototypical [8] and GE2E [34] loss functions. Table 4 shows the EER of these experiments in English on the VCTK dataset and in Portuguese (PT), Spanish (ES), and Chinese (ZH) on the Common Voice dataset.

Experiment 4 used the best Speech2Phone model trained on the entire Speech2Phone dataset (approximately 3 h and 23 min of speech from 40 different speakers). It achieved the best EER of all experiments on the VCTK dataset. On Common Voice, it achieved the second-best result on the PT and ZH subsets, surpassed only by the Fast ResNet–34 model trained with the Angular Prototypical loss function (Experiment 5). On the ES subset, however, it had the worst performance of all experiments.

Experiment 5 used the Fast ResNet–34 model trained with the Angular Prototypical loss function and obtained the second-best EER on VCTK, surpassed only by Speech2Phone. It also achieved the best result on all Common Voice subsets.

Experiment 6, which used the Fast ResNet–34 model trained with the GE2E loss function, obtained the worst EER on VCTK, as well as on the PT and ZH subsets of Common Voice. However, it achieved the second-best EER on the ES subset.

Experiment 4 showed the potential of the Speech2Phone approach: despite being trained with only 3 h and 23 min of speech from 40 speakers, it obtained better results than the Fast ResNet–34 model trained with the GE2E loss function on 2,000 h of speech from approximately 7,000 speakers. In addition, it achieved better results than both Experiments 5 and 6 on VCTK.

Compared with Experiment 4, Experiment 5 achieved better results on 3 of the 4 evaluated subsets. This was expected, since the model in Experiment 5 had access to more than 1,996 additional hours of speech and 6,960 additional speakers. In addition, the training dataset of Experiment 4 contains only male voices; since the reconstruction of a female voice differs and the VCTK and Common Voice datasets include female speakers, this likely decreases performance at test time. Moreover, the training dataset used in Experiment 4 is a high-quality dataset with little background noise, so the model probably did not learn to ignore or filter out noise, which likely harms the reconstruction of the /a/ phoneme. Although high audio quality and low noise facilitate learning, the model may be impaired in noisy conditions, a behavior also observed in other domains such as images [24]. Its better overall performance on VCTK, which is also a high-quality, low-noise dataset built for speech synthesis and voice transfer applications, is further evidence of this behavior: compared with Experiment 5, the model's performance drops on audio recorded in uncontrolled environments, such as Common Voice.

Experiments 5 and 6 confirm what was already shown in [8]: the Fast ResNet–34 model trained with the AngleProto loss function on VoxCeleb obtains a lower EER than the same model trained with the GE2E loss function. However, [8] compared the models only on the VoxCeleb test set, whereas we compared them on different datasets covering 4 languages, making for a broader comparison.

An important consideration is that, given how our experiments were designed, we cannot say whether language decreases or increases the performance of the models. The high EER values in English, for example, are due to how the speaker verification dataset was built: VCTK has only 109 speakers, and many samples were considered for each speaker, as shown in Table 1. A larger number of test instances makes the task more difficult and tends to increase EER values. Therefore, we can only compare the performance of the models within each dataset, and cannot draw conclusions about changes in performance across languages.

5 Conclusions and Future Work

In this article, we proposed a novel training method for speaker recognition models, called Speech2Phone, and built a novel dataset to enable training with this method. We explored fully connected, fully convolutional, and recurrent models. We observed that fully connected models perform best in open-set scenarios, although they performed worst in the closed-set scenario, while convolutional models perform best in the closed-set scenario but do not generalize well to the open-set scenario. The best model in our experiments was trained on 3 h and 23 min of speech and compared with two SOTA models trained on the VoxCeleb dataset with approximately 2,000 h of speech. The results were comparable to SOTA, even with approximately 500 times less data.

This work contributes directly to the area of speaker recognition by presenting a promising method for training speaker recognition models. The model proposed here can also be used in tasks such as speech synthesis [26], voice cloning [3], and multilingual speech conversion [37], in which speaker recognition embeddings are used to represent the speaker. Another advantage of Speech2Phone over the models proposed in [8] is execution speed: our best model is a shallow fully connected neural network, which makes it faster. This is highly desirable for applications that must run in real time.

Since our method requires a specific dataset format, we were not able to evaluate training on a large dataset. We plan to address this issue in future work by expanding the model's training dataset as much as possible and making it public. Additionally, we intend to explore multispeaker speech synthesis [9] and voice cloning [3] to generate a dataset with more speakers and a larger vocabulary. We also intend to investigate a hybrid method that combines the Speech2Phone technique with the Angular Prototypical loss function, so that the model can learn from a larger amount of data while its learning is guided by the reconstruction of a phoneme (Footnote 6). Evaluating the limits of generalization across languages is another topic for future investigation. Finally, we intend to add noise to the training dataset to make the model more robust to noise (Footnote 7).

Notes

- 1.

- 2.

- 3.

- 4.

- 5.

- 6.

- 7.

References

Abadi, M., et al.: TensorFlow: large-scale machine learning on heterogeneous distributed systems. arXiv preprint arXiv:1603.04467 (2016)

Ardila, R., et al.: Common voice: a massively-multilingual speech corpus. In: Proceedings of the 12th Language Resources and Evaluation Conference, pp. 4218–4222 (2020)

Arik, S., Chen, J., Peng, K., Ping, W., Zhou, Y.: Neural voice cloning with a few samples. In: Advances in Neural Information Processing Systems, pp. 10019–10029 (2018)

Bowater, R.J., Porter, L.L.: Voice recognition of telephone conversations. US Patent 6,278,772 (21 August 2001)

Bredin, H.: TristouNet: triplet loss for speaker turn embedding. In: 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 5430–5434. IEEE (2017)

Cheng, J.M., Wang, H.C.: A method of estimating the equal error rate for automatic speaker verification. In: 2004 International Symposium on Chinese Spoken Language Processing, pp. 285–288. IEEE (2004)

Chopra, S., Hadsell, R., LeCun, Y.: Learning a similarity metric discriminatively, with application to face verification. In: 2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, CVPR 2005, vol. 1, pp. 539–546. IEEE (2005)

Chung, J.S., et al.: In defence of metric learning for speaker recognition. In: Proceedings of the Interspeech 2020, pp. 2977–2981 (2020)

Cooper, E., et al.: Zero-shot multi-speaker text-to-speech with state-of-the-art neural speaker embeddings. In: 2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), ICASSP 2020, pp. 6184–6188. IEEE (2020)

Deng, J., Guo, J., Xue, N., Zafeiriou, S.: ArcFace: additive angular margin loss for deep face recognition. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 4690–4699 (2019)

Ertaş, F.: Fundamentals of speaker recognition. Pamukkale Üniversitesi Mühendislik Bilimleri Dergisi 6(2–3) (2011)

Ferrucci, D., et al.: Building Watson: an overview of the DeepQA project. AI Mag. 31(3), 59–79 (2010)

Goodfellow, I., Bengio, Y., Courville, A.: Deep Learning. MIT Press (2016). http://www.deeplearningbook.org

Gruber, T.: Siri, a virtual personal assistant-bringing intelligence to the interface (2009)

Heo, H.S., Lee, B.J., Huh, J., Chung, J.S.: Clova baseline system for the VoxCeleb speaker recognition challenge 2020. arXiv preprint arXiv:2009.14153 (2020)

Ioffe, S.: Probabilistic linear discriminant analysis. In: Leonardis, A., Bischof, H., Pinz, A. (eds.) ECCV 2006. LNCS, vol. 3954, pp. 531–542. Springer, Heidelberg (2006). https://doi.org/10.1007/11744085_41

Kekre, H., Kulkarni, V.: Closed set and open set speaker identification using amplitude distribution of different transforms. In: 2013 International Conference on Advances in Technology and Engineering (ICATE), pp. 1–8. IEEE (2013)

Kingma, D.P., Ba, J.: Adam: a method for stochastic optimization. arXiv preprint arXiv:1412.6980 (2014)

Liu, W., Wen, Y., Yu, Z., Li, M., Raj, B., Song, L.: SphereFace: deep hypersphere embedding for face recognition. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 212–220 (2017)

Logan, B., et al.: Mel frequency cepstral coefficients for music modeling. In: ISMIR, vol. 270, pp. 1–11 (2000)

McFee, B., et al.: librosa: audio and music signal analysis in Python. In: Proceedings of the 14th Python in Science Conference, pp. 18–25 (2015)

Michalski, R.S., Carbonell, J.G., Mitchell, T.M.: Machine learning: an artificial intelligence approach. Springer Science & Business Media (2013). https://doi.org/10.1007/978-3-662-12405-5

Nagrani, A., Chung, J.S., Zisserman, A.: VoxCeleb: a large-scale speaker identification dataset. In: Proceedings of Interspeech 2017, pp. 2616–2620 (2017)

Nazaré, T.S., da Costa, G.B.P., Contato, W.A., Ponti, M.: Deep convolutional neural networks and noisy images. In: Mendoza, M., Velastín, S. (eds.) CIARP 2017. LNCS, vol. 10657, pp. 416–424. Springer, Cham (2018). https://doi.org/10.1007/978-3-319-75193-1_50

Nussbaumer, H.J.: The fast Fourier transform. In: Fast Fourier Transform and Convolution Algorithms, vol. 2, pp. 80–111. Springer, Heidelberg (1981). https://doi.org/10.1007/978-3-662-00551-4_4

Ping, W., et al.: Deep Voice 3: scaling text-to-speech with convolutional sequence learning. In: International Conference on Learning Representations (2018)

Ramoji, S., Krishnan V, P., Singh, P., Ganapathy, S.: Pairwise discriminative neural PLDA for speaker verification. arXiv preprint arXiv:2001.07034 (2020)

Schroff, F., Kalenichenko, D., Philbin, J.: FaceNet: a unified embedding for face recognition and clustering. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 815–823 (2015)

Seara, I.: Estudo Estatístico dos Fonemas do Português Brasileiro Falado na Capital de Santa Catarina para Elaboração de Frases Foneticamente Balanceadas. Master's thesis, Universidade Federal de Santa Catarina ... (1994)

Snyder, D., Garcia-Romero, D., Povey, D., Khudanpur, S.: Deep neural network embeddings for text-independent speaker verification. In: Interspeech, pp. 999–1003 (2017)

Snyder, D., Garcia-Romero, D., Sell, G., Povey, D., Khudanpur, S.: X-vectors: robust DNN embeddings for speaker recognition. In: 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 5329–5333. IEEE (2018)

Tang, Y.: TF.Learn: Tensorflow’s high-level module for distributed machine learning. arXiv preprint arXiv:1612.04251 (2016)

Veaux, C., Yamagishi, J., MacDonald, K., et al.: Superseded-CSTR VCTK corpus: English multi-speaker corpus for CSTR voice cloning toolkit. University of Edinburgh, The Centre for Speech Technology Research (CSTR) (2016)

Wan, L., Wang, Q., Papir, A., Moreno, I.L.: Generalized end-to-end loss for speaker verification. In: 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 4879–4883. IEEE (2018)

Wang, F., Cheng, J., Liu, W., Liu, H.: Additive margin Softmax for face verification. IEEE Sig. Process. Lett. 25(7), 926–930 (2018)

Wang, J., Wang, K.C., Law, M.T., Rudzicz, F., Brudno, M.: Centroid-based deep metric learning for speaker recognition. In: 2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 3652–3656. IEEE (2019)

Zhou, Y., Tian, X., Xu, H., Das, R.K., Li, H.: Cross-lingual voice conversion with bilingual Phonetic PosteriorGram and average modeling. In: 2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 6790–6794. IEEE (2019)

Acknowledgments

This study was financed in part by the Coordenação de Aperfeiçoamento de Pessoal de Nível Superior – Brasil (CAPES) – Finance Code 001, as well as by CNPq (National Council for Scientific and Technological Development) grant 304266/2020-5. We would also like to thank CyberLabs and the Itaipu Technological Park (Parque Tecnológico Itaipu, PTI) for their financial support of this paper. We also gratefully acknowledge the support of NVIDIA Corporation with the donation of the GPU used in some of the experiments presented in this research.

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Casanova, E. et al. (2021). Speech2Phone: A Novel and Efficient Method for Training Speaker Recognition Models. In: Britto, A., Valdivia Delgado, K. (eds) Intelligent Systems. BRACIS 2021. Lecture Notes in Computer Science(), vol 13074. Springer, Cham. https://doi.org/10.1007/978-3-030-91699-2_39

DOI: https://doi.org/10.1007/978-3-030-91699-2_39

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-91698-5

Online ISBN: 978-3-030-91699-2

eBook Packages: Computer Science, Computer Science (R0)