Abstract

Alongside the increased use of algorithms as decision making tools, there have been an increase of cases where minority classes have been harmed. This gives rise to study of algorithmic fairness that deals with how to include fairness aspects in the design of algorithms. With this in mind, we define a new problem of fair coverage called Multi-Attribute Fairer Cover, that deals with the task of selecting a subset for training that is as fair as possible. We applied our method to the age regression model using instances from the UTKFace dataset. We also present computational experiments for an Integer Linear Programming model and for the age regression model. The experiments showed significant reduction on the error of the regression model when compared to a random selection.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

In recent years, there have been a surge of cases where algorithms applications have been linked to some sort of discrimination towards a minority group of people. These cases are known as algorithmic injustice, or even algorithmic racism, when the discrimination is based on race. Silva [12] has compiled a timeline showing that illustrates the increasing frequency of news reports on such cases. Algorithms have been extensively used in the decision making process. The level of human participation varies in these decisions. For example, O’Neil [10] reports on the case where American teachers are evaluated by a proprietary algorithms and are required to keep a certain score. Although these scores can affect their careers, the teachers have no idea how the scores are assigned by the algorithm. In this example, the algorithms are helping on the decision making process, but one extreme case can be found in the report by Soper [13]. The author showcases how some human-resources operations from an online retailer have supposedly been transferred to algorithms. The report discusses how the decisions made by these algorithms are affecting the lives of their collaborators, culminating even on wrongful terminations.

With the increased use of these tools, the scientific community has been reacting and giving attention to the potential problems that the indiscriminate use of these tools may cause. For instance, Chung [2] has presented an extensive research of cases of algorithmic racism. They pointed out among others things that we need to address the issues of fairness during the design of the algorithm, as well as explicitly including protected attributes in auditing processes in order to properly identify bias. It has been shown that including these attributes even on the training phase can improve accuracy [6, 8].

There are several approaches to insert fairness into models and mitigate the problems a biased model may cause. For example, Galhotra et al. [4] investigated the selection of features in order to ensure a fairer model, paying special attention to sensitive characteristics. Roh et al. [11] presented a greedy algorithm to select a fair subset during the sampling step in the batch selection. Asudeh et al. [1] proposed that fairness be considered in a the coverage problem. They defined a combinatorial optimization problem that, given a universe set of colored elements and a family of subsets, aims to select k subsets such that the number of covered elements is the same for each color and the sum of the weight of these elements is maximum, showing that this problem is NP-hard. Based on this interpretation of the problem, Dantas et al. [3] proposed the Fairer Cover problem, that removes restriction of having the same number of covered elements from each class and puts this in the objective function. Therefore, the goal is to find a cover that is as fair as possible. Both of these works consider fairness as a part of the algorithm design, instead of just optimizing models regardless of the impact in the different classes.

In this work, we conjecture that a fair training subset will improve the quality of a machine learning model, specifically of a regression model. We present a new variation and application of a fair coverage problem, and we define a generalization of the Fairer Cover problem introduced by Dantas et al. [3], which we call as Multi-Attribute Fairer Cover. We propose a new Integer Linear Programming (ilp) Model to solve instances of this problem and derive instances from the facial dataset UTKFaces. We also present computational experiments for the ilp model and attest the improvement of the age regression model when using a fair subset in the training. The experiments showed significant reduction on the error of the regression model.

The remainder of this paper is organized as follows. In Sect. 2, we explain our methodology, starting with a few notations, followed by a description of the dataset used in the computational experiments and a detailing of our method to solve the fair coverage problem. In Sect. 3, we present and discuss the results of our computational experiments. Lastly, in Sect. 4, we outline our conclusions and point out future work.

2 Methodology

In order to address the task of selecting a fairer subset as a combinatorial optimization problem, it is essential to begin by introducing a few definitions. Let \(\mathcal {U}\) be the universe set of \(u_{j}\) elements. Let \(\mathcal {S}\) be a family of subsets \(\mathcal {U}\). Lastly, let \(\mathcal {C}\) represent a set of \(\chi \) colors and let \(C: \mathcal {U}\rightarrow \mathcal {C}\) be a function that assigns one color to each \(u_{j}\). We call a k-cover, a selection of k subsets from \(\mathcal {S}\), and we say that an element \(u_{j}\) is covered if there is a subset \(\mathcal {S}_{\ell }\) from a k-cover that contains \(u_{j}\). A k-cover is considered fair if the number of covered elements is the same for every color.

We refer to as tabular a dataset that is represented as a matrix of where the rows are the samples and the columns are the attributes. We consider that the cell from the i-th row and the j-th column of the dataset contains exactly one of the possible values for the attribute described by the column j. That is, if the column j represents eye color and the possible values for eye color are either blue or brown, the cell from the i-th row and the j-th column has exactly one of these two values. We can use this type of dataset to create a combinatorial optimization problem by translating it into a family of subsets and treating the task of selecting a fair subset of size k for training as the task of finding a fair k-cover.

To translate a tabular dataset into a family of subsets, we start by representing each cell of the dataset by a distinct element \(u_{j}\) and the universe set \(\mathcal {U}\) is the set containing every cell. We create subsets \(\mathcal {S}_{\ell }\) of universe \(\mathcal {U}\) based on the rows of the dataset, such that each row is represented by one subset \(\mathcal {S}_{\ell }\). For this paper, let us consider that every attribute is discrete. This way, each possible value for an attribute of the dataset can be mapped to a finite set of colors. We color the elements of \(\mathcal {U}\) based on the class of the attribute contained in the cell that it represents. Note that, since a subset \(\mathcal {S}_{\ell }\) corresponds to a row of the dataset, every subset \(\mathcal {S}_{\ell }\) contains one element for each attribute (column) and they are all of different colors. We assume that each row of a tabular dataset is complete and there is no missing data. Under this assumption, we have that every row of the dataset has the same number of valid attributes, as do the subsets representing them.

Asudeh et al. [1] defined an optimization problem called Fair Maximum Coverage – fmc. In this problem, each element has a weight and there is a family \(\mathcal {S}\) of subsets \(\mathcal {S}_{\ell }\) of \(\mathcal {U}\). The goal of the fmc is to select k subsets \(\mathcal {S}_{\ell }\) such that the k-cover formed by these subsets is fair and has a maximum sum of the weights. As pointed out by Dantas et al. [3], the interpretation of Asudeh et al. may result in a limited solution pool, often leading to infeasible instances.

Dantas et al. [3] defined a new problem called Fairer Cover – fc, based on the fmc. This problem relaxes the fairness aspect, allowing for solutions to have a different number of covered elements for each color. This was achieved by removing the need to have a fair coverage as prerequisite and tackling this aspect in the objective function. The fc problem is given in Definition 2.1, and its goal is to find a k-cover that is as fair as possible and covers at least s elements, with k and s being given in the instance. The fc was applied to the Human Protein Atlas dataset obtained from the “Human Protein Atlas - Single Cell Classification”. This dataset has a very distinct characteristic: there is a single set of attributes, which are the labels of the identified proteins. We expand the work presented in Dantas et al. [3] to deal with multiple attributes using as an example the UTKFace dataset, which will be presented in the following subsection.

By dealing with multiple classes of attributes, it becomes necessary to divide the colors that represent the values of the attributes into groups. This happens because each class of attribute may have different sizes and meanings. For example, suppose we have a tabular dataset containing the attributes eye color and age group, where the eye color can be either blue or brown and age group can be either child, teenager or adult. In addition, suppose that each row is made up of a pair of eye color and age group. Each of the values blue, brown, child, teenager and adult will be represented by one of five distinct colors. To obtain a fair coverage of this dataset we would need a subset of rows where each of the five colors has the exact same number of elements. We should not aim to have the same number of covered elements representing adult and blue because they are of different types, therefore fc and fmc are not suitable for this kinds of instances. Also, it is worth noting that in the case of the fmc, where the equality of the covered classes is a characteristic of the solution, the instance may become infeasible.

Given a list of color groups \(\mathcal {G} = \{ G_1, G_2, \dots , G_h\}\) that puts each color in exactly one group, that is, \(\bigcup _{a=1}^h G_a = \mathcal {C}\) and \(G_a \cap G_b = \emptyset \) for all \(a, b\in \{1, 2, \dots , h\}\) with \(a \ne b\). We propose a generalized version of the fc called Multi-Attribute Fairer Cover – mafc as shown in Definition 2.2. This version of the problem considers the fairness among the colors of the group, that is, our goal is to have a selection that is as fair as possible when analyzed in each group of colors individually. Note that, if \(h=1\), we have a problem equivalent to fc.

The definition of the mafc differs from that of fc in two main points, one is the already discussed need to consider the fairness among the groups of elements and the other is the removal of parameter s that specifies the minimum number of elements to be covered. In an instance where every subset \(\mathcal {S}_{\ell }\) has the same size t, a k-cover always covers exactly \(s\times t\) elements. Therefore, there is no need to specify a minimum number of covered elements.

In the remainder of this section, we describe the dataset used in our experiments, as well as the proposed method for selecting a fair coverage and the model employed for the age regression task. We also present the evaluation metrics and the setup used for our experiments.

2.1 UTKFace Dataset

To evaluate our proposed method for a fair selection of samples, we use UTKFace dataset [14] in our experiments. This database consists of face images of people with different characteristics, which includes age (integer value ranging from 1 to 116), gender (Male or Female), and race (White, Black, Asian, Indian, and Other, that is, Hispanic, Latino, and Middle Eastern). It is worth noting that even though the age ranges from 1 to 116, the dataset does not contain samples for every value. In fact, there are only 104 distinct values.

From the original UTKFace dataset, we split it into 64%, 16%, and 20% for training, validation, and test sets, respectively. Table 1 presents the number of samples of each set of our split from UTKFace.

The frequencies of gender and race of training, validation, and test sets are presented in Figs. 1a and 1b. Concerning gender, there are more images of males than females in all three sets, although this difference is less accentuated. Considering race, the White class is more frequent, followed by Black, Indian, and Asian, and Other (Hispanic, Latino, and Middle Eastern) have the lowest representation in the sets. It is worth noting that the most frequent class is roughly double the second most frequent class, which showcases how unbalanced the dataset is.

2.2 Proposed Method

In this subsection, we describe the proposed method for fair age regression, divided into ilp and age regression models.

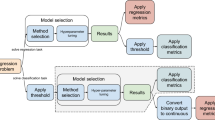

The pipeline of the proposed method, with ilp and age regression models, is illustrated in Fig. 2. From the original UTKFace images and annotations, we created instances for the mafc combinatorial problem. We then solved each instance of the mafc using an ilp model. From the ilp solution, we obtained a fair training subset considering age, gender, and race. Lastly, we trained and evaluated the predictions of the age regression model.

ILP Model. In this section we present an ilp formulation that models the mafc problem. The model is described by Eqs. (1a) - (1g).

The model uses three decision variables: \(x_j\), \(y_\ell \) and \(Q_c\). The first two are binary and the last one is a positive integer. The variable \(x_j\) indicates whether the element \(u_{j}\) is covered and the variable \(y_\ell \) indicates whether the subset \(\mathcal {S}_{\ell }\) was selected to be a part of the k-cover. The variable \(Q_c\) indicates the difference in the number of covered elements that have color c and the ideal number of covered elements that have color c. The ideal number of each color is given by the constants \(I_c\), the estimation for this value can be adjusted depending on the application.

The model also uses the constants h to represent the number of color groups, m to indicate the number of elements in \(\mathcal {U}\), k to indicate the number of subsets to be selected, n to define total number of subsets, and \(\chi \) to specify the total number of colors used in the instance. We also define subsets \(C_c\), which are the subsets of the elements \(u_{j}\) that are colored with the color c.

Considering the concept of fairness as the equality among the classes in the cover and the objective of the mafc as it is stated in Definition 2.2, we can consider the task of finding a k-cover that is as fair as possible as the minimization of the differences among the number of covered elements in each class. Dantas et al. [3] modeled this directly in the objective function as the minimization of the difference in the number of color of each pair of colors. This approach might not be viable when the number of color increases, if we have for example 100 colors it would be necessary to minimize the sum of \(\frac{100\times 99}{2} = 4950\) pairs of colors. Therefore, we choose to minimize the difference in the number covered elements for a given color c to its ideal value \(I_c\). The ideal value \(I_c\) can be easily estimated, since we know the number of colors in each group of colors and the number of selected subsets. In the case of a tabular dataset we can find the exact number \(I_c\) by simply dividing k by the number of colors in the group \(G_a\) to which c belongs. Going back to the example given in Sect. 2, the ideal number of elements covered representing the attribute blue is \(\frac{k}{2}\), since the class in which blue is contained has size two. With these definitions, the ilp model reads:

The objective function that reflects this modeling decision is given in Eq. (1a). The remaining equations describe the restrictions of the model, through which we specify the characteristics of a solution.

Equation (1b) indicates that an element \(u_{j}\) can only be considered covered (\(x_j = 1\)), if there is at least one subset \(\mathcal {S}_{\ell }\) such that \(u_{j} \in \mathcal {S}_{\ell }\) and this subset is selected to be in the cover (i.e., \(y_\ell = 1\)). Equation (1c) specifies that if a subset \(\mathcal {S}_{\ell }\) is selected to be in the cover (\(y_\ell = 1\)), then every element \(u_{j}\) of \(u_{\ell }\) is considered to be covered, along with the previous set of restrictions we guarantee the basic characteristics of a k-cover. Equation (1d) restricts that exactly k subsets must be selected to form a k-cover.

Equations (1e) and (1f) are complementary and together they are used to define the value of the decision variable \(Q_c\). Note that the sums of \(x_j\) are used to indicate the number of covered elements with color c. Suppose that this sum is smaller than the ideal value of \(I_c\). Then, Equation (1e) states that \(Q_c\) must be larger than a negative value and, since \(Q_c\) must be positive, this restriction is satisfied by any possible value of \(Q_c\). Now in the same scenario, Equation (1f) establishes that \(Q_c\) must be larger than a positive value. Although there is no positive upper bound on the variable \(Q_c\), we know that in an optimal solution this value will not be greater than the value of the difference. This is true because the objective function is a minimization on the value of \(Q_c\). Lastly, Equation (1g) indicates the domain of the decision variables.

Age Regression Model. For evaluation of ILP model and comparison with random selection of sub-samples of the original UTKFace dataset, we fine-tune ResNet50 architecture [5] for regression task about age prediction of each image. To do so, we remove the original output layer from ImageNet database [7] and we include the regression output layer, which consists of a single neuron, in order to predict a single float value corresponding to the age of each sample.

As each image from the dataset can have different lengths of pixel dimensions, that is, width and height sizes, we resize each image to have the same shape. With this preprocessing step, all images are transformed to 128 pixels of width and 128 pixels of height.

2.3 Evaluation Metrics

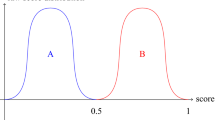

To assess our proposed method and comparison with a random selection of images, we apply Balanced MAE during our experiments. Balanced MAE is derived from Mean Absolute Error (MAE), which is presented in Eq. 2, where n indicates the number of samples, \(y_{i}\) and \(\hat{y}_i\) represent the ground-truth and predicted values for sample i, respectively.

Based on the MAE score for each age, that is, the value calculated using only the images from a specific age, we calculate Balanced MAE (BMAE), as shown in Eq. 3. In this equation, c represents the number of ages evaluated at the current stage and \({\text {MAE}}_{j}\) indicates the MAE score for a specific age j.

It is important to note that BMAE gives the same importance for each age, that is, independently of the number of samples of a specific age. For example, the age with the majority number of samples, it has the same impact as any other age (for example, the age with the minority number of samples). Since we are aiming for a model that has a fair training in several aspects, including the coverage of the ages, this score is more appropriate when compared to a simple MAE, where we would have that errors in ages that are underrepresented would have a lesser impact on the overall metric.

3 Computational Results

In this section, we present and discuss the results of our computational experiments. Our experiments are divided into two phases: experimentation with the ilp model and experimentation with the age regression model. In both phases, we analyze the concepts of fairness and how they are manifested in each case.

3.1 Computational Setups

Before we present our computational results, let us first present the setups used in each phase of our experiments.

ILP Setup: We used the IBM CPLEX Studio (version 12.8) to solve the combinatorial optimization instances described in the previous sections. We used the programming language C++ to implement the model described in Sect. 2 with compiler g++ (version 11.3.0), flags C++19 and -O3. The experiments reported in Sect. 3.2 were run in a Ubuntu 22.04.2 LTS desktop with Intel® Core™ i7-7700K and 32 GB of RAM.

Age Regression Model Setup: The age regression model was implemented using the programming language Python and TensorFlow library. To train and evaluate our model, we used Google Colaboratory virtual environment.

During the training step, the model was trained during 200 epochs, with early stopping technique with patience of 20 epochs and reduction of learning rate by a factor of \(10^{-1}\) if the model did not improved the results on validation set after 10 epochs. For the optimization process, we employed MAE as loss function and AdamW optimizer [9] with initial learning rate equal to \(10^{-4}\). It is worth noting that we tuned these parameter with experiments on the full training dataset, without any fairness adjustments. We followed this configuration for both fair and random selection of images for age regression models.

3.2 ILP Model Results

With the model implemented, we started the experiments with the different instances created from the UTKFace dataset. To create the instances, we considered the annotations of gender, age and race provided in the dataset to be a tabular set with three attributes and 15172 samples, that is, we considered only the images from training split of the original dataset.

For each possible value of the three attributes, we assigned a distinct color and created the sets corresponding to the each sample. Note that each subset of elements has size three, since it contains one element for each attribute. We specified four arbitrary values for k to use in the instances: 1000, 2000, 5000, 10000. These numbers will specify the sizes of the cover.

After defining the elements, the sets, the coloring and the values for the parameter k, we needed to estimate the ideal values for each color. In a perfect scenario, the ideal value \(I_c\) for a given color c would be defined as \(\frac{k}{|G_a|}\), where \(c \in G_a\), but from analyzing the dataset, we saw that this cannot always be achieved since there were sub-represented colors in the dataset. For these cases we devised a simple procedure to compute the value \(I_c\) for each color group \(G_a\).

We start the procedure by defining a temporary \(T_i\) ideal value as \(\frac{k}{|G_a|}\). For every color in \(c \in G_a\) that has a frequency smaller than \(T_i\), we set \(I_c\) as the value of the frequency of color c, next we update \(T_i = \frac{k - k^\prime }{|G_a| - n^\prime }\), where \(k^\prime \) is the sum of frequency of the colors that just had their ideal value \(I_c\) set and \(n^\prime \) is the number of said colors. We repeat this until every color has a frequency of at least \(T_i\). The remaining colors have their \(I_c\) value set to \(T_i\). By doing this, we set realistic ideal values for each class. That is, the classes that have a frequency lower than the ideal, have ideal value set to their respective frequencies because the solution has to respect this limitation. Then, the remaining classes have a new ideal value that accounts for the elements that are underrepresented.

In our initial experiments, we noticed a tendency of the model’s solutions to have a discrepancy in the covering when considering the combinations of the attributes. In Fig. 3, we show the distribution of gender (Fig. 3a) and race (Fig. 3b) for each of the ages in the training split of the dataset. This figure considers that the parameter k is set to 1000. Since our model is considering only the equality of each attribute separately, we had several cases where all the covered images of an given age were just from the class Male or just the class Female, as shown in Fig. 3a. In Fig. 3b, most ages are predominantly from a single race, in special the race Other is predominant in the ages 20 through 40.

This showed a possible problem for our model, that points towards a necessity to consider also the pairings of the attributes. This aspect could be considered either in the model for the problem or in the instance. For example, we could add to model restrictions that decrease the difference of each pair formed by one color from group \(\mathcal {G}_a\) and one color from group \(\mathcal {G}_b\). We choose to contour this problem by inserting a new element to each set, and color it with colors from a fourth group. This new element was colored based on the three original attributes combined. That is, if the sample corresponded was annotated as \(\{\texttt {male}, \texttt {black}, \texttt {3 years old}\}\), the new element received the color c and every other sample that had this exact three characteristics also received color c in the fourth element. Note that if any of the three characteristics is different, the color of the fourth element would also be different.

This approach increases the size of the instance, since the new element added to each set has the potential to add 2 genders \(\times 5\) races \( \times 104 \) ages \( = 1040\) new colors. This was not the case because the dataset does not have a sample for each of possible triples formed by the attributes. The increase on the instance size (more colors and more elements) is an aspect that cannot be avoided, either we increase the instance or increase the number of restrictions in the modeling. By creating this new element we avoid modifying the model and the problem definition. We note that we could also have considered pairs of attributes to color new elements in the set, but this would result in a much larger instance.

With these modifications, we executed the model with the new instances and we can see the impact of these changes in Fig. 4. We now have a more even distribution of the both gender and races in each age. It is worth noting that the dataset itself, has some gaps that will reflect in the possibility of equality in our problem. For example, the samples for ages larger than 100 years are all of female subjects. Over the age of 80 years, we do not have samples of the race Other, which tends to skew the selection of the classes that are present in these ages. That is, despite the race White being the most frequent in every age, its presence becomes more dominant on the larger ages because there are no other options to select the sets and also maintain the aspect of fairness in the colors referent to the classes of ages.

The distribution of each attribute in the solutions given by the model can be seen in Fig. 5. The first two graphs show the frequency of the elements of each class that were covered in the solution. In Fig. 5a, the values for the gender are very close to the ideal for each of the values of k, meaning that for this attribute the model found a near perfectly fair solution. Now, in Figs. 5b and 5c, we start to see small variations on the number of covered elements of each age and class of race. Such variations are accentuated when k is larger, as expected, because the least common attributes will reach their limits.

It is worth noting that the solutions obtained by the ilp model are optimal solutions. There was a time limit of 30 min for the executions of these experiments, but this limit was never reached. All instances finished execution within a few seconds, even after increasing the size of the instance with the addition of the fourth element to each set.

3.3 Age Regression Results

Based on the fair subsets generated by ilp model, we ran each experiment for the regression task. We also ran experiments for a random selection of the images, considering the same size of each fair set. For each experiment, we executed five runs and compared the mean value of each configuration.

In our first analysis, we evaluated fair and random sets for each size considering BMAE metric. Table 2 presents the results, showing that fair achieved the best outcomes of each size. We can highlight that fair selection outperformed random selection on smallest sets, with the difference of more than 2 years on 1000 set and more than 1 year on 2000 and 5000 sets on average for each age.

The average error for each age of fair selection based on ilp model is lower than random selection, as shown in Fig. 6. The fair selection achieved the lowest errors on ages with more than 80 years on sets with a limited number of images, which are the ages with less number of samples on the dataset.

Then, we assessed ilp fair and random sets considering gender and race classes. To do so, we filtered images from the condition in analysis, e.g., images of gender male, and evaluated using BMAE. Table 3 presents the outcomes of each selection approaches. Concerning gender, the fair set obtained the lowest errors on all the four training sizes against random selection, with the highest difference on training set with 1000 images.

Considering race, fair selection also presented best outcomes compared to random selection in all the classes for each training set size for k greater than 2000, and the best results for most of the classes on k equal to 1000. For White, Black, Asian, and Indian classes, which are the four most frequent ones, the highest difference of fair and random selections occurred on the smallest training set, that is, set with size equal to 1000, and the difference decreases considering the size of the training size. For Other, which is the least frequent class, the difference tends to increase considering the size of the training set. We can conclude that this occured due to the frequency of Other class, which represents less than 10% of the original training set, as well as the validation and test sets.

It is worth noting that, although the classes representing race and gender are very balanced, as shown in Fig. 5, indicating that we have a fair cover on these characteristics, we still have a noticeable disparity in the BMAE when considering the different classes of race and gender. For example, the error for the regression of Female is always greater than that of Male. We also have the race Black has a greater error consistently in all cases. This behavior is present in both the training with a fair subset and with a random subset. Although the training with the fair subset has decreased this difference in every case.

4 Conclusions and Future Work

In this paper, we defined a generalized version of the Fairer cover, called Multi-Attribute Fairer Cover. In this version of the problem, we were able to handle the selection of a training subset on a tabular dataset that has multiple attributes and applies the fairness condition to each attribute individually. We present an Integer Linear Programming (ilp) formulation that models this problem and also detail a procedure to create a combinatorial instance from a tabular dataset. We presented experiments with our ilp model and instances derived from a real application dataset. The model achieved a good performance, returning the optimal solution to every instance tested in just a few seconds. We also analyzed the solutions given by the model, and showed how to adjust the instance in order to satisfy the fairness condition through the pairing of the attributes.

We developed an age regression model to test the training with the different sizes of fair subsets obtained with the ilp model and compared these models with their random counterparts. We found that error was reduced when using the fair subsets, specially when dealing with more advanced ages. We also reduced the disparity in error between the samples of the different genders and races.

As future work, we intend to investigate how the fair training subset affects a different regression models specifically tuned for fairness. Since the UTKFace dataset has annotations on both race and genders, we also intend to experiment with our model on other tasks, such as race and gender classification, and investigate how to decrease the error disparity between gender and race classes.

References

Asudeh, A., Berger-Wolf, T., DasGupta, B., Sidiropoulos, A.: Maximizing coverage while ensuring fairness: a tale of conflicting objectives. Algorithmica 85(5), 1287–1331 (2023)

Chung, J.: Racism In. Public Citizen, Racism Out - A Primer on Algorithmic Racism (2022)

Dantas., A.P.S., de Oliveira., G.B., de Oliveira., D.M., Pedrini., H., de Souza., C.C., Dias., Z.: Algorithmic fairness applied to the multi-label classification problem. In: 18th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications - Volume 5: VISAPP, pp. 737–744. SciTePress (2023)

Galhotra, S., Shanmugam, K., Sattigeri, P., Varshney, K.R.: Causal feature selection for algorithmic fairness. In: International Conference on Management of Data, SIGMOD2022, pp. 276–285. Association for Computing Machinery (2022)

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Conference on Computer Vision and Pattern Recognition, pp. 770–778. IEEE (2016)

Kleinberg, J., Ludwig, J., Mullainathan, S., Rambachan, A.: Algorithmic fairness. AEA Papers Proc. 108, 22–27 (2018)

Krizhevsky, A., Sutskever, I., Hinton, G.E.: ImageNet classification with deep convolutional neural networks. In: Advances in Neural Information Processing Systems (NIPS), pp. 1097–1105. Curran Associates, Inc. (2012)

Lin, Y., Guan, Y., Asudeh, A., Jagadish, H.V.J.: Identifying insufficient data coverage in databases with multiple relations. VLDB Endowment 13(12), 2229–2242 (2020)

Loshchilov, I., Hutter, F.: Decoupled weight decay regularization, pp. 1–11 (2019). arXiv:1711.05101

O’Neil, C.: Weapons of math destruction: how big data increases inequality and threatens democracy. In: Crown (2017)

Roh, Y., Lee, K., Whang, S., Suh, C.: Sample selection for fair and robust training. Adv. Neural. Inf. Process. Syst. 34, 815–827 (2021)

Silva, T.: Algorithmic racism timeline (2020). https://bit.ly/3O6RJYC

Soper, S.: Fired by Bot at Amazon: ‘It’s You Against the Machine’ (2021). https://www.bloomberg.com/news/features/2021-06-28/fired-by-bot-amazon-turns-to-machine-managers-and-workers-are-losing-out, https://bit.ly/3IvhUXy

Zhang, Z., Song, Y., Qi, H.: Age progression/regression by conditional adversarial autoencoder. In: Conference on Computer Vision and Pattern Recognition, pp. 5810–5818. IEEE (2017)

Acknowledgements

The authors would like to thank the São Paulo Research Foundation [grants #2017/12646-3, #2020/16439-5]; the National Council for Scientific and Technological Development [grants #306454/2018-1, #161015/2021-2, #302530/2022-3, #304836/2022-2]; the Coordination for the Improvement of Higher Education Personnel; and Santander Bank, Brazil.

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Dantas, A.P.S., de Oliveira, G.B., Pedrini, H., de Souza, C.C., Dias, Z. (2023). The Multi-attribute Fairer Cover Problem. In: Naldi, M.C., Bianchi, R.A.C. (eds) Intelligent Systems. BRACIS 2023. Lecture Notes in Computer Science(), vol 14195. Springer, Cham. https://doi.org/10.1007/978-3-031-45368-7_11

Download citation

DOI: https://doi.org/10.1007/978-3-031-45368-7_11

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-45367-0

Online ISBN: 978-3-031-45368-7

eBook Packages: Computer ScienceComputer Science (R0)