Abstract

Regression models are commonly used to model the associations between a set of features and an observed outcome, for purposes such as prediction, finding associations, and determining causal relationships. However, interpreting the outputs of these models can be challenging, especially in complex models with many features and nonlinear interactions. Current methods for explaining regression models include simplification, visual, counterfactual, example-based, and attribute-based approaches. Furthermore, these methods often provide only a global or a local explanation. In this paper, we propose Hybrid Multilevel Explanation (HuMiE), a method that enhances example-based explanations for regression models. In addition to a set of instances capable of representing the learned model, HuMiE provides a complete understanding of why an output is obtained by explaining the reasons in terms of attribute importance and expected values in similar instances. This approach also provides intermediate explanations between global and local explanations by grouping semantically similar instances during the explanation process. The proposed method offers a new way of understanding complex models. In our experiments, it found examples statistically equal to or better than those of the main competing methods and provided explanations coherent with the context of the explained model.

This work was supported by FAPEMIG (through the grant no. CEX-PPM-00098-17), MPMG (through the project Analytical Capabilities), CNPq (through the grant no. 310833/2019-1), CAPES, MCTIC/RNP (through the grant no. 51119) and IFMG - Campus Sabará.

1 Introduction

Regression models are widely used in various fields, including medicine, engineering, finance, and marketing, among others. They model associations between a set of features and an observed outcome, and can be used for: (i) prediction, when, given a set of feature values, we want to interpolate or extrapolate from observations to estimate the outcome; or (ii) finding associations between the independent features and the outcome and determining how strong these associations are.

When regression models can account for non-linear data relationships, the produced models cannot be directly interpreted by analyzing their coefficients, as is usual in linear regression. As these models can be complex, with many features and nonlinear interactions, methods able to explain the reasons that lead to a regression model’s output are important, especially in critical areas that can affect human life.

Current methods for explaining regression models provide different approaches, including simplification, visual, counterfactual, example-based, and feature-based explanations [3, 9, 16]. Each of these approaches provides complementary explanations for the model’s output, but often they do not provide a complete understanding of why an output occurs. Additionally, these approaches usually provide a global explanation of the overall model behavior or a local explanation about the output of a specific instance.

In this paper we propose Hybrid Multilevel Explanation (HuMiE), a method for explaining regression models that builds upon example-based explanations. Example-based explanations have the advantage of being similar to the human way of explaining things by similarity to past events, but they often do not provide a complete understanding of why an output occurs. Our proposed method, on the other hand, provides a complete understanding of the reasons why an output is obtained, in terms of feature importance and expected values in similar instances.

For each selected example, HuMiE explains the reasons why the output is obtained in terms of feature importance and expected values in similar instances. Furthermore, the method provides intermediate explanations between the possibilities of global and local explanations, grouping semantically similar instances during the explanation process and bringing new possibilities of output understanding to the user.

The remainder of this paper is organized as follows. In Sect. 2, we review the existing literature on explanation methods for regression models. In Sect. 3, we introduce HuMiE. In Sect. 4, we report the experimental results. Finally, in Sect. 5, we report our conclusions and possibilities of future work.

2 Related Work

As models created by machine learning algorithms are increasingly used to make critical decisions in our daily lives, so grows the concern to understand the internal mechanisms that lead such models to their outputs.

Machine learning models can be divided into two categories: those that are intrinsically interpretable and those that are considered black boxes. Intrinsically interpretable models are easily understandable by humans, and include linear models and decision trees. In black-box models, in turn, a human cannot easily understand the internal mechanisms that led to the output [13]. It is fair to assume that currently the most accurate models are precisely of this type [9]. Examples include Support Vector Regressors (SVR) and Neural Network Regression (NNR).

The eXplainable Artificial Intelligence (XAI) literature encompasses methods generated for explaining black-box models, with an emphasis on classification tasks [12]. More recently we have seen various initiatives aimed to explain regression models, where the output is represented by a continuous value instead of a categorical one.

Among the main explanation approaches described in the literature, we have: i) simplification, where mathematical substitutions are made, generating more trivial algebraic expressions; ii) visual, where the model is explained through graphical elements [1, 23]; iii) counterfactual, where methods demonstrate that minimal modifications made to the input data can significantly modify the output [8, 17]; iv) feature-based, where methods analyze the characteristics of the data (including, for example, statistical summaries and feature importance) that cause a given black-box model to perform a prediction [20, 22]; and v) example-based, where representative instances of the model (also called prototypes) are created or selected from the training set to generate a global explanation [2, 10, 11, 15], and local explanations are based on the distance of these prototypes to the instance to be explained.

In this context, we observe that example-based explanation approaches, although working in a way similar to humans in the sense of justifying events based on analogies by similarity, end up not fully explaining why a similar instance resulted in a specific output. Therefore, we propose a hybrid multilevel approach that fills this gap, providing feature-based explanations that demonstrate, for each selected example, how its output was obtained.

One of the few works in the literature that has already developed a multilevel approach is MAME (Model Agnostic Multilevel Explanations) [19]. According to the MAME authors, little attention has been given to obtaining insights at an intermediate level of model explanations. MAME is a meta-method that builds a multilevel tree from a given method of local explanation. The tree is built by automatically grouping local explanations, without any hybrid character combining different types of explanation. The intermediate levels are based on this automatic grouping of the local explanations. Furthermore, the local explanation methods evaluated in MAME were not specifically built to work with regression problems, which can be problematic when each instance output ranges over a continuous set of possible values. Finally, the MAME authors did not make available either the source code or the tree generated for the only regression dataset used in their work (Auto-MPG), which makes any kind of comparison between the approaches difficult.

3 Hybrid Multilevel Explanations

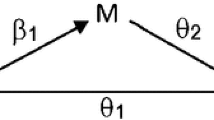

This section introduces Hybrid Multilevel Explanation (HuMiE), an explanation method that combines both example-based and feature-based explanations. HuMiE produces a tree where each node represents a prototype selected from the training set. After prototypes are selected, a feature-based approach is used to provide further details on the model’s decision.

The dynamics of creating this explanation tree are exemplified in Fig. 1. In the figure, each square represents an instance from the training set (T), and the colors of the squares reflect the values of the features of the instances. We calculate the similarity between instances using these features. In this example, HuMiE first selects two prototypes as the root of the tree (r). After that, the distance from the remaining instances to each prototype is calculated, and each instance is associated with its most similar prototype, generating 2 subgroups. For each of these subgroups, again two child prototypes are chosen. This process is repeated until the tree reaches the height (h) defined by the user.
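The grouping step described above can be sketched as follows. This is a minimal illustration and not the authors' implementation: the function name `assign_to_prototypes`, the toy data, and the use of plain Euclidean distance as the similarity measure are all assumptions made for the example.

```python
import math

def assign_to_prototypes(instances, prototype_ids):
    """Group instance indices by their closest prototype.

    Assumption: Euclidean distance stands in for the similarity
    measure; the paper's own measure is defined later in the text.
    """
    groups = {p: [] for p in prototype_ids}
    for idx, x in enumerate(instances):
        if idx in groups:
            continue  # a prototype is not reassigned to another prototype
        closest = min(prototype_ids, key=lambda p: math.dist(x, instances[p]))
        groups[closest].append(idx)
    return groups

# Toy training set T with two obvious clusters (hypothetical values).
T = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.3), (5.0, 5.1), (5.2, 4.9), (4.8, 5.0)]
subgroups = assign_to_prototypes(T, prototype_ids=[0, 3])
print(subgroups)
```

With the two root prototypes at indices 0 and 3, the remaining four instances split into two subgroups, each of which would then receive its own child prototypes.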

Having a tree of prototypes, for each node of the tree, HuMiE shows the most relevant features considering the prototype associated with that node, together with the range of values assumed by the instances of the subgroup represented by that prototype. Finally, HuMiE allows for a local explanation of a test instance by selecting the leaf-level prototype most similar to the instance to be explained.

Different algorithms can be used both to select the prototypes and to determine feature importance. In this paper, we use M-PEER (Multiobjective Prototype-basEd Explanation for Regression) [7] to select prototypes and DELA (Dynamic Explanation by Local Approximation) [5] to determine feature importance, although they could be replaced by other algorithms.

3.1 Prototype Selection

The prototype selection is done by M-PEER. M-PEER is based on the SPEA-2 evolutionary algorithm and simultaneously optimizes three metrics: fidelity of global prototypes, stability of global prototypes, and Root Mean Squared Error (RMSE).

The fidelity and stability measures were originally proposed in the context of classification [14, 18] and adapted in [7] for regression. The fidelity metric assesses how close the output of the chosen prototypes is from the output of the instances to be explained, and is defined in Eq. 1.

The second metric, global stability, measures whether the characteristics of the prototypes are similar to the characteristics of the instances to be explained, as defined in Eq. 3.

where \(x_i\) and \(x_j\) are the features of instances to be compared, d is the number of dimensions, and \(dist_{Mink}\) is the Minkowski distance. In addition, \(\overline{GS}\) and \(\overline{GF}\) are normalized using min-max.
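As a reference for the distance mentioned above, the following is a small sketch of the textbook Minkowski distance between two feature vectors. The function name `minkowski` and the exponent parameter `p` are assumptions for illustration; how the exponent is chosen in M-PEER is not stated in this section.

```python
def minkowski(x_i, x_j, p=2):
    """Textbook Minkowski distance of order p between two feature vectors.

    p=1 gives the Manhattan distance and p=2 the Euclidean distance.
    The choice of p here is an assumption, not taken from the paper.
    """
    if len(x_i) != len(x_j):
        raise ValueError("feature vectors must have the same number of dimensions")
    return sum(abs(a - b) ** p for a, b in zip(x_i, x_j)) ** (1.0 / p)

# Two hypothetical instances with d = 2 features.
d_euclid = minkowski((0.0, 0.0), (3.0, 4.0), p=2)
d_manhattan = minkowski((0.0, 0.0), (3.0, 4.0), p=1)
print(d_euclid, d_manhattan)
```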

In M-PEER, the authors observed that the obtained results were better when the \(\overline{GS}\) and \(\overline{GF}\) metrics are used together through the GFS combination, shown in Eq. 5. By using GFS we make the similarity take into account both the features of the instance and the output predicted by the model.

Finally, the error is used to ensure that the selected set of prototypes is as close as possible to the error of the black-box model.
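For reference, the RMSE used as the third objective can be computed as in the sketch below. The function name `rmse` and the toy values are assumptions; exactly which predictions M-PEER compares when evaluating a prototype set is not detailed in this section.

```python
import math

def rmse(y_true, y_pred):
    """Root mean squared error between two equally long sequences."""
    if len(y_true) != len(y_pred):
        raise ValueError("sequences must have the same length")
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical outputs: every prediction is off by exactly 1.
error = rmse([2.0, 4.0, 6.0, 8.0], [1.0, 5.0, 5.0, 9.0])
print(error)
```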

3.2 Feature Importance

After selecting the prototypes, we want to obtain a feature-based explanation showing what motivated the output obtained for the specific instance. For this, we use the DELA explanation method.

DELA is an improvement on the work presented by ELA (Explanation by Local Approximation) [6]. By analyzing the coefficients found in local linear regressions, this method provides a global explanation of the model, using a stacked area chart where the user can check the feature importance for different output values, and also provides local explanations for specific instances through the local importance of the features, expected value ranges, and the coefficients of the local explanation. Additionally, DELA considers the characteristics of the data sets used in the model training stage, chooses the most appropriate distance measure to be used, dynamically defines the number of neighbors that will be used for local explanation according to the local data density, and calculates feature importance based on the location of the test instance.

DELA calculates the most relevant features of a prototype using Eq. 6:

\(LevelImportance_i\) is defined as:

where \(b_i\) is the coefficient returned for feature i and \(V_i\) the vector of values found in the neighboring examples (set of closest instances) for feature i - and the minimum and maximum values of each feature, extracted considering the prototype and the instances in its subgroup.

3.3 Algorithm

The method is illustrated in Algorithm 1. It receives as input the training (T) and test (\(T'\)) sets, together with the height h of the explanation tree to be built and the number r of root nodes present in the tree, where the root nodes represent the global explanations of the model.

In line 1, we create the regression model to be explained. Note that HuMiE is model agnostic, meaning that it can explain any regression model. To ensure semantically valid results, HuMiE works with continuous features only. Any categorical feature should be pre-processed. Next, we initialize some important variables for building the multilevel tree. In line 2, the tree is initialized as an array. This array will be filled with tuples containing three pieces of information: the selected prototype, the prototype’s depth level in the tree, and the prototype at the previous level (parent) of the selected prototype. In line 3, a dictionary is initialized to store, for each selected prototype, the minimum and maximum values of each feature found in the subset of instances from which the prototype was chosen. This information is later used to show the minimum and maximum values of each feature within the subset that the prototype represents. In line 4, an array is initialized to store the prototypes already chosen during the tree construction process. Finally, in line 5, we initialize an array to store the set of prototypes selected at the level above. As the explainer is built in a top-down fashion (i.e., starting from the root level), the root has no higher-level prototype, and this variable is initialized with \(-1\). In line 6, the number of prototypes of the root level is set to the value of r received as a parameter by the algorithm.

After that, we carry out the selection of the prototypes that will belong to the tree in the loop between lines 7 and 29. Note that the construction of this tree starts from the root, which represents the global explanation of the model. After that, for each root-level prototype selected, we expand it up to the level limit h, defined by the user. Line 10 defines that, for intermediate levels of the explanation tree, we have a binary division of the prototypes. In the loop of lines 11 to 13, we check, for all instances of the training set, which prototype of the previous level is the most similar to represent it. That is, we redistribute the training points to identify the most suitable prototypes in each subset. Note that if we are at the first level this array will be an empty set (line 8). To measure the similarity between the instances we use the L2 norm of the normalized values of the LF and LS measures (Eq. 8).
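The bookkeeping described above (the array of tuples and the dictionary of feature ranges) can be sketched as follows. This is an illustration under assumptions: the helper `feature_ranges`, the variable names, the toy two-feature data, and the convention that the root level is numbered 1 are not taken from Algorithm 1.

```python
def feature_ranges(instances, member_ids):
    """Per-feature (min, max) over the subgroup a prototype was chosen from."""
    columns = zip(*(instances[i] for i in member_ids))
    return [(min(col), max(col)) for col in columns]

# Toy data: (cylinders, displacement) for four hypothetical cars.
T = [(8, 455.0), (8, 260.0), (4, 68.0), (4, 97.0)]

tree = []     # entries are (prototype index, depth level, parent prototype)
ranges = {}   # prototype index -> [(min, max), ...], one pair per feature

# A root prototype is chosen from the whole training set; its parent is -1.
root, root_members = 0, [0, 1, 2, 3]
tree.append((root, 1, -1))
ranges[root] = feature_ranges(T, root_members)

# A child prototype is chosen from the subgroup assigned to the root.
child, child_members = 1, [0, 1]
tree.append((child, 2, root))
ranges[child] = feature_ranges(T, child_members)

print(tree)
print(ranges)
```

The child's displacement range is narrower than its parent's, which is the kind of refinement the tree is meant to expose at lower levels.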

Here, \(\overline{LF}\) and \(\overline{LS}\) are the values of the original metrics normalized by min-max.
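A minimal sketch of this similarity computation is given below. It assumes that the raw LF and LS values between one instance and each candidate prototype have already been computed, that lower values mean more similar, and that min-max normalization is applied across the candidates; the function names `min_max` and `l2fs` and the numbers are hypothetical.

```python
import math

def min_max(values):
    """Rescale values to [0, 1]; a constant list maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def l2fs(lf_values, ls_values):
    """L2 norm of the min-max normalized (LF, LS) pair for each candidate."""
    lf_n, ls_n = min_max(lf_values), min_max(ls_values)
    return [math.hypot(f, s) for f, s in zip(lf_n, ls_n)]

# Hypothetical raw values between one instance and three prototypes.
lf = [0.5, 2.0, 3.5]   # assumed gaps between model outputs
ls = [1.0, 4.0, 2.0]   # assumed distances between feature vectors
scores = l2fs(lf, ls)
best = min(range(len(scores)), key=scores.__getitem__)
print(scores, best)
```

Combining both normalized terms in one norm means a prototype is preferred only when it is close to the instance in both model output and feature space.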

In the loop between lines 15 and 28 we find the prototypes that represent each of the subsets divided by the prototypes in the previous iteration. To accomplish this, in line 20, we check the indexes of valid training points, i.e., all training points explained by the same prototype in the previous tree level, excluding instances already selected as prototypes. The conditional on line 17 verifies whether the chosen prototypes are from the root level; if so, all instances of the training set make up the list of valid indexes. In line 20, the M-PEER method is called for this subset of instances and the chosen prototypes are added to the tree in line 21. In line 22, the minimum and maximum values of all instances used to select each of the prototypes are recorded, and in line 24 the set of selected prototypes is added to the array of previously selected prototypes. Finally, the condition in line 25 tests whether we have reached the last level h defined by the user, which corresponds to the leaf nodes of the tree that will later be associated with test instances.
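The top-down construction described above can be sketched as follows. This is not Algorithm 1 itself: `pick_prototypes` is a deliberately naive, hypothetical stand-in for M-PEER, Euclidean distance stands in for the similarity of Eq. 8, and the redistribution of instances is done at the end of each level rather than at the start of the next one.

```python
import math

def pick_prototypes(instances, candidate_ids, k):
    """Hypothetical stand-in for M-PEER: k evenly spaced candidates
    after sorting by feature values. Only for illustration."""
    ordered = sorted(candidate_ids, key=lambda i: instances[i])
    if len(ordered) <= k:
        return ordered
    step = len(ordered) / k
    return [ordered[int(step * j + step / 2)] for j in range(k)]

def build_tree(instances, h, r):
    """Return a list of (prototype, level, parent) tuples."""
    tree, chosen = [], set()
    groups = {-1: list(range(len(instances)))}  # parent -> member ids
    for level in range(1, h + 1):
        k = r if level == 1 else 2              # binary split below the root
        selections = []
        for parent, members in groups.items():
            valid = [i for i in members if i not in chosen]
            protos = pick_prototypes(instances, valid, k)
            tree.extend((p, level, parent) for p in protos)
            chosen.update(protos)
            selections.append((members, protos))
        # Redistribute each subgroup among its newly chosen prototypes.
        groups = {}
        for members, protos in selections:
            for p in protos:
                groups[p] = []
            for i in members:
                if i in chosen or not protos:
                    continue
                nearest = min(protos, key=lambda p: math.dist(instances[i], instances[p]))
                groups[nearest].append(i)
    return tree

# Twelve hypothetical one-feature instances, r = 3 roots, height h = 2.
T = [(float(i),) for i in range(12)]
tree = build_tree(T, h=2, r=3)
roots = [node for node in tree if node[1] == 1]
leaves = [node for node in tree if node[1] == 2]
print(roots)
print(leaves)
```

With r = 3 and h = 2 the sketch yields 3 root prototypes and 6 leaf prototypes, matching the 3-then-6 layout used in the experiments.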

The selection of the most relevant attributes and the range of expected values for each attribute of similar instances is performed in the loop between lines 31 and 33 for selected prototype points at all levels of the tree. The same procedure of feature analysis is performed in the loop between lines 35 and 38 for the set of test instances. This is done by DELA. In line 36, we check which leaf node is closest to the test point to associate the two in the final view of the tree.

Finally, in line 39, a function is called to draw the tree, showing the most relevant features and the range of expected values for the selected prototype instances and for the test instances.

4 Experiments

We perform both a quantitative and a qualitative evaluation of the prototypes chosen by HuMiE and their characteristics.

4.1 Experimental Setup

In the quantitative evaluation, we compare the prototypes chosen by HuMiE for local explanation with those chosen by other methods of explanation based on examples: ProtoDash [10] and the standalone version of M-PEER. ProtoDash is an example-based explanation method that selects prototypes by comparing the distribution of training data and the distribution of candidate prototypes, i.e., it does not take into account the model output.

For that, we use the 25 datasets presented in Table 1. To measure the quality of the prototypes, we use the classic root mean squared error (RMSE) and also the metrics GF, GS and GFS presented in Sect. 3.1. We also performed a qualitative analysis on the classic Auto-MPG dataset, which portrays the fuel consumption of automobiles (miles per gallon - MPG) according to their construction characteristics.

4.2 Results

This section first presents a quantitative experimental analysis of the proposed explainability method. The models to be explained were built using a Random Forest Regression algorithm, implemented with sklearn. All parameters were used with their default values.

Taking as input the model built using each of the datasets shown in Table 1, we run the explanation methods ProtoDash, M-PEER and HuMiE to compare the quality of the selected prototypes. For ProtoDash, we selected 6 global explanation prototypes and assigned each of the test instances to the most similar. For the M-PEER method we selected 3 global prototypes that are used for local explanation according to the proximity of the test instances. Finally, in HuMiE we select 3 global prototypes (the same as in the M-PEER method) to generate a sublevel of 6 prototypes that were then used to explain the test instances.

The results obtained for each dataset are shown in Table 2, where the best ones are highlighted in bold. In addition, we show the critical diagrams of each of the metrics in the graphs in Fig. 2. These diagrams were generated after carrying out an adapted Friedman test followed by a Nemenyi post-hoc with a significance level of 0.05. As we are comparing multiple approaches, Bonferroni’s correction was applied to all tests [4]. In these diagrams, the main line shows the average ranking of the methods, i.e., how well one method did when compared to the others. This ranking takes into account the absolute value obtained by each method according to the evaluated metric. The best methods are shown on the left (lowest rankings).
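To make the ranking procedure concrete, the sketch below computes average ranks per method and the Nemenyi critical difference as described by Demšar [4]. It illustrates the standard procedure only and does not reproduce the adapted Friedman test or the Bonferroni correction used here. The function names and the score table are made up; the value 2.343 is the critical value tabulated in [4] for three methods at a significance level of 0.05.

```python
import math

def average_ranks(scores_by_dataset):
    """Average rank of each method; lower score is better, ties share ranks."""
    k = len(scores_by_dataset[0])
    totals = [0.0] * k
    for row in scores_by_dataset:
        order = sorted(range(k), key=lambda m: row[m])
        pos = 0
        while pos < k:
            end = pos
            while end + 1 < k and row[order[end + 1]] == row[order[pos]]:
                end += 1
            shared = (pos + end) / 2 + 1  # mean of the tied 1-based ranks
            for m in order[pos:end + 1]:
                totals[m] += shared
            pos = end + 1
    return [t / len(scores_by_dataset) for t in totals]

def nemenyi_cd(k, n, q_alpha):
    """Critical difference for k methods compared over n datasets."""
    return q_alpha * math.sqrt(k * (k + 1) / (6.0 * n))

# Made-up error values for three methods on three datasets.
table = [[0.30, 0.25, 0.20],
         [0.40, 0.40, 0.35],
         [0.10, 0.20, 0.15]]
ranks = average_ranks(table)
cd = nemenyi_cd(k=3, n=25, q_alpha=2.343)
print(ranks, cd)
```

Two methods whose average ranks differ by less than the critical difference would be joined by a line in the diagram, meaning no statistically significant difference.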

Fig. 2. Critical diagrams of the choice of prototypes for local explanation of test instances according to RMSE (a), Global Stability (b), Global Fidelity (c) and GFS (d). From left to right, methods are ranked according to their performance, and those connected by a bold line present no statistical difference in their results.

As we can see, creating an intermediate level of prototype explanations kept the RMSE at the same level as the competitors, as no method was statistically superior to the others. Furthermore, HuMiE was statistically better than the explanation with only one M-PEER prototype level in relation to Global Stability and GFS. HuMiE was also statistically better than all competitors in terms of the Global Fidelity of the chosen prototypes.

Next, we show a qualitative analysis for Auto-MPG, which has 398 instances described by 8 features. To evaluate the model error, we divided the data into two subsets, the first being the training set with 70% of the instances (279) and the second the test set with the remaining 30% (119). Note that, in this case, the model error is used only to identify how good the explanation model is relative to the original regression model to be explained. As our focus is the explanation provided and not minimizing the error, we do not perform any further evaluation, for example, through cross-validation.

The Auto-MPG dataset portrays a classic regression problem whose objective is to estimate the fuel consumption of a car from the construction characteristics of the vehicle, namely: cylinders, displacement, horsepower, weight, acceleration, model year and origin. To evaluate the explanation provided by our HuMiE approach we build a model using all available features in a Random Forest algorithm. The RMSE found on the test set was 0.36.

Figure 3 shows the explanation provided for the model. In the explanations provided in this work we defined the height h of the tree as 2 and the total number of prototypes in the global explanation of the root as 3. Two test instances, one with a low output value and another with a high output value, were previously selected for local explanation.

In the explanation, each node highlighted in gray represents a prototype selected from the training set, and the nodes highlighted in red represent the two test instances selected for local explanation. In addition, within each of the nodes, the actual output value of the instance (y) and the respective value predicted by the regressor model (\(y'\)) are presented in the first line. The other lines of the tree node represent the 5 main features identified for that instance with their respective values (the number of most relevant features shown can easily be changed by the user).

The bars represent the observed threshold values between all valid closest points when finding the prototype. In the case of the first upper level (root of the tree) the limit is imposed by the values of all instances of the training set. In the case of the test node the limit is imposed by all the closest features that originated the prototype local explanation leaf (leaf node of the hybrid explanation tree). The red dot represents the feature-specific value for the instance. The green range represents the allowable variation for creating a similar instance, determined by the DELA method. Specifically, it represents the range of feature values found among instances that deviate no more than 10% from the output predicted by the model. When hovering over one of the features, the green range is shown numerically with a highlight balloon for the user.
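The green range described above can be illustrated with the following sketch. It is not DELA's code: the function name `allowed_range`, the interpretation of the 10% deviation as relative to the predicted output, and the toy values are assumptions.

```python
def allowed_range(feature_values, neighbor_outputs, predicted, tolerance=0.10):
    """(min, max) of a feature among neighbors whose output stays within
    `tolerance` (relative) of the predicted output; None if no neighbor does."""
    kept = [v for v, y in zip(feature_values, neighbor_outputs)
            if abs(y - predicted) <= tolerance * abs(predicted)]
    return (min(kept), max(kept)) if kept else None

# Hypothetical neighbors: car weights and their MPG outputs.
weights = [2100.0, 2300.0, 2250.0, 3900.0]
outputs = [27.5, 29.0, 31.5, 14.0]
green = allowed_range(weights, outputs, predicted=28.0)
print(green)
```

Only the first two neighbors lie within 10% of the predicted 28.0 MPG, so the range is bounded by their weights.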

Note that the global explanation prototypes managed to cover the solution space well, with the first prototype being a high fuel consumption car (14.5 MPG), the second an intermediate consumption car (19.08 MPG) and the last one a low fuel consumption car (28.05 MPG). In addition, among the most relevant features at this level, we find those that domain experts traditionally portray as determining the consumption of a car, notably weight, displacement, horsepower and acceleration.

In the second level (intermediate explanation) we observe that the prototypes really represent subgroups of the prototypes of the global explanation. This observation can be made both in terms of the output returned by the model and the range of each of the features. In the output, we can observe that the values obtained do not extend to the values of the neighboring prototypes of the previous level. In the range of features, we observe, for example, that in the first prototype of the second level the displacement feature varied between 260 and 455 while in its parent prototype these values varied between 68 and 455, portraying a specialization of the group.

Finally, we can also observe that the L2FS similarity metric was adequate when associating the leaf prototypes of the explanation tree with the test instances, since both the values returned by the regressor model and the feature intervals represent the best possible association in each specific scenario.

Note that an explanation provided by the M-PEER for this dataset would only consist of the 3 instances represented at the root level of the explanation tree. In this context, we would only have information about the values for each of the features for these instances.

We can also compare our explanation with what we could obtain from a popular feature-based explanation method called LIME (Local Interpretable Model-Agnostic Explanations) [21]. LIME explains an observation locally by generating a linear model (considered interpretable by humans) using randomly generated instances in the neighborhood of the instance of interest. The linear model is then used to portray the feature’s contribution to the output of the specific instance based on the model’s coefficients.

Using the same two test instances shown in Fig. 3, we have the explanations provided by LIME in Fig. 4. As we can observe, LIME would end up showing semantically invalid interpretations to the user, such as portraying a positive contribution of the “weight” feature for cars weighing below 2250.0, without a lower limit. Thus, one could interpret, for example, the possibility of a negative weight for the car. Furthermore, with our hybrid multilevel approach, we have access to both similar instances in the training set and the characteristics that make these instances relevant, providing an explanation that brings new information about the model.

5 Conclusions and Future Work

HuMiE is a method capable of creating multilevel hybrid explanations for regression problems. By hybrid we mean that the provided explanation encompasses both elements of example-based explanations, through the selection of prototypes from the training set, and elements of feature-based explanations, such as the most relevant features and the expected values of features for the region of a given instance. By multilevel, we refer to the fact that HuMiE presents the model’s explanations in a tree format, with global explanations at the root, local explanations for specific test instances at the leaves, and intermediate explanations in between, portraying semantically similar subgroups. HuMiE uses the M-PEER approach as an auxiliary method for choosing prototypes and the DELA approach for feature analysis.

Experiments with real-world datasets quantitatively showed that the prototypes chosen by HuMiE were better than those of all competitors (M-PEER and ProtoDash) in relation to the fidelity metric and better than those of M-PEER with a single level in relation to the stability and GFS metrics. Qualitatively, we showed that HuMiE is able to diversify the choice of prototypes according to the characteristics of the presented dataset, both in terms of model output and features. In addition, HuMiE was able to find subgroups of instances that are similar, providing an intermediate interpretation between the local and global explanations which might be useful for the user to understand the model’s rationale. In this context, HuMiE was able to identify which features are most important according to the subgroup the instance belongs to.

As future work, we propose to explore additional metrics to evaluate HuMiE’s performance and the robustness of explanations, in order to gain a more comprehensive view of the method’s capabilities. Furthermore, combining other explanation approaches, such as counterfactuals, during the construction of the hybrid explanation could contribute to an even more thorough understanding of the model.

References

1. Adadi, A., Berrada, M.: Peeking inside the black-box: a survey on explainable artificial intelligence (XAI). IEEE Access 6, 52138–52160 (2018)

2. Bien, J., Tibshirani, R.: Prototype selection for interpretable classification. Ann. Appl. Stat. 5(4), 2403–2424 (2011)

3. Carvalho, D.V., Pereira, E.M., Cardoso, J.S.: Machine learning interpretability: a survey on methods and metrics. Electronics 8(8), 832 (2019)

4. Demšar, J.: Statistical comparisons of classifiers over multiple data sets. J. Mach. Learn. Res. 7, 1–30 (2006)

5. Filho, R.M.: Explaining Regression Models Predictions. Ph.D. thesis, Universidade Federal de Minas Gerais (2023)

6. Filho, R.M., Lacerda, A., Pappa, G.L.: Explaining symbolic regression predictions. In: 2020 IEEE Congress on Evolutionary Computation (CEC), pp. 1–8 (2020)

7. Filho, R.M., Lacerda, A.M., Pappa, G.L.: Explainable regression via prototypes. ACM Trans. Evol. Learn. Optim. 2(4) (2023)

8. Grari, V., Lamprier, S., Detyniecki, M.: Adversarial learning for counterfactual fairness. Mach. Learn. 11, 1–23 (2022)

9. Guidotti, R., Monreale, A., Ruggieri, S., Turini, F., Giannotti, F., Pedreschi, D.: A survey of methods for explaining black box models. ACM Comput. Surv. 51(5), 93:1–93:42 (2018)

10. Gurumoorthy, K.S., Dhurandhar, A., Cecchi, G.A., Aggarwal, C.C.: Efficient data representation by selecting prototypes with importance weights. In: ICDM 2019 (2019)

11. Kim, B., Khanna, R., Koyejo, O.O.: Examples are not enough, learn to criticize! Criticism for interpretability. In: Lee, D., Sugiyama, M., Luxburg, U., Guyon, I., Garnett, R. (eds.) Advances in Neural Information Processing Systems, vol. 29. Curran Associates, Inc. (2016)

12. Letzgus, S., Wagner, P., Lederer, J., Samek, W., Muller, K.R., Montavon, G.: Toward explainable artificial intelligence for regression models: a methodological perspective. IEEE Sig. Process. Mag. 39(4), 40–58 (2022)

13. Loyola-González, O.: Black-box vs. white-box: understanding their advantages and weaknesses from a practical point of view. IEEE Access 7, 154096–154113 (2019)

14. Melis, D.A., Jaakkola, T.: Towards robust interpretability with self-explaining neural networks. In: NeurIPS 2018, pp. 7775–7784 (2018)

15. Ming, Y., Xu, P., Qu, H., Ren, L.: Interpretable and steerable sequence learning via prototypes. In: KDD 2019, pp. 903–913 (2019)

16. Molnar, C.: Interpretable Machine Learning, 2nd edn. Lulu.com (2022). https://christophm.github.io/interpretable-ml-book

17. Mothilal, R.K., Sharma, A., Tan, C.: Explaining machine learning classifiers through diverse counterfactual explanations. In: Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, FAT* 2020, pp. 607–617. Association for Computing Machinery, New York, NY, USA (2020)

18. Plumb, G., Al-Shedivat, M., Xing, E.P., Talwalkar, A.: Regularizing black-box models for improved interpretability. CoRR (2019)

19. Ramamurthy, K.N., Vinzamuri, B., Zhang, Y., Dhurandhar, A.: Model agnostic multilevel explanations. In: Larochelle, H., Ranzato, M., Hadsell, R., Balcan, M., Lin, H. (eds.) Annual Conference on Neural Information Processing Systems 2020, NeurIPS 2020. Advances in Neural Information Processing Systems 33, 6–12 December 2020, Virtual (2020)

20. Ribeiro, M.T., Singh, S., Guestrin, C.: “Why should I trust you?”: explaining the predictions of any classifier. In: Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD 2016, pp. 1135–1144. ACM, New York, NY, USA (2016)

21. Ribeiro, M.T., Singh, S., Guestrin, C.: “Why should I trust you?”: explaining the predictions of any classifier. In: KDD 2016, pp. 1135–1144 (2016)

22. Schwab, P., Karlen, W.: CXPlain: causal explanations for model interpretation under uncertainty. In: NeurIPS 2019, pp. 10220–10230 (2019)

23. Zhao, Q., Hastie, T.: Causal interpretations of black-box models. J. Bus. Econ. Stat. 39(1), 272–281 (2021)

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

Cite this paper

Filho, R.M., Pappa, G.L. (2023). Hybrid Multilevel Explanation: A New Approach for Explaining Regression Models. In: Naldi, M.C., Bianchi, R.A.C. (eds) Intelligent Systems. BRACIS 2023. Lecture Notes in Computer Science(), vol 14195. Springer, Cham. https://doi.org/10.1007/978-3-031-45368-7_26

DOI: https://doi.org/10.1007/978-3-031-45368-7_26

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-45367-0

Online ISBN: 978-3-031-45368-7

eBook Packages: Computer Science, Computer Science (R0)