Abstract

The great complexity and size of electrical power systems make their operation and control a challenging task. Maintaining a stable voltage profile to assure the security and stability of the system is one of many tasks that must be conducted daily by power system operators and by automatic control equipment. This work proposes a deep reinforcement learning framework for controlling the equipment responsible for keeping the voltages across the system buses within their limits. More specifically, it proposes a smart agent capable of deciding the best course of action to keep the system’s voltages within a specified range while taking the system’s conditions into account. Besides the traditional deep reinforcement learning approach, three novel reinforcement learning variations, named windowed, ensemble and windowed ensemble Q-Learning, which alter the agent’s learning process for voltage control, are presented and tested on the IEEE 13, 37 and 123 bus systems, simulated in OpenDSS.

1 Introduction

Electric Power Systems (EPS) are complex systems with both physical and digital resources that must work together in order to deliver electricity safely and efficiently to all kinds of customers, whether industrial, commercial or residential [17]. Over the last decades, the demand for energy has been constantly increasing as people seek a better quality of life [22]. With this growth, maintaining the quality of service (QoS) and system safety becomes harder, and new tools and resources must be included in the process of operating and controlling the system.

The operation of EPSs is mainly carried out by trained professionals called system operators (SOs), who must constantly observe the system’s condition and conduct different tasks such as generator dispatch, frequency control and fault mitigation, among others [29]. One of these tasks, known as voltage control, consists of keeping the voltage on all system buses within certain limits, which are usually defined by regulatory associations and take into account the system’s correct functioning. As the demand fluctuates throughout the day, the voltages on the system’s buses also change, and operators must maneuver and switch several pieces of equipment in order to keep them under control.

Like most other tasks, voltage control must be executed by the SOs in real time, and they usually must react within a short time frame because the system’s resources may be at stake. Voltage can also be controlled by automatic control equipment that reacts to voltage fluctuations according to embedded logic: control mechanisms monitor the voltage at certain points of the system and issue control commands that keep it within the predefined limits [3].

Due to the need for fast reaction, operators usually do not have time for detailed analysis of their actions. They rely on their experience and on actions that have worked in the past, but this may not be optimal because the system varies due to various unknown factors. Automatic control equipment also has fixed logic that does not consider the constantly changing dynamics of the system.

Over the years, several techniques have been proposed to address the voltage control problem. Volt-var control, as it is formally known, has seen applications of a plethora of methods, such as classical optimization [24, 25], meta-heuristics [20, 21], classic control [1, 2], neural networks [8] and fuzzy logic [15, 16]. It has been successfully applied to both distribution and transmission systems.

There are many different approaches to voltage control since it can be seen as both a planning and a real-time operation problem [6, 7, 13, 14]. Online control uses different techniques and methodologies [11, 12, 23, 32], from classical control to reinforcement learning. Usually, equipment such as shunt capacitor banks and OLTC transformers is controlled, but other digital technologies and distributed generation resources are also used. Recent studies have increasingly explored the use of reinforcement learning in energy systems, with different training techniques and architectures [30], Q-Learning [4, 28], and deep reinforcement learning [5, 31]. These works use bus voltages and branch power flows as state representations.

Most voltage control works focus on offline scenarios and use a variety of methods, acting mainly on capacitor banks and transformer taps. When the control is performed online, adaptations are necessary, and reinforcement learning is a well-suited technique.

This work proposes a framework that uses reinforcement learning to control the system’s voltage in real-time, taking into account equipment constraints and different system parameters. Three new methodologies that consider system complexity are also proposed. The methodologies are tested on three IEEE distribution circuits with 13, 37, and 123 buses.

2 Background

In this section, we will provide a brief description of the voltage control problem and discuss the application of reinforcement learning as a solution to the problem.

2.1 Voltage Control

The power system experiences fluctuations in consumption and generation, causing voltage to vary and potentially violate safety limits set by authorities. Power system operators have to make quick decisions to keep voltage within limits by maneuvering equipment or shedding loads, but this speed can come at the cost of accuracy. Due to the complexity of the power system, operators rely on previous experiences when controlling voltage, causing some equipment to be overused.

However, operators are not solely responsible for controlling voltages. Some equipment has automatic control mechanisms that monitor voltages at specific points in the system, but these usually rely on simple feedback and do not always provide the best control decisions. Furthermore, as the system changes throughout the day, these controls would perform better if their parameters were adjusted, which most of the time does not happen.

With this in mind, the tool proposed in this work aims to be an automatic system operator capable of replacing both human operators and equipment control by deciding and taking the best course of action in order to keep voltages within their limits.

In later sections, the voltage control problem will be described further with more technical details.

2.2 Deep Reinforcement Learning

According to [27], reinforcement learning is a machine learning paradigm based on interactions between an agent and an environment. The agent interacts with the environment through actions and observations, evaluating the effect of its actions on the environment and seeking the actions that lead to the most desired outcomes. Essential elements for reinforcement learning include a state-action map, a policy to guide actions, a reward signal to evaluate the effect of actions on the environment, and intrinsic values for the states of the environment that represent the benefit of being in those states. By repeating the process of interaction, observation, and adaptation, it is expected that the agent will learn the best actions in an activity or process (Fig. 1).

Fig. 1. Reinforcement learning process [27].

In environments where the number of states is small, this method of exploring and learning is very effective. However, as the number of states in an environment grows, experiencing all states becomes unfeasible. In this context, the deep reinforcement learning methodology arises [9, 10, 18, 19]. This variation allows the agent to generalize experiences to states it has not seen before by using a deep neural network.

In further sections, the application of reinforcement learning to the voltage control problem will be discussed.

3 Problem Definition

As indirectly explained in previous sections:

Voltage control is the act of operating and configuring different control equipment at the right moments in order to keep bus voltages within specified limits, which are determined by physical and commercial aspects.

More formally, the problem can be written as shown in Eq. 1.

where t represents the current time step, T the maximum possible time step, and n the total number of pieces of equipment. In the real world, time would be continuous, as voltage is controlled all the time; in order to simulate it, however, the operation period must be discretized. A given system bus is represented by b, and B is the set of all system buses. \(V_{bt}\) is the voltage at bus b at time t and \(\overline{V}\) is the voltage target. In constraint (a), called the voltage limit constraint, \(V_{-}\) and \(V_{+}\) represent the lower and upper voltage limits across all buses. In constraint (b), \(V_{bt}\) is represented as a function of the system’s active (P) and reactive (Q) powers and all equipment set-points at time t, which is how the voltage is essentially controlled (by changing equipment set-points). In constraint (c), also known as the equipment set-point constraint, \(e_{il}\) and \(e_{iu}\) represent the minimum and maximum set-points that a piece of equipment \(e_i\) can have at time t, since control equipment has different set-point restrictions. Finally, constraint (d), called the maximum set-point change constraint, limits how much the set-point of a piece of equipment can change from one time step to the next. This accounts for the delay a piece of equipment may need to change its set-point.
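The definitions above suggest an optimization problem of roughly the following shape. This is only a reconstruction from the surrounding text, not the original Eq. 1: the absolute-deviation objective, the function symbol \(f\), the time-indexed set-point \(e_{it}\) and the maximum change \(\Delta e_i\) are assumptions, and the original may use a different norm or notation.

\[
\begin{aligned}
\min_{e_{1t},\dots,e_{nt}} \quad & \sum_{t=1}^{T} \sum_{b \in B} \left| V_{bt} - \overline{V} \right| \\
\text{s.t.} \quad
& \text{(a)}\;\; V_{-} \le V_{bt} \le V_{+}, && \forall b \in B,\ \forall t \\
& \text{(b)}\;\; V_{bt} = f\left(P, Q, e_{1t}, \dots, e_{nt}\right), && \forall b \in B,\ \forall t \\
& \text{(c)}\;\; e_{il} \le e_{it} \le e_{iu}, && i = 1,\dots,n,\ \forall t \\
& \text{(d)}\;\; \left| e_{it} - e_{i(t-1)} \right| \le \Delta e_i, && i = 1,\dots,n,\ t = 2,\dots,T
\end{aligned}
\]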

It is important to note that the data needed for the optimization (i.e. the voltage on each bus) is obtained online and in real time. That means that \(V_{bt}\) can only be obtained at time step t and not before that. Therefore, the optimization process is conducted at each time step, as shown in Fig. 2.

In order to control the voltage, the existing equipment in the network must be manipulated. In electrical networks, many kinds of equipment are capable of regulating voltage: capacitors, reactors, transformers, synchronous condensers, FACTS equipment, etc. Although there is no clear advantage of using one kind of equipment over another, different actions may have different short- and long-term impacts on the system.

Voltage can be controlled by two main means: automatic control equipment, which monitors the network and adjusts itself accordingly, and network operators, who choose the action (based on experience and/or intuition) that they think is best for the current scenario. Automatic control equipment usually has fixed control logic, which is not suitable for every scenario. Furthermore, because there is usually no time to conduct detailed studies, operators tend to rely purely on their own knowledge and past experiences, which increases the bias towards certain actions and leads to the overuse of individual equipment.

4 Proposed Solution

Because power systems are composed of multiple discrete and continuous variables, the number of possible configurations they can attain is practically infinite, rendering classical tabular reinforcement learning infeasible. Therefore, a deep reinforcement learning technique is developed to deal with this characteristic.

Besides the usual deep reinforcement learning (DRL) approach, three different methodologies are proposed. These propositions involve slight modifications to the reinforcement learning technique that intend to adapt the procedure to specific characteristics of power system operation.

Many different kinds of reinforcement learning solutions exist depending on the type of problem that is being solved. For the voltage control problem, Q-Learning is a well suited method. Besides being very simple to implement, it is a model-free method, meaning that the model of the environment does not need to be fully known (which would be very hard for power systems). Furthermore, it can account for the effect that an action may have on a future state, that is not immediately achieved after taking said action.
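For reference, the standard tabular Q-Learning update described in [27] can be written as follows, where \(\alpha\) is the learning rate, \(\gamma\) the discount factor, and \(s_t\), \(a_t\) and \(r_{t+1}\) (notation assumed here, following the usual textbook convention) are the state, action and reward:

\[
Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ r_{t+1} + \gamma \max_{a} Q(s_{t+1}, a) - Q(s_t, a_t) \right]
\]

The \(\gamma \max_{a} Q(s_{t+1}, a)\) term is what lets the method account for effects of an action that only appear in later states.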

Although there are many suitable methods to perform reinforcement learning, most of them have limitations, including Q-Learning, due to the need to create a finite map of state to actions. Discretizing the state can be infeasible or lead to inaccuracies if there are many continuous variables involved. Deep reinforcement learning overcomes these limitations, allowing the agent to generalize to states never seen before through a deep neural network.

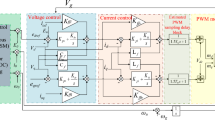

In deep Q-Learning, the neural network’s inputs are the variables that represent the system’s state and its outputs are the “worth” of each action, also called Q-Values. During the training process, as the agent explores the environment, the neural network is trained with information about the system’s state, the actions taken by the agent and their effects. During operation, the system’s state is simply fed as an input to the network and the action with the highest Q-Value is chosen.
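The operation step described above can be sketched in a few lines. This is a minimal illustration, not the network used in this work: the layer sizes, the weights and the ReLU activation are all hypothetical, chosen only to make the forward pass and the greedy choice easy to follow.

```python
def dense(x, weights, biases):
    """Affine layer; `weights` holds one row of coefficients per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def relu(x):
    return [max(0.0, v) for v in x]


def q_values(state, layers):
    """Forward pass: ReLU on hidden layers, linear output (one Q-Value per action)."""
    x = list(state)
    for weights, biases in layers[:-1]:
        x = relu(dense(x, weights, biases))
    weights, biases = layers[-1]
    return dense(x, weights, biases)


def greedy_action(state, layers):
    """Index of the action with the highest Q-Value."""
    q = q_values(state, layers)
    return max(range(len(q)), key=q.__getitem__)


# Hypothetical network: 2 state variables -> 2 hidden units -> 3 actions.
layers = [
    ([[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0]),
    ([[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]], [0.0, 0.1, 0.0]),
]
state = [0.2, 0.5]
print(greedy_action(state, layers))
```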

Over the years, many advancements have been made in deep Q-Learning. In this work, two of them are considered: double Q-Learning and experience replay. Both techniques are described in more detail in [10]. In addition, two new modifications to the deep Q-Learning framework are proposed. These modifications do not change how the learning process works, but rather how the techniques are applied to the problem at hand.
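As a rough illustration of the two existing techniques, the sketch below computes a double Q-Learning target in the sense of [9] (the online network selects the next action, the target network evaluates it) and keeps transitions in a fixed-size replay memory. The function and class names, the tuple layout and the default discount of 0.9 are choices made for this example rather than details of the implementation used in this work.

```python
import random
from collections import deque


def double_q_target(reward, next_q_online, next_q_target, done, gamma=0.9):
    """Double Q-Learning target for a single transition."""
    if done:
        return reward
    # The online network picks the action, the target network scores it.
    best = max(range(len(next_q_online)), key=next_q_online.__getitem__)
    return reward + gamma * next_q_target[best]


class ReplayMemory:
    """Fixed-size buffer of (state, action, reward, next_state, done) tuples."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)  # oldest transitions are dropped first

    def push(self, transition):
        self.buffer.append(transition)

    def sample(self, batch_size, rng=random):
        """Uniform sample without replacement, which breaks temporal correlation."""
        return rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


memory = ReplayMemory(capacity=4)
for i in range(6):
    memory.push((i, 0, 0.0, i + 1, False))
batch = memory.sample(2)
target = double_q_target(1.0, [0.2, 0.9, 0.1], [0.5, 0.3, 0.8], done=False)
```

Note that the target uses the value 0.3 that the target network assigns to the action preferred by the online network, not the target network's own maximum of 0.8, which is the mechanism double Q-Learning relies on to reduce overestimation.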

The first technique will be named windowed Q-Learning. In problems where episodes are too long, the agent may take longer to learn optimal actions for the entire length of the episode. Also, a long episode may show very distinct behaviors over its course. That is, in a problem with a constant number of steps per episode, steps i to j may behave very differently from steps m to n. The proposed windowed Q-Learning methodology attempts to address these characteristics by dividing the episode into windows (Fig. 3(a)) and training a different agent for each window. That way, each agent only sees states from a certain interval and can better learn the specificities of its window during training. During operation, a different agent is consulted for making decisions depending on which window the current step of the episode falls into.
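The dispatch step of windowed Q-Learning can be sketched as follows. The sketch assumes equal-width windows and four of them, neither of which is stated in the text; `ConstantAgent` is a hypothetical stand-in for a trained agent.

```python
def window_index(step, episode_length=1440, n_windows=4):
    """Map a 0-based step to the index of the equal-width window containing it."""
    if not 0 <= step < episode_length:
        raise ValueError("step outside the episode")
    return step * n_windows // episode_length


class ConstantAgent:
    """Stand-in for a trained agent; always returns the same action."""

    def __init__(self, action):
        self.action = action

    def act(self, state):
        return self.action


# One agent per window; in practice each would be trained only on its own interval.
agents = [ConstantAgent(a) for a in range(4)]


def windowed_act(state, step):
    """Consult the agent responsible for the window that `step` falls into."""
    return agents[window_index(step)].act(state)


print(window_index(0), window_index(360), window_index(1439))
```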

The second technique will be called ensemble Q-Learning and takes inspiration from the way humans learn. Because people learn things differently when subjected to different experiences, this technique proposes that multiple agents be trained for the same problem (Fig. 3(b)). These agents can either be identical and rely on the variability of the environment in order to experience things differently, or perceive the environment differently, for example by having different reward functions. When operating, every agent is consulted when deciding which action to take. The decision can follow several criteria, such as averaging every agent’s value for each action and taking the highest average (Eq. 2), or taking the action with the maximum value over all agents (Eq. 3).
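A reconstruction of the two decision rules consistent with this description is given below. The number of agents \(m\) and the symbol \(a^{*}\) for the chosen action are notation assumed here.

\[
a^{*} = \arg\max_{i} \; \frac{1}{m} \sum_{j=1}^{m} \phi_{ij} \tag{2}
\]

\[
a^{*} = \arg\max_{i} \; \max_{j} \; \phi_{ij} \tag{3}
\]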

where \(\phi _{ij}\) is the value given to action i by agent j.
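The two voting criteria described above (highest average, and highest single value) can be sketched as follows. The matrix `phi` holds hypothetical Q-Values, chosen so that the two rules disagree.

```python
def vote_mean(phi):
    """phi[i][j] is the value agent j gives to action i; the highest average wins."""
    means = [sum(row) / len(row) for row in phi]
    return max(range(len(means)), key=means.__getitem__)


def vote_max(phi):
    """Pick the action that holds the single highest value over all agents."""
    peaks = [max(row) for row in phi]
    return max(range(len(peaks)), key=peaks.__getitem__)


# Hypothetical values: 3 actions (rows) scored by 3 agents (columns).
phi = [
    [0.50, 0.60, 0.55],  # action 0: all agents moderately agree
    [0.10, 0.95, 0.05],  # action 1: a single agent is very optimistic
    [0.20, 0.30, 0.25],  # action 2
]
print(vote_mean(phi), vote_max(phi))
```

Averaging favors actions on which the agents agree, while the maximum rule lets one confident agent decide, so the choice between them trades robustness against responsiveness.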

The third and final technique is a combination of the other two and is called windowed ensemble Q-Learning. In addition to splitting the episode into windows, multiple agents are trained for each window (Fig. 3(c)). This allows the advantages of both methods to be combined, at the possible cost of increased training times. During operation, the actions are also chosen by combining both methods: when the current step of the episode falls into a certain window, all agents trained for that window are consulted when choosing the action.
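A compact sketch of this combined decision step is shown below, assuming equal-width windows and the averaging rule for the vote. Each "agent" is represented by a hypothetical function that returns one Q-Value per action.

```python
def windowed_ensemble_action(state, step, agents_by_window, episode_length=1440):
    """Average the Q-Values of the agents trained for the window containing `step`."""
    n_windows = len(agents_by_window)
    window = step * n_windows // episode_length  # equal-width windows (assumption)
    q_lists = [agent(state) for agent in agents_by_window[window]]
    n_actions = len(q_lists[0])
    means = [sum(q[i] for q in q_lists) / len(q_lists) for i in range(n_actions)]
    return max(range(n_actions), key=means.__getitem__)


# Hypothetical stand-ins: two windows, two agents each, two actions.
agents_by_window = [
    [lambda s: [1.0, 0.0], lambda s: [0.8, 0.3]],  # first half of the day
    [lambda s: [0.1, 0.9], lambda s: [0.4, 0.5]],  # second half of the day
]
morning = windowed_ensemble_action(None, 100, agents_by_window)
evening = windowed_ensemble_action(None, 1000, agents_by_window)
```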

4.1 Voltage Control as a DRL Problem

In this section, the voltage control problem in an electrical system is modeled. Episodes have a fixed length of 1440, corresponding to each minute in a 24-hour period, and the system loads are updated at each step. The agent has the option to execute actions in the system, such as increasing or decreasing the stage of a capacitor bank, increasing or decreasing a transformer tap, or doing nothing. States are determined by the total active and reactive loads of the system, the current minute of the simulation, and the states of the transformers and capacitor banks. The state representation has a direct impact on the agent’s learning, and rewards are defined to lead the voltage at all system buses closer to a certain target and keep them within certain operational limits. The rewards are defined following Algorithm 1. All learning parameters are detailed in the Case Study section.
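One way to encode the state and action space described above is sketched here. The field names, the ordering of the state vector and the absence of feature scaling are assumptions; the sketch only mirrors the listed ingredients (total loads, minute of the day, tap and capacitor states, and one up/down action per device plus a no-op).

```python
from dataclasses import dataclass


@dataclass
class GridState:
    total_p: float     # total active load of the system
    total_q: float     # total reactive load of the system
    minute: int        # current minute of the simulated day, 0..1439
    taps: list         # current tap position of each controllable transformer
    cap_stages: list   # current stage of each capacitor bank

    def as_vector(self):
        """Flatten the state into the list of numbers fed to the network."""
        return [self.total_p, self.total_q, float(self.minute),
                *map(float, self.taps), *map(float, self.cap_stages)]


def build_actions(n_transformers, n_capacitors):
    """One 'increase' and one 'decrease' action per device, plus doing nothing."""
    actions = [("noop", None, 0)]
    for i in range(n_transformers):
        actions += [("tap", i, +1), ("tap", i, -1)]
    for i in range(n_capacitors):
        actions += [("cap", i, +1), ("cap", i, -1)]
    return actions


state = GridState(total_p=3500.0, total_q=1200.0, minute=615, taps=[2], cap_stages=[1, 0])
actions = build_actions(n_transformers=1, n_capacitors=2)
```

Under this encoding, a system with \(n_T\) transformers and \(n_C\) capacitor banks has a state of size \(3 + n_T + n_C\) and \(2(n_T + n_C) + 1\) actions.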

5 Case Study

In this section, the proposed techniques are tested on simulated power systems. The systems used are the 13, 37 and 123 bus IEEE test circuits [26]. For each system, four models were trained: a pure reinforcement learning model, a windowed model, an ensemble model and a windowed ensemble model. Additionally, for comparison, each system was run without any form of control and also with the control capabilities available in the simulation software (OpenDSS).

For every system, the parameters used during training were very similar, with the exception of the number of episodes, which was 100 for both the 13 and 123 bus systems and 200 for the 37 bus system. For the double deep Q-Learning with experience replay, the parameters are as follows: the memory size N was 1024, the minimum number of samples to start training was 512, and the batch size was 256. The discount factor \(\gamma \) was 0.9. Instead of keeping epsilon static, a decay technique was used: epsilon decays linearly with the episodes from 1.0 to a minimum of 0.3.
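The exploration schedule can be sketched as below. The text does not state at which episode the minimum is reached, so this sketch assumes the decay spans the whole training run; the function names are illustrative.

```python
import random


def epsilon_for_episode(episode, n_episodes, eps_start=1.0, eps_min=0.3):
    """Linear decay from eps_start (episode 0) to eps_min (last episode)."""
    if n_episodes <= 1:
        return eps_min
    fraction = min(max(episode / (n_episodes - 1), 0.0), 1.0)
    return eps_start + fraction * (eps_min - eps_start)


def epsilon_greedy(q_values, epsilon, rng=random):
    """Explore with probability epsilon, otherwise take the best-valued action."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=q_values.__getitem__)


schedule = [epsilon_for_episode(e, 100) for e in range(100)]
```

With a floor of 0.3 the agent still explores in almost a third of its decisions at the end of training, which keeps transitions in the replay memory diverse.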

The neural network structure is shown in Fig. 4 and its learning rate \(\alpha \) was 0.001. The chosen architecture is quite simple in terms of size and activation functions. This is due to the nature of deep reinforcement learning, which does not require complex structures in order to predict the action values, and also to the sizes of the tested systems. Its input and output layer sizes depend on the system’s characteristics, that is, the size of its state and the number of available actions, both of which depend on the number of capacitors and tap-changing transformers that can be controlled. This data is shown in Table 1.

Finally, for the rewards shown in Algorithm 1, the values used were \(v_1 = -1\), \(v_2 = 0.7\), \(v_3 = -0.8\), \(v_4 = -0.8\), \(v_5 = 1\), \(v_6 = -1\) and \(v_7 = 0.2\). The upper and lower voltage limits considered are 1.05 and 0.92 p.u., while the target is 1.0 p.u. An exception is the ensemble approach, where two alternative agents with more rigorous limits are used. The first alternative agent uses 1.03 and 0.95 p.u. for the upper and lower limits, while the second uses 1.02 and 0.98 p.u. Also, for the ensemble agents, the “vote” on the best action is conducted by following Eq. 2.

6 Results and Discussion

After the training procedure, the agents were tested on the same systems on different days. The days were simulated by randomly choosing a load profile and introducing some random noise into the loads. As stated in the previous section, the agents’ performance is compared to the systems’ without any form of control, with the control present on the system itself and also with a “pure” deep reinforcement learning approach as proposed by [9, 10, 18, 19] and implemented, with slight variations, by [4, 5, 28, 30, 31].
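The way a test day is generated can be sketched as follows. The noise model is not specified in the text, so multiplicative Gaussian noise with a small standard deviation is assumed here purely for illustration, and the profiles are hypothetical.

```python
import random


def simulate_day_loads(profiles, noise_std=0.02, rng=None):
    """Pick one load profile at random and perturb every sample with noise."""
    rng = rng or random.Random()
    base = rng.choice(profiles)
    # Multiplicative Gaussian noise (assumption); loads are clipped at zero.
    return [max(0.0, p * (1.0 + rng.gauss(0.0, noise_std))) for p in base]


# Hypothetical per-unit load profiles with only four samples each for brevity.
profiles = [
    [0.4, 0.7, 1.0, 0.6],
    [0.5, 0.6, 0.9, 0.8],
]
day = simulate_day_loads(profiles, rng=random.Random(42))
```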

In order to obtain the results, a total of 50 days for each technique and system were simulated. A few metrics are used to show the achieved results: the total number of violations, that is, the number of times the voltage surpassed the limits of 1.05 and 0.92 p.u., the maximum and minimum voltage achieved across the 50 days, the average real power loss across the 50 days and for the reinforcement learning methods, the number of actions the agents took (Tables 2a, 2b and 2c).
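The voltage metrics can be computed with a few lines of code. The sketch assumes that every bus sample outside the limits counts as one violation, which may differ from the exact counting used for the tables; the sample data is hypothetical.

```python
def count_violations(voltages_pu, v_min=0.92, v_max=1.05):
    """Count samples strictly outside the interval [v_min, v_max]."""
    return sum(1 for v in voltages_pu if v < v_min or v > v_max)


def summarize(voltages_by_step):
    """Violations plus extreme voltages over a list of per-step bus voltages."""
    flat = [v for step in voltages_by_step for v in step]
    return {
        "violations": count_violations(flat),
        "v_max": max(flat),
        "v_min": min(flat),
    }


# Hypothetical data: three time steps, two buses each (values in p.u.).
day = [[1.00, 1.04], [0.91, 1.06], [0.92, 1.05]]
summary = summarize(day)
```

Values exactly at 0.92 or 1.05 p.u. are treated as within limits in this sketch.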

The number of actions is only available for the reinforcement learning techniques: without control no actions are taken, and for the system’s own control this value cannot be obtained from the simulation. Furthermore, a separate day is simulated in order to demonstrate the results more closely. For this day, the average system voltage is shown along with the distribution of the voltages during the day (Figs. 5 and 6) and a diagram showing the voltage at each system bus during the day (Figs. 7, 8 and 9).

Regarding the training process, all agents were satisfactorily trained. The training times were varied but the windowed methodology has shown a reduced training time even when compared to the traditional reinforcement learning approach. The results indicate that the proposed techniques are capable of controlling the voltage on power systems.

For the 13-bus system, because it is already a fairly balanced and small system, the improvements were marginal. Nevertheless, the windowed methodology was capable of removing all voltage violations when compared to the control already present on the system, while keeping the number of required actions low and not affecting the real power losses significantly. Most importantly, Figs. 5(a), 6(a) and 7 also show that the system’s voltage profile was improved, with the voltages getting closer to the target.

For the 37-bus system, while the pure reinforcement learning approach actually increased the number of voltage violations, the three proposed methodologies completely eliminated them. The improvements are clear in the results shown in Figs. 5(b), 6(b) and 8, where the voltages are much closer to the target of 1 p.u. Also, the voltage profile in general is much closer to \(\pm 1\%\) of the target (green areas in Fig. 8). In this case, the windowed ensemble methodology executed the task with the smallest number of actions. For all the methodologies, the average losses were kept fairly close to their original values.

Finally, for the 123-bus system, while there were no violations on the system both with and without control, the proposed methodologies were capable of significantly improving its voltage profile. For this system, the average losses for three of the four methodologies increased by a significant margin. The ensemble technique showed the best overall performance, controlling the voltage satisfactorily while keeping the losses at a more acceptable value and the number of actions at a minimum.

7 Conclusion

Reinforcement Learning has been around for some time and has shown great results across many different scientific and real-world problems. When combined with the power of deep neural networks, deep Q-Learning can tackle a plethora of problems. In this work, a deep Q-Learning methodology was proposed to solve the voltage control problem on electrical power systems. Besides the regular reinforcement learning approach, three other novel methodologies were proposed with the goal of improving the technique’s performance on this specific problem. The results show that the application of the techniques was successful and has shown great value when compared to the traditional DRL approach and also with the systems’ own control. The trained intelligent agents are capable of controlling the system voltage in a completely autonomous way while keeping the number of actions taken low and having little effect on the real power losses.

References

1. Baran, M., Hsu, M.-Y.: Volt/VAr control at distribution substations. IEEE Trans. Power Syst. 14(1), 312–318 (1999). https://doi.org/10.1109/59.744549. https://ieeexplore.ieee.org/document/744549/

2. Borozan, V., Baran, M., Novosel, D.: Integrated volt/VAr control in distribution systems. In: 2001 IEEE Power Engineering Society Winter Meeting. Conference Proceedings (Cat. No. 01CH37194), Columbus, OH, USA, vol. 3, pp. 1485–1490. IEEE (2001). https://doi.org/10.1109/PESW.2001.917328. http://ieeexplore.ieee.org/document/917328/

3. Corsi, S.: Voltage Control and Protection in Electrical Power Systems: From System Components to Wide-Area Control. Springer, London (2015). https://doi.org/10.1007/978-1-4471-6636-8

4. Custódio, G., Ochoa, L., Trindade, F., Alpcan, T.: Using Q-learning for OLTC voltage regulation in PV-rich distribution networks (2020)

5. Diao, R., Wang, Z., Shi, D., Chang, Q., Duan, J., Zhang, X.: Autonomous voltage control for grid operation using deep reinforcement learning (2019). http://arxiv.org/abs/1904.10597

6. Franco, J.F., Rider, M.J., Lavorato, M., Romero, R.: A mixed-integer LP model for the optimal allocation of voltage regulators and capacitors in radial distribution systems. Int. J. Electr. Power Energy Syst. 48, 123–130 (2013). https://doi.org/10.1016/j.ijepes.2012.11.027. https://linkinghub.elsevier.com/retrieve/pii/S0142061512006801

7. Gallego, R., Monticelli, A., Romero, R.: Optimal capacitor placement in radial distribution networks. IEEE Trans. Power Syst. 16(4), 630–637 (2001). https://doi.org/10.1109/59.962407. https://ieeexplore.ieee.org/document/962407/

8. Gu, Z., Rizy, D.: Neural networks for combined control of capacitor banks and voltage regulators in distribution systems. IEEE Trans. Power Deliv. 11(4), 1921–1928 (1996). https://doi.org/10.1109/61.544277. https://ieeexplore.ieee.org/document/544277/

9. van Hasselt, H., Guez, A., Silver, D.: Deep reinforcement learning with double Q-learning (2016). http://arxiv.org/abs/1509.06461

10. Hessel, M., et al.: Rainbow: combining improvements in deep reinforcement learning. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 32 (2018)

11. Homaee, O., Zakariazadeh, A., Jadid, S.: Real-time voltage control algorithm with switched capacitors in smart distribution system in presence of renewable generations. Int. J. Electr. Power Energy Syst. 54, 187–197 (2014). https://doi.org/10.1016/j.ijepes.2013.07.010. http://linkinghub.elsevier.com/retrieve/pii/S0142061513003074

12. Hu, Z., Wang, X., Chen, H., Taylor, G.: Volt/Var control in distribution systems using a time-interval based approach. IEE Proc.-Gener. Transm. Distrib. 150(5), 548 (2003). https://doi.org/10.1049/ip-gtd:20030562

13. Khanabadi, M., Ghasemi, H., Doostizadeh, M.: Optimal transmission switching considering voltage security and N-1 contingency analysis. IEEE Trans. Power Syst. 28(1), 542–550 (2013). https://doi.org/10.1109/TPWRS.2012.2207464. https://ieeexplore.ieee.org/document/6253283/

14. Levitin, G., Kalyuzhny, A., Shenkman, A., Chertkov, M.: Optimal capacitor allocation in distribution systems using a genetic algorithm and a fast energy loss computation technique. IEEE Trans. Power Deliv. 15(2), 623–628 (2000). https://doi.org/10.1109/61.852995. https://ieeexplore.ieee.org/document/852995/

15. Liang, R.H., Chen, Y.K., Chen, Y.T.: Volt/Var control in a distribution system by a fuzzy optimization approach. Int. J. Electr. Power Energy Syst. 33(2), 278–287 (2011). https://doi.org/10.1016/j.ijepes.2010.08.023. https://linkinghub.elsevier.com/retrieve/pii/S0142061510001596

16. Liu, Y., Zhang, P., Qiu, X.: Optimal volt/var control in distribution systems. Int. J. Electr. Power Energy Syst. 24(4), 271–276 (2002). https://doi.org/10.1016/S0142-0615(01)00032-1. https://linkinghub.elsevier.com/retrieve/pii/S0142061501000321

17. Meier, A.V.: Electric Power Systems: A Conceptual Introduction. Wiley Survival Guides in Engineering and Science. IEEE Press: Wiley-Interscience, Hoboken (2006)

18. Mnih, V., et al.: Playing Atari with deep reinforcement learning. arXiv preprint arXiv:1312.5602 (2013)

19. Mnih, V., et al.: Human-level control through deep reinforcement learning. Nature 518(7540), 529–533 (2015)

20. Niknam, T.: A new approach based on ant colony optimization for daily Volt/Var control in distribution networks considering distributed generators. Energy Convers. Manag. 49(12), 3417–3424 (2008). https://doi.org/10.1016/j.enconman.2008.08.015. https://linkinghub.elsevier.com/retrieve/pii/S0196890408003087

21. Niknam, T., Firouzi, B.B., Ostadi, A.: A new fuzzy adaptive particle swarm optimization for daily Volt/Var control in distribution networks considering distributed generators. Appl. Energy 87(6), 1919–1928 (2010). https://doi.org/10.1016/j.apenergy.2010.01.003. https://linkinghub.elsevier.com/retrieve/pii/S030626191000005X

22. Rashid, M.H. (ed.): Electric Renewable Energy Systems. Academic Press, Amsterdam (2016)

23. Ribeiro, L.C., Schumann Minami, J.P.O., Bonatto, B.D., Ribeiro, P.F., de Souza, A.C.Z.: Voltage control simulations in distribution systems with high penetration of PVs using the OpenDSS. In: 2018 Simposio Brasileiro de Sistemas Eletricos (SBSE), pp. 1–6. IEEE (2018). https://doi.org/10.1109/SBSE.2018.8395639. https://ieeexplore.ieee.org/document/8395639/

24. Roytelman, I., Wee, B., Lugtu, R.: Volt/var control algorithm for modern distribution management system. IEEE Trans. Power Syst. 10(3), 1454–1460 (1995). https://doi.org/10.1109/59.466504. https://ieeexplore.ieee.org/document/466504/

25. Saric, A.T., Stankovic, A.M.: A robust algorithm for Volt/Var control. In: 2009 IEEE/PES Power Systems Conference and Exposition, Seattle, WA, USA, pp. 1–8. IEEE (2009). https://doi.org/10.1109/PSCE.2009.4840211. https://ieeexplore.ieee.org/document/4840211/

26. Schneider, K.P., et al.: Analytic considerations and design basis for the IEEE distribution test feeders. IEEE Trans. Power Syst. 33(3), 3181–3188 (2018). https://doi.org/10.1109/TPWRS.2017.2760011. https://ieeexplore.ieee.org/document/8063903/

27. Sutton, R.S., Barto, A.G.: Reinforcement Learning: An Introduction. Adaptive Computation and Machine Learning Series, 2nd edn. The MIT Press, Cambridge (2018)

28. Vlachogiannis, J., Hatziargyriou, N.: Reinforcement learning for reactive power control. IEEE Trans. Power Syst. 19(3), 1317–1325 (2004). https://doi.org/10.1109/TPWRS.2004.831259. https://ieeexplore.ieee.org/document/1318666/

29. Wood, A.J., Wollenberg, B.F., Sheblé, G.B.: Power Generation, Operation, and Control, 3rd edn. Wiley-IEEE, Hoboken (2013)

30. Xu, H., Domínguez-García, A.D., Sauer, P.W.: Optimal tap setting of voltage regulation transformers using batch reinforcement learning. IEEE Trans. Power Syst. 35(3), 1990–2001 (2019). https://doi.org/10.1109/TPWRS.2019.2948132. https://arxiv.org/abs/1807.10997

31. Yang, Q., Wang, G., Sadeghi, A., Giannakis, G.B., Sun, J.: Two-timescale voltage control in distribution grids using deep reinforcement learning. arXiv:1904.09374 (2019)

32. Zhang, W., Liu, Y.: Multi-objective reactive power and voltage control based on fuzzy optimization strategy and fuzzy adaptive particle swarm. Int. J. Electr. Power Energy Syst. 30(9), 525–532 (2008). https://doi.org/10.1016/j.ijepes.2008.04.005. https://linkinghub.elsevier.com/retrieve/pii/S0142061508000380

Acknowledgments

The authors would like to thank Companhia de Transmissão de Energia Elétrica Paulista (ISA-CTEEP) for the financial support to Project PD-00068-0044-2019 - intelligent real-time decision support tool for transmission operations centers, developed under the Research and Development program of the National Electric Energy Agency (ANEEL R&D) and carried out by the engineering company Radix Engenharia e Software S/A, Rio de Janeiro, Brazil.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Barg, M.W. et al. (2023). Deep Reinforcement Learning for Voltage Control in Power Systems. In: Naldi, M.C., Bianchi, R.A.C. (eds) Intelligent Systems. BRACIS 2023. Lecture Notes in Computer Science(), vol 14196. Springer, Cham. https://doi.org/10.1007/978-3-031-45389-2_15

DOI: https://doi.org/10.1007/978-3-031-45389-2_15

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-45388-5

Online ISBN: 978-3-031-45389-2

eBook Packages: Computer Science (R0)