Abstract

Accurately identifying plant species and varieties is crucial across various disciplines, such as biology, medicine, and agronomy. While species identification is challenging, variety identification presents an even greater difficulty. Conventional identification methods, although effective, often require specialized and costly equipment, making them less accessible. In this work, we explore the problem of hop variety classification, comparing traditional feature extraction methods with deep learning approaches using the UFOP-HVD dataset. We address two research questions: whether traditional techniques can achieve competitive results given the limited number of images and whether combining traditional techniques and deep learning can improve the current state-of-the-art. Our findings indicate that traditional techniques yield competitive results for hop variety identification, offering advantages such as interpretability, reduced computational costs, and potential integration into mobile devices. Moreover, we introduce an ensemble method that improves the accuracy from 77.16% to 81.90%, establishing a new state-of-the-art for the UFOP-HVD dataset. These results demonstrate the potential of merging traditional methods with deep learning for challenging hop variety classification tasks, providing an initial baseline for future research.

Supported by Universidade Federal de Ouro Preto, (FAPEMIG, grants APQ-01518-21, APQ-01647-22), CAPES and (CNPq, grants 307151/2022-0, 308400/2022-4).

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

Accurately identifying plant species and varieties holds significant value across numerous disciplines. Within biology, it serves as both a subject of investigation and an instrumental tool for teaching botany and promoting biodiversity conservation among high school and undergraduate students [20]. In medicine, plant identification enhances the quality and safety of herbal remedies, consequently reducing poisoning and intoxication incidents [8]. Forensic experts utilize this knowledge to bolster criminal investigations and support drug enforcement efforts [14]. In agronomy, the precise identification of plant varieties can optimize planting efficiency [5], as well as aid in detecting weeds detrimental to crop development.

Numerous methods exist for the identification of plant species and varieties, such as gas chromatography [1], mass spectrometry [18], electrochemical fingerprinting [12], molecular analysis [21], among other techniques. Although these methods effectively assist in recognizing plant varieties, they necessitate specialized and costly equipment, often utilized within laboratory settings.

The application of machine learning for plant recognition through image analysis has garnered interest due to its user-friendliness and accessibility [4]. In the machine learning approach, the only required equipment is a device capable of capturing plant images (or their components), such as a smartphone or a standard camera, which individuals can utilize without prior experience in plant classification. Although some researchers have demonstrated the feasibility of classifying plant species using computer vision with remarkable accuracy, a more challenging subclass of the problem persists: distinguishing varieties within the same species. Plants of the same species often exhibit strikingly similar appearances across leaves, stems, and flowers [19]. Nevertheless, in certain applications, it is crucial to classify plant varieties, as exemplified by hops used in beer production. Hops impart flavor, aroma, and bitterness; depending on the variety, the beverage’s characteristics are significantly affected. Chemical processes differentiate hop varieties in the industry, but these methods are expensive and time-consuming.

In this context, researchers have made available a dataset, the UFOP-HVD [6]Footnote 1, containing images of 12 hop varieties. In [7], deep learning techniques, specifically convolutional networks, were explored for hop variety classification. However, the dataset is limited, containing only 1,592 images (4,159 hop leaves). As a result, two research questions emerge: RQ1, given the limited number of images in the UFOP-HVD dataset, which may not favor deep learning techniques, is it possible to achieve better or more competitive results using traditional feature extraction techniques? And RQ2, can the combination of traditional techniques and deep learning improve the current state-of-the-art for the UFOP-HVD dataset?

The experimental findings of this study indicate that traditional techniques yield competitive results and offer several advantages, such as easier interpretability, reduced computational costs, and the potential for seamless integration into mobile devices, compared to deep learning techniques. Nevertheless, merging traditional methods with deep learning has shown potential to yield significant advancements in classification. This was particularly evident in the tested hop varieties dataset, where an improvement in accuracy was observed from 77.16% to 81.90%, as demonstrated by our experimental findings.

Given that, the dataset under investigation is relatively new, this work also establishes an initial baseline for the problem, encompassing traditional and deep learning approaches.

2 Related Works and Dataset

Humulus lupulus L., commonly referred to as hops, is a climbing plant whose flowers are a principal ingredient in beer production worldwide. Hops impart flavor, bitterness, and aroma to the beverage [3], and play an important role in stabilization. Over 250 cataloged varieties of this plant exist [17], with distinguishing features including alpha-acids, beta-acids, and essential oils [25]. These components endow each beer with unique characteristics, potentially influencing its classification.

Numerous methods exist for identifying hop varieties based on their acids and essential oils [11, 13, 24]. However, these techniques may be inaccessible or unavailable to farmers. Machine learning and computer vision-based methods offer promising alternatives, given the extensive literature on plant species recognition [4]. For hops, the focus is on classifying the variety, which is a taxonomic level below species. As [19] states, intraspecific varieties share common genotype or phenotype traits. In the case of hops, visually observable morphological features are quite similar, as demonstrated in Fig. 1. To address this problem with computer vision, Brazilian researchers have provided an image dataset to facilitate the development of machine-learning techniques for the classification of hop varieties.

In [7], an end-to-end method for hop variety classification using the UFOP-HVD was proposed. The researchers investigated several CNN architectures: ResNets, EfficientNets, and InceptionNets. They explored image classification with and without leaf segmentation, with and without data augmentation, and proposed an ensemble architecture called Multi-Cropped-Full, which combined six models. For the leaf-only classification problem, termed “cropped classification” by the authors, a ResNet50 architecture with a 50% dropout achieved the best results, attaining 78% accuracy. The ensemble model, Multi-cropped-FULL, utilized multiple leaves from the same image and the entire image as input, achieving 81% accuracy. With the application of data augmentation techniques, the Multi-cropped-FULL model reached 95% accuracy. In the present study, we explore traditional machine learning techniques using the dataset in the “cropped” configuration, wherein each detected leaf image (an image that can have multiple hop leaves) is transformed into a new input for the problem. In this cropped evaluation format, only the main leaf should be considered. Given the limited number of samples, it is hypothesized that traditional machine-learning techniques should yield results comparable to those reported in [7].

2.1 UFOP Hop Varieties Dataset

The UFOP Hop Varieties Dataset (UFOP-HVD) [6] comprises 1,592 images of young and adult hop leaves across 12 varieties. The images were captured without controlling lighting, focus, distance, or angle and have resolutions ranging from 1,040\(\,\times \,\)520 to 4,096\(\,\times \,\)3,072. The dataset allocates 70% of the images for training, 15% for validation, and 15% for testing, establishing a protocol to be adhered to by authors. Representative samples from the dataset can be viewed in Fig. 1.

Each image in the dataset is accompanied by an XML file that specifies the plant variety and includes one or more leaves labeled using bounding boxes. These bounding boxes are defined by x and y coordinates for the upper-left and lower-right corners. Leaves that are not sharp or are significantly occluded have been excluded by the dataset author to maintain data quality.

Table 1 presents the number of images per class for each set. The photographs were captured using Motorola Moto G7, Samsung Galaxy A11, and Apple iPhone 11 devices.

3 Methodology

In this study, hop classification is not conducted using the entire image. Instead, only the main leaf, which possesses the largest area among all leaves, is utilized. As illustrated in Fig. 2, the main leaf is marked with a red bounding box, while the remaining leaves are outlined in yellow. The initial step involves evaluating the area of all leaves in the image and extracting the largest one. Subsequently, the cropped image is resized such that its longest side measures L pixels. This parameter is further investigated in Sect. 4. This standardization process is essential to account for varying image resolutions. Lastly, the image is converted to grayscale in preparation for feature extraction algorithms.

3.1 Proposed Method

Figure 3 showcases the method, unifying all steps. The original image is pre-processed through cropping, resizing, and grayscale conversion. Next, GLCM, LBP, DAISY, and KASE feature extractors are applied. DAISY and KASE descriptors are normalized between 0 and 1, and fed to the Bag of Visual Words to create feature vectors. These vectors are normalized and concatenated into one, then sent to the SVM classifier.

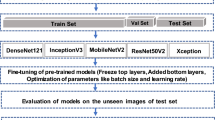

To assess the impact of using traditional techniques on convolutional networks, a simple ensemble [10] method for combining the outputs of both is evaluated. In this study, the ensemble sums the predictions of the models to generate a final prediction, as demonstrated in Fig. 4.

3.2 Traditional Machine Learning Techniques

Feature extraction is vital for image classification in machine learning, as it helps mitigate errors caused by angle, lighting, and scale variations. This study investigates five robust feature extraction techniques resilient to these variations and employs a Support Vector Machine (SVM) with Radial Basis Function (RBF) for the classification step.

Gray Level Co-Occurrence Matrix (GLCM). [15] proposes a texture feature extraction method based on second-order statistics. A p \(\times \) p matrix P is generated, where p represents the number of possible image intensities. Pairs of intensities are counted based on location parameters, such as distance and angle. Then, the co-occurrence matrix is normalized so that the sum of all its elements is 1. This matrix is usually sparse, and there are a few ways to better adapt it for classification. Haralick et al. [15] suggested 28 attributes generated from the matrix P, and those applied in this work are contrast, dissimilarity, homogeneity, angular second moment, energy, and correlation. The scalars obtained as a result form a vector of attributes.

Local Binary Pattern. The Local Binary Pattern (LBP) is a texture analysis feature extractor [22]. For each image pixel, it generates a value representing the pixel and its surroundings by determining the distance and number of neighbors. A binary code is formed by comparing the central pixel with its neighbors, and then converted to a decimal value. The original image pixels are replaced by these numerals, forming a new matrix that can be used directly as a feature or converted to a histogram as an attribute vector. Parameters, such as increasing the radius to the central pixel, can be adjusted. To reduce possible variations, [23] proposed a uniformity and circularity model. Uniform patterns have a maximum of 2-bit inversions, and circularity allows codes with the same shifted sequence to be marked as equivalent, reducing the number of combinations.

DAISY. [28] introduces a local region descriptor generator initially designed for stereo matching, but applicable for classification. It works with histograms of oriented gradients and can be applied to entire images or fragments around points of interest. The algorithm defines a central pixel c and calculates the gradient histogram in o orientations. R rings are generated around c with radius \(r_i\). For each ring \(R_i\), h points are uniformly distributed from 0 to 360\(^\circ \), and histograms with o directions are calculated. The total number of histograms H is \(R \times h + 1\). The Gaussian filter size increases proportionally with \(r_i\). Histograms are independently normalized and concatenated, resulting in a feature vector of size \(H \times o\).

KASE. [2] is a method for detecting regions of interest and generating rotation and scale invariant descriptors. It involves building image gradients at various scales, calculating Hessian determinants on each scale, and using a sliding window to find local maxima for identifying points of interest. Descriptors are created for rectangular regions centered on each point, using first-order derivatives in horizontal and vertical directions. The 64-attribute descriptor is made rotation invariant by calculating the main orientation in a local neighborhood and rotating the descriptor towards that angle.

Bag of Visual Words. Direct use of DAISY and KASE descriptors in classifiers presents challenges due to varying input sizes and local rotation and scale invariance. The Bag of Visual Words (BOVW) [9, 29] method overcomes these issues by grouping similar descriptors and generating a histogram representing a visual word vocabulary. This creates a constant-size feature vector suitable for classifiers. Clustering algorithms, specifically K-means, are used to group similar descriptors in this work.

3.3 Deep Learning Techniques

In this study, the Cropped Base convolutional networks outlined in [7] were replicated, employing the blocks from InceptionV3 [26], ResNet50 [16], and EfficientNetB3 [27] architectures. The purpose of reproducing these networks is to facilitate a comparison with traditional feature extraction and classification methods.

4 Experiments and Results

To address the research questions posed in this study, the experiments are divided into two groups. First, we investigate the feature extraction techniques with an SVM classifier, followed by tests with the re-implemented convolutional networks from the proposed work in [7], under the same setup, using UFOP-HVD data. Next, we evaluate the combination (ensemble) of multiple techniques. The evaluation metric employed is accuracy. In addition to experimenting with the models, this section also presents data on the interpretability of the base models. The source code for loading and preparing the data from the database is available at https://anonymous.4open.science/r/anonymous-8291.

4.1 RQ1: Can Better or More Competitive Results Be Achieved Using Traditional Feature Extraction Techniques?

Table 2 displays the classification accuracy using features generated by GLCM. Each row corresponds to a different configuration, and the ‘Id’ column identifies the configuration for reference. For all configurations, the co-occurrence angles of the pixels were 0\(^\circ \), 45\(^\circ \), 90\(^\circ \), 135\(^\circ \), 180\(^\circ \), 225\(^\circ \), 270\(^\circ \), and 315\(^\circ \). The parameter L, introduced earlier, corresponds to the length of the longest side of the resized image and can assume values of 400, 500, and 600 pixels. These values determine the model instances, which will be named L400, L500, and L600, respectively. The variable parameter was the distance between the co-occurring pixels. It can be observed that L400 and L500 instances benefited from the use of longer distances, while the L600’s performance declined under these conditions. The accuracy contribution from greater distances suggests the need to further increase them to investigate the gain limit.

Table 3 shows the classification results with LBP attributes. The variable parameter was the radius length, and the number of points was set as 8 times the radius value. The feature extraction method was the uniform pattern. L400 and L600 instances performed better with larger radii, indicating potential exploration of increasing these parameters. L500, which achieved the best accuracy for the attribute, did not exhibit noticeable patterns concerning the radius. LBP demonstrated the best individual performance among all extractors.

The DAISY feature extractor has the most adjustable parameters and, consequently, more available configurations. The accepted values for the descriptor radius were 9, 18, and 36, which may contain 2 or 3 rings, as shown in Table 4. Additionally, for each instance, a different step parameter was used: 25, 33, and 40 for L400, L500, and L600, respectively. All settings employed a fixed number of 8 orientations and 16 histograms. Besides the DAISY descriptors parameterization, the number of K-means clusters, which form the basis for Bag of Visual Words, varied between 25, 50, 100, and 200 clusters. L500 performed better with larger clusters, while L400 and L600 performed optimally with 100.

Lastly, Table 5 presents the accuracy for attributes obtained with KASE. The modified parameters were the number of clusters for the K-means algorithm and the presence or absence of contrast enhancement. When contrast enhancement was applied, a factor of 2 was used. The L500 instance achieved the best performance. In all cases, increased contrast contributed to an improvement in the hit rate. This is possible because the number of regions of interest detected by KASE increases, generating more descriptors that can help better discriminate one leaf from another. Moreover, all instances performed better with more clusters. It is crucial to investigate whether increasing the contrast factor or the number of clusters may lead to an even greater gain in accuracy.

Combined Attributes. To explore different image features, the attributes generated by GLCM, LBP, DAISY, and KASE were combined into a single feature vector. Tests were conducted on the validation set using the SVM classifier with the parameter \(C = 30\). Several combinations were performed with each extractor configuration. However, to reduce the total number of solutions, a heuristic was followed. Initially, all pairs composed of GLCM and LBP attributes were tested. Then, the pair with the best accuracy was chosen for the next step, where it was combined with the remaining features (DAISY and KASE). The tests were executed again, and this time, the tuple with the highest hit rate was chosen as the base model. This process was performed for the L400, L500, and L600 instances, and the base models returned are named MB400, MB500, and MB600, respectively. Table 6 displays the chosen configuration of each attribute for the base models, ensuring maximum performance. The total attributes of the final feature vector are also recorded.

The best models on the validation were executed on the test set to obtain the final accuracy. Table 7 presents these accuracies and compares them with those achieved by the Cropped Base models from the work of [7]. In [7], the Cropped Bases were applied to the validation set. Therefore, here we rerun them on the test set for comparison purposes. Cropped Base also uses the main cropped leaf at a \(300\,\times \,300\) resolution. It has variants with the InceptionV3, ResNet50, and EfficientNetB3 neural networks, subject to a dropout rate of 0.2, 0.5, or 0.8, as seen in parentheses in the Table 7 ‘Model’ column. MB400 and MB600 models outperformed InceptionV3 with dropout rates of 0.2 and 0.5. MB500 only surpassed InceptionV3 with a dropout rate of 0.2. However, it is worth noting the significant reduction in the proposed models’ size in MB. The base models are at least 10 times smaller than the CNN-base model, making them suitable for use on devices with limited storage and memory capacity.

4.2 RQ2: Can the Combination of Techniques Improve the Current State-of-the-Art?

The final test setup focuses on the ensemble of the proposed models and the Cropped Bases. Table 8 displays the accuracies of the ensemble models in the ‘Model’ column with MB400, MB500, MB600, and their combinations. It can be observed that the ensembles improved the accuracy of all Cropped Bases. The integration with MB400 showed greater gains in the convolutional networks with dropout rates of 0.2 and 0.5. The network achieving the highest success rate was EfficientNetB3, with a dropout rate of 0.5, increasing from 77.16% to 81.90% accuracy when its predictions were combined with the outputs of the MB400 and MB500 models simultaneously. It is also noted that the union of base models can enhance performance, as is the case with MB400 and MB600, which produced a 68.10% accuracy together.

Figure 5 presents the confusion matrix of the ensemble of models MB400, MB500, and EfficientNetB3 (dropout rate of 0.5). A significant improvement in many varieties is observed. Cascade achieved 100% accuracy, and five other varieties had an accuracy of at least 85%. Despite some improvement, Nugget had the lowest performance, while Cluster remained among the worst.

4.3 Interpretability

This study used variable permutation to evaluate the importance of GLCM, LBP, KASE, and DAISY features for the MB400, MB500, and MB600 models. LBP was the most significant feature, contributing over 50% to the final prediction (See Fig. 6). GLCM also played a substantial role, while MB500 relied more on DAISY and MB600 on KASE. MB400 had minimal influence from KASE and DAISY. Analyzing the feature importance by class revealed potential bottlenecks and opportunities for improving the models, such as removing or replacing KASE attributes for MB400, increasing LBP configurations for MB500, and adjusting GLCM parameters for MB600.

5 Conclusion

In this study, we examine the problem of hop variety classification, comparing traditional feature extraction methods with deep learning approaches. We also introduce an ensemble method, achieving a new state-of-the-art for the UFOP-HVD Dataset (improving the accuracy from 77.16% to 81.90%). Our findings indicate that it is possible to develop competitive methods for this problem using traditional techniques and handcrafted features. The three proposed methods have a small footprint, making them suitable for devices with limited memory capacity, such as IoT devices for agriculture or even smartphones. This characteristic could facilitate their deployment in the field.

It is worth noting that the images in the dataset were captured using three different sensors (3 cell phones) in an uncontrolled environment. Moreover, the data comprises numerous classes, and the leaf images exhibit highly similar morphological features, which renders classification more challenging. Despite these factors, the results presented here demonstrate robustness.

Among the limitations of this study, one is the manual annotation of the bounding boxes. It would be worthwhile to explore techniques for automatic object detection. In addition, potential areas for model improvement were presented through attribute permutation and the calculation of the importance of each attribute. Consequently, it is expected that future work will yield even better results by fine-tuning each feature extraction technique.

Notes

- 1.

Available at https://doi.org/10.6084/m9.figshare.14933178.

References

Afifi, S.M., El-Mahis, A., Heiss, A.G., Farag, M.A.: Gas chromatography-mass spectrometry-based classification of 12 fennel (foeniculum vulgare miller) varieties based on their aroma profiles and estragole levels as analyzed using chemometric tools. ACS Omega 6(8), 5775–5785 (2021)

Alcantarilla, P.F., Bartoli, A., Davison, A.J.: KAZE features. In: Fitzgibbon, A., Lazebnik, S., Perona, P., Sato, Y., Schmid, C. (eds.) ECCV 2012. LNCS, vol. 7577, pp. 214–227. Springer, Heidelberg (2012). https://doi.org/10.1007/978-3-642-33783-3_16

Astray, G., Gullón, P., Gullón, B., Munekata, P.E., Lorenzo, J.M.: Humulus lupulus L. As a natural source of functional biomolecules. Appl. Sci. 10(15), 5074 (2020)

Azlah, M.A.F., Chua, L.S., Rahmad, F.R., Abdullah, F.I., Wan Alwi, S.R.: Review on techniques for plant leaf classification and recognition. Computers 8(4), 77 (2019)

Bhattarai, U., Karkee, M.: A weakly-supervised approach for flower/fruit counting in apple orchards. Comput. Ind. 138, 103635 (2022)

Castro, P., Luz, E., Moreira, G.: Dataset for hop varieties classification. Data Brief 38, 107312 (2021)

Castro, P.H.N., Moreira, G.J.P., da Silva Luz, E.J.: An end-to-end deep learning system for hop classification. IEEE Latin America Trans. 20(3), 430–442 (2021)

Chen, S., et al.: A renaissance in herbal medicine identification: from morphology to DNA. Biotechnol. Adv. 32(7), 1237–1244 (2014)

Csurka, G., Dance, C., Fan, L., Willamowski, J., Bray, C.: Visual categorization with bags of keypoints. In: Workshop on Statistical Learning in Computer Vision, ECCV, pp. 1–2 (2004)

Dietterich, T.G.: Ensemble methods in machine learning. In: Kittler, J., Roli, F. (eds.) MCS 2000. LNCS, vol. 1857, pp. 1–15. Springer, Heidelberg (2000). https://doi.org/10.1007/3-540-45014-9_1

Duarte, L.M., Adriano, L.H.C., de Oliveira, M.A.L.: Capillary electrophoresis in association with chemometrics approach for bitterness hop (humulus lupulus L.) classification. Electrophoresis 39(11), 1399–1409 (2018)

Fan, B., et al.: Development of an electrochemical technology for ten clematis SPP varieties identification. Int. J. Electrochem. Sci. 15, 10212–10220 (2020)

Farag, M.A., Mahrous, E.A., Lübken, T., Porzel, A., Wessjohann, L.: Classification of commercial cultivars of humulus lupulus L. (hop) by chemometric pixel analysis of two dimensional nuclear magnetic resonance spectra. Metabolomics 10(1), 21–32 (2014)

Ferri, G., Alù, M., Corradini, B., Beduschi, G.: Forensic botany: species identification of botanical trace evidence using a multigene barcoding approach. Int. J. Legal Med. 123(5), 395–401 (2009)

Haralick, R.M., Shanmugam, K., Dinstein, I.H.: Textural features for image classification. IEEE Trans. Syst. Man Cybern. SMC 3(6), 610–621 (1973)

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 770–778 (2016)

Healey, J.: The Hops List: 265 Beer Hop Varieties From Around the World (2016)

Jakab, A., Héberger, K., Forgács, E.: Comparative analysis of different plant oils by high-performance liquid chromatography-atmospheric pressure chemical ionization mass spectrometry. J. Chromatogr. A 976(1–2), 255–263 (2002)

Jenks, M.A.: Plant nomenclature. Purdue University-Department of Horticulture and Landscape Architecture, Disponível (2011)

Killermann, W.: Research into biology teaching methods. J. Biol. Educ. 33(1), 4–9 (1998)

Lotti, C., Ricciardi, L., Rainaldi, G., Ruta, C., Tarraf, W., De Mastro, G.: Morphological, biochemical, and molecular analysis of L. Open Agric. J. 13(1) (2019)

Ojala, T., Pietikäinen, M., Harwood, D.: A comparative study of texture measures with classification based on featured distributions. Pattern Recogn. 29(1), 51–59 (1996)

Ojala, T., Pietikainen, M., Maenpaa, T.: Multiresolution gray-scale and rotation invariant texture classification with local binary patterns. IEEE Trans. Pattern Anal. Mach. Intell. 24(7), 971–987 (2002)

Shellie, R.A., Poynter, S.D., Li, J., Gathercole, J.L., Whittock, S.P., Koutoulis, A.: Varietal characterization of hop (humulus lupulus L.) by GC-MS analysis of hop cone extracts. J. Sep. Sci. 32(21), 3720–3725 (2009)

Steenackers, B., De Cooman, L., De Vos, D.: Chemical transformations of characteristic hop secondary metabolites in relation to beer properties and the brewing process: a review. Food Chem. 172, 742–756 (2015)

Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J., Wojna, Z.: Rethinking the inception architecture for computer vision. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 2818–2826 (2016)

Tan, M., Le, Q.: EfficientNet: rethinking model scaling for convolutional neural networks. In: International Conference on Machine Learning, pp. 6105–6114. PMLR (2019)

Tola, E., Lepetit, V., Fua, P.: DAISY: an efficient dense descriptor applied to wide-baseline stereo. IEEE Trans. Pattern Anal. Mach. Intell. 32(5), 815–830 (2009)

Yang, J., Jiang, Y.G., Hauptmann, A.G., Ngo, C.W.: Evaluating bag-of-visual-words representations in scene classification. In: Proceedings of the International Workshop on Workshop on Multimedia Information Retrieval, pp. 197–206 (2007)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Castro, P., Fortuna, G., Silva, P., Bianchi, A.G.C., Moreira, G., Luz, E. (2023). Merging Traditional Feature Extraction and Deep Learning for Enhanced Hop Variety Classification: A Comparative Study Using the UFOP-HVD Dataset. In: Naldi, M.C., Bianchi, R.A.C. (eds) Intelligent Systems. BRACIS 2023. Lecture Notes in Computer Science(), vol 14196. Springer, Cham. https://doi.org/10.1007/978-3-031-45389-2_21

Download citation

DOI: https://doi.org/10.1007/978-3-031-45389-2_21

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-45388-5

Online ISBN: 978-3-031-45389-2

eBook Packages: Computer ScienceComputer Science (R0)