Abstract

The accurate identification of promoter regions in DNA sequences holds significant importance in the field of bioinformatics. While this problem has garnered substantial attention in the literature, it remains unresolved. Several researchers have achieved notable outcomes by employing diverse machine-learning techniques to predict promoter regions. However, only a few have thoroughly explored the utilization of features derived from the physicochemical properties of DNA across various organism types. This study investigates the advantages of incorporating these features in the training of machine-learning models. The research evaluates and compares the performance of multiple metrics on diverse datasets encompassing both prokaryotic and eukaryotic organisms. The state-of-the-art CNNProm method is employed as the baseline for our experiments. The models and source code associated with this study can be accessed at the following URL of the project’s repository: https://anonymous.4open.science/r/bracis-paper-1458/.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

The identification of gene products and their location within DNA sequences that have not been experimentally characterized, commonly known as gene finding, is a central topic of interest in computational biology. Prediction of promoter sequences and transcriptional start points can help signal a transcript’s approximate start, thereby identifying one end of a gene. This information is particularly useful in DNA sequences derived from higher eukaryotes, where coding regions are isolated segments embedded within a non-coding DNA background [28].

A promoter region in DNA is a noncoding sequence of DNA located upstream of a gene that is responsible for regulating the expression of that gene [17]. The promoter region contains binding sites for transcription factors, which are proteins that bind to the promoter and recruit RNA polymerase to initiate transcription. The specific pattern of binding sites in the promoter determines the level of gene expression and the conditions under which the gene is active [5].

High-resolution promoter recognition in DNA is an important area of research in bioinformatics. It involves the use of algorithms to find the transcription start site (TSS) of a gene without the need for laborious and costly experimental techniques such as aligning expressed sequence tags (ESTs), complementary DNAs (cDNAs) or messenger RNAs (mRNAs) to the entire genome [33]. The TSS is a specific genomic location within the promoter region where RNA polymerase binds and initiates transcription. It marks the starting point of the transcription process, where the DNA sequence is transcribed into RNA. Defining the TSS position as a reference, the upstream region is the DNA sequence located before the transcription start site, and the downstream region is situated after the TSS.

Identifying the promoter region allows us to study the regulation of gene expression and understand how different genes are controlled in various tissues, conditions, and stages of development [31]. In addition, by identifying the promoter regions for a set of genes, one can infer information about the molecular mechanisms that control gene expression and how these mechanisms may change in response to different stimuli. So, these algorithms can efficiently delimit regions involved in transcriptional regulation, guiding further experimental work, given the lower cost associated with computational approaches [33].

The identification of promoter regions is often performed by computational methods, such as promoter prediction algorithms, that analyze the DNA sequence and look for characteristic features such as TATA boxes [20] and CpG islands [10] and other short conserved sequences. However, multiple groups of genes don’t contain these features. Methods for identifying promoters have been widely adopted in bioinformatics, as it allows for the generation of comprehensive maps of promoter regions in a genome which can be used for further analysis, such as studying gene regulation in response to environmental cues or identifying novel regulatory elements. Despite recent advances in promoter recognition algorithms, accurately identifying promoters remains challenging due to the diversity and complexity of these sequences in genome [34].

Methods for promoter prediction have been established to recognize promoter regions within the DNA sequences of both prokaryotic and eukaryotic organisms. These methods harness various machine learning algorithms for their functioning. The support vector machines (SVM) [9] were used in [26] to evaluate the strength in a dataset of Escherichia coli Trc promoter. [1] applied a genetic algorithm to calibrate the SVM hyperparameters using a human promoter dataset. [3] used datasets of three higher eukaryotes, Saccharomyces cerevisiae, A. thaliana, and human, to train Convolutional Neural Network (CNN) [24] with Long Short Term Memory (LSTM) [18] and Random forest (RF) [4] models. A study of promoter prediction on bacterial datasets was performed by [8] using RF models. In [25] a stacked ensemble of LightGBM [21], XGBoost [6], AdaBoost [14], GBDT [15], and RF models were used on a Escherichia coli dataset. The work of [30] proposed some CNN models to identify promoters on different organisms datasets. In [27] the same datasets were used to train a capsule neural network (CapsNet) [29].

The existing literature of promoter prediction lacks comprehensive investigations regarding the influence of DNA physicochemical properties on the accuracy of promoter prediction across diverse organisms. Consequently, the primary objective of this study is to examine the efficacy of features derived from DNA physicochemical properties in training machine learning models for predicting promoter regions in both prokaryotic and eukaryotic organisms. To assess the performance of our models, we utilize the datasets introduced by Umarov et al. (2017) [30], which encompass a wide range of organisms. For each property, we train and compare multiple machine learning models, as well as ensembles of these models, to identify the most effective properties. To evaluate the overall predictive capacity of these models, we compare their performance against the state-of-the-art method for these datasets, namely CNNProm [29]. The results indicate that the models based solely on properties do not yield optimal performance. Still, they may serve as a foundational step for future research by combining them with other techniques and models to enhance predictive accuracy.

2 Materials and Methods

2.1 Benchmark Datasets

The seven datasets utilized in this study were sourced from the supplementary materials made available by [30] and can be accessed on the corresponding GitHub repositoryFootnote 1. Regrettably, the dataset pertaining to “Human TATA” could not be accessed through the repository and therefore was excluded from the analysis in this work.

The eukaryotic organism sequences, spanning 251bp (base pairs) with a transcription start site (TSS) located at the 200th position, were sourced exclusively from the EPD database [12]. The dataset includes Arabidopsis TATA and Arabidopsis non-TATA sequences from a plant species, as well as Mouse TATA, Mouse non-TATA, and Human non-TATA sequences from mammalian species. Non-promoter sequences in these datasets are comprised of random gene fragments located after the first exons.

The bacterial promoter sequences of Bacillus subtilis were obtained from DBTBS [19], while the Escherichia coli s70 sequences were acquired from RegulonDB [16]. These sequences, which comprise 81bp, contain the transcriptional start site (TSS) at the 60th position. Conversely, the non-promoter sequences for these prokaryotic organisms include the reverse sequences of random fragments extracted from protein-coding genes.

Figure 1 shows the number of promoters and non-promoters in each dataset. Notably, all datasets have more non-promoter samples than promoter samples. This characteristic can potentially affect the model learning process as they tend to classify the data into the majority class [22]. Besides, the prokaryotic datasets have fewer samples compared to eukaryotic datasets.

2.2 Feature Representation

Adenine (A), cytosine (C), guanine (G), and thymine (T) are the four nucleotides that comprise the alphabet \(V=\{A, C, G, T\}\) forming the basis of DNA. A DNA sample of length \(l_{S} \in \mathbb {N}^{*}\) base pairs is represented by \({S} \in V^{l_{S}}\), which can be written as a sequence \({S} = s_{1} ... s_{i} ... s_{l_{{S}}}\), where \(s_{i}\) is the i-th nucleotide of the sequence S.

To convert the DNA sequence into a numeric format, we can use the physicochemical properties of dinucleotides or trinucleotides. A dinucleotide represents a pair of adjacent nucleotides in a DNA sequence, while a trinucleotide represents three adjacent nucleotides. These properties capture the physicochemical characteristics of nucleotides and the structural features of DNA [7]. For example, the properties may include measures of hydrogen bond formation, base stacking, base pairing, or flexibility of the DNA helix.

K-mers are substrings of length k that are extracted from a longer DNA or protein sequence. This bioinformatics concept can be used in sequence analysis to identify motifs, patterns, or features characteristic of a specific biological function. Considering the nucleotide alphabet V, there is \(|V|^{k}\) possible mers with length k.

As the name suggests, dinucleotides are two adjacent nucleotides that occur in a DNA sequence. It can be seen as a special case of k-mers with \(k=2\). There are \(4^{2} = 16\) possible dinucleotides, such as “AT”, “CG”, “TA”, etc. While trinucleotides are three adjacent nucleotides that occur in a DNA sequence. It is a special case of k-mers with \(k=3\). There are \(4^{3} = 64\) possible trinucleotides, such as “ATG”, “CGT”, “TAA”, etc.

There are several physicochemical properties in the literature. The work of [7] used 38 properties for dinucleotides and 12 for trinucleotides, making the mapping values available for each in the supplementary data section. We used these data to convert the dataset sequences and create the input features for our tested models. In Table 1, we show the names of all of these 50 properties that we used. There is one column for the dinucleotide names and one for the trinucleotide names.

To convert the DNA sequence into dinucleotide or trinucleotide physicochemical properties, we can use a function that maps each dinucleotide or trinucleotide to a corresponding numerical value based on the selected physicochemical property. This mapping function can be represented as \(f: V^{k} \rightarrow \mathbb {R}\).

A sequence S with \(l_{s}\) nucleotides is converted to a sequence of \(l_{s}-k+1\) property values. So, \(f_{{d}_{m}}(S) \rightarrow \mathbb {R}^{l_{s}-1}\) is the function that maps each dinucleotide to its related physicochemical property m, where \(1 \le m \le 38\). While \(f_{{t}_{n}}(S) \rightarrow \mathbb {R}^{l_{s}-2}\) define a similar mapping function to convert trinucleotides into the m-th physicochemical property, where \(1 \le n \le 12\).

Using a sliding window strategy, with length 2 bp for dinucleotides and 3 bp for trinucleotides, and a step size of 1 bp, we apply the corresponding mapping function to get all physicochemical property numeric values and create the feature vectors that we use in this work to train machine learning models. Figure 2 shows an example of converting a nucleotide sequence of length 8 bp into two numeric vectors to be used as features. On the left, we use a sliding window of 2 bp to extract all 7 dinucleotides and then apply the mapping function to convert them to numeric values using the physicochemical property “Shift”. On the right, we apply a similar strategy with a sliding window of 3 bp to create a mapped vector of length 6 using the trinucleotide physicochemical property “Nucleosome”.

With this approach, we can describe the profiles of properties in each dataset. For example, Fig. 3 illustrates all 12 trinucleotide average profiles in the Human_non_tata dataset. There is one graph for each trinucleotide property. Each point presents the average property value in a specific position of the analyzed sequences. The positions in the x-axis give negative values for the upstream part of sequences and positive ones for the downstream portion. Position zero is the TSS. We can see the different patterns in the promoter and non-promoter samples. The non-promoter’s averages tend not to show significant variations, while the promoter’s averages tend to vary around the TSS position.

2.3 K-Fold Cross-validation Evaluation

K-fold cross-validation [13] is a popular technique used in machine learning and statistical modeling for assessing model performance. It involves dividing the dataset into k subsets or folds, using one fold as the validation set and the remaining k-1 folds as the training set. This process is repeated k times, with each fold serving as the validation set once. By repeatedly training and evaluating the model on different subsets of the data, k-fold cross-validation provides a robust estimate of the model’s performance on new, unseen data, helping to mitigate the risk of overfitting.

To address the issue of imbalanced target variables, a modification of k-fold cross-validation called stratified k-fold cross-validation [11] is often employed. Stratified k-fold cross-validation ensures that each fold maintains a similar distribution of classes as the overall dataset. It involves dividing the dataset into k folds and adjusting the fold split to preserve the class distribution in both the training and validation sets. This technique is particularly useful when dealing with imbalanced datasets, as it ensures that the model is evaluated on all classes rather than being biased towards the most frequent class.

The performance metrics obtained from each fold in k-fold cross-validation are typically averaged to obtain an overall estimate of the model’s performance. This technique applies to various evaluation metrics and provides a more reliable assessment of the model’s generalization ability. By employing k-fold cross-validation, researchers can confidently evaluate their models and make informed decisions regarding model selection and performance comparison in the field of machine learning and statistical modeling.

2.4 Evaluation Measures

The problem of Promoter Classification can be defined as a binary classification task, where the predicted class variable can take on two values: 1 (one) if the DNA sequence is predicted to be a promoter and 0 (zero) otherwise. This classification task leads to four possible outcomes: a true positive (TP), which occurs when the model correctly identifies a promoter sequence; a true negative (TN), which occurs when the model correctly identifies a non-promoter sequence; a false positive (FP), which occurs when the model incorrectly identifies a non-promoter sequence as a promoter; and a false negative (FN), which occurs when the model incorrectly identifies a promoter sequence as a non-promoter.

Precision (Prec) measures the relevance of the predicted true positives (Eq. 1). Sensitivity, also known as Recall (Sn), measures the proportion of true positives (Eq. 2). Specificity (Sp) measures the proportion of true negatives (Eq. 3). Accuracy (Acc) reflects the proportion of correct predictions (Eq. 4). The F1-score (F1) metric, a harmonic mean of precision and recall, combines both measures and assesses the classifier’s performance (Eq. 5).

Matthews Correlation Coefficient (MCC) is a balanced metric that considers all four components of the confusion matrix and provides a balanced measure ranging from -1 to +1, with +1 indicating perfect classification, 0 indicating random classification, and -1 indicating completely opposite classification (Eq. 6). It is more robust than other metrics in handling class imbalance and biased datasets [35]

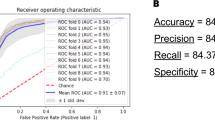

The Area Under the Curve (AUC) is a metric used to evaluate the performance of a binary classification model. It represents the area under the Receiver Operating Characteristic (ROC) curve, which plots the true positive rate against the false positive rate for different classification thresholds. AUC ranges from 0 to 1, with higher values indicating better classification performance.

2.5 Hyperparameter Optimization

The Tree-structured Parzen Estimator (TPE) [2] is a Bayesian optimization algorithm that efficiently explores the search space to find optimal hyperparameters for machine learning models. TPE models the relationship between hyperparameters and performance metrics by maintaining two probability density functions (PDFs) representing successful and unsuccessful configurations. It utilizes these PDFs to guide the search towards regions of the search space more likely to yield improved performance.

During the tuning process, TPE samples hyperparameter configurations based on its probability distributions, evaluate model performance using cross-validation and updates the PDFs accordingly. This iterative approach enables the algorithm to explore and refine the search space, ultimately leading to the identification of hyperparameter configurations that optimize model performance. TPE’s combination of exploration and exploitation strategies makes it a powerful tool for effectively and efficiently optimizing machine learning models.

2.6 Ensemble Methods

The voting ensemble method [23] combines predictions from multiple individual models to make a final prediction. It involves aggregating the outputs of different models, either by majority voting (for classification problems) or by averaging (for regression problems). By combining the predictions of multiple models, the voting ensemble could enhance the overall prediction accuracy and robustness.

The stacking ensemble method [32] leverages the predictions of multiple base models to train a meta-model, which learns to make predictions based on the outputs of the base models. The predictions, along with the original target variable, are then used to train a meta-model that learns to combine the base models’ outputs. The stacking ensemble can capture complex interactions among the models and exploit their complementary strengths, leading to improved prediction performance.

3 Experiments and Results

In order to assess the performance of physicochemical properties in the context of promoter prediction, a series of experiments were conducted. The evaluation encompassed the utilization of individual properties as well as combinations of the most promising ones across seven diverse datasets representing various organism types. To ascertain the effectiveness of the proposed methods within the domain of the problem, the obtained results were compared against a baseline that is regarded as state-of-the-art on these specific datasets.

3.1 Experiment Workflow

The implemented processing workflow encompasses the transformation of raw DNA sequences within a given dataset into physicochemical property values. Subsequently, one or more properties are selected, and their corresponding values are concatenated to form the feature set. The evaluation process involves a 5-fold cross-validation, which partitions the dataset into training and test subsets. Within the training subset, a further 5-fold cross-validation is conducted to compare various machine learning methods and identify the optimal one for hyperparameter tuning. Finally, the tuned model is evaluated using the test dataset.

To conduct our experiments, we utilized PyCaret 3Footnote 2, an advanced machine learning library. PyCaret offers a comprehensive range of eighteen classification methods, which were employed in our study (Table 2).

3.2 Individual Properties’ Performances

Using the 5-fold cross-validation method, we compared several machine learning models to observe the prediction power of each physicochemical property. Then, we chose the best one that uses that property. After, we tuned the hyperparameters of the selected models using the same training data and the 5-fold cross-validation. Figure 4 presents the results from the tuned models. The bars show the MCC scores’ maximum, minimum, and average from evaluating these models. Each dataset has fifty models, one for each property, among dinucleotides and trinucleotides. The graph suggests that the models trained on prokaryotic datasets get worse results than those from the eukaryotic datasets. The labels on the x-axis of the graph refer to Table 1. Although we tested eighteen different models, all the best models were the Light Gradient Boosting Machine.

3.3 Performance Comparisons

We utilized CNNProm [30] and MCC scores baseline for comparing the performance of the models under evaluation, given our belief that it represents the state-of-the-art solution for this problem. The corresponding results can be found in Table 3. The scores attributed to CNNProm are as reported by its authors. Columns labeled as “Single” denote the best-performing individual property model. The columns “Vote5”, “Vote10”, “Stack5”, and “Stack10” represent results from ensemble models that employed either the voting or stacking method, incorporating the top 5 or 10 properties, respectively.

The performance of the “Single” model demonstrated relatively lower average results when compared with other models. Interestingly, the outcomes derived from the ensemble models did not exhibit a significant discrepancy whether they incorporated the top 5 or 10 properties, insinuating that an increased number of properties does not substantially enhance the final model’s efficacy. Likewise, no notable divergence was observed between the “Vote” and “Stack” ensemble methodologies.

When comparing the investigated models to the baseline model CNNProm, we observed similar performance in 3 out of 7 datasets. Specifically, in the Arabidopsis_non_tata dataset, our model exhibited a marginally lower performance by \(1\%\) compared to CNNProm. Conversely, in the Arabidopsis_tata dataset, our model achieved a slightly higher performance by \(1\%\) in comparison. In addition, the results for the Mouse_non_tata dataset were equivalent to the baseline model. However, in the Bacillus subtilis, Escherichia coli s70 (procaryotes), and Human_non_tata datasets, our implementations did not yield comparable results to the baseline model.

4 Conclusions

This work presents a study of the use of the physicochemical properties of DNA as features in training machine-learning models. Using different metrics, we evaluated several models using seven datasets of procaryotic and eucaryotic organisms. Our study analyzed the prediction performance of individual properties models and ensemble models using the best properties, comparing them with the state-of-art method CNNProm for the evaluated datasets. We concluded that even though the models trained with the properties achieved competitive prediction results, they were unable to surpass the established baseline. So, it is necessary to evaluate new strategies to use these physicochemical properties values, like deriving other features through feature engineering or using deep learning models to automatically derive better features from them.

Notes

- 1.

- 2.

Official page: https://pycaret.gitbook.io/.

References

Arslan, H.: A new promoter prediction method using support vector machines. In: 2019 27th Signal Processing and Communications Applications Conference (SIU), pp. 1–4. IEEE (2019)

Bergstra, J., Bardenet, R., Bengio, Y., Kégl, B.: Algorithms for hyper-parameter optimization. In: Advances in Neural Information Processing Systems, vol. 24 (2011)

Bhandari, N., Khare, S., Walambe, R., Kotecha, K.: Comparison of machine learning and deep learning techniques in promoter prediction across diverse species. PeerJ Comput. Sci. 7, e365 (2021)

Breiman, L.: Random forests. Mach. Learn. 45, 5–32 (2001)

Cartharius, K., et al.: Matinspector and beyond: promoter analysis based on transcription factor binding sites. Bioinformatics 21(13), 2933–2942 (2005)

Chen, T., Guestrin, C.: XGBoost: a scalable tree boosting system. In: Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 785–794 (2016)

Chen, W., Lei, T.Y., Jin, D.C., Lin, H., Chou, K.C.: PSEKNC: a flexible web server for generating pseudo k-tuple nucleotide composition. Anal. Biochem. 456, 53–60 (2014)

Chevez-Guardado, R., Peña-Castillo, L.: Promotech: a general tool for bacterial promoter recognition. Genome Biol. 22, 1–16 (2021)

Cortes, C., Vapnik, V.: Support-vector networks. Mach. Learn. 20, 273–297 (1995)

Deaton, A.M., Bird, A.: CPG islands and the regulation of transcription. Genes Dev. 25(10), 1010–1022 (2011)

Dietterich, T.G.: Approximate statistical tests for comparing supervised classification learning algorithms. Neural Comput. 10(7), 1895–1923 (1998)

Dreos, R., Ambrosini, G., Cavin Périer, R., Bucher, P.: EPD and EPDNEW, high-quality promoter resources in the next-generation sequencing era. Nucleic Acids Res. 41(D1), D157–D164 (2013)

Efron, B.: Estimating the error rate of a prediction rule: improvement on cross-validation. J. Am. Stat. Assoc. 78(382), 316–331 (1983)

Freund, Y., Schapire, R.E.: A decision-theoretic generalization of on-line learning and an application to boosting. J. Comput. Syst. Sci. 55(1), 119–139 (1997)

Friedman, J.H.: Greedy function approximation: a gradient boosting machine. Ann. Stat. 1189–1232 (2001)

Gama-Castro, S., et al.: Regulondb version 9.0: high-level integration of gene regulation, coexpression, motif clustering and beyond. Nucleic Acids Res. 44(D1), D133–D143 (2016)

Goñi, J.R., Pérez, A., Torrents, D., Orozco, M.: Determining promoter location based on DNA structure first-principles calculations. Genome Biol. 8(12), R263 (2007)

Hochreiter, S., Schmidhuber, J.: Long short-term memory. Neural Comput. 9(8), 1735–1780 (1997)

Ishii, T., Yoshida, K.i., Terai, G., Fujita, Y., Nakai, K.: DBTBS: a database of bacillus subtilis promoters and transcription factors. Nucleic Acids Res. 29(1), 278–280 (2001)

Juven-Gershon, T., Kadonaga, J.T.: Regulation of gene expression via the core promoter and the basal transcriptional machinery. Dev. Biol. 339(2), 225–229 (2010)

Ke, G., et al.: LightGBM: a highly efficient gradient boosting decision tree. In: Advances in Neural Information Processing Systems, vol. 30 (2017)

Kotsiantis, S., Kanellopoulos, D., Pintelas, P., et al.: Handling imbalanced datasets: a review. GESTS Int. Trans. Comput. Sci. Eng. 30(1), 25–36 (2006)

Kuncheva, L.I.: Combining pattern classifiers: methods and algorithms. John Wiley & Sons (2014)

LeCun, Y., Bottou, L., Bengio, Y., Haffner, P.: Gradient-based learning applied to document recognition. Proc. IEEE 86(11), 2278–2324 (1998)

Li, F., et al.: Computational prediction and interpretation of both general and specific types of promoters in Escherichia coli by exploiting a stacked ensemble-learning framework. Brief. Bioinform. 22(2), 2126–2140 (2021)

Meng, H., Ma, Y., Mai, G., Wang, Y., Liu, C.: Construction of precise support vector machine based models for predicting promoter strength. Quant. Biol. 5, 90–98 (2017)

Moraes, L., Silva, P., Luz, E., Moreira, G.: CapsProm: a capsule network for promoter prediction. Comput. Biol. Med. 147, 105627 (2022)

Pedersen, A.G., Baldi, P., Chauvin, Y., Brunak, S.: The biology of eukaryotic promoter prediction-a review. Comput. Chem. 23(3–4), 191–207 (1999)

Sabour, S., Frosst, N., Hinton, G.E.: Dynamic routing between capsules. In: Advances in Neural Information Processing Systems, vol. 30 (2017)

Umarov, R.K., Solovyev, V.V.: Recognition of prokaryotic and eukaryotic promoters using convolutional deep learning neural networks. PLoS ONE 12(2), e0171410 (2017)

Wasserman, W.W., Sandelin, A.: Applied bioinformatics for the identification of regulatory elements. Nat. Rev. Genet. 5(4), 276–287 (2004)

Wolpert, D.H.: Stacked generalization. Neural Netw. 5(2), 241–259 (1992)

Zeng, J., Zhu, S., Yan, H.: Towards accurate human promoter recognition: a review of currently used sequence features and classification methods. Brief. Bioinform. 10(5), 498–508 (2009)

Zhang, M., et al.: Critical assessment of computational tools for prokaryotic and eukaryotic promoter prediction. Brief. Bioinform. 23(2) (2022)

Zhu, Q.: On the performance of Matthews correlation coefficient (MCC) for imbalanced dataset. Pattern Recogn. Lett. 136, 71–80 (2020)

Acknowledgment

The authors would also like to thank the Coordenação de Aperfeiçoamento de Pessoal de Nível Superior - Brazil (CAPES) - Finance Code 001, Fundacão de Amparo à Pesquisa do Estado de Minas Gerais (FAPEMIG, grants APQ-01518-21, APQ-01647-22), Conselho Nacional de Desenvolvimento Científico e Tecnológico (CNPq, grants 307151/2022-0, 308400/2022-4) and Universidade Federal de Ouro Preto (PROPPI/UFOP) for supporting the development of this study. We want to express our gratitude for the collaboration of the Laboratório Multiusuários de Bioinformática of Núcleo de Pesquisas em Ciências Biológicas (NUPEB/UFOP).

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Moraes, L., Luz, E., Moreira, G. (2023). Physicochemical Properties for Promoter Classification. In: Naldi, M.C., Bianchi, R.A.C. (eds) Intelligent Systems. BRACIS 2023. Lecture Notes in Computer Science(), vol 14196. Springer, Cham. https://doi.org/10.1007/978-3-031-45389-2_25

Download citation

DOI: https://doi.org/10.1007/978-3-031-45389-2_25

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-45388-5

Online ISBN: 978-3-031-45389-2

eBook Packages: Computer ScienceComputer Science (R0)