Abstract

Statistical tests of hypothesis play a crucial role in evaluating the performance of machine learning (ML) models and selecting the best model among a set of candidates. However, their effectiveness in selecting models over larger periods of time remains unclear. This study aims to investigate the impact of statistical tests on ML model selection in sequential experiments. Specifically, we examine whether selecting models based on statistical tests leads to higher quality models after a significant number of iterations and explore the effect of the number of tests performed and the preferred statistical test for different experimental time horizons.

The study on binary classification problems reveals that the use of statistical tests should be approached with caution, particularly in challenging scenarios where generating improved models is difficult. The analysis demonstrates that statistical tests may impede progress and impose overly stringent acceptance criteria for new models, hindering the selection of high-quality models. The findings also indicate that the dominance of versions without statistical tests remained consistent, suggesting the need for further research in this area.

Although this study is limited by the number of datasets and the absence of pre-test assumption verification, it emphasizes the importance of understanding the impact of statistical tests on ML model selection.

1 Introduction

In recent years, machine learning (ML) has become a popular tool for data analysis in various fields such as finance [1], healthcare [2], and marketing [8]. The ultimate goal of machine learning is to build predictive models that can accurately predict the target variable based on the input features.

Choosing the best model among a set of candidate models, however, can be a daunting task. One approach is to use statistical tests of hypothesis to compare the performance of different models [4].

The statistical tests of hypothesis are widely used in the scientific community to evaluate the significance of a result or to compare the performance of different methods [7]. In machine learning, statistical tests are used to determine whether there is a significant difference between the performance of two or more models.

The use of statistical tests in ML model selection has become an important topic of research due to its impact on the performance of the final model [3,4,5, 14, 17]. The use of Bayesian statistics is studied in [3, 5], while the use of frequentist tests is the focus of [4, 14, 17]. While these studies investigate the ability of statistical tests to choose the best model in a single experiment, this study aims to understand their effectiveness when they are applied over larger periods of time. More specifically, this study investigates whether the statistical tests commonly used for selecting the best ML model in a single experiment remain effective when applied in sequences of experiments. To achieve this, using a set of binary classification problems, the quality of the models selected by each test is examined after a significant number of iterations. Other parameters, such as the number of iterations and the amount of data collected to feed the statistical tests, are also investigated. In this context, the research questions investigated in this study can be laid down as follows:

1. Does the selection of machine learning models based on statistical tests of hypothesis lead to higher quality models after a large number of iterations?

2. What is the effect of the number of tests performed to acquire data for these statistical tests of hypothesis?

3. Is there a preferred statistical test for different experimental time horizons?

The rest of the paper is organized as follows. In Sect. 2, we will provide a brief overview of statistical tests of hypothesis and their application in machine learning. In Sects. 3 and 4, we will describe the experimental setup and datasets used in this study. In Sect. 5, we will present and analyze the results of our experiments. Finally, in Sect. 6, we will conclude the paper with a discussion of the implications of our findings and future research directions.

2 Statistical Hypothesis Testing

In this section, we introduce the hypothesis tests used in this study. Since our objective is to compare two groups (i.e., two algorithms), which is the simplest scenario, we selected tests designed for this purpose. We chose the t-test as a representative parametric test and the Mann-Whitney U test as the non-parametric option.

2.1 Paired T-Test for Two Related Samples

A paired t-test is a statistical test used to determine whether there is a significant difference between the means of two related samples [11]. Each individual in one sample is paired with an individual in the other sample based on some common characteristic, such as before-and-after measurements of the same individual, or measurements from two different methods applied to the same subjects. It assumes that the differences between the paired observations are approximately normally distributed.

The null hypothesis of the paired t-test is that there is no difference between the means of the two samples. The p-value returned by the test is the probability of obtaining a statistic at least as extreme as the one observed, assuming that the null hypothesis is true. If it is less than a predefined significance level (e.g., 0.05), the null hypothesis can be rejected, and it is usually concluded that there is a significant difference between the means of the two samples.

The scipy.stats.ttest_rel function is the method in the SciPy library [16] that performs a paired t-test for two related samples. It can be used in machine learning to compare the performance of two models on the same dataset, which is useful in model selection and helps to identify the best performing model for a given task. The test statistic t is computed as follows:

\[ t = \frac{\overline{X}_D - \mu _0}{s_D / \sqrt{n}} \]

where \(\overline{X}_D\) and \(s_D\) are the average and the standard deviation of the differences between all pairs, and \(\mu _0\) is the true mean of the difference under the null hypothesis. It is zero if we want to test whether the average of the difference is significant. The number of pairs is represented by n, which is also used to calculate the degrees of freedom as \(n-1\).
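As a quick check of the statistic described above, the following sketch computes t by hand with \(\mu _0 = 0\) and compares it with the value returned by scipy.stats.ttest_rel. The per-fold scores are made-up numbers used only for illustration; they are not results from this study.

```python
import numpy as np
from scipy import stats

# Hypothetical per-fold F1 scores of two models evaluated on the same folds.
scores_a = np.array([0.81, 0.79, 0.84, 0.80, 0.83, 0.78, 0.82, 0.85, 0.80, 0.81])
scores_b = np.array([0.78, 0.77, 0.83, 0.79, 0.80, 0.78, 0.79, 0.82, 0.79, 0.80])

diff = scores_a - scores_b
n = diff.size

# t = (mean of the differences - mu_0) / (s_D / sqrt(n)), with mu_0 = 0.
t_manual = diff.mean() / (diff.std(ddof=1) / np.sqrt(n))
# Two-sided p-value from Student's t distribution with n - 1 degrees of freedom.
p_manual = 2 * stats.t.sf(abs(t_manual), df=n - 1)

t_scipy, p_scipy = stats.ttest_rel(scores_a, scores_b)
print(f"manual: t = {t_manual:.4f}, p = {p_manual:.4f}")
print(f"scipy : t = {t_scipy:.4f}, p = {p_scipy:.4f}")
```

Both lines should report the same values, since ttest_rel performs a two-sided test by default.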

2.2 Independent T-Test for Two Independent Samples

An independent t-test is a statistical test used to determine whether there is a significant difference between the means of two independent samples.

The scipy.stats.ttest_ind function is a method in the SciPy library [16] that performs an independent t-test for two independent samples. It assumes that the two samples are normally distributed and have equal variances.

The null hypothesis of the independent t-test is that there is no difference between the means of the two samples [11]. If the p-value returned by the ttest_ind function is less than a predefined significance level (e.g., 0.05), the null hypothesis can be rejected, and it can be concluded that there is a significant difference between the means of the two samples. The test statistic t is computed as:

\[ t = \frac{\overline{X}_1 - \overline{X}_2}{s_p \sqrt{\frac{1}{n_1} + \frac{1}{n_2}}} \]

where

\[ s_p = \sqrt{\frac{(n_1 - 1) s_1^2 + (n_2 - 1) s_2^2}{n_1 + n_2 - 2}} \]

Here \(\overline{X}_i\), \(s_i\) and \(n_i\) are the mean, the standard deviation and the size of sample i, and \(s_p\) is the pooled standard deviation of the two samples. It is defined in this way so that its square is an unbiased estimator of the common variance. For more details, see [12].
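The pooled-variance statistic can be reproduced in a few lines. The sketch below uses the standard equal-variance (Student) form of the two-sample t statistic and checks it against scipy.stats.ttest_ind with equal_var=True. The score arrays are made-up values, deliberately of different sizes.

```python
import numpy as np
from scipy import stats

# Hypothetical scores from two independent sets of runs (different sizes).
scores_a = np.array([0.81, 0.79, 0.84, 0.80, 0.83, 0.78, 0.82, 0.85, 0.80, 0.81])
scores_b = np.array([0.78, 0.77, 0.83, 0.79, 0.80, 0.76, 0.79, 0.82])

n1, n2 = scores_a.size, scores_b.size
var1, var2 = scores_a.var(ddof=1), scores_b.var(ddof=1)

# Pooled standard deviation: its square is an unbiased estimator of the
# common variance when both samples share the same variance.
s_p = np.sqrt(((n1 - 1) * var1 + (n2 - 1) * var2) / (n1 + n2 - 2))
t_manual = (scores_a.mean() - scores_b.mean()) / (s_p * np.sqrt(1 / n1 + 1 / n2))
p_manual = 2 * stats.t.sf(abs(t_manual), df=n1 + n2 - 2)

t_scipy, p_scipy = stats.ttest_ind(scores_a, scores_b, equal_var=True)
print(f"manual: t = {t_manual:.4f}, p = {p_manual:.4f}")
print(f"scipy : t = {t_scipy:.4f}, p = {p_scipy:.4f}")
```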

2.3 Mann-Whitney U Test

The Mann-Whitney U test is a non-parametric statistical test used to determine whether there is a significant difference between the distributions of two independent samples.

The scipy.stats.mannwhitneyu function is a method in the SciPy library [16] that performs the Mann-Whitney U test, also known as the Wilcoxon rank-sum test.

A very general formulation is to assume that:

- All the observations from both groups are independent of each other,

- The responses are at least ordinal (i.e., one can at least say, of any two observations, which is the greater),

- Under the null hypothesis \(H_0\), the distributions of both populations are identical.

- The alternative hypothesis \(H_1\) is that the distributions are not identical.

The function takes two arrays as input, which may have different sizes. The arrays represent the two samples that are being compared. The function returns two values: the calculated U statistic and the associated p-value. The U statistic is a measure of the difference between the ranks of the two samples.

The null hypothesis of the Mann-Whitney U test is that there is no difference between the distributions of the two samples. If the p-value returned by the scipy.stats.mannwhitneyu function is less than a predefined significance level (e.g., 0.05), the null hypothesis can be rejected, and it can be concluded that there is a significant difference between the distributions of the two samples.

The Mann-Whitney U test is commonly used in machine learning to compare the performance of two models, especially when the assumptions of the t-test (such as normality and equal variances) are not met.

In detail, let \(X_1, \cdots , X_n\) be an independent and identically distributed (i.i.d.) sample from X and \(Y_1, \cdots , Y_m\) an i.i.d. sample from Y, with both samples independent of each other. The corresponding Mann-Whitney U statistic is defined as:

\[ U = \sum _{i=1}^{n} \sum _{j=1}^{m} S(X_i, Y_j) \]

with

\[ S(X, Y) = \begin{cases} 1, & \text{if } X > Y, \\ \frac{1}{2}, & \text{if } X = Y, \\ 0, & \text{if } X < Y. \end{cases} \]

For large samples, assign a numeric rank to all the observations, regardless of the group they belong to. Then, U is given by \(\min (U_1, U_2)\), where \(U_1 = n_1 n_2 + \frac{n_1(n_1 + 1)}{2} - R_1\) and \(U_2 = n_1 n_2 + \frac{n_2(n_2 + 1)}{2} - R_2\). \(R_i\) is the sum of the ranks of the observations in group i and \(n_i\) is the sample size of group i (here \(n_1 = n\) and \(n_2 = m\)).
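The two ways of obtaining U can be compared numerically. The sketch below counts pairwise wins and ties directly, then recomputes the statistic from rank sums using the expressions for \(U_1\) and \(U_2\) given above, and finally calls scipy.stats.mannwhitneyu. The samples are made-up values. Because the value SciPy reports refers to one of the two samples, the comparison is made on \(\min (U_1, U_2)\).

```python
import numpy as np
from scipy import stats

# Hypothetical samples of different sizes (ties included on purpose).
x = np.array([0.81, 0.79, 0.84, 0.80, 0.83, 0.78])
y = np.array([0.78, 0.77, 0.83, 0.79, 0.80, 0.76, 0.75])
n1, n2 = x.size, y.size

# Pairwise definition: each (x_i, y_j) pair scores 1 if x_i > y_j, 1/2 on a tie.
diff = x[:, None] - y[None, :]
u_pairs = np.sum(diff > 0) + 0.5 * np.sum(diff == 0)

# Rank-based definition: rank the pooled observations (ties get average ranks).
ranks = stats.rankdata(np.concatenate([x, y]))
r1, r2 = ranks[:n1].sum(), ranks[n1:].sum()
u1 = n1 * n2 + n1 * (n1 + 1) / 2 - r1
u2 = n1 * n2 + n2 * (n2 + 1) / 2 - r2
u = min(u1, u2)

result = stats.mannwhitneyu(x, y, alternative="two-sided")
u_scipy = min(result.statistic, n1 * n2 - result.statistic)
print(f"pairwise U = {u_pairs}, U1 = {u1}, U2 = {u2}, min = {u}")
print(f"scipy min(U) = {u_scipy}, p = {result.pvalue:.4f}")
```

With these definitions the pairwise count coincides with \(U_2\), and \(U_1 + U_2 = n_1 n_2\) always holds.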

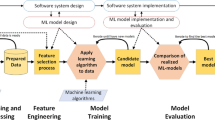

3 The Test Bed

To evaluate the impact of using the aforementioned statistical tests for model selection over time, a simplified evolutionary algorithm is defined. This algorithm operates on a population of n model types, each having a set of associated hyper-parameters. The model with the highest performance, according to metric M, is designated as the best model.

During each generation, a random model type is selected and its parameters undergo random mutations. The mutated model then competes with the model of the same type in the population. If a statistical test reveals a significant difference in performance at a significance level of \(\alpha = 0.05\), the hyper-parameters of the model in the population are updated. In such cases, the mutated model also competes against the current best model using the same process.

Ultimately, the method returns the best model obtained through this evolutionary process. A pseudocode for the method is given in Algorithm 1.
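The loop described in this section can be sketched as follows. This is a simplified reading of the description, not a reproduction of Algorithm 1: the function names, the toy "models" (each a single numeric hyper-parameter with a noisy score), and the extra requirement that an accepted candidate must also have the higher mean are all assumptions made for illustration.

```python
import random
from statistics import mean
from scipy import stats

ALPHA = 0.05

def significantly_better(new_scores, old_scores, alpha=ALPHA):
    """Acceptance rule based on a paired t-test (any other test could be plugged in)."""
    _, p_value = stats.ttest_rel(new_scores, old_scores)
    # Assumption: besides significance, the candidate must have the higher mean.
    return p_value < alpha and mean(new_scores) > mean(old_scores)

def evolve(population, evaluate, mutate, generations, rng, accept=significantly_better):
    """population maps each model type to its current hyper-parameters."""
    scores = {name: evaluate(name, params) for name, params in population.items()}
    best_name = max(scores, key=lambda name: mean(scores[name]))
    best = (best_name, population[best_name], scores[best_name])
    history = [mean(best[2])]
    for _ in range(generations):
        name = rng.choice(sorted(population))        # pick a random model type
        candidate = mutate(population[name], rng)    # randomly mutate its parameters
        candidate_scores = evaluate(name, candidate)
        if accept(candidate_scores, scores[name]):   # compete with the same-type model
            population[name], scores[name] = candidate, candidate_scores
            if accept(candidate_scores, best[2]):    # then challenge the best model
                best = (name, candidate, candidate_scores)
        history.append(mean(best[2]))
    return best, history

# Toy stand-ins (hypothetical): the score of each model type peaks at its own optimum.
rng = random.Random(0)
OPTIMA = {"model_a": 3.0, "model_b": -1.0}

def toy_evaluate(name, x, k=10):
    return [1.0 - 0.01 * (x - OPTIMA[name]) ** 2 + rng.gauss(0, 0.005) for _ in range(k)]

def toy_mutate(x, rng):
    return x + rng.gauss(0, 0.5)

start = {"model_a": 0.0, "model_b": 2.0}
(best_name, best_x, best_scores), history = evolve(
    dict(start), toy_evaluate, toy_mutate, generations=200, rng=rng
)
print(best_name, round(best_x, 2), round(history[0], 3), round(history[-1], 3))
```

Passing a different accept function, for example one that only compares means, gives the baseline without a statistical test.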

4 Experimental Setup

4.1 Datasets

Four binary classification problems defined over four datasets from the UCI repository [6] were selected for analysis. They are described below:

1. The Banking Dataset - Marketing Targets [13] (Banking) represents customer data in the banking industry for predicting conversion. It features a large sample size (\(n \approx 45000\)) and includes seventeen predictors, consisting of seven numeric and nine categorical variables (three of which are binary).

2. The Default of Credit Card Clients Dataset [18] (Credit) contains information about default payments of credit card clients in Taiwan from 2005. With a substantial sample size (\(n \approx 30000\)), it comprises 24 predictors, comprising fifteen numeric and nine categorical variables.

3. The Heart Disease Dataset [10] (Heart) integrates information from four databases: Cleveland, Hungary, Switzerland, and Long Beach V. It encompasses 76 attributes, but published experiments focus on a subset of 14 attributes. The “target” field denotes the presence of heart disease in patients. The dataset has a sample size of \(n = 303\) and includes thirteen attributes, of which five are numeric and eight are categorical.

4. The Spambase Data Set [9] (Spambase) consists of a sample of \(n = 4601\) emails classified as either “spam” or “non-spam.” It contains fifty-seven numeric predictors, including metrics such as capital character frequency and the percentage of matching words.

The datasets are preprocessed by converting categorical variables to numerical values using LabelEncoder and normalizing the feature values using preprocessing.normalize from scikit-learn. The preprocessed data is then split into training and testing sets using train_test_split from scikit-learn.
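A minimal sketch of this preprocessing pipeline is given below. The small table, its column names, the test fraction and the random seed are hypothetical, since the text does not specify them. Note that preprocessing.normalize, with its default arguments, rescales each row (sample) to unit L2 norm.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelEncoder, normalize

# Tiny made-up table standing in for one of the datasets.
df = pd.DataFrame({
    "age": [25, 41, 37, 52, 29, 60, 33, 48],
    "balance": [1200.0, 300.0, 0.0, 5400.0, 150.0, 980.0, 2100.0, 75.0],
    "job": ["admin", "technician", "admin", "retired",
            "student", "retired", "technician", "admin"],
    "target": ["no", "yes", "no", "yes", "no", "yes", "no", "no"],
})

# Encode each categorical column as integers, one LabelEncoder per column.
for column in ["job", "target"]:
    df[column] = LabelEncoder().fit_transform(df[column])

features = df.drop(columns="target").to_numpy(dtype=float)
X = normalize(features)          # default: each row scaled to unit L2 norm
y = df["target"].to_numpy()
row_norms = np.linalg.norm(X, axis=1)

# Assumed split: 25% of the rows held out for testing.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)
print(X_train.shape, X_test.shape)
```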

4.2 Models and Hyper-parameters

The following models were chosen for the study:

- Random Forest Classifier

- K-Nearest Neighbors Classifier

- Decision Tree Classifier

- XGBoost Classifier

The sampling functions used in the hyper-parameter mutation phase are denoted by:

- \(\mathcal {S}_{[\mu _1,\mu _2,\min ]}\): Samples from a Skellam distribution defined by \(\mu _1\) and \(\mu _2\) and centered on the current value of the hyper-parameter. The sample is truncated to \(\min \) if its value is below \(\min \).

- \(\mathcal {N}_{[\sigma ,\min ,\max ]}\): Samples from a Normal distribution with \(\mu \) set to the current value of the hyper-parameter and standard deviation \(\sigma \). The sample is truncated to \(\min \) if its value is below \(\min \), or to \(\max \) if its value is above \(\max \).

- \(\mathcal {U}_{[v_1,v_2,...,v_k]}\): Samples one of the values \(v_1,v_2,...,v_k\) using a uniform distribution.
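The three sampling functions can be sketched with SciPy and NumPy as follows. The function names and the example starting values are hypothetical, and the Skellam sample is centered on the current value by passing it as the loc argument, which is one possible reading of the description above.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)

def sample_skellam(current, mu1, mu2, minimum):
    """S_[mu1, mu2, min]: Skellam noise shifted to the current value, floored at min."""
    value = stats.skellam.rvs(mu1, mu2, loc=current, random_state=rng)
    return max(int(value), minimum)

def sample_normal(current, sigma, minimum, maximum):
    """N_[sigma, min, max]: Normal centered on the current value, clipped to [min, max]."""
    value = rng.normal(loc=current, scale=sigma)
    return float(np.clip(value, minimum, maximum))

def sample_uniform(values):
    """U_[v1, ..., vk]: pick one of the listed values with equal probability."""
    return values[rng.integers(len(values))]

# Example mutations (the starting values 100 and 0.8 are made up).
n_estimators = sample_skellam(100, 10, 10, 3)      # e.g. S_[10,10,3]
subsample = sample_normal(0.8, 0.3, 0.01, 1.0)     # e.g. N_[0.3, 10^-2, 1]
max_features = sample_uniform(["sqrt", "log2", None])
print(n_estimators, round(subsample, 3), max_features)
```

With \(\mu _1 = \mu _2\) the Skellam distribution is symmetric around zero, so the mutated value is equally likely to move up or down before truncation.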

The hyper-parameters varied in each model and the related sampling functions are given below:

- Random Forest Classifier:
  - Number of estimators: \(\mathcal {S}_{[10,10,3]}\)
  - Maximum depth: \(\mathcal {S}_{[2,2,2]}\)
  - Maximum number of features: \(\mathcal {U}_{['sqrt', 'log2', None]}\)

- K-Nearest Neighbors Classifier:
  - Number of neighbors: \(\mathcal {S}_{[1,1,3]}\)
  - Weights: \(\mathcal {U}_{['uniform', 'distance']}\)

- Decision Tree Classifier:
  - Maximum depth: \(\mathcal {S}_{[1,1,2]}\)
  - Maximum number of features: \(\mathcal {U}_{['sqrt', 'log2', None]}\)
  - Criterion: \(\mathcal {U}_{['gini', 'entropy', 'log\_loss']}\)

- XGBoost Classifier:
  - Tree method: \(\mathcal {U}_{['auto', 'exact', 'approx']}\)
  - Maximum depth: \(\mathcal {S}_{[1,1,1]}\)
  - Booster: \(\mathcal {U}_{['gbtree', 'dart']}\)
  - Number of estimators: \(\mathcal {S}_{[1,1,1]}\)
  - Subsample: \(\mathcal {N}_{[0.3,10^{-2},1]}\)

If no sampling function is defined, the mutation selects a random element from the set of possible values. Otherwise, it randomly samples from the defined distribution.

The F1 score [15] is used as the evaluation metric for the classification models. The F1 metric is a particular case of a more general measure, \(F_{\beta }\), which weights precision against recall. It is recommended for unbalanced data, where relying on only one of these two measures can give misleading results.
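To make the relation between \(F_{\beta }\) and F1 concrete, the sketch below computes \(F_{\beta } = (1 + \beta ^2) \cdot P \cdot R / (\beta ^2 P + R)\) from precision P and recall R and compares it with the scikit-learn implementations. The labels and predictions are made up for illustration.

```python
from sklearn.metrics import f1_score, fbeta_score, precision_score, recall_score

# Hypothetical true labels and predictions for a binary problem.
y_true = [1, 0, 1, 1, 0, 0, 1, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0, 1, 0]

def f_beta(precision, recall, beta=1.0):
    """General F-measure; beta = 1 gives the F1 score (harmonic mean of P and R)."""
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)

precision = precision_score(y_true, y_pred)
recall = recall_score(y_true, y_pred)

f1_manual = f_beta(precision, recall)            # beta = 1
f2_manual = f_beta(precision, recall, beta=2.0)  # beta = 2 weights recall more heavily

print(f"precision = {precision:.3f}, recall = {recall:.3f}")
print(f"F1: manual = {f1_manual:.3f}, sklearn = {f1_score(y_true, y_pred):.3f}")
print(f"F2: manual = {f2_manual:.3f}, sklearn = {fbeta_score(y_true, y_pred, beta=2.0):.3f}")
```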

4.3 Statistical Tests

The statistical tests used by Algorithm 1 to compare the performance of different hyper-parameters are:

1. Paired t-test for two related samples (ttest_rel)

2. Two-sample t-test (ttest_ind)

3. Mann-Whitney U test (mannwhitneyu)

They are performed on the set of evaluation metrics collected using k-fold cross-validation. As a baseline, Algorithm 1 was also run without any statistical test. In this case, it simply selects the model with the best average performance as given by the cross-validation procedure. This procedure will be called dummy_stats_test for the rest of the text.
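The sketch below illustrates how the three tests and the baseline rule can be applied to the same set of cross-validation scores. The synthetic dataset, the two decision trees being compared, and the variable names are assumptions made for the example. The sketch only shows how the decision rules are evaluated, not the outcome reported in this study.

```python
from scipy import stats
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

ALPHA = 0.05

# Synthetic binary classification problem (stand-in for a real dataset).
X, y = make_classification(n_samples=500, n_features=10, random_state=0)

def fold_scores(max_depth, k=10):
    """F1 score on each of the k cross-validation folds."""
    model = DecisionTreeClassifier(max_depth=max_depth, random_state=0)
    return cross_val_score(model, X, y, cv=k, scoring="f1")

incumbent = fold_scores(max_depth=1)
candidate = fold_scores(max_depth=5)

p_values = {
    "ttest_rel": stats.ttest_rel(candidate, incumbent).pvalue,
    "ttest_ind": stats.ttest_ind(candidate, incumbent).pvalue,
    "mannwhitneyu": stats.mannwhitneyu(
        candidate, incumbent, alternative="two-sided"
    ).pvalue,
}

# Baseline without a statistical test: compare the average scores only.
dummy_accepts = bool(candidate.mean() > incumbent.mean())

for name, p in p_values.items():
    print(f"{name}: p = {p:.4f}, significant = {p < ALPHA}")
print(f"dummy_stats_test: accepts candidate = {dummy_accepts}")
```

Because cross_val_score uses the same deterministic stratified folds for both models here, the fold scores are paired, which is what ttest_rel expects.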

4.4 Experimental Procedure

Algorithm 1 was executed 10 independent times for each combination of the following factor levels:

- Datasets: [Banking, Credit, Heart, Spambase]

- Statistical Tests: [Paired t-test for two related samples (ttest_rel), Two-sample t-test (ttest_ind), Mann-Whitney U test (mannwhitneyu), Dummy (dummy_stats_test)]

- K folds: [10, 30, 50]

- Number of generations: [25, 100, 1000]
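The size of this full factorial design follows directly from the factor levels listed above. The short sketch below enumerates the combinations; the variable names are illustrative.

```python
from itertools import product

datasets = ["Banking", "Credit", "Heart", "Spambase"]
tests = ["ttest_rel", "ttest_ind", "mannwhitneyu", "dummy_stats_test"]
k_folds = [10, 30, 50]
generations = [25, 100, 1000]
n_repetitions = 10  # independent executions per combination

# Every combination of dataset, test, number of folds and number of generations.
configurations = list(product(datasets, tests, k_folds, generations))
total_runs = len(configurations) * n_repetitions

print(len(configurations), total_runs)  # 144 1440
```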

5 Results

This section summarizes the results obtained using Algorithm 1 for all tested conditions related to the research questions in Sect. 1. The results are presented as the percentage improvement in performance between the final best model (\(F1_{final}\)) and the initial best model (\(F1_0\)), which was computed by the equation below:

\[ \%improvement = 100 \times \frac{F1_{final} - F1_0}{F1_0} \]
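As a small worked example, the function below computes a percentage improvement under the assumption that it is measured relative to the initial score, i.e. \(100 \times (F1_{final} - F1_0) / F1_0\). The function name and the input values are hypothetical.

```python
def pct_improvement(f1_initial, f1_final):
    """Relative improvement of the final F1 score over the initial one, in percent."""
    return 100.0 * (f1_final - f1_initial) / f1_initial

# Made-up scores: an initial best model at 0.80 and a final best model at 0.84.
example = pct_improvement(0.80, 0.84)
print(f"{example:.1f}%")
```

Under this assumption, a model that moves from 0.80 to 0.84 shows an improvement of about 5%.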

5.1 Performance over Datasets

Figure 1 shows the box-plots for the \(\%improvement\) obtained with Algorithm 1 combining all the possible configurations. It can be seen that the proposed procedure was more successful for the Spambase dataset with an average improvement around 7% reaching 17.5% improvement in some scenarios. Conversely, the improvements obtained for the other datasets were more modest with averages around 3%, 1.7% and 2.6% for the Banking, Credit and Heart datasets, respectively.

5.2 Performance of the Statistical Tests Varying Number of Folds

Figure 2 shows the box-plots for the \(\%improvement\) obtained with Algorithm 1 when we vary the applied statistical test and the number of folds used in the cross-validation procedure. It can be seen that, in this scenario where the dataset effect is confounded, increasing the number of folds had no effect on the \(\%improvement\).

Figure 3 presents the factors from Fig. 2 for each dataset, separately. Looking at the Spambase results, the dataset where Algorithm 1 performed the best, it can be seen that, even though there is still no significant difference in the average \(\%improvement\), increasing the number of folds contributed to reducing the variance of the results for the parametric tests. This phenomenon, however, could not be observed in the overall results, possibly because of the difficulty Algorithm 1 had in generating improved models.

5.3 Performance of the Statistical Tests Varying the Maximum Number of Generations

Figure 4 displays the performance of Algorithm 1 with regard to the \(\%improvement\) when varying the statistical test and the maximum number of generations (iterations) allowed. As expected, when the number of generations increases, the \(\%improvement\) also increases. These overall results do not show an interaction between the statistical test and the number of iterations, since the difference in performance among the different statistical tests and the dummy test remains almost constant as the number of generations increases.

Figure 5 displays the performance of Algorithm 1 with regard to the \(\%improvement\) for each dataset. For the Heart, Banking and Credit datasets, even for a small number of iterations (25 or 100), using no statistical test (dummy) led to better average performance of Algorithm 1. Among the versions with an actual statistical test, the paired t-test for related samples (ttest_rel) led to better or almost equal results on average.

For Spambase, where it was easy to generate improved models, the difference between the dummy and the other versions only shows at the limit of 1000 generations.

Overall, these results suggest that in a challenging scenario where generating improved models is difficult, the use of statistical tests may hinder progress by imposing an excessively stringent standard for accepting new models.

6 Conclusion

This study investigates the effectiveness of statistical tests commonly used for selecting the best machine learning (ML) model when applied over larger periods of time. The study aims to understand whether a selection procedure based on statistical tests leads to higher quality models after a significant number of iterations. Additionally, the impact of the number of tests (number of folds in cross-validation) performed to acquire data for these statistical tests and the preferred statistical test for different experimental time horizons are examined.

In summary, the analysis suggests that the use of statistical tests in ML model selection should be carefully considered, especially in challenging scenarios, as they may hinder progress and impose overly stringent criteria for accepting new models.

Although the number of datasets in this study is limited, and the proposed procedure did not verify the assumptions of the applied tests beforehand, it is noteworthy that the dominance of the versions without statistical tests remained consistent. This highlights the importance of conducting further research and exploration in this area. The use of statistical tests of hypothesis is fundamental in many scientific fields, and it is crucial to better understand their impact on the selection of machine learning models.

References

1. Aygun, B., Gunay, E.K.: Comparison of statistical and machine learning algorithms for forecasting daily bitcoin returns. Avrupa Bilim ve Teknoloji Dergisi (21), 444–454 (2021)

2. Bao, D., et al.: Discriminating between p16-negative oropharyngeal and non-oropharyngeal origins by their metastatic lymph nodes using machine learning approach based on MRI radiomics (2022)

3. Benavoli, A., Corani, G., Demšar, J., Zaffalon, M.: Time for a change: a tutorial for comparing multiple classifiers through bayesian analysis. J. Mach. Learn. Res. 18(77), 1–36 (2017). http://jmlr.org/papers/v18/16-305.html

4. Bender, A., Schneider, N., Segler, M., Patrick Walters, W., Engkvist, O., Rodrigues, T.: Evaluation guidelines for machine learning tools in the chemical sciences. Nat. Rev. Chem. 6(6), 428–442 (2022)

5. Corani, G., Benavoli, A.: A bayesian approach for comparing cross-validated algorithms on multiple data sets. Mach. Learn. 100(2–3), 285–304 (2015)

6. Dua, D., Graff, C.: UCI machine learning repository (2017). http://archive.ics.uci.edu/ml

7. Fagerland, M.W.: t-tests, non-parametric tests, and large studies-a paradox of statistical practice? BMC Med. Res. Methodol. 12(1), 1–7 (2012)

8. Hair, J.F., Jr., Sarstedt, M.: Data, measurement, and causal inferences in machine learning: opportunities and challenges for marketing. J. Market. Theory Practice 29(1), 65–77 (2021)

9. Hopkins, M., Reeber, E., Forman, G., Suermondt, J.: Spambase. UCI Machine Learning Repository (1999). https://doi.org/10.24432/C53G6X

10. Janosi, A., Steinbrunn, W., Pfisterer, M., Detrano, R., M.D., M.: Heart Disease. UCI Machine Learning Repository (1988). https://doi.org/10.24432/C52P4X

11. Kim, T.K.: T test as a parametric statistic. Korean J. Anesthesiol. 68(6), 540–546 (2015)

12. Morettin, P.A., Bussab, W.O.: Estatística básica. Saraiva Educação SA (2017)

13. Moro, S., Rita, P., Cortez, P.: Bank Marketing. UCI Machine Learning Repository (2012). https://doi.org/10.24432/C5K306

14. Trawiński, B., Smetek, M., Telec, Z., Lasota, T.: Nonparametric statistical analysis for multiple comparison of machine learning regression algorithms. Int. J. Appl. Math. Comput. Sci. 22(4), 867–881 (2012)

15. Van Rijsbergen, C.J.: Information retrieval (1979)

16. Virtanen, P., et al.: SciPy 1.0 Contributors: SciPy 1.0: fundamental algorithms for scientific computing in python. Nature Methods 17, 261–272 (2020). https://doi.org/10.1038/s41592-019-0686-2

17. Wong, T.T., Yeh, P.Y.: Reliable accuracy estimates from k-fold cross validation. IEEE Trans. Knowl. Data Eng. 32(8), 1586–1594 (2019)

18. Yeh, I.C.: Default of credit card clients. UCI Machine Learning Repository (2016). https://doi.org/10.24432/C55S3H

Acknowledgments

This work was supported by CNPq - National Council for Scientific and Technological Development, CAPES - Coordination for the Improvement of Higher Education Personnel and UFOP - Federal University of Ouro Preto.

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Gonçalves, M.C., Silva, R. (2023). The Effect of Statistical Hypothesis Testing on Machine Learning Model Selection. In: Naldi, M.C., Bianchi, R.A.C. (eds) Intelligent Systems. BRACIS 2023. Lecture Notes in Computer Science(), vol 14196. Springer, Cham. https://doi.org/10.1007/978-3-031-45389-2_28

DOI: https://doi.org/10.1007/978-3-031-45389-2_28

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-45388-5

Online ISBN: 978-3-031-45389-2

eBook Packages: Computer Science (R0)