Abstract

Robust optimization considers uncertainty in the decision variables, while noisy optimization is concerned with uncertainty in the evaluation of the objective and constraint functions. Although many evolutionary algorithms have been proposed for robust or noisy optimization problems, the research question addressed here is whether these methods can handle both types of uncertainty at the same time. To answer this question, we extend a test function generator for multi-objective optimization from the literature so that it incorporates uncertainties in both the decision variables and the objective functions. The extension allows the creation of scalable and customizable problems for any number of objectives. Three evolutionary algorithms specifically designed for robust or noisy optimization were selected: RNSGA-II and RMOEA/D, which use Monte Carlo sampling, and C-RMOEA/D, a coevolutionary MOEA/D that uses a deterministic robustness measure. We ran experiments with these algorithms on multi-objective problems with (i) uncertainty in the decision variables, (ii) noise in the output, and (iii) both perturbation and noise. The results show that these algorithms are not able to deal with simultaneous uncertainties (noise and perturbation). Therefore, there is a need to design algorithms for environments that are simultaneously robust and noisy.



1 Introduction

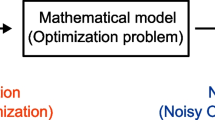

Uncertainties are present in real-world systems, for example in measurement systems or in final control elements (actuators). The search for optimal solutions in the presence of uncertainties is often referred to in the literature as robust optimization [3, 4, 9, 11]. However, the term robust optimization can encompass uncertainties in both the decision variables and the parameters of the problem, that is, uncertainty in the input of the mathematical model of the optimization problem, as discussed by Ben-Tal, El Ghaoui, and Nemirovski in their book Robust Optimization [2]. In this article, we focus specifically on uncertainties in the decision variables, referred to as perturbations in the context of robust optimization. In contrast, uncertainties in the objective functions, which pertain to the output of the process, are referred to as noise in the context of noisy optimization. Figure 1 illustrates these definitions and the distinction between noisy and robust optimization.

Real optimization problems can involve different types of uncertainty, which can prevent practical solutions from being implemented if they are not taken into account. The designer (optimizer) therefore faces the challenge of finding solutions that are less sensitive to uncertainties. Optimization under uncertainty is a relatively new and rapidly growing research field that has gained significant attention in the past decade [10]; applications can be found in [1, 7, 12, 17, 20, 25]. Examples of uncertainties include noise, model inaccuracies, time variations, measurement inaccuracies, disturbances, and other uncontrolled factors that can degrade the performance of the designed solutions [19].

Recently, Sahmoud and Topcuoglu [22] studied dynamic multi-objective optimization in noisy environments. We aim to fill a gap in the literature by testing evolutionary algorithms in the presence of both types of uncertainty: in the decision variables and in the objective functions. To answer this research question, we extend a test function generator for multi-objective optimization available in the literature, with the goal of examining the behavior of these algorithms under such conditions.

The benchmark problem generator from [18] uses a bottom-up approach: it constructs the objective space and the decision space separately. It allows the creation of scalable and customizable problems for any number of objectives, with Pareto fronts of different shapes and topologies, and can incorporate features such as dissimilarity, robustness, and modality. The decision vector can always be partitioned as \(({\textbf {x}}_p, {\textbf {x}}_d)\), where \({\textbf {x}}_p\) is responsible for the spatial location of the points in the objective space and \({\textbf {x}}_d\) governs the convergence.

The extension introduces three types of noise (Gaussian, Uniform, and Cauchy) in the objective functions. These noise models have different properties, being either additive or multiplicative, and can be applied at different intensities. We implemented the intensities proposed in [8], along with a third, intermediate intensity that we propose for the Gaussian and Uniform noises.

Three evolutionary algorithms designed for robust or noisy optimization are then selected: RNSGA-II, RMOEA/D, and C-RMOEA/D. The results show that the algorithms were not able to handle simultaneous uncertainties in the decision variables and the objective functions: the quality of their solutions degraded compared with problems having only one type of uncertainty (either perturbation or noise). We therefore conclude that there is a need for algorithms designed specifically to handle simultaneous uncertainties.

Preliminary definitions and concepts, such as types of uncertainties and robustness measures, are presented in Sect. 2. Section 3 presents the proposed extension of the function generator. The computational experiments, including a brief description of the three algorithms used and the experimental setup, are presented in Sect. 4. Finally, Sect. 5 concludes the paper.

2 Preliminary Concepts

Some of the main concepts covered in this section include types of uncertainties and robustness measures. These concepts provide the foundation for the later discussions in the paper about robust and noisy multi-objective optimization.

2.1 Types of Uncertainties

There are several classifications of uncertainties in the literature; three prevalent ones are presented in Table 1. The parameter \(\delta \) represents uncertainties either in the decision variables (perturbation), \(f({\textbf {x}}+\delta )\), or in the objective functions (noise), \(f({\textbf {x}})+\delta \).
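The difference between the two forms can be seen on a toy objective. The sketch below assumes a hypothetical scalar function \(f(x) = x^2\) (not one of the paper's test problems) and applies the same \(\delta \) once to the input and once to the output.

```python
def f(x: float) -> float:
    """Hypothetical scalar objective used only for illustration: f(x) = x^2."""
    return x * x


def perturbed(x: float, delta: float) -> float:
    """Uncertainty in the decision variable (perturbation): f(x + delta)."""
    return f(x + delta)


def noisy(x: float, delta: float) -> float:
    """Uncertainty in the objective value (noise): f(x) + delta."""
    return f(x) + delta


print(perturbed(2.0, 0.5))  # 6.25
print(noisy(2.0, 0.5))      # 4.5
```

With the same \(\delta = 0.5\), the perturbation changes the output by 2.25 because its effect depends on the local slope of f, whereas the noise shifts the output by exactly 0.5.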

Beyer and Sendhoff [4] categorize uncertainties according to their sources, identifying the sources of different types of uncertainty in the design and optimization process. Ong et al. [21] classify them by the element of the model that is affected, such as the objective function, the variables, or the environment. Finally, Jin and Branke [13] classify uncertainties in optimization problems according to how they affect the optimization process.

We observed that these classifications are equivalent to one another, as shown in Table 2. To illustrate, consider Type B, which identifies production tolerance as the source of uncertainty. For example, a final control element may be subject to uncertainty: a hypothetical drill that aims to drill 5 m with a \({\pm }10\%\) error may actually drill anywhere between 4.5 m and 5.5 m. This error in the final control element generates a perturbation in the decision variables, so it is both Type II and Category II.

2.2 Robustness Measurements

Mathematically, there are different ways to quantify the uncertainties classified above. Beyer and Sendhoff [4] defined robustness measures according to which uncertainties can be modeled deterministically, probabilistically, or possibilistically:

1. The deterministic type defines domains of the uncertainty parameters. An example is the worst-case measure:

$$\begin{aligned} R({\textbf {x}}) = \max _{\delta \in \varDelta }f({\textbf {x}}+\delta ) \end{aligned}$$(1)

2. The probabilistic type defines probability measures that describe the probability with which a given event can occur. An example is the mean estimated by Monte Carlo integration:

$$\begin{aligned} \hat{R}({\textbf {x}}) = \frac{1}{k} \sum _{i=1}^{k} f({\textbf {x}}+\delta ^i) \end{aligned}$$(2)

where \(\delta ^1, \ldots , \delta ^k\) are k perturbations sampled from the uncertainty set \(\varDelta \).

3. The possibilistic type defines fuzzy rules that describe the degree to which a given event may be acceptable. An example is the treatment of uncertainty sets using fuzzy logic and the theory of evidence [14].
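Both the worst-case measure (Eq. 1) and the Monte Carlo mean (Eq. 2) can be computed directly for a scalar toy objective. The sketch below assumes a one-dimensional decision variable, an uncertainty set \(\varDelta = [-r, r]\) sampled uniformly, and a finite grid of perturbations standing in for the continuous maximization in Eq. 1; the names `worst_case` and `monte_carlo_mean` are illustrative and not taken from the paper.

```python
import random
from typing import Callable, Sequence


def worst_case(f: Callable[[float], float], x: float, deltas: Sequence[float]) -> float:
    """Eq. (1): largest value of f(x + delta) over a finite set of perturbations."""
    return max(f(x + d) for d in deltas)


def monte_carlo_mean(f: Callable[[float], float], x: float, k: int,
                     radius: float, rng: random.Random) -> float:
    """Eq. (2): mean of f(x + delta^i) over k perturbations drawn uniformly from [-radius, radius]."""
    return sum(f(x + rng.uniform(-radius, radius)) for _ in range(k)) / k


def square(z: float) -> float:
    """Hypothetical toy objective f(z) = z^2."""
    return z * z


grid = [i * 0.01 - 0.1 for i in range(21)]  # finite stand-in for Delta = [-0.1, 0.1]
print(worst_case(square, 1.0, grid))  # about 1.21, reached at delta = 0.1
print(monte_carlo_mean(square, 1.0, 10_000, 0.1, random.Random(0)))  # about 1 + 0.1**2 / 3
```

The worst case is never smaller than the mean, which is why a worst-case method such as C-RMOEA/D and a mean-based method such as RNSGA-II can target different robust solutions.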

3 Function Generator Extension - Robust Optimization And/or Noisy Optimization

A contribution of this work is an extension of the function generator described in [18] that integrates concepts from robust and noisy optimization. The Gaussian, Uniform, and Cauchy noise models of the noisy BBOB functions [8] are incorporated into the function generator, resulting in an optimization problem formulation with uncertainty in both the decision variables and the objective functions. Mathematically:

$$\begin{aligned} \min _{{\textbf {x}}}\; f({\textbf {x}}+\delta _x) + \delta _f \end{aligned}$$(3)

where \(\delta _x\) indicates the uncertainties in the decision variables and \(\delta _f\) indicates the uncertainties in the objective functions. In Eq. 3, the noise is additive, but it can also be multiplicative:

$$\begin{aligned} \min _{{\textbf {x}}}\; f({\textbf {x}}+\delta _x) \times \delta _f \end{aligned}$$(4)
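A single evaluation of such a problem first perturbs the decision vector and then corrupts the resulting objective vector. The sketch below takes the realizations of \(\delta _x\) and \(\delta _f\) as explicit arguments, so that both the additive form (Eq. 3) and the multiplicative form (Eq. 4) can be checked by hand; the function name `evaluate_uncertain` and the toy bi-objective function are hypothetical and are not the GPD problem.

```python
from typing import Callable, List, Sequence

Vector = List[float]


def evaluate_uncertain(
    f: Callable[[Vector], Vector],
    x: Sequence[float],
    delta_x: Sequence[float],
    delta_f: Sequence[float],
    multiplicative: bool = False,
) -> Vector:
    """Evaluate f at x + delta_x, then apply output noise delta_f.

    multiplicative=False follows Eq. (3); multiplicative=True follows Eq. (4).
    """
    # Perturbation acts on the input (decision variables)
    x_perturbed = [xi + di for xi, di in zip(x, delta_x)]
    y = f(x_perturbed)
    # Noise acts on the output (objective values)
    if multiplicative:
        return [yi * di for yi, di in zip(y, delta_f)]
    return [yi + di for yi, di in zip(y, delta_f)]


def toy_biobjective(x: Vector) -> Vector:
    """Hypothetical bi-objective function used only for illustration."""
    return [sum(v * v for v in x), sum((v - 1.0) ** 2 for v in x)]


print(evaluate_uncertain(toy_biobjective, [0.0, 0.0], [0.5, 0.0], [0.5, 0.5]))  # [0.75, 1.75]
print(evaluate_uncertain(toy_biobjective, [0.0, 0.0], [0.5, 0.0], [2.0, 2.0],
                         multiplicative=True))  # [0.5, 2.5]
```

In an actual run, new realizations of \(\delta _x\) and \(\delta _f\) would be drawn at every evaluation, for instance \(\delta _x\) within the perturbation set and \(\delta _f\) from one of the noise models in Eqs. 5 to 7.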

The main objective of the proposed extension is to evaluate the behavior of algorithms in problems with uncertainty in both the decision variables and the objective functions. To date, the algorithms have only been tested in either robust optimization or noisy optimization. They were designed specifically for one type of uncertainty, so the question is whether they will perform well in the presence of two types of uncertainty simultaneously.

The test function used in this study, GPD, is taken from [18]. It allows the shape of the Pareto front to be changed through a parameter p, which defines the norm (\(p \ge 1\)) or quasi-norm (\(0 < p < 1\)) used in the function \(h({\textbf {x}}) = || T({\textbf {x}}) ||_p\). If \(0 < p < 1\), the Pareto front is convex; if \(p=1\), it is a hyperplane; and if \(p > 1\), it is concave. In this article, \(p \in \{0.5, 1, 2\}\).
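The effect of p on the front shape can be seen with a simplified bi-objective construction. This is not the exact GPD definition from [18]; for illustration only, it assumes that \({\textbf {x}}_p\) is a scalar mapped to the simplex point \(T = (x_p, 1-x_p)\), that the objective vector is \((1+g({\textbf {x}}_d))\,T/\Vert T\Vert _p\), and that g is a hypothetical convergence term that vanishes at \({\textbf {x}}_d = 0.5\). On the optimal front (\(g = 0\)), every point then has unit p-(quasi-)norm.

```python
from typing import List, Sequence


def p_norm(v: Sequence[float], p: float) -> float:
    """||v||_p = (sum |v_i|^p)^(1/p); a quasi-norm when 0 < p < 1."""
    return sum(abs(vi) ** p for vi in v) ** (1.0 / p)


def gpd_like(x_p: float, x_d: Sequence[float], p: float) -> List[float]:
    """Simplified bi-objective GPD-style mapping (illustrative, not the reference code)."""
    t = [x_p, 1.0 - x_p]                    # hypothetical T(x): point on the simplex
    g = sum((xi - 0.5) ** 2 for xi in x_d)  # hypothetical convergence term, 0 on the front
    h = p_norm(t, p)                        # h(x) = ||T(x)||_p
    return [(1.0 + g) * ti / h for ti in t]


# Midpoint of the front for the three shapes used in this article
# (1 position variable + 23 distance variables = 24 decision variables)
for p in (0.5, 1.0, 2.0):
    print(p, gpd_like(0.5, [0.5] * 23, p))
```

The midpoint lands at about (0.25, 0.25) for \(p = 0.5\) (convex, bending toward the origin), at (0.5, 0.5) for \(p = 1\) (linear), and at about (0.707, 0.707) for \(p = 2\) (concave).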

The focus of this work is to assess the robustness and noise tolerance of the algorithms. For this purpose, unconstrained bi-objective problems were chosen. The GPD problem is evaluated according to the methodology described in [18].

As previously stated, this work uses three noise models, Gaussian, Uniform, and Cauchy (\(\delta _f\) in Eqs. 3 and 4), which are incorporated into the function generator. These models were adopted from the noisy BBOB functions of Finck et al. [8], who defined two noise intensity levels: moderate and severe. The three noise models are described mathematically below.

- Gaussian noise

$$\begin{aligned} f_{GN}(f,\beta ) = f \times \exp (\beta \mathcal {N}(0,1)) \end{aligned}$$(5)

where \(\beta \) controls the intensity of the noise and \(\mathcal {N}(0,1)\) is a normally distributed random variable with a mean of 0 and a variance of 1.

- Uniform noise

$$\begin{aligned} f_{UN}(f,\alpha ,\beta ) = f \times \mathcal {U}(0,1)^\beta \max \left( 1,\left( \frac{10^9}{f + \epsilon }\right) ^{\alpha \mathcal {U}(0,1)} \right) \end{aligned}$$(6)

where \(\mathcal {U}(0,1)\) is a uniformly distributed random number in the interval (0, 1) and \(\alpha \) controls the intensity of the noise together with \(\beta \), resulting in two random factors. The first factor is uniformly distributed in the interval [0, 1] for \(\beta = 1\). The second factor (\(\max \)) is greater than or equal to 1. Finally, \(\epsilon \) is introduced to avoid division by zero; a value of \(10^{-99}\) is adopted. Uniform noise is considered more severe than Gaussian noise.

- Cauchy noise

$$\begin{aligned} f_{CN}(f,\alpha ,\text {p}) = f + \alpha \left( 1000 + \mathbb {I}_{\{\mathcal {U}(0,1)<\text {p}\}} \frac{\mathcal {N}(0,1)}{|\mathcal {N}(0,1)| + \epsilon } \right) \end{aligned}$$(7)

where \(\alpha \) defines the intensity of the noise, p determines the frequency of the noise disturbance (the probability that an evaluation is affected), and \(\epsilon = 10^{-199}\). This noise model has two important characteristics. First, only a small percentage of the function values are affected by noise. Second, the noise distribution is heavy-tailed: the ratio of two independent standard normal variables follows a Cauchy distribution, so outliers occur from time to time.
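The three noise models translate directly into code. The sketch below follows Eqs. 5 to 7 as written above, using Python's `random.Random` for the \(\mathcal {N}(0,1)\) and \(\mathcal {U}(0,1)\) draws. It assumes non-negative objective values (as on the GPD fronts), treats every occurrence of \(\mathcal {N}(0,1)\) or \(\mathcal {U}(0,1)\) in an equation as an independent draw, and exposes the constant term 1000 of Eq. 7 through a hypothetical `offset` parameter so that it can be dropped, as done in this article.

```python
import math
import random


def gaussian_noise(f: float, beta: float, rng: random.Random) -> float:
    """Eq. (5): multiplicative log-normal noise f * exp(beta * N(0,1))."""
    return f * math.exp(beta * rng.gauss(0.0, 1.0))


def uniform_noise(f: float, alpha: float, beta: float, rng: random.Random,
                  eps: float = 1e-99) -> float:
    """Eq. (6): product of two independent random factors; assumes f >= 0."""
    first = rng.random() ** beta                                     # in [0, 1]
    second = max(1.0, (1e9 / (f + eps)) ** (alpha * rng.random()))  # >= 1
    return f * first * second


def cauchy_noise(f: float, alpha: float, p: float, rng: random.Random,
                 eps: float = 1e-199, offset: float = 0.0) -> float:
    """Eq. (7): additive noise that fires with probability p.

    offset=1000.0 keeps the BBOB constant term; offset=0.0 drops it, as in this article.
    """
    spike = 0.0
    if rng.random() < p:
        # Ratio of two independent standard normals: a heavy-tailed (Cauchy) spike
        spike = rng.gauss(0.0, 1.0) / (abs(rng.gauss(0.0, 1.0)) + eps)
    return f + alpha * (offset + spike)
```

Seeding a dedicated `random.Random` instance per run keeps the noise sequence reproducible, which matters when comparing algorithms over repeated simulations.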

The Gaussian and Uniform noises are multiplicative, so their relative intensity does not depend on the range of values of the objective function. For example, if the maximum noise factor is 5, the noise increases the objective value up to 5 times, whether the values lie in [0,1] or in [0,100]. Cauchy noise differs from the previous two in that it is additive, so the scale of the objective function must be taken into account. For example, if the values of one problem lie in [0,1] and those of another lie in [0,100], a noise peak of intensity 1 corresponds to \(100\%\) of the range in the first problem but only \(1\%\) in the second. For this reason, the constant term 1000 of Eq. 7 is omitted in this article, while the intensity values from [8] are kept (Footnote 1).
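The scale argument can be checked with a few lines of arithmetic. The sketch compares an additive spike of size 1 with a multiplicative factor of 5 on objective values at the top of the ranges [0,1] and [0,100]; the helper names are illustrative.

```python
def additive_relative_effect(f_value: float, spike: float) -> float:
    """Relative change |(f + spike) - f| / |f| caused by additive noise."""
    return abs((f_value + spike) - f_value) / abs(f_value)


def multiplicative_ratio(f_value: float, factor: float) -> float:
    """Ratio (f * factor) / f caused by multiplicative noise."""
    return (f_value * factor) / f_value


for top in (1.0, 100.0):
    print(top, additive_relative_effect(top, 1.0), multiplicative_ratio(top, 5.0))
# 1.0 1.0 5.0     -> the additive spike is 100 % of the value
# 100.0 0.01 5.0  -> the additive spike is only 1 %, the multiplicative factor is unchanged
```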

4 Computational Experiment

This section presents the computational experiments. A brief description of the three selected algorithms and of the experimental setup is given first. Then, the solutions found by the algorithms for problems with perturbation and/or noise are shown. Finally, the results are discussed.

4.1 Algorithms

Typically, robustness measures (discussed in Sect. 2.2) are used to account for uncertainties, yielding a new objective function; in this way, existing algorithms from the literature are turned into robust versions. For instance, RMOEA/D builds on MOEA/D [27] and RNSGA-II builds on NSGA-II [5], both adding Monte Carlo sampling over the uncertainty space and using the mean of the samples as the objective value [6]. The RMOEA/D selects a set of vectors with the best weighting.

C-RMOEA/D [19] is a coevolutionary algorithm for robust multi-objective optimization that combines worst-case minimization with a decomposition/aggregation strategy; the decomposition approach facilitates the implementation of coevolution. The algorithm uses a competitive strategy between two populations of candidate solutions: X, a set of vectors from the decision space, and \(\varDelta \), a set of perturbation vectors.
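The competitive idea can be illustrated by how fitness might be assigned to the two populations in one generation. This is a generic sketch, not the C-RMOEA/D procedure from [19]: it uses a scalar objective without decomposition, scores each decision vector by its worst case over the current perturbation population (Eq. 1 restricted to that population), and, as a hypothetical rule, scores each perturbation by the mean degradation it causes across the decision population.

```python
from typing import Callable, List, Sequence, Tuple


def competitive_step(
    f: Callable[[float], float],
    xs: Sequence[float],
    deltas: Sequence[float],
    keep: int,
) -> Tuple[List[float], List[float]]:
    """Select the survivors of both populations for one generation of a min-max game."""
    # Decision vectors minimize their worst case against the current perturbations
    worst = [max(f(x + d) for d in deltas) for x in xs]
    # Perturbations maximize the mean degradation they cause (hypothetical rule)
    damage = [sum(f(x + d) for x in xs) / len(xs) for d in deltas]
    best_xs = [x for _, x in sorted(zip(worst, xs))][:keep]
    best_deltas = [d for _, d in sorted(zip(damage, deltas), reverse=True)][:keep]
    return best_xs, best_deltas


survivors, attackers = competitive_step(lambda z: z * z, [-1.0, 0.0, 1.0, 0.5], [-0.1, 0.0, 0.2], keep=1)
print(survivors, attackers)  # [0.0] [0.2]
```

A complete coevolutionary loop would alternate this selection with variation operators (mutation, crossover) applied to both populations.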

RNSGA-II and RMOEA/D use a probabilistic robustness measure, whereas C-RMOEA/D is a coevolutionary algorithm that uses a deterministic one. Other recent strategies for robust or noisy optimization can be found in [15, 16, 26].

4.2 Experimental Setup

The function generator extension (Sect. 3) is used in the experiments. With simultaneous uncertainties, convex, linear, and concave Pareto fronts are tested; problems with only perturbation or only noise are illustrated with the concave Pareto front. Since the primary aim of this study is to evaluate algorithms in the presence of simultaneous uncertainties, the tests are restricted to bi-objective, unconstrained problems, although, as mentioned earlier, the function generator can be configured otherwise. Tests with perturbation only (robust optimization) are conducted as in [18].

The following parameters were used in all tests: a population size of 210; 24 decision variables; and, as the stopping criterion, a maximum of 150,000 objective function evaluations. RNSGA-II and RMOEA/D used 100 samples to compute the mean. C-RMOEA/D used the PBI (penalty-based boundary intersection) aggregation function (Footnote 2) and a subpopulation size of 15; RMOEA/D also used a subpopulation size of 15.

4.3 Results

The results will be presented in three parts: (i) robust optimization (with only perturbation); (ii) noisy optimization (with only noise); (iii) robust and noisy optimization (with both perturbation and noise - simultaneous uncertainties).

Robust Optimization. Figure 2 shows the results for \(\delta _x = 0.1\), which is considered a high perturbation because it represents an uncertainty of \(\pm 10\%\). RNSGA-II and RMOEA/D (using a mean-based robustness measure) mapped the Robust Front, and so did C-RMOEA/D, which is based on a worst-case measure. The results for convex and linear fronts are omitted due to page limitations; they are similar to those obtained with the concave front.

Noisy Optimization. With noise only, there is no perturbation population for C-RMOEA/D to coevolve, so it reduces to MOEA/D without the second evolutionary cycle. We therefore compared MOEA/D with RMOEA/D and NSGA-II with RNSGA-II, that is, each original algorithm, without any uncertainty handling, against its version that uses sampling followed by the mean.

Figure 3 presents the solutions obtained by RNSGA-II and NSGA-II. The results were similar for Gaussian and Uniform noise: with moderate intensity, both algorithms showed good convergence to, and dispersion along, the Global Front, but with severe intensity they failed to map the front at all. For Cauchy noise (the only additive noise), both RNSGA-II and NSGA-II mapped the Global Front well at both intensities. Due to page limitations, the results of MOEA/D and RMOEA/D are not shown; they were similar to those of NSGA-II and RNSGA-II in Fig. 3.

Robust and Noisy Optimization. Figure 4 displays the results of the extended function generator with Gaussian noise and \(\delta _x=0.1\). The three algorithms failed to converge under high-intensity noise. This outcome was expected, because severe noise greatly distorts the original function. However, the C-RMOEA/D solutions exhibited a tendency towards the Robust Front. Under moderate-intensity noise, the algorithms achieved convergence and good dispersion. RMOEA/D produced some poor solutions, but in general its solutions converged; the same holds for C-RMOEA/D with the concave Pareto front (PF).

Figure 5 displays the results of the extended function generator with Uniform noise and \(\delta _x=0.1\). The results are similar to those for Gaussian noise: the three algorithms did not converge under high-intensity noise, and they converged with good dispersion under moderate-intensity noise. However, RMOEA/D and C-RMOEA/D produced some poor solutions, as did RNSGA-II for the concave PF. This shows that, with simultaneous uncertainties, these algorithms begin to deteriorate even with low-intensity noise.

Figure 6 displays the results of the extended function generator with Cauchy noise and \(\delta _x=0.1\). This noise differs from the previous ones in that it is additive rather than multiplicative. C-RMOEA/D and RNSGA-II showed good convergence and dispersion for all PF shapes and intensities, although, under high-intensity noise, some C-RMOEA/D solutions did not converge, and for the convex PF it failed to map the extremes of the robust PF. RMOEA/D showed good convergence and dispersion under moderate-intensity noise, with only a few poor final solutions. Under high-intensity noise, however, RMOEA/D produced only some solutions that mapped small parts of the PF; as a result, the solutions were poorly dispersed and most of them did not converge. The worst convergence was obtained for the convex PF.

As the previous results show, high-intensity Gaussian and Uniform noise severely contaminate the original function. We therefore created an intermediate noise intensity for further testing: \(\beta =0.5\) for Gaussian noise, and \(\beta =0.5\) and \(\alpha =0.2(0.49+1/D)\) for Uniform noise. With intermediate-intensity noise, the trend of the original function is preserved, so EAs should be able to eliminate the effect of noise at this intensity.
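The practical meaning of the moderate, intermediate, and severe levels can be estimated from Eqs. 5 and 6. The sketch assumes \(D = 24\); it bounds the Gaussian factor at three standard deviations (\(|\mathcal {N}(0,1)| \le 3\)), which is consistent with the 3% and 20-fold figures in Footnote 1, and bounds the Uniform factor by its second term with \(\mathcal {U}(0,1) \rightarrow 1\) and \(f = 1\). The helper names are illustrative.

```python
import math

D = 24  # number of decision variables used throughout this article


def gaussian_bound(beta: float, z: float = 3.0) -> float:
    """Largest factor exp(beta * z) of Eq. (5) when |N(0,1)| <= z."""
    return math.exp(beta * z)


def uniform_bound(alpha: float, f: float = 1.0) -> float:
    """Upper bound of the second factor of Eq. (6) as U(0,1) -> 1 (the first factor is <= 1)."""
    return max(1.0, (1e9 / f) ** alpha)


# (beta, alpha) per level; alpha is used only by the Uniform model
levels = {
    "moderate": (0.01, 0.01 * (0.49 + 1 / D)),
    "intermediate": (0.5, 0.2 * (0.49 + 1 / D)),
    "severe": (1.0, 0.49 + 1 / D),
}
for name, (beta, alpha) in levels.items():
    print(f"{name:<12} Gaussian <= x{gaussian_bound(beta):.2f}  Uniform <= x{uniform_bound(alpha):.2f}")
```

Under these assumptions, the Gaussian factor reaches about 1.03, 4.48, and 20.1, and the Uniform factor about 1.12, 9.06, and \(6.1 \times 10^4\), for the moderate, intermediate, and severe levels, respectively.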

For this test set (intermediate intensity), 30 simulations were performed for each front and algorithm. The IGD (Inverted Generational Distance) metric [24] was computed for each simulation, using the Robust Front as the reference set. The solution set with the best IGD (closest to zero) was selected; Fig. 7 shows these solution sets for the extended function generator with intermediate noise intensity and \(\delta _x=0.1\).
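IGD averages, over the points of a reference set (here the Robust Front), the distance to the nearest point of the approximation set returned by an algorithm. The sketch below uses the common mean-Euclidean-distance form of IGD (some variants use a power mean instead) and shows how the best of several runs, together with the mean and standard deviation reported in Table 3, could be obtained; the reference points and runs in the usage lines are hypothetical.

```python
import math
import statistics
from typing import Sequence, Tuple

Point = Sequence[float]


def igd(approx: Sequence[Point], reference: Sequence[Point]) -> float:
    """Mean Euclidean distance from each reference point to its nearest point in approx."""
    if not approx or not reference:
        raise ValueError("both point sets must be non-empty")
    return sum(min(math.dist(r, a) for a in approx) for r in reference) / len(reference)


def summarize_runs(runs: Sequence[Sequence[Point]],
                   reference: Sequence[Point]) -> Tuple[int, float, float]:
    """IGD of each run; returns the index of the best run (lowest IGD), the mean, and the sample std."""
    scores = [igd(a, reference) for a in runs]
    best = min(range(len(scores)), key=scores.__getitem__)
    return best, statistics.mean(scores), statistics.stdev(scores)


reference = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]          # hypothetical Robust Front samples
runs = [reference, [(0.0, 1.5), (0.5, 1.0), (1.0, 0.5)]]  # hypothetical final sets of two runs
print(summarize_runs(runs, reference))  # best run 0, mean 0.25, std about 0.354
```

Because IGD is measured from the reference set, a run that converges to only a small part of the front is penalized even if its few points are accurate, which matches how poorly dispersed solution sets are judged in the results.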

The algorithms did not achieve good convergence, regardless of the PF geometry (convex, linear, or concave) or the type of noise (Gaussian or Uniform). This indicates that, when the noise has a stronger effect (intermediate intensity), the tested algorithms were unable to eliminate it in the presence of perturbation.

Specifically, for Gaussian noise and the convex PF, RNSGA-II started to converge at the extremes and in the middle of the PF; the same happened for the linear PF, but with fewer solutions. Some RMOEA/D solutions also converged in the central region of the robust PF (linear PF and Gaussian noise). Neither algorithm showed convergence for the concave PF. For Gaussian noise, the C-RMOEA/D solutions followed the shape of the robust PF, most clearly for the convex PF.

Uniform noise had a more detrimental effect on the original function than Gaussian noise. The results in Fig. 7 were consistent across all PF shapes with Uniform noise: the algorithms did not converge. C-RMOEA/D showed a tendency towards solutions on the border.

Table 3 contains the mean IGD over the 30 simulations, with the Robust Front as the reference. The previous results showed qualitatively that the algorithms were not able to deal with simultaneous uncertainties, and the quantitative results in Table 3 reinforce this conclusion. The mean IGD was high for all algorithms (lower IGD values indicate better performance), except for C-RMOEA/D with the convex front. C-RMOEA/D achieved the best mean IGD for every Pareto front shape, except for the concave front with Uniform noise, where RNSGA-II performed slightly better.

Table 3 also reports the variability of the IGD metric across the 30 simulations, measured by the standard deviation. RNSGA-II had a consistently low standard deviation across the noise types and front shapes, indicating minimal variation in IGD across the 30 simulations. C-RMOEA/D had the best mean IGD, but its standard deviation was high, suggesting that some simulations produced exceptionally poor results. Conversely, RMOEA/D performed poorly in terms of both mean and standard deviation.

4.4 Discussion

Algorithms with a robustness measure had no difficulty in finding the Robust Front when the problem had only perturbation.

The evolutionary algorithms were able to eliminate the effect of noise for moderate intensity (noisy optimization), including algorithms without any robustness measure (NSGA-II and MOEA/D). However, the algorithms did not achieve convergence when the effect of Gaussian and Uniform noise was high (severe intensity). One possible explanation is that the intensity of these noises severely deteriorates the original function, causing the function’s trend to be lost.

Cauchy noise differs from the previous ones: it affects only some evaluations, but with high-intensity peaks. As a result, the trend of the original function remains even with severe Cauchy noise. RMOEA/D had difficulties in mapping the Global Front under severe Cauchy noise because the noise spikes contaminated, and increased, the sample mean. The original MOEA/D managed to map the Global Front under this noise; one explanation is that the evolutionary process itself eliminated the effect of noise on the solutions. NSGA-II and RNSGA-II mapped the Global Front very well under Cauchy noise, which indicates that the characteristics of NSGA-II (archive, elitism, dominance) eliminated the effect of this noise, even when the mean was used (RNSGA-II).

Finally, for robust noisy problems, the results demonstrate that the tested algorithms had difficulties with this new class of problems: some solutions started to deteriorate with moderate noise and perturbation. In further tests with increasing noise intensity, the algorithms had even greater difficulty with simultaneous uncertainties, which led us to create an intermediate noise intensity. The algorithms did not solve the problems with perturbation and intermediate noise.

To confirm this conclusion, results for Gaussian noise with \(\beta = 0.25\) are shown in Fig. 8. RNSGA-II successfully mapped the Global Front when the problem contained only noise; however, when both perturbation and noise were present, most of the solutions did not converge.

5 Conclusion

In the real world, simultaneous uncertainties are common. A practical example is a drilling process, where the sensor measuring the depth is subject to uncertainty (resulting in noise in the objective function), and the drill (actuator) is also subject to uncertainty (resulting in perturbations in the decision variables). The authors identified a deficiency in the field of optimization with uncertainty, as no previous research had dealt with simultaneous uncertainties.

The results indicated that the tested algorithms (RNSGA-II, RMOEA/D, and C-RMOEA/D) were unable to handle simultaneous uncertainties effectively. Consequently, there is a need for algorithms capable of handling robust and noisy environments concurrently.

Notes

- 1.

For Gaussian noise: moderate intensity uses \(\beta = 0.01\), which corresponds to a variation of up to 3\(\%\) (multiplying the function by up to 1.03 or by as little as 0.97); severe intensity uses \(\beta = 1\), resulting in a variation of up to 20 times (multiplying or dividing the function by up to 20). These figures correspond to \(|\mathcal {N}(0,1)| \le 3\). For Uniform noise: moderate intensity uses \(\beta = 0.01\) and \(\alpha = 0.01(0.49 + 1/D)\), where D is the number of decision variables (always 24 in this work), resulting in a variation of up to 12\(\%\); severe intensity uses \(\beta = 1\) and \(\alpha = 0.49 + 1/D\), resulting in a variation of up to tens of thousands of times. For Cauchy noise: moderate intensity uses \(\alpha = 0.01\) and \(p = 0.05\); severe intensity uses \(\alpha = 1\) and \(p = 0.2\).

- 2.

Further details on the decomposition algorithm and methods can be found in [23].

References

Balouka, N., Cohen, I.: A robust optimization approach for the multi-mode resource-constrained project scheduling problem. Eur. J. Oper. Res. 291(2), 457–470 (2021)

Ben-Tal, A., El Ghaoui, L., Nemirovski, A.: Robust Optimization, vol. 28. Princeton University Press, Princeton (2009)

Ben-Tal, A., El Ghaoui, L., Nemirovski, A.: Robust Optimization in Applied Mathematics. Princeton Series, Princeton (2009)

Beyer, H.G., Sendhoff, B.: Robust optimization – a comprehensive survey. Comput. Methods Appl. Mech. Eng. 196(33), 3190–3218 (2007). https://doi.org/10.1016/j.cma.2007.03.003

Deb, K., Pratap, A., Agarwal, S., Meyarivan, T.: A fast and elitist multiobjective genetic algorithm: NSGA-II. IEEE Trans. Evol. Comput. 6(2), 182–197 (2002)

Deb, K., Sindhya, K., Hakanen, J.: Introducing robustness in multi-objective optimization. Evol. Comput. 14(4), 463–494 (2006)

Duan, J., He, Z., Yen, G.G.: Robust multiobjective optimization for vehicle routing problem with time windows. IEEE Trans. Cybern. 52(8), 8300–8314 (2021)

Finck, S., Hansen, N., Ros, R., Auger, A.: Real-parameter black-box optimization benchmarking 2010: presentation of the noisy functions. Technical report. Citeseer (2010)

Gaspar-Cunha, A., Covas, J.A.: Robustness in multi-objective optimization using evolutionary algorithms. Comput. Optim. Appl. 39(1), 75–96 (2007). https://doi.org/10.1007/s10589-007-9053-9

Goerigk, M., Schöbel, A.: Algorithm Engineering in Robust Optimization. Springer, Cham (2016). https://doi.org/10.1007/978-3-319-49487-6_8

Gorissen, B.L., Yanıkoğlu, İ., den Hertog, D.: A practical guide to robust optimization. Omega 53, 124–137 (2015). https://doi.org/10.1016/j.omega.2014.12.006

Häse, F., et al.: Olympus: a benchmarking framework for noisy optimization and experiment planning. Mach. Learn. Sci. Technol. 2(3), 035021 (2021)

Jin, Y., Branke, J.: Evolutionary optimization in uncertain environments – a survey. IEEE Trans. Evol. Comput. 9(3), 303–317 (2005)

Klir, G.J., Folger, T.A.: Fuzzy Sets, Uncertainty, and Information. Prentice-Hall, Englewood Cliffs (1998)

Liu, J., Liu, Y., Jin, Y., Li, F.: A decision variable assortment-based evolutionary algorithm for dominance robust multiobjective optimization. IEEE Trans. Syst. Man Cybern. Syst. 52(5), 3360–3375 (2021)

Liu, R., Li, Y., Wang, H., Liu, J.: A noisy multi-objective optimization algorithm based on mean and Wiener filters. Knowl.-Based Syst. 228, 107215 (2021)

Lu, Y., Xu, Y., Herrera-Viedma, E., Han, Y.: Consensus of large-scale group decision making in social network: the minimum cost model based on robust optimization. Inf. Sci. 547, 910–930 (2021)

Meneghini, I.R., Alves, M.A., Gaspar-Cunha, A., Guimaraes, F.G.: Scalable and customizable benchmark problems for many-objective optimization. Appl. Soft Comput. 90, 106139 (2020)

Meneghini, I.R., Guimaraes, F.G., Gaspar-Cunha, A.: Competitive coevolutionary algorithm for robust multi-objective optimization: the worst case minimization. In: IEEE Congress on Evolutionary Computation (CEC), pp. 586–593 (2016). https://doi.org/10.1109/CEC.2016.7743846

Mou, W., Wang, Q., Peng, J.: Accelerating gradient-based optimization via importance sampling. J. Mach. Learn. Res. 22(22), 1–29 (2021)

Ong, Y.S., Nair, P.B., Lum, K.Y.: Max-min surrogate-assisted evolutionary algorithm for robust design. IEEE Trans. Evol. Comput. 10(4), 392–404 (2006). https://doi.org/10.1109/TEVC.2005.859464

Sahmoud, S., Topcuoglu, H.R.: Dynamic multi-objective evolutionary algorithms in noisy environments. Inf. Sci. 634, 650–664 (2023)

Trivedi, A., Srinivasan, D., Sanyal, K., Ghosh, A.: A survey of multiobjective evolutionary algorithms based on decomposition. IEEE Trans. Evol. Comput. 21(3), 440–462 (2016)

Van Veldhuizen, D.A., Lamont, G.B.: Multiobjective evolutionary algorithm research: a history and analysis. Technical report. Citeseer (1998)

Yang, J., Su, C.: Robust optimization of microgrid based on renewable distributed power generation and load demand uncertainty. Energy 223, 120043 (2021)

Yang, Y.: Robust multi-objective optimization based on the idea of multi-tasking and knowledge transfer. In: Proceedings of the 14th International Conference on Computer Modeling and Simulation, pp. 257–265 (2022)

Zhang, Q., Li, H.: MOEA/D: a multiobjective evolutionary algorithm based on decomposition. IEEE Trans. Evol. Comput. 11(6), 712–731 (2007)

Acknowledgment

This work has been supported by the Brazilian agencies (i) National Council for Scientific and Technological Development (CNPq), Grant no. 312991/2020-7; (ii) Coordination for the Improvement of Higher Education Personnel (CAPES) through the Academic Excellence Program (PROEX) and (iii) Foundation for Research of the State of Minas Gerais (FAPEMIG, in Portuguese), Grant no. APQ-01779-21. MINDS Laboratory – https://minds.eng.ufmg.br/


© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG


Cite this paper

de Sousa, M.C., Meneghini, I.R., Guimarães, F.G. (2023). Assessment of Robust Multi-objective Evolutionary Algorithms on Robust and Noisy Environments. In: Naldi, M.C., Bianchi, R.A.C. (eds) Intelligent Systems. BRACIS 2023. Lecture Notes in Computer Science(), vol 14197. Springer, Cham. https://doi.org/10.1007/978-3-031-45392-2_3

DOI: https://doi.org/10.1007/978-3-031-45392-2_3

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-45391-5

Online ISBN: 978-3-031-45392-2

eBook Packages: Computer Science, Computer Science (R0)