Abstract

In clustering problems where the objective is not based on specifically spatial proximity, but rather on feature patterns and the semantic description, traditional internal cluster validation indices might not be appropriate. This article proposes a novel validity index to suggest the most appropriate number of clusters based on a semantic description of categorical databases. To assess our index, we also propose a synthetic data generator specifically designed for this type of application. We tested data sets with different configurations to assess the performance of the proposed index compared to well-known indices in the literature. Thus, we demonstrate that the index has great potential for discovering the number of clusters for the type of application studied and the data generator is able to produce relevant data sets for the internal validation process.

Supported by Coordenação de Aperfeiçoamento de Pessoal de Nível Superior - Brasil (CAPES).

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

Clustering is a partitioning technique that aims to group data points so that points within a group have similar characteristics (or are close to each other) while points in other groups are different [11]. In this type of task, defining the most suitable technique for the type of data and the correct number of clusters can be a challenge, especially if little information about the data is known [12]. Despite the existence of several approaches to solve this problem, there is no optimal method, especially when considering categorical data. On this type of data the distance-based techniques may not be suitable [6, 8].

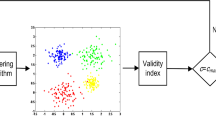

In order to discover the adequate number of clusters, Cluster Validity Indices (CVI) [2] are generally used, which can have different approaches, such as distance between points [3, 4, 17], density [16], entropy [7], comparison between different indices [20], among others [18]. Thus, depending on the configuration of the data points and the purpose of the clustering certain indices may be more suitable.

Therefore, in scenarios where the objective is not based on specifically clustering nearby data points, but rather on obtaining clusters based on feature patterns or explaining them, using a CVI developed for this purpose may be more appropriate.

For example, consider a human resources data set in which each data point represents a person described by categorical features related to their professional characteristics, such as education, leadership, teamwork, creativity, etc. Given that a decision maker needs to identify the profiles present in this data set in order to select people for a given task, how could he know the number of potential professional profiles and their respective descriptions?

In this type of scenario, given the purpose of clustering and the type of features that describe the data, using a distance-based index may not be the best choice. An example of application of this type of scenario can be observed in the data sets of the competence system of the Department of Aerospace Science and Technology (DCTA)Footnote 1 of the Brazilian Air Force called Hippocampus. This system is restricted and stores competence levels in several areas – such as public administration, research, informatics, among others – of all DCTA’s employees. DCTA’s People Management sector uses the stored information to monitor the development of the skills of the workforce.

Thus, this article aims to propose an index that suggests the most appropriate number of clusters based on the semantic description potential, for applications in categorical data sets in binary form. Although the proposal is initially focused on a specific type of data, the index can also be applied to any type of data set, as long as the features are transformed into the categorical type [21].

In addition to the potential application in different types of data sets, the index can also be used to compare the quality of different partitions; it is independent of distance metrics and has significant performance when applied to competency-based data sets, where data points have features (competences) common to each other.

2 Related Works

In this section, we discuss various popular CVIs using different approaches to determine how good a particular clustering is. This variety, we think, ensures we have a solid set of CVIs to compare our proposed method against (for the actual comparison, see Sect. 6).

Average Silhouette Width (ASW) [17]

The Silhouette Width index scores how well a particular data point is clustered based on cohesion (the average within-cluster distance) and separation (how far clusters are from each other). Let \(x_i\) be a data point in cluster \(S_l\). Then, we can calculate the cohesion of \(x_i\) with

and its cluster separation with

Thus, the ASW of a data set X under a partition S is given by

Notice that \(-1 \le ASW(X,S) \le 1\), where a higher value indicates a better partition in terms of cohesion and separation. The literature certainly indicates there is no index that is the best in all cases, but the ASW has proven to be particularly successful (for a comprehensive comparison, see [2]).

Calinski-Harabasz (CH) [3]

The Calinski-Harabasz index is also rather popular and very much like ASW it can be easily found in many software packages used in data analysis, including MATLAB [10], scikit-learn [14], and R [15]. CH assesses the quality of a particular partition based on the ratio of the between-cluster-means and within-cluster sum of squares. Given a data set X and clustering S, we have that

where T is the data scatter \(T=\sum _{i=1}^n \sum _{v=1}^m (x_{iv} - \bar{x}_v)^2\), and \(\bar{x}_v\) is the mean of feature v over all \(x_i \in X\). The higher this index value is, the better the clustering is.

Davies-Bouldin (DB) [4]

The Davies-Bouldin index scores a clustering S by computing the cohesion of each cluster \(S_l \in S\) as the average distance from each \(x_i \in S_l\) and \(z_l\) (the centroid of \(S_l\)), and taking into account the pairwise distance between all centroids \(z_l \in Z\). That is, one first calculates the cohesion for each cluster \(S_l \in S\) as

and then the value of this index is given by

Unlike ASW and CH, a low value of DB indicates a good clustering.

Contiguous Density Region (CDR) [16]

The index is based on the uniformity measure of the clusters, obtained from the spatial pattern of the data points and the local density.

Initially, we define the local density of a data point \(x_i\) in a set of data points X as the distance to the nearest neighbor \(x_j\), which belongs to the same cluster by the equation

The average density of a cluster \(S_l\) is calculated as the average of all the local densities of the cluster, defined as

Thus, the uniformity of each cluster is calculated as the difference between the local density of the cluster and the average of all the local densities of the cluster. It measures the degree of local density variation within a cluster. Given a cluster, its uniformity is defined by

for values of \(n_{S_l}\) greater than 1, otherwise a null uniformity value is assigned.

Given a partition S, the value of the CDR index is defined as

where n corresponds to the total number of data points in X. The CDR index minimization estimates the optimal data partition among all partitions generated by the clustering algorithm based on the structural characteristics of the data points.

CUBAGE [7]

The CUBAGE index (clustering utility based on the averaged information gain of isolating each cluster) uses the proposed averaged information gain of isolating each cluster (AGE) to measure the separation, and the reciprocal entropy of the set of data points conditioned on the partition to measure the compactness of the clusters.

Given that the data set X is described by a set of m independent features \(V = \left\{ v_1, \dots , v_m\right\} \) and the value of the feature \(v_j\) can only be taken from the domain \(D(v_j) = \left\{ v_j^1, \dots , v_j^{\eta _j} \right\} \) where \(\eta _j\) is the number of possible values of the respective feature.

Thus, starting from the concept of entropy, we initially calculate the information gain referring to the set of features H(V) through the equation

where \(p(\alpha _j^{i})\) is the probability of feature \(v_j\) taking the value \(\alpha _j^{i}\).

Given a partition S, the entropy of V conditioned on S, i.e., H(V|S), and considered as the whole entropy of the partition is defined as

where \(p(\alpha _j^i, S_l)\) is the conditional probability of the value \(\alpha _j^i\), given cluster \(S_l\). Finally, the information gain between variables and partitions is related through the equation

The index takes the form of the product of separation and compactness. The largest index value across partitions indicates the most suitable number of clusters.

Consensus Index (CI) [20]

This index aims to calculate the average pairwise similarity between different partitions. These partitions can be obtained by running different algorithms. Thus, suppose a set of w solutions of different clustering algorithms \(S= \left\{ S^1, \dots , S^w \right\} \). The consensus index between the different partitions is given by

where \(\varPhi \) is validation index based in similarity. In this study, we use the Adjusted Rand Index [9], also used in a similar study [19]. Hence, higher index values indicate higher quality in the partitions resulting from clustering.

Cluster Number Assisted K-means (CNAK) [18]

The CNAK algorithm is defined as a variant of k-means in which the proper cluster number is learned during the clustering process. The proposal is based on randomly sampled large-sized sets of data points having the same distribution as the original set, such that the number of generated cluster centroids from the sample is approximately the same as the original.

According to the results of the study, the algorithm presented satisfactory results, being able to successfully detect clusters, identify the hierarchy, but was not able to demonstrate robustness in dealing with noise and data overlap. In this study, we used the \(\tilde{k}\) suggested by CNAK and compared it with other indices for the process of validating the results of the proposed method.

3 Validation Based on the Semantic Description of the Clusters

Given that validation indices with distance-based metrics can be a problem for applications on categorical data sets, since the spatial representation of data points is not adequate [6, 8], we propose an index, based on the potential for semantic description of each cluster, for internal validation of clustering results.

For this, given the result of the clustering, frequent patterns within the clusters are identified and we calculate some indicators that are related to the semantic description potential of the set of frequent patterns. Thus, we consider an adequate clustering to be one in which the resulting clusters are adequately described, since the points within each of them were selected based on the similarity between the observed frequent patterns.

3.1 Proposed Index

Let \(V = \left\{ v_1, \dots , v_m\right\} \) be a set of m features (item set) each representing a description of a categorical data set (transactional data set) \(X = \left\{ x_1, \dots , x_n\right\} \) of n data points, where each point \(x_i \in \mathbb {Z}_{2}^{m}\) represents the competency description of a person (transaction) from a subset of V items.

The support \(\sigma _{v_j}\) (or frequency of occurrence) of a \(v_j\) item is defined as the proportion of transactions that contain \(v_j\). Even if \(\sigma _{v_j}\) is greater than a minimum support limit \(\sigma _{min}\), we define \(v_j\) as frequent pattern [1].

Thus, through the partition \(S=\{S_1, \ldots , S_k\}\) containing k clusters, obtained by a clustering algorithm, we define \(F_1(S_l)\) as the collection of all \(n_l \ge 0\) frequent patterns with length 1 contained in \(S_l\), each with a support value \(\sigma _l\) assigned, which represent the semantic description of the respective cluster.

After that we evaluate the potential of the sets of items to perform the semantic description of the clusters. For this, we propose the Support, Length, Exclusivity and Difference for Group Evaluation (SLEDge) index, where the support values found, set size, exclusivity and support variability between items are evaluated, respectively. Thus, each of the indicators that are part of the index are presented in the subsections that follow.

Support. The indicator \(S_{F_1(S_l)}\) is calculated from the average of support values \(\sigma _{l}\) of the collection of frequent patterns belonging to \(F_1(S_l)\) by the equation

when \(n_l \ge 1\), otherwise the indicator is equal to zero.

We suggest that clusters described with frequent patterns of greater support between items are better, as this way we can infer more accurate information about the data points belonging to the cluster.

Length. To calculate this indicator we initially get the average cardinality based on the number of frequent patterns in all partitions by the equation

where k represents the number of clusters. Then, we obtain the indicator through the equation

We suggest that clusters are best described when they have an average cardinality of frequent patterns, given that either the presence of a large or small number of these should be insufficient to accurately define the semantic description of the cluster.

Exclusivity. The \(E_{F_1(S_l)}\) indicator is related to the number of exclusive frequent patterns within the cluster and is calculated by the function

where \(\hat{e}_l\) is the number of non-exclusive frequent patterns of the cluster compared to others \(k-1\) clusters g such that \(g \ne j\).

We suggest that clusters with exclusive frequent patterns are better defined in relation to the others that have more common patterns.

Difference. This indicator assess the maximum variability among the frequent patterns that describe each cluster. If there is more than 1 pattern, the indicator is calculated by taking the maximum difference \(\delta _{max}\) between the support values of all frequent patterns belonging to \(F_1(S_l)\). If there is only 1 pattern, the value of the indicator will be its own support value and if there are no patterns, the value will be null, as described by the function such

As the variation is small between the values of the supports, we decided to increase the influence by the square root. We assume that greater variation between support values within the cluster is better for a more accurate semantic description. Given that there are less representative frequent patterns within the cluster, we expect that the difference in proportion of these in relation to the largest values are as large as possible.

SLEDge Index. The index of each of the j clusters is calculated from the median of the indicators obtained by the Eqs. (1) to (4) and the global index value (SLEDge) is the average of the cluster indices.

The index evaluates the quality of each grouping and of the grouping in general based on the semantic description potential given from the identified frequent patterns. The higher the index value, the better the clustering quality. The index can be easily obtained from a specific function (sledge_score), which is part of a package (sledge) built in Python and available in a repository on GitHubFootnote 2.

4 Synthetic Data

Consider the following scenario. Each data sample represents a person. Each feature indicates whether such a person has a certain skill. We assume that people can be grouped in clusters such that:

-

the presence/absence of a certain few features (called dependent features) are correlated; and

-

other features may be present/absent independently.

Dependent features vary among clusters. The rationale is that, for a particular group of people, some features are more important than others. These important features appear more frequent than other features in the group. Moreover, they are positively or negatively correlated. For example, IT professionals usually share common skills: programming, data base skills, etc. On the other hand, the presence of some pairs of skills may be rare. For example, IT professionals who program rarely use the same programming language or development environment.

Thus, let \(x_{ij}\) be the indicator whether the i-th person, belonging to cluster c(i), has the j-th feature. We want to generate a dataset such that

where \(0 < \epsilon < \lambda < 1\) and \(\mathcal {F}_{c(i)}\) is the set of dependents features of the cluster c(i).

We also want that the following statements to hold:

To generate data with such properties, each cluster c in the dataset is associated with an m by m matrix \(W^c\) whose elements are

and each sample \(\textbf{x}_i = (x_{i1}, x_{i2}, \dots , x_{im})\) such that \(c(i) = c\) is associated with a vector m-dimensional \(\textbf{a}^i\) whose elements are

Then, let

then, we generate the dependent features (\(j \in \mathcal {F}^{c(i)}\))

where the \(\varPhi \) is the cumulative distribution function of the standard normal distribution. The other features, \(j \not \in \mathcal {F}^{c(i)}\), are generated using

Remark: note that the product XY of random variables \(X, Y \sim \mathcal {N}(0, 1)\) is not a standard normal distribution. However, it is similar enough to use \(\varPhi \) to satisfy Eq. 5.

5 Set of Experiments

In this section we do some experiments to evaluate the performance of the proposed index, defined in Sect. 3, comparing with known indices described in Sect. 2. For this, we use synthetic data generated using the Categorical Binary Random Data (catbird) function, described in Sect. 4, which is part of the library called Random Data Generator Algorithm for Clustering (rdga_4k), built in Python and available in a GitHub repositoryFootnote 3.

For the generation of synthetic binary data, all possible combinations of the parameters listed in Table 1 are used. In the end, we obtain 3600 unique data sets from combinations of 2 parameters of \(\epsilon \), 2 of \(\lambda \), 3 different numbers of both clusters and features, 2 types of data balance for each number of clusters and 50 seeds. The criteria for choosing the values of \(\epsilon \) and \(\lambda \) are based on the different scattering scenarios of data points that the combinations of these parameters can simulate. In this way, we choose more extreme scenarios and two intermediate ones. For the K values, we try to evaluate three intervals of the same distance between 2 and 10 in order not to overload the tests, but still have interesting results to analyze. For the number of seeds, we believe that 50 seeds are enough for the analysis. In addition, during the tests, we identified that with half of the seeds the results did not change significantly.

As clustering algorithms for the experiments, we use k-means and two hierarchical clusters with average (HACa) and ward (HACw) linkages. Although they are based on distance, they are considered suitable for use on binary data sets [5].

The algorithms are applied to each of the data sets for different values of k, starting at 2 and increasing in intervals from 1 to 10. Thus, using the obtained labels we calculate each of the indices, except CNAK, as it uses its own clustering algorithm (k-means++) to suggest the value of k. Given that the CI index uses different labels in a consensus approach, we named KAW the algorithm responsible for calculating the index, given that it uses the three algorithms.

6 Results and Discussion

In this section, we present and discuss some results obtained after the experiments performed, as described in Sect. 5.

As criteria to determine the quality of the indices, we used two approaches: one based on the proportion of times each index identifies the correct number of clusters (Hit rate) and another on the Mean Relative Error (MRE), defined by the equation

where k represents the correct number of clusters in the data set and \(\tilde{k}\) the predicted number of clusters. Thus, we use the average of the MRE to analyze each of the indices and calculate the Standard Deviation (STD). To improve the visualization of the results, we highlight the best performances in bold.

Initially, analyzing the overall performance of the indices, presented by Table 2, we can observe that SLEDge obtains the best performance, i.e. the smallest MRE. We use the standard deviation (STD) in order to verify how much the MRE values deviate from the average. The Hit rate presents the ratio of the number of times the MRE is zero, i.e. the index correctly identifies the number of clusters. On both measures, SLEDge also performs best, identifying the correct number of clusters in 85% of the data sets and the lowest STD among the rest. In second, we can observe that the ASW obtains a very similar performance, even with a similar STD, despite the proportion of hits being lower than expected.

Given the different configurations of dispersion of the data points of the variables \(\epsilon \) and \(\lambda \), we expect that in the configuration with \(\epsilon = 0.1\) and \(\lambda = 0.9\) the MRE is smaller, since the clusters are more separated and the data points closer to each other. Unlike the setting \(\epsilon = 0.2\) and \(\lambda = 0.8\), where the opposite scenario is observed. As described by Saha and Mukherjee (2021) [18], as the proportion of overlapping between data points increases and the separation between clusters decreases, the performance of the indices tends to decrease as well. Despite varying the noise (\(\epsilon \)) and overlapping (\(\lambda \)) parameters to produce different dispersion configurations, these configurations are not significantly different to the point that sudden changes are verified within the same index.

As for the results, according to Table 3, we can see that the SLEDge index achieves the best performance, despite being just a little higher than the ASW index. CNAK, given that it is especially sensitive to overlapping and noise in the data sets [18], presented a much lower performance, but expected, given the presence of a large number of these characteristics in the test data sets. As for CUBAGE [7], the index performs better in more compact and separate clusters, which can be observed when we analyze the different scenarios.

As for the number of clusters tested, we identified that for most indices the MRE tends to increase directly proportional to the number of k, except for the DB index. Some indices in particular, such as CNAK, CH and ASW for example, the smaller the number of clusters in the data set, the greater the chances of success of the indices [18]. Thus, given Table 4, we can observe that the SLEDge index had the best performance, followed by the ASW, but that it decreases a little when the number of clusters increases.

In the studies we use as a reference, given the average performance of the classic cluster validation indices, when different algorithms are used to evaluate the experiments, it is observed that ASW usually performs better, followed by CH and finally DB, as shows Table 2. The same is also observed for different degrees of data point dispersion. When the k-means algorithm is used, the ASW remains the best evaluated, but the DB usually presents a better result than the CH index, as demonstrated by studies by [5, 16, 18, 19]. Thus, the indexes demonstrate to have influence on the type of clustering algorithm, except for the DB index that has better performance when k-means is applied instead of hierarchical, as shown in Table 5.

In addition to verifying the performance of the indices for different values of k, we decided to do some tests in scenarios where the data points in the clusters are balanced and unbalanced. Thus, for the same number of k, we generate both types of data sets. As we can see, the SLEDge and ASW indices demonstrate significant and very similar performances, however, the SLEDge, given the proportions, continues to perform well in cases where the data is unbalanced. The results can be observed from the Table 6.

At the end, for the statistical significance test, we select the best performing indices in each data set, given the different algorithms, we rank them (using the average rank in the tie) and apply the Wilcoxon-Mann-Whitney test [13]. As a result, given a significance level of 0.05, we reject the null hypothesis that the performance of all indices has some similarity.

7 Conclusion

The objective of this article is to propose an index that suggests the most adequate number of clusters based on the potential of semantic description, for applications in categorical data sets, especially in clusters of profiles based on competences.

For index validation, we also propose a synthetic data generator suitable for the application, with a similar Gaussian distribution and apparently well-defined clusters, despite some overlapping points. As test experiments we use 3600 datasets with different configurations and clustered by different algorithms. Finally, we compare the proposed index with other well-known indices in the literature and with different approaches regarding the prediction of the number of clusters.

As for the results, the proposed index identifies with significant precision the correct number of clusters in relation to the others, although other indices present a similar performance. From the analysis of the results and comparing with other works, we identified that the behavior of some indices were the same of those works when applied in the synthetic data of the proposed generator. Thus, we believe that the generator can produce adequate data for the internal cluster validation task.

For future work we intend to test the index in real data sets for external validation and to develop a clustering algorithm to be applied in competence-based data sets that cluster data points in close profiles. We intend to apply our method in Hippocampus to increase the capabilities of the DCTA’s People Management sector.

References

Agrawal, R., Srikant, R., et al.: Fast algorithms for mining association rules. In: Proceedings 20th International Conference on Very Large Data Bases, VLDB. vol. 1215, Santiago, Chile, pp. 487–499 (1994)

Arbelaitz, O., Gurrutxaga, I., Muguerza, J., Pérez, J.M., Perona, I.: An extensive comparative study of cluster validity indices. Pattern Recogn. 46(1), 243–256 (2013)

Caliński, T., Harabasz, J.: A dendrite method for cluster analysis. Commun. Stat.-Theory Methods 3(1), 1–27 (1974)

Davies, D.L., Bouldin, D.W.: A cluster separation measure. IEEE Trans. Pattern Anal. Mach. Intell. 2, 224–227 (1979)

Dimitriadou, E., Dolničar, S., Weingessel, A.: An examination of indexes for determining the number of clusters in binary data sets. Psychometrika 67(1), 137–159 (2002)

Dorman, K.S., Maitra, R.: An efficient k-modes algorithm for clustering categorical datasets. Stat. Anal. Data Mining ASA Data Sci. J. 15(1), 83–97 (2022)

Gao, X., Yang, M.: Understanding and enhancement of internal clustering validation indexes for categorical data. Algorithms 11(11), 177 (2018)

Guha, S., Rastogi, R., Shim, K.: Rock: a robust clustering algorithm for categorical attributes. Inf. Syst. 25(5), 345–366 (2000)

Hubert, L., Arabie, P.: Comparing partitions. J. Classif. 2, 193–218 (1985)

Inc., T.M.: Matlab version: 9.13.0 (r2022b) (2022). https://www.mathworks.com

Jain, A.K., Murty, M.N., Flynn, P.J.: Data clustering: a review. ACM Comput. Surv. (CSUR) 31(3), 264–323 (1999)

Liu, Y., Li, Z., Xiong, H., Gao, X., Wu, J.: Understanding of internal clustering validation measures. In: 2010 IEEE International Conference on Data Mining, pp. 911–916. IEEE (2010)

Mann, H.B., Whitney, D.R.: On a test of whether one of two random variables is stochastically larger than the other. Ann. Math. Stat., 50–60 (1947)

Pedregosa, F., et al.: Scikit-learn: machine learning in python. J. Mach. Learn. Res. 12, 2825–2830 (2011)

R Core Team: R: A language and environment for statistical computing (2021). https://www.R-project.org/

Rojas-Thomas, J.C., Santos, M.: New internal clustering validation measure for contiguous arbitrary-shape clusters. Int. J. Intell. Syst. 36(10), 5506–5529 (2021)

Rousseeuw, P.J.: Silhouettes: a graphical aid to the interpretation and validation of cluster analysis. J. Comput. Appl. Math. 20, 53–65 (1987)

Saha, J., Mukherjee, J.: Cnak: cluster number assisted k-means. Pattern Recogn. 110, 107625 (2021)

Ünlü, R., Xanthopoulos, P.: Estimating the number of clusters in a dataset via consensus clustering. Expert Syst. Appl. 125, 33–39 (2019)

Vinh, N.X., Epps, J.: A novel approach for automatic number of clusters detection in microarray data based on consensus clustering. In: 2009 Ninth IEEE International Conference on Bioinformatics and BioEngineering, pp. 84–91. IEEE (2009)

Witten, I.H., Frank, E., Hall, M.A., Pal, C.: Data Mining: Practical Machine Learning Tools and Techniques. Morgan Kaufmann, Burlington (2016)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

de Aquino, R.D.G., Curtis, V.V., Verri, F.A.N. (2023). A Clustering Validation Index Based on Semantic Description. In: Naldi, M.C., Bianchi, R.A.C. (eds) Intelligent Systems. BRACIS 2023. Lecture Notes in Computer Science(), vol 14197. Springer, Cham. https://doi.org/10.1007/978-3-031-45392-2_21

Download citation

DOI: https://doi.org/10.1007/978-3-031-45392-2_21

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-45391-5

Online ISBN: 978-3-031-45392-2

eBook Packages: Computer ScienceComputer Science (R0)