Abstract

Speaker diarization, the task of automatically identifying different speakers in audio and video, is frequently performed using probabilistic models and deep learning techniques. However, existing methods usually rely on direct analysis of the audio signal, which presents challenges for languages that lack established diarization methodologies, such as Portuguese. In this article, we propose a new approach to speaker diarization that leverages generative models for automatic speaker identification in Portuguese. We employed two generative models: one for refining the transcribed audio and another for performing the diarization task, as well as a model for initially transcribing the audio. Our method simplifies the diarization process by capturing and analyzing speaker style patterns from transcribed audio and achieves high accuracy without depending on direct signal analysis. This approach not only increases the effectiveness of speaker identification but also extends the usefulness of generative models to new domains. It opens a new perspective for diarization research, especially for the development of accurate systems for under-researched languages in audio and video applications.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

Diarization is the task of automatically identifying different speakers in speech, whether from audio or video [21]. It is based on two main processes: the recognition of who is speaking and the initial segmentation of the speech. Its applications are wide-ranging, including meeting transcriptions [3], call center assistance [5], and the development of personalized voice assistants [23].

Traditional methods for diarization employ probabilistic models, such as Gaussian Mixture Models (GMM) and Hidden Markov Models (HMM), which are effective at clustering audio according to voice patterns [12, 25]. Subsequently, other techniques based on Deep Learning (DL) have brought significant advances to the field. Examples include Recurrent Neural Networks (RNNs), which are adapted for sequential data [29], and Convolutional Neural Networks (CNNs), which are capable of analyzing audio directly from spectrograms [15].

Most recently, methods based on the Transformer architecture have demonstrated state-of-the-art performance across various domains [27, 28]. This success is largely attributed to self-attention, a mechanism that allows the model to focus on different parts of the input sequence when producing output while also capturing long-range dependencies in the input, regardless of their distance in the sequence. Large Language Models (LLMs) are built upon this architecture, utilizing very deep neural networks with stacked multi-head attention layers. Models such as GPT-4 [19], Llama 3 [1], and Claude [4], exhibit impressive capabilities in natural language understanding and in solving complex tasks via text generation.

Despite these advancements, there remains a gap in applying such approaches to audio and video tasks. Exploring the use of Transformer-based architectures for diarization presents a promising opportunity for achieving similar breakthroughs in audio and video processing.

In addition, language variations are also a challenge in diarization tasks. Most models are adapted to processing English content due to the abundance of materials in the language. Although Portuguese is a high-resource language for text-based tasks, it is considerably under-researched in audio and video applications, especially regarding models, transcription tools, and new diarization methods.

In this article, we investigate existing diarization methods and introduce a novel approach that addresses identified gaps in the field, leveraging generative models for automatic speaker identification in Portuguese videos and audio recordings. Unlike traditional methods that require direct analysis of audio signals, our approach captures and analyzes speaker style patterns from transcribed audio with high accuracy. This method not only simplifies the process but also extends the applicability of generative models beyond their traditional domains, opening up a promising new line of research. Furthermore, our approach is language-agnostic and can be extended to any language supported by both a generative language model and a transcription model.

2 Background

2.1 Speech Data

Speech data contains a variety of information that reflects the identity of the speaker, including distinctive characteristics of the vocal tract, excitation source, and behavioral features. These cues are important for speaker recognition [13] and form the basis for the features of a speaker. In our approach, the model identifies the speaker’s traits from the text.

Processing such information involves a set of challenges, such as high variability due to factors like accent, background noise, and recording quality [24]. Furthermore, the audio quality can be significantly affected when not recorded in controlled environments, such as outdoor settings, rooms with echo, or audio with simultaneous speech. Our approach aims to mitigate these issues by employing advanced deep learning-based preprocessing techniques for audio transcription and refinement, as detailed in Sect. 4.

Another issue is labeling, as accurate annotation of speech data is crucial but can be demanding and costly. Techniques like large-scale weak supervision are employed to address these challenges [24]. In our case, we used the labeling from the Brazilian Chamber of Deputies as a foundation, manually correcting some of its errors for better accuracy. The process is detailed in Subsect. 4.1.

Finally, considering that handling speech data must comply with privacy regulations and ethical guidelines due to its sensitive nature [18], it is important to note that we used already transcribed examples of sessions that are required to be public. Hence, the data already complied with the expected privacy standards.

2.2 Speech to Text

Speech to text (STT) is the process of converting speech signals to a sequence of words. The main technique based on STT is speech recognition [13]. There are several speech recognition models available, such as Whisper large v2 [24], Deepgram Nova-2, Amazon, Microsoft Azure Batch v3.1, and the Universal Speech Model [30]. In the present work, Whisper large v2 was employed to transcribe public sections from the Brazilian Chamber of Deputies.

The Whisper model, a state-of-the-art method for speech transcription, is a pre-trained model designed for automatic speech recognition (ASR) and speech translation. It was trained on 680,000 h of labeled speech data, utilizing large-scale weak supervision for annotation. In its process, all audio is resampled to 16,000 Hz and converted into an 80-channel log-magnitude Mel spectrogram.

Whisper encoder handles this input representation, whereas the decoder utilizes learned position embeddings and tied input-output token representations. It employs an encoder-decoder Transformer architecture, and both the encoder and decoder have the same width and number of transformer blocks. The architecture was trained to handle multiple tasks simultaneously, including voice activity detection and inverse text normalization.

STT approaches have influenced numerous fields. In medicine, these models can document interactions between patients and doctors [9]. For customer service, STT enables real-time transcription of customer interactions, resulting in enhanced service quality [16]. Additionally, STT offers tools for individuals with disabilities, improving accessibility [7].

In our study, we extended the application of STT to the political domain, focusing on diarizing speeches from the Chamber of Deputies. Furthermore, transcription captures the spoken words without distinguishing between speakers. Our goal task, diarization, goes beyond transcription by segmenting speech according to different speakers.

2.3 Diarization

Diarization involves assigning audio segments to the appropriate speaker. It can be accomplished through various techniques, but typically involves voice activity detection (VAD) to identify speech regions and clustering these segmented audio portions to associate them with individual speakers [8]. Diarization also often includes speaker embedding extraction, where speaker characteristics are encoded into numerical representations. These embeddings are then utilized in the clustering process to group audio segments based on speaker similarity [22].

Our methodology is an innovative approach to solving the challenges of the diarization task. It uses audio transcription and advanced prompt engineering steps, which are detailed in Sect. 4.

2.4 Large Language Models

Large language models (LLMs) based on Transformers [27] are an essential part of our study, as they were used for refining the transcriptions and identifying the speakers, as detailed in Sect. 4. These very deep neural networks are trained on extensive datasets, enabling them to learn complex patterns.

Each token within the model, whether it represents a whole word or part of one, is interpreted as a numerical embedding that encapsulates its semantic meaning [17]. Similar words are mapped to embeddings that are close to each other in a high-dimensional space, facilitating the model’s ability to comprehend semantic relationships. The breadth and quality of the training dataset influence the model’s knowledge and vocabulary. Therefore, a vast and high-quality training dataset is essential to ensure the model’s comprehension and accuracy.

3 Related Work

There are several diarization models that support the English language, including Pyannote [8]. Pyannote works by implementing tasks such as voice activity detection (VAD), speaker change detection (SCD), and overlapped speech detection (OSD), which assign a value of 1 if the event occurs and 0 otherwise. Additionally, re-segmentation is used to refine the boundaries and labels of speech segments, assigning a label of 0 when no speaker is active and the corresponding speaker’s label when they are speaking.

Google also offers a model widely used for speech diarization on its cloud platform, the Speech-to-Text API. Both versions, V1 and the recently introduced V2, can be used for this task in English.

Unfortunately, all aforementioned tools lack support for the Portuguese language. Therefore, we explored other multilingual options, such as Oracle Speech AI or Assembly AI, which we tested and compared against our approach.

The Oracle Speech AI service [20] offers a range of functionalities, including transcriptions utilizing the Whisper model, which supports over 50 languages. Additionally, it provides features such as text normalization, profanity filtering, and confidence scoring per word. Moreover, it has the ability to recognize between 2 and 16 distinct speakers. However, the lack of detailed information about the training of their diarization model makes it difficult to understand the internal mechanisms of the service.

On the other hand, the Assembly AI service [6] also includes STT functionalities such as speech recognition and diarization. The speech recognition models supported by this service are Best and Nano. However, there is also not much information available about the methodology employed in their diarization process.

4 Materials and Methods

4.1 Dataset

As mentioned, there are very few public videos with identified speakers in Portuguese. Hence, the resource used in our study was one of the few available, obtained from the “Ordem do dia” part of the sessions of the Brazilian Chamber of Deputies [10]. Given the source’s relevance, the data is from a trusted source, which makes it suitable for evaluating our method. Another reason why this dataset was chosen is due to its sudden changes in speakers. While a number of videos have speaker identification, most of these instances are singular. This means that when one person speaks, there is usually a pause before another person responds. On the other hand, our dataset does not follow a specific order when people are speaking; anyone can interrupt and start talking at any time. This characteristic makes it ideal for our purposes, as we want to develop a method that can skillfully handle complex conversational dynamics.

It is worth mentioning that some video transcripts may not be entirely accurate, as some instances may not follow the exact order of the speakers, having slightly different lines from the original ones. For example, in the session entitled “2nd Ordinary Legislative Session of the 57th Legislature, 109th Session”, at the 13:56 mark, the audio says: “pelo Rio de Janeiro no Rio de Janeiro, nós vamos ouvir ela, que foi Senadora, Governadora, Deputada Federal, que é a nossa Deputada.” The transcript’s meaning remains the same, but with some new words and is organized differently: “pelo Rio de Janeiro com a ex-Senadora, ex-Governadora e Deputada Federal.”

Given the inconsistencies between the actual audio and the written transcription, we used each of Whisper’s transcriptions as the ground truth. We then manually inserted the real speakers and their labels. That resulted in a better evaluation of our method and ensured that the transcriptions were adequate.

Despite these discrepancies, our final goal was speaker diarization. Therefore, we focused solely on identifying the speakers rather than ensuring perfect correspondence between the audio and transcripts, even though we manually inserted the speaker labels.

4.2 Diarization Strategy

Our approach involves two key stages: transcribing audio from videos and processing the resulting text with generative models to identify the speakersFootnote 1. We utilized Whisper Large-v2 [24] to transcribe eight parts of sessions from the Brazilian Chamber of Deputies held in 2024. Each part of the session lasted for 4 min, providing substantial audio data for analysis. For text refinement and speaker diarization, we employed the advanced GPT-4o generative model.

Furthermore, we developed a prompt engineering method to guide the models in both refining the transcriptions and generating the speaker predictions, aiming to refine the output and ensure speaker identification accuracy.

An overview of the process can be found in Fig. 1. It begins by transcribing the video with Whisper, and its output is then fed into a generative model. The model is then instructed to refine the text, removing misspelled words and correcting misplaced or contextually incorrect words. This refinement is achieved through prompt engineering, through which a detailed instructional prompt guides the model to make such changes and improve the quality of the text generated by Whisper.

Finally, the refined text is passed on to another generative model to identify the speaker in the diarization task. Although this second model’s architecture is the same as the model used for refinement, it is given a more robust prompt, allowing it to accurately identify the speaker.

Each speaker presents not only variations in their voice but also in how they communicate, using unique vocabulary and expressions. When applying a generative model to perform diarization on a transcribed text, it takes into account the individual characteristics of each speaker. Additionally, the model leverages the knowledge acquired during training to identify patterns in the dialogue structure, such as recognizing that a question followed by an answer typically indicates that one person asked the question and another provided the response.

4.3 Prompts

Generative models can perform poorly if they are not given precise instructions on the task they need to solve. Therefore, this work also developed a prompt engineering strategy to ensure robustness. The task instructions used in this work are specified in Figs. 2 and 3 for each generative model, respectively, text refinement and speaker identification. The specific prompts are available on our GitHub.

Each of these tasks is further elaborated in the following subsections.

Text Refinement. As mentioned, the first model functions as a “text refiner”. The prompt used for this task is detailed in Fig. 2. It is important to firstly include the context in which the model will operate. This is specified in the ‘Model role’, where the model is assigned a function or task at a higher level. Subsequently, it requires instructions regarding the data that needs refinement.

In our example, we explained the context of the video, instructing the model to act as a stenographer of sessions in the Chamber of Deputies and detailing the type of content of the transcript. In addition, we asked it to output an improved text, correcting Portuguese errors and editing wrong words.

For this step, to ensure consistent and accurate word correction, it is essential that the model has extended deterministic characteristics, avoiding variations in its responses. We therefore set the temperature to zero to eliminate any chance of variability. With both of these settings, the generative model could be effectively guided to provide the necessary text refinement.

Speaker Diarization. The second model developed is significantly more complex. The prompt used for the diarization task includes almost all the refinement instructions involved in the first model, but with the addition of steps, results, and examples, providing much more detailed guidance. The prompt used in this task is detailed in Fig. 3.

Specifically, the diarization prompt consists of three segments. Firstly, an example of a conversation relevant to the task is provided. In our case, we simulated a dialog between individuals in a courtroom setting. If a different context is needed, a more contextually relevant example could be employed. For example, in a restaurant scenario, it could involve a conversation between a waiter, a customer, and the restaurant owner. The second segment comprises the transcript itself, which serves as the base text for identifying the speaker. Finally, the third segment includes a set of instructions that the model must follow to perform the diarization task. They include both general instructions about the task and specific instructions for each of the potential speakers. With these three segments, we can achieve state-of-the-art performance in speaker identification tasks.

Another essential factor that influences the model’s prediction is the temperature setting. Unlike the first model, we made this one slightly less deterministic since it needs to “understand” and accurately predict the number of speakers. We, therefore, increased the temperature and sacrificed some of the quality of the response at each iteration. However, this compensation significantly improves performance, with the model obtaining nearly perfect responses every two iterations.

4.4 Evaluation

There are several methods to evaluate speaker diarization, including the diarization error rate (DER) [11], word error rate (WER), Jaccard error rate (JER) [26], and e-WER [2], among others, such as the extensions discussed by Galibert [14]. However, since our approach primarily uses text, we focused on evaluating the quality of diarization as a text classification method.

In this work, we used accuracy and F1-score as our primary metrics for assessing the quality of speaker prediction. Accuracy measures how often speakers are correctly labeled compared to the actual speaker for each sentence or word, reflecting the overall correctness of the classification across all speakers. The F1-score, being the harmonic mean of recall and precision, considers each correctly classified speaker. We specifically used the weighted F1-score to account for any imbalance among speakers.

We evaluated these metrics at both the sentence level and the word level, comparing speaker predictions with the ground truth for each phrase and word, respectively. This separation between levels was implemented to enhance the accuracy of classifications. Evaluating only at the sentence level may allow for multiple speakers within a sentence without significant penalties. Therefore, we incorporated word-level evaluation to address this issue and provide a more granular assessment.

To assess the variability and robustness of the models, we also utilized confidence intervals to statistically demonstrate these differences. Additionally, we employed the Wilcoxon Signed-Rank Test to show that the differences between models are statistically significant. In this test, we specified the “greater” mode, focusing on superior performance rather than using a two-sided test. This approach ensured that our evaluation emphasized the best-performing model.

5 Results

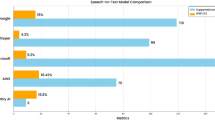

Our main results are shown in Table 1. It displays the average accuracy and F1-score for the proposed method and the baseline models, as well as the respective 95% confidence intervals.

The Oracle model exhibited the lowest performance, with both accuracy and F1-score consistently around 0.48 for both sentence-level and word-level evaluations. The Assembly model performed moderately well, with scores of approximately 0.81, yet it still fell short when compared to our model’s performance.

Our model demonstrated a significant improvement in accuracy, achieving 0.95 and 0.98 for sentence-level and word-level evaluations, respectively. This represents a substantial increase over both the Oracle and Assembly models. The improvement is similarly reflected in the F1-scores, where our model achieved 0.96 and 0.98 for sentence-level and word-level evaluations, respectively.

Additionally, the evaluation of the confidence intervals reveals that our approach exhibits the lowest variability, indicating high precision and consistency in the results. This suggests that our method is both robust and effective, especially when compared to the other models, which show greater variability in their confidence intervals.

The superior performance of our method across both evaluation metrics indicates that it generalizes well across different audio types, performing effectively on both high and low conversational recordings within our dataset.

To further validate these differences, we performed a Wilcoxon Signed-Rank Test to compare the results across the different models. The outcomes of this test are presented in Table 2.

As shown in Table 2, all results are statistically significant, providing strong evidence of differences between the models. The Wilcoxon test results allow us to state with 95% confidence that our model performs significantly better than both the Assembly model and the Oracle model at both the sentence and word levels, in terms of both accuracy and F1-score.

Additionally, our method has the unique ability to identify speakers based on their roles within a simple hierarchy (e.g., President and deputies). This functionality could be integrated into speaker-identified transcription tasks if required, though it would necessitate a robust example for effective implementation.

All transcriptions and predictions generated by our model are available on our GitHub.

5.1 Limitations

Our model is aligned with the Whisper-v2 model, which can transcribe audio segments up to 25 MB in size. Consequently, our diarization method achieves state-of-the-art results only for audio segments within this size limit. Although enhancing the model’s robustness is a potential area for future work, this study operates within the specified constraint.

Furthermore, since we leveraged generative models, the outputs may slightly vary with each prompt. We recommend iterating the process a few times to ensure the effectiveness of the task.

6 Conclusion

In this work, we introduced a new method for the diarization of audio and video. Our approach leverages the power of large language models to excel in this task, particularly in languages that lack established diarization methodologies. By prioritizing an understanding of textual context and cues over traditional audio signal-based speech diarization techniques, we achieved state-of-the-art results for the Portuguese language.

Our primary objective was to compare our method with other available methods for Portuguese, such as the Oracle model and AssemblyAI. The results demonstrated that our method significantly outperformed baseline methods, achieving higher accuracy and F1-scores (0.95 and 0.98 for sentence-level and 0.96 and 0.98 for word-level evaluations). Additionally, the narrow confidence intervals indicated high precision and consistency.

The statistical significance confirmed by the Wilcoxon Signed-Rank Test further supported the superior performance of our model. Moreover, its ability to identify speakers based on their roles within a hierarchy, such as distinguishing between a President and deputies, adds another layer of functionality that could be integrated into more complex diarization tasks.

For future work, we aim to investigate its behavior on longer audio segments and expand its evaluation by validating it on a wider variety of data. Additionally, we see potential in extending it to other languages, especially those lacking well-established diarization tools, such as Spanish and Italian, and including multilingual evaluations in our comparisons.

Furthermore, we aim to adapt the method for real-time applications and explore the integration of Retrieval-Augmented generation (RAG). By connecting the generative model to a website, RAG could enable the model to selectively search for relevant examples and content. In addition, we plan to perform ablation studies to better understand the contributions of each component within the proposed framework.

Finally, LLMs, with their vast parameter-rich architectures, hold the potential to tackle a wide array of challenges. In this sense, we believe this work aligns with the emerging trend of leveraging generative models for tasks beyond text, extending into areas such as video, audio, and beyond.

Notes

- 1.

In this article, we focus on diarizing videos; however, the process can be equally applied to audio inputs.

References

AI, M.: Llama 3 (2024). https://llama.meta.com/. Accessed 28 Aug 2024

Ali, A., Renals, S.: Word error rate estimation for speech recognition: e-WER. In: Gurevych, I., Miyao, Y. (eds.) Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), pp. 20–24. Association for Computational Linguistics, Melbourne, Australia (2018). https://doi.org/10.18653/v1/P18-2004, https://aclanthology.org/P18-2004

Anguera, X., Bozonnet, S., Evans, N., Fredouille, C., Friedland, G., Vinyals, O.: Speaker diarization: a review of recent research. IEEE Trans. Audio Speech Lang. Process. 20(2), 356–370 (2012)

Anthropic: Claude AI (2024). https://www.anthropic.com/claude. Accessed 28 Aug 2024

Aronowitz, H.: Speaker diarization using a priori acoustic information. In: INTERSPEECH, pp. 937–940 (2011)

AssemblyAI: Assemblyai (2024). https://www.assemblyai.com/. Accessed 30 Aug 2024

Bain, K., Basson, S., Faisman, A., Kanevsky, D.: Accessibility, transcription, and access everywhere. IBM Syst. J. 44(3), 589–603 (2005)

Bredin, H., et al.: Pyannote. Audio: neural building blocks for speaker diarization. In: ICASSP 2020-2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 7124–7128. IEEE (2020)

Chiu, C.C., et al.: Speech recognition for medical conversations. arXiv preprint arXiv:1711.07274 (2017)

Câmara dos Deputados do Brasil: Discursos e notas taquigráficas (2024). https://www.camara.leg.br/internet/sitaqweb/discursodireto.asp. Accessed 10 Jun 2024

Fiscus, J.G., Ajot, J., Michel, M., Garofolo, J.S.: The rich transcription 2006 spring meeting recognition evaluation. In: Renals, S., Bengio, S., Fiscus, J.G. (eds.) MLMI 2006. LNCS, vol. 4299, pp. 309–322. Springer, Heidelberg (2006). https://doi.org/10.1007/11965152_28

Fox, E.B., Sudderth, E.B., Jordan, M.I., Willsky, A.S.: The sticky HDP-HMM: bayesian nonparametric hidden Markov models with persistent states. Arxiv preprint 2 (2007)

Gaikwad, S.K., Gawali, B.W., Yannawar, P.: A review on speech recognition technique. Int. J. Comput. Appl. 10(3), 16–24 (2010)

Galibert, O.: Methodologies for the evaluation of speaker diarization and automatic speech recognition in the presence of overlapping speech. In: Interspeech (2013). https://doi.org/10.21437/Interspeech.2013-303

Hrúz, M., Zajíc, Z.: Convolutional neural network for speaker change detection in telephone speaker diarization system. In: 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 4945–4949 (2017). https://doi.org/10.1109/ICASSP.2017.7953097

Meng, J., Zhang, J., Zhao, H.: Overview of the speech recognition technology. In: 2012 Fourth International Conference on Computational and Information Sciences, pp. 199–202. IEEE (2012)

Mikolov, T., Chen, K., Corrado, G., Dean, J.: Efficient estimation of word representations in vector space. arXiv preprint arXiv:1301.3781 (2013)

Nautsch, A., et al.: Preserving privacy in speaker and speech characterisation. Comput. Speech Lang. 58, 441–480 (2019)

OpenAI: Gpt-4o (2023). https://openai.com/index/hello-gpt-4o/. Accessed 28 Aug 2024

Oracle Corporation: Oracle AI speech (2024). https://www.oracle.com/artificial-intelligence/speech/. Accessed 30 Aug 2024

Park, T.J., Kanda, N., Dimitriadis, D., Han, K.J., Watanabe, S., Narayanan, S.: A review of speaker diarization: recent advances with deep learning. arXiv preprint arXiv:2101.09624 (2021)

Park, T., Koluguri, N.R., Jia, F., Balam, J., Ginsburg, B.: Nemo open source speaker diarization system. In: INTERSPEECH, pp. 853–854 (2022)

Ponraj, A.S., et al.: Speech recognition with gender identification and speaker diarization. In: 2020 IEEE International Conference for Innovation in Technology (INOCON), pp. 1–4. IEEE (2020)

Radford, A., Kim, J.W., Xu, T., Brockman, G., McLeavey, C., Sutskever, I.: Robust speech recognition via large-scale weak supervision. In: International Conference on Machine Learning, pp. 28492–28518. PMLR (2023)

Reynolds, D.A.: Speaker identification and verification using Gaussian mixture speaker models. Speech Commun. 17(1), 91–108 (1995). https://doi.org/10.1016/0167-6393(95)00009-D, https://www.sciencedirect.com/science/article/pii/016763939500009D

Ryant, N., et al.: The second dihard diarization challenge: dataset, task, and baselines. arXiv preprint arXiv:1906.07839 (2019)

Vaswani, A., et al.: Attention is all you need. In: Guyon, I., et al. (eds.) Advances in Neural Information Processing Systems, vol. 30. Curran Associates, Inc. (2017). https://proceedings.neurips.cc/paper_files/paper/2017/file/3f5ee243547dee91fbd053c1c4a845aa-Paper.pdf

Wang, D., Xiao, X., Kanda, N., Yoshioka, T., Wu, J.: Target speaker voice activity detection with transformers and its integration with end-to-end neural diarization. arXiv preprint arXiv:2208.13085 (2022)

Weninger, F., Wöllmer, M., Schuller, B.: Automatic assessment of singer traits in popular music: Gender, age, height and race. In: Proceedings 12th International Society for Music Information Retrieval Conference, ISMIR 2011 (2011)

Zhang, Y., et al.: Google USM: scaling automatic speech recognition beyond 100 languages. arXiv preprint arXiv:2303.01037 (2023)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Boll, A.O., Puttlitz, L.M., Boll, H.O., Malossi, R.M. (2025). Beyond Audio Signals: Generative Model-Based Speaker Diarization in Portuguese. In: Paes, A., Verri, F.A.N. (eds) Intelligent Systems. BRACIS 2024. Lecture Notes in Computer Science(), vol 15412. Springer, Cham. https://doi.org/10.1007/978-3-031-79029-4_17

Download citation

DOI: https://doi.org/10.1007/978-3-031-79029-4_17

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-79028-7

Online ISBN: 978-3-031-79029-4

eBook Packages: Computer ScienceComputer Science (R0)