Abstract

The classification of images of elementary mathematical function graphs presents a significant challenge in computer vision because of the varied shapes and formats of each function's curves. This classification is crucial for identifying function graphs, which has important applications in text and mathematical symbol recognition technologies that aid visually impaired individuals by providing access to printed content. In educational environments, this identification helps obtain the analytical expression of drawn graphs, facilitating the extraction of information from educational materials. This article investigates various convolutional neural network (CNN) architectures to identify the most suitable model for classifying images of elementary mathematical function graphs. We compare our model with other renowned architectures, such as ResNet, MobileNet, and EfficientNet, using a custom dataset of function graphs. Our experiments show that the proposed architecture significantly outperforms general-purpose networks, achieving an accuracy of \(98.51\%\) in classifying elementary mathematical function graphs.

1 Introduction

Classifying images of elementary mathematical function graphs is challenging in computer vision because of the varied shapes and formats of these curves [7]. Identifying the type of a function graph is a preliminary step toward identifying its analytic expression. Determining the analytic expression of graphs of elementary mathematical functions can be used in technologies for recognizing text and mathematical symbols, assisting visually impaired individuals by enabling them to access information contained in printed material [10]. In educational environments, such identification can aid in extracting information from educational materials, supporting research and the study of mathematical concepts.

In this article, we propose a solution for classifying images of graphs of elementary mathematical functions using convolutional neural networks (CNNs). Our goal is to find a CNN architecture that best suits this problem.

This article is structured as follows: Sect. 2 discusses related work regarding the use of CNNs for image classification; Sect. 3 describes the proposed methodology; Sect. 4 presents results and discussions; and Sect. 5 lists the study’s conclusions.

2 Related Work

In the literature, there are few works demonstrating techniques for extracting elements within images of graphs of elementary mathematical functions. In [9], a method is proposed that divides the graph image into regions: regions outside the axes (such as titles, axis labels, and scales) and contour regions of the line. Scale information is obtained from the regions outside the axes, and the coordinate values of each line graph are also extracted from these regions. Finally, this information is integrated to convert graph data into numerical information. However, the method in [9] was used only for graphs with a single configuration regarding the positioning of graph elements within the image.

In [21], an offline image segmentation approach based on the technique from [9] is proposed. Images are segmented into connected components and characters. The characters represent the analytical expressions typically accompanying the image, while the connected components encompass everything from the axes to the function curves. Subsequently, elements of connected components are grouped to identify the function curve. With this approach, the author managed to separate 15 out of 20 images and to group 104 out of 112 components within the images. The study highlights the need to improve the accuracy of its results.

Another study, aimed at developing an automatic system for transforming function graphs into tactile graphics that can be used by visually impaired individuals, was conducted by [5]. The authors present a method for extracting graphical elements in mathematical function graphs. The method involves identifying all components of the images (curves, lines, axes, equations, dashed lines, points, etc.) and dividing them into primitive elements (x and y axes, main lines, and curves). After identifying the primitive elements, these elements are combined to form graphical elements. In an experiment with 33 scanned images from science textbooks, the authors were able to extract 502 primitive elements. Of these, 373 were successfully combined into 115 graphical elements, while another 129 were not.

Another approach suggested by [18] uses the MathGraphReader, a tool that extracts data from graphs and creates alternative textual descriptions. Using text processing, image processing, plotting, and mathematical concepts, the authors built a tool that allows visually impaired students to access graph information interactively. The technique involves first detecting the origin of the axes and then tracing the intersection points of the axes with the function curve to find inflection points. However, the study does not address cases where the dashed line of the graph curve is displayed without intersecting the axes.

The methods mentioned so far propose offline extraction techniques, where the extraction of elements is performed on previously provided images. A different method was suggested by [19], which proposes obtaining the analytic expression from a function constructed online, i.e., when the graph curve is drawn in real-time. This method captures the pixels of the curve on the screen and then uses polynomial regression to find a curve that best fits the pixel points. Although the method yields good results, it has not been evaluated on a large dataset to verify accuracy. Additionally, it is limited to polynomial expressions and is restricted to the range \( x \in [-1, 1] \).

CNNs have revolutionized the field of computer vision and have been widely used in a broad range of applications [1, 3], such as image recognition, object detection, image segmentation, among others [2, 15, 16]. However, the use of CNNs for classifying images of graphs of elementary mathematical functions has been limited due to the scarcity of datasets [4]. The proposed methodology encompasses not only the creation of a dataset that contains different types of functions and their variations but also conducts an investigation into CNN architectures applied to the recognition of mathematical function patterns in graphs. Additionally, it compares these results with a set of renowned architectures applied to more generalizable computer vision tasks.

3 Methodology

This section describes the generation of a dataset of images of elementary function graphs, the rationale behind the chosen deep learning model architecture, and how the model’s performance was assessed and benchmarked against other existing methods.

3.1 Process of Data Generation

The lack of open public datasets addressing the problem of classifying function graphs motivated the development of a systematic dataset generation process. Figure 1 illustrates the process of creating a dataset containing images of various elementary functions to be modeled.

To create the dataset, we first listed various types of elementary functions, as depicted in Fig. 2: linear (2a), quadratic (2b), cubic (2c), exponential (2d), logarithmic (2e), square root and cubic root (2f), sine (2g), cosine (2h), tangent (2i), and cotangent (2j). We randomly generated analytic expressions whose graphs are visible within the range \(y \in [-5, 5]\); the domain was set to \(x \in [-2\pi , 2\pi ]\) for trigonometric functions and \(x \in [-5, 5]\) for the other functions. Subsequently, a script was created to automate the plotting of these functions using Winplot [20], a free software for plotting two-dimensional and three-dimensional graphs of mathematical functions, including explicit, implicit, parametric, and polar function graphs.
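The idea of keeping only randomly generated expressions whose graphs are visible in the plotting window can be sketched in plain Python. This is an illustration only, not the authors' Winplot script: the coefficient ranges, the `min_visible` threshold, and the function names `sample_quadratic`, `visible_fraction`, and `generate_visible_quadratics` are all assumptions introduced here.

```python
import math
import random


def sample_quadratic(rng):
    """Draw hypothetical coefficients for y = a*x^2 + b*x + c with a != 0."""
    a = rng.choice([-1, 1]) * rng.uniform(0.1, 3.0)
    b = rng.uniform(-3.0, 3.0)
    c = rng.uniform(-3.0, 3.0)
    return a, b, c


def visible_fraction(f, x_min, x_max, y_min=-5.0, y_max=5.0, n=400):
    """Fraction of n evenly spaced sample points whose y value lies in the window."""
    inside = 0
    for i in range(n):
        x = x_min + (x_max - x_min) * i / (n - 1)
        try:
            y = f(x)
        except (ValueError, ZeroDivisionError, OverflowError):
            continue  # x is outside the function's domain (e.g. log of x <= 0)
        if y_min <= y <= y_max:
            inside += 1
    return inside / n


def generate_visible_quadratics(count, min_visible=0.2, seed=0):
    """Rejection-sample coefficient triples until `count` visible graphs are found.

    min_visible is an assumed acceptance threshold, not a value from the paper.
    """
    rng = random.Random(seed)
    kept = []
    while len(kept) < count:
        a, b, c = sample_quadratic(rng)
        f = lambda x, a=a, b=b, c=c: a * x * x + b * x + c
        if visible_fraction(f, -5.0, 5.0) >= min_visible:
            kept.append((a, b, c))
    return kept


if __name__ == "__main__":
    for a, b, c in generate_visible_quadratics(3):
        print(f"y = {a:.2f}x^2 + {b:.2f}x + {c:.2f}")
    # The same check works for functions with a restricted domain.
    print(visible_fraction(math.log, -5.0, 5.0))
```

The same rejection loop would apply to the other classes by swapping the sampler and, for trigonometric functions, the domain \([-2\pi, 2\pi]\).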

Some functions, such as exponential and logarithmic functions, do not offer many variations within the specified range and therefore could generate a limited number of images. To avoid class imbalance, we limited the number of generated images to 1,450 per class. In total, 14,500 images were generated with a resolution of 128\(\,\times \,\)128 pixels and saved with names like \(\texttt {<class>\_<expression>.png}\).

We divided the generated images into training (80%, 11,600 images), validation (10%, 1,450 images), and test (10%, 1,450 images) sets. Each set contains all classes with a balanced number of samples. The images were flattened into one-dimensional vectors and labeled using one-hot encoding. Finally, the dataset was shuffled and saved in serialized Pickle files.
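A minimal sketch of a class-balanced 80/10/10 split with one-hot labels is shown below. It reproduces the set sizes stated above, but the helper names `one_hot` and `stratified_split`, the seed, and the use of integer class indices are assumptions made for illustration; the authors' own script may differ.

```python
import random
from collections import defaultdict


def one_hot(index, num_classes):
    """Encode a class index as a one-hot list."""
    vec = [0] * num_classes
    vec[index] = 1
    return vec


def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Return (train, val, test) lists of sample indices, balanced per class."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for label in sorted(by_class):
        idxs = by_class[label]
        rng.shuffle(idxs)
        n_train = round(fractions[0] * len(idxs))
        n_val = round(fractions[1] * len(idxs))
        train += idxs[:n_train]
        val += idxs[n_train:n_train + n_val]
        test += idxs[n_train + n_val:]  # the remainder goes to the test set

    # Shuffle each subset so that classes are interleaved.
    for part in (train, val, test):
        rng.shuffle(part)
    return train, val, test


# 10 classes with 1,450 images each, as described in the text.
labels = [c for c in range(10) for _ in range(1450)]
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
print(one_hot(labels[train[0]], 10))
```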

3.2 Selection and Definition of the CNN Architecture

Due to the scarcity of studies on deep neural network architectures for classifying images of elementary function graphs, we compare the performance of the proposed architecture with architectures that have excelled on broader datasets. Three CNN architectures were selected for comparison, known for their strong performance in image classification tasks on datasets such as ImageNet [8] and CIFAR-10 [14]:

- ResNet [11]: Designed to ensure that learning is not hindered by adding more layers, using shortcuts or residual connections to facilitate optimization. Variants include ResNet-50 (50 layers), ResNet-101 (101 layers), and ResNet-152 (152 layers). ResNet-50 was chosen for comparison as it achieved 93% to 94% accuracy on the CIFAR-10 dataset.

- MobileNet [12]: Designed for mobile and low-power devices, known for being lightweight and having a low computational cost. Variants include V1 (depthwise separable convolutions), V2 (inverted residual blocks and linear bottlenecks), and V3 (automated neural architecture search and additional optimizations). MobileNetV3 was chosen for comparison as it achieved 91% to 92% accuracy on the CIFAR-10 dataset.

- EfficientNet [22]: Designed to optimize the balance between accuracy and computational efficiency, using a compound scaling method that simultaneously scales depth, width, and resolution of the network. Variants range from EfficientNet-B0 (more efficient) to B7 (more layers, filters, and resolution). EfficientNet-B0 was chosen for comparison as it achieved approximately 95% accuracy on the CIFAR-10 dataset.

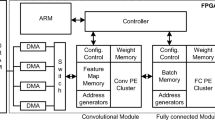

The CNN architecture proposed for classifying elementary function graph images was named F-Graphs. The hyperband algorithm [17] was used to perform an optimized search of neural network hyperparameters that enhance its performance in image classification tasks.

From the initializations made, the hyperband algorithm returned the CNN architecture illustrated in Fig. 3. The network consists of three convolutional layers activated with the Rectified Linear Unit (ReLU) function, combined with max pooling layers after the convolutional layers. In the fully connected layer, only one hidden layer with 128 neurons activated with the sigmoid function was included. At the output, a dense layer with 10 neurons corresponding to the 10 classes of the dataset and activated with the softmax function produces the probability distribution for the output classes.
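To make the layer sequence described above concrete, the sketch below traces tensor shapes and parameter counts through three convolution and max-pooling stages, a 128-unit hidden layer, and a 10-unit output layer. The text does not state the filter counts, kernel sizes, padding, or number of input channels, so the values used here (32/64/128 filters, 3×3 kernels, no padding, 2×2 pooling, one grayscale channel) are placeholders. The resulting totals therefore describe this hypothetical configuration only and are not the figures reported in Table 1.

```python
def conv2d(shape, filters, kernel=3):
    """Shape and parameter count of an unpadded, stride-1 convolution."""
    h, w, c = shape
    params = (kernel * kernel * c + 1) * filters  # weights plus one bias per filter
    return (h - kernel + 1, w - kernel + 1, filters), params


def max_pool(shape, size=2):
    """Shape after non-overlapping max pooling (no trainable parameters)."""
    h, w, c = shape
    return (h // size, w // size, c)


def dense(units_in, units_out):
    """Parameter count of a fully connected layer with biases."""
    return (units_in + 1) * units_out


def trace(input_shape=(128, 128, 1), filters=(32, 64, 128)):
    """Return per-layer (name, output shape, parameters) rows and the total."""
    rows, total, shape = [], 0, input_shape
    for f in filters:  # assumed filter counts, not taken from the paper
        shape, p = conv2d(shape, f)
        rows.append(("conv + ReLU", shape, p))
        total += p
        shape = max_pool(shape)
        rows.append(("max pooling", shape, 0))
    flat = shape[0] * shape[1] * shape[2]
    p = dense(flat, 128)
    rows.append(("dense + sigmoid", (128,), p))
    total += p
    p = dense(128, 10)
    rows.append(("dense + softmax", (10,), p))
    total += p
    return rows, total


rows, total = trace()
for name, shape, params in rows:
    print(f"{name:16s} {str(shape):16s} {params:>10,d}")
print("total parameters:", f"{total:,d}")
```

In this placeholder configuration almost all parameters sit in the first dense layer, which illustrates how the size of the flattened feature map, rather than the number of convolutional layers, can dominate the memory footprint of a small CNN.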

3.3 Model Training and Evaluation

F-Graphs was created using the Keras library for Python [6], which simplifies the construction and training of deep learning models. Figure 4 shows the loss behavior and accuracy evolution during the training of the proposed model. Each architecture was trained from five different initializations for up to 40 epochs, with early stopping based on the validation loss as the stopping criterion.
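The early-stopping rule can be illustrated independently of any framework. The sketch below assumes a patience-based criterion; the `patience` and `min_delta` values and the function name `early_stopping_epoch` are hypothetical, since the text only states that the validation loss was monitored.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch), both 0-based.

    Training stops once the validation loss has failed to improve by more
    than min_delta for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch
    # The loss kept improving often enough: all epochs were used.
    return len(val_losses) - 1, best_epoch


# Made-up validation losses, used only to exercise the rule.
losses = [0.9, 0.6, 0.5, 0.52, 0.51, 0.53, 0.4]
print(early_stopping_epoch(losses, patience=3))
print(early_stopping_epoch(losses, patience=5))
```

With a small patience the run stops before the late improvement in the last epoch is seen, which is the usual trade-off when choosing this value.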

To optimize training, the Adaptive Moment Estimation (Adam) optimizer [13] and a learning rate of \(9 \times 10^{-5}\) were used. Loss estimation during training for the 10 classes was performed using categorical cross-entropy. The metric used to evaluate performance during training was accuracy.
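For reference, the categorical cross-entropy named above has the following standard form for a single image. The symbols are introduced here and are not taken from the paper: \(y_c\) is the one-hot target and \(p_c\) the softmax output for class \(c\).

\[
\mathcal{L}(y, p) = -\sum_{c=1}^{10} y_c \log p_c
\]

Because \(y\) is one-hot, the sum reduces to \(-\log p_k\), where \(k\) is the true class, so the loss is small only when the network assigns a high probability to the correct function type.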

The results will be shown through comparisons between the accuracy, precision, recall, and F1-score metrics averaged over the 5 runs of the model fit on the data, as well as the memory size used to load the model and the total number of parameters for each architecture. Visualizations have also been built to show the model’s predictions between classes.
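The four metrics can all be derived from a confusion matrix, as the sketch below shows. It assumes macro averaging over classes (an unweighted mean of the per-class values), which the text does not specify, and the function names are illustrative.

```python
def confusion_matrix(y_true, y_pred, num_classes):
    """m[t][p] counts samples of true class t that were predicted as class p."""
    m = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        m[t][p] += 1
    return m


def macro_metrics(m):
    """Return (accuracy, precision, recall, F1); the last three are macro-averaged."""
    n = len(m)
    total = sum(sum(row) for row in m)
    accuracy = sum(m[i][i] for i in range(n)) / total
    precisions, recalls, f1s = [], [], []
    for i in range(n):
        tp = m[i][i]
        predicted = sum(m[r][i] for r in range(n))  # column sum
        actual = sum(m[i])                          # row sum
        p = tp / predicted if predicted else 0.0
        r = tp / actual if actual else 0.0
        f = 2 * p * r / (p + r) if (p + r) else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f)
    return accuracy, sum(precisions) / n, sum(recalls) / n, sum(f1s) / n


# Toy labels for three classes, used only to exercise the functions.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
matrix = confusion_matrix(y_true, y_pred, 3)
print(matrix)
print(macro_metrics(matrix))
```

The off-diagonal entries of the same matrix show which pairs of classes are confused with each other.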

4 Results

Table 1 presents the average accuracy, precision, recall, and F1-score over the five runs of the compared models, together with the memory size and number of parameters of each model. The proposed architecture achieved its best performance with the configuration described in Subsect. 3.2, reaching \(98.51\%\) accuracy on the test data. In contrast, ResNet-50, MobileNet-V3, and EfficientNet-B0 returned low accuracies of \(10.57\%\), \(13.02\%\), and \(11.09\%\), respectively.

The proposed model F-Graphs also achieved better results in precision, recall, and F1-score, with values of \(98.43\%\), \(98.41\%\), and \(98.41\%\), respectively. However, F-Graphs is surpassed in terms of memory size and number of parameters by MobileNet-V3, which has the smallest memory footprint at 38.63 MB and the lowest number of parameters at 10,126,560. This is expected, since MobileNet-V3 is specifically designed for mobile devices, which have limited computational resources.

ResNet-50 is a deep CNN with multiple convolutional layers designed to extract more features from images [11]. The number of convolutional layers influences the model's learning process, leading to inferior performance in classifying images of function graphs. In terms of memory size, it occupies approximately 138 MB, making it heavier due to the large number of network parameters.

Similarly, the number of convolutional layers heavily influenced the generalization power of MobileNet-V3 and EfficientNet-B0, although these architectures outperformed ResNet-50. To improve the performance of ResNet-50, MobileNet-V3, and EfficientNet-B0 on the dataset of elementary function images, parameter tuning would be necessary.

Although the proposed model F-Graphs achieved an accuracy of 98.51%, it misclassified some functions, as illustrated in Fig. 5. For example, the cosine and sine functions are confused quite often, since they are very similar, especially when translated horizontally.

Another important observation is that the F-Graphs model tends to predict the linear class too often. Functions such as the exponential function (Eq. 1), shown in Fig. 6a, and the cubic function (Eq. 2), shown in Fig. 6b, were often misclassified as linear. This is because the coefficients of the functions were generated randomly, sometimes forming analytical expressions whose graphs resemble a line. To address this issue, we replaced some of the functions that tended to be confused with less ambiguous ones.

5 Conclusion

In this work, we aimed to find an optimal architecture for the classification of elementary mathematical function graphs. CNNs are excellent for solving image classification tasks. However, different datasets with different characteristics may require a specific architecture for the problem being solved.

Images of graphs of elementary mathematical functions tend to have few features when compared to images of other objects in the real world. In an image of a mathematical function graph, the convolution process involves extracting features to identify the vertical line (y-axis), horizontal line (x-axis), and the curve of the function. With fewer features, the model does not require many convolutional layers for feature extraction, making the learning process lighter and faster.

Despite having a larger size and number of parameters compared to MobileNet-V3, the proposed model F-Graphs could be beneficial for incorporation into mobile assistive technologies that aid in extracting information from printed texts for visually impaired people.

The proposed model F-Graphs demonstrated good performance on the test data. Although the model made errors in classifying some ambiguous graphs, we do not expect this to be an issue in deployment environments, since scenarios of this kind are unlikely to occur. One limitation of the model arises when two or more graphs are sketched on the same axes: in some real-world scenarios, the model tends to misclassify functions when the image contains multiple analytical expressions.

Future work should focus on extracting additional information from images, such as identifying the analytical expressions of functions, to provide more comprehensive details about graph behaviors.

References

Algan, G., Ulusoy, I.: Image classification with deep learning in the presence of noisy labels: a survey. Knowl.-Based Syst. 215, 106771 (2021). https://doi.org/10.48550/arXiv.1912.05170

Bakhtiarnia, A., Zhang, Q., Iosifidis, A.: Efficient high-resolution deep learning: a survey. ACM Comput. Surv. 56 (2024). https://doi.org/10.1145/3645107

Bhatt, D., et al.: CNN variants for computer vision: history, architecture, application, challenges and future scope. Electronics 10(20) (2021). https://doi.org/10.3390/electronics10202470

Cao, P., Zhu, Z., Wang, Z., Zhu, Y., Niu, Q.: Applications of graph convolutional networks in computer vision. Neural Comput. Appl. 34(16), 13387–13405 (2022). https://doi.org/10.1007/s00521-022-07368-1

Chen, J., Nagaya, R., Takagi, N.: Development of a method for extracting graph elements from mathematical graphs. In: 2012 IEEE International Conference on Systems, Man, and Cybernetics (SMC), pp. 2921–2926. IEEE (2012)

Chollet, F., et al.: Keras (2015). https://keras.io

Dai, J., Lin, S.: Image recognition: current challenges and emerging opportunities (2018). https://www.microsoft.com/en-us/research/lab/microsoft-research-asia/articles/image-recognition-current-challenges-and-emerging-opportunities/. Accessed 20 Jun 2024

Deng, J., Dong, W., Socher, R., Li, L.J., Li, K., Fei-Fei, L.: ImageNet: a large-scale hierarchical image database. In: 2009 IEEE Conference on Computer Vision and Pattern Recognition, pp. 248–255. IEEE (2009). https://doi.org/10.1109/cvpr.2009.5206848

Fuda, T., Omachi, S., Aso, H.: Recognition of line graph images in documents by tracing connected components. Syst. Comput. Japan 38(14), 103–114 (2007). https://doi.org/10.1002/scj.10615

Goncu, C., Marriott, K.: Accessible graphics: graphics for vision impaired people. In: Cox, P., Plimmer, B., Rodgers, P. (eds.) Diagrams 2012. LNCS (LNAI), vol. 7352, pp. 6–6. Springer, Heidelberg (2012). https://doi.org/10.1007/978-3-642-31223-6_5

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 770–778 (2016). https://doi.org/10.1109/CVPR.2016.90

Howard, A.G., et al.: MobileNets: efficient convolutional neural networks for mobile vision applications. Comput. Res. Repository (CoRR) (2017). https://doi.org/10.48550/arXiv.1704.04861

Kingma, D.P., Ba, J.: Adam: a method for stochastic optimization. arXiv (2014). https://doi.org/10.48550/arXiv.1412.6980

Krizhevsky, A., Hinton, G.: Learning multiple layers of features from tiny images. Tech. Rep. 0, University of Toronto, Toronto, Ontario (2009). https://www.cs.toronto.edu/~kriz/learning-features-2009-TR.pdf

Krizhevsky, A., Sutskever, I., Hinton, G.E.: ImageNet classification with deep convolutional neural networks. Commun. ACM 60(6), 84–90 (2017). https://doi.org/10.1145/3065386

LeCun, Y., Bengio, Y., Hinton, G.: Deep learning. Nature 521(7553), 436–444 (2015). https://doi.org/10.1038/nature14539

Li, L., Jamieson, K., DeSalvo, G., Rostamizadeh, A., Talwalkar, A.: Hyperband: a novel bandit-based approach to hyperparameter optimization. J. Mach. Learn. Res. (2016). https://doi.org/10.48550/ARXIV.1603.06560

Nazemi, A., Fernando, C., Murray, I., A. McMeekin, D.: Accessible and navigable representation of mathematical function graphs to the vision-impaired. Comput. Inf. Sci. 9(1), 31 (2015). https://doi.org/10.5539/cis.v9n1p31

Phyo, Y.K.: Graphical functions made from an effortless sketch. MS paint that returns equations. https://towardsdatascience.com/graphical-functions-made-from-an-effortless-sketch-266ccf95c46d (2020). Accessed 8 Apr 2024

Souza, S.D.A.: Usando o winplot. http://www.mat.ufpb.br/~sergio/winplot/winplot.html (2004). Accessed 08 Apr 2024

Takagi, N.: Mathematical figure recognition for automating production of tactile graphics. In: 2009 IEEE International Conference on Systems, Man and Cybernetics, pp. 4651–4656 (2009). https://doi.org/10.1109/ICSMC.2009.5346749

Tan, M., Le, Q.V.: EfficientNet: rethinking model scaling for convolutional neural networks. Computing Research Repository (CoRR) (2019). https://doi.org/10.48550/ARXIV.1905.11946

Acknowledgments

This research was funded by the Brazilian Federal Agency for Support and Evaluation of Graduate Education (CAPES) and developed with the support of the Center for High-Performance Distributed Computing of Pará (CCAD) of the Federal University of Pará (UFPA).

Ethics declarations

Disclosure of Interests

The authors have no competing interests to declare that are relevant to the content of this article.

Dataset Availability

The dataset for this project is publicly available to facilitate transparency, reproducibility, and further research through the following link: https://github.com/jvianna07/fgraphs_dataset/.

The script used is available here: https://github.com/jvianna07/functions_graphs/

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Viana, J., Matos, H., Mota, M., Santos, R. (2025). Classifying Graphs of Elementary Mathematical Functions Using Convolutional Neural Networks. In: Paes, A., Verri, F.A.N. (eds) Intelligent Systems. BRACIS 2024. Lecture Notes in Computer Science(), vol 15412. Springer, Cham. https://doi.org/10.1007/978-3-031-79029-4_19

DOI: https://doi.org/10.1007/978-3-031-79029-4_19

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-79028-7

Online ISBN: 978-3-031-79029-4

eBook Packages: Computer Science, Computer Science (R0)