Abstract

Atrial fibrillation (AF) is a common arrhythmia that originates in the heart’s upper chambers and can lead to serious complications like strokes and systemic embolism due to atrial thrombi. To mitigate these risks, anticoagulants such as warfarin and rivaroxaban are frequently prescribed. This study examines how known biomarkers behave under treatment with either warfarin or rivaroxaban. We developed high-performance models (AUROC > 0.94) to distinguish patient subpopulations based on their treatment. Additionally, we built models (AUROC > 0.97) to differentiate individuals with AF from a control group without the condition. Using synthetic data generation for training augmentation and explainable machine learning techniques, we analyzed biomarker behavior, uncovering distinct patterns based on whether patients received warfarin or rivaroxaban. Our approach provides valuable insights into the critical factors influencing biomarker variations across different treatments, enhancing our understanding of their roles in AF management.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

Atrial fibrillation (AF) is the most prevalent cardiac arrhythmia, impacting millions of people worldwide [31]. Its prevalence escalates with age, affecting approximately 10% of individuals by the age of 80 [12]. Additionally, underlying cardiac conditions, such as coronary artery disease, significantly heighten the risk of developing AF [12]. AF is characterized by erratic electrical impulses in the atria, leading to an irregular and rapid heart rhythm that disrupts blood flow, causing stasis and increasing the risk of thromboembolic events such as ischemic stroke and systemic embolism [16]. This condition is associated with a fourfold increase in mortality risk, as evidenced by a population-based study in Korea [19].

The primary goal in managing AF is to reduce the risk of thromboembolism and stroke, primarily through anticoagulation therapy [3, 17, 20]. Warfarin, a traditional anticoagulant, has been extensively used for this purpose but poses challenges due to its food and drug interactions and the need for frequent monitoring [26]. Maintaining therapeutic levels of warfarin requires regular international normalized ratio (INR) checks and frequent dose adjustments to mitigate bleeding risks. Recently, direct oral anticoagulants (DOACs), such as rivaroxaban, have emerged as more favorable alternatives due to their improved pharmacological profiles [1, 26].

Machine learning has become a valuable tool in healthcare [9], aiding in the development of robust risk prediction algorithms and enhancing our understanding of disease-related features to improve patient outcomes [33]. These techniques are particularly effective in uncovering patterns and associations in large datasets that may be challenging to discern manually [28]. However, the application of machine learning in healthcare demands a focus on explainability due to the high-stakes nature of medical decision-making.

Explainability involves articulating the internal mechanisms and outputs of an algorithm in a way that is comprehensible to humans. For healthcare professionals to trust and effectively utilize these tools in clinical settings, they must understand the rationale behind a model’s predictions. For example, when a machine learning model forecasts a high risk of stroke in an AF patient, clinicians need to grasp the factors influencing this prediction to make well-informed treatment decisions [14, 21]. Trust in these models is built through transparency and interpretability, aligning model predictions with clinical knowledge and patient data [6]. Similarly, clear and transparent communication of these predictions fosters patient adherence to treatment plans [10].

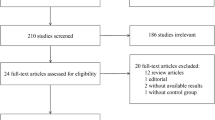

In this study, we built a dataset comprising biomarkers from 195 individuals, including 109 in the control group (without AF) and 86 patients with AF. Among the AF patients, 47 were using warfarin and 39 were using rivaroxaban. The biomarkers used comprise patient data from characterization, blood count, lipid profile, coagulation, inflammatory and cardiac diseases of the individuals. We built high-performance machine learning models using this data (i) to identify biomarkers that differentiate individuals with AF from those without, and (ii) to compare biomarker responses between patients on different anticoagulant therapies (warfarin \(\times \) rivaroxaban). Additionally, we conducted ablation studies to better understand the significance of two major biomarker groups (coagulation and inflammatory) given their known strong correlation with AF [11, 23]. In summary, we utilize synthetic data generation [32], machine learning algorithms [18], and explainable machine learning [22] to create a framework that provides insights into AF pathophysiology and the effects of anticoagulant therapy.

2 Related Work

Machine learning is changing healthcare by enabling the analysis of vast amounts of patient data to uncover patterns and insights that improve diagnosis, treatment, and patient outcomes [2, 29, 34]. In [25], authors used convolutional neural networks to predict the evolution of Alzheimer’s disease, and explainable machine learning to explain patterns within this evolution. In [5], authors employed Raman Spectroscopy to identify traces of proteins and developed machine learning models to find which proteins are more related to melanoma. In [4], authors developed a machine learning model for predicting the risk of death in COVID-19 patients. These models employ blood count data and present high prognostic performance. Authors in [7] developed a new ensemble algorithm to predict the evolution of pain relief in patients suffering from chronic pain.

Specific patterns in patients with AF were already studied using typical procedures in the biomedical literature. In [11], authors showed that platelet and coagulation activation profiles in patients with AF were reduced by warfarin or rivaroxaban use and that patients with AF using rivaroxaban were less hypocoagulable than patients using warfarin. This means that endogen thrombin potential was less reduced in patients using rivaroxaban compared to those taking warfarin. Similarly, authors in [23] showed that patients with AF (both warfarin and rivaroxaban groups) presented increased levels of inflammatory cytokines in comparison with controls. The use of rivaroxaban was associated with decreased levels of inflammatory cytokines in comparison with warfarin. On the other hand, patients with AF using rivaroxaban presented increased levels of the chemokines (MCP-1 in comparison with warfarin users; MIG and IP-10 in comparison with controls). Finally, authors in [8] showed that Hp levels and Hp1-Hp2 polymorphism are not associated with AF, and authors in [24] provided a basis for the study of inflammatory markers that had not yet been well addressed in AF, especially IP-10, besides supporting evidence about molecules that had previously been associated with the disease.

We employ the aforementioned findings to select the biomarkers to be included in our models. To the best of our knowledge, our study is the first to use machine learning models to provide a deep understanding of AF in terms of its known biomarkers and given the possible therapies.

3 Materials and Methods

Let D be a dataset comprising biomarkers from patients, including a control group without AF and patients diagnosed with AF. Specifically, D consists of individuals in the control group and AF patients who are either on warfarin or rivaroxaban. Further, let \(X = \{ x_1, x_2, \ldots , x_n \}\) be a feature vector containing the biomarker values for each individual. Missing values in numerical features are imputed using the mean of the feature within the corresponding class, and categorical features are imputed using the mode of the feature within the same class. Formally, for a numerical feature \(x_i\) in class \( C_k \), the imputation is given by:

For a categorical feature \( x_i \) in class \( C_k \), the imputation is:

The task is formulated as a binary classification problem, in which we are concerned with two distinct contexts: classifying individuals based on the presence or absence of AF, and classifying AF patients based on their medication (warfaring \(\times \) rivaroxaban). The goal is to learn a function \(f(X; \phi ) \rightarrow \{0, 1\}\), where X represents the biomarker features and \(\phi \) represents the learning parameters of the model. We perform ablation experiments to evaluate and better understand the impact of different biomarker subsets on classification performance. Thus, the evaluated models are trained on three different sets of variables:

-

All biomarkers are used in our models.

-

All biomarkers, excluding coagulation biomarkers.

-

All biomarkers, excluding inflammatory biomarkers.

This ablation approach allows us to understand how different groups of biomarkers contribute to the classification task and identify which features are more informative for distinguishing between the patient groups.

3.1 Data

The dataset for our analysis comprises biomarkers from 195 individuals, including 109 in the control group (without AF) and 86 patients with AF. Among the patients, 47 were using warfarin and 39 were using rivaroxaban. The biomarkers used comprise patient data from characterization, blood count, lipid profile, coagulation, inflammatory and cardiac diseases of the individuals and can be found in Table 1.

Prior to further analysis, missing feature values for patients were imputed based on their respective classes (control, rivaroxaban, warfarin). Our data was divided into two distinct datasets for separate analysis. The first subset \( D_1 \) segregates individuals based on the presence or absence of atrial fibrillation:

The second subset, \( D_2 \), includes only AF patients and further classifies them based on their medication:

As previously mentioned, for numerical features imputation was performed using the mean value of the feature within the class in either \( D_1 \) or \( D_2 \). For categorical features, the mode of the values within the class was used. By imputing missing values based on class-specific statistics we obtain results more closely related with the underlying data distribution, resulting in more realistic and reliable values.

Synthetic Data and Training Augmentation: To improve the visualization and analysis of explanatory factors, we generate synthetic data and create an expanded dataset \(D'\) using two techniques: Gaussian Copula [27, 30] and Tabular Variational Autoencoder (TVAE) [32]. The Gaussian Copula method models the dependency structure between variables by transforming the original data into a space where the variables follow a multivariate normal distribution. This involves computing the empirical cumulative distribution function for each variable and transforming them using their inverse of the standard normal to produce data that follows a standard normal distribution. We then proceed to estimate the covariance matrix of these transformed variables to capture their dependencies. New samples are generated from a multivariate normal distribution and are transformed back to the original space using the inverse of the initial transformations. Formally, let \(X = (X_1, X_2, \ldots , X_n)\) represent the original data and \(U = (U_1, U_2, \ldots , U_n)\) be the transformed variables that follow a standard normal distribution. The Gaussian Copula C is defined as:

in which \(\Phi \) represents the CDF of the standard normal distribution, \(\Phi ^{-1}\) its inverse, and \(\Phi _{\Sigma }\) is the CDF of the multivariate normal distribution with the covariance matrix.

In contrast, TVAE uses a variational autoencoder to learn the underlying data distribution and generate synthetic samples. It consists of an encoder and a decoder structure, in which the encoder \(q_{\phi }(Z|X)\) maps the input data X to a latent representation Z using a neural network characterized by a mean \(\mu \) and variance \(\sigma ^2\). Formally:

The decoder \(p_{\theta }(X|Z)\) then maps the latent representation Z back to the original data space, attempting to reconstruct the original data \(\hat{X}\):

We create synthetic data points by sampling from the latent space and feeding it to the decoder. For each technique, synthetic samples equivalent to \(33\%\) of the total number of individuals in each subset are generated, resulting in the expanded dataset \(D'\). While synthetic data generation can raise concerns about the representativeness of the data distribution and the potential for introducing bias in real-world applications [15], validating the model on real datasets helps mitigate these issues and ensures its reliability in real-world scenarios.

3.2 Model Training

For each comparison, the model is trained on three different sets of variables: one including all biomarkers, one excluding coagulation markers, and one excluding inflammatory markers. The target variable corresponds to the group that the individual belongs to. Our models were built using an implementation of the LightGBM algorithm [18]. It works by generating a model made up of hundreds of simple decision trees, these trees are integrated into a unified model through boosting [13].

Explainability: As a key point in any health domain application, we employ explainability tools to better understand the decision-making process of the model. Specifically, we employ SHAP [22]. We represent how model \(f(X; \phi )\) explains a phenomenon as a d-dimensional vector \(E(f(X)) = {e_1, e_2, . . . , e_d }\) showing which features are contributing to the model’s prediction. Specifically, \(e_i\) takes a value that corresponds to the influence that the respective feature \(x_i\) had on the model decision. This influence is quantified by calculating SHAP values, which provide a unified measure of feature importance across different instances.

SHAP values are calculated using Shapley values from cooperative game theory, ensuring that each feature’s contribution to the prediction is fairly attributed. For each prediction, the SHAP values are computed by considering all possible combinations of feature inputs, thus capturing the complex interactions between features. In particular, SHAP contains three desirable properties:

-

Additivity: the explanations are truthfully explaining the model and each evaluated instance. This means that the sum of the SHAP values for all features equals the model output for that instance, ensuring that the attributions are consistent with the model’s actual predictions.

-

Consistency: if a model changes such that some feature’s contribution increases or stays the same regardless of the other features, that feature’s attribution should not decrease. This property ensures that SHAP values remain stable and interpretable even if the underlying model changes.

-

Missingness: A missing feature gets an attribution of zero. That is, a feature not present in a model does not impact its output or explanation.

4 Experimental Results

Model performance was evaluated by the area under the receiver operating characteristic curve (AUROC). It is used to show how much the model is capable of distinguishing between competing classes (i.e., patient groups). In our experiments we employed stratified 5-fold cross-validation by dividing the dataset into five parts and, at each iteration, we use four folds for training and the remaining one as the testing set for the model. No hyperparameter tuning was performed. Following, we discuss the results of each study.

4.1 Atrial Fibrillation and Control Group

Table 2 shows the model performance in differentiating AF patients from the control group, with all models achieving high AUROC values. Literature suggests that physiological patterns in AF patients are markedly different from those in the control group, which the trained models were able to learn. Given the high performance of our models, our main interest lies in understanding these distinctive patterns. We used SHAP values for this analysis.

When using SHAP to find the most significant features using all biomarkers, we found a great impact of IL-4, Peak, MPEs/ul, IL-2, ETP, F1+2, LDLc, IFN, TRIG, IL-10, TGF\(\beta \), and HDLc in model prediction (Fig. 1 (Top)). If we exclude the coagulation biomarkers the most important features are IL-4, IL-10, IFN, Total Cholesterol, IL-6, sICAM-1, LDLc, MCP-1, IL-2, IL-8, GDF-15, and HDLc (Fig. 1 (Middle)). Furthermore, without inflammation biomarkers the most impacting features are Peak, TAFI, F1+2, Lagtime, TRIG, MPEs/ul, PAI-1, Total Cholesterol, sICAM-1, GDF-15, MPP/ul and ETP (Fig. 1 (Bottom)).

These findings suggest that inflammation, lipid metabolism, and coagulation processes are all critical in the model’s predictions. Without coagulation biomarkers, cytokines and lipid measures remain important, indicating that inflammation and lipid metabolism are still significant. Additional markers like sICAM-1 and MCP-1 become more prominent, highlighting the role of inflammation and immune response. Without inflammation biomarkers, coagulation and lipid measures (such as TAFI, F1+2, Lagtime, PAI-1) are significant. This shift underscores the importance of blood clotting mechanisms and lipid metabolism.

4.2 Warfarin \(\times \) Rivaroxaban

Table 3 shows the model performance in differentiating AF patients on warfarin from AF patients on rivaroxaban. Again, all models achieved very high AUROC values, and given the high performance of our models, our main interest lies in understanding the distinctive patterns in both groups. We used SHAP values for this analysis.

When using SHAP to find the most significant features using all biomarkers, we found a great influence of IL-2, F1+2, ETP, GDF-15, sICAM-1, RANTES, TNF, t-PA, IL-4, TGF\(\beta \), PLT, and IL-8 in model prediction (Fig. 2 (Top)). When excluding the coagulation biomarkers the most impacting features became IL-2, IL-4, Genotype Hp (1), IL-6, TGF\(\beta \), IFN, Haptoglobin (Hp), MCP-1, PLT, Dyslipidemia (hypercholesterolemia), TRIG and HAS (Fig. 2 (Middle)). Furthermore, without inflammation biomarkers the most impating features are F1+2, ETP, ttPeak, t-PA, Peak, Dyslipidemia (hypercholesterolemia), TRIG, PLT, Total Cholesterol, HDLc, LDLc, and TAFI (Fig. 2 (Bottom)).

5 Conclusion

This study underscores the potential of machine learning models in providing a deeper understanding of atrial fibrillation (AF). By leveraging ML models, we successfully identified and analyzed key biomarkers that differentiate AF patients from healthy individuals and elucidate the effects of different anticoagulant therapies. Our high-performance models demonstrate robust predictive capabilities, offering significant insights into the distinct biomarker patterns associated with warfarin and rivaroxaban treatments. The application of explainable machine learning methods enabled us to uncover critical factors influencing biomarker behavior, providing a deeper understanding of the pathophysiological processes in AF and the impacts of specific treatments (we can clearly see patients using rivaroxaban as having lower inflammation markers in contrast to those using warfarin). These insights contribute to a more nuanced view of how anticoagulant therapy affects biomarker profiles, which can inform clinical decision-making and optimize treatment strategies. Finally, synthetic data generation proved invaluable in augmenting our dataset, enhancing model robustness, and ensuring reliable predictions despite potential limitations in real-world data availability. This approach highlights the efficacy of combining data augmentation techniques with machine learning to overcome data scarcity issues common in clinical research.

References

Alberts, M., Chen, Y.W., Lin, J.H., Kogan, E., Twyman, K., Milentijevic, D.: Risks of stroke and mortality in atrial fibrillation patients treated with rivaroxaban and warfarin. Stroke 51(2), 549–555 (2020)

Amador, T., Saturnino, S., Veloso, A., Ziviani, N.: Early identification of ICU patients at risk of complications: regularization based on robustness and stability of explanations. Artif. Intell. Med. 128, 102283 (2022)

Amin, A., Houmsse, A., Ishola, A., Tyler, J., Houmsse, M.: The current approach of atrial fibrillation management. Avicenna J. Med. 6(01), 8–16 (2016)

Araújo, D.C., Veloso, A.A., Borges, K.B.G., das Graças Carvalho, M.: Prognosing the risk of COVID-19 death through a machine learning-based routine blood panel: a retrospective study in brazil. Int. J. Med. Inf. 165, 104835 (2022)

Araújo, D.C., et al.: Finding reduced raman spectroscopy fingerprint of skin samples for melanoma diagnosis through machine learning. Artif. Intell. Med. 120, 102161 (2021)

Bayer, S., Gimpel, H., Markgraf, M.: The role of domain expertise in trusting and following explainable ai decision support systems. J. Decis. Syst. 32(1), 110–138 (2022)

Costa, A.B.D., Moreira, L., Andrade, D.C.D., Veloso, A., Ziviani, N.: Predicting the evolution of pain relief: Ensemble learning by diversifying model explanations. ACM Trans. Comput. Heal. 2(4), 36:1–36:28 (2021)

Costa, L.B.X., et al.: Evaluation of serum haptoglobin levels and hp1-hp2 polymorphism in the haptoglobin gene in patients with atrial fibrillation. Molec. Biol. Rep. 49, 7359–7365 (2022)

Deo, R.C.: Machine learning in medicine. Circulation 132(20), 1920–1930 (2015)

DiMatteo, M.R., et al.: Physicians’ characteristics influence patients’ adherence to medical treatment: results from the medical outcomes study. Health Psychol. 12(2), 93 (1993)

Duarte, R.C.F., et al.: Thrombin generation and other hemostatic parameters in patients with atrial fbrillation in use of warfarin or rivaroxaban. J. Thrombosis Thrombolysis 51, 47–57 (2021)

Fang, M.C., Chen, J., Rich, M.W.: Atrial fibrillation in the elderly. Am. J. Med. 120(6), 481–487 (2007)

Freund, Y., Schapire, R.E.: A decision-theoretic generalization of on-line learning and an application to boosting. J. Comput. Syst. Sci. 55(1), 119–139 (1997)

Gerlings, J., Jensen, M.S., Shollo, A.: Explainable ai, but explainable to whom? an exploratory case study of xai in healthcare. In: Handbook of Artificial Intelligence in Healthcare: Practicalities and Prospects, vol. 2 pp. 169–198 (2022)

Giuffrè, M., Shung, D.L.: Harnessing the power of synthetic data in healthcare: innovation, application, and privacy. npj Dig. Med. 6(1), 186 (2023)

Hindricks, G., et al.: ESC Scientific Document Group: 2020 ESC guidelines for the diagnosis and management of atrial fibrillation developed in collaboration with the European association for Cardio-Thoracic surgery (EACTS): the task force for the diagnosis and management of atrial fibrillation of the European society of cardiology (ESC) developed with the special contribution of the European heart rhythm association (EHRA) of the ESC. Eur. Heart J. 42(5), 373–498 (2021)

Joglar, J.A., et al.: 2023 acc/aha/accp/hrs guideline for the diagnosis and management of atrial fibrillation: a report of the American college of cardiology/American heart association joint committee on clinical practice guidelines. Circulation 149(1), e1–e156 (2024)

Ke, G., et al.: Lightgbm: a highly efficient gradient boosting decision tree. In: Guyon, I., Luxburg, U.V., Bengio, S., Wallach, H., Fergus, R., Vishwanathan, S., Garnett, R. (eds.) Advances in Neural Information Processing Systems, vol. 30. Curran Associates, Inc. (2017)

Lee, E., et al.: Mortality and causes of death in patients with atrial fibrillation: a nationwide population-based study. PLoS ONE 13(12), e0209687 (2018)

Lip, G.Y., Tse, H.F.: Management of atrial fibrillation. Lancet 370(9587), 604–618 (2007)

Lötsch, J., Kringel, D., Ultsch, A.: Explainable artificial intelligence (xai) in biomedicine: making ai decisions trustworthy for physicians and patients. BioMed. Inf. 2(1), 1–17 (2021)

Lundberg, S.M., Lee, S.I.: A unified approach to interpreting model predictions. In: Guyon, I., Luxburg, U.V., Bengio, S., Wallach, H., Fergus, R., Vishwanathan, S., Garnett, R. (eds.) Advances in Neural Information Processing Systems, vol. 30. Curran Associates, Inc. (2017)

Martins, G.L., et al.: Comparison of inflammatory mediators in patients with atrial fibrillation using warfarin or rivaroxaban. Front. Cardiovasc. Med. 7, 47–57 (2020)

Martins, G.L., et al.: Evaluation of new potential inflammatory markers in patients with nonvalvular atrial fibrillation. Int. J. Molec. Sci. 24, 3326 (2023)

Nigri, E., Ziviani, N., Cappabianco, F.A.M., Antunes, A., Veloso, A.: Explainable deep cnns for mri-based diagnosis of alzheimer’s disease. In: 2020 International Joint Conference on Neural Networks, IJCNN 2020, pp. 1–8. IEEE (2020)

Patel, M.R., et al.: Rivaroxaban versus warfarin in nonvalvular atrial fibrillation. New Engl. J. Med. 365(10), 883–891 (2011)

Patki, N., Wedge, R., Veeramachaneni, K.: The synthetic data vault. In: 2016 IEEE International Conference on Data Science and Advanced Analytics (DSAA), pp. 399–410 (2016)

Phillips, P.J., et al.: Four principles of explainable artificial intelligence (2021)

Silcox, C., et al.: The potential for artificial intelligence to transform healthcare: perspectives from international health leaders. npj Dig. Med. 7(1) (2024)

Sun, Y., Cuesta-Infante, A., Veeramachaneni, K.: Learning vine copula models for synthetic data generation (2018)

Wyndham, C.R.: Atrial fibrillation: the most common arrhythmia. Tex. Heart Inst. J. 27(3), 257 (2000)

Xu, L., Skoularidou, M., Cuesta-Infante, A., Veeramachaneni, K.: Modeling tabular data using conditional gan. In: Wallach, H., Larochelle, H., Beygelzimer, A., d’Alché-Buc, F., Fox, E., Garnett, R. (eds.) Advances in Neural Information Processing Systems, vol. 32. Curran Associates, Inc. (2019)

Zuin, G., et al.: Prediction of sars-cov-2-positivity from million-scale complete blood counts using machine learning. Commun. Med. 2(1), 72 (2022)

Zuin, G., et al.: A modified louvain approach for medical community detection using geographic data. In: 2023 IEEE 36th International Symposium on Computer-Based Medical Systems (CBMS), pp. 143–148. IEEE (2023)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Ethics declarations

Disclosure of Interests

The authors have no competing interests to declare that are relevant to the content of this article.

Rights and permissions

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Veloso, A., Zuin, G., Sena, L. (2025). Explaining Biomarker Response to Anticoagulant Therapy in Atrial Fibrillation: A Study of Warfarin and Rivaroxaban with Machine Learning Models. In: Paes, A., Verri, F.A.N. (eds) Intelligent Systems. BRACIS 2024. Lecture Notes in Computer Science(), vol 15412. Springer, Cham. https://doi.org/10.1007/978-3-031-79029-4_33

Download citation

DOI: https://doi.org/10.1007/978-3-031-79029-4_33

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-79028-7

Online ISBN: 978-3-031-79029-4

eBook Packages: Computer ScienceComputer Science (R0)