Abstract

Many-Objective Optimization Problems (MaOPs) present a significant challenge due to the increased complexity associated with optimizing more than three conflicting objectives. Traditional Pareto-based algorithms often struggle to scale effectively with the number of objectives, leading researchers to explore alternative approaches. This study investigates the impact of parent selection strategies on the performance of the Fuzzy Decomposition-Based Multi/Many-Objective Evolutionary Algorithm (FDEA). The FDEA uses a fuzzy decomposition approach to divide a MaOP into subproblems, each solved individually to improve the adaptability and precision of solutions. The study compares the FDEA with three selection methods: binary tournament based on Pareto dominance, binary tournament using crowding distance, and a combination of Pareto dominance and crowding distance. Empirical evaluations were conducted using the DTLZ and WFG benchmark problem sets, with 2, 3, 5, 8, 10, and 15 objectives. The results indicate that incorporating quality indicators into the selection process improves the efficiency and effectiveness of the FDEA, especially in high-dimensional optimization scenarios. However, no variant was superior in all contexts. The study highlights the potential for methodological advances in Indicator-Based Mating Selection, emphasizing the need for robust and scalable algorithms capable of handling the complexities of MaOPs.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

Multi-Objective Optimization Problems (MOPs) are those that require the simultaneous optimization of two or more objective functions. These objectives are usually conflicting, resulting in a set of solutions rather than a single optimal solution. To find this set of solutions, Pareto optimality theory is generally used [1].

When a MOP has more than three objectives, it is called a Many-Objective Optimization Problem (MaOP). MaOPs present greater complexity compared to traditional MOPs due to the increase in the number of objectives [1]. This added complexity makes it harder to accurately approximate the Pareto front, making it challenging to differentiate between high-quality and low-quality solutions. In addition, maintaining the convergence and diversity of solutions becomes more challenging, requiring advanced mechanisms to prevent premature convergence and ensure diversity.

In the context of Multi-Objective Evolutionary Algorithms (MOEAs), the Fuzzy Decomposition-Based Multi/Many-Objective Evolutionary Algorithm [11] stands out by combining problem decomposition with the principles of fuzzy logic. The FDEA employs a fuzzy decomposition approach to divide the MOP into a set of subproblems, each solved individually.

An essential component of the FDEA, as well as other evolutionary algorithms, is crossover selection. Crossover selection is a crucial step aimed at identifying pairs of individuals that can generate offspring with desirable characteristics [14]. This process is fundamental to ensure compatibility and complementarity of genetic traits, thereby enhancing the generation of superior solutions.

Related research indicates that only a few MOEAs utilize the crossover selection strategy, highlighting the need for more studies and methodological advances in this area. Indicator-based methods, such as R2-IBEA and MaOEA/IGD, demonstrate the effectiveness of crossover selection based on quality indicators to improve algorithm performance in various optimization scenarios.

This paper presents a study on using different crossover selection mechanisms in the FDEA algorithm. Four variants of the FDEA were compared, each with different selection strategies, to assess their impact on the algorithm’s efficiency and effectiveness in solving complex MaOPs. The variants are: FDEA, the original variant that uses random selection; FDEA_TB, which employs binary tournament selection based on Pareto dominance; FDEA_CD, which uses binary tournament selection based on crowding distance; and FDEA_CDTB, which combines Pareto dominance and crowding distance to make the final selection.

The results indicate that incorporating quality indicators into the selection process enhances the efficiency and effectiveness of the FDEA, particularly in high-dimensional optimization scenarios. However, it is important to note that no single variant proved superior across all tested contexts, suggesting that the optimal selection strategy may vary depending on the specific characteristics of the problem at hand. This study underscores the potential for methodological advancements in Indicator-Based Mating Selection.

The remaining of this paper is organized as follows: some backgroud concepts are presented in Sect. 2. Section 3 presents the Related Work. In Sect. 4 an empirical study comparing the modification of the crossover selection method on the FDEA algorithm and Sect. 5 presents the conclusions.

2 Background

2.1 Many-Objective Optimization

A Multi-Objective Optimization Problem (MOP) is a type of problem that requires the simultaneous optimization of two or more objective functions. These objectives are usually in conflict, so these problems do not present only one optimal solution but a set of them. This set of solutions is usually found using Pareto optimality theory.

A solution \({\textbf {x}} \in \varOmega \) is optimal relative to \(\varOmega \) if and only if there is no \({\textbf {x'}} \in \varOmega \) that dominates x in all objectives [1]. In other words, a solution is Pareto optimal if there is no other solution that is better or equal in all objectives and better in at least one simultaneously.

In multi-objective optimization, it is inappropriate to use the term “optimal solution” since individual objectives often conflict. Instead, a set of sufficiently good solutions is sought, representing a balance between the different objectives.

When a multi-objective optimization problem (MOP) has more than three objectives, it is classified as a Many-Objective Optimization Problem (MaOP). MaOPs are more complex than MOPs due to the increased number of objectives, which makes approximating the Pareto front more difficult and reduces selective pressure, complicating the distinction between high and low-quality solutions. Additionally, maintaining convergence and solution diversity becomes more challenging, requiring advanced mechanisms to prevent premature convergence and preserve diversity [1].

Recently, there has been a growing interest in enhancing the performance of MOEAs in MaOPs [2]. The most popular methodologies include: (1) MOEAs that use relaxed Pareto dominance relations, applying alternative preferences to increase selection pressure [3]; (2) decomposition-based MOEAs, which transform an MOP into several single-objective optimization problems using scalarization functions; and (3) indicator-based MOEAs, which use quality indicators to evaluate solution sets and define selection mechanisms, aiming to optimize the population indicator value throughout the evolutionary process.

2.2 Quality Indicators

Evaluating the performance of a multi-objective optimizer is challenging, but there are three main requirements: convergence, which seeks results close to the true Pareto set; diversity, which looks for solutions widely distributed in the objective space to allow comprehensive choices; and pertinence, which directs convergence and diversity to relevant areas of the search space where the decision-maker has the most interest. These characteristics are desired to ensure the optimizer is efficient and provides meaningful options to the decision-maker in multi-objective problems [4].

Quality indicators are functions that evaluate approximation sets and can be used to define selection mechanisms in multi-objective evolutionary algorithms (MOEAs). The idea is to optimize the value of the population indicator throughout the evolutionary process [2].

Indicator-based selection involves identifying good parental solutions based on quality indicator values. This type of selection mechanism does not aim to solve or approximate the subset selection problem based on indicators; instead, it attempts to produce promising solutions to accelerate the evolutionary process.

Crowding Distance is a metric that estimates the density of solutions around a specific solution. It is calculated as the average of the normalized absolute distances between the objective function values of two adjacent solutions in each objective. The population is sorted according to the values of the objective function, and frontier solutions are given an infinite distance to ensure their selection. The distances of intermediate solutions are normalized between the minimum and maximum values of each objective, ensuring that all solutions have the same impact on the calculation of the metric. This metric is crucial for comparing solutions that belong to the same level of non-dominance, favoring those in less “crowded” regions [12].

IGD. Inverted Generational Distance (IGD) measures the average distance from each reference point in the objective space to the nearest solution in the approximation set. This metric considers both convergence and the diversity of solutions, ensuring that the solutions not only approach the true Pareto front but are also well distributed along it. IGD is useful to assess how well the solutions cover the entire Pareto front [5].

Hipervolume (HV) is a crucial metric in multi-objective optimization used to evaluate the quality of solution sets by measuring the volume of the objective space dominated by the solutions. It assesses both convergence to the Pareto front and the dispersion of solutions within the objective space, with higher HV values usually indicating better performance. Calculating HV can be computationally intensive and requires a carefully chosen reference point, as this significantly impacts the results. Overall, HV provides a comprehensive measure of an optimization algorithm’s effectiveness by considering both the proximity of solutions to the Pareto front and their distribution across the objective space [2].

2.3 Benchmark Problems

Benchmark problems are widely used in the literature to evaluate the performance of multi-objective optimizers and compare algorithms. They are specifically designed for evaluation purposes and offer several advantages, such as known true Pareto fronts for precise assessment and control over difficulty levels, objectives, and decision variables, which facilitates comparisons between algorithms [9]. By isolating the optimizer’s performance, these problems eliminate potential real-world interferences, providing an objective evaluation of the algorithms’ capabilities, strengths, and limitations. However, it is important to note that benchmark problems are simplifications of real-world challenges, and the results obtained may not be directly transferable to real-world situations. Among the most commonly used benchmark problems in academia are the DTLZ and WFG problem sets, which are widely recognized and accepted for evaluating multi-objective optimization algorithms.

DTLZ. The DTLZ benchmark problem set, formulated by Deb et al. [10], was designed to provide a comprehensive and rigorous evaluation of multi-objective optimization algorithms, particularly those based on evolutionary principles. These problems are scalable, allowing researchers to adjust the number of objectives and decision variables to test an algorithm’s ability to manage increasing complexities. This scalability is essential because challenges in multi-objective optimization typically increase with the problem’s dimensionality, both in terms of search space and the complexity of the Pareto front.

Each function within the DTLZ set is constructed to evaluate distinct characteristics of evolutionary algorithms. For example, DTLZ1 tests the convergence of algorithms to a linear Pareto front, serving as an effective starting point for initial efficacy assessments. Conversely, DTLZ2 examines the ability of algorithms to converge to a concave Pareto front, necessitating a robust search for optimal solutions within a more complex solution space. Additionally, DTLZ3 increases complexity by incorporating multiple local optima into the DTLZ2 function. These problems, featuring non-convex and discontinuous Pareto front surfaces, challenge algorithms to find suitable solutions while navigating the complex topology of the solution space. Consequently, the DTLZ problems are invaluable to the scientific community, providing a robust foundation for systematic benchmarking and objective comparison of diverse multi-objective optimization strategies.

WFG. The WFG (Walking Fish Group) benchmark problem set is designed to evaluate the efficiency of multi-objective optimization algorithms. These problems are particularly valuable because they encompass a wide range of Pareto front shapes, allowing for the assessment of algorithm performance in various complex scenarios. WFG problems utilize a vector of underlying parameters, x, linked to a simple base problem that defines the fitness space. This vector results from sequential transformations from an initial parameter vector, z, adding layers of complexity such as multi-modality and non-separability.

All WFG problems follow a specific format that involves minimizing a set of functions with parameters, z and x, where M, represents the number of objectives. The set x defines underlying parameters related to distance and position, while z comprises working parameters divided into position-related elements and distance. The constants \(A_1\) to \(A_{M-1}\) act as degeneracy factors, reducing the dimensionality of the Pareto front. The functions \(h_1\) to \(h_M\) define the shape, and \(S_1\) to \(S_M\) are scaling constants. These transformations and structures provide a robust framework for systematic benchmarking and comparing different multi-objective optimization strategies.

2.4 FDEA

In the domain of Multi-Objective Evolutionary Algorithms (MOEAs), the complexity of managing high-dimensional solution spaces presents unique challenges concerning algorithm complexity and performance. The Fuzzy Decomposition-Based Multi/Many-Objective Evolutionary Algorithm (FDEA) distinguishes itself by combining problem decomposition with the principles of fuzzy logic. This algorithm employs a fuzzy decomposition approach to divide a Multi-Objective Optimization Problem (MOP) into a set of subproblems, each solved individually to enhance the adaptability and precision of solutions in multi-objective problems [11].

The FDEA begins by generating an initial random population and creates a new population by applying variation operators (crossover and mutation) to the parent population. The algorithm then performs the fuzzy decomposition process, which is divided into two main stages: fuzzy prediction and weight vector extraction.

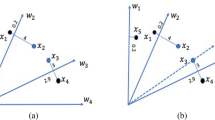

Fuzzy Prediction: In this phase, the solution space is partitioned into subregions using fuzzy logic. Each solution is assigned to one or more subregions based on a membership function that determines the proximity of the solution to different reference vectors. This approach allows the FDEA to capture a variety of solutions representing different parts of the Pareto front.

Weight Vector Extraction: After fuzzy prediction, the algorithm extracts weight vectors that define the preferred directions in the objective space for each subproblem. These weight vectors are used to guide the search in each subregion, encouraging exploration in directions that promote a uniform coverage of the Pareto front.

Following the fuzzy decomposition, an elite selection procedure is implemented to form the next generation. This selection strategy ensures that high-quality solutions, which best represent the desired characteristics of the objective space (such as convergence and diversity), are preserved and propagated to subsequent generations. Elite selection in the FDEA uses a combination of Pareto dominance and crowding distance to select the most promising solutions from each subregion.

The FDEA adopts a normalization procedure derived from the NSGA-III algorithm to ensure uniform treatment of objectives during the optimization process. This normalization adjusts the solutions so that all objectives are treated equitably, preventing some objectives from unduly dominating others during the selection process. Evolutionary operators, such as Simulated Binary Crossover (SBX) and Polynomial Mutation (PM), are applied to pairs of parent solutions that are randomly selected or chosen based on indicator-based strategies. This normalization is crucial for the effective performance of the FDEA, especially in problems with highly conflicting objectives.

2.5 Crossover Selection

Crossover selection in evolutionary algorithms aims to identify pairs of individuals that can produce offspring with desirable characteristics. This process is crucial to ensure compatibility and complementary genetic traits, which enhance the generation of better solutions. Producing promising offspring is critical for efficient convergence to optimal or near-optimal solutions [14].

According to Falcón-Cardona and Coello [2], crossover selection involves using quality indicator values to identify good parental solutions. This selection mechanism focuses on producing high-quality offspring to accelerate the evolutionary process rather than solving the subset selection problem based on indicators. By prioritizing the generation of promising offspring, the mechanism aims to optimize the search in the solution space, increasing the probability of finding superior solutions in fewer generations [14].

Indicator-Based Mating Selection. Multi-objective evolutionary algorithms (MOEAs) traditionally use Pareto dominance to identify a set of optimal solutions. However, quality indicators can provide additional insight that reflects user preferences and enhances the conventional concept of optimality. Within this context, IB-Mating Selection leverages these indicators to select parental solutions, aiming to improve the generation of high-quality offspring [2].

IB-Selection, a broader category within indicator-based mechanisms, includes IB-Environmental Selection, IB-Density Estimation, and IB-Archiving. IB-Environmental Selection prioritizes solutions based on their quality and contribution to the population’s environment, promoting diversity and overall set quality. IB-Density Estimation assesses the density of solutions in the search space, guiding algorithms to explore less dense regions or refine high-quality solutions in denser areas. IB-Archiving uses indicators to maintain a diverse and high-quality archive of solutions representing the Pareto front.

Specifically, IB-Mating Selection focuses on selecting \(\mu \) parental solutions that, through genetic operations, produce \(\lambda \) offspring with high potential for quality and diversity. By prioritizing high-quality offspring, IB-Mating Selection drives the evolutionary process efficiently, ensuring a constant flow of superior solutions across generations.

3 Related Work

According to Falcón-Cardona and Coello [2], only three Multi-Objective Evolutionary Algorithms (MOEAs) use the crossover selection strategy: R2-IBEA, MaOEA/IGD, and AR-MOEA. These algorithms represent distinct approaches to optimizing the selection of parent solutions, each with its own methodologies and specific focuses.

The R2-IBEA [15] is one of the algorithms that uses the binary R2 indicator to select solutions. This method ensures that the chosen solutions outperform others in terms of weak Pareto dominance, prioritizing high-quality solutions. The main advantage of this approach is the maintenance of a robust and competitive population of solutions, improving the overall performance of the algorithm in finding optimal solutions.

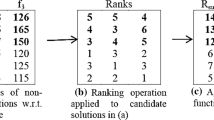

The MaOEA/IGD [16] implements a binary tournament where solutions are evaluated based on two main criteria: the dominance rank and the relative distance to the hyperplane, which serves as the reference set for the IGD indicator. Initially, the solutions are ranked by their dominance, with a preference for those with lower ranks. If there is a tie in the rank, the distance is used to determine the final selection. This method is effective in identifying solutions that not only have good convergence but also present an adequate distribution in the objective space.

The AR-MOEA [18] uses the IGD-NS metric to evaluate the solutions in the tournament, selecting those that offer the highest contribution in terms of fitness value. The solution with the highest contribution is selected for the parent set, ensuring that the generated solutions are optimal in terms of convergence. This focus on maximizing the contribution of each solution helps to enhance the efficiency of the evolutionary process, resulting in superior algorithm performance in multi-objective optimization problems.

Falcón-Cardona and Coello [2] observe a significant gap regarding the emphasis and in-depth study of Indicator-Based Crossover Selection, highlighting its underestimation and potential for new methodological advances. Indicator-Based Crossover Selection methods integrate Quality Indicators (QIs) as a central selection criterion, quantifying specific characteristics of solutions or the population for more informed and targeted evaluations.

4 Empirical Study

This section presents the results of the modification of the crossover selection operator in the FDEA algorithm. The study involved adapting the FDEA to use different selection strategies to select the parents, which were then subjected to evolutionary operators SBX and PM. An experimental study was carried out to compare the original FDEA selection (random) and the new selection strategies to assess their effectiveness. The following sections detail the standard and modified parameters, as well as the evaluation criteria used to assess the results.

4.1 Experimental Setup

In this study, the FDEA algorithm was executed with the same parameters as those outlined in the original article [11], as shown in Table 1, to maintain result consistency and comparability. The number of objectives (m) ranged from 2 to 15, addressing various levels of MOP complexity. The population size (N) was modified based on the number of objectives. The maximum number of generations (Gmax) was set between 300 and 1500, depending on the objective numbers, to ensure adequate convergence. These parameters were kept to provide a thorough and comparable assessment of the FDEA, aiming to validate the proposed hypothesis and evaluate performance across different multi-objective optimization scenarios.

The study used four variants of the FDEA algorithm to assess the impact of different selection methods on its performance. The original FDEA variant uses random selection to choose pairs of individuals for crossover and mutation. This simple approach involves randomly selecting two solutions and ensuring that they are different if the set contains more than one solution.

The second variant, FDEA_TB, employs binary tournament selection based on Pareto dominance. In this method, two solutions are randomly selected and compared using a dominance comparator, if there is a domination relation between them, the non-dominated one is selected, otherwise, one of them is selected randomly.

The third variant, FDEA_CD, also uses binary tournament selection but compares solutions based on crowding distance, a metric that helps maintain diversity among non-dominated solutions by selecting the solution in the less crowded area.

The fourth variant, FDEA_CDTB, combines the approaches of FDEA_TB and FDEA_CD. The solutions are first compared using Pareto dominance and, if they are equally good, crowding distance is used to make the final selection.

The objective of exploring these variants is to determine how different selection strategies impact the algorithm’s effectiveness, focusing on enhancing the evolutionary process by incorporating different criteria for parent selection.

The results of modifying the crossover selection method in the FDEA algorithm were obtained through a total of 30 independent runs for each variant of the algorithm, for each problem and number of objectives. After each run, the IGD of each obtained front was calculated. The results were subjected to a Kruskal-Wallis test with a significance level of 5%. In cases where there was no significant difference between any pairs, a post-hoc test was used to determine which pairs were equivalent.

The best results are displayed in Table 2 for the DTLZ problems and in Table 4 for the WFG problems, showing the indicator values for the analyzed algorithms. The tables are organized by the results of the statistical tests, highlighting the best results, and, in cases where there is no significant difference, those that are not significantly different from the best are also highlighted.

4.2 Results and Discussion for DTLZ Problems

For the DTLZ1 problem, known for its multi-modal decision space and linear Pareto front, all variants achieved no statistical difference, except for FDEA_CD which was outperformed by the other variants in two objectives.

In the DTLZ2 problem with a concave Pareto front, FDEA_CDTB had inferior performance in the 2-objective case. However, as objectives increased, FDEA_CD achieved good results in 3, 5, 8, and 15 objectives. FDEA and FDEA_TB were outperformed in the 8-objective setup, but all variants performed well and were statistically equivalent otherwise.

For the DTLZ3 problem, which includes multiple local Pareto fronts, FDEA and FDEA_TB had the best performance regardless of objective number. Conversely, FDEA_CD and FDEA_CDTB were outperformed for 5 and 15 objectives. For 2, 3, 8, and 10 objectives, all variants were statistically equivalent.

In the DTLZ4 problem, challenging for maintaining a diverse solution set, FDEA_TB was outperformed by the other variants for 2 objectives. FDEA_CDTB had the worst results in the 5 and 10-objective configurations, and both FDEA_CD and FDEA_CDTB performed poorly for 15 objectives. For 3 and 8 objectives, all variants were statistically equivalent. A possible explanation for this poor performance of CDTB over the others is that is may favor dominance resistant solutions, hence, lowering diversity.

For the DTLZ5 problem, which is aconcave and degenerate problem, FDEA_CD and FDEA_CDTB performed worst in the 15-objective setup. For other configurations, all variants were statistically equivalent.

In the DTLZ6 problem, which is degenerate and presents convergence challenges, all variants were statistically equivalent for two objectives. For 3, 5, 8, 10, and 15 objectives, FDEA_CD and FDEA_CDTB performed better than the others.

Finally, in the DTLZ7 problem, characterized by a disconnected Pareto front, all variants performed equivalently for 2, 3, and 8 objectives. For 5 objectives, FDEA and FDEA_TB are tied for the best performance. For 10 and 15 objectives, FDEA_CD and FDEA_CDTB tied for the best results.

Based on the results summarized in Table 3, a few patterns can be identified, first, in the non-degenerate problems that impose convergence challenge (DTLZ1 and DTLZ3) using the Crowding Distance that favors diversity does not seem to be a good choice. Second, in the easier problems (DTLZ1, DTLZ2 and DTLZ5) there are fewer differences between the variants, while in the DTLZ6 and DTLZ7 there are more differences. Third, original FDEA with random selection performs very well for two objectives, however its performance starts deteriorating as the number of objectives increase.

4.3 Results and Discussion for WFG Problems

For the WFG1 problem, which presents a convex geometry with a mixture of Pareto shapes, the FDEA_CD and FDEA_CDTB variants had the best performances for 2, 3, and 5 objectives. As the number of objectives increases, all variants show similar performances.

In the WFG2 problem, characterized by a convex and disconnected Pareto front, the results show that, regardless of the number of objectives, all variants had statistically equivalent performance.

In the WFG3 problem, characterized by a linear and degenerate geometry, the FDEA and FDEA_TB variants had the worst performance for 3 objectives. However, for 2, 5, 8, 10, and 15 objectives, all variants showed equivalent performances.

For the WFG4 problem, which has a concave Pareto front and is multi-modal, increasing the complexity of the problem, the FDEA and FDEA_TB variants had the worst performance for 10 and 15 objectives. However, for 2, 3, 5, and 8 objectives, all variants had equivalent performance.

In the WFG5 problem, which tests convergence ability, the FDEA and FDEA_TB variants had the worst performances for 2, 10, and 15 objectives. For 3, 5, and 8 objectives, all variants had equivalent performance.

In the WFG6 problem, a simple non-separable problem, all variants had statistically equivalent performance.

For the WFG7 problem, which is a biased problem, the FDEA variant had the worst performance for 10 objectives. For 2, 3, 5, 8, and 15 objectives, all variants had statistically similar performance.

For the WFG8 problem, characterized by distance parameters dependent on position parameters, resulting in a biased and highly complex problem, the FDEA and FDEA_TB variants had the worst performance for 2, 10, and 15 objectives. The FDEA_CDTB variant had the worst performance for 3 objectives, while for 5 objectives, the FDEA_CD and FDEA_CDTB variants showed the worst results. For 8 objectives, all variants had statistically equivalent performance.

Finally, for the WFG9 problem, which is a multi-modal, deceptive and biased problem, the FDEA variant had the worst results for 8 and 15 objectives. For the other numbers of objectives, all variants had statistically equivalent performances.

In the WFG problems, where the results are summarized in Table 5, the introduction of the new operators had one of three effects: it made the results worse than the original algorithm, which only happened on WFG8 for 3 and 5 objectives, hence, two instances (problem/objective number); it does not make a significant difference, which happened for all instances of the WFG2 and WFG6 problems, and a total of 37 instances considering all problems; it improves the results of the original algorithm, which happened in 15 instances along the problems. These results indicate that the operators proposed are beneficial when solving problems with the characteristics presented in the WFG suite.

5 Conclusion

This paper investigated the impact of different crossover selection strategies on the performance of the FDEA algorithm for multi-objective optimization. Four variants of the FDEA were employed: FDEA (random selection), FDEA_TB (binary tournament with Pareto dominance), FDEA_TB (binary tournament with crowding distance), and FDEA_CDTB (combination of FDEA_TB and FDEA_CDTB). The performance was evaluated using the IGD metric on DTLZ and WFG problems with 2, 3, 5, 8, 10 and 15 numbers of objectives.

The results demonstrate that the choice of crossover selection strategy is capable of influencing the performance of the FDEA. FDEA_TB and FDEA_CDTB showed good performance across various problems, especially in configurations with a higher number of objectives. FDEA performed well in problems with multiple local Pareto fronts, and random selection (original FDEA) can be a simpler and computationally cheaper alternative, though with inferior results in some cases.

Despite the modifications, most variants maintained statistically equivalent performances in many of the tested scenarios. This suggests that while certain variants may excel in specific situations, there is no single variant that is superior in all configurations. This finding highlights the importance of adapting the choice of the FDEA variant based on the specific problem and its characteristics.

In conclusion, modifying the crossover selection method in the FDEA was effective in improving the algorithm’s performance in different multi-objective optimization scenarios. These results encourage further studies on the hybridization of evolutionary algorithms, incorporating indicator-based selection methods to further explore the adaptive capabilities of algorithms in complex optimization environments. Future work includes exploring other variants of crossover selection, utilizing additional quality indicators, and conducting comprehensive tests in various multi-objective optimization scenarios. This will allow the identification of the most effective strategies for different problem characteristics and further refine the selection parameters to achieve consistent and robust performance across a wide range of situations.

References

Coello, C.A.C., et al.: Evolutionary Algorithms for solving multi-objective problems, 5th edn. Springer, New York (2007)

Falcón-Cardona, J.G., Coello, C.A.C.: Indicator-based multi-objective evolutionary algorithms: a comprehensive survey. ACM Comput. Surv. 53(2), 1–35 (2020). https://doi.org/10.1145/3376916

Liefooghe, A., Derbel, B.: A correlation analysis of set quality indicator values in multiobjective optimization. Association for Computing Machinery, New York (2016). https://doi.org/10.1145/2908812.2908906

Adra, S.F.: Improving Convergence, Diversity and Pertinency in Multiobjective Optimization. UK (2007)

Ishibuchi, H., Masuda, H., Tanigaki, Y., Nojima, Y.: Modified distance calculation in generational distance and inverted generational distance. In: Gaspar-Cunha, A., Henggeler Antunes, C., Coello, C.C. (eds.) EMO 2015. LNCS, vol. 9019, pp. 110–125. Springer, Cham (2015). https://doi.org/10.1007/978-3-319-15892-1_8

Falcón-Cardona, J.G., Coello Coello, C.A.: Towards a more general many-objective evolutionary optimizer. In: Auger, A., Fonseca, C.M., Lourenço, N., Machado, P., Paquete, L., Whitley, D. (eds.) PPSN 2018. LNCS, vol. 11101, pp. 335–346. Springer, Cham (2018). https://doi.org/10.1007/978-3-319-99253-2_27

Luke, S.: Essentials of metaheuristics, 2nd edn (2012)

Derrac, J., García, S., Herrera, F.: A practical tutorial on the use of nonparametric statistical tests as a methodology for comparing evolutionary and swarm intelligence algorithms. Swarm Evol. Comput. 3–18 (2011)

Huband, S., et al.: A review of multiobjective test problems and a scalable test problem toolkit. IEEE Trans. Evol. Comput. 477–506 (2006)

Deb, K., et al.: Scalable multi-objective optimization test problems. IEEE Congr. Evol. Comput. 825–830 (2002)

Liu, S., et al.: A fuzzy decomposition-based multi/many-objective evolutionary algorithm. IEEE Trans. Cybern. 3495–3509 (2022)

Deb, K., et al.: A fast and elitist multiobjective genetic algorithm: NSGA-II. IEEE Trans. Evol. Comput. 182–197 (2002)

Deb, K., Jain, H.: An evolutionary many-objective optimization algorithm using reference-point-based nondominated sorting approach, part I: solving problems with box constraints. IEEE Trans. Evol. Comput. 18, 577–601 (2014)

Esquivel, S.C., et al.: Selection mechanisms in evolutionary algorithms. Fundamenta Informaticae 17–33 (1998)

Phan, D.H., Suzuki, J.: R2-IBEA: R2 indicator based evolutionary algorithm for multiobjective optimization. IEEE Congr. Evol. Comput. 1836–1845 (2013)

Sun, Y., Yen, G.G., Yi, Z.: IGD indicator-based evolutionary algorithm for many-objective optimization problems. IEEE Trans. Evol. Comput. 173–187 (2019)

Hochstrate, N., Naujoks, B., Emmerich, M.: SMS-EMOA: multiobjective selection based on dominated hypervolume. Eur. J. Oper. Res. 1653–1669 (2007)

Tian, Y., et al.: An indicator-based multiobjective evolutionary algorithm with reference point adaptation for better versatilit. IEEE Trans. Evol. Comput. 609–622 (2018)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

de Oliveira, J.P.A., Castro, O.R. (2025). Impact of Parent Selection Operator on the FDEA Algorithm. In: Paes, A., Verri, F.A.N. (eds) Intelligent Systems. BRACIS 2024. Lecture Notes in Computer Science(), vol 15413. Springer, Cham. https://doi.org/10.1007/978-3-031-79032-4_7

Download citation

DOI: https://doi.org/10.1007/978-3-031-79032-4_7

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-79031-7

Online ISBN: 978-3-031-79032-4

eBook Packages: Computer ScienceComputer Science (R0)