Abstract

Learning efficiently from interactions with the environment and generalizing beyond them are central concerns in reinforcement learning. Model-based approaches offer a promising way to address both, leveraging the underlying structure of the world to speed up learning and improve generalization. By assuming a structured environment, such approaches aim to capture key patterns from limited interactions and to synthesize experiences that support exploitation and generalization to unexplored regions. In this paper, we test a model-based reinforcement learning framework aimed at improving efficiency and generalization further. Motivated by the insight that environments often exhibit distinct regions with varying dynamics, we introduce additional assumptions about the structure of the world to facilitate faster generalization. Specifically, we construct a model comprising a classifier that, given a state input, selects the parameters characterizing the operating regime of the environment. Both the model and the regional parameters are learned from past experience by maximizing a likelihood function. Leveraging this model, we employ a planner based on rapidly exploring random trees (RRT) to generate new real experiences, capitalizing on the structure identified within the environment. To evaluate the efficacy of learning a segmented model, we compare it against a traditional method that employs a single general model to learn the dynamics of the entire environment. Our results indicate that the segmented approach is superior in both efficiency and generalization, underscoring the benefits of incorporating additional assumptions about the structure of the world into model-based reinforcement learning.

1 Introduction

Model-based reinforcement learning (RL) pursues efficiency and generalization by constructing a model of the environment. By leveraging such a model, the agent can simulate the possible outcomes of actions, or explore a state, without directly interacting with the environment [11]. This can reduce the number of interactions needed to learn an effective policy. However, model-based methods may suffer from errors or inaccuracies in the learned model, leading to suboptimal performance [2].

A central concern of model-based RL is the balance between the accuracy of the learned model and the performance achieved by using it to derive a policy. By incorporating learned models into the decision-making process, these methods can adapt more readily to new situations and exploit previously acquired knowledge to achieve better performance. As demonstrated by Young et al. [14], the benefits of the model-based approach can go even further, to the point of yielding better solutions for some problems, as in cases where the model’s errors lead to unintended exploration with a high return.

We intend to examine the advantages of model-based solutions in environments characterized by distinct regions with varying dynamics. Consider a scenario where a robot operates in an environment comprising two areas whose floors have markedly different frictional properties. In one area, the effects of movement actions differ significantly from those in the other.

This paper aims to explore how assumptions such as the existence of distinct regions can enhance the planning capabilities of model-based solutions. Constructing multiple models, as done by Doya et al. [3], each tailored to an individual region exhibiting stable dynamics, could yield better results than attempting to capture the complex dynamics of all regions within a single model. We propose an approach in which a distinct model is built for each identifiable region and selected for use based on the agent’s past experience.

The learned models are leveraged for plan generation through the utilization of a rapidly exploring random tree (RRT) based algorithm. Our planning strategy draws inspiration from the work of Lin and Zhang [9], which introduced the integration of RRT with reinforcement learning for planning tasks.

By adopting this multi-model approach, we aim to investigate its efficacy in accurately representing the environment’s dynamics and its impact on the agent’s decision-making process. We hypothesize that by aligning models with specific regions, the agent can better understand and adapt to the unique characteristics of each area, ultimately leading to improved performance and efficiency in task execution.

Through empirical evaluation and analysis, we aim to provide insights into the comparative advantages and limitations of both traditional single-model and proposed segmented model approaches in addressing challenges posed by environments with heterogeneous dynamics. Our findings aim to contribute to a deeper understanding of the applicability of model-based solutions in real-world scenarios characterized by spatially varying dynamics.

In Sect. 2, we review related works that have explored the use of multiple models to enhance generalization in RL. Section 3 formalizes the segmented environment problem as a Markov decision process. Section 4 introduces our approach based on segmented models, which forms the basis of our comparative analysis. Section 5 gives a concise description of the simple environment used for collecting empirical results. Finally, Sect. 6 presents the results of our empirical evaluations, detailing the performance metrics and outcomes observed across different experimental conditions.

2 Related Works

Several existing works have explored the idea of constructing a segmented model of the environment’s dynamics. One notable example is the work by Doya et al. [3], which introduces a concept akin to a mixture of specialists. In this approach, the environment’s dynamics are modeled by a collection of smaller models, each specialized for specific states or moments where distinct behaviors are observed. The fundamental difference between that approach [3] and our methodology lies in the mechanism for combining the outputs of these specialized models. In multiple model-based reinforcement learning [3], the specialists contribute to the overall model through a continuous responsibility signal, allowing for a blended output that may not be directly attributable to any individual specialist. In contrast, our approach adopts a structured mechanism in which the output is determined by the specialist whose signal is highest. This ensures that the output is directly linked to the most relevant specialist, facilitating clearer interpretation and use of the model’s predictions.

Hallak et al. [5] proposed a representation of the problem that augments the traditional Markov decision process (MDP) with contextual information. This augmentation enables the inference of different underlying models based on the context provided, offering a flexible framework for adapting to diverse environmental dynamics. The notation used there for representing context resembles the representation of our models. However, a significant distinction lies in the treatment of context over the course of a trajectory. Unlike our approach, which allows multiple contexts to be encountered within the same episode, the contextual MDP framework [5] assumes a fixed context for the entire trajectory.

The idea of dynamically identifying the context has been addressed in [12], with a solution capable of learning a new local model whenever it detects a context not yet experienced, using the prediction error of the models already learned as the basis for detection. This approach resulted in solutions that proved well suited to non-stationary environments, as detailed by Alegre, Bazzan, and da Silva [1], who explored rapid adaptation by identifying change points with high confidence.

Li et al. [7, 8] have explored hierarchical approaches, where segments of information are composed in a hierarchical manner. This hierarchical structuring enables more efficient learning and decision-making, particularly in complex environments with multiple levels of abstraction.

Other work [10] has focused on incremental learning techniques, which have shown promising results in adapting to changing environments and evolving tasks. By incrementally updating models based on new data and experiences, these approaches offer flexibility and scalability in learning complex tasks over time.

In offline learning settings, Kidambi et al. [6] have demonstrated the effectiveness of model-based approaches where access to real-time data may be limited or impractical. Leveraging offline data, these approaches enable efficient learning and decision-making based on previously collected experiences.

The use of a likelihood estimator for building options [4] inspired us to employ a similar approach for constructing models tailored to different regions of the world. As in the options setting, the likelihood estimator framework proved instrumental in constructing region-specific models, enhancing the efficiency and effectiveness of our reinforcement learning approach in complex and heterogeneous environments.

3 k-Models MDP

The Markov decision process (MDP) [13] framework serves as the foundational formalism for representing the core aspects of reinforcement learning. An MDP is typically characterized by the tuple \(<\mathcal {S}, \mathcal {A}, \mathcal {P}, \mathcal {R}>\), where \(\mathcal {S}\) denotes the set of states, \(\mathcal {A}\) represents the set of actions, \(\mathcal {P}\) defines the transition probabilities between states given actions, and \(\mathcal {R}\) denotes the reward function associated with each state.

If we assume that \(\mathcal {P}\) is a known parameterized function, a natural model-based implementation is to find the parameters that best fit the observed experience. If we further assume that the dynamics of the world vary spatially, meaning that different regions exhibit distinct behaviors, we can define a function \(\beta : \mathcal {S} \mapsto \theta \) that gives the model parameters for a given state. We now extend this concept under the assumption that the world has k regions in which the parameters can differ.

To model the segmented dynamics of the world, we extend the MDP framework to the tuple \(<\mathcal {S}, \mathcal {A}, \mathcal {P}, \mathcal {R}, \varTheta , \beta , \mathcal {G}, \mathcal {S}_0>\), where the transition function \(\mathcal {P}\) is parameterized by \(\theta \). A segment of the world is represented by a \(\theta \) that dictates the dynamics applied by \(\mathcal {P}\) in that region of the state space. To define the regions, we introduce a new function \(\beta : \mathcal {S} \times \varTheta \mapsto [0,1]\), which assigns a probability to the parameters \(\theta \) based on the current state: given a state s and a parameter \(\theta \), \(\beta (s, \theta )\) is the probability that \(\theta \) is the parameter governing \(\mathcal {P}\) at s.

The set \(\varTheta \) collects all the parameters of \(\mathcal {P}\) associated with regions of the environment, so that for a world with k distinct regions \(\varTheta = \{\theta _1, \theta _2, \ldots , \theta _k\}\). Each \(\theta _i\) is a distinct parameter for \(\mathcal {P}\) that captures the dynamics of the environment within a specific region.

We define \(\mathcal {G} \subset \mathcal {S}\) as the set of goal states used by the planning module to construct plans to reach them, and \(\mathcal {S}_0 \subset \mathcal {S}\) as the set of initial states used to randomly define the initial state of each episode.
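As a rough illustration of the extended tuple, the sketch below stores \(\varTheta \) and \(\beta \) in a small Python container. The names `RegionParams`, `KModelsMDP`, `region_probabilities`, and the hard two-region `split_beta` are hypothetical and chosen only for this example; the `tau` and `sigma` fields anticipate the Gaussian parameters introduced in Sect. 5.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple

State = Tuple[float, float]


@dataclass(frozen=True)
class RegionParams:
    """One theta_i (hypothetical layout: the Gaussian parameters of Sect. 5)."""
    tau: float
    sigma: float


@dataclass
class KModelsMDP:
    thetas: Sequence[RegionParams]             # Theta = {theta_1, ..., theta_k}
    beta: Callable[[State], Sequence[float]]   # s -> (beta(s, theta_1), ..., beta(s, theta_k))
    goals: Sequence[State]                     # G, the goal states
    initial_states: Sequence[State]            # S_0, the initial states

    def region_probabilities(self, s: State):
        """Return beta(s, .) after checking that it is a distribution over Theta."""
        probs = list(self.beta(s))
        assert len(probs) == len(self.thetas)
        assert abs(sum(probs) - 1.0) < 1e-9
        return probs


def split_beta(s: State):
    """Illustrative beta: region 1 for x < 0, region 2 otherwise."""
    return (1.0, 0.0) if s[0] < 0.0 else (0.0, 1.0)


mdp = KModelsMDP(
    thetas=[RegionParams(tau=1.0, sigma=0.1), RegionParams(tau=0.3, sigma=0.5)],
    beta=split_beta,
    goals=[(5.0, 5.0)],
    initial_states=[(-5.0, -5.0)],
)
```

In the approach described in this paper \(\beta \) is learned rather than fixed; the hard split here only makes the role of each component visible.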

4 Likelihood Solution for a k-Models MDP

The model-based approach to solving MDPs involves learning a model that describes the transitions between states in the environment. This model is acquired by observing real transitions that occur as the agent interacts with the environment. Over time, as more experience is gathered, the model can be refined and adjusted to more accurately reflect the dynamics of the environment.

The process of learning the model typically involves estimating transition probabilities or dynamics from observed state-action-state transitions. We bring a likelihood approach to find the best parameters of the model based on the observed experience. As the agent interacts with the environment and accumulates more experience, we can update the parameters so the learned model becomes increasingly accurate and reliable. By learning and utilizing this model, the agent can plan and make decisions more effectively, for solving the reinforcement learning problem at hand.

To facilitate performance comparison, we have implemented a separate planner module capable of generating plan sequences to reach a goal state given a model of the world. This planner module can be utilized with various implementations, including the k-models approach, a standard single-model approach, or even with real-world models.

By integrating these components, we create a comprehensive framework for modeling segmented dynamics, learning multiple models, and conducting performance evaluations across different approaches. This approach enables us to explore the effectiveness of our proposed method in improving sample efficiency and generalization in reinforcement learning tasks.

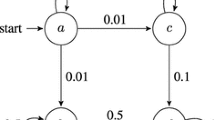

Figure 1 gives an overview of the proposed framework, which maintains k parameters that are updated by likelihood estimation from experienced transitions. Above the dashed line are the components that are active at every time step: the memory, which stores the new data from every interaction, and the currently executing plan, which drives the agent’s decision at each step. Below the dashed line are the components that are not active at every time step: the planner, which uses the models to generate a new plan, and the estimator, which relies on the memory, containing newly observed transitions together with past experience, to update the parameters of the models.

4.1 Likelihood Estimator

Drawing inspiration from the likelihood methodology used to discover deep options [4], we devise an estimation approach for all parameters \(\varTheta \) within our framework. In the options setting, the likelihood was used in a gradient-based expectation-maximization algorithm to identify the optimal parameterized options from observed trajectories. Leveraging this insight, we develop a likelihood function tailored to our parameters \(\varTheta \).

Our likelihood function captures the probability of observing the transitions in the environment given the current parameters \(\varTheta \). By maximizing this likelihood function, we aim to iteratively update and refine our parameter estimates to better align with observed data. To achieve this, we employ a stochastic gradient ascent algorithm, which iteratively adjusts the parameters \(\varTheta \) based on online experience. By maximizing the log likelihood of the observed data, we can effectively update our parameter estimates in a principled manner, leveraging the information gleaned from interactions with the environment.

This approach allows us to adaptively learn and refine the parameters \(\varTheta \) over time, thereby improving the accuracy and effectiveness of our multi model-based reinforcement learning system. By continuously updating our parameter estimates based on observed data, we can enhance the performance and efficiency of our algorithm in learning the dynamics of segmented environments and generating optimal plans for achieving desired goals.

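The likelihood function for a trajectory \(\xi = \{s_1, a_1, s_2, a_2, \ldots , s_T\}\) in a standard MDP with a model parameterized by \(\varTheta \) is, up to factors that do not depend on \(\varTheta \), the product of the transition probabilities along the trajectory:

\[ L(\varTheta ; \xi ) = \prod _{t=1}^{T-1} \mathcal {P}_{\varTheta }(s_{t+1} \mid s_t, a_t) \tag{1} \]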

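To adapt this likelihood to the context of k models, we incorporate the \(\beta \) function and account for all \(\mathcal {P}_{\theta }\), weighting each regional transition probability by the probability that \(\beta \) assigns to its parameters:

\[ \mathcal {P}_{\varTheta }(s_{t+1} \mid s_t, a_t) = \sum _{i=1}^{k} \beta (s_t, \theta _i)\, \mathcal {P}_{\theta _i}(s_{t+1} \mid s_t, a_t) \tag{2} \]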

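We can substitute this expression into the traditional likelihood equation. By iteratively applying stochastic gradient descent with momentum to the negative log likelihood \(-\log L(\varTheta ; \xi )\), we aim to optimize the parameters \(\varTheta \) with an update of the form:

\[ v \leftarrow \alpha v - \eta \nabla _{\varTheta }\bigl (-\log L(\varTheta ; \xi )\bigr ), \qquad \varTheta \leftarrow \varTheta + v \tag{3} \]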

where \(\eta \) represents the learning rate and \(\alpha \) is the decay factor for momentum. This iterative process allows us to update the parameters \(\varTheta \) in a principled manner, leveraging the observed trajectory \(\xi \) to refine our model and improve its accuracy in capturing the dynamics of the environment.
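The estimation step described above can be sketched in a few lines of NumPy. This is a minimal illustration under several assumptions that are not taken from the paper: the regional models are isotropic two-dimensional Gaussians, \(\beta \) is held fixed and passed in as a matrix `beta_probs` (in the full method its parameters are learned as well), the negative log likelihood is averaged over transitions to keep the step size stable, and the gradient is obtained by finite differences instead of automatic differentiation. All function names are hypothetical.

```python
import numpy as np


def gaussian_density(s_next, s, a, tau, sigma):
    """Isotropic 2-D Gaussian density centred at s + a * tau (assumed form)."""
    diff = s_next - (s + a * tau)
    sq_dist = np.sum(diff ** 2, axis=-1)
    return np.exp(-sq_dist / (2.0 * sigma ** 2)) / (2.0 * np.pi * sigma ** 2)


def neg_log_likelihood(params, beta_probs, s, a, s_next):
    """Mean negative log likelihood of the transitions under the k-model mixture.

    params = [tau_1..tau_k, log_sigma_1..log_sigma_k]; beta_probs has shape (T, k).
    """
    k = beta_probs.shape[1]
    taus, sigmas = params[:k], np.exp(params[k:])
    per_region = np.stack(
        [gaussian_density(s_next, s, a, taus[i], sigmas[i]) for i in range(k)], axis=1
    )
    mixture = np.sum(beta_probs * per_region, axis=1)  # weight each model by beta
    return -np.mean(np.log(mixture + 1e-300))          # small constant avoids log(0)


def numerical_gradient(f, x, eps=1e-5):
    """Central finite differences; a stand-in for automatic differentiation."""
    grad = np.zeros_like(x)
    for j in range(x.size):
        step = np.zeros_like(x)
        step[j] = eps
        grad[j] = (f(x + step) - f(x - step)) / (2.0 * eps)
    return grad


def momentum_step(params, velocity, grad, eta=0.01, alpha=0.9):
    """One gradient-descent step with momentum (eta: learning rate, alpha: decay)."""
    velocity = alpha * velocity - eta * grad
    return params + velocity, velocity


def fit(params, beta_probs, s, a, s_next, steps=20, eta=0.01, alpha=0.9):
    """Run a few momentum steps on the mean negative log likelihood."""
    velocity = np.zeros_like(params)
    loss = lambda p: neg_log_likelihood(p, beta_probs, s, a, s_next)
    for _ in range(steps):
        grad = numerical_gradient(loss, params)
        params, velocity = momentum_step(params, velocity, grad, eta, alpha)
    return params
```

Parameterizing the spread through `log_sigma` keeps it positive without constraints; this is a convenience of the sketch, not something stated in the text.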

4.2 Planner

With the models established, we chose a planning approach to extract value from the learned representation of the world. Inspired by the combination of RRT-based algorithms with RL [9] for optimizing policies that find a path to the goal state, we implemented our own modified version of the RRT algorithm, which uses a model to come up with a plan for reaching the goal. The implementation builds a tree of state transitions by executing actions on a model passed as input, so that the model generates synthetic transitions that are incorporated into the plan tree. When completed, the tree is rooted at the start state and has a depth bounded by the maximum number of steps we wish the plan to have. Algorithm 1 is responsible for building this plan tree.

Once the plan tree is constructed, we can extract a path to the goal state or to the state within the tree that is closest to the goal. To accomplish this, we only require a function to calculate the relative distance between states. Algorithm 2 provides a systematic method for extracting a path from the input tree to either the goal state or the closest state found using the tree.NEAREST function.

The tree.NEAREST function plays a crucial role in this process by identifying the state within the plan tree that is closest to the goal. Here, the Euclidean distance is used to compare states. Once this closest state is determined, the algorithm constructs a path from the initial state to either the goal state or the identified closest state. By leveraging Algorithm 2, the agent obtains a plan of actions to take.
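The two planning steps described above, growing a tree with a model and reading a plan back from it, can be sketched as follows. This is not the paper’s Algorithm 1 or Algorithm 2, whose listings are not reproduced here; it is a generic RRT-style illustration. The class `PlanTree`, the functions `build_plan_tree` and `extract_plan`, the sampling bounds, and the goal bias are all assumptions made for the example, and `model(s, a)` is any callable returning a predicted next state.

```python
import math
import random

ACTIONS = [(0, -1), (-1, 0), (0, 1), (1, 0)]


class PlanTree:
    def __init__(self, root):
        self.states = [root]
        self.parent = {0: None}   # node index -> parent index
        self.action = {0: None}   # node index -> action taken from the parent
        self.depth = {0: 0}

    def nearest(self, target, max_depth=None):
        """Index of the stored state closest to target (Euclidean distance)."""
        candidates = [
            i for i in range(len(self.states))
            if max_depth is None or self.depth[i] < max_depth
        ]
        return min(candidates, key=lambda i: math.dist(self.states[i], target))

    def add(self, parent_idx, action, state):
        idx = len(self.states)
        self.states.append(state)
        self.parent[idx] = parent_idx
        self.action[idx] = action
        self.depth[idx] = self.depth[parent_idx] + 1
        return idx


def build_plan_tree(model, start, goal, n_nodes=500, max_depth=50,
                    bounds=(-10.0, 10.0), goal_bias=0.1, rng=random):
    """Grow a tree of synthetic transitions by querying the model."""
    tree = PlanTree(start)
    for _ in range(n_nodes):
        # Sample a target: the goal with probability goal_bias, else a random point.
        if rng.random() < goal_bias:
            target = goal
        else:
            target = (rng.uniform(*bounds), rng.uniform(*bounds))
        idx = tree.nearest(target, max_depth)  # only nodes that may still be extended
        # Try every action on the model and keep the one ending closest to the target.
        best_a, best_s = min(
            ((a, model(tree.states[idx], a)) for a in ACTIONS),
            key=lambda pair: math.dist(pair[1], target),
        )
        tree.add(idx, best_a, best_s)
    return tree


def extract_plan(tree, goal):
    """Walk from the node nearest to the goal back to the root, then reverse."""
    idx = tree.nearest(goal)
    plan = []
    while tree.parent[idx] is not None:
        plan.append(tree.action[idx])
        idx = tree.parent[idx]
    return plan[::-1]
```

With a stochastic model, replaying the extracted actions in the real environment will generally not reproduce the tree states exactly, which is one reason periodic re-planning is useful.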

The quality of plans generated by the algorithm is directly influenced by the accuracy and fidelity of the underlying models used to construct them. Initially, when the models are far from reflecting the real transitions in the environment, the generated plans may be misguided or suboptimal. However, as the agent gathers more experience and refines its models to better reflect the true dynamics of the environment, the quality of the generated plans improves significantly.

This highlights the iterative nature of model-based reinforcement learning. Prior work [14] has even shown that the initial misguidance caused by poor models can bring valuable exploration experience in some specific problems.

5 Applied Environment

Our challenge lies in simultaneously learning the k parameters \(\theta \) and the probability distribution given by \(\beta \) across states. To address this challenge, we assume that the function \(\mathcal {P}\) is a parameterized Gaussian, with \(\theta _i = \{\tau _i, \sigma _i\}\) representing the parameters used in the transitions.

To create a simulation environment reflecting practical problems characterized by segmented dynamics, we developed a two-dimensional space where states are represented by x and y coordinates, so that \(\mathcal {S} \subseteq \mathbb {R}^2\). In this environment, agents have four possible actions corresponding to movement in the cardinal directions, giving \(\mathcal {A} = \{\uparrow , \leftarrow , \downarrow , \rightarrow \} = \{(0,-1), (-1, 0), (0, 1), (1,0)\}\).

The uncertainty of the agents’ movements is formulated in the transitions as a linear transformation of the state with respect to the chosen action, plus a random factor drawn from a Gaussian distribution. Notably, the parameters of this distribution vary across distinct regions of the state space. With k regions, the state \(s'\) resulting from executing action a in state s is \(s' \sim \mathcal {N}(s+a\tau _i, \sigma _i)\), where \(\tau _i\) and \(\sigma _i\) are the parameters \(\theta _i\) of region \(M_i\), with \(M_i \subset \mathcal {S}\), \(s \in M_i\), \(s' \in \mathcal {S}\), and \(a \in \mathcal {A}\).

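This gives us a transition function derived from the probability density function of region i. Reading \(\sigma _i\) as a standard deviation shared by both coordinates, the density takes the form:

\[ \mathcal {P}_{\theta _i}(s' \mid s, a) = \frac{1}{2\pi \sigma _i^2} \exp \left( -\frac{\Vert s' - (s + a\tau _i)\Vert ^2}{2\sigma _i^2} \right) \tag{4} \]

The probability defined by Eq. 4 is used to determine the likelihood function, as elaborated in Sect. 4.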

An illustration of such an environment can be observed in Fig. 2, providing a visual representation of the two-dimensional space with segmented dynamics. This figure effectively encapsulates the concept of distinct regions within the state space, each characterized by unique parameters of the Gaussian distribution governing uncertainty in transitions.

By implementing this approach, we effectively model environments where dynamics vary between different regions. Adjusting the parameters of the Gaussian distribution enables us to replicate diverse levels of uncertainty and variability within each region. Consequently, agents must adapt their strategies accordingly as they navigate through the environment.
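A minimal sketch of such an environment is shown below, assuming NumPy. The class name `SegmentedWorld`, the `region_of` callable that maps a state to a region index, and the example parameter values are hypothetical; \(\sigma _i\) is treated as a standard deviation shared by both coordinates, which is an assumption of this sketch.

```python
import numpy as np

# Action set from the text: up, left, down, right.
ACTIONS = {"up": (0, -1), "left": (-1, 0), "down": (0, 1), "right": (1, 0)}


class SegmentedWorld:
    """Two-dimensional world whose transition noise depends on the region."""

    def __init__(self, region_of, taus, sigmas, seed=0):
        self.region_of = region_of                    # state -> region index i
        self.taus = np.asarray(taus, dtype=float)     # tau_i: step scale per region
        self.sigmas = np.asarray(sigmas, dtype=float) # sigma_i: noise scale per region
        self.rng = np.random.default_rng(seed)

    def step(self, s, action):
        """Sample s' ~ N(s + a * tau_i, sigma_i) for the region i containing s."""
        i = self.region_of(s)
        mean = np.asarray(s, dtype=float) + np.asarray(ACTIONS[action], dtype=float) * self.taus[i]
        return self.rng.normal(mean, self.sigmas[i])


# Example: a slippery, noisy region for x >= 0 and a precise one for x < 0.
world = SegmentedWorld(
    region_of=lambda s: 0 if s[0] < 0 else 1,
    taus=[1.0, 0.25],
    sigmas=[0.05, 0.5],
)
next_state = world.step((-2.0, 0.0), "right")
```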

This simulation framework offers a versatile platform for investigating and evaluating model-based reinforcement learning algorithms in scenarios where the state space exhibits heterogeneous dynamics. Through experimentation and analysis within this environment, researchers gain valuable insights into the effectiveness and adaptability of different learning approaches in complex real-world settings.

5.1 Function Approximators

In our approach, we leverage the likelihood estimators to optimize both the parameters of the \(\beta \) function and the k-models, which can also be represented by parameterized functions. For simplicity and flexibility, in our experiments, we employed a feedforward neural network with a softmax activation function in the output layer to represent the \(\beta \) function. Additionally, we assumed a known distribution function for the k-models, specifically a Gaussian distribution.

The parameters of the neural network, along with the means and variances of the Gaussian distributions representing the k-models, collectively form the parameter set \(\varTheta \) that we aim to estimate using the likelihood-based optimization method described previously. Replacing the structural assumptions for the k-models’ functions with a universal function approximator, as we have done for the \(\beta \) function, is a natural step towards generalizing this solution to more practical problems. No change to the likelihood estimator is necessary for that, since it is agnostic to the structure of the functions and optimizes the parameters directly.
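To make the role of the softmax output concrete, here is a small NumPy sketch of a \(\beta \) network for two-dimensional states. The single hidden layer, its width, the tanh activation, and the initialization scale are assumptions made for the example, since the architecture details are not given in the text; the function names are hypothetical.

```python
import numpy as np


def init_beta_network(k, hidden=16, seed=0):
    """Random weights for a 2 -> hidden -> k feedforward network (assumed sizes)."""
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.normal(0.0, 0.5, (2, hidden)),
        "b1": np.zeros(hidden),
        "W2": rng.normal(0.0, 0.5, (hidden, k)),
        "b2": np.zeros(k),
    }


def beta(net, states):
    """Return beta(s, theta_i) for every state: one probability row per state."""
    states = np.atleast_2d(states)
    h = np.tanh(states @ net["W1"] + net["b1"])
    logits = h @ net["W2"] + net["b2"]
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    exp = np.exp(logits)
    return exp / exp.sum(axis=1, keepdims=True)          # softmax over the k models


net = init_beta_network(k=3)
probs = beta(net, [[0.0, 0.0], [1.0, 2.0]])  # shape (2, 3), each row sums to 1
```

The softmax guarantees that the k outputs are non-negative and sum to one, so they can be used directly as mixture weights in the likelihood.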

The dynamics of updating the models from new experience and generating a new plan from the updated models are illustrated in Fig. 3. An episode starts at \(s_0\), and a plan is generated to guide the agent’s initial actions. After a certain number of steps, the models are updated with the newly gained information, which enables the agent to come up with a new plan. This cycle repeats until the agent reaches the goal.

Two aspects of our approach can be adjusted to change the impact of model learning. The first is the memory size. Since we use real past experience to update the parameters via likelihood, we specify the size of the memory, in other words, how many experiences the agent remembers and uses to recalculate the likelihood. The larger the memory, the better the updates reflect reality, but in an infinite-horizon problem an ever-growing memory could become a problem.

The second relevant aspect is the frequency with which the models are updated. We control that frequency by specifying the number of steps for which the agent is forced to exploit the models after each update. Every fixed number of steps, the collected experiences are added to the memory, and the parameters are then recalculated with the extended trajectory.

Putting all of this together, we have a solution that starts by roaming for some steps, then uses its short experience to fit a model, and then uses the model to plan the next actions, generating new experiences. After following this loop for some time, the models eventually become precise and the plans actually lead to the goal.
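The loop just described, together with the two tunable aspects (memory size and update frequency), can be sketched as below. The function `run_episode` and its callable arguments `env_step`, `fit_models`, `make_plan`, and `is_goal` are hypothetical placeholders for the environment, the likelihood estimator, the planner, and the goal test; the default values are illustrative, apart from the three-hundred-step horizon used in the experiments.

```python
import random
from collections import deque

ACTIONS = [(0, -1), (-1, 0), (0, 1), (1, 0)]


def run_episode(env_step, fit_models, make_plan, is_goal, s0,
                models=None, memory=None, memory_size=1000,
                update_every=20, max_steps=300, rng=random):
    """Roam, fit the models from memory, plan with them, and repeat."""
    # Bounded memory: the oldest transitions are dropped once it is full.
    memory = deque(maxlen=memory_size) if memory is None else memory
    # Models carried over from a previous episode allow planning immediately.
    plan = list(make_plan(models, s0)) if models is not None else []
    s = s0
    for t in range(max_steps):
        if is_goal(s):
            return t, models, memory
        # Every update_every steps: re-estimate the parameters, then re-plan.
        if t > 0 and t % update_every == 0:
            models = fit_models(models, memory)
            plan = list(make_plan(models, s))
        # Follow the current plan; roam randomly when no plan is available.
        a = plan.pop(0) if plan else rng.choice(ACTIONS)
        s_next = env_step(s, a)
        memory.append((s, a, s_next))
        s = s_next
    return max_steps, models, memory
```

Passing the returned `models` and `memory` into the next call is one way to retain knowledge across episodes, as in the experiments of Sect. 6.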

Figure 4 shows two examples of plan trees generated with the models learned through an episode: on the left, the tree generated at the beginning of the episode, and on the right, the tree generated at the end. By the end of the episode, the quality of the models has improved, resulting in a more consistent plan.

6 Results

To compare the advantages of using a segmented representation of the world against the standard approach of a single unified model, we designed a series of experiments aimed at highlighting the differences in performance.

We first designed an experiment consisting of ten runs of two episodes each, with a horizon of at most three hundred steps per episode, in which both solutions interacted with the same environment. In this controlled setting, we expected the two solutions to perform very similarly in the first episode.

With this experimental setup we developed two scenarios. The first is exactly as described; in the second, we change the environment between the first and second episodes. Since the agent maintains the knowledge of the previous episode, we expected to see better adaptation by the k-models solution in a different environment that exhibits familiar dynamics.

For the first experiment, we ran the process for two episodes in the same environment. In this setup, each solution retained knowledge from previous episodes, allowing us to observe how their performance evolved over time. We expected both models to improve across episodes, leveraging their accumulated experience to make increasingly informed decisions.

In the second experiment, we introduced a novel twist by presenting the agent with a different environment in the second episode. While the dynamics of this environment remained very similar to the one previously encountered, the regions within it were arranged in different shapes and positions, as depicted in Fig. 5.

Despite this change, both models retained persistent knowledge from the previous episodes. However, we anticipated that the segmented model would exhibit superior adaptability in this scenario. This expectation stemmed from the segmented model’s ability to identify previously learned models and adjust its routing network accordingly to accommodate the new configuration of regions.

The analysis presented in Fig. 6 reveals interesting insights into the performance of both approaches across episodes. Initially, in the first episode, both the single-model and the k-models exhibit comparable performances, as evidenced by the similar duration of the episodes with the k-model being slightly worse. This suggests that both models are capable of effectively learning to navigate through the environment in a similar fashion.

However, in the subsequent episode, also shown in Fig. 6, a notable disparity emerges between the two approaches. The k-models demonstrate a significant reduction in episode length in most of the runs compared to the single model. This observation suggests that the k-models are better able to retain the knowledge acquired at the beginning of the previous episode, while the changes encountered over the course of the episode had more impact on the single-model approach. The single model still performs better than in the first episode, but its improvement is smaller than that observed for the k-models.

The improvement becomes even more evident when analyzing the performances in the second episode for the scenario in which the environment changes. Figure 7 shows the difference between the two approaches in this scenario. As in the first scenario, the k-models demonstrate a significant reduction in episode length in most of the runs compared to the single model, but this time we do not see the same improvement in the single-model approach. This observation suggests that the single model struggles to transfer knowledge from the previous episode to the next when the environment changes, resulting in performance similar to that observed in the first episode, where it had to learn the environment from scratch. In contrast, the k-models leverage the dynamics learned in the first episode to adapt and improve their performance in the second episode. By dynamically adjusting their routing network, the k-models adapt rapidly to the new environment, resulting in shorter episodes.

Overall, these findings underscore the effectiveness of the segmented k-models approach in leveraging accumulated knowledge from previous episodes to facilitate faster adaptation and improved performance in subsequent episodes. This highlights the advantages of employing a segmented representation of the world’s dynamics, particularly in scenarios where the environment exhibits dynamic changes between episodes.

7 Conclusion

This work aims to demonstrate the benefits of leveraging segmented dynamics in reinforcement learning problems. By recognizing that the environment’s dynamics can be effectively segmented into distinct regions, we introduce a novel approach that enhances the efficiency of reinforcement learning solutions.

Through the utilization of a structured framework comprised of smaller models, the agent gains the ability to discern situations where it can switch between models. This segmentation allows for improved generalization across diverse contexts and facilitates faster adaptation to familiar dynamics within different environments.

By adopting this approach, our research highlights the importance of understanding and leveraging the underlying structure of the environment’s dynamics. By incorporating segmented models into the learning process, we empower agents to navigate complex environments more effectively, ultimately leading to enhanced performance and adaptability in a variety of reinforcement learning tasks.

One limitation of our approach is the requirement to specify the number of models as input, which may be unknown in practical applications. This presents a challenge, as determining the optimal number of models can be non-trivial and may require prior knowledge or experimentation.

To address this limitation, future research could explore methods for automatically determining the appropriate number of models based on the characteristics of the environment or through data-driven approaches. Techniques such as model selection criteria, Bayesian optimization, or clustering algorithms could be employed to dynamically adjust the number of models based on observed data and performance metrics.

Additionally, the flexibility of our approach allows for the incorporation of adaptive mechanisms that can dynamically adjust the number of models based on feedback from the learning process. By incorporating such adaptive mechanisms, our approach can become more robust and applicable to a wider range of real-world scenarios where the number of models may vary or change over time.

Overall, removing the need for a predefined number of models is an important direction for future research, as it would improve the scalability and applicability of our approach in practical reinforcement learning applications.

References

Alegre, L.N., Bazzan, A.L.C., da Silva, B.C.: Minimum-delay adaptation in non-stationary reinforcement learning via online high-confidence change-point detection. In: Proceedings of the 20th International Conference on Autonomous Agents and MultiAgent Systems, AAMAS ’21, pp. 97–105. International Foundation for Autonomous Agents and Multiagent Systems, Richland (2021)

Chitnis, R., Silver, T., Tenenbaum, J., Kaelbling, L.P., Lozano-Pérez, T.: GLIB: efficient exploration for relational model-based reinforcement learning via goal-literal babbling, vol. 13B, pp. 11782–11791 (2021). https://www.scopus.com/inward/record.uri?eid=2-s2.0-85100285730&partnerID=40&md5=4e2e2fca957818475950cdcd634527fe

Doya, K., Samejima, K., Katagiri, K.i., Kawato, M.: Multiple model-based reinforcement learning. Neural Comput. 14(6), 1347–1369 (2002). https://doi.org/10.1162/089976602753712972

Fox, R., Krishnan, S., Stoica, I., Goldberg, K.: Multi-level discovery of deep options (2017)

Hallak, A., Castro, D., Mannor, S.: Contextual Markov decision processes (2015)

Kidambi, R., Rajeswaran, A., Netrapalli, P., Joachims, T.: MOReL: model-based offline reinforcement learning. In: NIPS 2020. Curran Associates Inc., Red Hook (2020)

Li, J., Tang, C., Tomizuka, M., Zhan, W.: Hierarchical planning through goal-conditioned offline reinforcement learning. IEEE Rob. Autom. Lett. 7(4), 10216–10223 (2022). https://doi.org/10.1109/LRA.2022.3190100

Li, T., Lambert, N., Calandra, R., Meier, F., Rai, A.: Learning generalizable locomotion skills with hierarchical reinforcement learning. In: 2020 IEEE International Conference on Robotics and Automation (ICRA), pp. 413–419 (2020). https://doi.org/10.1109/ICRA40945.2020.9196642

Lin, D., Zhang, J.: A reinforcement learning based RRT algorithm with value estimation. In: 2022 3rd International Conference on Big Data, Artificial Intelligence and Internet of Things Engineering (ICBAIE), pp. 619–624 (2022). https://doi.org/10.1109/ICBAIE56435.2022.9985840

Ng, J.H.A., Petrick, R.P.A.: Incremental learning of planning actions in model-based reinforcement learning. In: Proceedings of the 28th International Joint Conference on Artificial Intelligence, IJCAI 2019, pp. 3195–3201. AAAI Press (2019)

Rybkin, O., Zhu, C., Nagabandi, A., Daniilidis, K., Mordatch, I., Levine, S.: Model-based reinforcement learning via latent-space collocation, vol. 139, pp. 9190–9201 (2021). https://www.scopus.com/inward/record.uri?eid=2-s2.0-85161314975&partnerID=40&md5=0f9769abe528c97cbf983f602ecff397

da Silva, B., Basso, E., Bazzan, A., Engel, P.: Dealing with non-stationary environments using context detection, vol. 2006, pp. 217–224 (2006). https://doi.org/10.1145/1143844.1143872

Sutton, R., Barto, A.: Reinforcement Learning: An Introduction. MIT Press, Cambridge (2018)

Young, K., Ramesh, A., Kirsch, L., Schmidhuber, J.: The benefits of model-based generalization in reinforcement learning. In: Proceedings of the 40th International Conference on Machine Learning. ICML 2023. JMLR.org (2023)

Acknowledgments

This study was supported in part by the Coordenação de Aperfeiçoamento de Pessoal de Nível Superior (CAPES) – Finance Code 001, and by the Center for Artificial Intelligence (C4AI-USP), with support by FAPESP (grant #2019/07665-4) and by the IBM Corporation.

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Albarrans, G., Freire, V. (2025). Likelihood Estimator for Multi Model-Based Reinforcement Learning. In: Paes, A., Verri, F.A.N. (eds) Intelligent Systems. BRACIS 2024. Lecture Notes in Computer Science, vol 15413. Springer, Cham. https://doi.org/10.1007/978-3-031-79032-4_13

DOI: https://doi.org/10.1007/978-3-031-79032-4_13

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-79031-7

Online ISBN: 978-3-031-79032-4

eBook Packages: Computer Science, Computer Science (R0)