Abstract

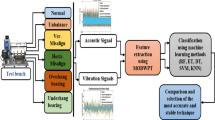

Traditional Machine Fault Detection (MFD) techniques usually rely on multiple sensor data sources, such as vibration, temperature, force, and audio/acoustic signals. Acoustic signals, in particular, are quite appealing in the context of MFD, as they are often among the first manifestations of machine failure. Furthermore, they offer high sensitivity and environmental resilience, and they can be collected without interfering with the machine. Given these compelling characteristics, MFD based exclusively on acoustic signals can be highly beneficial. In this work, we evaluate an unsupervised MFD approach based on Autoencoders (AEs) trained exclusively on features extracted from the acoustic signals of a rotating machine. The data employed in this work comes from the Machinery Fault Database (MaFaulDa), which, besides the acoustic measurements, includes data from vibration sensors and a tachometer. This allows us to compare the performance of the AE models to that of supervised models (such as MLPs) trained on the same acoustic-based feature set, as well as on feature sets that incorporate all sensors from MaFaulDa. Our results suggest that unsupervised MFD based on Autoencoders and acoustic signals is particularly appealing, as it requires only data from normal machine operation for training. Indeed, the AE achieved an AUC of 0.86 on this task.



1 Introduction

Electric motors play a key role in industrial processes. Although generally reliable, they are nonetheless susceptible to malfunction. In industrial environments, unforeseen machinery faults might cause process delays, economic losses, harm to individuals, and even death [1]. Traditional preventive maintenance is often costly and time-consuming, demanding trained personnel and specialized equipment. Advances in the Internet of Things (IoT) have enabled massive data collection which, combined with Machine Learning (ML) techniques, allows for the development of predictive maintenance in the context of Industry 4.0. As the adoption of Industry 4.0 expands, so does the number of works focusing on Machine Fault Detection (MFD) [19, 26].

Given that faults will inevitably occur, it is crucial to detect them as early as possible to prevent further damage. Common approaches to MFD rely primarily on the analysis of vibration, force, temperature, and audio signals [26]. As discussed by Chacon et al. [5], traditional signal-based MFD approaches that rely solely on vibration measurements are not effective for early-stage detection. Acoustic signals, in contrast, should be favored due to their cost-effectiveness, high sensitivity, environmental resilience, ease of deployment, and lack of interference with equipment operation. This point of view is also emphasized by Saufi et al. [21] in their discussion of how failures manifest over time, summarized in Fig. 1. Considering that acoustic signals are among the first manifestations of failure, it is not surprising that their use has been gaining attention in MFD.

Fig. 1. Evolution of an electric rotary machine’s health subsequent to a fault. Important landmarks in fault manifestation are highlighted from 1 to 6. Adapted from [21].

As discussed by different authors, Convolutional Neural Networks (CNNs) and Autoencoders (AEs) are the state-of-the-art ML approaches for acoustic signal-based MFD [14, 21, 26]. Autoencoders are particularly appealing in this setting due to their unsupervised nature: they are trained to reconstruct their own inputs, so no labels are required. As normal operational data from machines is easily obtainable, it can be used to model exclusively their regular behaviour. Faults can later be identified on the basis of their (ideally high) reconstruction error, as the model is not expected to provide a reasonable reconstruction for data that deviates from standard machine operation. This eliminates the need to collect faulty data for model training, which might be difficult and costly. Moreover, collecting training data that represents all fault scenarios might be impractical.

In this work, we present a case study investigating the suitability of Autoencoders for Machine Fault Detection built solely on acoustic features. To that end, we consider the Machinery Fault Database (MaFaulDa) [11], which contains recordings of an electrical rotating machine during normal and faulty operation at different speeds. Each recording is obtained from eight sensors: six accelerometers, a tachometer, and a microphone. It is worth noting that this dataset has already been employed for Machine Fault Detection, but with combined data from all input sensors, as we discuss later.

Here, we place these results in perspective by considering exclusively acoustic data and “vanilla” (standard) Autoencoders. We compare the performance of AEs to that of MLPs trained on statistical and spectral attributes from all the sensors’ signals, similar to the input feature set of previous work on MaFaulDa [11, 20]. The potential of acoustic MFD based on an unsupervised approach (AE) is thus contrasted with that of a supervised method (MLP).

This work is organized as follows. Related work on MaFaulDa is presented in Sect. 2. The case study is described in Sect. 3. The proposed approach for acoustic-based MFD employing Autoencoders is detailed in Sect. 4. The experimental setup adopted for evaluation and comparison, including metrics and baselines, is discussed in Sect. 5. Results are presented in Sect. 6. Final remarks and future work directions are given in Sect. 7.

2 Previous Works on MaFaulDa

Different strategies have already been explored for MFD on MaFaulDa. It is worth noting, however, that the vast majority of these works either employ all signals from the database (six accelerometers, a tachometer, and a microphone) or consider only vibration data.

The works of Ribeiro et al. [20] and Marins et al. [11] extract statistical and spectral features from all the original signals. Both works employ Similarity-Based Modeling (SBM) to classify the signals according to their fault type (five classes) or as normal. A Random Forest is also employed in [11]. In both works, accuracy values higher than 80% are obtained, with the best models reaching accuracies close to 100%. We stress, once more, that all signals are employed.

More recently, Zhao et al. [29] employed a Parallel CNN with an attention mechanism to perform multi-class fault diagnosis on MaFaulDa. The model was trained on accelerometer (vibration) data only, considering four of the database’s classes: normal condition and three fault types. The proposed methodology achieved an accuracy of \(99.6\%\). As previously discussed, however, this approach has a major disadvantage: by relying solely on vibration measurements, the model is not effective for early-stage fault detection.

Finally, Saufi et al. [22] proposed a hybrid approach that employs deep sparse Autoencoders to perform feature extraction from MaFaulDa’s raw vibration signals. Afterwards, a softmax classifier is trained on this data to perform the classification considering four classes: normal condition and three fault types. Their approach reached an accuracy of \(95\%\), but suffers from the same drawback previously discussed, namely, early faults cannot be detected with vibration signals.

3 Case Study

3.1 The MaFaulDa Database

MaFaulDa stands for Machinery Fault Database. The data was acquired by the Federal University of Rio de Janeiro from a SpectraQuest Machinery Fault Simulator (MFS) Alignment-Balance Vibration Trainer (ABVT), which emulates motor dynamics [11]. The database comprises time series recorded at 50 kHz from eight sensors: six accelerometers, a tachometer, and a microphone. These recordings were obtained from a rotating machine operating at different rotation speeds, under normal and faulty conditions. Five fault types are represented: imbalance, horizontal misalignment, vertical misalignment, overhang bearing, and underhang bearing faults. The faulty scenarios cover several degrees of intensity. Overall, the database is heavily imbalanced, with 49 recordings of normal operation and 1,902 of abnormal operation (fault scenarios). The full database, along with further information, is available at [10].

3.2 Dataset Preprocessing

Studies on MFD that consider spectrogram-based features extracted from acoustic signals, as in this case study, are commonly carried out on signals sampled at 16 kHz or less [4, 7, 8, 16, 25, 27]. Indeed, Yi et al. [27] recently observed that, in their application, almost all relevant information was below 8 kHz. We did not carry out an in-depth frequency analysis of MaFaulDa’s acoustic signals to verify whether this observation also holds here. Nevertheless, to reduce the complexity of the models (and thus their computational cost), we down-sampled the database’s acoustic data to 16 kHz, following previous works. Preliminary experiments with models trained on spectrogram features derived from the 50 kHz and 16 kHz signals showed no significant difference in effectiveness.

Down-sampling makes it possible to split each original 50 kHz signal into three 16 kHz signals, by shifting the sampling window by one sample for each sub-sampling group, as depicted in Fig. 2. As a result, three signals with no samples in common are obtained. Even though they are distinct, these sub-sampled signals closely resemble the original one and, therefore, some care must be taken when training and testing the models to allow for a fair evaluation. In brief, sub-sampled signals that stem from the same original signal are all placed together in either the training or the test set, never in both. On the one hand, this prevents training and testing on signals that are too similar to each other, which would yield overoptimistic results. On the other hand, the sub-sampling itself yields a larger number of objects.
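The splitting step amounts to polyphase decimation. The following is a minimal NumPy sketch, assuming plain decimation by a factor of 3 with no anti-aliasing filter (the text does not say whether one was applied). Note that 50 kHz / 3 gives a nominal rate of about 16.7 kHz, which the text refers to as 16 kHz. The function name `polyphase_split` is hypothetical.

```python
import numpy as np


def polyphase_split(signal: np.ndarray, factor: int = 3) -> list[np.ndarray]:
    """Split a 1-D signal into `factor` interleaved sub-signals.

    Sub-signal k keeps samples k, k + factor, k + 2 * factor, ...,
    i.e. the sampling window is shifted by one sample per group, so the
    sub-signals share no samples.
    """
    # Trim the tail so that every sub-signal has the same length.
    usable = (len(signal) // factor) * factor
    trimmed = signal[:usable]
    return [trimmed[k::factor] for k in range(factor)]


# Toy usage: ten samples -> three sub-signals of three samples each.
parts = polyphase_split(np.arange(10))
```

Without a low-pass filter, content above roughly 8.3 kHz aliases into the sub-signals; this sketch only illustrates the sample bookkeeping.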

Following this approach, we ended up with 147 normal acoustic signals and 5,706 abnormal ones (failure cases). Before applying any MFD method, however, feature extraction was performed, as we detail in the next section.

3.3 Feature Extraction

In the context of MFD based on acoustic signals, spectrogram-based approaches are often employed for feature engineering, i.e., feature extraction, with Mel Frequency Energy Coefficients (MFECs) being the preferred representation [14, 26]. In brief, computing MFECs consists of applying the Short-Time Fourier Transform (STFT), which, in turn, corresponds to performing the Fast Fourier Transform (FFT) on overlapping windowed segments of the acoustic signal. The resulting spectral magnitudes are then grouped into bins (a.k.a. mel bins) by a bank of triangular filters. Finally, their logarithm is taken (more details on the feature extraction procedure can be found in [3, 26]). The final spectrogram divides the time dimension into ranges and maps each time range to a column of bins, where each bin is an MFEC and each column is termed a frame, as depicted in Fig. 3.

Fig. 3. The acoustic signal (left) is converted to an MFEC space (center). Once the frame stack and frame hop sizes are defined, frame stacks are obtained. These constitute the dataset (right) that is later provided as input to the models. In this example, the frame hop and frame stack sizes are set to 3 and 4, respectively. At the center, Frame Stack 2 is highlighted (a total of three frame stacks are obtained).
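The MFEC pipeline described above (windowed FFT, triangular mel filters, logarithm) can be sketched in NumPy as follows. This is an illustration under stated assumptions: an HTK-style mel scale, a Hann window, and power spectra. Since Table 1 is not reproduced here, the defaults for `n_fft`, `hop`, and `n_mels` are hypothetical placeholders, not the paper’s settings.

```python
import numpy as np


def hz_to_mel(f):
    # HTK-style mel scale (an assumption; other mel formulas exist).
    return 2595.0 * np.log10(1.0 + np.asarray(f) / 700.0)


def mel_to_hz(m):
    return 700.0 * (10.0 ** (np.asarray(m) / 2595.0) - 1.0)


def mel_filterbank(sr, n_fft, n_mels):
    """Triangular filters, shape (n_mels, n_fft // 2 + 1)."""
    fft_freqs = np.linspace(0.0, sr / 2.0, n_fft // 2 + 1)
    # n_mels + 2 equally spaced mel points give the filter edges and centers.
    mel_points = np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2.0), n_mels + 2)
    hz_points = mel_to_hz(mel_points)
    fb = np.zeros((n_mels, fft_freqs.size))
    for m in range(n_mels):
        left, center, right = hz_points[m], hz_points[m + 1], hz_points[m + 2]
        rising = (fft_freqs - left) / (center - left)
        falling = (right - fft_freqs) / (right - center)
        fb[m] = np.maximum(0.0, np.minimum(rising, falling))
    return fb


def mfec(signal, sr=16000, n_fft=1024, hop=512, n_mels=64, eps=1e-10):
    """Log mel-band energies, shape (n_mels, n_frames); one column per frame."""
    window = np.hanning(n_fft)
    n_frames = 1 + (len(signal) - n_fft) // hop
    # STFT: FFT of overlapping, windowed segments.
    segments = np.stack([signal[i * hop: i * hop + n_fft] * window
                         for i in range(n_frames)])
    power = np.abs(np.fft.rfft(segments, axis=1)) ** 2          # (n_frames, n_fft//2+1)
    mel_energy = mel_filterbank(sr, n_fft, n_mels) @ power.T    # (n_mels, n_frames)
    return np.log(mel_energy + eps)                             # eps avoids log(0)
```

For a one-second 1 kHz tone at 16 kHz, these placeholder settings give a 64 × 30 spectrogram whose strongest band is centered near 1 kHz.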

In this work, we adopted the frame-stacking approach, a traditional methodology for composing the objects of a dataset from mel-spectrograms [26]. This strategy builds each data object from a group of consecutive frames, referred to as a frame stack. Consecutive frame stacks are shifted from each other by a number of frames, i.e., the frame hop. Note that if the frame hop is smaller than the frame stack size, successive frame stacks overlap. Each acoustic signal is processed into a batch of frame stacks. The final dataset is formed by the collection of all frame stacks (objects) extracted from all the available acoustic signal segments (recordings) for the phenomenon under study. The process is illustrated in Fig. 3.
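Frame stacking can be sketched as a sliding window over spectrogram columns. In this minimal NumPy version, the frame-major flattening order is an assumption (the text does not specify it), and the function name `frame_stacks` is hypothetical. The object length is the number of mel bins times the stack size, which is 1,600 in this work, although the text does not state how that product splits.

```python
import numpy as np


def frame_stacks(spec: np.ndarray, stack_size: int, hop: int) -> np.ndarray:
    """Turn a (n_mels, n_frames) spectrogram into flattened frame stacks.

    Returns shape (n_stacks, n_mels * stack_size); each row is one data
    object. Stacks overlap whenever hop < stack_size.
    """
    n_mels, n_frames = spec.shape
    n_stacks = (n_frames - stack_size) // hop + 1
    if n_stacks < 1:  # signal too short for a single stack
        return np.empty((0, n_mels * stack_size), dtype=spec.dtype)
    # Frame-major flattening: the columns (frames) of a stack are concatenated.
    return np.stack([
        spec[:, i * hop: i * hop + stack_size].T.reshape(-1)
        for i in range(n_stacks)
    ])


# Fig. 3 setting: hop 3, stack size 4; ten frames yield three stacks.
demo = frame_stacks(np.arange(20).reshape(2, 10), stack_size=4, hop=3)
```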

Considering this procedure, Table 1 shows the parameter values adopted for feature extraction in this work. Parameter values for the STFT were set based on [3, 17, 24]. Parameter values for the number of mel bins, the frame stack size, and the frame hop size were determined empirically. Applying this feature engineering to the outcome of data preprocessing (Sect. 3.2), we obtained a dataset of 409,710 objects (10,290 normal and 399,420 abnormal), each with 1,600 dimensions.
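These object counts are consistent with the signal counts of Sect. 3.2: dividing each class’s number of objects by its number of 16 kHz signals gives the same number of frame stacks per signal for both classes.

```latex
\frac{10{,}290}{147} = \frac{399{,}420}{5{,}706} = 70,
\qquad
(147 + 5{,}706) \times 70 = 409{,}710 .
```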

4 Machine Fault Detection Based on Autoencoders

In brief, Autoencoders (AEs) are Artificial Neural Networks (ANNs) designed primarily for input reconstruction, that is, the model is trained to reproduce inputs as outputs [6]. A vanilla autoencoder architecture has three distinct elements: encoder, bottleneck, and decoder. The encoder processes the input feature vector, reducing its original dimension. The smallest representation obtained with the encoder constitutes the bottleneck (a.k.a. latent space) of the model. This representation is, in turn, the input for the decoder, which aims to reconstruct the original input from the compressed information.

The relevance of AEs lies not only in their input reconstruction capabilities, but also in their ability to compress the input, which forces the model to capture the fundamental aspects needed for its reconstruction. Due to the constraints imposed by the bottleneck, AEs are compelled to represent internally only the characteristics that matter for reconstruction, often learning interesting properties of the data in the process [6]. Despite being trained with the same algorithms as supervised models (e.g., backpropagation), AEs are regarded as unsupervised methods, given that no labeled data is needed [6].

These characteristics are appealing in the context of MFD. In brief, after being successfully trained exclusively on data from normal machine behaviour, an AE can reconstruct new instances drawn from healthy machine operation with small errors. However, when instances of faulty machine operation are presented to the same AE, a higher reconstruction error is expected, since the model was not trained to represent such phenomena. Reconstruction errors can be quantified and, if they exceed some pre-defined threshold, flagged as faults. The whole procedure is summarized in Fig. 4. Besides the feature extraction procedure, already discussed in Sect. 3.3, it remains to define the reconstruction error and the threshold used to determine a fault. This is done in the following.
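The detection principle can be illustrated with a toy example. To keep it short and deterministic, this sketch replaces gradient training with the closed-form optimum of a *linear* autoencoder with a k-unit bottleneck: under MSE loss, that optimum projects onto the top-k principal subspace, which an SVD provides directly. This is a stand-in, not the ReLU model of Sect. 5.1, and the synthetic “normal” and “faulty” data are made up for illustration.

```python
import numpy as np


class LinearAutoencoder:
    """Linear AE with a k-unit bottleneck, fitted in closed form via SVD."""

    def __init__(self, k: int):
        self.k = k

    def fit(self, X: np.ndarray) -> "LinearAutoencoder":
        self.mean_ = X.mean(axis=0)
        _, _, vt = np.linalg.svd(X - self.mean_, full_matrices=False)
        self.components_ = vt[: self.k]                 # (k, d)
        return self

    def encode(self, X):
        return (X - self.mean_) @ self.components_.T    # bottleneck codes (n, k)

    def decode(self, Z):
        return Z @ self.components_ + self.mean_        # reconstructions (n, d)

    def reconstruction_error(self, X):
        # Mean squared error per object, averaged over its d dimensions.
        return np.mean((X - self.decode(self.encode(X))) ** 2, axis=1)


rng = np.random.default_rng(0)
d, k = 40, 4
basis = rng.standard_normal((k, d))
# "Normal" data lies near a k-dimensional subspace; "faulty" data does not.
normal_train = rng.standard_normal((500, k)) @ basis + 0.05 * rng.standard_normal((500, d))
normal_test = rng.standard_normal((100, k)) @ basis + 0.05 * rng.standard_normal((100, d))
faulty_test = rng.standard_normal((100, d))

ae = LinearAutoencoder(k).fit(normal_train)             # trained on normal data only
err_normal = ae.reconstruction_error(normal_test)
err_faulty = ae.reconstruction_error(faulty_test)
```

Because the model has only learned the structure of normal data, the faulty objects fall outside its subspace and are reconstructed far worse, so a threshold between the two error ranges separates them.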

When presented with an unseen frame stack (\(\textbf{fs}\)), an Autoencoder produces as output a reconstructed version of the input (\(\widehat{\textbf{fs}}\)). The reconstruction error of the AE (re) is computed as the average of the individual errors obtained for each pair of corresponding bins (b) of the input frame stack (\(\textbf{fs}_{b}\)) and the reconstructed stack (\(\widehat{\textbf{fs}}_b\)). Since both stacks have the same length (\(l_{fs}\)), Equation (1) provides the definition of the reconstruction error (re).
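A plausible form of Eq. (1), assuming the per-bin error is the squared difference (consistent with the MSE loss used for training in Sect. 5.1; an absolute difference would be an equally valid reading of the text), is:

```latex
re(\textbf{fs}, \widehat{\textbf{fs}})
  = \frac{1}{l_{fs}} \sum_{b=1}^{l_{fs}}
    \left( \textbf{fs}_{b} - \widehat{\textbf{fs}}_{b} \right)^{2}
```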

Here, we recall that each 16 kHz acoustic signal yielded a number of frame stacks (\(n_{fs}\))—see Sect. 3.3 for details. We perform fault detection at a signal level, that is, we evaluate the 16 kHz signal reconstruction error score (\(s_{re}\)), computed as the average over all its frame stacks’ reconstruction errors, so that \(s_{re} = {1}/{n_{fs}} \sum _{i = 1}^{n_{fs}} re(\textbf{fs}(i),\widehat{\textbf{fs}}(i))\), where \(\textbf{fs}(i)\) is a frame stack at index i.
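The per-stack error re and the signal-level score \(s_{re}\) can be sketched as follows, again assuming a squared per-bin error. Rows are frame stacks of a single 16 kHz signal; the function names are hypothetical.

```python
import numpy as np


def reconstruction_error(fs: np.ndarray, fs_hat: np.ndarray) -> np.ndarray:
    """re for each frame stack: mean squared error over its l_fs bins.

    fs, fs_hat: arrays of shape (n_fs, l_fs), one row per frame stack.
    """
    return np.mean((fs - fs_hat) ** 2, axis=1)


def signal_score(fs: np.ndarray, fs_hat: np.ndarray) -> float:
    """s_re: average of re over all n_fs frame stacks of one signal."""
    return float(np.mean(reconstruction_error(fs, fs_hat)))
```

With 70 frame stacks per signal in this dataset, each signal score averages 70 per-stack errors, which smooths out occasional poorly reconstructed stacks.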

With that in mind, faulty behaviour can be identified when an acoustic signal yields a reconstruction error score (\(s_{re}\)) higher than a pre-defined acceptable threshold. To determine this threshold, we resort to the Youden index in the context of ROC curves [28], which identifies the score with the greatest vertical distance to the chance line and has been widely employed to select the best threshold cutoff [15]. It seeks to maximize the effectiveness of a binary model by indicating the cutoff at which the sum of TPR (True Positive Rate) and TNR (True Negative Rate) is maximal. The optimal cutoff (\(c^*\)) is given by Eq. (2).
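In its standard form, the Youden index of a cutoff \(c\) is \(J(c) = TPR(c) + TNR(c) - 1\), and the optimal cutoff is \(c^* = \arg\max_c J(c)\). A minimal NumPy sketch, assuming that higher scores mean “faulty” (as for \(s_{re}\)) and that the candidate cutoffs are the observed scores themselves (a common choice, not stated in the text):

```python
import numpy as np


def youden_cutoff(scores, labels) -> float:
    """Return the cutoff maximizing J = TPR + TNR - 1.

    scores: higher means more likely faulty; labels: 1 = faulty, 0 = normal.
    A signal is predicted faulty when score >= cutoff. Ties in J keep the
    lowest cutoff.
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels).astype(bool)
    best_c, best_j = None, -np.inf
    for c in np.unique(scores):
        pred = scores >= c
        tpr = np.mean(pred[labels])        # faulty signals correctly flagged
        tnr = np.mean(~pred[~labels])      # normal signals correctly passed
        j = tpr + tnr - 1.0
        if j > best_j:
            best_c, best_j = c, j
    return float(best_c)
```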

It is worth noting that the cutoff was calibrated with the Youden index on the training and validation sets only, never on the test set (that is, the cutoff was estimated as in a real application scenario). Given that a 5-fold cross-validation was employed for that purpose (details are provided in Sect. 5), one cutoff is obtained per validation fold, and four approaches were considered to combine them into the single threshold later applied to the test fold, namely: minimum (min), maximum (max), median, and mean.
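Combining the five per-fold cutoffs can be sketched in a few lines. The cutoff values below are hypothetical, chosen only for illustration, and the names `AGGREGATORS` and `aggregate_cutoff` are likewise hypothetical.

```python
import numpy as np

# One Youden cutoff per validation fold (hypothetical values).
fold_cutoffs = np.array([0.42, 0.47, 0.39, 0.51, 0.45])

AGGREGATORS = {
    "min": np.min,
    "max": np.max,
    "median": np.median,
    "mean": np.mean,
}


def aggregate_cutoff(cutoffs, how: str) -> float:
    """Collapse the per-fold cutoffs into the single test-fold threshold."""
    return float(AGGREGATORS[how](np.asarray(cutoffs, dtype=float)))


thresholds = {how: aggregate_cutoff(fold_cutoffs, how) for how in AGGREGATORS}
```

Since a signal is flagged as faulty when its score exceeds the threshold, the min option flags the most signals (favoring TPR) and the max option the fewest (favoring TNR).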

5 Experimental Setup

5.1 Architecture of the Autoencoder

We evaluate Autoencoders w.r.t. their ability to model the behaviour of normal-condition frame stacks extracted from the acoustic signals. The AE input/output size is 1,600, i.e., the frame stack length obtained with the parameters presented in Table 1. We performed a grid search over the number of layers \([2,4,\ldots ,10,12]\), the number of neurons per inner layer \([400, 800,\ldots , 3200, 3600]\), and the number of neurons in the bottleneck layer \([4,8,16,\ldots ,128,256,512]\). The L2 penalty value was also included in the grid search, with the following candidate values: [0.01, 0.1, 0.5, 1, 1.3, 1.5, 1.8, 2]. The most promising set of hyper-parameters was: six inner layers (encoder and decoder combined), each with 1,600 neurons, except for the bottleneck, which narrows from 1,600 to 16 neurons, and an L2 penalty of 1.0. This is the architecture employed in our evaluation. The ReLU activation function provided the best results, and a batch normalization layer was applied to the input. We trained our models using the Mean Squared Error (MSE) loss function, the Adam optimizer, and a learning rate of \(10^{-5}\). Figure 5 summarizes the design. Note that the output layer is linear, i.e., the reconstruction is a direct linear combination of the last layer’s activations.
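The forward pass and loss described above can be sketched in NumPy, which is useful for checking shapes. The layer layout is a hypothetical reading of “six inner layers”: six dense layers in total, 1,600 → 1,600 → 1,600 → 16 → 1,600 → 1,600 → 1,600, the last one linear. Batch normalization is simplified to standardizing the batch without learned scale and shift, weights are randomly initialized, training with Adam is omitted, and the exact scaling of the L2 penalty used by the authors is not stated.

```python
import math

import numpy as np

# Hypothetical layout: six dense layers, 16-unit bottleneck, linear output.
LAYER_SIZES = [1600, 1600, 1600, 16, 1600, 1600, 1600]


def init_params(sizes, seed=0):
    """He-initialized float32 weights and zero biases, one pair per dense layer."""
    rng = np.random.default_rng(seed)
    params = []
    for fan_in, fan_out in zip(sizes[:-1], sizes[1:]):
        w = rng.standard_normal((fan_in, fan_out), dtype=np.float32) * math.sqrt(2.0 / fan_in)
        params.append((w, np.zeros(fan_out, dtype=np.float32)))
    return params


def forward(x, params, eps=1e-5):
    """Input batch normalization, ReLU hidden layers, linear output layer."""
    h = (x - x.mean(axis=0)) / np.sqrt(x.var(axis=0) + eps)
    for i, (w, b) in enumerate(params):
        h = h @ w + b
        if i < len(params) - 1:            # every layer but the output uses ReLU
            h = np.maximum(h, 0.0)
    return h


def loss(x, params, l2=1.0):
    """MSE between reconstruction and input, plus l2 * sum of squared weights."""
    mse = np.mean((forward(x, params) - x) ** 2)
    penalty = sum(np.sum(w ** 2) for w, _ in params)
    return float(mse + l2 * penalty)
```

With `init_params(LAYER_SIZES)`, the third weight matrix has shape (1600, 16), i.e., the bottleneck.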

5.2 Baselines

Several studies have been carried out on the MaFaulDa database. The majority of them focus on multi-class classification and employ either all eight sensors or only accelerometer data [11,12,13, 20, 23]. In this work, we focus only on the acoustic signal, in order to establish whether it is sufficient to detect faults in a binary fashion (i.e., we do not perform fault isolation). We compare the Autoencoder approach against four baseline models, all based on MLPs (Multilayer Perceptrons). All baselines are evaluated on the same binary task of determining whether the machine is under normal or faulty operation. The baselines differ in their feature spaces, as we detail in the following.

The literature considers statistical and spectral features a traditional and efficient way to engineer features for Machine Fault Detection [18, 26]. In fact, former works on MaFaulDa relied on this approach, considering all eight available signals [11, 20]. A total of 13 features are extracted from each raw signal, namely: root mean square; square root of the signal’s amplitude; kurtosis value; kurtosis factor; skewness value; peak-to-peak value; crest factor; impulse factor; margin factor; shape factor; frequency center; root variance frequency; and RMS frequency. For further information on these features, see [18].
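A NumPy sketch of these 13 features follows, using one common set of definitions from the condition-monitoring literature (standardized kurtosis and skewness, spectral moments weighted by the FFT magnitude). Normalization conventions vary across papers, so [18] may define some of them, notably the kurtosis factor and the square root of the amplitude, differently. The function name is hypothetical.

```python
import numpy as np


def stat_spectral_features(x, sr: float) -> dict:
    """13 statistical and spectral features of one raw signal."""
    x = np.asarray(x, dtype=float)
    mu, sigma = x.mean(), x.std()
    abs_x = np.abs(x)
    peak = abs_x.max()
    mean_abs = abs_x.mean()
    rms = np.sqrt(np.mean(x ** 2))
    sra = np.mean(np.sqrt(abs_x)) ** 2           # square root of the amplitude
    kv = np.mean(((x - mu) / sigma) ** 4)        # kurtosis value
    sv = np.mean(((x - mu) / sigma) ** 3)        # skewness value

    # Magnitude spectrum and its frequency axis (Hz).
    mag = np.abs(np.fft.rfft(x))
    freqs = np.fft.rfftfreq(x.size, d=1.0 / sr)
    fc = np.sum(freqs * mag) / np.sum(mag)       # frequency center

    return {
        "rms": rms,
        "sra": sra,
        "kurtosis_value": kv,
        "kurtosis_factor": kv / rms ** 4,
        "skewness_value": sv,
        "peak_to_peak": x.max() - x.min(),
        "crest_factor": peak / rms,
        "impulse_factor": peak / mean_abs,
        "margin_factor": peak / sra,
        "shape_factor": rms / mean_abs,
        "frequency_center": fc,
        "root_variance_frequency": np.sqrt(np.sum((freqs - fc) ** 2 * mag) / np.sum(mag)),
        "rms_frequency": np.sqrt(np.sum(freqs ** 2 * mag) / np.sum(mag)),
    }
```

Applied to each of the eight signals of a recording, this yields the 104 inputs of B104; applied to the acoustic signal alone, the 13 inputs of B13.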

The first two baselines were trained with the statistical and spectral features listed above. The first, referred to as B104, is an MLP with 104 inputs, 13 for each of the eight sensor signals from MaFaulDa. The second, referred to as B13, is an MLP with 13 inputs, based only on the 13 features extracted from the acoustic signal. The remaining two baselines are referred to as MLP and cMLP (constrained MLP, due to a bottleneck in its architecture), respectively. These are trained with the very same input features provided to the Autoencoder, that is, the MFEC-based feature space described in Sect. 3.3.

Regarding B104 and B13, statistical and spectral features are extracted from the relevant signals of a given recording (all eight for B104, the acoustic one for B13) and provided as inputs. Once trained, each model outputs a single representative score (\(S_B\)) in the range [0, 1]. MLP and cMLP likewise produce a single score per input. Recall, however, that these two models take a frame stack (\(\textbf{fs}\)) as input. Therefore, for a given 16 kHz acoustic signal with \(n_{fs}\) frame stacks, we take the average of the scores predicted for all its frame stacks (\(score(\textbf{fs})\)) as the signal’s representative score (\(S_{mlp}\)), so that \(S_{mlp} = {1}/{n_{fs}}\sum _{i=1}^{n_{fs}} score({{ \textbf{fs}}(i)})\), where \(\textbf{fs}(i)\) is the frame stack at index i.

The representative scores (\(S_B\) and \(S_{mlp}\)) of all baselines were calibrated following the same procedure employed for the Autoencoder, i.e., using the Youden index. The hyper-parameters of each baseline were individually fine-tuned with a grid search, and those that led to the best MFD performance were chosen, resulting in the configurations presented in Table 2.

5.3 Training of Score Generators

To estimate the quality of all models, a stratified \(5 \times 5\) nested cross-validation was employed. In brief, it comprises two nested 5-fold cross-validation loops. The outer loop separates the data for testing, and the remaining data (referred to as full-train) is subjected to another 5-fold cross-validation, which further divides it into training and validation sets. These two sets are employed for hyper-parameter estimation and for calibrating the thresholds of the fault detectors. Finally, the full-train set is used to train each model with the best parameters found. All models are then evaluated on the test set, which was not used at any earlier stage.

The dataset is split at the signal-segment level. First, all original signals are distributed among the cross-validation folds. Afterwards, each original acoustic segment is replaced with its three sub-sampled acoustic signals (see Sect. 3.2). Finally, feature extraction is performed on these. This approach prevents a model from being trained and tested on sub-samples that stem from the same acoustic signal, which would bias the evaluation and yield overconfident results. The first two baselines (traditional feature extraction) were trained with features extracted from the original signals.
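The nested, signal-level splitting can be sketched with NumPy alone. The round-robin stratified fold assignment and the numbering of sub-signal j of recording i as `i * 3 + j` are illustrative assumptions; a library splitter supporting groups and stratification would serve equally well. Function names are hypothetical.

```python
import numpy as np


def stratified_folds(labels: np.ndarray, k: int, rng) -> np.ndarray:
    """Assign each item a fold id in [0, k), balancing classes across folds."""
    folds = np.empty(labels.size, dtype=int)
    for cls in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == cls))
        folds[idx] = np.arange(idx.size) % k      # round-robin within each class
    return folds


def nested_splits(labels: np.ndarray, k: int = 5, n_sub: int = 3, seed: int = 0):
    """Yield (train, val, test) sub-signal indices for a k x k nested CV.

    Splits are made on original recordings; each recording i is then
    expanded into its sub-signals i * n_sub + j, so sub-signals of the same
    recording never end up on both sides of a split.
    """
    rng = np.random.default_rng(seed)

    def expand(ids):
        return (ids[:, None] * n_sub + np.arange(n_sub)).ravel()

    outer = stratified_folds(labels, k, rng)
    for t in range(k):
        test_ids = np.flatnonzero(outer == t)
        full_train = np.flatnonzero(outer != t)
        inner = stratified_folds(labels[full_train], k, rng)
        for v in range(k):
            val_ids = full_train[inner == v]
            train_ids = full_train[inner != v]
            yield expand(train_ids), expand(val_ids), expand(test_ids)
```

With MaFaulDa’s 49 normal and 1,902 faulty recordings, this yields 25 (train, validation, test) triples, and every recording lands in exactly one outer test fold.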

5.4 Evaluation Metrics

In this work, following traditional convention, we consider the normal class negative and the faulty class positive. Given that we are dealing with a dataset heavily imbalanced towards the positive class, metrics such as accuracy are not appropriate for evaluation. Therefore, we base our analysis on the Area Under the ROC Curve (AUC), the True Positive Rate (TPR), the True Negative Rate (TNR), and the G-means. The G-means metric was introduced by [9]. It is defined as \(\text{G-means} = \sqrt{TPR \cdot TNR} = \sqrt{\text{Sensitivity} \cdot \text{Specificity}}\) and is maximized when sensitivity and specificity are both high and balanced. Conversely, a low G-means score indicates a model that performs poorly on at least one of the classes, even if it correctly classifies the other.
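The metrics can be computed with a few lines of NumPy. The AUC here uses the Mann-Whitney formulation (the probability that a random faulty signal scores higher than a random normal one, counting ties as one half), which is one standard way to obtain a threshold-free AUC. The function names are hypothetical.

```python
import numpy as np


def rates(y_true, y_pred):
    """TPR and TNR with faulty = positive (1) and normal = negative (0)."""
    y_true, y_pred = np.asarray(y_true, bool), np.asarray(y_pred, bool)
    tpr = np.mean(y_pred[y_true])
    tnr = np.mean(~y_pred[~y_true])
    return float(tpr), float(tnr)


def g_means(y_true, y_pred) -> float:
    tpr, tnr = rates(y_true, y_pred)
    return float(np.sqrt(tpr * tnr))


def auc(y_true, scores) -> float:
    """Threshold-free AUC via the Mann-Whitney statistic (ties count 1/2)."""
    y_true = np.asarray(y_true, bool)
    s = np.asarray(scores, float)
    pos, neg = s[y_true], s[~y_true]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (pos.size * neg.size))


# Imbalance example: predicting "faulty" for everything gives 90% accuracy
# on 2 normal / 18 faulty signals, yet TNR = 0 and therefore G-means = 0.
y = np.array([0, 0] + [1] * 18)
all_faulty = np.ones(20, dtype=bool)
```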

6 Results and Discussion

The results of the evaluation of the Autoencoder and the four baselines are presented in Fig. 6 and Table 3. These results were obtained with a 5-fold cross-validation procedure considering the following metrics: G-means, AUC, TPR, and TNR, for which we report both averages and standard deviations. Recall that for each method a threshold must be established in order to perform fault detection. We considered four approaches to select this threshold from those of the validation folds (minimum, maximum, mean, and median).

Fig. 6. Performance of the different models w.r.t. Machine Fault Detection (MFD). The proposed model (AE) and the baselines MLP and cMLP were built using only MFEC-based features extracted from the acoustic signal. Baselines B104 and B13 were built with statistical and spectral features from all signals and from the acoustic signal only, respectively. The figure shows the average AUC, G-means, TNR, and TPR for each threshold approach: mean, median, minimum, and maximum.

We begin our assessment with the AUC metric. Note that AUC is threshold-agnostic, that is, no particular threshold is considered (for that reason, there is only one AUC value per method in Table 3). For this measure, baseline B104, the MLP built on spectral and statistical features from all eight sensors, is the most promising model. The other baseline built on traditional features, which considers only acoustic signals (B13), however, performs poorly, with an AUC of \(0.63\pm 0.06\), only modestly above the 0.5 of a random classifier. The remaining methods, namely the AE and the MLP and cMLP baselines, also perform well in terms of AUC. These three are built on MFEC features extracted from the acoustic signals only. Among them, the AE was the worst performer (around 10% worse than the two MLPs). It is important to recall, however, that the AE model does not require labeled data.

The performance of the models in terms of G-means follows a pattern similar to that of the AUC analysis. The most accurate fault detector is still B104 (with a performance similar to that observed in previous works on MaFaulDa), followed by both MLPs and the AE. For B104, the four thresholds yield almost the same performance. The MLP models are only slightly affected by threshold selection, whereas for the AE model, the mean and median thresholds taken from the validation folds are more promising. Once again, the model built on statistical and spectral features of the acoustic signal alone (B13) was the worst performer among all models under evaluation.

The TNR and TPR values provide some insight into the G-means results. The standard deviation of TNR is higher, which might be explained by the small number of negative (normal-behaviour) samples. The AE model predominantly exhibits higher TNR than TPR values. This might indicate that the threshold selected from the validation folds is too high. Indeed, for the min threshold, the AE’s TPR is higher than its TNR. This effect might indicate that the AE did not fully learn to represent the normal signal, which, in turn, might result in reconstruction errors for abnormal signals (faults) that are not clearly distinguishable from those of normal ones.

Even though the Autoencoder approach did not provide the best results, we believe that taking this result on its own would be misleading. To fully appreciate these results, we must take into account some key points, discussed next.

First, these results should be appreciated in light of the severe imbalance in the MaFaulDa database towards the positive (faulty) class. The AEs were trained on a subset of only 10,290 normal objects (Note 1). Meanwhile, the MLP baselines that employed the same feature space (MLP and cMLP) were trained on a subset of 409,710 objects (10,290 normal and 399,420 faulty). In brief, these baselines had almost 40 times more data available for training, which might have helped them better represent the phenomenon under investigation, enhancing their performance.

Second, it is important to highlight that the Autoencoder (AE) is an unsupervised method, whereas all baselines are supervised. This has major practical implications, given that the AE model depends exclusively on normal operation data from a machine for its training. None of the baselines, on the other hand, can be trained without labeled data (normal and faulty). Faulty data might not only be difficult to obtain, but also costly. Moreover, it is almost impossible to foresee all fault types and magnitudes in real-world scenarios.

7 Conclusions

In this work, we considered a case study on fault detection for electrical rotating machines. More specifically, we evaluated unsupervised fault detection with Autoencoders, based exclusively on acoustic data. Our case study was based on the Machinery Fault Database (MaFaulDa), which is publicly available [10]. Following good research practices, our experiments are fully reproducible, with all source code publicly available at [2].

Our proposed approach was evaluated against baselines built on two feature extraction techniques: (i) statistical and spectral features (a “traditional” way of dealing with acoustic signals); and (ii) the very same MFEC-based feature space employed by our proposed approach. To place the results in perspective, we also considered a baseline that took as input, besides the acoustic signal, the other signals from the rotating machine.

Results indicate that a Multilayer Perceptron trained on data from all the sensors available in MaFaulDa (the baseline referred to as B104) provides the best overall results, close to perfect fault detection. Another MLP baseline (B13), which considered only statistical and spectral features of the acoustic signal, did not provide promising results; in fact, its AUC values were close to that of a chance classifier. The remaining baselines (MLP and cMLP), based on acoustic signals processed with the same feature extraction procedure as the AE, provided favorable results.

We argue that the results obtained in this case study are encouraging and promising, despite the fact that the Autoencoder model was not the top performer. The key points to support this claim are discussed in the following.

First and foremost, our experiments confirm that fault detection based exclusively on acoustic signals is viable, even when acoustic data collection was not optimized. We believe this is the case for MaFaulDa, since multiple sensors were recorded, with no particular focus on acoustic signals.

Second, the Autoencoder was the only unsupervised model under evaluation. It is important to emphasize that it was trained only with normal-behaviour data, which, for MaFaulDa, constitutes the minority class. Indeed, the normal class has around 40 times fewer objects than the faulty one. Even with this severe imbalance, the Autoencoder achieved an AUC of 0.86, only 12% below the supervised MLP models trained on both normal and faulty data. Still considering the learning paradigms employed by the models, we argue that the Autoencoder is preferable in real-world application scenarios, as obtaining faulty data is not only time-consuming and costly, but might also pose a risk to equipment and people’s lives.

In light of these facts, we believe that future work on acoustic signals and unsupervised, Autoencoder-based fault detection is promising and worth pursuing. We therefore plan to explore Autoencoder architectures beyond the “vanilla” one used in this work; Convolutional and Variational Autoencoders are of particular interest due to their compelling properties. Different approaches for determining decision thresholds will also be explored.
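As an illustration of what threshold selection involves, the sketch below shows two common options, assuming the anomaly score is a reconstruction error where higher means "more likely faulty". `youden_threshold` maximizes Youden's J statistic (J = TPR - FPR) [28], which requires labeled validation data containing both classes (see also [15] on how different cutpoint criteria can disagree). `percentile_threshold` uses only scores of normal data, in line with the unsupervised setting. Both function names and the 95th-percentile default are hypothetical, and neither is claimed to be the procedure used in this work.

```python
import numpy as np


def youden_threshold(scores, labels):
    """Threshold maximizing Youden's J = TPR - FPR; predict faulty if score >= threshold.

    Requires labeled data with both classes (1 = faulty, 0 = normal).
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels)
    n_pos, n_neg = np.sum(labels == 1), np.sum(labels == 0)
    best_t, best_j = None, -np.inf
    for t in np.unique(scores):  # candidate thresholds, in ascending order
        pred = scores >= t
        tpr = np.sum(pred & (labels == 1)) / n_pos
        fpr = np.sum(pred & (labels == 0)) / n_neg
        if tpr - fpr > best_j:  # strict '>' keeps the lowest maximizing threshold
            best_t, best_j = t, tpr - fpr
    return best_t, best_j


def percentile_threshold(normal_scores, q=95.0):
    """Unsupervised alternative: the q-th percentile of scores on normal data only."""
    return np.percentile(np.asarray(normal_scores, dtype=float), q)
```

The percentile rule fixes the expected false-alarm rate on normal data (about 5% for `q=95`) without needing any faulty examples, whereas Youden's J balances both error types but depends on labeled faults.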

Notes

1. Note that 5-fold cross-validation was employed to estimate model performance, which means that, due to the train/test split, not all of the data was available for training in each fold.
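The protocol in this note can be sketched as follows, assuming stratified folds (so that each fold keeps MaFaulDa's roughly 40:1 faulty-to-normal ratio) and assuming that, for the Autoencoder, only the normal samples of the training folds are used for fitting while the held-out fold keeps both classes for scoring. The generator name and seed are hypothetical. With k = 5, only four fifths of the already scarce normal samples are available for training in each fold.

```python
import numpy as np


def normal_only_folds(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) per fold; train_idx keeps only normal (label 0) samples."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    fold_of = np.empty(len(labels), dtype=int)
    # Stratify: shuffle each class separately and deal its samples round-robin into k folds
    for c in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == c))
        fold_of[idx] = np.arange(len(idx)) % k
    for f in range(k):
        test_idx = np.flatnonzero(fold_of == f)                    # both classes
        train_idx = np.flatnonzero((fold_of != f) & (labels == 0))  # normal only
        yield train_idx, test_idx
```

Scoring each held-out fold with a model fitted on its `train_idx`, then averaging the per-fold AUC values, gives a cross-validated estimate of the kind reported above.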

References

1. Alharbi, F., et al.: A brief review of acoustic and vibration signal-based fault detection for belt conveyor idlers using machine learning models. Sensors 23(4), 1902 (2023). https://doi.org/10.3390/s23041902

2. Bortoni, L.: Gitlab/leobortoni (2024). https://gitlab.com/LeoBortoni/mafaulda-stats-classifiers

3. Coelho, G., et al.: Deep autoencoders for acoustic anomaly detection: experiments with working machine and in-vehicle audio. Neural Comput. Appl. 34(22), 19485–19499 (2022). https://doi.org/10.1007/s00521-022-07375-2

4. Dohi, K., et al.: MIMII DG: sound dataset for malfunctioning industrial machine investigation and inspection for domain generalization task. arXiv preprint arXiv:2205.13879 (2022)

5. Ferrando Chacon, J.L., et al.: A novel approach for incipient defect detection in rolling bearings using acoustic emission technique. Appl. Acoust. 89, 88–100 (2015). https://doi.org/10.1016/j.apacoust.2014.09.002

6. Goodfellow, I., Bengio, Y., Courville, A.: Deep Learning. MIT Press (2016). http://www.deeplearningbook.org

7. Harada, N., Niizumi, D., Takeuchi, D., Ohishi, Y., Yasuda, M., Saito, S.: ToyADMOS2: another dataset of miniature-machine operating sounds for anomalous sound detection under domain shift conditions (2021)

8. Koizumi, Y., et al.: ToyADMOS: a dataset of miniature-machine operating sounds for anomalous sound detection. In: 2019 IEEE Workshop on Applications of Signal Processing to Audio and Acoustics (WASPAA), pp. 313–317. IEEE, New Paltz, NY, USA (2019). https://doi.org/10.1109/WASPAA.2019.8937164

9. Kubát, M., Matwin, S.: Addressing the curse of imbalanced training sets: one-sided selection. In: International Conference on Machine Learning (1997)

10. MaFaulDa: Machinery Fault Database (2016). https://www02.smt.ufrj.br/~offshore/mfs/. Accessed 10 May 2024

11. Marins, M.A., et al.: Improved similarity-based modeling for the classification of rotating-machine failures. J. Franklin Inst. 355(4), 1913–1930 (2018). https://doi.org/10.1016/j.jfranklin.2017.07.038

12. Martins, D.H.C.S.S., Hemerly, D.O., Marins, M., Lima, A.A., Silva, F.L., Prego, T.M., Ribeiro, F.M.L., Netto, S.L., da Silva, E.A.B.: Application of machine learning to evaluate unbalance severity in rotating machines. In: Cavalca, K.L., Weber, H.I. (eds.) IFToMM 2018. MMS, vol. 61, pp. 144–160. Springer, Cham (2019). https://doi.org/10.1007/978-3-319-99268-6_11

13. Messaoudi, M., et al.: Classification of mechanical faults in rotating machines using smote method and deep neural networks. In: IECON 2022 - 48th Annual Conference of the IEEE Industrial Electronics Society, pp. 1–6 (2022). https://doi.org/10.1109/IECON49645.2022.9968875

14. Nunes, E.C.: Anomalous sound detection with machine learning: a systematic review. arXiv preprint arXiv:2102.07820 (2021)

15. Perkins, N.J., Schisterman, E.F.: The inconsistency of optimal cutpoints obtained using two criteria based on the receiver operating characteristic curve. Am. J. Epidemiol. 163(7), 670–675 (2006). https://doi.org/10.1093/aje/kwj063

16. Purohit, H., et al.: MIMII dataset: sound dataset for malfunctioning industrial machine investigation and inspection. In: Proceedings of the Detection and Classification of Acoustic Scenes and Events Workshop, pp. 209–213 (2019)

17. Purohit, H., et al.: Hierarchical conditional variational autoencoder based acoustic anomaly detection. In: 30th European Signal Processing Conference, pp. 274–278 (2022). https://doi.org/10.23919/EUSIPCO55093.2022.9909785

18. Rauber, T.W., De Assis Boldt, F., Varejao, F.M.: Heterogeneous feature models and feature selection applied to bearing fault diagnosis. IEEE Trans. Ind. Electron. 62(1), 637–646 (2015). https://doi.org/10.1109/TIE.2014.2327589

19. Ren, S., et al.: A comprehensive review of big data analytics throughout product lifecycle to support sustainable smart manufacturing: a framework, challenges and future research directions. J. Cleaner Prod. 210, 1343–1365 (2019). https://doi.org/10.1016/j.jclepro.2018.11.025

20. Ribeiro, F., Marins, M., Netto, S., Silva, E.: Rotating machinery fault diagnosis using similarity-based models. In: Anais de XXXV Simpósio Brasileiro de Telecomunicações e Processamento de Sinais (2017). https://doi.org/10.14209/sbrt.2017.133

21. Saufi, S.R., Ahmad, Z.A.B., Leong, M.S., Lim, M.H.: Challenges and opportunities of deep learning models for machinery fault detection and diagnosis: a review. IEEE Access 7, 122644–122662 (2019). https://doi.org/10.1109/ACCESS.2019.2938227

22. Saufi, S.R., Isham, M.F., Ahmad, Z.A., Hasan, M.D.A.: Machinery fault diagnosis based on a modified hybrid deep sparse autoencoder using a raw vibration time-series signal. J. Ambient. Intell. Humaniz. Comput. 14(4), 3827–3838 (2023). https://doi.org/10.1007/s12652-022-04436-1

23. Souza, R.M., et al.: Deep learning for diagnosis and classification of faults in industrial rotating machinery. Comput. Ind. Eng. 153, 107060 (2021). https://doi.org/10.1016/j.cie.2020.107060

24. Suefusa, K., et al.: Anomalous sound detection based on interpolation deep neural network. In: ICASSP 2020 - 2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 271–275 (2020). https://doi.org/10.1109/ICASSP40776.2020.9054344

25. Tanabe, R., et al.: MIMII DUE: sound dataset for malfunctioning industrial machine investigation and inspection with domain shifts due to changes in operational and environmental conditions. In: 2021 IEEE Workshop on Applications of Signal Processing to Audio and Acoustics, pp. 21–25. IEEE, New Paltz, NY, USA (2021). https://doi.org/10.1109/WASPAA52581.2021.9632802

26. Tang, L., Tian, H., Huang, H., Shi, S., Ji, Q.: A survey of mechanical fault diagnosis based on audio signal analysis. Measurement 220, 113294 (2023). https://doi.org/10.1016/j.measurement.2023.113294

27. Yi, W., Choi, J.W., Lee, J.W.: Sound-based drone fault classification using multitask learning (2023)

28. Youden, W.J.: Index for rating diagnostic tests. Cancer 3(1), 32–35 (1950)

29. Zhao, Z., et al.: A fault diagnosis method of rotor system based on parallel convolutional neural network architecture with attention mechanism. J. Sig. Process. Syst. 95(8), 965–977 (2023). https://doi.org/10.1007/s11265-023-01846-y

Acknowledgements

The authors thank FAPESC for the financial support.

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Bortoni, L.A.F., Jaskowiak, P.A. (2025). Acoustic Features and Autoencoders for Fault Detection in Rotating Machines: A Case Study. In: Paes, A., Verri, F.A.N. (eds) Intelligent Systems. BRACIS 2024. Lecture Notes in Computer Science, vol 15414. Springer, Cham. https://doi.org/10.1007/978-3-031-79035-5_3


DOI: https://doi.org/10.1007/978-3-031-79035-5_3


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-79034-8

Online ISBN: 978-3-031-79035-5

eBook Packages: Computer Science, Computer Science (R0)