Abstract

This work evaluates time-frequency consistency (TF-C) for human activity recognition (HAR) using raw data. The original TF-C paper used HAR data in the pretext stage but did not explore its application in the downstream task. A later application with a modified TF-C architecture used HAR data in the downstream task and reported an accuracy of 64.08%. We conduct three experiments. First, we reproduce the original experiment with the epilepsy dataset and compare our results with the reported ones. Second, we compare performance when fine-tuning with different percentages of the training data, from 0.1% to 100%, and report the corresponding accuracy. Finally, we compare the results with supervised Convolutional Neural Networks and the supervised TF-C. This work demonstrates the feasibility of using TF-C to perform HAR as a downstream task, achieving an accuracy of 96% when all of the training data are used in fine-tuning. Even with just 42 training samples, the model achieved an accuracy of 85%, and only 126 training samples are necessary to obtain an accuracy greater than 90%.


1 Introduction

The smartphone-based human activity recognition (HAR) task amounts to identifying the activity performed by an individual given samples from inertial sensors, such as gyroscope and accelerometer. In recent years, approaches based on deep neural networks (DNNs), including convolutional networks (CNNs), have achieved good results for different datasets [4, 7, 14].

Training deep models in a supervised fashion requires a significant amount of labeled data, which, in turn, depends on a labor-intensive and error-prone process of manually labelling each sample or even each time window. In this context, adopting self-supervised learning (SSL) methods to pretrain part of the network structure emerges as an interesting alternative, since it may lead to learning an effective latent representation using only unlabeled data, which are more easily collected. Additionally, it may also allow the use of a relatively small labeled dataset for training the model in the target task (HAR, for instance), so that an adequate classifier can be obtained even with a few labeled samples.

A common SSL framework involves two training steps: the pretext task and training for the downstream task. In the first step, a feature extractor, called the backbone, followed by a projection head—typically a shallow neural network—is trained to solve a supervised learning task with labels automatically derived from the original unlabeled input samples. This task, although not particularly useful in practice, is designed to encourage the model to learn meaningful data representations. The idea is that by training the structure to solve this pretext task, the backbone learns to capture general and relevant information from the input data into a latent representation, which can then be used in the downstream task. Examples of pretext tasks include reconstruction tasks, jigsaw tasks, and contrastive techniques (performing augmentations and comparing similar samples), such as TF-C. In the second step, the pretrained backbone is combined with a new prediction head to create the downstream model, which is trained to solve the target task using a labeled dataset.

Most SSL techniques are first proposed and evaluated on image classification tasks [3, 5, 6, 9, 26]. Nonetheless, several methods have also been proposed for time series [27], such as CPC [17], TNC [23], and TF-C [28]. TF-C is a contrastive learning technique focused on time-series problems with two main characteristics: (i) the backbone is divided into two separate parts that process the raw time windows and the frequency spectra, respectively; and (ii) the pretext task encompasses three losses: a contrastive time-domain loss, a contrastive frequency-domain loss, and a cross-domain contrastive loss.

Recent works analyzed TF-C in different time-series scenarios, with modifications to the framework and to the backbone architecture [8, 10, 24, 28]. However, few works have studied TF-C for HAR in the downstream task with a CNN architecture. This work focuses on TF-C for HAR, expanding the comparison between SSL and supervised strategies by: (i) considering the influence of the number of labeled training samples on the downstream task performance; (ii) comparing the latent spaces; and (iii) analyzing the impact of the time- and frequency-domain pipelines on the final performance. The obtained results indicate an accuracy similar to the state-of-the-art and an excellent class separation in latent space, even when only a few samples are employed in the training stage.

The remainder of this text is organized as follows: Sect. 2 presents the related work; Sect. 3 provides an overview of the TF-C technique; Sect. 4 discusses the experimental results; finally, Sect. 5 presents the conclusions and future work.

2 Related Work

This section presents the related work. First, it provides an overview of works that employed SSL techniques to support the development of models for HAR. Then, it lists works that evaluated TF-C on non-HAR tasks. Finally, it discusses works that assess the performance of TF-C on HAR.

SSL for HAR: Several works evaluate the potential of SSL techniques on human activity recognition tasks. Saeed et al. [20] introduced an SSL technique using transformation classification as a pretext task. The model is composed of a three-layer 1D CNN backbone with multiple binary prediction heads, each identifying a single transformation such as noise addition, rescaling, rotation, negation, horizontal flipping, permutation, time warping, and channel shuffling. Applied to accelerometer and gyroscope data, their approach outperforms both supervised and unsupervised methods on datasets like HHAR, UniMiB, UCI-HAR, WISDM, and MotionSense, with improvements in performance up to 4%.

Haresamudram et al. [11] proposed an SSL technique using Masked Autoencoders. Here, n distinct time instants are randomly selected and perturbed. This input is passed to a transformer-based autoencoder followed by fully connected layers that aim to reconstruct the original input, with the loss function calculated only on the perturbed part. The authors demonstrate that the model learns useful representations and achieves good results in HAR using the MotionSense and USC-HAD datasets.

Tang et al. [22] adapted SimCLR [5] for HAR. SimCLR is a popular SSL method that uses contrastive learning to learn discriminative representations by maximizing the similarity between positive pairs and minimizing it between negative pairs. Positive pairs are generated from two augmentations of the same input, while negative pairs come from different inputs. In their work, the authors use eight augmentations for time-series data and reported a performance gain of over 2% with pretrained models.

In a later work, Tang et al. [21] introduced SelfHAR, a teacher-student architecture for HAR that integrates semi-supervised and SSL techniques. The teacher model, a 1D CNN, generates pseudo-labels for unlabeled data, which are then used to train the student model on high-confidence samples. The student model employs a self-supervised, multi-task learning approach with transformation classification as the pretext task, similar to Saeed et al. [20]. Self-supervised training used data from the Fenland Study, which follows over 12,000 participants to identify causes of obesity and type 2 diabetes. Evaluated on seven datasets (HHAR, MotionSense, MobiAct, PAMAP2, UniMiB, UCI, and WISDM), SelfHAR showed significant performance improvements, with a 12% gain on UniMiB compared to fully supervised methods, and outperformed Saeed’s method.

Contrastive Predictive Coding (CPC) [17] is an SSL technique where the pretext task is to predict future information based on present context, similar to time-series forecasting tasks. It has two main components: an encoder, which maps input data to a latent representation, and an autoregressive model, which processes this latent representation to capture temporal dependencies. Haresamudram et al. [12] proposed a modified encoder architecture for CPC on HAR, with three one-dimensional convolutional layers, while retaining the autoregressive network as a GRU with 256 states. The authors achieved strong results by using a pretrained backbone, rather than training the network from scratch, in a supervised manner.

Qian et al. [18] conducted an empirical study of contrastive SSL methods in the HAR context, evaluating several properties (e.g., backbone architecture, augmentation strategies, and pair construction). Comparing five SSL methods (BYOL, SimSiam, SimCLR, NNCLR, and TS-TCC), they offered insights into data-scarce cross-person generalization. NNCLR achieved the best performance on the UCI dataset, while SimCLR performed best on the SHAR dataset, highlighting that the effectiveness of SSL methods can be dataset-dependent, with nearest-neighbor pair construction being particularly effective for certain datasets such as UCI.

Similarly to Qian et al., in 2022 Haresamudram et al. [13] also assessed the performance of several SSL methods on HAR tasks. The Multi-task Self-supervision [20], masked reconstruction [11], CPC [12], Autoencoder [20], SimCLR [5, 22], SimSiam [6], and BYOL [9] techniques were evaluated under different criteria on 10 different datasets. The authors affirm the capability of these techniques for empowering HAR tasks.

Recently, Yuan et al. [25] employed a multi-task SSL approach to train a ResNet backbone using the UK Biobank data, a large-scale dataset with more than 700,000 person-days of data from wearable sensors. The SSL method is the same as in Saeed’s work [20]; however, the authors incorporated weighted sampling to improve training stability. They sampled data windows in proportion to their standard deviation, giving more weight to high-movement periods. The authors demonstrated significant performance improvements on several HAR datasets compared to classical algorithms and fully supervised ResNet training.

TF-C: TF-C is an SSL technique proposed by Zhang et al. [28] in 2022. In their work, the authors evaluated the technique on a variety of time-series tasks, such as epilepsy detection [1], sleep disorder classification [15], and hand gesture recognition [16]. They also employed a HAR dataset to pretrain the TF-C backbone; however, they did not evaluate the impact of TF-C on the HAR task.

Recently, in 2024, Xiao et al. [24] employed a modified version of TF-C, known as TFCSRec, for product recommendations. This adapted version learns frequency-domain representations through a trainable frequency-domain encoder, similar to the original TF-C. However, this study does not extend its analysis to the HAR domain, focusing solely on recommendation systems.

Dong et al. [8] introduced another variant of the TF-C framework named SimMTM, focusing on masked time-series modeling tasks. Unlike traditional methods that reconstruct the original series from unmasked points, SimMTM reconstructs the original series from multiple neighboring masked series. Although this study used TF-C and an architecture with minor modifications, retaining the convolutional structure, it did not perform pretraining and downstream tasks in the HAR domain.

TF-C for HAR: Guo et al. [10] explored the TF-C method for HAR. The authors evaluate two training schemes: (a) pretraining on the PAMAP2 dataset and then fine-tuning on the UCI-HAR dataset, reaching an accuracy of 60.31%; and (b) pretraining on the Opportunity dataset and then fine-tuning on the UCI-HAR dataset, reaching an accuracy of 64.04%. However, the main focus of that work is the ModCL framework, which resembles TF-C in its pipeline, with contrastive learning and data augmentation. Both methods used a ResNet architecture.

Table 1 provides a summary of the work presented in this section. To the best of our knowledge, only the work of Guo et al. [10] assesses the performance of TF-C on the human activity recognition task; however, as discussed previously, it does not achieve high performance with TF-C due to its backbone architecture. Here we analyze a convolutional neural network as the backbone, which yields better results, and take the opportunity to discuss the quality of the latent space and to compare different amounts of fine-tuning data.

3 The Time Frequency Consistency Framework (TF-C)

The Time Frequency Consistency (TF-C) Framework is a Self-Supervised Learning approach based on contrastive learning that simultaneously explores information in both the time and frequency domains. Figure 1 exhibits the main elements of TF-C and the corresponding pretext task. The backbone is composed of two encoders (\(G_T\) and \(G_F\)) and two projectors (\(R_T\) and \(R_F\)), organized in three pipelines: one that processes the data in the time domain (top), another that processes the data in the frequency domain (bottom), and a third that projects the time and frequency embeddings into a common latent space for consistency (right).

The time pipeline processes the original time series (\(x_i^T\)) and its augmented version (\(\tilde{x}_i^T\)) using the \(G_T\) encoder, resulting in the embeddings \(h_i^T\) and \(\tilde{h}_i^T\). Meanwhile, the frequency pipeline converts the original time series into its frequency-domain version (\(x_i^F\)) using a Fast Fourier Transform (FFT) algorithm. An augmented version of this frequency data (\(\tilde{x}_i^F\)) is also produced. Both the original and augmented frequency data are encoded by the \(G_F\) encoder, yielding the representations \(h_i^F\) and \(\tilde{h}_i^F\). Finally, the consistency pipeline (right) encodes the time embeddings (i.e., \(h_i^T\) and \(\tilde{h}_i^T\)) using the time projector (\(R_T\)), producing the \(z_i^T\) and \(\tilde{z}_i^T\) embeddings, and encodes the frequency embeddings (i.e., \(h_i^F\) and \(\tilde{h}_i^F\)) using the frequency projector (\(R_F\)), producing the \(z_i^F\) and \(\tilde{z}_i^F\) embeddings.
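The conversion from a time window to its frequency-domain version can be illustrated with a small sketch. It assumes that the frequency input is the magnitude of the discrete Fourier transform, and it uses a naive O(n²) DFT from the standard library rather than an FFT routine, purely to stay self-contained; `dft_magnitude` and the synthetic sine window are hypothetical and not part of the TF-C code.

```python
import cmath
import math


def dft_magnitude(window):
    """Return the magnitude of the discrete Fourier transform of one channel.

    Naive O(n^2) implementation for illustration; an FFT routine computes
    the same coefficients much faster.
    """
    n = len(window)
    spectrum = []
    for k in range(n):
        coefficient = sum(
            sample * cmath.exp(-2j * cmath.pi * k * t / n)
            for t, sample in enumerate(window)
        )
        spectrum.append(abs(coefficient))
    return spectrum


# Synthetic single-channel window: 4 full cycles over 128 samples.
window = [math.sin(2 * math.pi * 4 * t / 128) for t in range(128)]
magnitudes = dft_magnitude(window)

# Only the first half of the spectrum is inspected (the rest mirrors it).
peak_bin = max(range(64), key=lambda k: magnitudes[k])
print(peak_bin)
```

For a real-valued window, the second half of the spectrum mirrors the first, so the energy of the sine concentrates in a single bin of the inspected half.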

The time and frequency encoders (\(G_T\) and \(G_F\)) are trained to ensure that the representations produced by the time and frequency pipelines are discriminative. Additionally, the encoders and the projectors in the consistency pipeline (i.e., \(R_T\) and \(R_F\)) are trained to produce time and frequency representations that are consistent with each other. To achieve this, the TF-C framework employs a loss function with three components: time loss, frequency loss, and consistency loss. The time and frequency losses ensure that the time and frequency representations of the data are discriminative and invariant to augmentations. They use a contrastive loss to make the embeddings of the original and augmented versions of the data (positive pairs) similar, while ensuring that embeddings from distinct samples in the batch are different. The consistency loss, also a contrastive loss, is designed to ensure that the representations produced by the projectors are consistent across the time and frequency domains.
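The role of the positive and negative pairs can be made concrete with a simplified contrastive loss. The sketch below is an InfoNCE-style loss in which each original embedding is contrasted only against the augmented embeddings in the batch; this is an assumption made for brevity and is not the exact TF-C formulation. The function names are hypothetical, and the default temperature of 0.2 is the value listed in the hyperparameters of Sect. 4.1.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity between two non-zero vectors given as lists."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def contrastive_loss(originals, augmented, temperature=0.2):
    """Mean InfoNCE-style loss over a batch.

    originals[i] and augmented[i] form the positive pair; augmented[j]
    with j != i act as negatives for originals[i].
    """
    losses = []
    for i, anchor in enumerate(originals):
        logits = [cosine_similarity(anchor, other) / temperature
                  for other in augmented]
        # Numerically stable log-sum-exp of the logits.
        largest = max(logits)
        log_denominator = largest + math.log(
            sum(math.exp(value - largest) for value in logits)
        )
        losses.append(log_denominator - logits[i])
    return sum(losses) / len(losses)


originals = [[1.0, 0.0], [0.0, 1.0]]
aligned = [[0.9, 0.1], [0.1, 0.9]]   # each view close to its own original
swapped = [[0.1, 0.9], [0.9, 0.1]]   # each view close to the other sample

print(contrastive_loss(originals, aligned) < contrastive_loss(originals, swapped))
```

The loss is small when every embedding is closest to its own augmented view and grows when the augmented views resemble other samples in the batch, which is the behavior the time and frequency losses are meant to enforce.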

Encoder’s Architecture: In our experiments, we employ Convolutional Neural Networks to implement the time (\(G_T\)) and frequency (\(G_F\)) encoders. These CNNs have the same architecture (layers), but with independent weights. All convolutional layers implement one-dimensional (1D) convolutions with kernel size of 8, stride of 1, padding of 4, and no bias. The first convolutional block produces 32 channels and is composed of the convolutional layer, batch normalization, ReLU activation, and 1D max pooling (kernel size of 2, stride of 2, and padding of 1). Only this block includes a dropout layer, with a rate of 0.35, at its output. The subsequent convolutional blocks differ only in the number of channels (64 and 128, respectively) and use the same structure and hyper-parameters as the first block (except for the dropout). This architecture is illustrated in Fig. 2a.
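To see what these hyper-parameters imply for the size of the encoder output, the sketch below propagates a window length through the three blocks. It assumes the usual output-length rule for 1D convolution and pooling layers with dilation 1 and floor rounding, `floor((L + 2p - k) / s) + 1`; the function names are hypothetical.

```python
def layer_output_length(length, kernel, stride, padding):
    """Output length of a 1D conv or pooling layer (dilation 1, floor mode)."""
    return (length + 2 * padding - kernel) // stride + 1


def encoder_output_shape(window_length, channels=(32, 64, 128)):
    """Return (channels, length) after the three convolutional blocks."""
    length = window_length
    for _ in channels:
        # Convolution: kernel 8, stride 1, padding 4.
        length = layer_output_length(length, kernel=8, stride=1, padding=4)
        # Max pooling: kernel 2, stride 2, padding 1.
        length = layer_output_length(length, kernel=2, stride=2, padding=1)
    return channels[-1], length


# UCI windows have 128 time steps.
print(encoder_output_shape(128))
```

Under these assumptions, a 128-step window shrinks to 65, 34, and then 18 positions, so each encoder yields a 128 x 18 feature map before the projector.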

Projector’s Architecture: The projectors (both \(R_T\) and \(R_F\)) are composed of a dense linear layer with 256 neurons, followed by batch-normalization, ReLU activation and another linear layer with 128 units, as indicated in Fig. 2b.

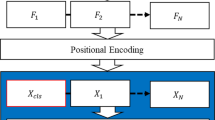

Prediction Head and Downstream Model: Once the model components (i.e., \(G_T\), \(G_F\), \(R_T\), and \(R_F\)) are trained in the pretext task, a downstream model is built by combining them with a prediction head. The prediction head is attached after the time (\(R_T\)) and frequency (\(R_F\)) projectors, receiving as input the concatenation of \(z^T\) and \(z^F\). It is composed of a linear dense layer with 64 units, a ReLU activation, and another linear dense layer with 6 units that produces the class logits, as illustrated in Fig. 2c.

Time-Frequency Consistency Augmentations: In the time domain, the data are augmented by a jitter transformation with a scale of 0.8. In the frequency domain, some frequency components are perturbed; a component is selected whenever a random variable with normal distribution (mean 0.5 and standard deviation 0.5) is greater than 1.
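A minimal sketch of these two augmentation steps follows. It assumes that the jitter scale is the standard deviation of zero-mean Gaussian noise added to every sample, and it only computes which frequency components are selected, since the operation applied to the selected components is not detailed here. All function names are hypothetical.

```python
import math
import random


def jitter(window, scale=0.8, rng=random):
    """Add zero-mean Gaussian noise (std = scale) to every sample."""
    return [sample + rng.gauss(0.0, scale) for sample in window]


def select_frequency_bins(n_bins, mean=0.5, std=0.5, threshold=1.0, rng=random):
    """Mark a bin as selected when a normal draw exceeds the threshold."""
    return [rng.gauss(mean, std) > threshold for _ in range(n_bins)]


def expected_selection_probability(mean=0.5, std=0.5, threshold=1.0):
    """P(N(mean, std) > threshold), computed from the normal tail."""
    z = (threshold - mean) / std
    return 0.5 * math.erfc(z / math.sqrt(2.0))


rng = random.Random(0)
noisy = jitter([0.0] * 128, rng=rng)
mask = select_frequency_bins(20000, rng=rng)
print(round(expected_selection_probability(), 4))
```

With mean 0.5 and standard deviation 0.5, the threshold of 1 lies one standard deviation above the mean, so roughly 16% of the frequency components are selected on average.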

4 Experimental Results

This section presents the analyses of the TF-C framework. First, Sect. 4.1 lists the materials and methods used in our experiments. Then, Sect. 4.2 presents the validation results, demonstrating that our code is competitive with the state-of-the-art. Next, Sect. 4.3 discusses the quantitative results, assessing the performance of the ML models under different data regimes. Finally, Sect. 4.4 assesses the quality of the representations learned by the models.

4.1 Materials and Methods

Models: To understand the importance of each component of TF-C on HAR, we evaluate four models in this work:

- TFC: corresponds to the TF-C downstream model. It is composed of the TF-C backbone (i.e., \(G_T\), \(G_F\), \(R_T\), and \(R_F\)) and a prediction head. The backbone structures are pretrained by the TF-C pretext task and further fine-tuned together with the prediction head on the target (downstream) task.

- SUP TFC: has the same architecture as the previous model (i.e., TFC); however, the backbone is not pretrained on the pretext task. It serves as a reference to measure the impact of the pretraining process.

- SUP Time: is composed of \(G_T\) followed by \(R_T\) and the prediction head. This model is not pretrained. In other words, this model consists of the time portion of the backbone, followed by the prediction head (which here has half the number of input features).

- SUP Frequency: is similar to SUP Time, but uses the frequency portion of the TF-C backbone.

The architecture of the SUP Time and SUP Frequency models is depicted in Fig. 2d.

TF-C framework modifications: We used the code made by Zhang et al. [28], which is publicly available on GitHub. However, in order to conduct our experiments in HAR, we carried out two main modifications. First, the code employed transformer models as time (\(G_T\)) and frequency (\(G_F\)) encoders. Here, we replaced them with CNNs, as described in the original paper [28]. Second, the original implementation combined cross-entropy with the overall TF-C loss during training for the downstream task. In our work, we simplified the downstream loss to be only the cross-entropy, since this led to similar or better results in our tests and involves fewer terms. The loss in Eq. 1 was presented by the TF-C authors as the total fine-tuning loss, where \(\lambda\) is a hyper-parameter, \(\mathcal{L}_{\text{T}, i}\) is the temporal loss, \(\mathcal{L}_{\text{F}, i}\) is the frequency loss, \(\mathcal{L}_{\text{C}, i}\) is the consistency loss, and \(\mathcal{L}_{\text{P}, i}\) is a standard cross-entropy loss. The loss in Eq. 2, on the other hand, is the fine-tuning loss used in this work, which is simply \(\mathcal{L}_{\text{P}, i}\).
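For reference, the two fine-tuning losses can be written out as follows. This is a sketch that assumes the weighting of the three TF-C terms follows the pretext loss of Zhang et al. [28]; the subscript "tune" is a label introduced here for illustration.

```latex
% Assumed form of the original fine-tuning loss (cross-entropy plus TF-C terms)
\mathcal{L}_{\text{tune}, i} = \mathcal{L}_{\text{P}, i}
  + \lambda \left( \mathcal{L}_{\text{T}, i} + \mathcal{L}_{\text{F}, i} \right)
  + (1 - \lambda)\, \mathcal{L}_{\text{C}, i}

% Fine-tuning loss used in this work (cross-entropy only)
\mathcal{L}_{\text{tune}, i} = \mathcal{L}_{\text{P}, i}
```

The second expression is the first with the three contrastive terms dropped, which is the simplification described above.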

In summary, the changes were in the backbone and the downstream loss.

Datasets: We employed the SleepEEG [15], Epilepsy [1], and UCI [2, 19] datasets in this work. Table 2 summarizes the characteristics of these datasets. In particular, the UCI dataset has windows of length 128 and 9 channels of inertial signals: total acceleration, body acceleration, and body gyroscope. The train set has 7352 samples and the test set has 2947 samples. The UCI labels used were: walking, walking upstairs, walking downstairs, sitting, standing, and lying.

Hyperparameters: We used the Adam optimizer with a learning rate of \(3\cdot 10^{-4}\). The temperature was 0.2 and the model was trained for 40 epochs, with early stopping based on the validation loss. All other hyperparameters were the same as those adopted by Zhang et al. [28].

4.2 Validating Our Implementation

The first experiment mimics one of the scenarios explored by Zhang et al. [28] and is performed in order to assess the correctness of our implementation.

We pretrain the TF-C backbone using the SleepEEG dataset. Once the backbone is trained, we train and evaluate the downstream model on the Epilepsy dataset. Finally, we select the model with the best accuracy (Acc), Area Under the Receiver Operating Characteristic curve (AUROC), and Area Under the Precision-Recall Curve (AUPRC) on the test dataset. This was the process adopted by the original authors, and it may introduce some bias. Since we need to replicate their pipeline, at this stage we use the test set for model selection, whereas in the next experiments the best model is selected by the loss value on the validation set and its test performance is reported. We repeat the experiment five times and report the average and standard deviation for each metric.

Table 3 shows the results reported by Zhang et al. [28] and our results. Our reproduction of TF-C yields accuracy similar to that reported by the authors of TF-C (taking into account both uncertainties), validating our implementation.

4.3 Accuracy by Percentage of HAR Data

In this experiment we evaluate the performance of TF-C on the HAR task.

First, we pretrain the TF-C backbone using all the samples of the UCI dataset, but without labels. Then, we build the downstream model and fine-tune it using the UCI train set. We conduct several experiments with different subsets of the train set to assess the benefits of TF-C in a low-data regime. Specifically, we train the downstream model using 0.1%, 1%, 10%, and 100% of the training set. To maintain a consistent number of training iterations across experiments, we increase the number of epochs in proportion to the reduction in the training set size. Finally, we select the best model according to the validation loss computed at the end of each epoch. This model is tested on the UCI test set, and the accuracy, AUROC, and AUPRC metrics are shown in Table 4 and Fig. 3, as the average of five runs.
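The epoch scaling can be sketched as a one-line rule. The example assumes that the 40 epochs listed in the hyperparameters correspond to training with 100% of the data; `scaled_epochs` is a hypothetical helper, not a function from the released code.

```python
def scaled_epochs(base_epochs, fraction):
    """Number of epochs that keeps the total sample presentations constant.

    Using a fraction f of the training set shrinks each epoch by f,
    so the number of epochs grows by 1 / f.
    """
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    return round(base_epochs / fraction)


for fraction in (1.0, 0.1, 0.01, 0.001):
    print(fraction, scaled_epochs(40, fraction))
```

Under this assumption, the 10%, 1%, and 0.1% settings would run for 400, 4000, and 40000 epochs, respectively, with early stopping still able to end training sooner.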

Regarding Table 4, we can observe that pretrained models (TFC) have better performance when compared to the models trained from scratch (SUP TFC) in low data scenarios (1% and 0.1% of the data). On the other hand, in the 10% and 100% scenarios, the difference between the models is not so significant.

A similar behavior is observed in Fig. 3, where we compare the accuracy of the four models evaluated in this work. We can observe that the TFC model has the best performance in almost all cases. In a low-data regime, SSL plays a crucial role in the performance of the model, as indicated by the performance differences between the TFC and the SUP TFC models. Except in the very-low-data scenario (0.1% of the data), where SUP Frequency achieves a slightly better (but still poor) performance, pretraining seems to allow the model to achieve better performance with fewer labeled samples than the supervised models. In fact, only 1% of the data is necessary to achieve an accuracy above 85%, while the other models need at least 2% of the data to reach this threshold. In the 100% scenario, the difference between the models is not so significant, which suggests that the UCI training set is large enough to support the supervised training of the discussed models.

A Wilcoxon Signed Rank hypothesis test was conducted in order to analyze the influence of the SSL technique on the accuracy of the TFC, comparing it with the values of the tests of the SUP TFC, SUP Time, and SUP Frequency models individually. The comparison between the 9 samples of the TF-C and the 9 samples of the SUP TFC (as shown in Fig. 3) yielded a p-value of 0.01953, which is below the 5% significance threshold. This result supports the alternative hypothesis, indicating an improvement with the SSL technique.

Similarly, when comparing the SSL technique with the temporal pipeline (SUP Time), a p-value of 0.01172 was obtained, further supporting the alternative hypothesis. However, when the SSL technique was compared with the frequency pipeline (SUP Frequency), the resulting p-value was 0.3594, indicating that we cannot conclude there is a significant difference between the results produced by the TF-C and SUP Frequency models.

The tests were conducted separately: the SSL results were compared first with the complete SUP TFC, then with SUP Time, and finally with SUP Frequency.
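To illustrate how such p-values arise with nine paired measurements, the sketch below implements an exact two-sided Wilcoxon signed-rank test for pairs without ties or zero differences. Whether the reported values come from an exact two-sided test is an assumption, and the paired values in the example are invented for illustration; they are not the accuracies from Fig. 3.

```python
def wilcoxon_signed_rank_exact(first, second):
    """Exact two-sided Wilcoxon signed-rank test.

    Requires paired samples with no zero differences and no tied
    absolute differences. Returns (statistic, p_value), where the
    statistic is the smaller of the positive and negative rank sums.
    """
    differences = [a - b for a, b in zip(first, second)]
    magnitudes = sorted(abs(d) for d in differences)
    if 0 in magnitudes or len(set(magnitudes)) != len(magnitudes):
        raise ValueError("zero or tied differences are not supported")
    rank = {value: index + 1 for index, value in enumerate(magnitudes)}
    positive = sum(rank[abs(d)] for d in differences if d > 0)
    negative = sum(rank[abs(d)] for d in differences if d < 0)
    statistic = min(positive, negative)

    # Under the null hypothesis each rank carries a + or - sign with equal
    # probability; count the subsets of ranks 1..n for every possible sum.
    n = len(differences)
    counts = [0] * (n * (n + 1) // 2 + 1)
    counts[0] = 1
    for r in range(1, n + 1):
        for total in range(len(counts) - 1, r - 1, -1):
            counts[total] += counts[total - r]
    tail = sum(counts[: statistic + 1])
    p_value = min(1.0, 2 * tail / 2 ** n)
    return statistic, p_value


# Invented paired scores: the first model wins in 7 of 9 settings.
model_a = [49, 48, 53, 54, 55, 56, 57, 58, 59]
model_b = [50, 50, 50, 50, 50, 50, 50, 50, 50]
statistic, p_value = wilcoxon_signed_rank_exact(model_a, model_b)
print(statistic, p_value)
```

In this example the two smallest differences favor the second model, giving a statistic of 3 and a p-value of 10/512, approximately 0.01953. With nine pairs, an exact two-sided test can only produce multiples of 2/512, which is consistent with the granularity of the p-values reported above.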

4.4 Qualitative Assessment of Learned Representations

Another perspective to consider in this analysis involves the distribution of samples in the latent spaces generated by each model. Hence, we exhibit in Fig. 4 the visualization in two dimensions produced by t-SNE (with standard parameters) of the test samples considering: (i) the raw representation in time domain (Fig. 4a); (ii) the corresponding frequency-domain representation (magnitude of Fourier transform) (Fig. 4b); (iii) the latent representation (embedding with 256 dimensions) associated with the backbone + projector pretrained with TF-C (Fig. 4c); and (iv) the latent representation (embedding with 256 dimensions) of backbone + projector after fine-tuning with the complete train dataset (Fig. 4d).

It is possible to draw some important observations from Fig. 4. Firstly, Fig. 4a indicates that the raw data present a significant overlap between the activities; it is possible to recognize parts that resemble clusters, mainly for the “Laying” samples, but in general the data are mixed.

Secondly, as indicated by Fig. 4b, by simply mapping the samples to the frequency domain, we can notice a more distinctive separation between the activities, which corroborates the appeal of exploring the Fourier transform in HAR. In Fig. 4c, we can see three main groups of activities: (a) laying; (b) sitting and standing; and (c) walking, upstairs and downstairs, with a non-negligible overlap between classes within the last two groups. This suggests that, even when pretrained without the activity labels, the model was still capable of recognizing features that end up discriminating certain aspects of the underlying activities.

Finally, as indicated by Fig. 4d, when we perform a fine-tuning of the backbone and projector using labeled samples, the activities become even more separated, with well-defined clusters that could be more easily identified by machine learning methods.

5 Conclusions and Future Work

Our work uses the TF-C model of the original authors [28] to test performance across various amounts of training data. TF-C proves well suited to time-series tasks in scenarios with limited labeled data and an abundance of unlabeled data. With large amounts of labeled data, pretrained and supervised strategies perform equally well; with small amounts, there is a small difference between TFC and the supervised TF-C, but a significant difference between TFC and the time-only pipeline of the supervised TF-C. These conclusions are specific to the HAR domain. The source code can be found at https://github.com/H-IAAC/KR-Papers-Artifacts.

First, we conducted a performance comparison test using varying percentages of the dataset, ranging from 0.1% to 100%. Remarkably, even with just 1% of the training set (42 samples) we achieved an accuracy of \(85.9\%\). The highest accuracy achieved was \(95.6\%\) using the entire dataset, comprising 7350 samples. The minimal number of samples needed to obtain an accuracy greater than 90% was only 126 samples (0.2% of dataset).

Subsequently, we compared the results with supervised CNNs, which showed a significant performance drop in scenarios with small percentages of the dataset. We can conclude that the TF-C technique with pretraining is slightly advantageous in scenarios with limited labeled data, except when compared with the complete frequency pipeline.

Analyzing the latent space, we conclude that TF-C has a great ability to combine frequency and temporal features and to work well in different domains, but it does not help much when pretraining and fine-tuning are performed on the same dataset. In these cases, the backbone after fine-tuning produces features that are very relevant to the target task and separates the data into distinctive clusters based on their labels.

This work demonstrates the potential of using HAR datasets in downstream tasks of TF-C with a model pretrained on the same kind of data, achieving an accuracy of 95.6% when using the entire training dataset for fine-tuning. Even with just 42 samples of the training dataset, our approach achieves an accuracy of 85.9%. This highlights the effectiveness of classifying human activity recognition data using TF-C, surpassing the performance of individual supervised CNNs with the same architecture.

For future work, we aim to expand the analysis of TF-C by considering other HAR datasets and the impact of TF-C pretraining in cross-dataset scenarios, in addition to investigating the effects of different architectures on TF-C.

References

Andrzejak, R.G., Lehnertz, K., Mormann, F., Rieke, C., David, P., Elger, C.E.: Indications of nonlinear deterministic and finite-dimensional structures in time series of brain electrical activity: dependence on recording region and brain state. Phys. Rev. E 64, 061907 (2001). https://doi.org/10.1103/PhysRevE.64.061907

Anguita, D., Ghio, A., Oneto, L., Parra, X., Reyes-Ortiz, J.L., et al.: A public domain dataset for human activity recognition using smartphones. In: ESANN, vol. 3, p. 3 (2013)

Caron, M., Misra, I., Mairal, J., Goyal, P., Bojanowski, P., Joulin, A.: Unsupervised learning of visual features by contrasting cluster assignments. Adv. Neural. Inf. Process. Syst. 33, 9912–9924 (2020)

Challa, S.K., Kumar, A., Semwal, V.B.: A multibranch CNN-BiLSTM model for human activity recognition using wearable sensor data. Vis. Comput. 38(12), 4095–4109 (2022)

Chen, T., Kornblith, S., Norouzi, M., Hinton, G.: SimCLR: a simple framework for contrastive learning of visual representations. In: International Conference on Learning Representations, vol. 2, p. 4 (2020)

Chen, X., He, K.: Exploring simple Siamese representation learning. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 15750–15758 (2021)

Cho, H., Yoon, S.M.: Divide and conquer-based 1D CNN human activity recognition using test data sharpening. Sensors 18(4), 1055 (2018)

Dong, J., Wu, H., Zhang, H., Zhang, L., Wang, J., Long, M.: SimMTM: a simple pre-training framework for masked time-series modeling. Adv. Neural Info. Process. Syst. 36 (2024)

Grill, J.B.: Bootstrap your own latent-a new approach to self-supervised learning. Adv. Neural. Inf. Process. Syst. 33, 21271–21284 (2020)

Guo, C., Zhang, Y., Chen, Y., Xu, C., Wang, Z.: Modality consistency-guided contrastive learning for wearable-based human activity recognition. IEEE Int. Things J. 11(12), 21750–21762 (2024)

Haresamudram, H., et al.: Masked reconstruction based self-supervision for human activity recognition. In: Proceedings of the 2020 ACM International Symposium on Wearable Computers, pp. 45–49 (2020)

Haresamudram, H., Essa, I., Plötz, T.: Contrastive predictive coding for human activity recognition. Proc. ACM Interact. Mob. Wearable Ubiquit. Technol. 5(2), 1–26 (2021)

Haresamudram, H., Essa, I., Plötz, T.: Assessing the state of self-supervised human activity recognition using wearables. Proc. ACM Interact. Mob. Wearable Ubiquit. Technol. 6(3) (2022). https://doi.org/10.1145/3550299

Ismail, W.N., Alsalamah, H.A., Hassan, M.M., Mohamed, E.: AUTO-HAR: an adaptive human activity recognition framework using an automated CNN architecture design. Heliyon 9(2) (2023)

Kemp, B., Zwinderman, A., Tuk, B., Kamphuisen, H., Oberye, J.: Analysis of a sleep-dependent neuronal feedback loop: the slow-wave microcontinuity of the EEG. IEEE Trans. Biomed. Eng. 47(9), 1185–1194 (2000). https://doi.org/10.1109/10.867928

Liu, J., Zhong, L., Wickramasuriya, J., Vasudevan, V.: uWave: accelerometer-based personalized gesture recognition and its applications. Pervasive Mob. Comput. 5(6), 657–675 (2009). https://doi.org/10.1016/j.pmcj.2009.07.007

Oord, A.v.d., Li, Y., Vinyals, O.: Representation learning with contrastive predictive coding. arXiv preprint arXiv:1807.03748 (2018)

Qian, H., Tian, T., Miao, C.: What makes good contrastive learning on small-scale wearable-based tasks? In: Zhang, A., Rangwala, H. (eds.) KDD ’22: The 28th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, Washington, DC, USA, August 14 - 18, 2022, pp. 3761–3771. ACM (2022). https://doi.org/10.1145/3534678.3539134

Reyes-Ortiz, J., Anguita, D., Ghio, A., Oneto, L., Parra, X.: Human activity recognition using smartphones. UCI Mach. Learn. Repository (2012). https://doi.org/10.24432/C54S4K

Saeed, A., Ozcelebi, T., Lukkien, J.: Multi-task self-supervised learning for human activity detection. Proc. ACM Interact. Mob. Wearable Ubiquit. Technol. 3(2) (2019). https://doi.org/10.1145/3328932

Tang, C.I., Perez-Pozuelo, I., Spathis, D., Brage, S., Wareham, N., Mascolo, C.: SelfHAR: improving human activity recognition through self-training with unlabeled data. Proc. ACM Interact. Mob. Wearable Ubiquit. Technol. 5(1) (2021). https://doi.org/10.1145/3448112

Tang, C.I., Perez-Pozuelo, I., Spathis, D., Mascolo, C.: Exploring contrastive learning in human activity recognition for healthcare. arXiv preprint arXiv:2011.11542 (2020)

Tonekaboni, S., Eytan, D., Goldenberg, A.: Unsupervised representation learning for time series with temporal neighborhood coding. In: International Conference on Learning Representations (2021). https://openreview.net/forum?id=8qDwejCuCN

Xiao, Y., Huang, J., Yang, J.: TFCSRec: time-frequency consistency based contrastive learning for sequential recommendation. Expert Syst. Appl. 245, 123118 (2024)

Yuan, H., et al.: Self-supervised learning for human activity recognition using 700,000 person-days of wearable data. NPJ Digit. Med. 7(91), 1–10 (2024). https://doi.org/10.1038/s41746-024-01062-3

Zbontar, J., Jing, L., Misra, I., LeCun, Y., Deny, S.: Barlow twins: self-supervised learning via redundancy reduction. In: International Conference on Machine Learning, pp. 12310–12320. PMLR (2021)

Zhang, K., et al.: Self-supervised learning for time series analysis: taxonomy, progress, and prospects. IEEE Trans. Pattern Anal. Mach. Intell. 46(10), 6775–6794 (2024)

Zhang, X., Zhao, Z., Tsiligkaridis, T., Zitnik, M.: Self-supervised contrastive pre-training for time series via time-frequency consistency. Adv. Neural. Inf. Process. Syst. 35, 3988–4003 (2022)

Acknowledgments

This project was supported by the Ministry of Science, Technology, and Innovation of Brazil, with resources granted by the Federal Law 8.248 of October 23, 1991, under the PPI-Softex [01245.003479/2024-10]. The authors also thank CNPq (315399/2023-6 and 404087/2021-3) and Fapesp (2013/08293-7) for their financial support.

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

Cite this paper

Hecker, N., Napoli, O.O., Delgado, J., Rocha, A.R., Boccato, L., Borin, E. (2025). An Analysis of Time-Frequency Consistency in Human Activity Recognition. In: Paes, A., Verri, F.A.N. (eds) Intelligent Systems. BRACIS 2024. Lecture Notes in Computer Science, vol 15414. Springer, Cham. https://doi.org/10.1007/978-3-031-79035-5_5

DOI: https://doi.org/10.1007/978-3-031-79035-5_5

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-79034-8

Online ISBN: 978-3-031-79035-5

eBook Packages: Computer Science, Computer Science (R0)