Abstract

Automated anomaly detection in medical images can greatly benefit the medical community by assisting in the diagnosis and identification of clinical cases. This work focuses specifically on endoscopic images. We grouped the images into two classes: normal (normal-cecum, normal-pylorus, normal-z-line) and abnormal (dyed-lifted-polyps, dyed-resection-margins, esophagitis, polyps, ulcerative colitis). We apply data augmentation to balance the classes and use deep learning models for classification, combining them through an Ensemble Voting method to improve accuracy. The method achieves an accuracy of 98.62%, a precision of 96.47%, a sensitivity of 99.64%, and an F1-score of 98.03%. These results indicate that the approach is an effective tool for identifying anomalies in endoscopies, and we believe it can contribute to significant advances in AI-assisted medical diagnostics.


1 Introduction

The digestive system is responsible for nutrient absorption, involving physical and chemical processes from chewing to waste excretion. However, abnormalities can occur, impacting its function. These abnormalities can range from relatively benign conditions to severe diseases that require medical intervention. Two notable examples are polyps and ulcerative colitis [3, 20].

Polyps are small, often mushroom-shaped abnormal growths that can appear in various regions of the body, such as the intestine and the anus. Although polyps are considered benign tumors, identifying them is crucial, as their presence can indicate cancer in the region where they are found [18]. Ulcerative colitis is an inflammation of the colon that can increase the risk of developing cancer. Early identification of these abnormalities is therefore beneficial, as it can be essential for the early diagnosis of gastrointestinal cancers such as colorectal cancer [20].

Stomach cancer ranks as one of the most frequent malignant neoplasms worldwide, holding the third position in terms of incidence. This aggressive form of cancer primarily affects individuals over the age of 50 and those with obesity. Early detection is crucial for effective treatment and improved patient prognosis. Physicians use endoscopic procedures to identify abnormalities in the digestive tract, allowing detailed visualization and early intervention in suspicious lesions [14].

To identify these abnormalities, endoscopists use two main procedures: upper gastrointestinal endoscopy (UGIE) and lower gastrointestinal endoscopy (LGIE). In UGIE, the physician introduces an endoscope through the patient's mouth, allowing direct visualization of the mucosa of the esophagus, stomach, and duodenum. LGIE follows the same principle, but the endoscope is inserted through the patient's anus to visualize the lower gastrointestinal tract, such as the colon [16].

One of the primary challenges encountered by endoscopists is the substantial volume of data generated during UGIE. This influx of information can be effectively managed using computational systems like Computer-Aided Detection (CAD) and Computer-Aided Diagnosis (CADx). These systems facilitate disease analysis, thereby accelerating and enhancing the diagnostic process [1]. The literature documents various computational methods developed for disease detection and diagnosis using medical images [2, 4,5,6, 8, 15].

Based on the problem described above, the proposed method aims to identify specific abnormalities, namely polyps, ulcerative colitis, esophagitis, dyed-lifted-polyps, and dyed-resection-margins, in UGIE and LGIE exams. The method is based on Convolutional Neural Networks (CNNs) and a Region of Interest (ROI) extraction approach. Its main contributions are:

-

Ensemble Voting of multiple neural networks, which results in superior performance across evaluated metrics;

-

Implementation of an enhanced ROI approach, which improves accuracy in abnormality classification.

2 Related Works

In this section, we present relevant articles that discuss methods similar to the one presented in this study.

The work in [17] focuses on Ensemble Voting methods with RGB channel extraction for bleeding area segmentation, aiming to improve the classification of the Bleeding, Healthy, and Ulcer classes. The article also evaluates Ensemble Voting with several classifiers, including F-Tree, L-Disc, L-SVM, C-SVM, M-G-SVM, and F-KNN, to differentiate among eight classes: dyed-lifted-polyp (DLP), dyed-resection-margins (DRM), esophagitis (ESO), normal-cecum (NC), normal-pylorus (NP), normal-z-line (NZL), polyps (P), and ulcerative-colitis (UCE). On the Kvasir V1 dataset [19], this multiclass model achieved an accuracy of 86.6%, precision of 87.8%, F1-score of 86.8%, specificity of 98.08%, and sensitivity of 86.62%.

The study in [22] emphasizes pooling methods aimed at improving accuracy in the binary classification between the polyp and non-polyp categories. The best-performing pooling method was DWT-pool, which achieved 93.55% in accuracy, recall, precision, and F1-score on the Kvasir V1 dataset. Data augmentation was also employed to enlarge the image dataset.

The method in [12] uses a dataset with 14 distinct classes, which it groups into two main categories: normal and abnormal. By reducing the problem from multiclass to binary classification, the method aims to improve performance metrics. Considering the number of original classes, the results were promising: an accuracy of 76.02%, sensitivity of 78.9%, precision of 56.2%, and F1-score of 59.3%. These results were obtained on the Kvasir-Capsule dataset [24] using the ResNet-50 architecture as the backbone of the model.

In [26], the researchers used the CNN models VGG-16, GoogLeNet, and ResNet-18 to classify the categories DLP, DRM, ESO, NC, NP, NZL, P, and UCE, comparing the efficacy of these networks. VGG-16 achieved the best results, with an accuracy of 96.33%, sensitivity of 96.37%, precision of 96.5%, and F1-score of 96.5%. These results were obtained on the Kvasir V2 image dataset [19] with data augmentation, demonstrating the effectiveness of CNNs in medical image classification.

In [10], the study focuses on the Kvasir V2 dataset, which includes 8000 images across the categories DLP, DRM, ESO, NC, NP, NZL, P, and UCE. The methodology applies data augmentation alongside traditional CNNs and a fine-tuning process. The VGG-16 network yielded the best results, achieving an accuracy of 98.20%, recall of 92.75%, specificity of 99%, Matthews correlation coefficient of 91.73%, precision of 92.86%, and F1-score of 92.76%.

Binary classification between normal and abnormal findings in medical examinations such as endoscopy is crucial for optimizing screening processes and early intervention. By quickly identifying patients with abnormal results, medical resources can be allocated more efficiently, ensuring that these individuals receive timely assessment and treatment. Such prioritization can improve patient outcomes by enabling early interventions that reduce future complications, and it helps alleviate the burden on healthcare systems by prioritizing cases that need immediate attention. However, binary classification approaches for this problem are scarce in the literature. To address this gap, we propose a binary method that distinguishes between normal and abnormal conditions.

Our proposed method crops image edges to remove unnecessary information and uses data augmentation both to balance the classes and to compensate for the dataset's limited number of images. Furthermore, we introduce an Ensemble approach based on voting among multiple CNNs to improve classification performance. This approach has not been extensively explored in the literature and offers an automated system to aid specialist physicians in diagnosing these abnormalities.

3 Materials and Proposed Method

The proposed method comprises five stages and is validated on the database presented in Sect. 3.1. In the first stage, we remove image edges through ROI extraction. In the second stage, we apply data augmentation techniques to diversify and expand the database. In the third stage, we train several CNNs individually. In the fourth stage, we combine them into a predictive model through Ensemble Voting, and in the fifth stage, we calculate validation metrics to measure the method's robustness. Figure 1 illustrates the process.

3.1 Image Database

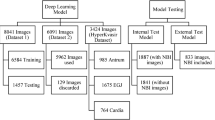

We used the public Kvasir V1 dataset, available on Kaggle [19]. It contains 4000 images spread across eight classes (DLP, DRM, ESO, NC, NP, NZL, P, and UCE), with 500 images per class. Image dimensions range from 720 × 576 to 1920 × 1072 pixels, all in JPG format. Because our method distinguishes between normal and abnormal images, we grouped these classes into two: the normal class comprises NC, NP, and NZL (1500 images), and the abnormal class comprises DLP, DRM, ESO, P, and UCE (2500 images). Figure 2 illustrates this grouping of the image database.
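The grouping can be made concrete with a small mapping. This is a minimal sketch, not the authors' code: it assumes the class directory names of the Kaggle release of Kvasir V1 and encodes abnormal as the positive class (1); both the names and the encoding are assumptions, since the text does not state them.

```python
# Hypothetical mapping from Kvasir V1 class directory names (assumed) to the
# two groups used in this work; every class holds 500 images.
KVASIR_TO_GROUP = {
    "normal-cecum": "normal",
    "normal-pylorus": "normal",
    "normal-z-line": "normal",
    "dyed-lifted-polyps": "abnormal",
    "dyed-resection-margins": "abnormal",
    "esophagitis": "abnormal",
    "polyps": "abnormal",
    "ulcerative-colitis": "abnormal",
}

IMAGES_PER_CLASS = 500


def binary_label(kvasir_class: str) -> int:
    """Return 1 for abnormal classes and 0 for normal ones (assumed encoding)."""
    return 1 if KVASIR_TO_GROUP[kvasir_class] == "abnormal" else 0


def group_counts(images_per_class: int = IMAGES_PER_CLASS) -> dict:
    """Count how many images fall into each binary group."""
    counts = {"normal": 0, "abnormal": 0}
    for group in KVASIR_TO_GROUP.values():
        counts[group] += images_per_class
    return counts


print(group_counts())  # {'normal': 1500, 'abnormal': 2500}
```

The 3:5 ratio printed here is the imbalance that motivates the class balancing step.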

3.2 ROI Extraction

Some images in the Kvasir dataset have black borders, used by specialists for annotations. These areas are not useful for the classifier and may even confuse it, so we extract the ROI from each image. In practice, this means cropping the image to a square region whose sides contain no black pixels at their central points. Figure 3 illustrates the ROI extraction.
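One possible reading of this crop is sketched below with NumPy. It is an illustration under stated assumptions, not the authors' implementation: a pixel counts as black when all its channels are at or below a hypothetical threshold of 10, and the crop boundaries are found by scanning inward along the central row and central column until the first non-black pixel. The text describes a square region; this sketch keeps the rectangle found by the scans, so trimming it to a square would be an extra step.

```python
import numpy as np


def crop_black_borders(image: np.ndarray, threshold: int = 10) -> np.ndarray:
    """Crop black borders by scanning the central row and column inward.

    A pixel is treated as black when every channel is <= threshold
    (hypothetical value). The returned region has a non-black pixel at the
    midpoint of each of its sides.
    """
    # Brightest channel per pixel; "non-black" means it exceeds the threshold.
    gray = image if image.ndim == 2 else image.max(axis=2)
    h, w = gray.shape
    row = gray[h // 2, :] > threshold  # central row
    col = gray[:, w // 2] > threshold  # central column
    if not row.any() or not col.any():
        return image  # central line entirely black: leave the image untouched
    left = int(np.argmax(row))               # first non-black from the left
    right = w - int(np.argmax(row[::-1]))    # first non-black from the right
    top = int(np.argmax(col))                # first non-black from the top
    bottom = h - int(np.argmax(col[::-1]))   # first non-black from the bottom
    return image[top:bottom, left:right]


# Synthetic frame: a grey 80 x 90 patch surrounded by black borders.
img = np.zeros((100, 120, 3), dtype=np.uint8)
img[10:90, 20:110] = 128
roi = crop_black_borders(img)
print(roi.shape)  # (80, 90, 3)
```

Scanning only the central lines keeps the crop cheap, but it assumes the borders are roughly symmetric; annotation boxes placed in a corner would not be removed by this sketch.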

3.3 Data Augmentation and Class Balancing

This section describes the class balancing and data augmentation processes used in the proposed method.

Class balancing was necessary because of the disparity between the normal class (1500 images) and the abnormal class (2500 images). When one class is the majority, the classifier can become biased towards it, degrading performance on the minority class. We first randomly divided the image database into training and test sets, which left a difference of 742 images between the two classes in the training set. To correct this imbalance, we applied data augmentation to generate 742 additional images of the minority class, thus balancing the classes in the training set.
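The balancing step can be sketched as follows, assuming the images and labels of the training split are already in memory. The function name, the `augment(image, rng)` callable, the seed, and the choice of drawing source images at random with replacement are all hypothetical; the text only states that the deficit (742 images) was filled with augmented minority-class images.

```python
import numpy as np


def balance_with_augmentation(images, labels, augment, seed=42):
    """Add augmented minority-class copies until both classes are equal in size.

    `augment(image, rng)` is a hypothetical callable returning a new image.
    Source images are drawn at random, with replacement, from the minority class.
    """
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    classes, counts = np.unique(labels, return_counts=True)
    minority = classes[np.argmin(counts)]
    deficit = int(counts.max() - counts.min())  # 742 in the split reported above
    sources = rng.choice(np.flatnonzero(labels == minority), size=deficit, replace=True)
    new_images = [augment(images[i], rng) for i in sources]
    new_labels = np.full(deficit, minority, dtype=labels.dtype)
    return list(images) + new_images, np.concatenate([labels, new_labels])


# Toy example: three images of class 0 and five of class 1.
imgs = [np.full((4, 4), k, dtype=np.uint8) for k in range(8)]
labs = [0, 0, 0, 1, 1, 1, 1, 1]
out_imgs, out_labs = balance_with_augmentation(imgs, labs, lambda im, rng: np.fliplr(im))
print(len(out_imgs), np.bincount(out_labs))  # 10 [5 5]
```

Balancing only after the train/test split, as the text describes, keeps augmented copies of an image from leaking into the test set.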

In addition, to reduce overfitting, we applied a further data augmentation process consisting of a rotation of at most 10% to the right or left and vertical or horizontal mirroring, generating 1000 images in each class. Figure 4 provides visual examples of these data augmentation techniques applied to the test dataset.
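These transformations can be sketched with NumPy alone. This is an illustration, not the authors' code, and it rests on three assumptions: "10%" is read as a maximum of 10 degrees (with Keras's `RandomRotation`, a factor of 0.1 would instead mean up to 10% of a full turn, i.e. ±36 degrees), each mirror is applied independently with probability 0.5, and pixels uncovered by the rotation are filled with black.

```python
import numpy as np


def rotate_nearest(image: np.ndarray, angle_deg: float) -> np.ndarray:
    """Rotate an image about its centre with nearest-neighbour sampling.

    Output pixels whose source falls outside the input are filled with zeros.
    """
    h, w = image.shape[:2]
    theta = np.deg2rad(angle_deg)
    cos_t, sin_t = np.cos(theta), np.sin(theta)
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    ys, xs = np.mgrid[0:h, 0:w]
    # Inverse mapping: locate the source pixel of every output pixel.
    src_x = np.rint(cos_t * (xs - cx) + sin_t * (ys - cy) + cx).astype(int)
    src_y = np.rint(-sin_t * (xs - cx) + cos_t * (ys - cy) + cy).astype(int)
    valid = (src_x >= 0) & (src_x < w) & (src_y >= 0) & (src_y < h)
    out = np.zeros_like(image)
    out[valid] = image[src_y[valid], src_x[valid]]
    return out


def augment(image: np.ndarray, rng: np.random.Generator, max_angle_deg: float = 10.0) -> np.ndarray:
    """Random small rotation plus optional horizontal and vertical mirroring."""
    out = rotate_nearest(image, rng.uniform(-max_angle_deg, max_angle_deg))
    if rng.random() < 0.5:
        out = out[:, ::-1]  # horizontal mirror (left-right)
    if rng.random() < 0.5:
        out = out[::-1, :]  # vertical mirror (up-down)
    return np.ascontiguousarray(out)


rng = np.random.default_rng(0)
sample = rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8)
augmented = augment(sample, rng)
print(augmented.shape, augmented.dtype)  # (64, 64, 3) uint8
```

In a Keras pipeline the same effect would normally come from built-in preprocessing layers; the NumPy version only makes the geometry explicit.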

3.4 Ensemble Voting

We chose the Hard Voting Ensemble [7] for this study, which involves training multiple models and then conducting a voting process to determine the normal or abnormal class based on the majority of votes. For example, if we train five models and three of them predict that a particular image belongs to the normal class, then the image will be classified as normal.
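The voting rule can be sketched in a few lines. In this sketch, 0 = normal and 1 = abnormal, and sigmoid outputs are turned into votes with a 0.5 threshold; both the encoding and the threshold are assumptions. A strict majority of abnormal votes is required, so with an even number of models a tie falls back to normal (with five models, as here, ties cannot occur).

```python
import numpy as np


def votes_from_probabilities(probabilities, threshold: float = 0.5) -> np.ndarray:
    """Turn sigmoid outputs of shape (n_models, n_images) into hard 0/1 votes."""
    return (np.asarray(probabilities) >= threshold).astype(int)


def hard_vote(predictions) -> np.ndarray:
    """Majority vote over per-model binary predictions (n_models, n_images)."""
    predictions = np.asarray(predictions)
    n_models = predictions.shape[0]
    abnormal_votes = predictions.sum(axis=0)
    # Strict majority: more than half of the models must vote "abnormal".
    return (2 * abnormal_votes > n_models).astype(int)


# Five models, two images: three models say "normal" for the first image.
probs = np.array([
    [0.10, 0.80],
    [0.30, 0.90],
    [0.40, 0.20],
    [0.70, 0.60],
    [0.90, 0.45],
])
print(hard_vote(votes_from_probabilities(probs)))  # [0 1]
```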

In this context, we individually trained the following CNNs, each chosen for its particular characteristics and strengths:

-

VGG-19 [23]: Known for its deep architecture with 19 weight layers, VGG-19 achieved one of the highest sensitivities among the tested networks. However, it showed lower precision for one of the classes, suggesting that while it is effective at general classification, it may struggle with fine-grained distinctions.

-

MobileNetV2 [21]: This network is designed to be both efficient and powerful, employing depthwise separable convolutions to reduce the number of parameters and the computational cost while maintaining performance. With ROI extraction, MobileNetV2 improved significantly, achieving results comparable to heavier networks.

-

ResNet-50 [11]: Known for its residual blocks, which mitigate the vanishing gradient problem and allow it to learn intricate features. In our experiments, this translated into fewer false positives in the classification task.

-

DenseNet-121 [13]: With dense connections that encourage feature reuse throughout the network, DenseNet-121 benefited notably from ROI extraction and data augmentation. It showed the most substantial improvement, particularly in accuracy and sensitivity, making it effective at detecting true positives.

-

EfficientNetB0 [25]: This model is designed to achieve better performance with fewer parameters by scaling depth, width, and resolution in a balanced manner. After ROI extraction and data augmentation, EfficientNetB0 demonstrated a good trade-off between accuracy, precision, and sensitivity.

Each of these networks contributes uniquely to our study, providing a better understanding of how different architectures respond to techniques like ROI extraction and data augmentation.

We trained all models on the same randomly selected images, using an identical seed and the same data augmentation parameters. Figure 5 illustrates the Ensemble Voting method.

After training, each model predicts the class of every image in the test set, and the final class (normal or abnormal) is determined by the majority of the models' votes. This procedure aims to increase robustness and accuracy by leveraging the diversity and complementarity of the models used.

3.5 Validation Metrics

At the end of the process, we assess the method's effectiveness using validation metrics: accuracy (the proportion of correct predictions among all observations), sensitivity (the proportion of true positives among all actual positives), precision (the proportion of true positives among all positive predictions), and F1-score (the harmonic mean of precision and sensitivity) [9].
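The four metrics follow directly from the confusion-matrix counts. The sketch below computes them in plain Python, assuming abnormal is the positive class (1); that encoding is an assumption. The last line checks that the reported precision (96.47%) and sensitivity (99.64%) are consistent with the reported F1-score (98.03%).

```python
def f1_from(precision, sensitivity):
    """Harmonic mean of precision and sensitivity."""
    total = precision + sensitivity
    return 2 * precision * sensitivity / total if total else 0.0


def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, sensitivity (recall) and F1 for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "sensitivity": sensitivity,
        "f1": f1_from(precision, sensitivity),
    }


print(binary_metrics([1, 1, 0, 0], [1, 0, 0, 1]))
# Consistency check with the precision and sensitivity reported in the abstract:
print(round(f1_from(0.9647, 0.9964), 4))  # 0.9803
```

Scikit-Learn, which the experimental setup lists, provides the same quantities as `accuracy_score`, `precision_score`, `recall_score`, and `f1_score` in `sklearn.metrics`.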

4 Results and Discussion

In this section, we will explore the experiments conducted at each stage of the proposed method, the configurations used, and a case study demonstrating the evolution of each stage of the method employed. This detailed analysis will provide a deeper understanding of the process and the tools involved.

4.1 Experimental Setup

The models were trained in a virtual environment on Google Colab using Jupyter Notebooks, with NVIDIA A100 and NVIDIA T4 Tensor Core GPUs. Development was carried out in Python, primarily using the OpenCV, Pandas, Scikit-Learn, and Keras libraries.

4.2 Method Experimental Results

We performed several experiments to validate each stage of the proposed method. Given the cost of training all architectures, each of these stage-validation experiments used only DenseNet-121.

The implementation follows Keras library conventions, with specific modifications to the final layers: a Flatten layer connects the convolutional layers to the fully connected layers, followed by a dense layer with 256 neurons and Rectified Linear Unit (ReLU) activation and a 50% Dropout layer to mitigate overfitting. Finally, a dense layer with one neuron and sigmoid activation produces the output. The hyperparameters included the Adam optimizer with a learning rate of 0.001 and early stopping after 50 epochs based on accuracy. All other models used the same configuration and hyperparameters.
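The head described above can be sketched with the Keras functional API. This is a minimal sketch, not the authors' code. Several details are assumptions: the 224 × 224 input size, ImageNet weights for the backbone, binary cross-entropy as the loss, and the reading of "early stopping after 50 epochs based on accuracy" as a 50-epoch cap with early stopping on validation accuracy, using a hypothetical patience of 10. Input preprocessing is omitted.

```python
from tensorflow import keras
from tensorflow.keras import layers


def build_classifier(input_shape=(224, 224, 3), weights="imagenet"):
    """DenseNet-121 backbone with the classification head described above."""
    backbone = keras.applications.DenseNet121(
        include_top=False, weights=weights, input_shape=input_shape
    )
    inputs = keras.Input(shape=input_shape)
    x = backbone(inputs)
    x = layers.Flatten()(x)                      # conv feature maps -> vector
    x = layers.Dense(256, activation="relu")(x)  # fully connected layer
    x = layers.Dropout(0.5)(x)                   # 50% dropout against overfitting
    outputs = layers.Dense(1, activation="sigmoid")(x)  # P(abnormal), assumed encoding
    model = keras.Model(inputs, outputs)
    model.compile(
        optimizer=keras.optimizers.Adam(learning_rate=0.001),
        loss="binary_crossentropy",  # assumed loss for a sigmoid output
        metrics=["accuracy"],
    )
    return model


# Assumed reading of the stopping rule; the patience value is hypothetical.
early_stopping = keras.callbacks.EarlyStopping(
    monitor="val_accuracy", patience=10, restore_best_weights=True
)
# model = build_classifier()
# model.fit(train_ds, validation_data=val_ds, epochs=50, callbacks=[early_stopping])
```

Swapping `keras.applications.DenseNet121` for `VGG19`, `MobileNetV2`, `ResNet50`, or `EfficientNetB0` would give the other ensemble members under the same head, matching the statement that all models share the configuration.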

Furthermore, the dataset was split into three subsets: training (70%), validation (10%), and testing (20%). The training and validation subsets were used for model training, while the testing subset was reserved for evaluating the method.
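A plain random 70/10/20 split of the 4000 images can be sketched as follows. The seed is hypothetical, and the split is not stratified because the text only says the division was random; `sklearn.model_selection.train_test_split` with its `stratify` argument would be an alternative if class proportions should be preserved in every subset. Class balancing and augmentation would then be applied to the training indices only.

```python
import numpy as np


def split_indices(n_samples, fractions=(0.7, 0.1, 0.2), seed=42):
    """Randomly partition sample indices into train/validation/test subsets."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_train = round(fractions[0] * n_samples)
    n_val = round(fractions[1] * n_samples)
    train = order[:n_train]
    val = order[n_train:n_train + n_val]
    test = order[n_train + n_val:]  # remainder (20% here)
    return train, val, test


train_idx, val_idx, test_idx = split_indices(4000)
print(len(train_idx), len(val_idx), len(test_idx))  # 2800 400 800
```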

Experiment with and Without ROI Extraction.

In this step, we conducted two experiments to validate the ROI extraction step. The first experiment used only the original images without any modifications or data augmentation. In the second experiment, we applied ROI extraction to the images to validate this technique. Table 1 summarizes the results.

The results show improvements across all metrics when ROI extraction is employed. This suggests that the removed areas contained irrelevant information that could confuse the classifier. By eliminating these areas, the model generalized better, achieving higher accuracy in classifying endoscopic images. This validation highlights the role of ROI extraction in optimizing model performance and robustness in medical image analysis.

Data Augmentation Experiment with Class Balancing.

Section 3.3 describes the data augmentation techniques used in this study. To validate them, we conducted two experiments with the DenseNet-121 model: the first without data augmentation and the second with it. Table 2 summarizes the results.

As Table 2 shows, data augmentation led to substantial improvements across all evaluated metrics. By increasing the diversity of the training data, it helps the model generalize the complex patterns in endoscopic images and mitigates overfitting, producing more accurate and reliable classifications. Data augmentation is thus an essential component of the proposed method.

Individual CNNs Vs Ensemble Voting Experiment.

Finally, we validated the Ensemble method using the CNNs VGG-19, MobileNetV2, ResNet-50, DenseNet-121 and EfficientNetB0. In general, all steps of the method were maintained, just replacing DenseNet-121 with the mentioned CNNs. Initially, we present the individual results of these CNNs, as well as the performance of the Ensemble Voting method that combines these networks (Table 3). Subsequently, we present all experiments conducted for the final method validation (using DenseNet-121), demonstrating its robustness and highlighting the contribution of each stage to its effectiveness (Table 4).

Table 3 shows that Ensemble Voting outperforms each individual CNN on all evaluated metrics. The gains in accuracy, precision, sensitivity, and F1-score suggest that combining multiple CNNs captures the characteristics of the anomalies better, exploiting the strengths of each model while compensating for its weaknesses.

Table 4 presents the evolution of the proposed method, showing the results of each step using DenseNet-121. The first step, ROI extraction, improved all metrics. Data augmentation was then employed to balance the two classes and generate more images, further improving the metrics. Finally, Ensemble Voting combined several distinct CNNs into a more robust model. Among the individual models, DenseNet-121 provided the highest accuracy and ResNet-50 the best precision; DenseNet-121 and MobileNetV2 showed the largest improvements from ROI extraction and data augmentation, which contributed to higher sensitivity; and EfficientNetB0 yielded balanced results, improving the F1-score. Each CNN showed particular strengths that were amplified by ROI extraction and data augmentation, culminating in an Ensemble that outperforms every individual model.

4.3 Case Study

In this section, we present two case studies analyzing misclassified endoscopic images. The first involves a normal image erroneously classified as abnormal (Fig. 6 (A)), and the second an abnormal image classified as normal (Fig. 6 (B)).

Figure 6 (A) shows an NC image that, despite being normal, contains yellowish residues that may have confused the classifier. These residues are likely food remnants or bile that visually resemble pathological features. In contrast, Fig. 6 (B) depicts a UCE image incorrectly classified as normal; the classifier might have been misled by the absence of the mucus and bleeding typically present in UCE images. Despite these misclassifications, we consider the model robust, and, combined with medical expertise, it could play an important role in detecting gastrointestinal abnormalities.

4.4 Related Works Comparison

This section presents a comparative analysis with the studies discussed in Sect. 2. Because the methodologies of these studies differ significantly from one another, this comparison is not absolute but rather an attempt to establish a reference point. Table 5 summarizes the metrics used for this comparison.

Several studies in the field use CNNs to detect abnormalities in endoscopic examinations. For instance, the authors of [26] and [10] test various architectures to identify those with the best performance metrics and integrate them into their methodologies. While this approach is valuable, no single architecture consistently outperforms the others; different architectures often have particular strengths that can complement each other, which is what Ensemble methods exploit.

The work in [17] uses an Ensemble method to combine multiple classifiers and extract features from each. However, it relies solely on the original dataset, without any data augmentation step. In contrast, the method presented in this study shows significant performance gains from data augmentation. A study that illustrates the benefits of data augmentation is [12], which uses it to balance classes in a binary (normal versus abnormal) classification. That work indicates that reformulating the problem as a binary classification can improve performance metrics, and it evaluates CNNs, highlighting the strengths of each network.

If these studies adopted an Ensemble method similar to the one presented here, they could leverage the positive attributes of multiple models. In addition, the study in [22] focuses on a binary approach for polyp detection, achieving an accuracy of 93.55%. In contrast, the proposed method achieves an accuracy of 98.62%, even though it covers four additional abnormalities.

A comparison with the existing literature reveals that, while CNN-based approaches have proven effective in detecting gastrointestinal abnormalities, there remains ample room for improvement, particularly in integrating diverse architectures and optimizing data augmentation strategies. This study contributes by integrating Ensemble methods with class balancing, data augmentation, and ROI extraction, resulting in a more robust and precise model. Combined with medical expertise, this approach holds promise for improving the early detection of gastrointestinal abnormalities.

5 Conclusion

The proposed method combines ROI extraction with data augmentation and class balancing. Furthermore, it combines CNNs through Ensemble Voting and adopts a binary formulation of the problem, which remains underexplored in the literature. Ensemble Voting integrates the decisions of multiple CNN models, enhancing the diagnostic capability of the method. The results, with an accuracy of 98.62%, precision of 96.47%, sensitivity of 99.64%, and F1-score of 98.03%, highlight the method's effectiveness in detecting abnormalities in UGIE and LGIE exams.

For future work, we suggest validating the method across diverse datasets, automating the optimization of network hyperparameters, and further exploring a broader range of CNNs and Ensemble techniques. These steps aim to enhance diagnostic accuracy and improve the robustness of results.

References

Alagappan, M., Brown, J.R.G., Mori, Y., Berzin, T.M.: Artificial Intelligence in Gastrointestinal Endoscopy: The Future is Almost Here. National Library of Medicine (2018)

Araújo, J.D.L., et al.: Liver segmentation from computed tomography images using cascade deep learning. Comput. Biol. Med. 140, 105095 (2022)

Chiras, D.D.: Human Body Systems: Structure, Function, and Environment. Jones & Bartlett Publishers (2013)

da Cruz, L.B., et al.: Kidney segmentation from computed tomography images using deep neural network. Comput. Biol. Med. 123, 103906 (2020)

da Cruz, L.B., et al.: Kidney tumor segmentation from computed tomography images using Deeplabv3+ 2.5D model. Expert Syst. Appl. 192, 116270 (2022)

da Cruz, L.B., et al.: Interferometer eye image classification for dry eye categorization using phylogenetic diversity indexes for texture analysis. Comput. Methods Programs Biomed. 188, 105269 (2020)

Diniz, J.O., Dias Jr, D.A., da Cruz, L.B., Gomes Jr, D.L., Cortês, O.A., de Carvalho Filho, A.O.: Efficientensemble: Diagnóstico de câncer de mama em imagens de ultrassom utilizando processamento de imagens e ensemble de efficientnets. In: Simpósio Brasileiro de Computação Aplicada à Saúde (SBCAS), pp. 202–213. SBC (2024)

Diniz, J.O.B., et al.: Heart segmentation in planning CT using 2.5D U-Net++ with attention gate. Comput. Methods Biomech. Biomed. Eng. Imag. Vis. 11(3), 317–325 (2023)

Duda, R.: Pattern Classification and Scene Analysis. Wiley-Interscience, New York, London, Sydney, Toronto (1973)

Escobar, J., Sanchez, K., Hinojosa, C., Arguello, H., Castillo, S.: Accurate deep learning-based gastrointestinal disease classification via transfer learning strategy. Department of Electrical Engineering (IEEE) (2021)

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. arXiv (2015)

Hollstensson, M.: Detecting gastrointestinal abnormalities with binary classification of the Kvasir-capsule dataset. Bachelor Degree Project (2022)

Huang, G., Liu, Z., van der Maaten, L., Weinberger, K.Q.: Densely connected convolutional networks. arXiv (2016)

Ilic, M., Ilic, I.: Epidemiology of stomach cancer. World J. Gastroenterol. 28(12), 1187 (2022)

Júnior, D.A.D., et al.: Automatic method for classifying COVID-19 patients based on chest X-ray images, using deep features and PSO-optimized XGBoost. Expert Syst. Appl. 183, 115452 (2021)

Koh, P., Cole, N., Evans, H.M., McFarlane, J., Roberts, A.J.: Diagnostic utility of upper and lower gastrointestinal endoscopy for the diagnosis of acute graft-versus-host disease in children following stem cell transplantation: a 12-year experience. Pediatr. Transplant. 25(7), e14046 (2021)

Naz, J., Khan, M.A., Alhaisoni, M., Song, O.Y., Tariq, U., Kadry, S.: Segmentation and Classification of Stomach Abnormalities using Deep Learning. Tech Science Press (2021)

Noffsinger, A.E.: Serrated Polyps and Colorectal Cancer: New Pathway to Malignancy, Mechanisms of Disease. Ann. Rev. Pathol. (2008)

Pogorelov, K., et al.: Kvasir: a multi-class image dataset for computer aided gastrointestinal disease detection (2017). https://doi.org/10.1145/3083187.3083212

Rogler, G.: Chronic ulcerative colitis and colorectal cancer. Cancer Letters (2014)

Sandler, M., Howard, A., Zhu, M., Zhmoginov, A., Chen, L.C.: MobileNetV2: inverted residuals and linear bottlenecks. arXiv (2018)

Sharma, P., Das, D., Gautam, A., Balabantaray, B.K.: LPNet: a lightweight CNN with discrete wavelet pooling strategies for colon polyps classification. Wiley (2022)

Simonyan, K., Zisserman, A.: Very deep convolutional networks for large-scale image recognition. arXiv (2014)

Smedsrud, P.H., et al.: Kvasir-Capsule, a video capsule endoscopy dataset (2021). https://doi.org/10.1038/s41597-021-00920-z

Tan, M., Le, Q.V.: EfficientNet: rethinking model scaling for convolutional neural networks. arXiv (2019)

Yogapriya, J., Chandran, V., Sumithra, M.G., Anitha, P., Jenopaul, P., Suresh Gnana Dhas, C.: Gastrointestinal tract disease classification from wireless endoscopy images using pretrained deep learning model. Hindawi (2021)

Acknowledgments

This work was supported by the Coordenação de Aperfeiçoamento de Pessoal de Nível Superior - Brasil (CAPES) - Finance Code 001, Fundação de Amparo à Pesquisa do Maranhão (FAPEMA), and Conselho Nacional de Desenvolvimento Científico e Tecnológico (CNPq). We also acknowledge LLM use for spell-checking, grammar correction, and assistance in translating specific terms.


Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

da S. Viana, P., da Cruz, L.B., Dias Jr., D.A., Diniz, J.O.B. (2025). Anomalies Diagnostic in Endoscopic Images Using Deep Learning Ensemble Models. In: Paes, A., Verri, F.A.N. (eds) Intelligent Systems. BRACIS 2024. Lecture Notes in Computer Science(), vol 15414. Springer, Cham. https://doi.org/10.1007/978-3-031-79035-5_8


DOI: https://doi.org/10.1007/978-3-031-79035-5_8


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-79034-8

Online ISBN: 978-3-031-79035-5

eBook Packages: Computer Science, Computer Science (R0)