Abstract

We are currently witnessing growing concerns regarding fairness in online marketplace environments, particularly in situations involving platform-owned first-party selling, biased recommendation algorithms and trust formation. Regulatory measures, such as those imposed by the European Commission and the US Federal Trade Commission, underscore the importance of fair practices on these platforms. While machine learning ranking models are widely used in ranking multiple offers from various sellers, they can inadvertently introduce biases, raising regulators’ concerns. Despite recent advances in fair ranking algorithms, the long-term impact of fairness in ranking remains understudied. The contributions of this research are threefold: 1) it establishes a simulated online marketplace environment to complement traditional static experiments; 2) it assesses the long-term impact of fairness in utility of ranking algorithms; and 3) it evaluates state-of-the-art fairness techniques in dynamic environments. Findings reveal how utility in ranking fairness algorithms can be affected by the application of fairness techniques and how data drift impacts regular and fair ranking algorithms in a long-term scenario.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

Online marketplace services for third-party sellers are commonly provided by major e-commerce platforms such as Amazon and Alibaba. However, concerns about fairness have emerged in recent years, particularly in situations where: (i) the marketplace platform itself engages in first-party selling and increases revenue by employing biased algorithms or by making an unfair use of private seller data [8]; and (ii) the platform’s recommendation algorithms favor sellers that utilize paid services, such as fulfillment or delivery [3].

In response to these concerns, the European Commission has taken concrete action by determining that these practices may lead to violations to the antitrust rules of the European Union. As a result, regulatory measures have been imposed on Amazon’s Buy Box and Prime program to ensure compliance with the established guidelines [19].

Machine learning ranking models are widely employed in the construction of systems that rank multiple offers from multiple sellers for the same product. However, these models may, inadvertently or not, introduce biases that favor the first-party seller or clients of other services provided by the platform-owning company, which were considered by regulatory entities as anticompetitive practices [3].

Despite recent advancements in fair ranking machine learning algorithms and the detection of bias in ranked outputs [23], the examination of the long-term impact of fairness in ranking algorithms remains relatively understudied, especially when compared to the extensive research conducted on fairness in classification techniques [7]. While efforts have been made to develop fair ranking algorithms and identify biases in rankings, there is a need for further investigation into the sustained effects of these algorithms on different stakeholders, including sellers, consumers, and the overall competitive landscape of online marketplaces. Exploring the long-term implications of fairness in ranking algorithms is essential for developing effective strategies to mitigate bias and promote fair practices in the context of online marketplace platforms.

Amazon’s project Nessie [1] illustrates the long-term implications of unfair algorithms. The U.S. Federal Trade Commission (FTC) scrutinized the algorithm’s behavior, expressing concerns about its potential adverse effects on competition. Ensuring compliance in an adaptive pricing algorithm is complicated due to the non-deterministic nature of machine learning models [17], which makes it difficult to keep track of all behavior changes in each version, especially in corner cases. The model interacts with potentially millions of products, considers numerous concurrent offers, has to make adjustments over time in response to the dynamic pricing environment, and deals with the continual addition of new products and the long-term impact of price changes on competitors and customers’ behaviors.

Previous studies have utilized simulations to evaluate long-term fairness in binary decision scenarios, such as loans and college admissions [7]. However, ranking tasks are different from binary decision scenarios, requiring different algorithms, metrics and definitions of fairness [23]. This research aims to fulfill this gap, with the following contributions: (i) to establish a simulated online marketplace environment as a baseline framework for future implementations involving ranked data; (ii) to assess the long-term impact of fairness in utility, providing valuable insights for companies, regulators and other stakeholders; and (iii) to assess how state-of-art fairness in ranking techniques address data distribution changes.

The results underscored that current methods and metrics for optimizing fairness in rankings are significantly influenced by changes in distribution. Moreover, they highlighted that the impact of the fairness-utility trade-off can vary depending on the distribution of attribute values considered by the underlying ranking algorithm and fairness optimization method. These findings emphasize the need for robust approaches that can adapt to dynamic distributions while balancing fairness and utility effectively.

2 Related Work

This section focuses on studies that evaluate the long-term impact of fairness interventions and examine the sustained effects of fairness-aware algorithms on decision-making processes, highlighting their efficacy and potential drawbacks over extended periods. It also explores research that proposes various ranking fairness techniques, case studies, applications and limitations.

In their study, D’Amour et al. [7] analyze three illustrative toy scenarios to investigate the dynamics of fairness. The first scenario is a loan domain originally proposed by Liu et al. [14], where the focus is on fairness in credit allocation. The second scenario revolves around attention allocation and was introduced by Elzain et al. [9] and Ensign et al. [10], highlighting the significance of fairness in resource allocation. Lastly, the researchers examine a college admission scenario, adopting the problem setup of a two-player game known as the Stackelberg game [4], to explore fairness challenges and strategies within the educational context.

Agent-environment interaction loop of ml-fairness-gym [7]

To analyze the implications of fairness within these scenarios, D’Amour et al. [7] developed a framework called ml-fairness-gymFootnote 1. This framework utilizes a Markov Decision Process (MDP) paradigm, as illustrated in Fig. 1, to facilitate the dynamic interaction between agents and their environment. Within this setup, the environment undergoes state transitions in response to the agent’s actions, while the agent, on the other hand, observes the environment’s state to make informed decisions. At each state of the environment, fairness metrics are calculated, providing an understanding of fairness dynamics throughout the simulation. The ml-fairness-gym framework is an extension of OpenAI’s Gym framework, a state-of-the-art tool for training reinforcement learning. This inherited capability allows it to assist in the creation of adaptative solutions for decision-making process in pursuit of fairness.

The paper underscores a series of instances where discrepancies emerge between one-step analysis and dynamic environmental conditions. For instance, even when ensuring an equitable true positive rate (TPR) between the two groups at every stage, the cumulative TPR aggregation displays inequality. This phenomenon, known as Simpson’s Paradox, remains imperceptible within a static analysis, but was detected thought simulation. To summarize, the authors emphasize three key points: firstly, simulations can complement empirical data in the experimentation process; secondly, there exists significant potential in using reinforcement learning algorithms for policy optimization through a fairness agent; and finally, the application of simulation techniques can ease the implementation and exploration of online or adversarial learning, as it allows to implement different scenarios, including rare and corner cases.

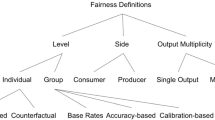

Zehlike, Yang, and Stoyanovich [23] conducted a survey on fairness in ranking techniques. In their work, they analyzed state-of-the-art algorithms, also introducing a comprehensive taxonomy for classifying these algorithms. This taxonomy involves categorizing fairness ranking techniques based on the specific stage within the ranking task at which mitigation strategies are applied.

In accordance with this established taxonomy, the stages at which a fairness intervention may take place within a ranking task can be categorized into three distinct phases:

-

The fairness algorithm for ranking can intervene on the initial candidate set before the execution of the ranking function. In the case of the marketplace environment, it would be an intervention in the offer data (e.g. price, delivery time).

-

It can integrate itself within the ranking function, directly affecting the ranking process. In a marketplace environment, it would be an intervention in the process of scoring offers.

-

It can act in the processed ranked set, subsequent to its treatment by the ranking function, refining the fairness attributes of the final rankings. Considering a marketplace environment, this intervention would happen after the scoring of the offers, using the score as input and generating fair scores.

Another taxonomy refers to the type of bias that the data presents [23]. They are:

-

Pre-existing bias: a bias is categorized as “pre-existent” when societal or environmental frameworks predispose discrimination against specific members within a certain group. An illustrative instance is the Scholastic Assessment Test (SAT) math scores in the context of US college applicants, where discernible variations exist in distribution and averages among racial groups. As highlighted by Reeves and Halikias [16], the average SAT math scores for black and latino individuals are markedly lower compared to those for white and Asian counterparts. This disparity is further accentuated by the distribution patterns: scores for black and Latino individuals tend to cluster at the lower end, white scores exhibit a more standard normal distribution, and Asian scores tend to cluster at the higher end of the spectrum. Occasionally, this form of bias may manifest within a marketplace environment, particularly when a company introduces premium services. For instance, such services might give advantages to sellers utilizing them, even if the services were not explicitly designed to function in such a manner. An example of this can be observed in the realm of delivery services, where a particular service provider is consistently better than its competitors, consequently affording an unintended advantage to sellers who subscribe to this superior service.

-

Technical bias: a form of bias that comes from technical constraints or deliberate decisions is characterized as “technical bias”. An illustrative case is the presence of positional bias that happens when a marketplace website accentuates a singular offer by positioning it in a more prominent position, relegating others to smaller sections of the screen. This scenario underscores the challenge of mitigating bias within the constraints of platform design. An exemplar solution, as suggested by the European Commission [3], involves the strategic highlighting of two comparable offers whenever feasible. However, achieving a solution that genuinely aims for equitable treatment is invariably limited by the inherent limitations imposed by the design of the marketplace environment.

-

Emergent bias: occurs when a ranking system is conceived with a distinct agent archetype in focus or when it interfaces with a societal construct that exhibits temporal evolution [23]. Within a marketplace ecosystem, the dispersion of offer attributes like price and delivery time can undergo continual transformation due to the incessant influx of new sellers into the marketplace. This dynamic process can cause shifts in the score distributions among different groups, providing a latent source of bias that gradually materializes over time.

All these three forms of bias may manifest in a marketplace platform. A robust simulation framework may put fairness algorithms under experimentation, analysing even corner cases and multiple sources and types of bias.

In their study [23], which introduced method classification frameworks and examined bias mitigation approaches within score-based ranking tasks, Zehlike, Yang, and Stoyanovich found that none of the investigated methods were explicitly tailored or assessed for the mitigation of emergent bias. As documented in the study, every method addressed pre-existing bias [2, 5, 6, 12, 13, 18, 21, 22]. However, only a single method [21] was specifically designed to address technical bias mitigation as well.

Yang and Stoyanovich [22] introduced a framework providing metrics for quantifying fairness and a toolset to integrate these metrics into an optimizer. The optimization method proposed by the authors was tested in both synthetic and real world datasets, being able to produce equitable outcomes while also optimizing utility.

Celis, Straszak, and Vishnoi [6] provided an optimization framework capable of handling multiple sensitive attributes with nested fairness constraints in linear complexity. The algorithm proposed by these authors was subjected to an evaluative assessment employing essential metrics such as discounted cumulative gain (DCG), Spearman’s rank correlation coefficient (rho), and the Bradley-Terry model.

Yang, Gkatzelis, and Stoyanovich [21] complemented previous research efforts, which emphasized diversity constraints, by showing other interrelated fairness dimensions, most notably in-group fairness. This fairness concept revolves around the possibility that candidates selected from a particular group might not inherently represent the best fit when evaluated within the group’s context. Through an optimization framework, the authors introduced new fairness constraints, made to combine in-group fairness alongside established diversity constraints. To empirically substantiate their framework’s efficacy, an experimental evaluation was undertaken, utilizing various public datasets. The results showed that, as expected, the enforcement of regular diversity constraints caused an utility loss of 28%, with an increase of 3% of loss when including in-group fairness.

Stoyanovich, Yang, and Jagadish [18] introduced a framework for enforcing fairness constraints within the domain of score-based ranking in streaming tasks. In streaming environments, the inherent complexity arises from the challenge of not possessing access to the entirety of candidates during the prediction phase. The authors proposed more efficient set selection techniques and new insights on how to circumvent this limitation. Their work was tested against both real-world and synthetic datasets. The framework demonstrated success in providing fair outcomes, with an acceptable trade-off in utility.

Kleinberg and Raghavan [13] described implicit bias, which can be classified as a form of pre-existing bias, showing how unconscious prejudice linked to group affiliations can inadvertently steer the decision-making process. The authors proposed a theoretical framework that enables the analysis of the ramifications from implicit bias, showing potential trade-offs and benefits associated with bias mitigation strategies.

Celis, Mehrotra, and Vishnoi [5] also study implicit bias, analyzing fairness constraints inspired by the Rooney Rule. The Rooney Rule, created by the U.S. National Football League (NFL), mandates that all its teams include at least one minority candidate for head coaching positions. When scrutinizing the outcomes of implementing Rooney Rule-like constraints on curated datasets, this approach was successful in dealing with implicit bias, presenting outcomes congruent with the findings of Kleinberg and Raghavan [13].

Yang, Loftus, and Stoyanovich [12] presented a causal fairness framework specifically designed to deal with the concept of intersectionality, wherein a candidate is found affiliated with multiple protected groups simultaneously. The framework was tested in a real-world and synthetic datasets, exposed to several structure assumptions, presenting more diverse outcomes than the original datasets ranking.

Asudeh et al. [2] developed a system aimed at aiding human decision-makers in fine-tuning weights within ranking score functions. Empirical validation via tests on real-world datasets has proven the efficacy of the system. Moreover, the complexity of this solution, which uses a simple weighted function during a ranking task, is much lower compared to techniques that use optimization or causal fairness.

In summary, this section presented state-of-the-art fairness algorithms addressing various biases in ranked inputs. While none of them specifically addresses long-term fairness impacts, research on this aspect exists for classification and resource allocation tasks [4, 7, 9, 10, 14]. Identifying this research gap and inspired by recent regulatory discussions on fairness in marketplace environments, we developed a simulation framework. The framework draws inspiration from D’Amour et al.’s work [7] and utilizes the algorithm proposed by Celis, Straszak, and Vishnoi [6]. Additionally, we incorporated the Rooney Rule, whose effects were studied by Celis, Mehrotra and Vishnoi [5].

3 Experimental Setup

The main objective of this section is to present the experimental procedure for designing and evaluating the marketplace simulation, detailing the fundamental mechanics of this kind of environment and the setup for systematically changing affiliation group sizes. This approach aims to understand the effects of bias mitigation strategies within the dynamic context of a marketplace.

Algorithm 1 provides an illustrative depiction of the complete simulation procedure. It iterates a total of \(n \cdot ss\) times, where n denotes the frequency of parameter updates and the generation of new seller pools. Here, ss signifies the number of ranking tasks executed with consistent parameters and seller pools, both premium and non-premium.

The creation of seller pools, which represent the sellers registered in the marketplace, is segmented into premium and non-premium categories (Cp and Cnp, respectively). This is made through the function GeneratePool, which receives the following parameters:

-

Pp: This parameter signifies the proportion of premium sellers relative to the total number of sellers. The distinct sizes of the two pools may emulate the evolution of premium services within a business context.

-

NTotal: This variable represents the overall count of sellers subscribed to the marketplace environment.

-

\(p_p\) and \(p_{np}\): These parameters, which refer to the premium pool and non-premium pools, respectively, encompass the mean and standard deviation values for each attribute of the generated offers (e.g., price, delivery time). Consequently, within the simulation, it is possible to fine-tune these attributes and optimize parameters for specific groups, conducting trade-off analysis as needed.

Following the generation of both seller pools, at each iteration of j, the RandomSelection function comes into play. This function randomly gets a subset from these pools, forming an offer list. Each selected seller has the capacity to generate an offer, with individual attributes being determined based on the mean and standard deviation values specified in \(p_p\) and \(p_{np}\).

The default behavior of this random generation adheres to the Rooney Rule, ensuring that there is always at least one offer from each group. This characteristic aligns with the principles of fairness and inclusivity, mirroring real-world practices in which diversity is prioritized.

Next, the generated offers (Co) undergo ranking through a weighted function, which is an approach commonly used for naive ranking algorithms [23]. Attribute weights assigned within this process serve to accentuate the significance of particular attributes, facilitating the generation of a ranking score. The most important attributes are generally determined by the machine learning algorithm using the provided data or by a set of business rules created by stakeholders, which prioritize factors such as price and delivery time over other attributes. For this setup, based on observations from real-world data, the following attributes were generated for each offer, and these weights were assigned to them in the regular ranking agent:

-

Price (weight=5): the cost associated with the seller’s offerings, determinant for buyers.

-

Delivery Time (weight=2): the estimated time frame within which the product or service will be delivered, another critical factor influencing purchase decisions.

-

Installment Ratio (weight=1): the proportion of the payment that can be made in installments, providing flexibility to buyers.

-

Seller Score (weight=1): the seller’s historical performance and reputation within the marketplace, a trust-building metric for buyers.

-

Premium Status (weight=1): indicates whether the seller belongs to the premium category or not. Premium sellers are meant to have access to exclusive services that may provide advantage in competition. In the simulation, the weight introduces bias explicitly. However, it is worth noting that in most real-world scenarios, bias typically manifests implicitly or emerges from the training data used or as a side effect of stakeholder decisions.

The aforementioned attributes have been subject to normalization using a min-max scaler, rescaling their values within the range of 0 to 1. It is important to note that, for consistency, all attributes are configured with a “bigger is better” orientation, even in cases where this contradicts the natural interpretation of the non-normalized features. For instance, attributes like price and delivery time, which are conventionally considered more attractive to consumers when they are smaller, have been configured as “bigger is better”.

Following the initial ranking procedure, the FairRankOffers function intervenes to refine the fairness of the ranking. This optimization mechanism implemented the methodology proposed by Yang and Stoyanovich [22], publicly accessible on GitHubFootnote 2, replicating their approach.

The GroundTruthRankOffers function receives the same parameters as the RankOffers function and behaves similarly. The key distinction is that while the RankOffers function favors premium sellers, the GroundTruthRankOffers function ignores the premium attribute, serving as a reliable oracle for comparing both fair and regular ranking agents against an utility-optimized agent.

At the conclusion of each iteration i, the UpdateParameters function is invoked and changes the distribution of both seller groups poll sizes, represented by the parameters \(p_p\) and \(p_{np}\). The variation size of the sellers distribution may be configured at the beginning of the experiment. The idea of this function is to gradually change the pool size of each group.

At the conclusion of each iteration i, the UpdateParameters function is invoked to alter the distribution of both seller groups’ pool sizes, represented by the parameters \(p_p\) and \(p_{np}\). The magnitude of variation in the sellers’ distribution can be configured at the beginning of the experiment. The purpose of this function is to gradually adjust the pool size of each group.

The data is sampled from data distributions calculated with real product offer data using the following method:

-

Data distributions of 100 products are obtained using the fitter libraryFootnote 3, which approximates the data distribution of the attributes of each product. Then, the features mean price and mean delivery time are aggregated to enrich the metadata.

-

The HDBSCAN clustering algorithm is used to cluster the data and segregate it into 4 groups, excluding the outliers.

-

A qualitative evaluation is applied to the results, and the distribution of each attribute representing each cluster of products is consolidated using the most common distribution within the clusters and the mean of the distribution parameters.

-

At the beginning of each iteration, the simulation sellers’ feature sampler first chooses which cluster the product belongs to, respecting the original data distribution. It then samples the offers using the evaluated distribution of the chosen cluster. The outliers cluster is also considered, and when chosen, the distribution of each attribute is uniform.

The code utilized for the simulation is openly accessible on GitHubFootnote 4, ensuring full reproducibility of this setup.

4 Results and Discussion

This section presents the results of the simulation outlined in the preceding section. The simulated scenario involved adding more premium sellers over time, removing 3% of the non-premium seller pool and adding them to the premium seller pool. In the simulation, the regular ranking agent is biased towards premium sellers. This bias is represented by a 0.1 weight on the premium status attribute. In real-world scenarios, this bias can appear in an implicit form in the training data or as a complex set of requirements from stakeholders.

The ground truth ranking agent doesn’t consider the premium status attribute in the ranking function. This provides an unbiased version that doesn’t have utility metrics degraded by the fairness-utility trade-off. It is worth noting that simply not considering the premium status attribute doesn’t solve any type of bias problem, as this is a simulated scenario with a conveniently simple biased ranking function and a parameterized amount of bias. Additionally, the attributes of premium and non-premium sellers have the same distribution, which is not the case in production environments.

In Fig. 2, the red line with squared points represents the number of offers from premium sellers that won first place in the simulated offer ranking, while the blue dotted line represents the non-premium first-place winners. The x-axis represents the iterations of the simulation. In this experiment, we conducted the simulation for 20,000 iterations. Every 1,000 iterations, 30 sellers from the non-premium seller pool move to the premium seller pool. The grey bars in the figure represent the number of premium sellers, shown in darker grey, and non-premium sellers, shown in lighter grey, in each pool, with the total pool size always summing to 1,000.

In each graph, we have a type of agent. The regular agent is clearly biased towards premium sellers. At the beginning of the simulation, premium sellers are only 10% of the total pool, yet the number of premium winners was slightly more than 50%, surpassing the number of non-premium winners, which comprised 90% of the total seller pool. The fair agent’s premium winner plot line adheres almost perfectly to the proportion of premium sellers, with slight variations. This indicates that the fairness optimization used in the fair agent algorithm keeps the participation of each group in line with the group size, even in the buybox scenario where only the top-ranked seller is analyzed.

In the ground truth agent graph, the number of premium sellers that won in the offer ranking also adheres to their proportion in the total seller pool. This indicates that, in terms of fairness, the fair and the ground truth agents exhibit similar behaviors, especially when considering how much the graph diverges from that of the regular agent.

Figure 3 shows the mean price evolution of the top-ranked offer during simulation iterations for each product type. These product types were derived from clustering real-world data using the HDBSCAN algorithm, as detailed in Sect. 3, where each identified cluster represents a distinct product type. The HDBSCAN algorithm was chosen because it does not require a fixed number of groups, automatically creates an outlier group, and allows for qualitative analysis of the results using the hierarchical structure [15]. The outlier group was not considered in this analysis.

Since each product type has a different distribution of the price attribute, the figure shows that the winners chosen by the fair agent consistently have a worse mean price compared to those of the ground truth agent and the regular agent. Throughout all iterations, the regular agent consistently demonstrates a worse price mean than the ground truth agent.

Figure 3 also highlights how the distribution of the attribute can affect the results of the fair agent. Product types 2 and 4 have more variation in their fair agent price mean, ranging between 0.4 and 0.6, while in product types 1 and 3 this variation is between 0.5 and 0.6. The variation between the regular and ground truth agents is also more pronounced in product types 2 and 4, with some iterations showing a wider gap between the regular and ground truth price means.

Figure 4 shows two critical difference diagrams of the Normalized Discounted KL-divergence (rKL), the metric proposed by Yang and Stoyanovich [22], with smaller rKL values indicating fairer rankings. For each ranking agent, the mean rKL is calculated over 20 groups, each with 1,000 iterations, to ensure robust results. The agents are then ranked based on their mean rKL values for each group, and the average rank for each agent is computed across all groups.

In Fig. 4, in the diagram at the left side, the rKL was calculated using a cutoff point of 5 in the evaluated ranking, meaning that only the top 5 positions are considered when calculating the metric. The diagram at the right side uses a cutoff point of 10.

We performed a Nemenyi post-hoc test for both cutoff point options. The test identifies the sets of agents whose mean rKL ranked differences are not statistically significant. This is represented by a black horizontal bar connecting them: if two algorithms are connected by the bar, their fairness, as measured by the rKL metric, does not differ significantly.

The horizontal bar in the right side of Fig. 4 (cutoff point value of 10) connects all three agents. This means that there is not a significant statistical difference between them. This can be explained by several factors. The first factor is the changing data distribution. Initially, the smallest rKL for both cutoff point values belongs to the regular agent because the premium seller pool starts with fewer members than the non-premium seller pool. As the premium seller ratio in the total pool approaches 50%, the rKL measurements of all agents begin to align with expectations. Looking at the whole simulation, the best method for fairness is the fair agent, followed by the ground truth agent with a 0.1 difference in the average ranking, and then the regular agent, with a 0.5 difference in the average ranking.

For the cutoff point value of 5, the same behavior is observed, but with a more pronounced difference between the agents. The fair agent and the regular agent show a significant statistical difference in their results, but neither shows a significant difference with the ground truth agent, which divides both. The best cutoff point may depend on the use case and the parameters of the fairness optimizer, but the low sensitivity of the metric can also significantly affect how the metric behaves during the simulation with changing group sizes. When evaluating more members in the ranking, we often include more members of both affiliation groups, making position bias more pronounced.

Despite the influence of the cutoff point value on metric performance and the expected results shown in both diagrams, using rKL in an environment where there are changes in group affiliations can lead to unexpected outcomes. Yang and Stoyanovich [22] assert that, compared with other similar metrics, the symmetric behavior of rKL makes it more resilient to distribution changes. However, the lack of sensitivity of the metric can be detrimental to its performance in measuring fairness in such scenarios.

5 Concluding Remarks

While the fair agent has proven effective in maintaining group proportionality at the top of the ranking, it has shown inferior results in average prices compared to the regular agent and the ground truth agent. The fair agent frequently selects offers with worse prices. This disparity in average prices highlights the inherent trade-off between fairness and utility in ranking systems, where optimizing for one objective can lead to a negative impact on another.

The fairness-utility trade-off, coupled with the challenge posed by constantly changing group size distributions, has underscored limitations in state-of-the-art methods and metrics for optimizing ranking fairness in the proposed simulated environment. Novel approaches borrowed from fairness in classification methods, which are resilient to distribution shifts and capable of detecting unaware protected attributes and mitigating bias, can be adapted and tested for fairness in ranking tasks. Promising methods of this kind include quantification methods [11] and adversarial architectures for fairness [20].

References

Federal trade commission, et al. v. amazon.com, inc. Case No. 2:23-cv-01495-JHC, United States District Court for the Western District of Washington (2023). https://www.ftc.gov/system/files/ftc_gov/pdf/1910134amazonecommercecomplaintrevisedredactions.pdf. Accessed 29 Jun 2024

Asudeh, A., Jagadish, H., Stoyanovich, J., Das, G.: Designing fair ranking schemes. In: Proceedings of the 2019 International Conference on Management of Data, pp. 1259–1276 (2019)

Browne, R.: Amazon avoids multibillion-dollar fine as it reaches EU antitrust settlement (2023). https://www.cnbc.com/2022/12/20/amazon-reaches-settlement-with-eu-on-antitrust-case.html. Accessed 29 June 2024

Brückner, M., Scheffer, T.: Stackelberg games for adversarial prediction problems. In: Proceedings of the 17th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 547–555 (2011)

Celis, L.E., Mehrotra, A., Vishnoi, N.K.: Interventions for ranking in the presence of implicit bias. In: Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, pp. 369–380 (2020)

Celis, L.E., Straszak, D., Vishnoi, N.K.: Ranking with fairness constraints. In: 45th International Colloquium on Automata, Languages, and Programming (ICALP 2018), vol. 107, pp. 28 (2018)

D’Amour, A., Srinivasan, H., Atwood, J., Baljekar, P., Sculley, D., Halpern, Y.: Fairness is not static: deeper understanding of long term fairness via simulation studies. In: Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, pp. 525–534 (2020)

Dash, A., Chakraborty, A., Ghosh, S., Mukherjee, A., Gummadi, K.P.: When the umpire is also a player: bias in private label product recommendations on e-commerce marketplaces. In: Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, pp. 873–884 (2021)

Elzayn, H., et al.: Fair algorithms for learning in allocation problems. In: Proceedings of the Conference on Fairness, Accountability, and Transparency, pp. 170–179 (2019)

Ensign, D., Friedler, S.A., Neville, S., Scheidegger, C., Venkatasubramanian, S.: Runaway feedback loops in predictive policing. In: Conference on fairness, accountability and transparency, pp. 160–171 (2018)

Fabris, A., Esuli, A., Moreo, A., Sebastiani, F.: Measuring fairness under unawareness of sensitive attributes: a quantification-based approach. J. Artif. Intell. Res. 76, 1117–1180 (2023)

Ke, Y., Loftus Joshua, R., Julia, S.: Causal intersectionality and fair ranking. In: 2nd Symposium on Foundations of Responsible Computing, FORC 2021, June 9–11, 2021, Virtual Conference (LIPIcs), vol. 192, pp. 1–7 (2021)

Kleinberg, J., Raghavan, M.: Selection problems in the presence of implicit bias. In: 9th Innovations in Theoretical Computer Science Conference (ITCS 2018). Schloss-Dagstuhl-Leibniz Zentrum für Informatik (2018)

Liu, L.T., Dean, S., Rolf, E., Simchowitz, M., Hardt, M.: Delayed impact of fair machine learning. In: International Conference on Machine Learning, pp. 3150–3158 (2018)

McInnes, L., Healy, J.: Accelerated hierarchical density based clustering. In: 2017 IEEE International Conference on Data Mining Workshops (ICDMW), pp. 33–42 (2017)

Reeves, R.V., Halikias, D.: Race gaps in sat scores highlight inequality and hinder upward mobility (2017). https://www.brookings.edu/articles/race-gaps-in-sat-scores-highlight-inequality-and-hinder-upward-mobility. Accessed 29 June 2024

Riccio, V., Jahangirova, G., Stocco, A., Humbatova, N., Weiss, M., Tonella, P.: Testing machine learning based systems: a systematic mapping. Empir. Softw. Eng. 25, 5193–5254 (2020)

Stoyanovich, J., Yang, K., Jagadish, H.: Online set selection with fairness and diversity constraints. In: Proceedings of the EDBT Conference (2018)

The European Commission: Antitrust: Commission accepts commitments by amazon barring it from using marketplace seller data, and ensuring equal access to buy box and prime. https://ec.europa.eu/commission/presscorner/detail/en/ip_22_7777. Accessed 29 June 2024

Yang, J., Soltan, A.A., Yang, Y., Clifton, D.A.: Algorithmic fairness and bias mitigation for clinical machine learning: insights from rapid COVID-19 diagnosis by adversarial learning. medRxiv (2022)

Yang, K., Gkatzelis, V., Stoyanovich, J.: Balanced ranking with diversity constraints. In: Proceedings of the Twenty-Eighth International Joint Conference on Artificial Intelligence, IJCAI, pp. 6035–6042 (2019)

Yang, K., Stoyanovich, J.: Measuring fairness in ranked outputs. In: Proceedings of the 29th International Conference on Scientific and Statistical Database Management, pp. 1–6 (2017)

Zehlike, M., Yang, K., Stoyanovich, J.: Fairness in ranking, part i: Score-based ranking. ACM Comput. Surv. 55(6), 1–36 (2022)

Acknowledgements

We would like to thank Americanas S.A. for their technical support. This study was financed in part by the Coordenação de Aperfeiçoamento de Pessoal de Nível Superior - Brasil (CAPES) - Finance Code 001.

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Silva, L.C., Lucrédio, D. (2025). Exploring Score-Based Ranking Fairness in Marketplace Environments Through Simulation. In: Paes, A., Verri, F.A.N. (eds) Intelligent Systems. BRACIS 2024. Lecture Notes in Computer Science(), vol 15414. Springer, Cham. https://doi.org/10.1007/978-3-031-79035-5_19

Download citation

DOI: https://doi.org/10.1007/978-3-031-79035-5_19

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-79034-8

Online ISBN: 978-3-031-79035-5

eBook Packages: Computer ScienceComputer Science (R0)