Abstract

Cardiovascular disease is one of the leading causes of worldwide death and an annual burden for European and American economies. The primary source of data to identify heart abnormalities is the electrocardiogram (ECG). However, its identification requires significant human efforts. In many cases, traditional rule-based diagnosis is inefficient due to the data heterogeneity, motivating the use of deep neural network approaches. However, ECG data varies significantly between equipment, including the sampling rate. Self-supervised Representation Learning (SSL) has gained increasing attention due to its generalization capacity in scenarios with scarce availability of a large volume of labeled data. In this work, we investigate using SSL methods in a cross-dataset scenario. We raised different research questions supported by the assumption that high-resource data, with a large sampling rate and volume of records, is helpful for training models in an SSL manner to classify ECG in low-resource datasets. Besides, we evaluate different resampling methods to match the sampling rate between datasets, including a novel upsampler based on ESPCN. Our experimental evaluation shows that using the state-of-the-art SSL models TS-TCC and TS2Vec can improve the accuracy in the low-resource dataset. However, the best results were obtained using the same dataset in both pre-training and downstream task steps. These results indicate the need to develop more suitable pretext tasks for cross-dataset scenarios, which may use our experimental analysis to build new ideas.

Access provided by University of Notre Dame Hesburgh Library. Download conference paper PDF

Similar content being viewed by others

1 Introduction

Cardiovascular Disease (CVD) was one of the leading causes of worldwide death in 2016, being an annual burden for the European and American economies estimated on a scale of hundreds of billions of dollars [4]. On the other hand, identifying heart abnormalities and providing effective treatment requires significant human efforts and expensive medical procedures [17].

The primary source of data to identify these abnormalities is the electrocardiogram (ECG). The ECG is a non-stationary physiological signal characterized for being a low-cost, rapid, and simple exam that provides an overview of the physiological and structural condition of the heart by identifying pathological patterns among the heartbeats [14]. Alongside monitoring the heart’s health, ECGs can provide valuable diagnostic clues for systemic conditions such as mental stress and drug toxic effects [12].

In many cases, the traditional rule-based diagnosis paradigm is inefficient due to the data heterogeneity, motivating the proposal of deep neural networks-based approaches for various related tasks [4]. Generally, the availability of sufficiently large labeled data is one of the critical factors for reliable deep learning methods [19]. However, the labeling process is particularly costly in the health domain, and clinical ground truth is hard to define in many cases [10]. It encouraged some studies to explore the use of representation learning for ECG data [20].

Self-supervised Representation Learning, or only Self-supervised Learning (SSL), has gained increasing attention due to its generalization capacity in scenarios where sufficiently large labeled data for training deep neural networks is scarce [19]. SSL methods are a two-step framework consisting of an unsupervised pre-training step followed by a supervised task, also called downstream task [12]. The pre-training step relies on an optimization task generated using pseudo-supervision signals from unlabeled data [22]. The pretext task defines how the model will construct the pseudo-supervision signals, serving as a means to learn informative representations for downstream tasks. We can generally organize the pretext tasks into three categories: context prediction, instance discrimination, and instance generation [19]. ECG is stored and analyzed as a time series. In this domain, context prediction and instance discrimination are the most commonly used category [3, 7, 21].

However, despite the variety of proposed pretext task approaches, most research on time series SSL evaluates the quality of the proposed pre-trained network using the same dataset in both self-supervised and downstream steps. This kind of evaluation helps demonstrate that the pretext task and its architecture may help classify a low-resource target dataset. However, it neglects cross-dataset scenarios where a high-resource dataset is used as source data to pre-train a model to increase the performance on the downstream tasks that only rely on low-resource data.

The cross-dataset scenario is particularly interesting for healthcare applications like ECG classification. While a large volume of ECG data can be collected, it is prevalent for annotated datasets to be small. Additionally, an ECG can vary significantly from one device to another, including its sampling rate, and even from one patient to another. Therefore, understanding the quality of SSL methods in this context can be a significant step in creating suitable models for ECG analysis. This observation inspired us to investigate the question: Can a high-resource dataset be used to tackle a low-resource ECG classification task with pre-trained SSL models with good performance?

To answer this question we conducted a set of investigations that identify the keys aspects need to be addressed when inserted on a ECG cross-dataset scenarioFootnote 1. These investigation can be summarized as follows:

-

Performance comparison between same dataset and cross-dataset scenario. We investigate the capacity of self-supervised models to transfer knowledge between datasets from the same domain using a high-resource dataset to build a robust feature extractor. For this, we compare the performance and quality of the embedding produced by SSL encoder pre-trained on high-resource with other pre-trained on the target dataset (low-resource).

-

The impact of pretext task in the final performance. We analyze how much the pre-training step increases the model’s capacity to classify ECG under a low-resource scenario. To investigate this aspect, we compare the classifier’s performance with a pre-trained SSL encoder against that of a classifier with a random initialized SSL encoder.

-

Suitable approaches to deal with different sampling rates. We compare the results obtained by upsampling the target dataset to match the high-resource data with those achieved when we downsample the source data to match the sampling rate of the target dataset.

-

Using a cross dataset pre-trained upsampling model instead of a simple interpolator. We investigate if using an upsampler model trained in the source data outperforms using linear interpolation to resample the target dataset. We adapt the original upsampler model to lead the univariate time series and arbitrary-scale upsampling.

2 Motivations

Deep learning (DL) has been successfully applied in detecting arrhythmia using ECGs [1]. However, alongside the need for extensive labeled data, DL techniques may face generalization difficulties, especially when the feature space distribution varies significantly, and ECG signal can vary considerably from person to person [1, 17]. Figure 1 illustrates this fact, where we can observe a considerable difference between two samples of the same class but recorded from different subjects. The performance of a DL model trained in particular ECG datasets may not be reliable when applied to another dataset, requiring the model to have a high capacity to learn more general and meaningful representation by incorporating the ECG variability.

Difference between samples from same class in ECG Fragment (c.f. Sect. 4.1).

Motivation 1: SSL Aims to Train Powerful Feature Extractors. SSL techniques aim to train a powerful feature extractor using a discriminate learning pretext task that exploits the knowledge about the data modality without the need for manual annotation of the training examples [6]. Conceptually, in this process, we have two datasets: one used during the training of the feature extractor, the source dataset, and our task dataset, the target dataset. However, in recent works, SSL methods for time series have been intensely evaluated on the same dataset scenario when the source and target dataset are the same, while the analysis on cross-dataset scenarios has been neglected [3, 5, 18, 21].

Given the lack of information about the performance of SSL methods in cross-dataset scenarios, we raise the following research questions to be answered in the ECG classification context:

-

RQ1: Does using a high-resource dataset increase the performance of the SSL method in a low-resource dataset?

-

RQ2: How much does the pretext task impact the performance of the downstream task?

Motivation 2: The Improvement of the Diversity of the ECG Dataset. In recent years, we have had an improvement in ECG database diversity in terms of sampling rate, number of channels, duration of the signal, and propose [17]. When leading with a transfer learning scenario the similarity or dissimilarity of the datasets play an important role [22]. To mitigate this effects, we selected datasets from the same domain and resampled to match their sampling rate.

Aiming to incorporate the sampling rate differences in the self-supervised workflow, we raise the following research questions:

-

RQ3: What is the better resampling strategy when the source dataset has a higher sampling rate, upsampling the target dataset or downsampling the source dataset?

-

RQ4: Does the utilization of a pre-trained upsampling model outperform the use of a linear interpolation?

This paper tries to answer these questions through empirical analysis.

3 Research Methodology

To answer the research questions, we conducted a comprehensive set of experiments to investigate the performance of two SSL methods on a low-resource and low sampling rate multi-class classification ECG task. The experiments were split into four different scenarios: (1) Target Interpolation, (2) ESPCN, (3) Source Interpolation, and (4) Same Dataset. Figure 2 illustrates the architecture of each workflow. Through scenarios 1 and 2, we aim to evaluate the difference between resampling the dataset using linear interpolation and the upsampling model. Scenario 3 helps us to decide where to resample the dataset (at the pre-training or calibration phase). At last, scenario 4 is a baseline for cross-dataset scenarios. All scenarios end with a task model consisting of the downstream task’s decision layers. In our experiments, we vary the SSL encoder, pretext, and task models (c.f. Sect. 4.3).

The conducted experiments scenarios. (1) Target Interp. - Pre-train the SSL encoder in the source dataset and calibrate using the linear interpolated target dataset. (2) ESPCN - Pre-train the SSL encoder in the source dataset and calibrate using the target dataset upsampled using the ESPCN model. (3) Source Interp. - Pre-train the SSL encoder in the downsampled source dataset and calibrate with the target dataset at the original sampling rate. (4) Same Dataset - pre-training and calibration phases are made using the target dataset at the original sampling rate.

3.1 Time Series Upsampling with ESPCN

Single image super-resolution (SISR) refers to a computer vision task of reconstructing a super-resolution image (SR) from its low-resolution (LR) counterpart [2]. Dominant approaches focus on extracting features using a deep vision architecture and then upsample this feature space to high-resolution images at the network’s end [8]. SwinIR [9] combines shallow features extracted by the convolution layer with deep features extracted using transformer blocks, which can capture long-range dependencies within varying contents.

Considering the already substantial computational cost associated with the pretext task of the SSL models, we opted to utilize ESPCN [13], an SISR method that uses a shallow feature extractor [22]. ESPCN introduced an efficient way of extracting feature maps on LR image space and upscaling them into the SR image output through the sub-pixel convolution layer. Initially proposed for image domain and produce outputs with integer scale factor, we adapted the ESPCN architecture to upsample univariate time series with arbitrary-scale factor, as shown in Fig. 3.

The architecture of the adapted ESPCN for univariate time series with arbitrary-scale. A feature extractor module captures the temporal characteristics of the input data and, after a sub-pixel convolution layer, aggregates these features into a univariate time series space. Finally, the linear layer reduces the univariate time series to match the original arbitrary-scale factor.

Our adapted version of the ESPCN maintains the feature extractor module with the same number of convolution blocks as the original proposal, changing the 2d-convolution for a 1d-convolution layer. The features encoded into embedding space are tensors of size \(B \times 1*\lceil s \rceil \times L\), where B is the batch size, s the arbitrary-scale factor, and L is the time series original length. The sub-pixel convolution layer is also the same as the original one, a periodic shuffling operator that rearranges the elements of a \(B \times 1*\lceil s \rceil \times L\) tensor to a tensor of shape \(B \times 1 \times L*\lceil s \rceil \). After, the arbitrary-scale factor property is obtained by the addition of a linear layer that reduces this univariate representation into the resampled univariate time series, \(B \times 1 \times L*s\).

To validate these modifications, we compare the quality of the reconstructed signal. Figure 4 presents a time series upsampled with the original ESPCN and our adapted version, called Arbitrary-ESPCN. Both models were trained using the same dataset, the PTB-XL-700 (c.f. Sect. 4.1) downsampled to 250 Hz with its original in 500 Hz version being the labels, with a learning rate of \(1e-4\) and batch size of 64 samples. Figure 4 shows that the Arbitrary-ESPCN can correctly upsample a signal like the original ESPCN, so visually, both signals are almost identical with Arbitrary-ESPCN and original ESPCN achieving an MSE of 0.0038 and 0.0006, respectively.

Aiming to verify the generalization capacity of both models, we upsampled an ECG Fragment’s (c.f. Sect. 4.1) sample without the previous calibration step. To make the ECG Fragment’s sample (that has a sampling rate of 360 Hz) compatible with the already pre-trained models, we resampled it by setting the input to 250 Hz and its respective label to 500 Hz through linear interpolation. Both models correctly reconstructed the time series, with Arbitrary-ESPCN and original ESPCN obtaining an MSE of 0.0008 and 0.0001, respectively, certifying the robustness of the selected upsampler model and that our adaptation doesn’t have caused a significant performance reduction.

3.2 TS2Vec - Towards Universal Representation of Time Series

TS2Vec [18] is a well-established SSL method for learning contextual representation of time series at various semantic levels through hierarchical contrasting and contextual consistency. Contextual consistency is obtained by applying timestamp masking and randomly cropping two context views from the input time series. The masking increases the robustness of the learned representation, while random cropping generates new contexts without collapsing the representation. A hierarchical contrastive loss extracts the multi-scale contextual information by computing temporal and instance-wise contrast for all granularity levels using a recursive max-pooling operation. As the feature extractor uses a dilated CNN module with ten residual blocks, enabling a large receptive field.

3.3 TS-TCC - Time-Series Representation Learning via Temporal and Contextual Contrasting

TS-TCC [5] uses two augmented versions of the input signal – one strongly augmented and the other weakly augmented – to learn robust representation using a cross-view prediction task. A permutation-and-jitter strategy is applied for strong data augmentation, while weak augmentation uses a jitter-and-scale strategy. The augmented versions are passed to an encoder with a 3-block of convolutions to extract high-dimensional representation. The temporal contrasting module uses a transformer as the autoregressive model to summarize the latent representations into a context vector. The context vector is used in a cross-view prediction task using the strong augmentation context to predict the weak augmentation’s future timestep and vice versa. A contextual contrastive module learns to discriminate the contexts using a non-linear projection head; this module aims to learn more discriminative representations.

4 Experimental Evaluation

To answer our research questions, we conducted a comprehensive experimental evaluation. It is important to note that we evaluated various SSL methods [7, 21] and chose to follow with TS-TCC and TS2Vec. This selection was made for two main reasons: these are described in the literature as the state-of-the-art SSL for time series, and the other methods led to a significant overfitting in their pretext task for the datasets used. This section describes the experimental setup and the results achieved in our experiments. We initially present the datasets used in our analysis.

4.1 Datasets

We used two datasets to explore our research questions, one with a higher volume of data and sampling rate. This dataset, PTB-XL-700, is used to pre-train the model, which we refer to as source data. ECG Fragment contains fewer examples sampled at a lower sampling rate than the source data. Therefore, it is used as the target data to configure the downstream task. We previously split both datasets into train, validation, and test sets and normalized datasets’ instances using z-score, transforming the observations to have zero mean and unit variance.

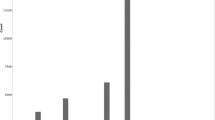

ECG Fragment is a low-resource univariate dataset that contains 1016 ECG records of 2-second fragments with a sampling rate of 360 Hz. Each record presents one type of rhythm disturbance, labeled into six classes according to the potential risk to the patient’s life, with some classes composed of multiple disorders [11]. One of the most challenging characteristics of this dataset is the high within-class variation (Fig. 1) at the same that some classes share similarities, as shown in Fig. 5. Figure 6 shows the dataset’s class distribution.

PTB-XL-700 is a high-resource dataset constructed by randomly selecting 700 records from PTB-XL dataset [15], which contains 12-lead long-term ECG obtained at a sampling rate of 500 Hz. We randomly segmented each record into five 2-second segments to increase the number of examples while standardizing the signal duration. Besides, we used each lead as a univariate time series to provide data variability. We apply the z-normalization after the segmentation steps. These operations lead to a dataset containing 42,000 samples. Figure 7 illustrates four out of twelve leads from the same record.

4.2 Experimental Settings

The hyperparameters of the investigated models was empirically selected withing the follow ranges: dimensions of the feature extractor representation = \(\{ 128 \}\); learning rate = \([1e^{-3}, 1e^{-6}]\); epochs = \(\{ 20, 40, 60, ..., 160 \}\). For the optimizer algorithm, TS-TCC and TS2Vec specific parameters, we followed their original implementation.

In all SSL models’ configurations, we applied three adjustment strategies: (i)Random initialization: we do not pre-train the SSL encoder, so the target dataset is responsible for training the SSL encoder and task models; (ii) Fine-tuning: we allow the training with the target dataset to adjust the task model’s and pre-trained SSL encoder’s parameters, as well as the ESPCN (when it applies); (iii) Linear probing: once the SSL is pre-trained, we only allow the adjustment of the task model’s parameter.

In the pipeline that applies the ESPCN (scenario 2), we pre-train the upsampler model using the PTB-XL-700 dataset with the following parameters: learning rate = \(1e^{-4}\), epochs = 25 and batch size of 64 samples.

Finally, we evaluate our results through the macro F1-score due to the class imbalance of the downstream task dataset (c.f. Fig. 6).

As previously stated, we varied the task models to evaluate the effects of the pre-trained model with different decision functions. Specifically, we applied three architectures: (i) Linear: a single linear/dense layer with softmax function; (ii) MLP: a two-layer densely connected with ReLU and a softmax as the activation functions in the penultimate and the output layers, respectively; (iii) Oates’ MLP: a multi-layer perceptron model proposed for time series classification, with a larger number of parameters than MLP [16].

4.3 Experimental Results

In this section, we comprehensively assess the performance of all the variations of experiments described in the previous sections to handle the ECG classification task. The results are systematically summarized in Table 1. Our first observation is that TS-TCC outperformed TS2Vec in three out of four scenarios. The only scenario in which TS2Vec outperforms TS-TCC is when we apply ESPCN as the first step. One possible reason for that is the model complexity. Once the TS2Vec encoder comprehends ten blocks of dilated convolution, more data may be necessary to adjust the parameters compared to the three convolution blocks of the TS-TCC encoder. One evidence for this claim is that linear probing or fine-tuning obtained all the best results for TS2Vec, while TS-TCC obtained some of the best results from random initialization.

To improve the robustness of our analysis, we also provide a baseline scenario to compare the SSL with supervised learning using well-known and widely applied architectures [16]: Oates’ MLP, FCN, and ResNet-64. In addition, we evaluate the Linear and MLP task models under the supervised scenario. Table 2 summarizes the results. The scarcity of data in the target dataset hampers the FCN and ResNet-64 model’s capacity to identify discriminative features, while the Linear model lacks representation capacity. The different MLP configurations obtained the best scores. Given these results, we will discuss each research question raised in Sect. 1.

Performance Comparison Between Same Dataset and Cross-Dataset Approaches (RQ1). To compare these scenarios, we excluded scenario 4 to avoid the influence of ESPCN in the final results. Therefore, the evaluation compares the scenarios cross-dataset that use linear interpolation (scenarios 1 and 3) against the one that pre-trains and calibrates the classification model using the same dataset (scenario 4). Valuable observations can be summarized as follows.

Firstly, the use of a high-resource dataset doesn’t increase the maximum performance of the selected SSL models’ performance. The maximum F1 score achieved under the considered cross-dataset scenarios was 0.5248 for TS2Vec. Meanwhile, the best F1 score achieves 0.5666 when the SSL model uses the same dataset in the training and evaluation phases. Besides TS-TCC’s best performance being a cross-dataset case, there is no significant difference in the best result in the same dataset scenarios. The difference between these cases is 0.0011.

To better understand this outcome, we visualized the projection of the representation learned during the pre-training. Figure 8 shows the t-SNE projections of the embeddings in all scenarios, including the one with ESPCN. In all scenarios (even in scenario 3), the projection of the produced embedding is similar regarding the class overlaps and well-separated groups of instances. It indicates that using a high-resource dataset does not increase the capacity of the encoders to extract meaningful features from the low-resource target dataset.

The Impact of Pretext Task in the Performance (RQ2). The central comparison to answer this research question relies on the results obtained by random initialization and linear-probing and fine-tuning calibration strategies.

We first analyze the results obtained by TS-TCC, given that it achieved the best performance. However, these best results are only partially related to its pretext task. Most TS-TCC models achieved their macro-F1 score under the random initialization strategy without relying on the pre-training phase. Remarkably, the best results were obtained by random initialization, and the same dataset scenario achieved the second-best score. It indicates that the high-resource data does not improve the performance of TS-TCC in any scenario.

In contrast, random initialization did not achieve the best F1 score in any scenario based on the TS2Vec. As this model is more complex, it is probably more benefited by the high-resource data. However, as it did not achieve results as good as those obtained by TS-TCC, the source data may be not enough to train a suitable model using TS2Vec. It points to the intuition that a higher volume of data to train a larger SSL model may be a promising research path. On the other hand, the difference between the datasets (c.f. Figs. 5 and 7) may affect the results.

In contrast, the architectures proposed to the SSL models seem relevant for learning better representations, given that the results achieved by applying both of them are considerably better than those of the supervised scenario. It indicates that these architectures are extracting more relevant features for this application than the MLPs, FCN, and ResNet applied directly to the signal.

Upsampling the Target Dataset or Downsampling the Source Dataset? (RQ3). We analyze this research question based on the results of scenarios 1 and 3. For TS-TCC, the difference in performance is almost nonexistent, with an average difference of \(\approx 0.0073\), while downsampling the source dataset reduced the performance of the TS2Vec models by \(\approx 0.0351\). As the differences are minor, and the same dataset scenario performs better, we did not draw significant conclusions to this question.

A Pre-Trained Upsampling Model Outperform the Use of a Linear Interpolation? (RQ4). Through the analysis of scenarios 1 and 2, we can observe that TS-TCC suffered a significant performance reduction when using ESPCN. Conversely, ESPCN configured most of the best workflow scenarios for the ECG fragment classification task using TS2Vec as SSL model. Under the supervised strategy, FCN and ResNet-64 results considerably improved using upsampler model. Considering these observations, using ESPCN as an upsampler with larger models seems promising, mainly if associated with a pretext task for cross-dataset scenarios. In other words, if the research on SSL for ECG data follows the use of large models, we recommend using our adapted ESPCN to resample data to an arbitrary sampling rate.

5 Discussion

Through the investigation of our research questions, we obtained diverse insights about the quality of SSL methods in a cross-dataset context that can be helpful to future research. The experiments indicate that TS-TCC and TS2Vec models are ineffective in transferring knowledge from unlabeled datasets with higher resources to enhance the model’s capacity to solve the downstream task (RQ1). Despite the low transfer learning capacity, the SSL models showed architectures that could extract more relevant features than the baseline methods (RQ2). Also, it seems promising to adopt ESPCN when associated with larger models (RQ4). However, due to the exploratory nature of the present investigation, including more diverse pretexts (e.g., context prediction and instance generation) and downstream tasks will allow us to obtain more significant and general conclusions, especially for the RQ3. Even though we focused on the ECG domain, we expect these insights to contribute to advancing SSL models in time series domains with labeled data scarcity, like mobile health applications.

6 Conclusions

In this paper, we investigate using a high-resource dataset to increase the performance of SSL models in a low-resource ECG classification dataset. Specifically, we compare the performance of two SSL models (TS-TCC and TS2Vec) under four different scenarios, with three being cross-dataset, and present an adaptation of the ESPCN model for univariate time series and arbitrary-scale factor. The experiments demonstrated that using a high-resource dataset does not increase the performance of the SSL model, especially for TS-TCC, where the random initialization scenario has obtained the best overall result. Also, the ESPCN upsampler increases the performance of the TS2Vec, FCN, and ResNet-64. Suggesting that the use of ESPCN with larger models is promising. These results indicate the need to develop pretext tasks more suitable for cross-dataset scenarios where knowledge transfer is fundamental.

Notes

- 1.

Source code https://github.com/RafaelSilva7/tackling-low-ecg.

References

Chen, A., et al.: Multi-information fusion neural networks for arrhythmia automatic detection. Comput. Methods Programs Biomed. 193, 105479 (2020)

Chen, H., et al.: Real-world single image super-resolution: a brief review. Inf. Fusion 79, 124–145 (2022)

Darban, Z.Z., Webb, G.I., Pan, S., Salehi, M.: Carla: a self-supervised contrastive representation learning approach for time series anomaly detection. arXiv preprint arXiv:2308.09296 (2023)

Ebrahimi, Z., Loni, M., Daneshtalab, M., Gharehbaghi, A.: A review on deep learning methods for ECG arrhythmia classification. Expert Syst. Appl. X 7, 100033 (2020)

Eldele, E., et al.: Time-series representation learning via temporal and contextual contrasting. arXiv preprint arXiv:2106.14112 (2021)

Ericsson, L., Gouk, H., Loy, C.C., Hospedales, T.M.: Self-supervised representation learning: introduction, advances, and challenges. IEEE Signal Process. Mag. 39(3), 42–62 (2022)

Foumani, N.M., Tan, C.W., Webb, G.I., Salehi, M.: Series2vec: similarity-based self-supervised representation learning for time series classification. arXiv preprint arXiv:2312.03998 (2023)

Lee, J., Jin, K.H.: Local texture estimator for implicit representation function. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 1929–1938 (2022)

Liang, J., Cao, J., Sun, G., Zhang, K., Van Gool, L., Timofte, R.: Swinir: image restoration using swin transformer. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 1833–1844 (2021)

Mehari, T., Strodthoff, N.: Self-supervised representation learning from 12-lead ECG data. Comput. Biol. Med. 141, 105114 (2022)

Nemirko, A., Manilo, L., Tatarinova, A., Alekseev, B., Evdakova, E.: ECG fragment database for the exploration of dangerous arrhythmia (2022)

Rabbani, S., Khan, N.: Contrastive self-supervised learning for stress detection from ECG data. Bioengineering 9(8), 374 (2022)

Shi, W., et al.: Real-time single image and video super-resolution using an efficient sub-pixel convolutional neural network. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1874–1883 (2016)

Siontis, K.C., Noseworthy, P.A., Attia, Z.I., Friedman, P.A.: Artificial intelligence-enhanced electrocardiography in cardiovascular disease management. Nat. Rev. Cardiol. 18(7), 465–478 (2021)

Wagner, P., et al.: PTB-XL, a large publicly available electrocardiography dataset. Sci. Data 7(1), 1–15 (2020)

Wang, Z., Yan, W., Oates, T.: Time series classification from scratch with deep neural networks: a strong baseline. In: 2017 International Joint Conference on Neural Networks (IJCNN), pp. 1578–1585. IEEE (2017)

Xiao, Q., et al.: Deep learning-based ECG arrhythmia classification: a systematic review. Appl. Sci. 13(8), 4964 (2023)

Yue, Z., et al.: Ts2vec: towards universal representation of time series. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 36, pp. 8980–8987 (2022)

Zhang, K., et al.: Self-supervised learning for time series analysis: taxonomy, progress, and prospects. IEEE Trans. Pattern Anal. Mach. Intell. (2024)

Zhang, W., Geng, S., Hong, S.: A simple self-supervised ECG representation learning method via manipulated temporal-spatial reverse detection. Biomed. Signal Process. Control 79, 104194 (2023)

Zhang, W., Yang, L., Geng, S., Hong, S.: Self-supervised time series representation learning via cross reconstruction transformer. arXiv preprint arXiv:2205.09928 (2022)

Zhao, Z., Alzubaidi, L., Zhang, J., Duan, Y., Gu, Y.: A comparison review of transfer learning and self-supervised learning: definitions, applications, advantages and limitations. Expert Syst. Appl. 122807 (2023)

Acknowledgments

This work was supported by Coordenação de Aperfeiçoamento de Pessoal de Nível Superior (CAPES) grant 88887.925347/2023-00 and the São Paulo Research Foundation (FAPESP) grant numbers 2024/07016-4 and 2022/03176-1.

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

da Costa Silva, R., Silva, D.F. (2025). Tackling Low-Resource ECG Classification with Self-supervised Learning. In: Paes, A., Verri, F.A.N. (eds) Intelligent Systems. BRACIS 2024. Lecture Notes in Computer Science(), vol 15415. Springer, Cham. https://doi.org/10.1007/978-3-031-79038-6_17

Download citation

DOI: https://doi.org/10.1007/978-3-031-79038-6_17

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-79037-9

Online ISBN: 978-3-031-79038-6

eBook Packages: Computer ScienceComputer Science (R0)